Reposted from: https://mp.weixin.qq.com/s/2sWHt6SeCf7GGam0LJEkkA

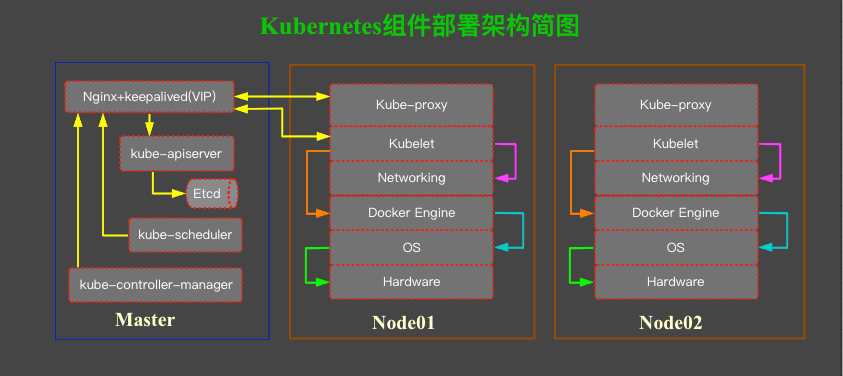

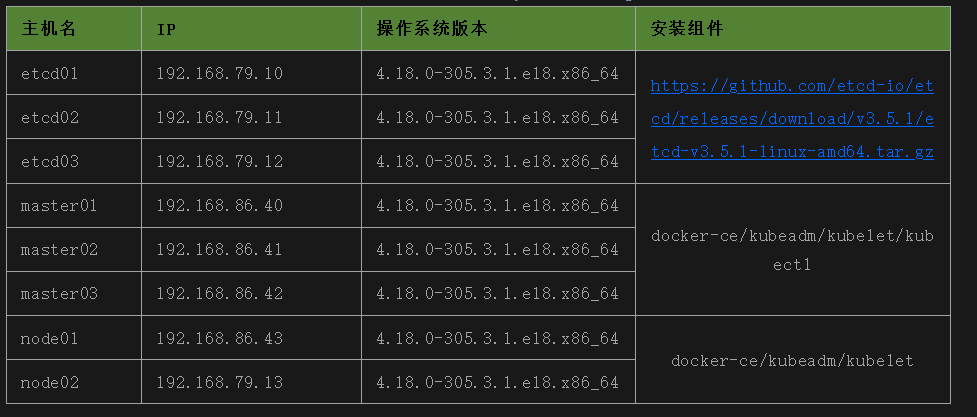

I. Environment Preparation

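The servers run the CentOS 8.4 image; the default kernel version is 4.18.0-305.3.1.el8.x86_64.

Note: these are cloud servers, which do not support a VIP, so a three-node VIP built with keepalived + nginx is not possible. Instead, the kubeadm init configuration file points at the IP of the master01 node.

If your environment does support a VIP, see: Part 5, Installing keepalived and Nginx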

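II. Server Initialization

Initialize all servers. Strictly speaking, only the master and worker nodes need this step. The script has been verified on CentOS 8.

1. The script installs some common packages, disables IPv6, enables time synchronization, loads the IPVS modules, tunes kernel parameters, disables swap, turns off the firewall, and so on: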

# cat 1_init_all_host.sh

#!/bin/bash

# 1. install common tools; these packages are optional.

source /etc/profile

yum -y install chrony bridge-utils ipvsadm ipset sysstat conntrack libseccomp wget tcpdump screen vim nfs-utils bind-utils socat telnet sshpass net-tools lrzsz yum-utils device-mapper-persistent-data lvm2 tree nc lsof strace nmon iptraf iftop rpcbind mlocate

# 2. disable IPv6

if [ $(cat /etc/default/grub |grep 'ipv6.disable=1' |grep GRUB_CMDLINE_LINUX|wc -l) -eq 0 ];then

sed -i 's/GRUB_CMDLINE_LINUX="/GRUB_CMDLINE_LINUX="ipv6.disable=1 /' /etc/default/grub

/usr/sbin/grub2-mkconfig -o /boot/grub2/grub.cfg

fi

# 3. do NOT disable NetworkManager: CentOS 8 relies on it, and the network fails to come back after a reboot without it.

# systemctl stop NetworkManager

# systemctl disable NetworkManager

# 4. enable chronyd service

systemctl enable chronyd.service

systemctl start chronyd.service

# 5. load br_netfilter (required by the bridge-nf-call-* sysctls). Note: you may need to run '/usr/sbin/modprobe br_netfilter' again after a reboot.

# 'EOF' is quoted so that $file is written literally instead of being expanded (to nothing) right now.
cat > /etc/rc.sysinit << 'EOF'
#!/bin/bash
for file in /etc/sysconfig/modules/*.modules ; do
[ -x "$file" ] && "$file"
done
EOF

cat > /etc/sysconfig/modules/br_netfilter.modules << 'EOF'
#!/bin/bash
modprobe br_netfilter
EOF

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_lc

modprobe -- ip_vs_wlc

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_lblc

modprobe -- ip_vs_lblcr

modprobe -- ip_vs_dh

modprobe -- ip_vs_sh

modprobe -- ip_vs_fo

modprobe -- ip_vs_nq

modprobe -- ip_vs_sed

modprobe -- ip_vs_ftp

modprobe -- nf_conntrack

EOF

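chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack

# load br_netfilter now, otherwise 'sysctl -p' below cannot set the net.bridge.* keys
chmod 755 /etc/sysconfig/modules/br_netfilter.modules && bash /etc/sysconfig/modules/br_netfilter.modules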

# 6. add route forwarding

[ $(cat /etc/sysctl.conf | grep "net.ipv6.conf.all.disable_ipv6=1" |wc -l) -eq 0 ] && echo "net.ipv6.conf.all.disable_ipv6=1" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.ipv6.conf.default.disable_ipv6=1" |wc -l) -eq 0 ] && echo "net.ipv6.conf.default.disable_ipv6=1" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.ipv6.conf.lo.disable_ipv6=1" |wc -l) -eq 0 ] && echo "net.ipv6.conf.lo.disable_ipv6=1" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.ipv4.neigh.default.gc_stale_time=120" |wc -l) -eq 0 ] && echo "net.ipv4.neigh.default.gc_stale_time=120" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.ipv4.conf.all.rp_filter=0" |wc -l) -eq 0 ] && echo "net.ipv4.conf.all.rp_filter=0" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "fs.inotify.max_user_instances=8192" |wc -l) -eq 0 ] && echo "fs.inotify.max_user_instances=8192" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "fs.inotify.max_user_watches=1048576" |wc -l) -eq 0 ] && echo "fs.inotify.max_user_watches=1048576" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.ipv4.ip_forward=1" |wc -l) -eq 0 ] && echo "net.ipv4.ip_forward=1" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.bridge.bridge-nf-call-iptables=1" |wc -l) -eq 0 ] && echo "net.bridge.bridge-nf-call-iptables=1" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.bridge.bridge-nf-call-ip6tables=1" |wc -l) -eq 0 ] && echo "net.bridge.bridge-nf-call-ip6tables=1" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.bridge.bridge-nf-call-arptables=1" |wc -l) -eq 0 ] && echo "net.bridge.bridge-nf-call-arptables=1" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.ipv4.conf.default.rp_filter=0" |wc -l) -eq 0 ] && echo "net.ipv4.conf.default.rp_filter=0" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.ipv4.conf.default.arp_announce=2" |wc -l) -eq 0 ] && echo "net.ipv4.conf.default.arp_announce=2" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.ipv4.conf.lo.arp_announce=2" |wc -l) -eq 0 ] && echo "net.ipv4.conf.lo.arp_announce=2" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.ipv4.conf.all.arp_announce=2" |wc -l) -eq 0 ] && echo "net.ipv4.conf.all.arp_announce=2" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.ipv4.tcp_max_tw_buckets=5000" |wc -l) -eq 0 ] && echo "net.ipv4.tcp_max_tw_buckets=5000" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.ipv4.tcp_syncookies=1" |wc -l) -eq 0 ] && echo "net.ipv4.tcp_syncookies=1" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.ipv4.tcp_max_syn_backlog=1024" |wc -l) -eq 0 ] && echo "net.ipv4.tcp_max_syn_backlog=1024" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.ipv4.tcp_synack_retries=2" |wc -l) -eq 0 ] && echo "net.ipv4.tcp_synack_retries=2" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "fs.may_detach_mounts=1" |wc -l) -eq 0 ] && echo "fs.may_detach_mounts=1" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "vm.overcommit_memory=1" |wc -l) -eq 0 ] && echo "vm.overcommit_memory=1" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "vm.panic_on_oom=0" |wc -l) -eq 0 ] && echo "vm.panic_on_oom=0" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "vm.swappiness=0" |wc -l) -eq 0 ] && echo "vm.swappiness=0" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "fs.inotify.max_user_watches=89100" |wc -l) -eq 0 ] && echo "fs.inotify.max_user_watches=89100" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "fs.file-max=52706963" |wc -l) -eq 0 ] && echo "fs.file-max=52706963" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "fs.nr_open=52706963" |wc -l) -eq 0 ] && echo "fs.nr_open=52706963" >>/etc/sysctl.conf

[ $(cat /etc/sysctl.conf | grep "net.netfilter.nf_conntrack_max=2310720" |wc -l) -eq 0 ] && echo "net.netfilter.nf_conntrack_max=2310720" >>/etc/sysctl.conf

/usr/sbin/sysctl -p

# 7. modify limit file

[ $(cat /etc/security/limits.conf|grep '* soft nproc 10240000'|wc -l) -eq 0 ]&&echo '* soft nproc 10240000' >>/etc/security/limits.conf

[ $(cat /etc/security/limits.conf|grep '* hard nproc 10240000'|wc -l) -eq 0 ]&&echo '* hard nproc 10240000' >>/etc/security/limits.conf

[ $(cat /etc/security/limits.conf|grep '* soft nofile 10240000'|wc -l) -eq 0 ]&&echo '* soft nofile 10240000' >>/etc/security/limits.conf

[ $(cat /etc/security/limits.conf|grep '* hard nofile 10240000'|wc -l) -eq 0 ]&&echo '* hard nofile 10240000' >>/etc/security/limits.conf

# 8. disable selinux

sed -i '/SELINUX=/s/enforcing/disabled/' /etc/selinux/config

# 9. Close the swap partition

/usr/sbin/swapoff -a

yes | cp /etc/fstab /etc/fstab_bak

cat /etc/fstab_bak |grep -v swap > /etc/fstab

# 10. disable firewalld

systemctl stop firewalld

systemctl disable firewalld

# 11. reset iptables

yum install -y iptables-services

/usr/sbin/iptables -P FORWARD ACCEPT

/usr/sbin/iptables -X

/usr/sbin/iptables -Z

/usr/sbin/iptables -F -t nat

/usr/sbin/iptables -X -t nat

reboot
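The script above repeats one pattern many times: grep for a setting, count the matches, and append the line only if it is missing. A minimal sketch of the same idea as a reusable function follows. The name `ensure_line` is hypothetical and not part of the original script, and the demo writes to a scratch file rather than /etc/sysctl.conf.

```bash
#!/bin/bash
# ensure_line FILE LINE: append LINE to FILE unless an identical line is already there.
# (ensure_line is a hypothetical helper name, not part of the original script.)
ensure_line() {
    local file=$1 line=$2
    # -q: quiet, -x: match the whole line, -F: treat LINE as a fixed string, not a regex
    grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Demo against a scratch file instead of the real configuration files
demo=$(mktemp)
ensure_line "$demo" "net.ipv4.ip_forward=1"
ensure_line "$demo" "net.ipv4.ip_forward=1"    # second call is a no-op
ensure_line "$demo" "* soft nofile 10240000"   # '*' is matched literally thanks to -F
cat "$demo"
```

Matching the whole line with `-x` also avoids false positives where one setting is a prefix of another, which a plain substring grep can produce.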

2. Set the hostnames and /etc/hosts

# cat ip2

192.168.79.10 etcd01

192.168.79.11 etcd02

192.168.79.12 etcd03

192.168.79.13 node02

192.168.86.40 master01

192.168.86.41 master02

192.168.86.42 master03

192.168.86.43 node01

# cat 2_modify_hosts_and_hostname.sh

#!/bin/bash

DIR=`pwd`

cd $DIR

# /etc/hosts expects "IP hostname", which is the same column order as ip2
cat ip2 |gawk '{print $1,$2}'>hosts_temp

exec <./ip2

while read A B

do

scp ${DIR}/hosts_temp $A:/root/

ssh -n $A "hostnamectl set-hostname $B && cat /root/hosts_temp >>/etc/hosts && rm -f /root/hosts_temp"

done

rm -rf hosts_temp
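Because the script appends to /etc/hosts on every run, running it twice duplicates the entries. One possible way to keep it idempotent is to emit only the inventory lines that the target file does not contain yet. The sketch below assumes the two-column "IP hostname" layout of ip2; `hosts_entries` is a hypothetical name, and the demo uses scratch files.

```bash
#!/bin/bash
# hosts_entries INVENTORY HOSTS_FILE
# Print the "IP hostname" pairs from INVENTORY that are not yet present in HOSTS_FILE.
# (hosts_entries is a hypothetical helper name.)
hosts_entries() {
    local inventory=$1 hosts_file=$2
    # While reading the hosts file (ARGV[1]) remember each "IP hostname" pair;
    # while reading the inventory print the pairs that were not seen.
    awk 'FILENAME == ARGV[1] { seen[$1 " " $2] = 1; next }
         NF >= 2 && !(($1 " " $2) in seen) { print $1, $2 }' "$hosts_file" "$inventory"
}

# Demo with scratch files
inv=$(mktemp); hosts=$(mktemp)
printf '192.168.79.10 etcd01\n192.168.86.40 master01\n' > "$inv"
printf '127.0.0.1 localhost\n192.168.79.10 etcd01\n' > "$hosts"
hosts_entries "$inv" "$hosts"    # only the master01 pair is missing
```

On a real host the output would be appended with `hosts_entries ip2 /etc/hosts >> /etc/hosts.new` style redirection to a separate file first, since reading and appending to the same file in one command is best avoided.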

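III. Install etcd

1. Generate the CA root certificate. etcd is accessed over TLS, so a CA certificate and private key are needed to sign the etcd certificates. For how certificate signing works, see: Part 3, PKI Basics, the cfssl Tools, and Certificates in Kubernetes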

# cat 3_ca_root.sh

#!/bin/bash

# 1. download the cfssl binaries; '-C -' resumes a partial download.

echo "Downloading cfssl, please wait a moment."

if curl -L -C - -O https://github.com/cloudflare/cfssl/releases/download/v1.6.1/cfssl_1.6.1_linux_amd64 && \
curl -L -C - -O https://github.com/cloudflare/cfssl/releases/download/v1.6.1/cfssljson_1.6.1_linux_amd64 && \
curl -L -C - -O https://github.com/cloudflare/cfssl/releases/download/v1.6.1/cfssl-certinfo_1.6.1_linux_amd64
then
echo "cfssl download success."
else
# stop here: the mv/chmod steps below would fail without the binaries
echo "cfssl download failed."
exit 1
fi

# 2. Create a binary directory to store kubernetes related files.

if [ ! -d /usr/kubernetes/bin/ ];then

mkdir -p /usr/kubernetes/bin/

fi

# 3. move the downloaded binaries into the directory created above.

mv cfssl_1.6.1_linux_amd64 /usr/kubernetes/bin/cfssl

mv cfssljson_1.6.1_linux_amd64 /usr/kubernetes/bin/cfssljson

mv cfssl-certinfo_1.6.1_linux_amd64 /usr/kubernetes/bin/cfssl-certinfo

chmod +x /usr/kubernetes/bin/{cfssl,cfssljson,cfssl-certinfo}

# 4. add environment variables

[ $(cat /etc/profile|grep 'PATH=/usr/kubernetes/bin'|wc -l ) -eq 0 ] && echo 'PATH=/usr/kubernetes/bin:$PATH' >>/etc/profile && source /etc/profile || source /etc/profile

# 5. create a CA certificate directory and access this directory

CA_SSL=/etc/kubernetes/ssl/ca

[ ! -d ${CA_SSL} ] && mkdir -p ${CA_SSL}

cd $CA_SSL

## cfssl print-defaults config > config.json

## cfssl print-defaults csr > csr.json

cat > ${CA_SSL}/ca-config.json << EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat > ${CA_SSL}/ca-csr.json <<EOF

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

# 6. generate ca.pem, ca-key.pem

cfssl gencert -initca ca-csr.json | cfssljson -bare ca

[ $? -eq 0 ] && echo "CA certificate and private key generated successfully." || echo "CA certificate and private key generation failed."
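The `"expiry": "87600h"` value in ca-config.json is easier to reason about in years. The arithmetic is simply hours / 24 / 365; a small sketch follows (it ignores leap days, and `hours_to_years` is a hypothetical helper name):

```bash
#!/bin/bash
# hours_to_years DURATION: convert a cfssl-style duration such as "87600h" to whole years.
# Leap days are ignored; hours_to_years is a hypothetical helper name.
hours_to_years() {
    local hours=${1%h}            # strip the trailing "h"
    echo $(( hours / 24 / 365 ))
}

hours_to_years 87600h    # 87600 / 24 = 3650 days, 3650 / 365 = 10 years
```

So certificates signed with this profile are valid for roughly ten years.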

2. Use the private CA to issue a certificate and private key for etcd

# cat 4_ca_etcd.sh

#!/bin/bash

# 2. create csr file.

source /etc/profile

ETCD_SSL="/etc/kubernetes/ssl/etcd/"

[ ! -d ${ETCD_SSL} ] && mkdir -p ${ETCD_SSL}

cat >$ETCD_SSL/etcd-csr.json << EOF

{

"CN": "etcd",

"hosts": [

"192.168.79.10",

"192.168.79.11",

"192.168.79.12",

"192.168.79.13",

"192.168.86.40",

"192.168.86.41",

"192.168.86.42",

"192.168.86.43"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

# 3. Check that the required CA files exist; exit non-zero if one is missing.

[ ! -f /etc/kubernetes/ssl/ca/ca.pem ] && echo "no ca.pem file." && exit 1

[ ! -f /etc/kubernetes/ssl/ca/ca-key.pem ] && echo "no ca-key.pem file" && exit 1

[ ! -f /etc/kubernetes/ssl/ca/ca-config.json ] && echo "no ca-config.json file" && exit 1

# 4. generate etcd private key and public key.

cd $ETCD_SSL

cfssl gencert -ca=/etc/kubernetes/ssl/ca/ca.pem \
-ca-key=/etc/kubernetes/ssl/ca/ca-key.pem \
-config=/etc/kubernetes/ssl/ca/ca-config.json \
-profile=kubernetes etcd-csr.json | cfssljson -bare etcd

[ $? -eq 0 ] && echo "Etcd certificate and private key generated successfully." || echo "Etcd certificate and private key generation failed."
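The `hosts` list in etcd-csr.json repeats the addresses already kept in ip2, so the two can drift apart. As a sketch, the JSON array could be generated from the inventory instead. This assumes the "IP hostname" layout of ip2; `csr_hosts` is a hypothetical name and the demo uses a scratch file.

```bash
#!/bin/bash
# csr_hosts INVENTORY: print a JSON array holding the first column (the IP)
# of every non-empty line. (csr_hosts is a hypothetical helper name.)
csr_hosts() {
    awk 'NF { ips[++n] = $1 }
         END {
             printf "["
             for (i = 1; i <= n; i++) printf "%s\"%s\"", (i > 1 ? ", " : ""), ips[i]
             print "]"
         }' "$1"
}

# Demo with a two-line scratch inventory
inv=$(mktemp)
printf '192.168.79.10 etcd01\n192.168.79.11 etcd02\n' > "$inv"
csr_hosts "$inv"
```

Inside the unquoted heredoc that writes etcd-csr.json, the hard-coded list could then become `"hosts": $(csr_hosts ip2),`.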

3. Copy the certificates to the etcd servers and the masters

# cat 5_scp_etcd_pem_key.sh

#!/bin/bash

# 1. etcd need these file

for i in `cat ip2|grep etcd|gawk '{print $1}'`

do

scp -r /etc/kubernetes $i:/etc/

done

# 2. the k8s master and worker nodes need these files too.

for i in `cat ip2|grep -v etcd|gawk '{print $1}'`

do

scp -r /etc/kubernetes $i:/etc/

done

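4. Run the following script on each of the three etcd machines. It performs the complete etcd installation. Consider downloading the etcd release tarball in advance, because GitHub downloads can be slow or unreliable from mainland China.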

# cat 6_etcd_install.sh

#!/bin/bash

# 1. env info

source /etc/profile

declare -A dict

dict=(['etcd01']=192.168.79.10 ['etcd02']=192.168.79.11 ['etcd03']=192.168.79.12)

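# every IPv4 address configured on this host, one per line
IPS=$(ip -4 -o addr show | gawk '{print $4}' | cut -d/ -f1)

# pick the dict entry whose address belongs to this host (also works with several NICs)
for key in "${!dict[@]}"
do
if grep -qxF "${dict[$key]}" <<< "$IPS";then
LOCALIP=${dict[$key]}
LOCAL_ETCD_NAME=$key
fi
done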

if [[ "$LOCALIP" == "" || "$LOCAL_ETCD_NAME" == "" ]];then

echo "Get localhost IP failed." && exit 1

fi

# 2. download the etcd release tarball and unpack it.

CURRENT_DIR=`pwd`

cd $CURRENT_DIR

# 'exit 1' inside a ( ... ) subshell would not stop the script, so use a plain if
if curl -L -C - -O https://github.com/etcd-io/etcd/releases/download/v3.5.1/etcd-v3.5.1-linux-amd64.tar.gz
then
echo "etcd release download success."
else
echo "etcd release download failed."
exit 1
fi

/usr/bin/tar -zxf etcd-v3.5.1-linux-amd64.tar.gz

cp etcd-v3.5.1-linux-amd64/etc* /usr/local/bin/

#rm -rf etcd-v3.5.1-linux-amd64*

# 3. deploy etcd config and enable etcd.service.

ETCD_SSL="/etc/kubernetes/ssl/etcd/"

ETCD_SERVICE=/usr/lib/systemd/system/etcd.service

[ ! -d /data/etcd/ ] && mkdir -p /data/etcd/

# 3.1 etcd settings; they are substituted into etcd.service below.

ETCD_NAME="${LOCAL_ETCD_NAME}"

ETCD_DATA_DIR="/data/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://${LOCALIP}:2380"

ETCD_LISTEN_CLIENT_URLS="https://${LOCALIP}:2379"

ETCD_LISTEN_CLIENT_URLS2="http://127.0.0.1:2379"

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${LOCALIP}:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://${LOCALIP}:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://${dict['etcd01']}:2380,etcd02=https://${dict['etcd02']}:2380,etcd03=https://${dict['etcd03']}:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

# 3.2 create etcd.service

cat>$ETCD_SERVICE<<EOF
[Unit]
Description=Etcd Server
After=network.target
After=network-online.target
Wants=network-online.target

[Service]
Type=notify
ExecStart=/usr/local/bin/etcd \
--name=${ETCD_NAME} \
--data-dir=${ETCD_DATA_DIR} \
--listen-peer-urls=${ETCD_LISTEN_PEER_URLS} \
--listen-client-urls=${ETCD_LISTEN_CLIENT_URLS},${ETCD_LISTEN_CLIENT_URLS2} \
--advertise-client-urls=${ETCD_ADVERTISE_CLIENT_URLS} \
--initial-advertise-peer-urls=${ETCD_INITIAL_ADVERTISE_PEER_URLS} \
--initial-cluster=${ETCD_INITIAL_CLUSTER} \
--initial-cluster-token=${ETCD_INITIAL_CLUSTER_TOKEN} \
--initial-cluster-state=new \
--cert-file=/etc/kubernetes/ssl/etcd/etcd.pem \
--key-file=/etc/kubernetes/ssl/etcd/etcd-key.pem \
--peer-cert-file=/etc/kubernetes/ssl/etcd/etcd.pem \
--peer-key-file=/etc/kubernetes/ssl/etcd/etcd-key.pem \
--trusted-ca-file=/etc/kubernetes/ssl/ca/ca.pem \
--peer-trusted-ca-file=/etc/kubernetes/ssl/ca/ca.pem
Restart=on-failure
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF

# 4. enable etcd.service and start

systemctl daemon-reload

systemctl enable etcd.service

systemctl start etcd.service

systemctl status etcd.service
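The `--initial-cluster` value must list every member as `name=https://ip:2380`, and it has to be identical on all three hosts. A minimal sketch of deriving that string from ordered `name=ip` pairs is shown here; `initial_cluster` is a hypothetical helper, and port 2380 is the peer port used in this article.

```bash
#!/bin/bash
# initial_cluster NAME=IP...: build the value for etcd's --initial-cluster flag.
# (initial_cluster is a hypothetical helper name.)
initial_cluster() {
    local pair out=""
    for pair in "$@"; do
        # ${pair%%=*} is the member name, ${pair#*=} is its IP
        out="${out}${out:+,}${pair%%=*}=https://${pair#*=}:2380"
    done
    printf '%s\n' "$out"
}

initial_cluster etcd01=192.168.79.10 etcd02=192.168.79.11 etcd03=192.168.79.12
```

Passing the pairs as ordered arguments, rather than iterating over an associative array, keeps the member order stable from run to run.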

5. Log in to an etcd server and verify the installation

# cat 6_check_etcd.sh

#!/bin/bash

declare -A dict

dict=(['etcd01']=192.168.79.10 ['etcd02']=192.168.79.11 ['etcd03']=192.168.79.12)

echo "==== check endpoint health ===="

cd /usr/local/bin

ETCDCTL_API=3 ./etcdctl -w table --cacert=/etc/kubernetes/ssl/ca/ca.pem \
--cert=/etc/kubernetes/ssl/etcd/etcd.pem \
--key=/etc/kubernetes/ssl/etcd/etcd-key.pem \
--endpoints="https://${dict['etcd01']}:2379,https://${dict['etcd02']}:2379,https://${dict['etcd03']}:2379" endpoint health

echo

echo

echo "==== check member list ===="

ETCDCTL_API=3 ./etcdctl -w table --cacert=/etc/kubernetes/ssl/ca/ca.pem \
--cert=/etc/kubernetes/ssl/etcd/etcd.pem \
--key=/etc/kubernetes/ssl/etcd/etcd-key.pem \
--endpoints="https://${dict['etcd01']}:2379,https://${dict['etcd02']}:2379,https://${dict['etcd03']}:2379" member list

echo

echo

echo "==== check endpoint status --cluster ===="

ETCDCTL_API=3 ./etcdctl -w table --cacert=/etc/kubernetes/ssl/ca/ca.pem \
--cert=/etc/kubernetes/ssl/etcd/etcd.pem \
--key=/etc/kubernetes/ssl/etcd/etcd-key.pem \
--endpoints="https://${dict['etcd01']}:2379,https://${dict['etcd02']}:2379,https://${dict['etcd03']}:2379" endpoint status --cluster

## delete etcd node

#ETCDCTL_API=3 ./etcdctl --cacert=/etc/kubernetes/ssl/ca/ca.pem \

#--cert=/etc/kubernetes/ssl/etcd/etcd.pem \

#--key=/etc/kubernetes/ssl/etcd/etcd-key.pem \

#--endpoints="https://${dict['etcd01']}:2379,https://${dict['etcd02']}:2379,https://${dict['etcd03']}:2379" member remove xxxxx

## add etcd node

#ETCDCTL_API=3 ./etcdctl --cacert=/etc/kubernetes/ssl/ca/ca.pem \

#--cert=/etc/kubernetes/ssl/etcd/etcd.pem \

#--key=/etc/kubernetes/ssl/etcd/etcd-key.pem \

#--endpoints="https://${dict['etcd01']}:2379,https://${dict['etcd02']}:2379,https://${dict['etcd03']}:2379" member add xxxxx
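Every etcdctl call in the check script carries the same TLS flags and endpoint list. One way to cut the repetition is a small wrapper function. This is only a sketch: `etcdctl_tls` is a hypothetical name, the paths and addresses are the ones used in this article, and the `ETCDCTL` variable exists only so the binary can be swapped out for a dry run.

```bash
#!/bin/bash
# etcdctl_tls ARGS...: run etcdctl with the TLS flags and endpoints from this article.
# (etcdctl_tls is a hypothetical wrapper; ETCDCTL can be overridden for a dry run.)
ETCDCTL=${ETCDCTL:-/usr/local/bin/etcdctl}
ETCD_ENDPOINTS="https://192.168.79.10:2379,https://192.168.79.11:2379,https://192.168.79.12:2379"

etcdctl_tls() {
    ETCDCTL_API=3 "$ETCDCTL" -w table \
        --cacert=/etc/kubernetes/ssl/ca/ca.pem \
        --cert=/etc/kubernetes/ssl/etcd/etcd.pem \
        --key=/etc/kubernetes/ssl/etcd/etcd-key.pem \
        --endpoints="$ETCD_ENDPOINTS" "$@"
}

# Usage on an etcd host:
#   etcdctl_tls endpoint health
#   etcdctl_tls member list
#   etcdctl_tls endpoint status --cluster
```

Nothing runs at load time; the three checks from the script become one-line calls.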

IV. Install the Docker Engine

# cat 7_docker_install.sh

#!/bin/bash

# 1. install docker repo

for i in `cat ip2|grep -v etcd|gawk '{print $1}'`

do

ssh $i "yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo &&yum makecache && yum -y install docker-ce"

done

# 2. write daemon.json: sets docker's data-root, the systemd cgroup driver and the registry mirrors.

cat>`pwd`/daemon.json<<EOF

{

"data-root": "/data/docker",

"insecure-registries": ["registry.k8s.vip","192.168.1.100"],

"exec-opts": ["native.cgroupdriver=systemd"],

"registry-mirrors": [

"https://registry.cn-hangzhou.aliyuncs.com",

"https://docker.mirrors.ustc.edu.cn",

"https://dockerhub.azk8s.cn"

]

}

EOF

# 3. start docker on every master and worker node (over ssh, not on the local machine)

for i in `cat ip2|grep -v etcd|gawk '{print $1}'`
do
ssh $i "systemctl daemon-reload && systemctl enable docker.service && systemctl start docker.service"
done

# 4. wait for docker to finish starting.

sleep 10

# 5. use new file daemon.json and restart docker

for i in `cat ip2|grep -v etcd|gawk '{print $1}'`

do

scp `pwd`/daemon.json $i:/etc/docker/

ssh $i "systemctl restart docker.service"

done

rm -f `pwd`/daemon.json
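Several scripts in this article loop over ip2, filter by hostname, and run a command over ssh. A sketch of that loop as one helper with a dry-run switch follows. `run_on_hosts` and the `REMOTE_SHELL` variable are hypothetical names; the inventory is assumed to have the "IP hostname" layout of ip2.

```bash
#!/bin/bash
# run_on_hosts INVENTORY PATTERN COMMAND
# Run COMMAND on every host whose name matches the extended regex PATTERN.
# REMOTE_SHELL defaults to "ssh -n"; set REMOTE_SHELL=echo to preview the calls.
# (run_on_hosts and REMOTE_SHELL are hypothetical names.)
run_on_hosts() {
    local inventory=$1 pattern=$2 cmd=$3 ip name
    while read -r ip name; do
        printf '%s\n' "$name" | grep -qE "$pattern" || continue
        # ssh -n keeps ssh from swallowing the rest of the inventory on stdin
        ${REMOTE_SHELL:-ssh -n} "$ip" "$cmd"
    done < "$inventory"
}

# Dry run against a scratch inventory: prints what would be executed
inv=$(mktemp)
printf '192.168.79.10 etcd01\n192.168.86.40 master01\n192.168.86.43 node01\n' > "$inv"
REMOTE_SHELL=echo run_on_hosts "$inv" '^(master|node)' 'systemctl enable --now docker'
```

The dry run makes it easy to confirm that a command really targets the remote hosts before it is executed.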

V. Install the Kubernetes Components

1. Configure the Kubernetes yum repository

# cat 8_kubernetes.repo.sh

#!/bin/bash

cat >`pwd`/kubernetes.repo<<EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=1

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

for i in `cat ip2|grep -v etcd|gawk '{print $1}'`

do

scp `pwd`/kubernetes.repo $i:/etc/yum.repos.d/

done

rm -f `pwd`/kubernetes.repo

2. Install

# cat 9_k8s_common_install.sh

#!/bin/bash

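# list the available versions: yum list kubelet --showduplicates | sort -r

# to pin a version instead of installing the latest: yum install -y kubelet-1.23.1 kubeadm-1.23.1 kubectl-1.23.1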

# install master node

for i in `cat ip2|grep master|gawk '{print $1}'`

do

ssh $i "yum -y install kubelet kubeadm kubectl"

done

# install node

for i in `cat ip2|grep node|gawk '{print $1}'`

do

ssh $i "yum -y install kubelet kubeadm"

done

3. Configure kubelet and enable it at boot

There is no need to start kubelet yet; kubeadm starts it automatically during init or join.

#!/bin/bash

for i in `cat ip2|grep -v etcd|gawk '{print $1}'`

do

ssh $i "echo 'KUBELET_EXTRA_ARGS=\"--fail-swap-on=false\"' > /etc/sysconfig/kubelet"

ssh $i "systemctl enable kubelet.service"

done

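4. Create the init configuration file; you can start from the defaults and modify them

# print the default configuration

kubeadm config print init-defaults

# the defaults for individual components can be printed as well

kubeadm config print init-defaults --component-configs KubeProxyConfiguration

kubeadm config print init-defaults --component-configs KubeletConfiguration

Our configuration file is shown below. It uses an external etcd cluster. Note also that the pod subnet and the kube-proxy mode (ipvs) have been changed from the defaults. Copy this file to the master01 node and run kubeadm there; the etcd certificates must also be copied to the master nodes.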

# cat kubeadm_init.yaml

apiVersion: kubeadm.k8s.io/v1beta3
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: abcdef.0123456789abcdef
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 100.109.86.40
  bindPort: 6443
nodeRegistration:
  criSocket: /var/run/dockershim.sock
  imagePullPolicy: IfNotPresent
  name: master01
  taints: null
---
apiServer:
  timeoutForControlPlane: 4m0s
  extraArgs:
    authorization-mode: "Node,RBAC"
    enable-admission-plugins: "NamespaceLifecycle,LimitRanger,ServiceAccount,PersistentVolumeClaimResize,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,MutatingAdmissionWebhook,ValidatingAdmissionWebhook,ResourceQuota,Priority"
    runtime-config: api/all=true
  certSANs:
  - 10.96.0.1
  - 127.0.0.1
  - localhost
  - master01
  - master02
  - master03
  - 100.109.86.40
  - 100.109.86.41
  - 100.109.86.42
  - apiserver.k8s.local
  - kubernetes
  - kubernetes.default
  - kubernetes.default.svc
  - kubernetes.default.svc.cluster.local
  extraVolumes:
  - hostPath: /etc/localtime
    mountPath: /etc/localtime
    name: localtime
    readOnly: true
apiVersion: kubeadm.k8s.io/v1beta3
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
controllerManager:
  extraArgs:
    bind-address: "0.0.0.0"
    experimental-cluster-signing-duration: 876000h
  extraVolumes:
  - hostPath: /etc/localtime
    mountPath: /etc/localtime
    name: localtime
    readOnly: true
dns:
  imageRepository: docker.io
  imageTag: 1.8.6
etcd:
  external:
    endpoints:
    - https://100.109.79.10:2379
    - https://100.109.79.11:2379
    - https://100.109.79.12:2379
    caFile: /etc/kubernetes/ssl/ca/ca.pem
    certFile: /etc/kubernetes/ssl/etcd/etcd.pem
    keyFile: /etc/kubernetes/ssl/etcd/etcd-key.pem
imageRepository: registry.aliyuncs.com/k8sxio
kind: ClusterConfiguration
kubernetesVersion: 1.23.1
networking:
  dnsDomain: cluster.local
  podSubnet: 172.30.0.0/16
  serviceSubnet: 10.96.0.0/12
controlPlaneEndpoint: 100.109.86.40:6443
scheduler:
  extraArgs:
    bind-address: "0.0.0.0"
  extraVolumes:
  - hostPath: /etc/localtime
    mountPath: /etc/localtime
    name: localtime
    readOnly: true
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
bindAddressHardFail: false
clientConnection:
  acceptContentTypes: ""
  burst: 0
  contentType: ""
  kubeconfig: /var/lib/kube-proxy/kubeconfig.conf
  qps: 0
clusterCIDR: ""
configSyncPeriod: 0s
conntrack:
  maxPerCore: null
  min: null
  tcpCloseWaitTimeout: null
  tcpEstablishedTimeout: null
detectLocalMode: ""
enableProfiling: false
healthzBindAddress: ""
hostnameOverride: ""
iptables:
  masqueradeAll: false
  masqueradeBit: null
  minSyncPeriod: 0s
  syncPeriod: 0s
ipvs:
  excludeCIDRs: null
  minSyncPeriod: 0s
  scheduler: ""
  strictARP: false
  syncPeriod: 0s
  tcpFinTimeout: 0s
  tcpTimeout: 0s
  udpTimeout: 0s
kind: KubeProxyConfiguration
metricsBindAddress: ""
mode: "ipvs"
nodePortAddresses: null
oomScoreAdj: null
portRange: ""
showHiddenMetricsForVersion: ""
udpIdleTimeout: 0s
winkernel:
  enableDSR: false
  networkName: ""
  sourceVip: ""
---
apiVersion: kubelet.config.k8s.io/v1beta1
cgroupDriver: systemd
kind: KubeletConfiguration
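The pod subnet (`podSubnet`) and service subnet (`serviceSubnet`) should not overlap each other or the node network. A rough sketch for checking two IPv4 CIDRs follows: each CIDR is converted to an integer range and the ranges are compared. The function names are hypothetical and input validation is omitted.

```bash
#!/bin/bash
# Hypothetical helpers for comparing IPv4 CIDR ranges; no input validation.

# ip_to_int A.B.C.D: print the address as a 32-bit integer
ip_to_int() {
    local IFS=.
    set -- $1                       # split the dotted quad into $1..$4
    echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# cidr_range IP/BITS: print "first last" (as integers) of the block
cidr_range() {
    local ip=${1%/*} bits=${1#*/} size base
    size=$(( 1 << (32 - bits) ))
    base=$(( $(ip_to_int "$ip") & ~(size - 1) ))
    echo "$base $(( base + size - 1 ))"
}

# cidrs_overlap CIDR_A CIDR_B: succeed (exit 0) if the two blocks share an address
cidrs_overlap() {
    set -- $(cidr_range "$1") $(cidr_range "$2")   # $1 $2 = A range, $3 $4 = B range
    [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

if cidrs_overlap 172.30.0.0/16 10.96.0.0/12; then
    echo "podSubnet and serviceSubnet overlap"
else
    echo "podSubnet and serviceSubnet are disjoint"
fi
```

The same check shows why the pod subnet is worth changing here: Calico's default pool 192.168.0.0/16 would overlap host addresses such as the 192.168.86.0/24 range listed in ip2.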

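5. List the required images. If they cannot be pulled directly, use a mirror registry or pre-load them; access from mainland China is often slow.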

# kubeadm config images list --config=kubeadm_init.yaml

registry.aliyuncs.com/k8sxio/kube-apiserver:v1.23.1

registry.aliyuncs.com/k8sxio/kube-controller-manager:v1.23.1

registry.aliyuncs.com/k8sxio/kube-scheduler:v1.23.1

registry.aliyuncs.com/k8sxio/kube-proxy:v1.23.1

registry.aliyuncs.com/k8sxio/pause:3.6

docker.io/coredns:1.8.6

6. Run the initialization

kubeadm init --config=kubeadm_init.yaml

7. Copy the certificates generated on master01 to the other master nodes

#!/bin/bash

# run this on the server where kubeadm init was executed

for i in `cat /etc/hosts|grep -E "master02|master03"|gawk '{print $2}'`

do

ssh $i "mkdir -p /etc/kubernetes/pki"

scp /etc/kubernetes/pki/ca.* $i:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/sa.* $i:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/front-proxy-ca.* $i:/etc/kubernetes/pki/

scp /etc/kubernetes/admin.conf $i:/etc/kubernetes/

done

8. Join master02 and master03 to the control plane

kubeadm join 192.168.86.40:6443 --token abcdef.0123456789abcdef \
--discovery-token-ca-cert-hash sha256:073ebe293b1072de412755791542fea2791abf25000038f55727880ccc8a71e4 \
--control-plane --ignore-preflight-errors=Swap

9. Run the following on node01 and node02

kubeadm join 192.168.86.40:6443 --token abcdef.0123456789abcdef \
--discovery-token-ca-cert-hash sha256:073ebe293b1072de412755791542fea2791abf25000038f55727880ccc8a71e4 --ignore-preflight-errors=Swap
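The join commands depend on two values: a bootstrap token of the form `6 chars.16 chars` and a `sha256:` hash of the cluster CA public key. A small sketch that sanity-checks both before they are pasted onto other nodes follows. The helper names are hypothetical; `ca_cert_hash` uses the openssl pipeline commonly given in the kubeadm documentation and assumes an RSA CA key at the default path.

```bash
#!/bin/bash
# Hypothetical helpers: valid_token, valid_ca_hash, ca_cert_hash.

# valid_token TOKEN: bootstrap tokens look like [a-z0-9]{6}.[a-z0-9]{16}
valid_token() {
    printf '%s\n' "$1" | grep -qxE '[a-z0-9]{6}\.[a-z0-9]{16}'
}

# valid_ca_hash HASH: "sha256:" followed by 64 lowercase hex digits
valid_ca_hash() {
    printf '%s\n' "$1" | grep -qxE 'sha256:[0-9a-f]{64}'
}

# ca_cert_hash [CA_CERT]: recompute the hex digest on a master
# (assumption: RSA CA key, certificate at /etc/kubernetes/pki/ca.crt)
ca_cert_hash() {
    openssl x509 -pubkey -in "${1:-/etc/kubernetes/pki/ca.crt}" \
        | openssl rsa -pubin -outform der 2>/dev/null \
        | openssl dgst -sha256 -hex | sed 's/^.* //'
}

valid_token abcdef.0123456789abcdef && echo "token format ok"
valid_ca_hash sha256:073ebe293b1072de412755791542fea2791abf25000038f55727880ccc8a71e4 \
    && echo "hash format ok"
```

Since the token in the configuration has `ttl: 24h0m0s`, a later join needs a fresh one; `kubeadm token create --print-join-command` on master01 prints a complete new join command.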

10. On master01, set up kubectl access

[root@master01 ~]# mkdir -p $HOME/.kube

[root@master01 ~]# cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@master01 ~]# chown $(id -u):$(id -g) $HOME/.kube/config

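VI. Deploy the CNI Plugin

1. Preparation

This guide uses the Calico network plugin. The images referenced in the manifest also download slowly from mainland China, so you will need a mirror or pre-pulled images.

Official site: https://projectcalico.docs.tigera.io/getting-started/kubernetes/self-managed-onprem/onpremises

Download: curl https://projectcalico.docs.tigera.io/manifests/calico.yaml -O

Set CALICO_IPV4POOL_CIDR to your own pod subnet; the manifest default is 192.168.0.0/16. By default Calico's data ends up in etcd here (through the Kubernetes API); it can also be configured to use the etcd cluster created earlier. Nothing else in the manifest was changed, and because the file is long it is not reproduced here.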

- name: CALICO_IPV4POOL_CIDR
  value: "172.30.0.0/16"
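The CIDR change can also be scripted instead of edited by hand. The sketch below assumes that the downloaded calico.yaml ships the entry commented out as `# - name: CALICO_IPV4POOL_CIDR` followed by `#   value: "192.168.0.0/16"`; check your copy of the manifest first, since this layout is an assumption. `set_calico_cidr` is a hypothetical name, and the demo runs on a two-line scratch fragment.

```bash
#!/bin/bash
# set_calico_cidr FILE CIDR
# Uncomment the CALICO_IPV4POOL_CIDR entry and replace the default pool.
# Assumption: the manifest contains the two commented lines shown in the demo.
# (set_calico_cidr is a hypothetical helper name.)
set_calico_cidr() {
    local file=$1 cidr=$2
    sed -E \
        -e 's|^([[:space:]]*)# (- name: CALICO_IPV4POOL_CIDR)|\1\2|' \
        -e 's|^([[:space:]]*)# ( +value: )"192\.168\.0\.0/16"|\1\2"'"$cidr"'"|' \
        "$file" > "$file.tmp" && mv "$file.tmp" "$file"
}

# Demo on a scratch fragment with the assumed layout (12 spaces of indentation)
frag=$(mktemp)
printf '%12s# - name: CALICO_IPV4POOL_CIDR\n%12s#   value: "192.168.0.0/16"\n' '' '' > "$frag"
set_calico_cidr "$frag" 172.30.0.0/16
cat "$frag"
```

Removing exactly `# ` from both lines keeps the `value:` key aligned under `name:`, which YAML requires.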

2. Apply

# kubectl apply -f calico.yaml

configmap/calico-config unchanged

customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org configured

customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org configured

customresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org configured

customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org configured

customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org configured

customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org configured

customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org configured

customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org configured

customresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org configured

customresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org configured

customresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org configured

customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org configured

customresourcedefinition.apiextensions.k8s.io/kubecontrollersconfigurations.crd.projectcalico.org configured

customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org configured

customresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org configured

clusterrole.rbac.authorization.k8s.io/calico-kube-controllers unchanged

clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers unchanged

clusterrole.rbac.authorization.k8s.io/calico-node unchanged

clusterrolebinding.rbac.authorization.k8s.io/calico-node unchanged

daemonset.apps/calico-node configured

serviceaccount/calico-node unchanged

deployment.apps/calico-kube-controllers unchanged

serviceaccount/calico-kube-controllers unchanged

poddisruptionbudget.policy/calico-kube-controllers configured

3. Check (if the images fail to download, they can be pulled from an Aliyun mirror instead)

# kubectl get pods -n kube-system|grep calico

calico-kube-controllers-7c8984549d-6jvwp 1/1 Running 0 5d20h

calico-node-5qbvf 1/1 Running 0 5d20h

calico-node-5v6f8 1/1 Running 0 5d20h

calico-node-nkc78 1/1 Running 0 5d20h

calico-node-t8wbf 1/1 Running 0 5d20h

calico-node-xpbf5 1/1 Running 0 5d20h

VII. Verification

1. Create a Deployment and a Service

[root@master01 ~]# kubectl apply -f nginx_test.yaml

deployment.apps/test-deployment-nginx created

service/default-svc-nginx created

[root@master01 ~]#

[root@master01 ~]# cat nginx_test.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: test-deployment-nginx
  namespace: default
spec:
  replicas: 10
  selector:
    matchLabels:
      run: test-deployment-nginx
  template:
    metadata:
      labels:
        run: test-deployment-nginx
    spec:
      containers:
      - name: test-deployment-nginx
        image: nginx:1.7.9
        ports:
        - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: default-svc-nginx
  namespace: default
spec:
  selector:
    run: test-deployment-nginx
  type: ClusterIP
  ports:
  - name: nginx-port
    port: 80
    targetPort: 80

[root@master01 ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

default-busybox-675df76b45-xjtkd 1/1 Running 9 (37h ago) 21d 172.30.3.39 node01 <none> <none>

test-deployment-nginx-5f9c89657d-55cjf 1/1 Running 0 33s 172.30.235.3 master03 <none> <none>

test-deployment-nginx-5f9c89657d-9br8j 1/1 Running 0 33s 172.30.241.68 master01 <none> <none>

test-deployment-nginx-5f9c89657d-cdccb 1/1 Running 0 33s 172.30.196.129 node01 <none> <none>

test-deployment-nginx-5f9c89657d-dx2vh 1/1 Running 0 33s 172.30.140.69 node02 <none> <none>

test-deployment-nginx-5f9c89657d-m796j 1/1 Running 0 33s 172.30.235.4 master03 <none> <none>

test-deployment-nginx-5f9c89657d-nm95d 1/1 Running 0 33s 172.30.241.67 master01 <none> <none>

test-deployment-nginx-5f9c89657d-pp6vb 1/1 Running 0 33s 172.30.196.130 node01 <none> <none>

test-deployment-nginx-5f9c89657d-r5ghr 1/1 Running 0 33s 172.30.59.196 master02 <none> <none>

test-deployment-nginx-5f9c89657d-s5bd8 1/1 Running 0 33s 172.30.59.195 master02 <none> <none>

test-deployment-nginx-5f9c89657d-wwlql 1/1 Running 0 33s 172.30.140.70 node02 <none> <none>

[root@master01 ~]#
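To see at a glance how the ten replicas are spread across nodes, the node names can be counted. Parsing the wide output by column is fragile because RESTARTS may contain spaces (for example `9 (37h ago)`), so this sketch expects one node name per line, as produced by `kubectl get pods -o custom-columns=NODE:.spec.nodeName --no-headers`. `pods_per_node` is a hypothetical name, and the demo feeds it the node names from the listing above.

```bash
#!/bin/bash
# pods_per_node: read one node name per line on stdin, print "node count" pairs.
# (pods_per_node is a hypothetical helper name.)
pods_per_node() {
    sort | uniq -c | awk '{ printf "%s %d\n", $2, $1 }'
}

# Demo with the NODE column of the nginx pods listed above
printf '%s\n' master03 master01 node01 node02 master03 \
              master01 node01 master02 master02 node02 | pods_per_node
```

On the cluster itself the input would come from:
`kubectl get pods -l run=test-deployment-nginx -o custom-columns=NODE:.spec.nodeName --no-headers | pods_per_node`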

2. Verify DNS

[root@master01 ~]# kubectl exec -it default-busybox-675df76b45-xjtkd /bin/bash

kubectl exec [POD] [COMMAND] is DEPRECATED and will be removed in a future version. Use kubectl exec [POD] -- [COMMAND] instead.

OCI runtime exec failed: exec failed: container_linux.go:380: starting container process caused: exec: "/bin/bash": stat /bin/bash: no such file or directory: unknown

command terminated with exit code 126

[root@master01 ~]# kubectl exec -it default-busybox-675df76b45-xjtkd /bin/sh

kubectl exec [POD] [COMMAND] is DEPRECATED and will be removed in a future version. Use kubectl exec [POD] -- [COMMAND] instead.

/ # wget -O index.html 10.105.18.45

Connecting to 10.105.18.45 (10.105.18.45:80)

saving to 'index.html'

index.html 100% |*********************************************************************************************************************************************************| 612 0:00:00 ETA

'index.html' saved

/ # curl http://

/bin/sh: curl: not found

/ # rm -rf index.html

/ # wget -O index.html http://default-svc-nginx.default

Connecting to default-svc-nginx.default (10.105.18.45:80)

saving to 'index.html'

index.html 100% |*********************************************************************************************************************************************************| 612 0:00:00 ETA

'index.html' saved

/ # ls

bin dev etc home index.html proc root sys tmp usr var

/ # ping default-svc-nginx

PING default-svc-nginx (10.105.18.45): 56 data bytes

64 bytes from 10.105.18.45: seq=0 ttl=64 time=0.136 ms

^C

--- default-svc-nginx ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max = 0.136/0.136/0.136 ms

/ # exit

[root@master01 ~]#

As shown above, a pod can reach a Service in its own namespace by the bare service name, and a Service in another namespace as serviceName.NAMESPACE.
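The short names work because cluster DNS expands them to a fully qualified name of the form `service.namespace.svc.<dnsDomain>`. A tiny sketch that builds that name is given here; `svc_fqdn` is a hypothetical helper, and `cluster.local` is the `dnsDomain` set in the kubeadm configuration of this article.

```bash
#!/bin/bash
# svc_fqdn NAME [NAMESPACE] [DNS_DOMAIN]: print the fully qualified Service name.
# Defaults: namespace "default", domain "cluster.local" (the dnsDomain used in this article).
# (svc_fqdn is a hypothetical helper name.)
svc_fqdn() {
    local name=$1 namespace=${2:-default} domain=${3:-cluster.local}
    printf '%s.%s.svc.%s\n' "$name" "$namespace" "$domain"
}

svc_fqdn default-svc-nginx default
```

From inside the busybox pod, `wget -O index.html "http://$(svc_fqdn default-svc-nginx default)"` would be the fully qualified equivalent of the short-name request used above.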

3. Check the cluster status

[root@master01 ~]# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-2 Healthy {"health":"true","reason":""}

etcd-0 Healthy {"health":"true","reason":""}

etcd-1 Healthy {"health":"true","reason":""}

[root@master01 ~]# kubectl get node -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

master01 Ready control-plane,master 21d v1.23.1 100.109.86.40 <none> CentOS Linux 8 4.18.0-305.3.1.el8.x86_64 docker://20.10.12

master02 Ready control-plane,master 21d v1.23.1 100.109.86.41 <none> CentOS Linux 8 4.18.0-305.3.1.el8.x86_64 docker://20.10.12

master03 Ready control-plane,master 21d v1.23.1 100.109.86.42 <none> CentOS Linux 8 4.18.0-305.3.1.el8.x86_64 docker://20.10.12

node01 Ready <none> 21d v1.23.1 100.109.86.43 <none> CentOS Linux 8 4.18.0-305.3.1.el8.x86_64 docker://20.10.12

node02 Ready <none> 21d v1.23.1 100.109.79.13 <none> CentOS Linux 8 4.18.0-305.3.1.el8.x86_64 docker://20.10.12

[root@master01 ~]#

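VIII. Summary

Installing Kubernetes v1.23.1 with kubeadm is fairly straightforward, but two points deserve attention. First, for a highly available cluster, set controlPlaneEndpoint (for example controlPlaneEndpoint: 192.168.86.40:6443) in the configuration file passed to kubeadm init; only then does the init output include the kubeadm join command with the control-plane option for additional masters, alongside the join command for worker nodes. Second, after master01 has been initialized, copy the certificates and private keys generated under pki to the other master nodes before running kubeadm join on them; otherwise the join fails.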