转自: https://blog.minio.io/modern-data-lake-with-minio-part-2-f24fb5f82424

In the first part of this series, we saw why object storage systems like Minio are the perfect approach to build modern data lakes that are agile, cost-effective, and massively scalable.

In this post we’ll learn more about object storage, specifically Minio and then see how to connect Minio with tools like Apache Spark and Presto for analytics workloads.

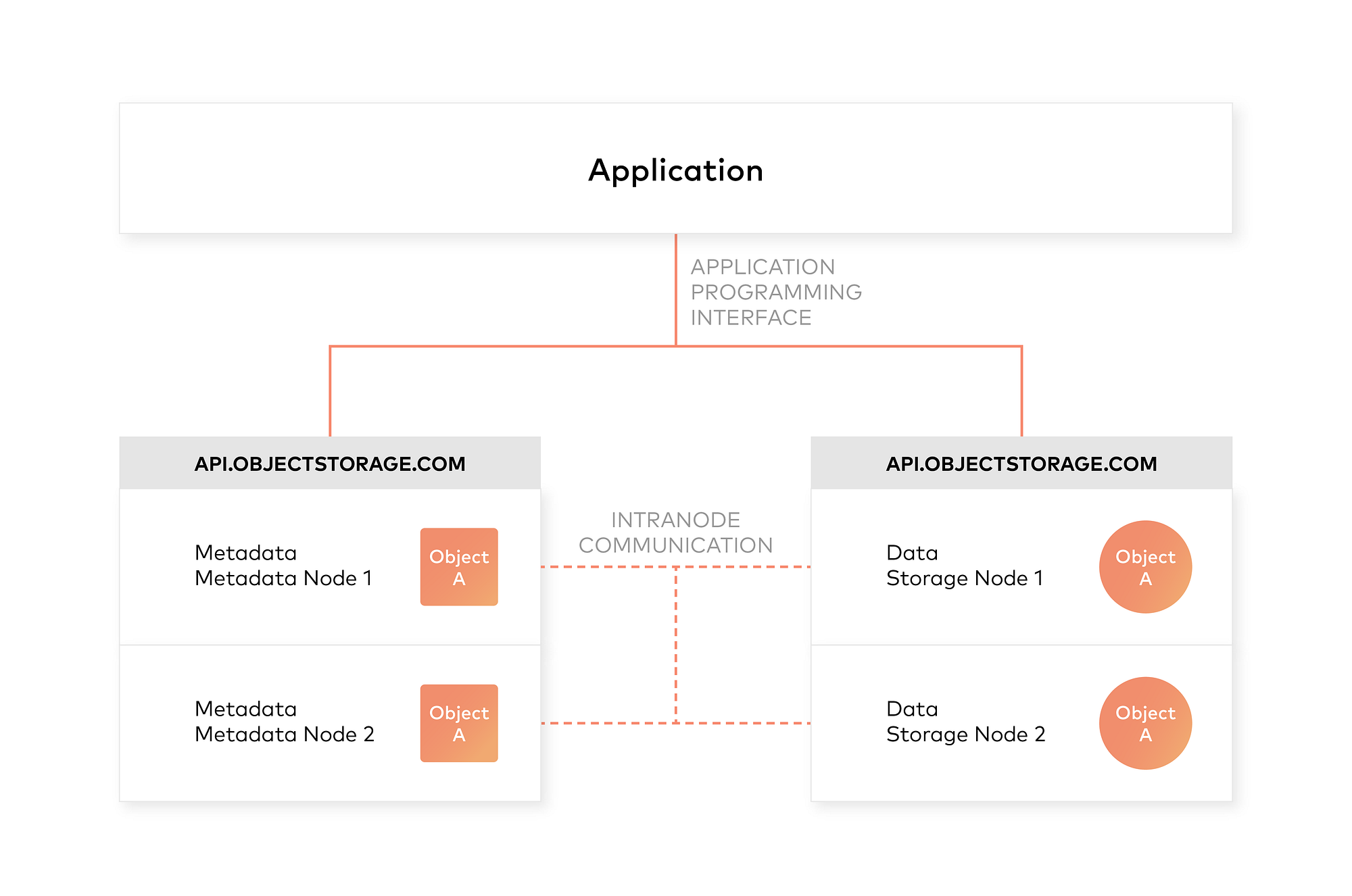

Architecture of an object storage system

One of the design principles of object storage is to abstract some of the lower layers of storage away from the administrators and applications. Thus, data is exposed and managed as objects instead of files or blocks. Objects contain additional descriptive properties which can be used for better indexing or management. Administrators do not have to perform lower-level storage functions like constructing and managing logical volumes to utilize disk capacity or setting RAID levels to deal with disk failure.

Object storage also allows the addressing and identification of individual objects by more than just file name and file path. Object storage adds a unique identifier within a bucket, or across the entire system, to support much larger namespaces and eliminate name collisions.

Inclusion of rich custom metadata within the object

Object storage explicitly separates file metadata from data to support additional capabilities. As opposed to fixed metadata in file systems (filename, creation date, type, etc.), object storage provides for full function, custom, object-level metadata in order to:

- Capture application-specific or user-specific information for better indexing purposes

- Support data-management policies (e.g. a policy to drive object movement from one storage tier to another)

- Centralized management of storage across many individual nodes and clusters

- Optimize metadata storage (e.g. encapsulated, database or key value storage) and caching/indexing (when authoritative metadata is encapsulated with the metadata inside the object) independently from the data storage (e.g. unstructured binary storage)

Integrating Minio Object Store with Hadoop 3

Use Minio documentation to deploy Minio on your preferred platform. Then, follow the steps to see how to integrate Minio with Hadoop.

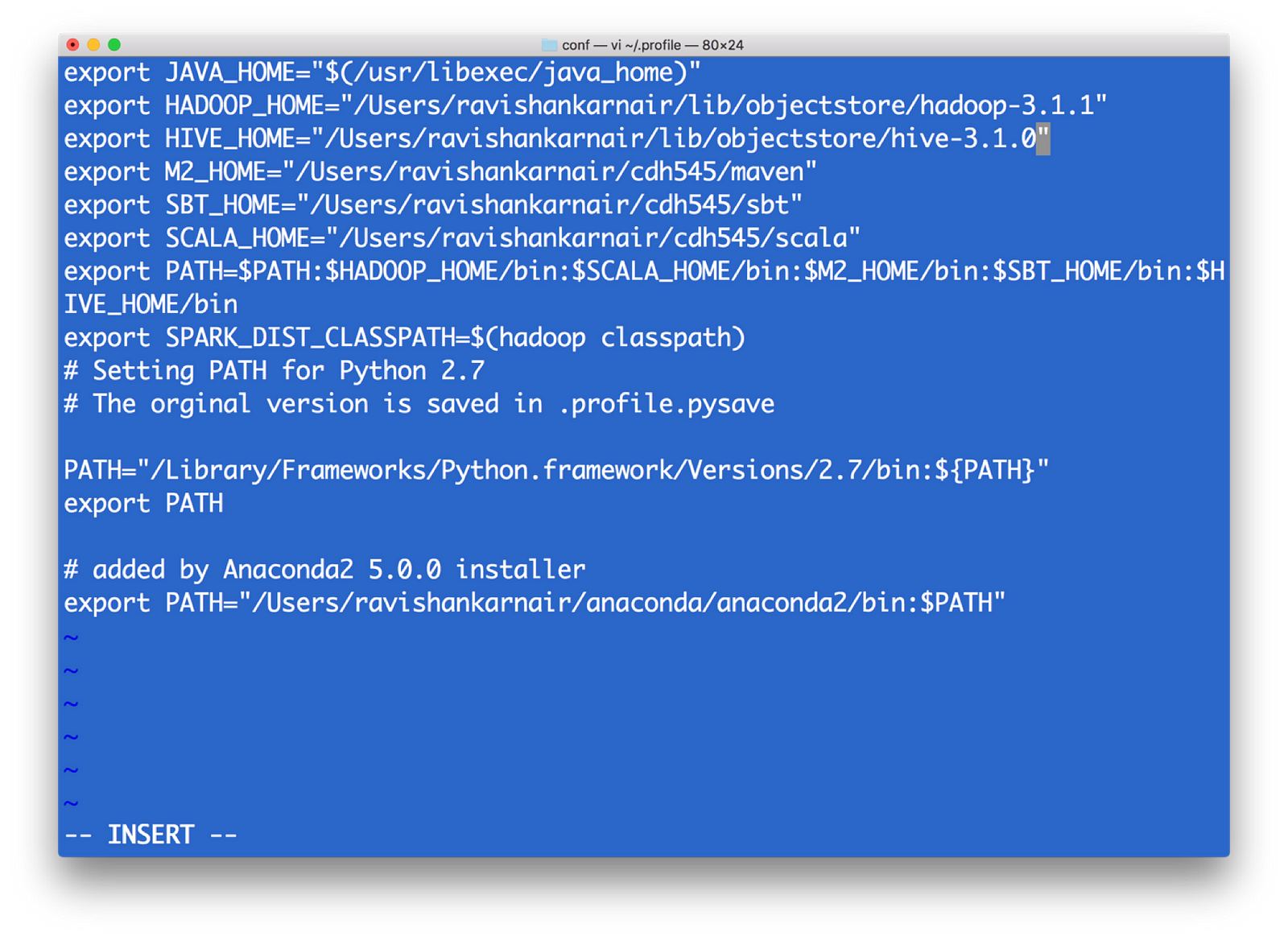

- First, install Hadoop 3.1.0. Download the tar and extract to a folder. Create either .bashrc or .profile (In Windows, path and environment variables) as below

2) All configurations for a Hadoop related with file system is kept in a directory etc/hadoop(this is relative from the root of your installation — I will call root installation directory as $HADOOP_HOME). We need to make changes in core-site.xml to point the new file system. Note that when we write hdfs:// for accessing the Hadoop file system, by default it connects to the underlying default FS configured in core-site.xml, which is managed as blocks of 128MB (Hadoop versions 1 was 64 MB), essentially indicating that hdfs is block storage. Now that we have Object Storage, the protocol to access cannot be hdfs://. Instead we need to use another protocol, and most commonly used one is s3a . S3 stands for Simple Storage Service, created by Amazon and is widely used as access protocol for object storage. Hence in core-site.xml, we need to write the info on what the underlying code should do when the command starts with s3a. Refer the below file for details and update it into core-site.xml of your Hadoop installation.

<property>

<name>fs.s3a.endpoint</name>

<description>AWS S3 endpoint to connect to.</description><value>http://localhost:9000</value>

<! — NOTE: Above value is obtained from the minio start window — -></property>

<property>

<name>fs.s3a.access.key</name>

<description>AWS access key ID.</description>

<value>UC8VCVUZMY185KVDBV32</value>

<! — NOTE: Above value is obtained from the minio start window — -></property>

<property>

<name>fs.s3a.secret.key</name>

<description>AWS secret key.</description>

<value>/ISE3ew43qUL5vX7XGQ/Br86ptYEiH/7HWShhATw</value>

<! — NOTE: Above value is obtained from the minio start window — ->

</property>

<property>

<name>fs.s3a.path.style.access</name>

<value>true</value>

<description>Enable S3 path style access.</description>

</property>

3) To enable s3a, we need some jar files to be copied to $HADOOP_HOME/share/lib/common/lib directory. Note that you also need to match your Hadoop version with the jar files you download. I used Hadoop version 3.1.1, hence I used hadoop-aws-3.1.1.jar. These are the jar files needed :

hadoop-aws-3.1.1.jar //should match your Hadoop version

aws-java-sdk-1.11.406.jar

aws-java-sdk-1.7.4.jar

aws-java-sdk-core-1.11.234.jar

aws-java-sdk-dynamodb-1.11.234.jar

aws-java-sdk-kms-1.11.234.jar

aws-java-sdk-s3–1.11.406.jar

httpclient-4.5.3.jar

joda-time-2.9.9.jar

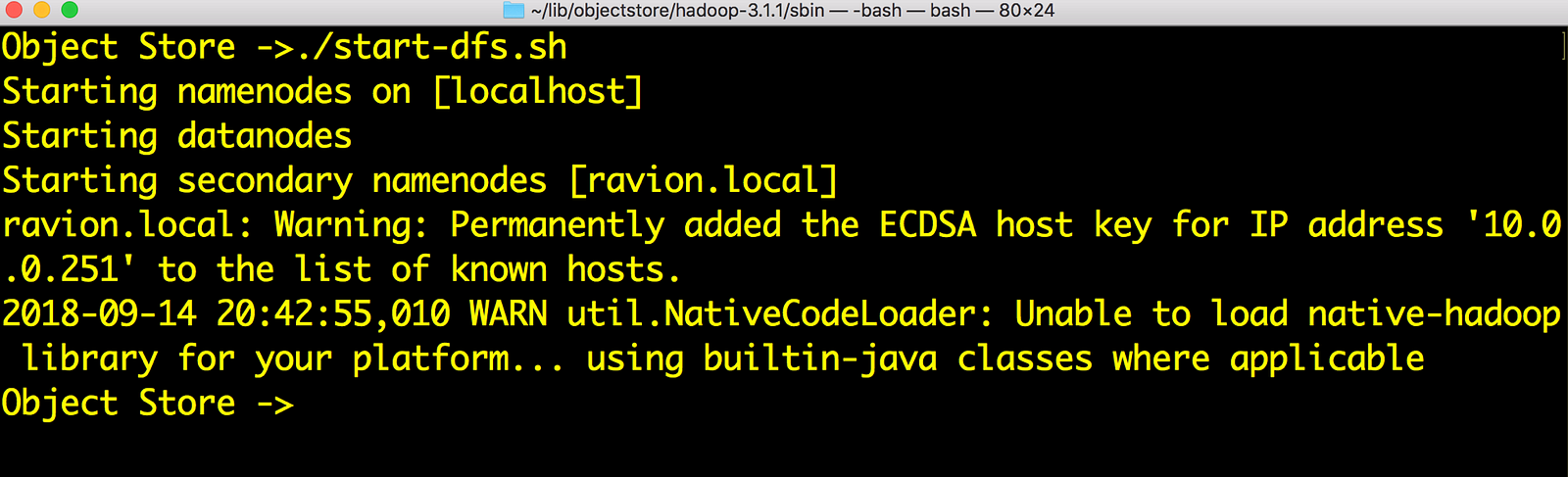

4) Now start dfs as usual. Without namenode, we can’t connect to s3a. The script to start is in $HADOOP_HOME/sbin directory. Note that this is needed only if you want to use hdfs filesystem as well as s3a

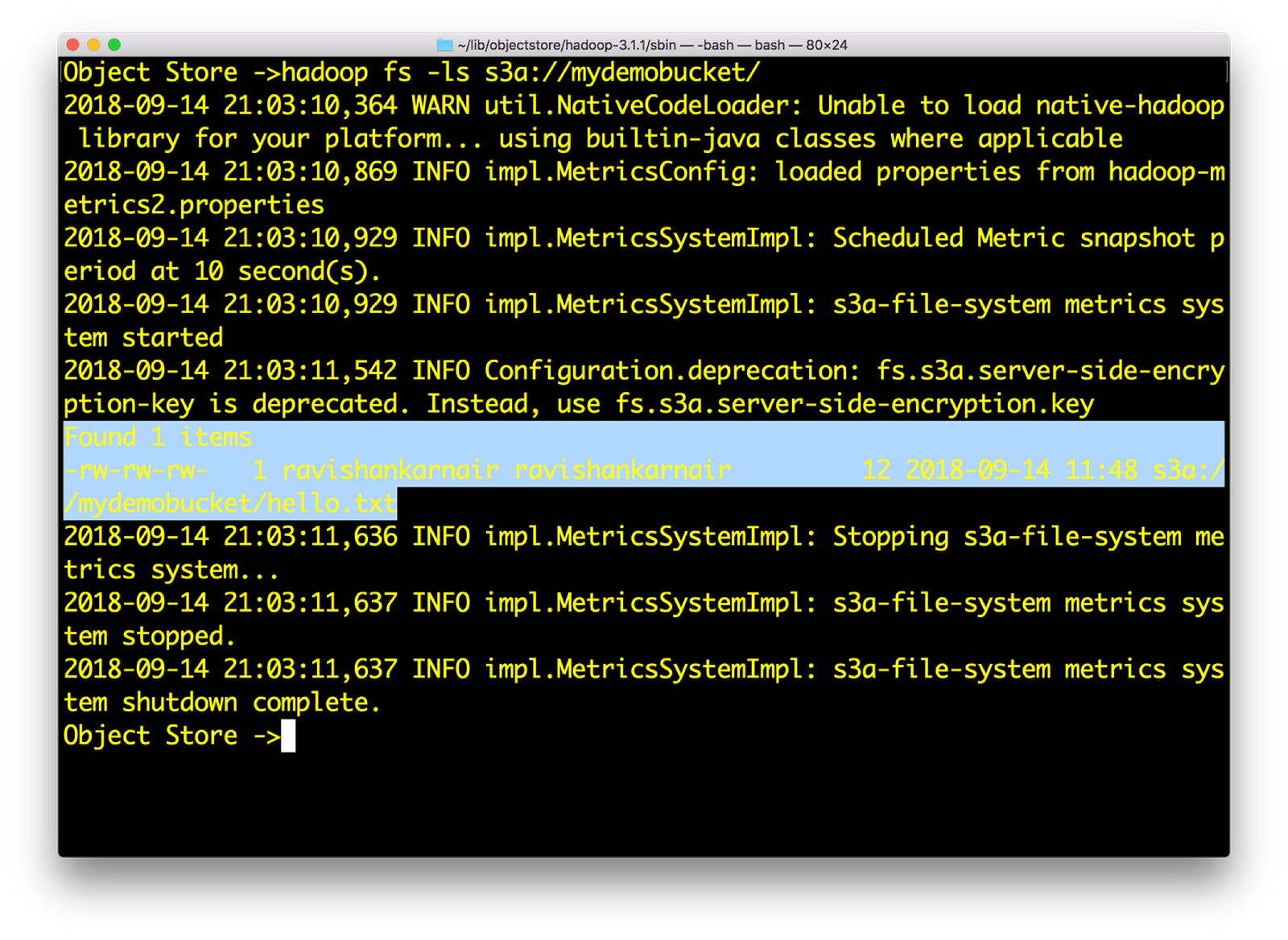

5) Let’s play with s3a://

6) Now create a sample file res.txt locally with random text. Then enter

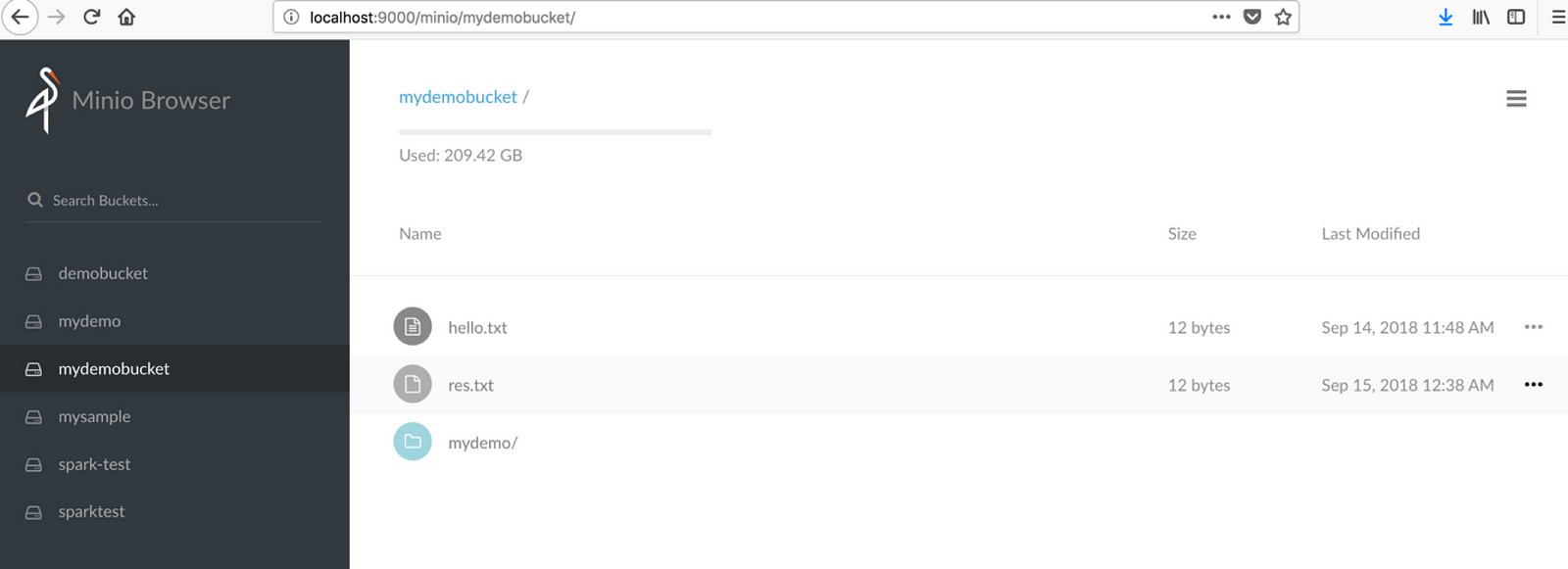

hadoop fs –put res.txt s3a://mydemobucket/res.txt

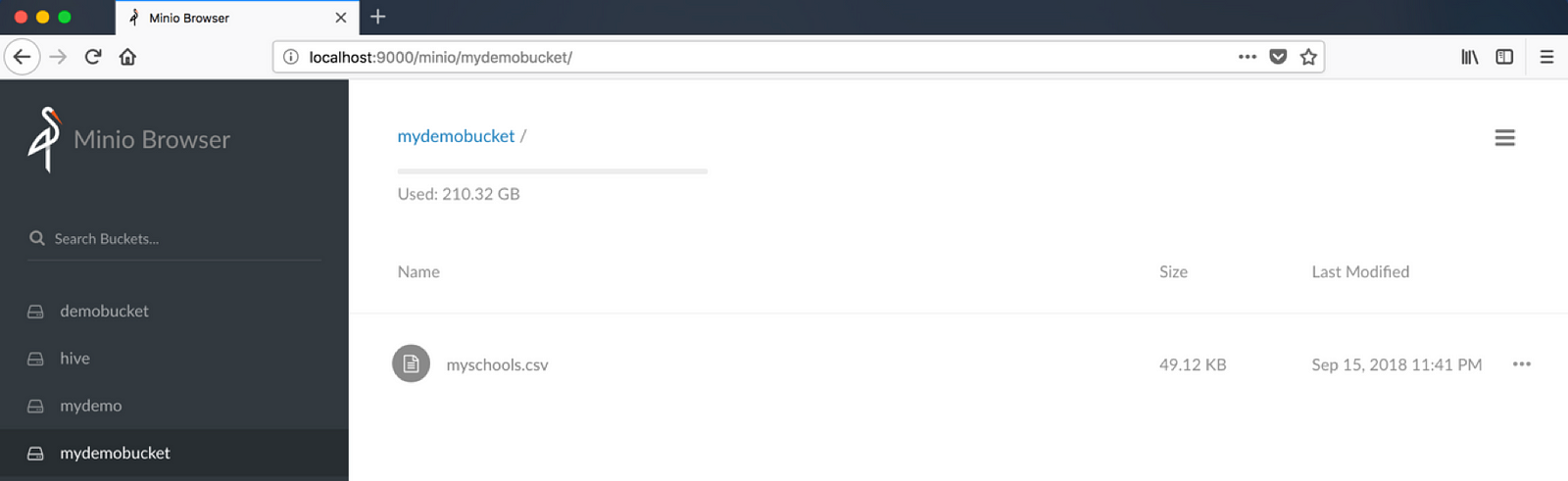

Check the Minio browser now, you should see the file in bucket mydemobucket

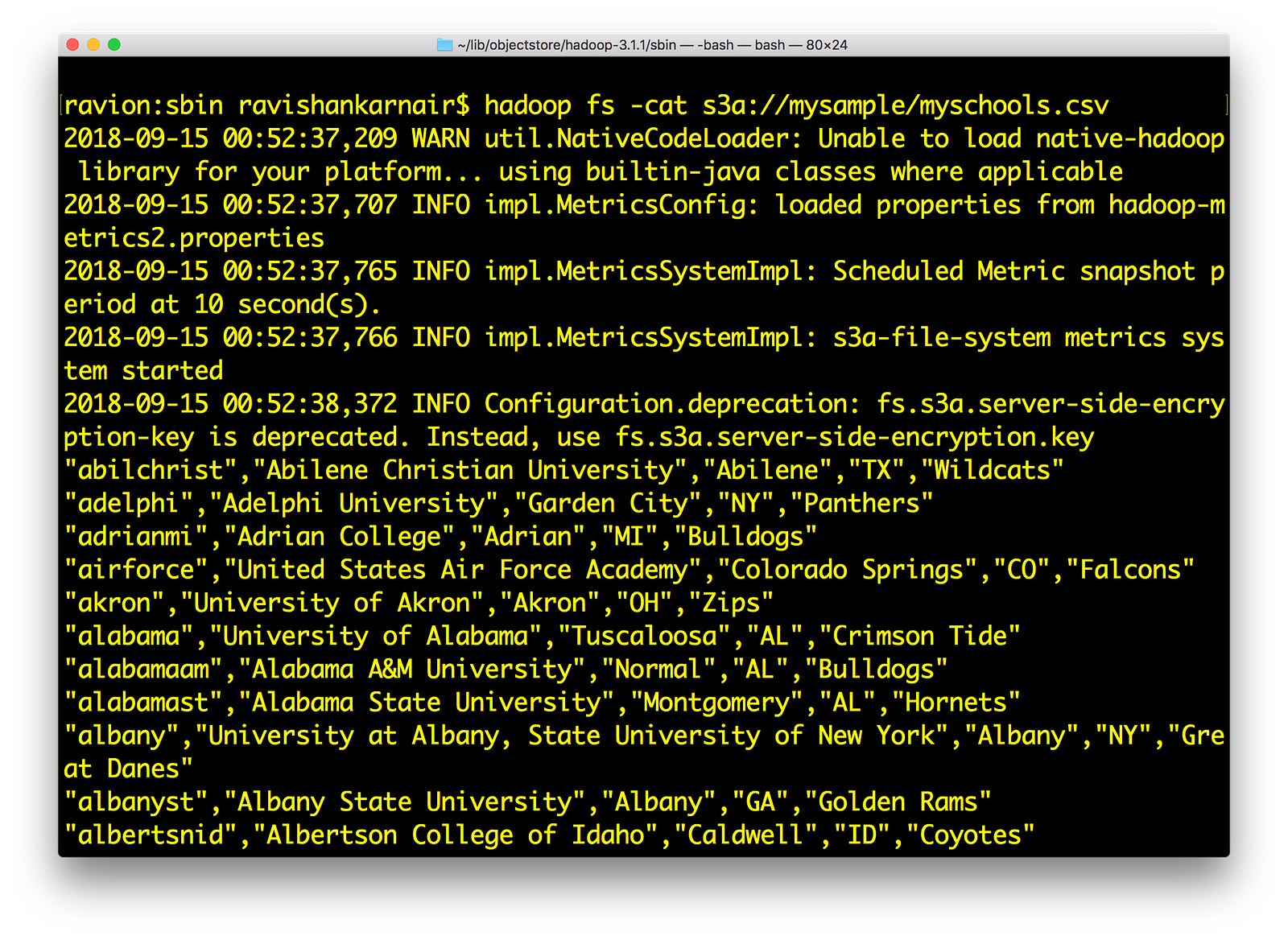

Also , try hadoop fs –cat command on any file that you may have:

If all this works for you, we have successfully integrated Minio with Hadoop using s3a:// .

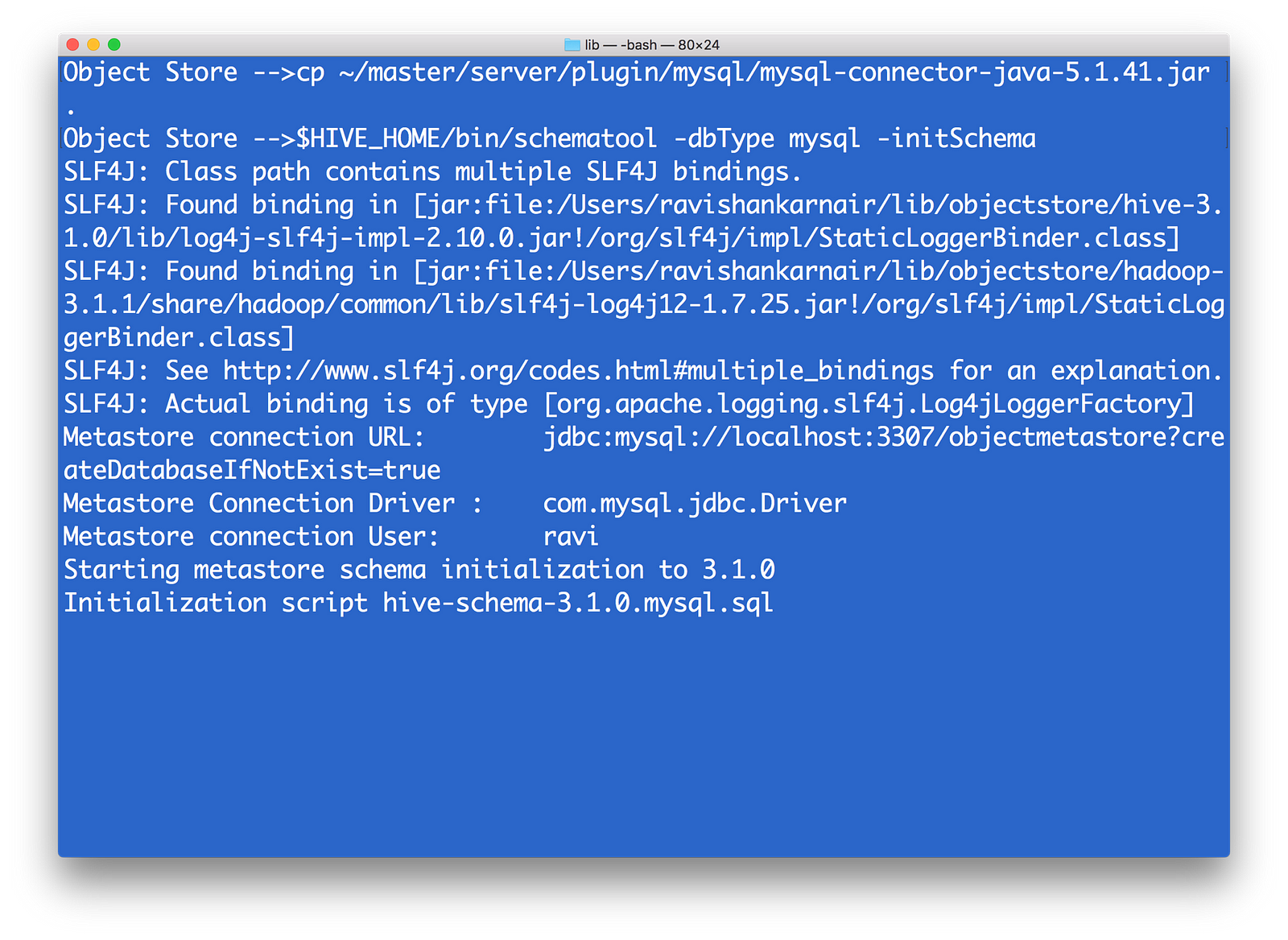

Integrating Minio Object Store with HIVE 3.1.0

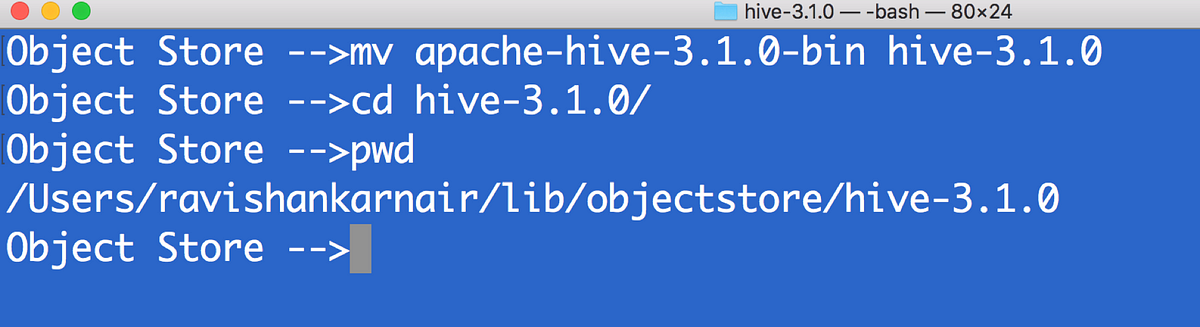

- Download latest version of HIVE compatible with Apache Hadoop 3.1.0. I have used apache-hive-3.1.0. Untar the downloaded bin file. Lets call untarred directory as hive-3.1.0

2) First copy all files that you used step 3 above (in the Hadoop integration section) to $HIVE_HOME/lib directory

3) Create hive-site.xml. Make sure you have MYSQL running to be used a metastore. Create a user (in this example ravi )

<configuration>

<property>

<name>javax.jdo.option.ConnectionURL</name><value>jdbc:mysql://localhost:3307/objectmetastore?createDatabaseIfNotExist=true</value>

<description>metadata is stored in a MySQL server</description>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

<description>MySQL JDBC driver class</description>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>ravi</value>

<description>user name for connecting to mysql server</description>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>ravi</value>

<description>password for connecting to mysql server</description>

</property>

<property>

<name>fs.s3a.endpoint</name>

<description>AWS S3 endpoint to connect to.</description><value>http://localhost:9000</value>

</property>

<property>

<name>fs.s3a.access.key</name>

<description>AWS access key ID.</description>

<value>UC8VCVUZMY185KVDBV32</value>

</property>

<property>

<name>fs.s3a.secret.key</name>

<description>AWS secret key.</description>

<value>/ISE3ew43qUL5vX7XGQ/Br86ptYEiH/7HWShhATw</value>

</property>

<property>

<name>fs.s3a.path.style.access</name>

<value>true</value>

<description>Enable S3 path style access.</description>

</property>

<property>

<name>hive.metastore.warehouse.dir</name>

<value>s3a://hive/warehouse</value>

</property>

</configuration>

4) Since its initial time, we need to run metatool once. Add $HIVE_HOME and bin to environment variables. Refer to step 1 above.

5) In minio, create a bucket called hive and a directory warehouse. We must have s3a://hive/warehouse available.

6) Source your ~/.profile. Please copy the MYSQL driver (mysql-connector-java-x.y.z.jar)to $HIVE_HOME/lib. Then run metatool as

7) Start Hive server and Meta store by

$HIVE_HOME/bin/hiveserver2 start

$HIVE_HOME/bin/hiveserver2 --service metastore

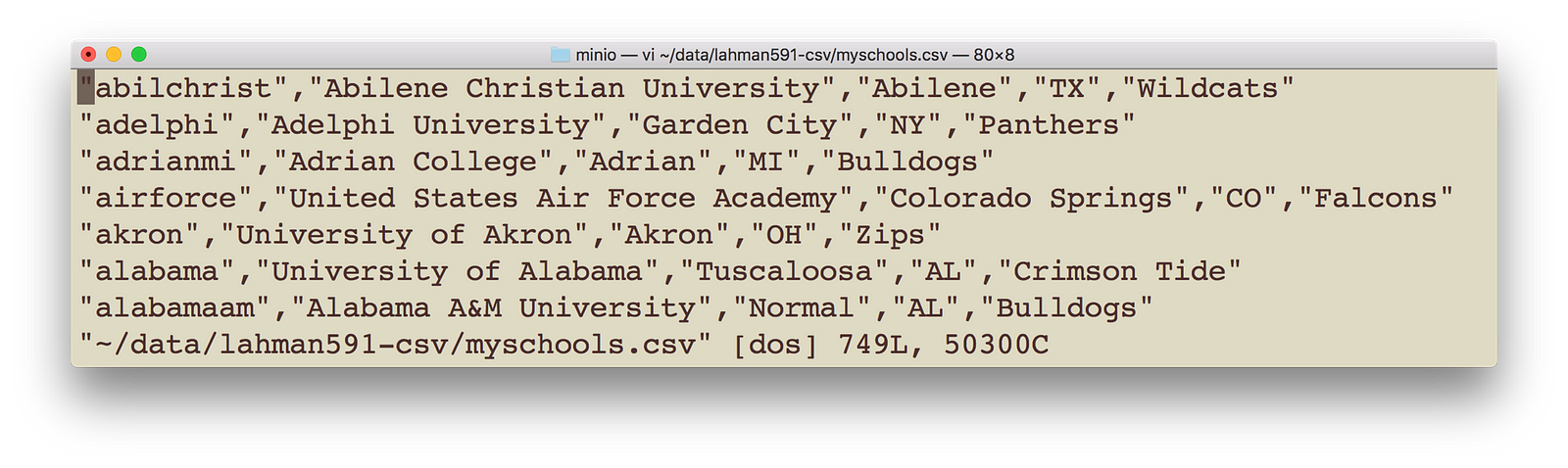

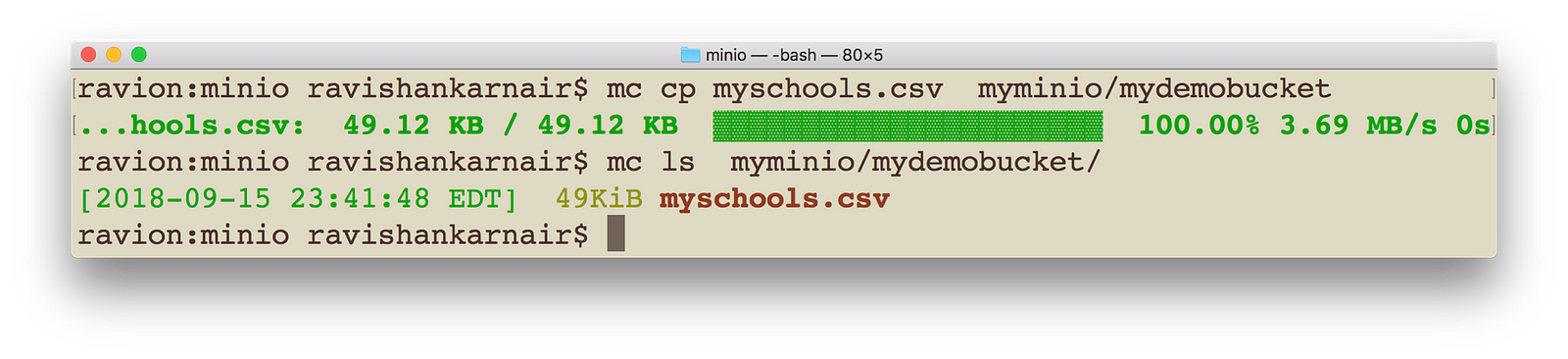

8) Copy some data file to the mydemobucket that we created earlier. You may use minio client in step 6 above. I have myschools.csv, which contains schoolid, schoolname, schoolcity, schoolstate and schoolnick. The file is as follows:

Execute following command with appropriate change to your data file location and name. Make sure you bring the data to be copied to your local.

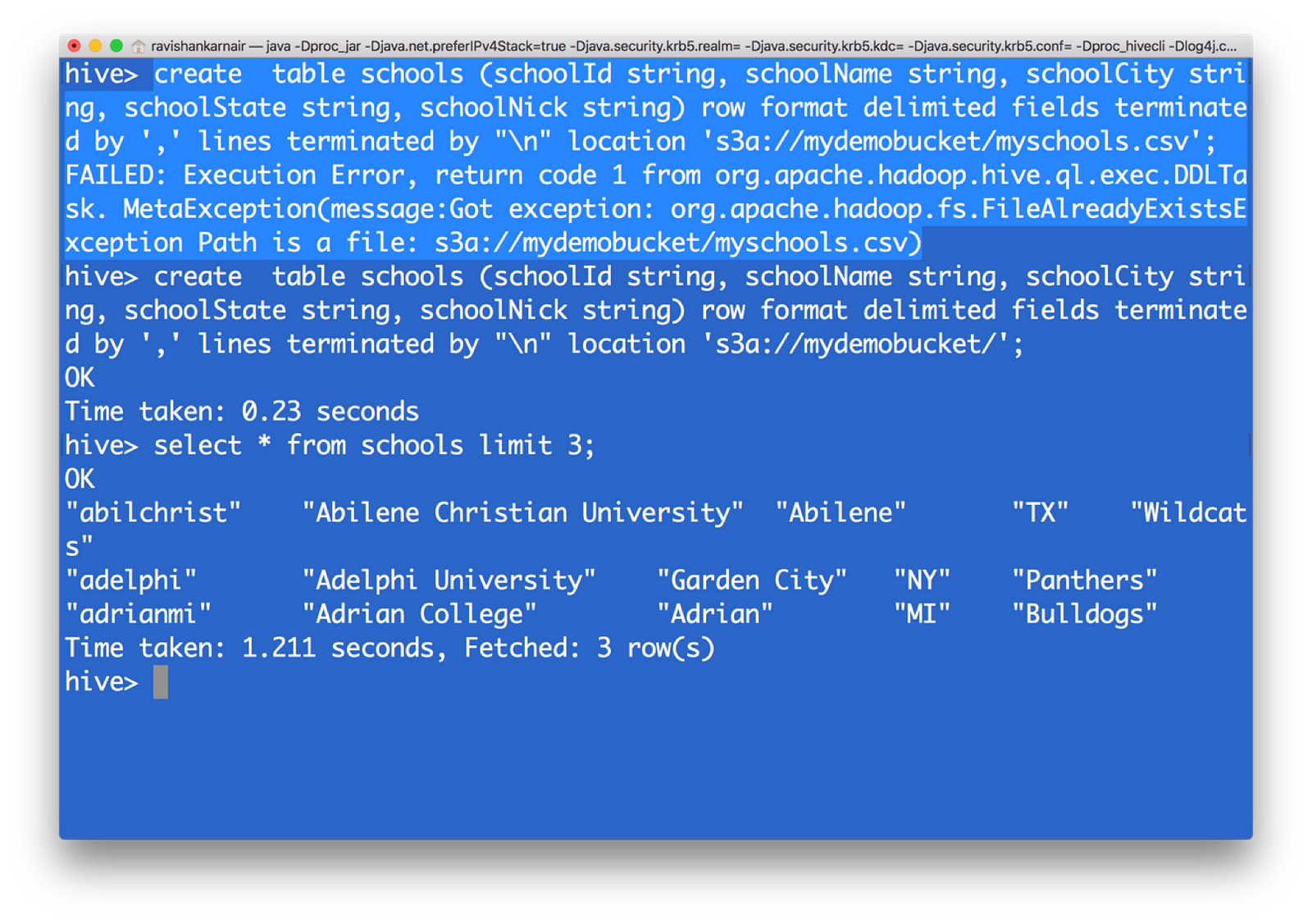

10) Create a HIVE table, with data pointing to s3 now. Please note that you must give upto parent directory, not the file name. I have highlighted the error message you may get when you give filename

create table schools (schoolId string, schoolName string, schoolCity string, schoolState string, schoolNick string) row format delimited fields terminated by ‘,’ lines terminated by “

” location ‘s3a://mydemobucket/’;

You have now created a HIVE table from s3 data. That means, with the underlying file system as S3, we can create a data warehouse without the dependency on HDFS.

Integrating Presto — unified data architecture platform with Minio object storage

You may now require another important component: How to combine data with an already existing system, when you move to Object Storage? You may want to compare with the data you have in a MYSQL database of your organization with the data in HIVE (on S3). Here comes one of the top notch and free (open source) unified data architecture systems in the world — Facebook’s presto.

Now let’s integrate presto with whole system. Download the latest version from https://github.com/prestodb/presto. Follow the deployment instructions. At the minimum, we need the presto server and presto client for accessing the server. Its distributed SQL query engine which allows you to write SQL for getting data from multiple systems with rich set of SQL functions.

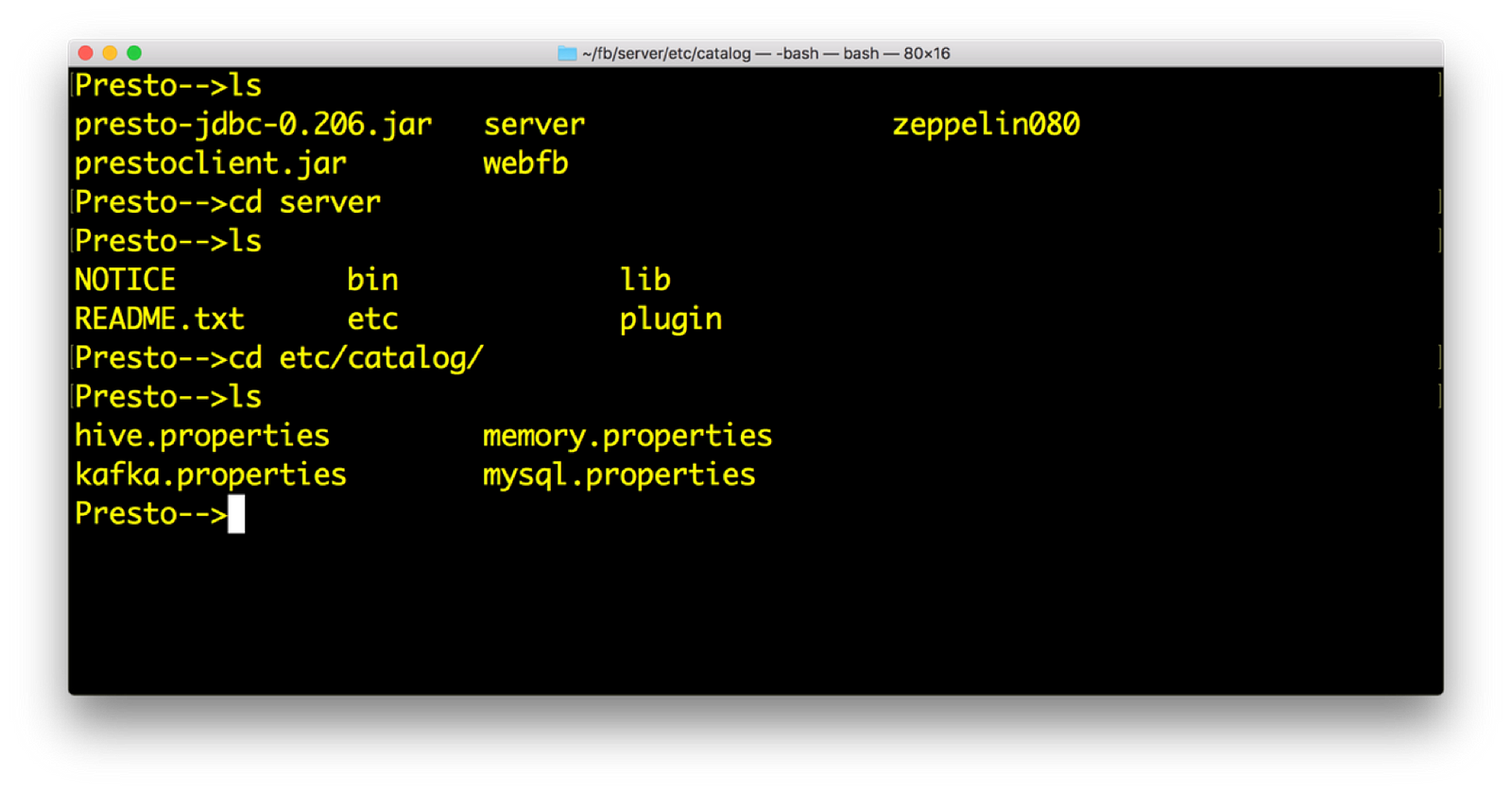

- Install Presto. You will have following directory structure from root. Within catalog directory, you will mention the connectors that you need Presto to query. Connectors are the systems with which Presto can query. Presto comes up with many default connectors.

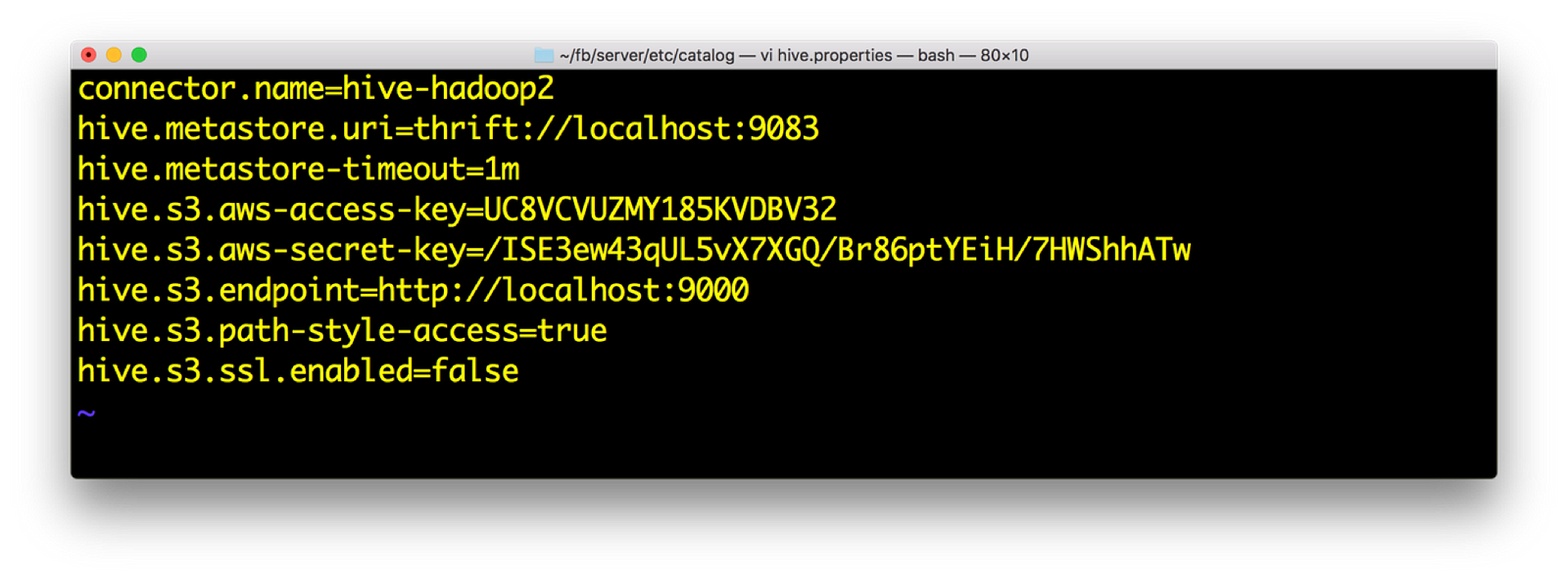

2) Open hive.properties. We should have the following data. Again access key and secret are available from minio launch screen

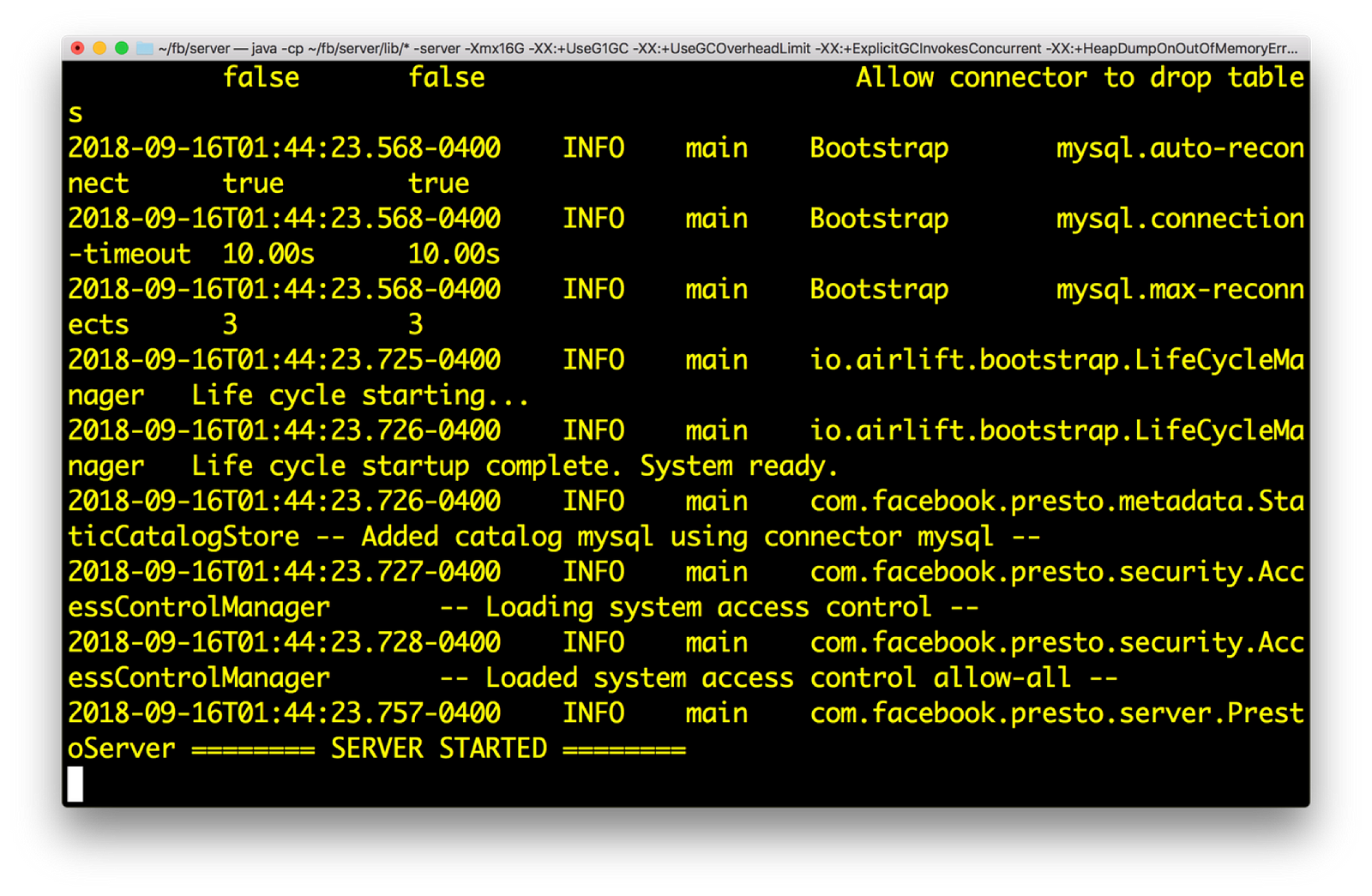

3) Start Presto. You need to do to server directory and execute: bin/launcher run

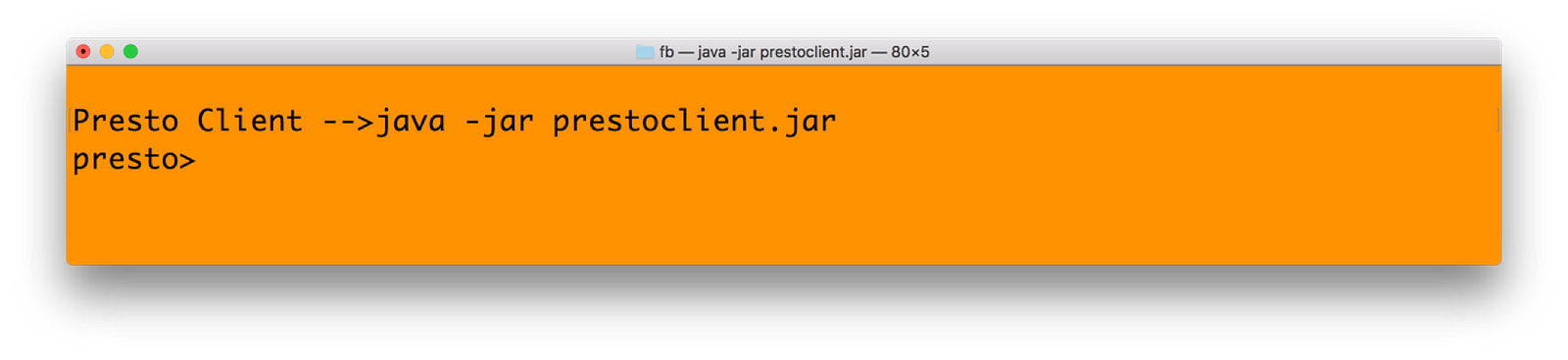

4) Run the presto client. This is done by following command:

java –jar <prestoclient jar name>

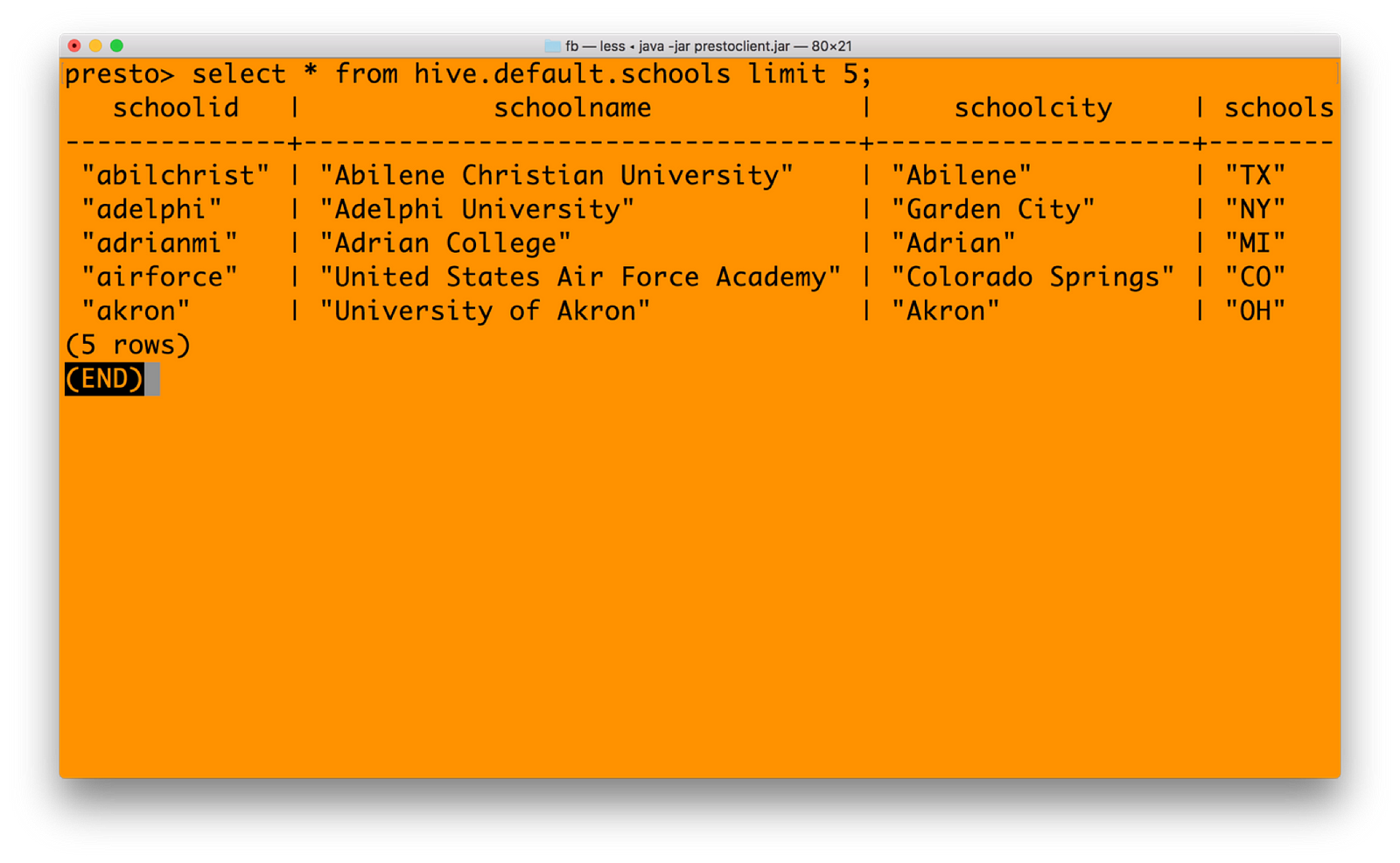

5) In presto, you need mention the catalogname.databasename.tablenameformat for getting data. Our catalog name is HIVE, database is default and table name is schools. Hence

6) You can join mysql catalog with hive catalog now — seamlessly unifying data from multiple systems. See the query below in Presto (just a cross join query for example)

Integrating Spark — Unified Analytics with Minio Object Storage

We have done all pre-requisites for running Spark against Minio, during our previous steps. This include getting right jars and setting core-site.xml in Hadoop 3.1.1. Notable point is, you need to download the Spark version without Hadoop. The reason being, Spark has not yet certified against 3.1.1 Hadoop, and hence including Spark with Hadoop will have many conflicts with jar files. You can download Spark without Hadoop from https://archive.apache.org/dist/spark/spark-2.3.1/

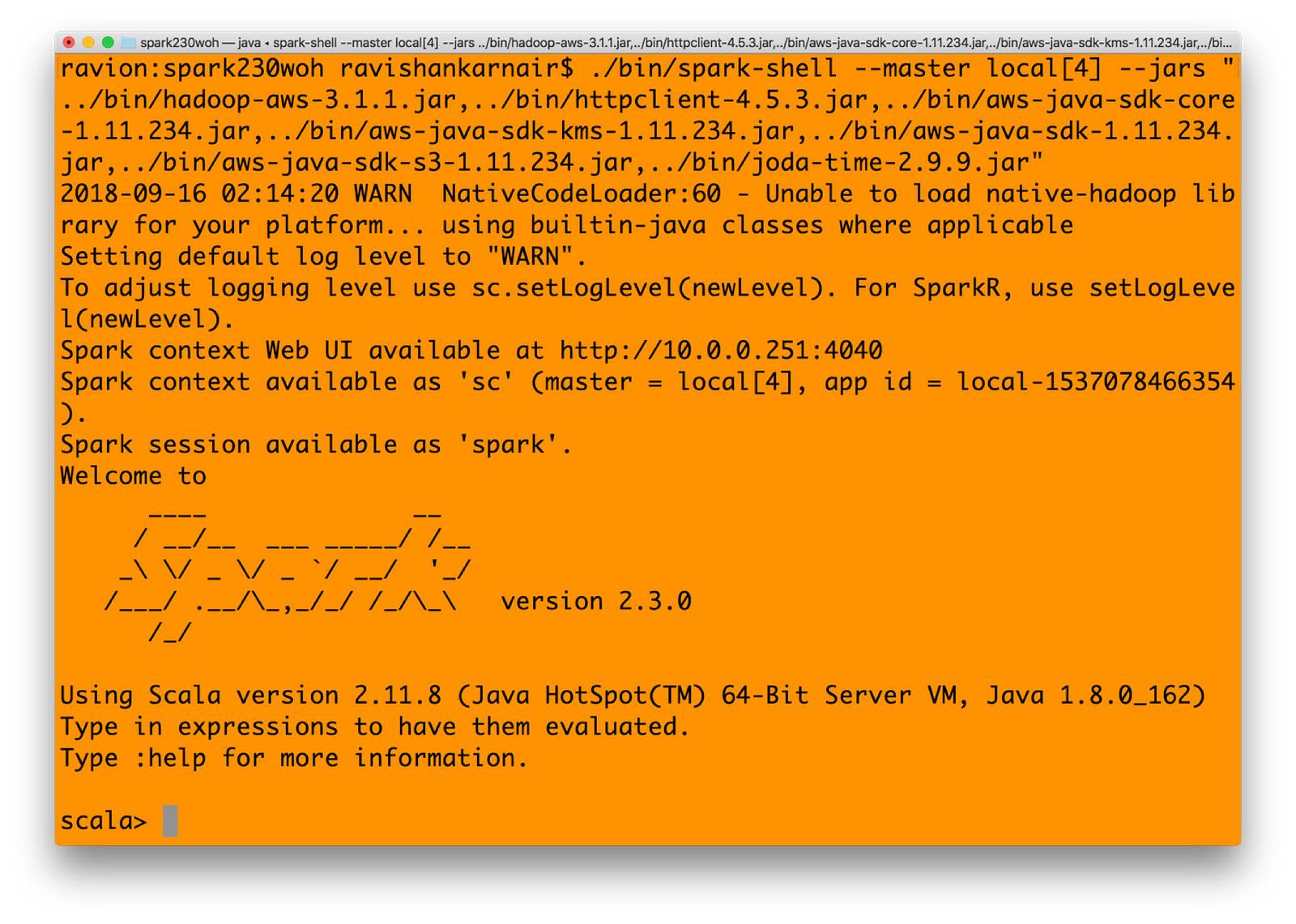

1) Start Spark shell with all jars mentioned earlier in Step 3 in Integration with Hadoop section. You may create a bin directory with all jars copied. Or refer to the jars that you had earlier.

./bin/spark-shell — master local[4] — jars “../bin/hadoop-aws-3.1.1.jar,../bin/httpclient-4.5.3.jar,../bin/aws-java-sdk-core-1.11.234.jar,../bin/aws-java-sdk-kms-1.11.234.jar,../bin/aws-java-sdk-1.11.234.jar,../bin/aws-java-sdk-s3–1.11.234.jar,../bin/joda-time-2.9.9.jar”

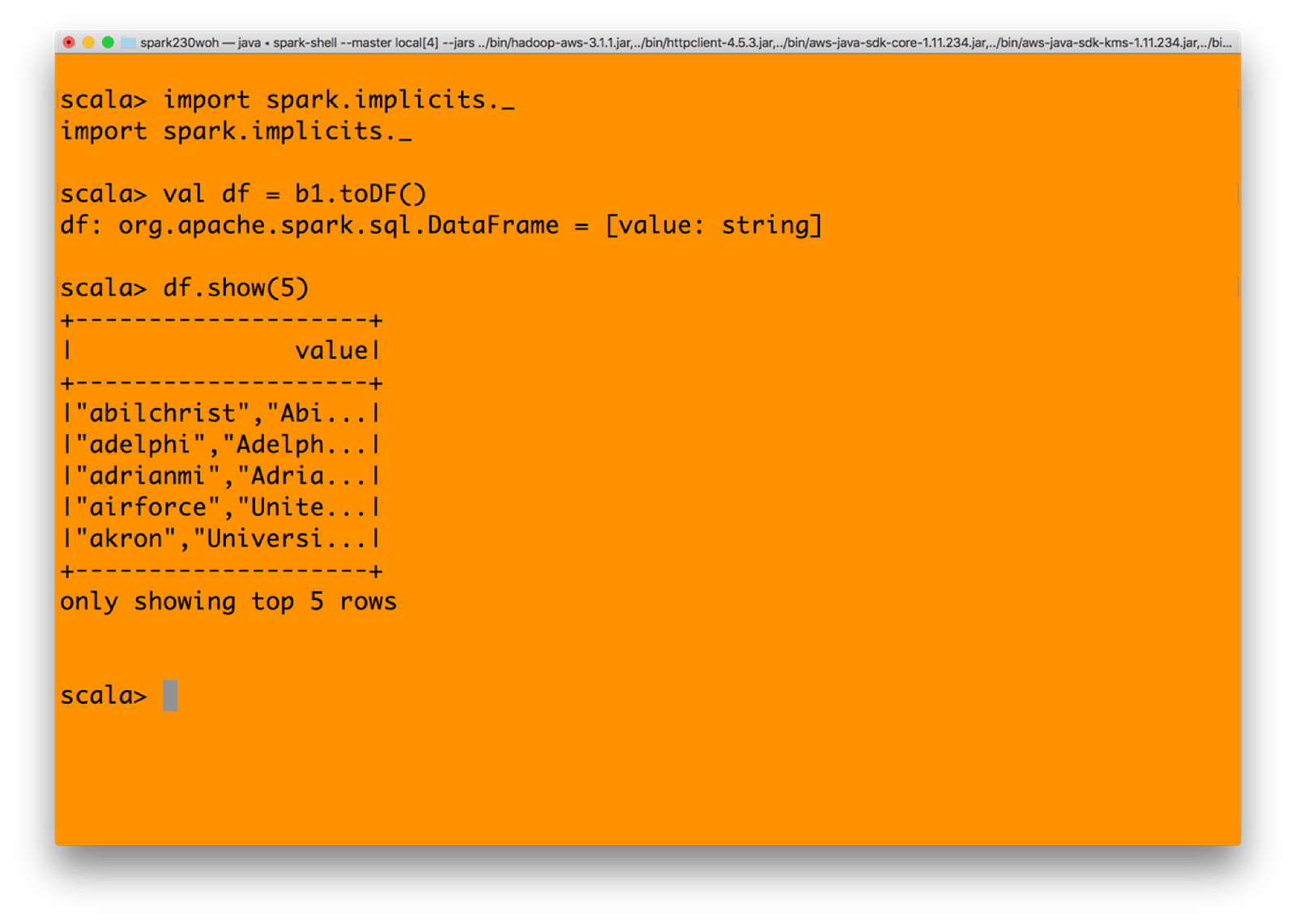

2) Let’s create an RDD in Spark. Type in

spark.sparkContext.hadoopConfiguration.set(“fs.s3a.endpoint”, “http://localhost:9000");

spark.sparkContext.hadoopConfiguration.set(“fs.s3a.access.key”, “UC8VCVUZMY185KVDBV32”);

spark.sparkContext.hadoopConfiguration.set(“fs.s3a.secret.key”, “/ISE3ew43qUL5vX7XGQ/Br86ptYEiH/7HWShhATw”);

spark.sparkContext.hadoopConfiguration.set(“fs.s3a.path.style.access”, “true”);

spark.sparkContext.hadoopConfiguration.set(“fs.s3a.connection.ssl.enabled”, “false”);

spark.sparkContext.hadoopConfiguration.set(“fs.s3a.impl”, “org.apache.hadoop.fs.s3a.S3AFileSystem”);

val b1 = sc.textFile(“s3a://mydemobucket/myschools.csv”)

3) You may convert the RDD to a dataFrame and then get values

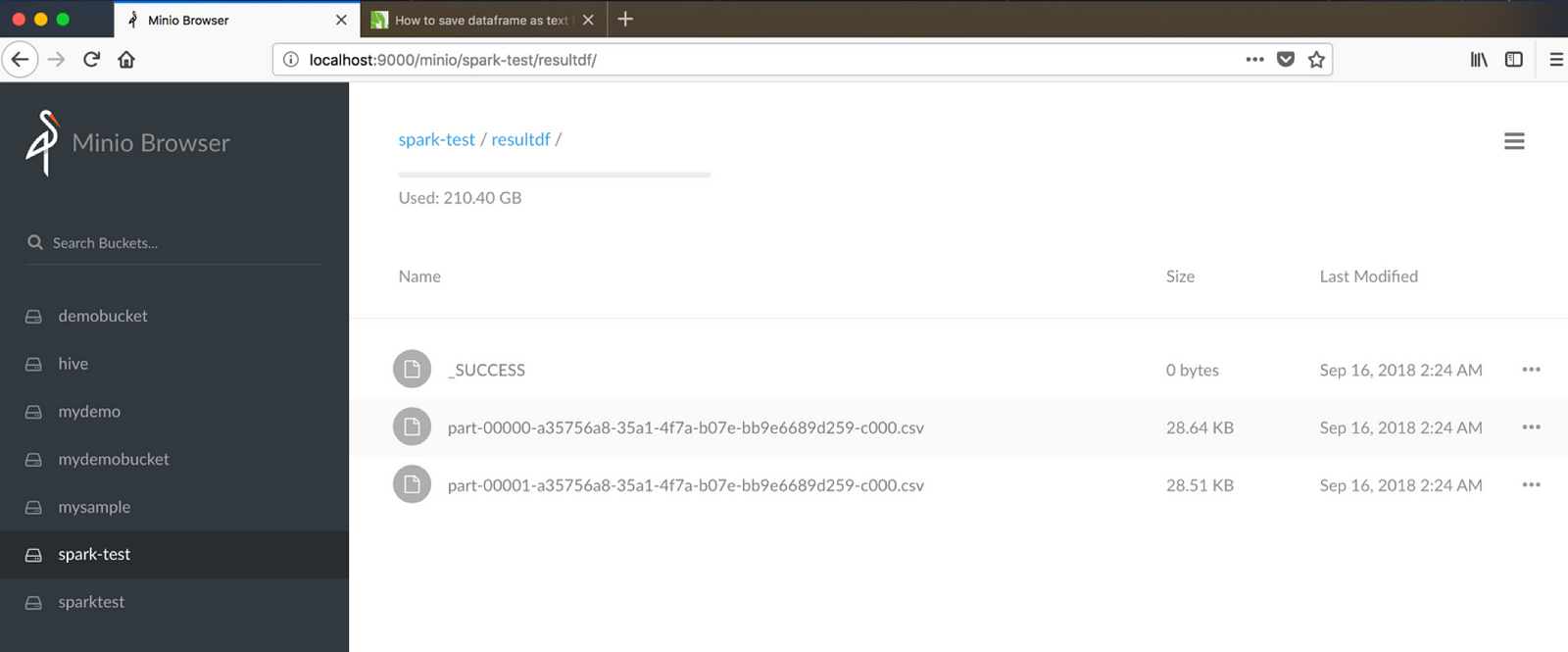

4) And finally, let’s write it back to minio object store with s3 protocol from Spark. Make sure that you have created a bucket named spark-test before executing below command.(Assuming resultdf is a bucket existing)

df.write.format(“csv”).save(“s3a://spark-test/resultdf”)

Integrating Spark — Are We Writing Files or Objects?

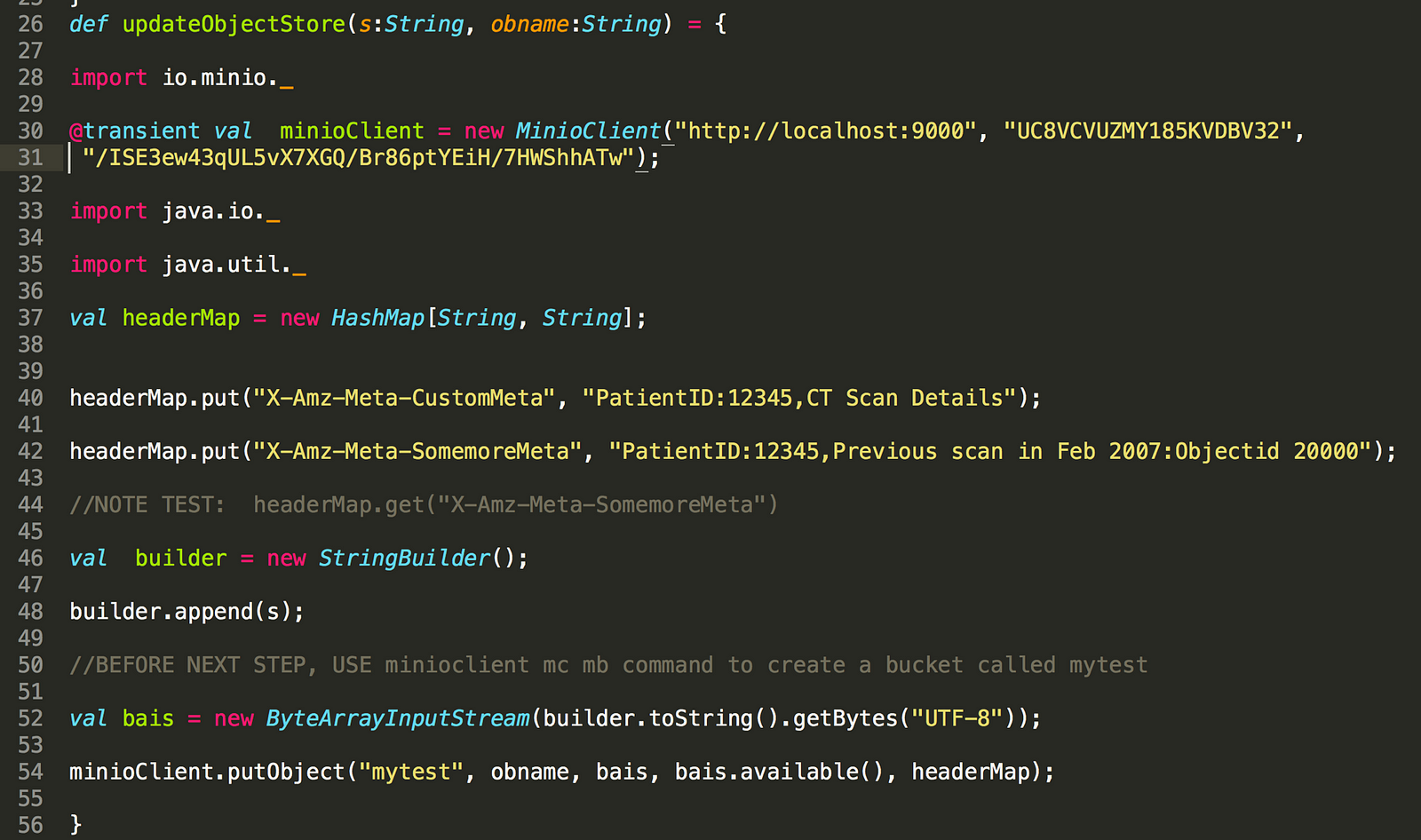

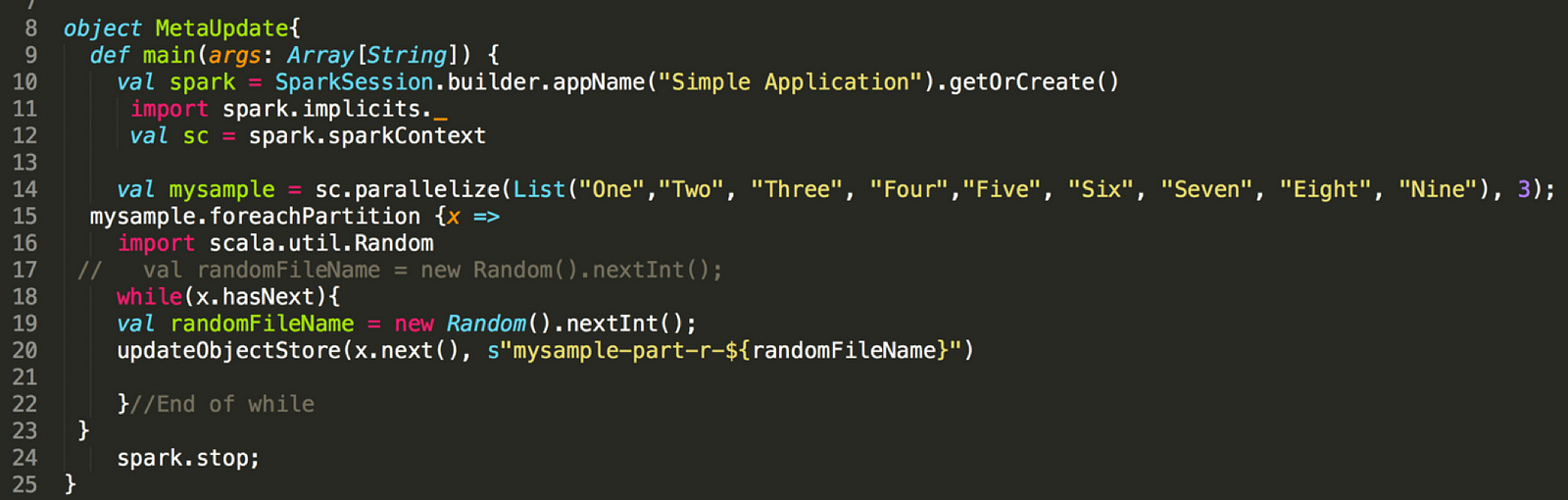

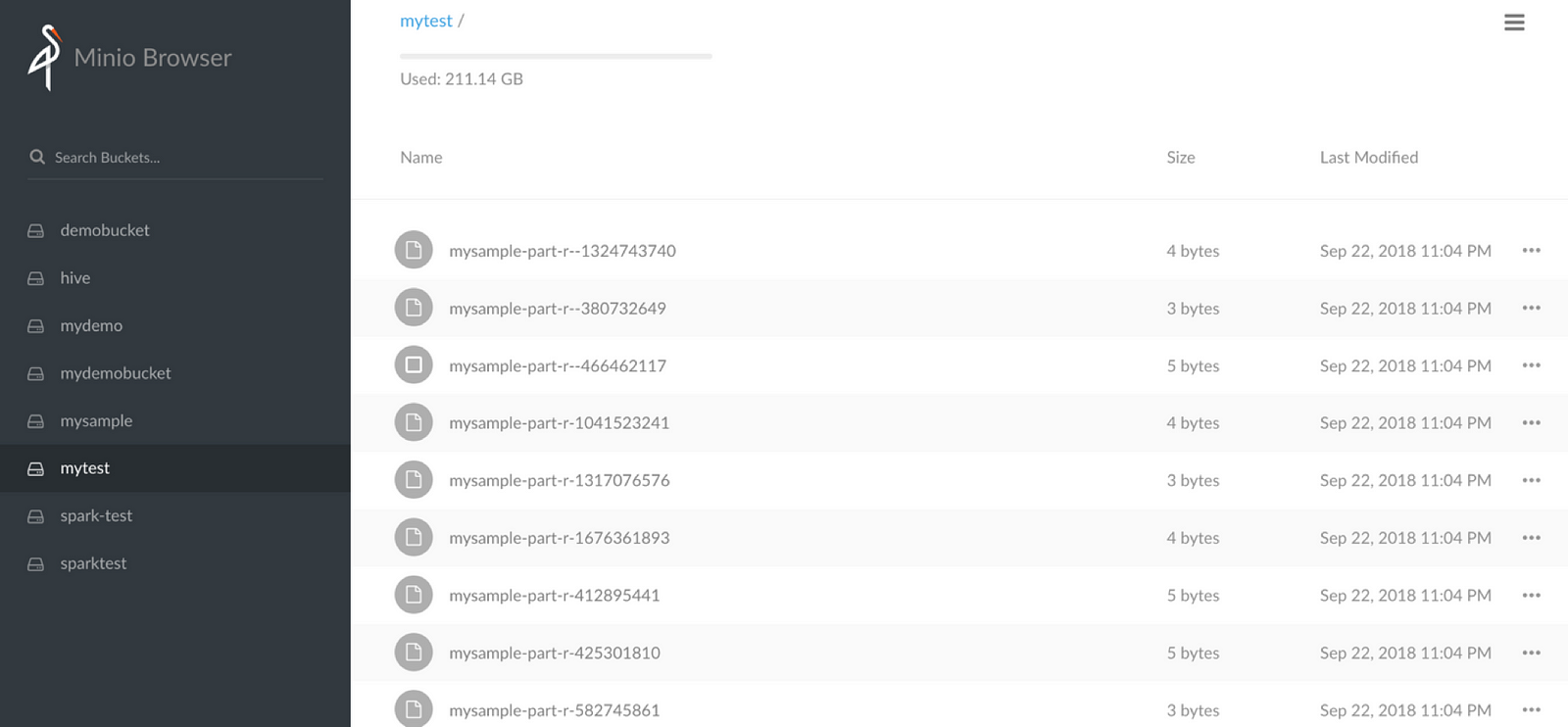

Did you notice that when we write using Spark through S3, the files are written as normal files — which ideally should not be the case when we use an underlying object storage Minio. Can we write the data into Minio as objects? The following code shows how. The trick is to integrate minio client java SDK within your Java or Scala code. Use the foreachPartition function of Spark to write directly into Minio.

First, write a function which updates into Minio.

Now use the above function in your Spark code as below:

Summary

We have shown that Object Storage with Minio is a very effective way to create a data lake. All essential components that a data lake needs are seamlessly working with Minio through s3a protocol.

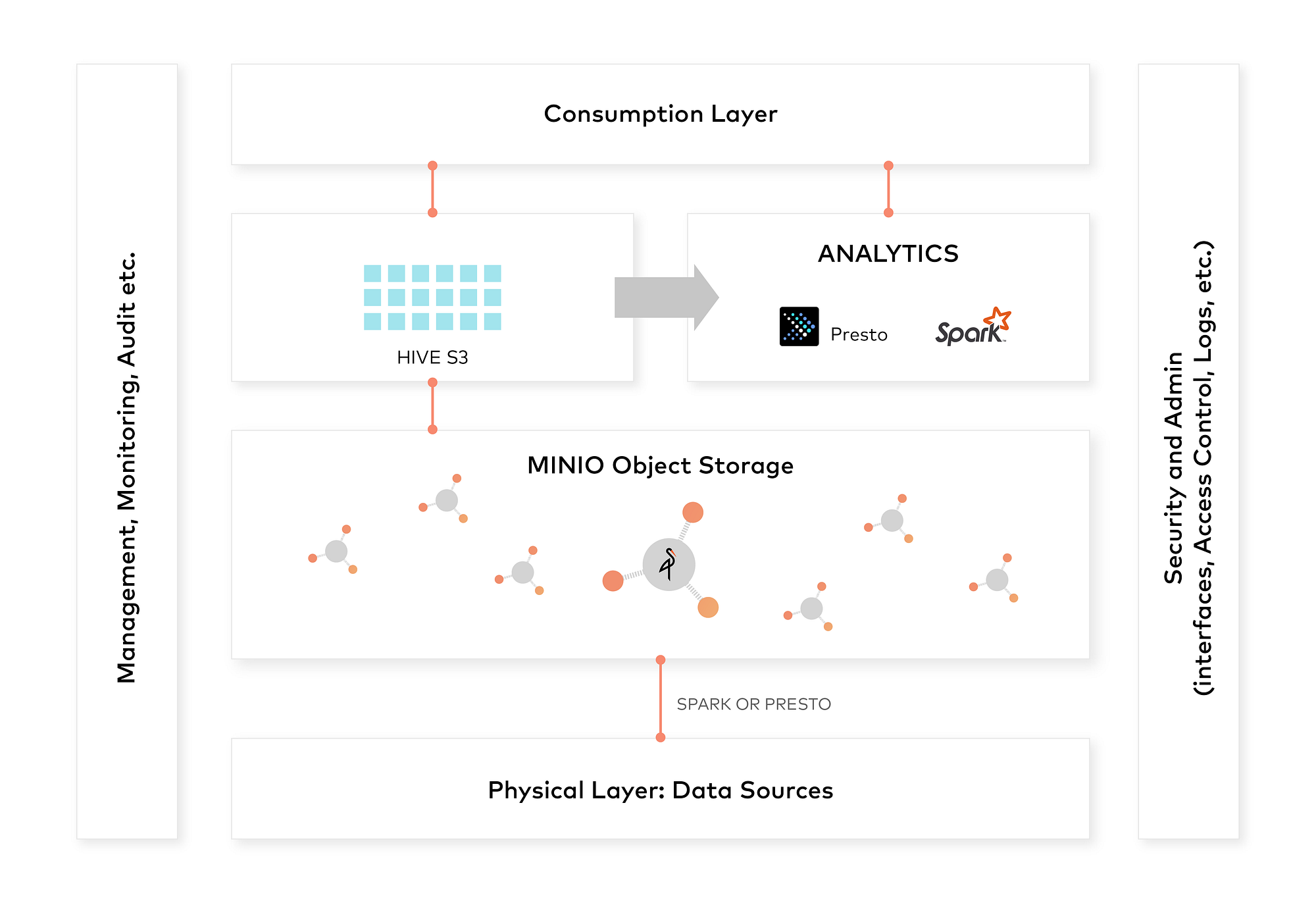

Overall architecture for your data lake can be:

Hope you will be able to explore the concepts and use it across various use cases. Enjoy!

This is a guest post by our community member Ravishankar Nair.