    package com.mieba;

    import us.codecraft.webmagic.Page;
    import us.codecraft.webmagic.Site;
    import us.codecraft.webmagic.processor.PageProcessor;

    public class SinaPageProcessor implements PageProcessor
    {
        // Regex backslashes must be doubled inside Java string literals.
        public static final String URL_LIST = "http://blog\\.sina\\.com\\.cn/s/articlelist_1487828712_0_\\d+\\.html";

        public static final String URL_POST = "http://blog\\.sina\\.com\\.cn/s/blog_\\w+\\.html";

        private Site site = Site.me().setTimeOut(10000).setRetryTimes(3).setSleepTime(1000).setCharset("UTF-8").setUserAgent(
                "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_7_2) AppleWebKit/537.31 (KHTML, like Gecko) Chrome/26.0.1410.65 Safari/537.31");

        @Override
        public Site getSite()
        {
            return site;
        }

        @Override
        public void process(Page page)
        {
            // List page
            if (page.getUrl().regex(URL_LIST).match())
            {
                // Discover follow-up URLs on this page and queue them for crawling
                page.addTargetRequests(page.getHtml().xpath("//div[@class=\"articleList\"]").links().regex(URL_POST).all());
                page.addTargetRequests(page.getHtml().links().regex(URL_LIST).all());
            }
            // Article page
            else
            {
                // Define how to extract the page information, then store it
                String title = page.getHtml().xpath("//div[@class='articalTitle']/h2/text()").toString();
                String content = page.getHtml().xpath("//div[@id='articlebody']//div[@class='articalContent']/text()").toString();
                Article ar = new Article(title, content);
                System.out.println("title:" + ar.getTitle());
                System.out.println(ar.getContent());
                page.putField("repo", ar);
                // page.putField("date", page.getHtml().xpath("//div[@id='articlebody']//span[@class='time SG_txtc']/text()").regex("\\((.*)\\)"));
            }
        }
    }
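The two URL patterns in `SinaPageProcessor` decide whether a page is treated as a list page or an article page, so it can help to check them outside the crawler first. Below is a minimal standalone sketch using only `java.util.regex`. The class name `UrlPatternCheck`, the helper `classify`, and the sample article URL are assumptions made for illustration; they are not part of WebMagic or of the original project. It uses a full match, which may be stricter than the matching WebMagic performs internally.

```java
import java.util.regex.Pattern;

public class UrlPatternCheck
{
    // Same patterns as in SinaPageProcessor; backslashes are doubled for Java string literals.
    static final Pattern URL_LIST =
            Pattern.compile("http://blog\\.sina\\.com\\.cn/s/articlelist_1487828712_0_\\d+\\.html");
    static final Pattern URL_POST =
            Pattern.compile("http://blog\\.sina\\.com\\.cn/s/blog_\\w+\\.html");

    // Hypothetical helper: returns "list", "post", or "other" for a given URL.
    static String classify(String url)
    {
        if (URL_LIST.matcher(url).matches())
        {
            return "list";
        }
        if (URL_POST.matcher(url).matches())
        {
            return "post";
        }
        return "other";
    }

    public static void main(String[] args)
    {
        // The start URL used by the crawler
        System.out.println(classify("http://blog.sina.com.cn/s/articlelist_1487828712_0_1.html"));
        // A made-up article URL in the expected shape (assumption, for illustration only)
        System.out.println(classify("http://blog.sina.com.cn/s/blog_58ae76e80100to5q.html"));
        // Anything else is ignored
        System.out.println(classify("http://blog.sina.com.cn/"));
    }
}
```

Running `main` prints `list`, `post`, and `other` on separate lines. If a pattern is written with single backslashes, as in `"\d+"`, the Java compiler rejects the string literal, which is an easy mistake to make when copying the regex from elsewhere.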

    package com.mieba;

    import java.io.FileWriter;
    import java.io.IOException;
    import java.io.PrintWriter;

    import us.codecraft.webmagic.ResultItems;
    import us.codecraft.webmagic.Task;
    import us.codecraft.webmagic.pipeline.Pipeline;

    public class SinaPipeline implements Pipeline
    {

        @Override
        public void process(ResultItems resultItems, Task task)
        {
            Article vo = resultItems.get("repo");
            // List pages put no "repo" field, so there is nothing to write for them
            if (vo == null)
            {
                return;
            }
            // Append to sina.txt; try-with-resources closes the writer even if writing fails
            try (PrintWriter pw = new PrintWriter(new FileWriter("sina.txt", true)))
            {
                pw.println(vo);
            }
            catch (IOException e)
            {
                e.printStackTrace();
            }
        }

    }

    package com.mieba;

    public class Article
    {
        private String title;
        private String content;

        public Article(String title, String content)
        {
            super();
            this.title = title;
            this.content = content;
        }

        public String getTitle()
        {
            return title;
        }

        public void setTitle(String title)
        {
            this.title = title;
        }

        public String getContent()
        {
            return content;
        }

        public void setContent(String content)
        {
            this.content = content;
        }

        @Override
        public String toString()
        {
            return "Article [title=" + title + ", content=" + content + "]";
        }

    }

    package com.mieba;

    import us.codecraft.webmagic.Spider;

    public class Demo
    {

        public static void main(String[] args)
        {
            // Start the crawl
            Spider
                    // Page processing logic
                    .create(new SinaPageProcessor())
                    // Where the crawl results are saved
                    .addPipeline(new SinaPipeline())
                    // First page to crawl
                    .addUrl("http://blog.sina.com.cn/s/articlelist_1487828712_0_1.html")
                    // Number of threads to use
                    .thread(5).run();
        }
    }

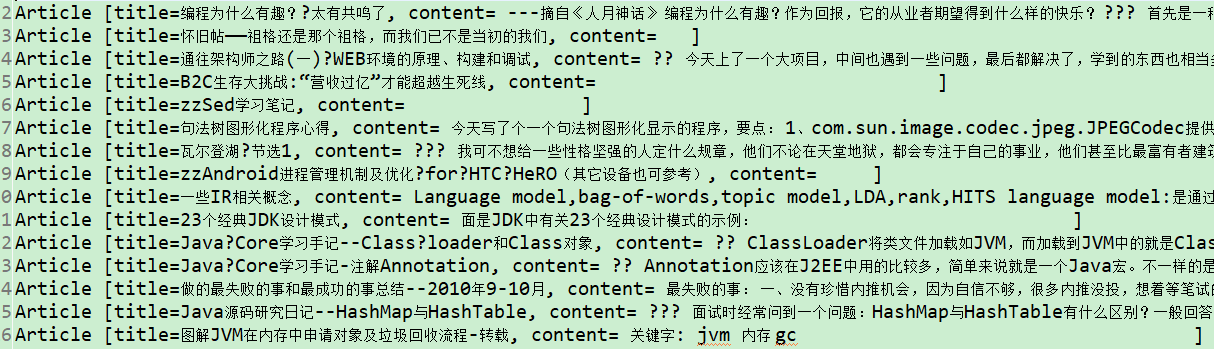

Run result

Crawled data

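Summary:

For simple pages, crawling basically works: the extracted data is stored in an object and finally saved to a txt file.

The remaining problem is with pages rendered on the front end, where I cannot yet find the URL links needed to crawl them. I still need to learn how to simulate logging in to a page in order to obtain hidden URLs and other data.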
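One common first step toward crawling pages behind a login is to send the session cookie that the browser receives after logging in. The sketch below only builds such a request with the JDK's `java.net.http` API (Java 11+) and does not send it. It is not WebMagic code, and the class name `CookieRequestSketch`, the helper `withSessionCookie`, and the cookie name and value are all placeholders assumed for illustration. Whether a cookie alone is enough depends on the site.

```java
import java.net.URI;
import java.net.http.HttpRequest;

public class CookieRequestSketch
{
    // Hypothetical helper: builds a GET request that carries one cookie.
    static HttpRequest withSessionCookie(String url, String cookieName, String cookieValue)
    {
        return HttpRequest.newBuilder(URI.create(url))
                .header("Cookie", cookieName + "=" + cookieValue)
                .GET()
                .build();
    }

    public static void main(String[] args)
    {
        // "SESSION" and "placeholder-value" are made-up; a real value would be
        // copied from the browser's developer tools after logging in.
        HttpRequest request = withSessionCookie(
                "http://blog.sina.com.cn/s/articlelist_1487828712_0_1.html",
                "SESSION",
                "placeholder-value");

        // Inspect the header that would be sent; no network call is made here.
        System.out.println(request.headers().firstValue("Cookie").orElse("(none)"));
    }
}
```

Pages whose links are generated by JavaScript are a separate problem: the links are not in the downloaded HTML at all, so they usually have to be found in the requests the page makes, or the page has to be rendered by a browser engine.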