https://blog.51cto.com/13641616/2442005

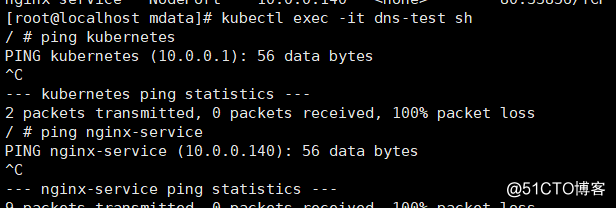

排错背景:在一次生产环境的部署过程中,配置文件中配置的访问地址为集群的Service,配置好后发现服务不能正常访问,遂启动了一个busybox进行测试,测试发现在busybox中,能通过coredns正常的解析到IP,然后去ping了一下service,发现不能ping通,ping clusterIP也不能ping通。

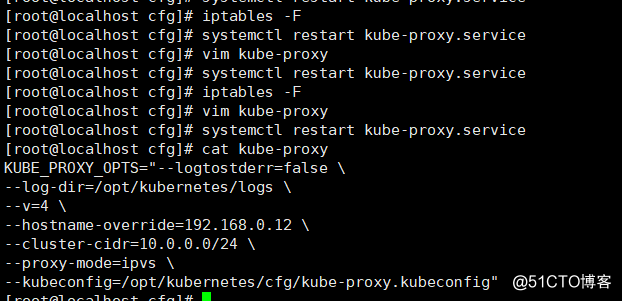

排错经历:首先排查了kube-proxy是否正常,发现启动都是正常的,然后也重启了,还是一样ping不通,然后又排查了网络插件,也重启过flannel,依然没有任何效果。后来想到自己的另一套k8s环境,是能正常ping通service的,就对比这两套环境检查配置,发现所有配置中只有kube-proxy的配置有一点差别,能ping通的环境kube-proxy使用了--proxy-mode=ipvs ,不能ping通的环境使用了默认模式(iptables)。

iptables没有具体设备响应。

然后就是开始经过多次测试,添加--proxy-mode=ipvs 后,清空node上防火墙规则,重启kube-proxy后就能正常的ping通了。

在学习K8S的时候,自己一直比较忽略底层流量转发,也即IPVS和iptables的相关知识,认为不管哪种模式,只要能转发访问到pod就可以,不用太在意这些细节,以后还是得更加仔细才行。

补充:kubeadm 部署方式修改kube-proxy为 ipvs模式。

默认情况下,我们部署的kube-proxy通过查看日志,能看到如下信息:Flag proxy-mode="" unknown,assuming iptables proxy

这里我们需要修改kube-proxy的配置文件,添加mode 为ipvs。

ipvs模式需要注意的是要添加ip_vs相关模块:

重启kube-proxy 的pod

在pod重启后再查看日志,发现模式已经变为ipvs了。

再次测试ping service