send和recv背后数据的收发过程

send和recv是TCP常用的发送数据和接受数据函数,这两个函数具体在linux内核的代码实现上是如何实现的呢?

ssize_t recv(int sockfd, void *buf, size_t len, int flags)

ssize_t send(int sockfd, const void *buf, size_t len, int flags)

理论分析

对于send函数,比较容易理解,捋一下计算机网络的知识,可以大概的到实现的方法,首先TCP是面向连接的,会有三次握手,建立连接成功,即代表两个进程可以用send和recv通信,作为发送信息的一方,肯定是接收到了从用户程序发送数据的请求,即send函数的参数之一,接收到数据后,若数据的大小超过一定长度,肯定不可能直接发送除去,因此,首先要对数据分段,将数据分成一个个的代码段,其次,TCP协议位于传输层,有响应的头部字段,在传输时肯定要加在数据前,数据也就被准备好了。当然,TCP是没有能力直接通过物理链路发送出去的,要想数据正确传输,还需要一层一层的进行。所以,最后一步是将数据传递给网络层,网络层再封装,然后链路层、物理层,最后被发送除去。总结一下就是:

1.数据分段

2.封装头部

3.传递给下一层。

对于secv函数,有一个不太能理解的就是,作为接收方,我是否是一直在等待其他进程给我发送数据,如果是,那么就应该是不停的判断是否有数到了,如果有,就把数据保存起来,然后执行send的逆过程即可。若没有一直等,那就可能是进程被挂起了,如果有数据到达,内核通过中断唤醒进程,然后接收数据。至于具体是哪种,可以通过代码和调试得到结果。

源码分析

首先,当调用send()函数时,内核封装send()为sendto(),然后发起系统调用。其实也很好理解,send()就是sendto()的一种特殊情况,而sendto()在内核的系统调用服务程序为sys_sendto

int __sys_sendto(int fd, void __user *buff, size_t len, unsigned int flags,

struct sockaddr __user *addr, int addr_len)

{

struct socket *sock;

struct sockaddr_storage address;

int err;

struct msghdr msg;

struct iovec iov;

int fput_needed;

err = import_single_range(WRITE, buff, len, &iov, &msg.msg_iter);

if (unlikely(err))

return err;

sock = sockfd_lookup_light(fd, &err, &fput_needed);

if (!sock)

goto out;

msg.msg_name = NULL;

msg.msg_control = NULL;

msg.msg_controllen = 0;

msg.msg_namelen = 0;

if (addr) {

err = move_addr_to_kernel(addr, addr_len, &address);

if (err < 0)

goto out_put;

msg.msg_name = (struct sockaddr *)&address;

msg.msg_namelen = addr_len;

}

if (sock->file->f_flags & O_NONBLOCK)

flags |= MSG_DONTWAIT;

msg.msg_flags = flags;

err = sock_sendmsg(sock, &msg);

out_put:

fput_light(sock->file, fput_needed);

out:

return err;

}

这里定义了一个struct msghdr msg,他是用来表示要发送的数据的一些属性。

struct msghdr {

void *msg_name; /* 接收方的struct sockaddr结构体地址 (用于udp)*/

int msg_namelen; /* 接收方的struct sockaddr结构体地址(用于udp)*/

struct iov_iter msg_iter; /* io缓冲区的地址 */

void *msg_control; /* 辅助数据的地址 */

__kernel_size_t msg_controllen; /* 辅助数据的长度 */

unsigned int msg_flags; /*接受消息的表示 */

struct kiocb *msg_iocb; /* ptr to iocb for async requests */

};

还有一个struct iovec,他被称为io向量,故名思意,用来表示io数据的一些信息。

struct iovec

{

void __user *iov_base; /* 要传输数据的用户态下的地址*) */

__kernel_size_t iov_len; /*要传输数据的长度 */

};

所以,__sys_sendto函数其实做了3件事:1.通过fd获取了对应的struct socket2.创建了用来描述要发送的数据的结构体struct msghdr。3.调用了sock_sendmsg来执行实际的发送。继续追踪这个函数,会看到最终调用的是sock->ops->sendmsg(sock, msg, msg_data_left(msg));,即socet在初始化时赋值给结构体struct proto tcp_prot的函数tcp_sendmsg。

struct proto tcp_prot = {

.name = "TCP",

.owner = THIS_MODULE,

.close = tcp_close,

.pre_connect = tcp_v4_pre_connect,

.connect = tcp_v4_connect,

.disconnect = tcp_disconnect,

.accept = inet_csk_accept,

.ioctl = tcp_ioctl,

.init = tcp_v4_init_sock,

.destroy = tcp_v4_destroy_sock,

.shutdown = tcp_shutdown,

.setsockopt = tcp_setsockopt,

.getsockopt = tcp_getsockopt,

.keepalive = tcp_set_keepalive,

.recvmsg = tcp_recvmsg,

.sendmsg = tcp_sendmsg,

......

tcp_sendmsg实际上调用的是int tcp_sendmsg_locked(struct sock *sk, struct msghdr *msg, size_t size)。

int tcp_sendmsg_locked(struct sock *sk, struct msghdr *msg, size_t size)

{

struct tcp_sock *tp = tcp_sk(sk);/*进行了强制类型转换*/

struct sk_buff *skb;

flags = msg->msg_flags;

......

if (copied)

tcp_push(sk, flags & ~MSG_MORE, mss_now,

TCP_NAGLE_PUSH, size_goal);

}

在tcp_sendmsg_locked中,完成的是将所有的数据组织成发送队列,这个发送队列是struct sock结构中的一个域sk_write_queue,这个队列的每一个元素是一个skb,里面存放的就是待发送的数据。然后调用了tcp_push()函数。

struct sock{

...

struct sk_buff_head sk_write_queue;/*指向skb队列的第一个元素*/

...

struct sk_buff *sk_send_head;/*指向队列第一个还没有发送的元素*/

}

在tcp协议的头部有几个标志字段:URG、ACK、RSH、RST、SYN、FIN,tcp_push中会判断这个skb的元素是否需要push,如果需要就将tcp头部字段的push置一,置一的过程如下:

static void tcp_push(struct sock *sk, int flags, int mss_now,

int nonagle, int size_goal)

{

struct tcp_sock *tp = tcp_sk(sk);

struct sk_buff *skb;

skb = tcp_write_queue_tail(sk);

if (!skb)

return;

if (!(flags & MSG_MORE) || forced_push(tp))

tcp_mark_push(tp, skb);

tcp_mark_urg(tp, flags);

if (tcp_should_autocork(sk, skb, size_goal)) {

/* avoid atomic op if TSQ_THROTTLED bit is already set */

if (!test_bit(TSQ_THROTTLED, &sk->sk_tsq_flags)) {

NET_INC_STATS(sock_net(sk), LINUX_MIB_TCPAUTOCORKING);

set_bit(TSQ_THROTTLED, &sk->sk_tsq_flags);

}

/* It is possible TX completion already happened

* before we set TSQ_THROTTLED.

*/

if (refcount_read(&sk->sk_wmem_alloc) > skb->truesize)

return;

}

if (flags & MSG_MORE)

nonagle = TCP_NAGLE_CORK;

__tcp_push_pending_frames(sk, mss_now, nonagle);

}

整个过程会有点绕,首先struct tcp_skb_cb结构体存放的就是tcp的头部,头部的控制位为tcp_flags,通过tcp_mark_push会将skb中的cb,也就是48个字节的数组,类型转换为struct tcp_skb_cb ,这样位于skb的cb就成了tcp的头部。

static inline void tcp_mark_push(struct tcp_sock *tp, struct sk_buff *skb)

{

TCP_SKB_CB(skb)->tcp_flags |= TCPHDR_PSH;

tp->pushed_seq = tp->write_seq;

}

...

#define TCP_SKB_CB(__skb) ((struct tcp_skb_cb *)&((__skb)->cb[0]))

...

struct sk_buff {

...

char cb[48] __aligned(8);

...

struct tcp_skb_cb {

__u32 seq; /* Starting sequence number */

__u32 end_seq; /* SEQ + FIN + SYN + datalen */

__u8 tcp_flags; /* tcp头部标志,位于第13个字节tcp[13]) */

......

};

然后,tcp_push调用了__tcp_push_pending_frames(sk, mss_now, nonagle);函数发送数据:

void __tcp_push_pending_frames(struct sock *sk, unsigned int cur_mss,

int nonagle)

{

if (tcp_write_xmit(sk, cur_mss, nonagle, 0,

sk_gfp_mask(sk, GFP_ATOMIC)))

tcp_check_probe_timer(sk);

}

随后又调用了tcp_write_xmit来发送数据:

static bool tcp_write_xmit(struct sock *sk, unsigned int mss_now, int nonagle,

int push_one, gfp_t gfp)

{

struct tcp_sock *tp = tcp_sk(sk);

struct sk_buff *skb;

unsigned int tso_segs, sent_pkts;

int cwnd_quota;

int result;

bool is_cwnd_limited = false, is_rwnd_limited = false;

u32 max_segs;

/*统计已发送的报文总数*/

sent_pkts = 0;

......

/*若发送队列未满,则准备发送报文*/

while ((skb = tcp_send_head(sk))) {

unsigned int limit;

if (unlikely(tp->repair) && tp->repair_queue == TCP_SEND_QUEUE) {

/* "skb_mstamp_ns" is used as a start point for the retransmit timer */

skb->skb_mstamp_ns = tp->tcp_wstamp_ns = tp->tcp_clock_cache;

list_move_tail(&skb->tcp_tsorted_anchor, &tp->tsorted_sent_queue);

tcp_init_tso_segs(skb, mss_now);

goto repair; /* Skip network transmission */

}

if (tcp_pacing_check(sk))

break;

tso_segs = tcp_init_tso_segs(skb, mss_now);

BUG_ON(!tso_segs);

/*检查发送窗口的大小*/

cwnd_quota = tcp_cwnd_test(tp, skb);

if (!cwnd_quota) {

if (push_one == 2)

/* Force out a loss probe pkt. */

cwnd_quota = 1;

else

break;

}

if (unlikely(!tcp_snd_wnd_test(tp, skb, mss_now))) {

is_rwnd_limited = true;

break;

......

limit = mss_now;

if (tso_segs > 1 && !tcp_urg_mode(tp))

limit = tcp_mss_split_point(sk, skb, mss_now,

min_t(unsigned int,

cwnd_quota,

max_segs),

nonagle);

if (skb->len > limit &&

unlikely(tso_fragment(sk, TCP_FRAG_IN_WRITE_QUEUE,

skb, limit, mss_now, gfp)))

break;

if (tcp_small_queue_check(sk, skb, 0))

break;

if (unlikely(tcp_transmit_skb(sk, skb, 1, gfp)))

break;

......

tcp_write_xmit位于tcpoutput.c中,它实现了tcp的拥塞控制,然后调用了tcp_transmit_skb(sk, skb, 1, gfp)传输数据,实际上调用的是__tcp_transmit_skb

static int __tcp_transmit_skb(struct sock *sk, struct sk_buff *skb,

int clone_it, gfp_t gfp_mask, u32 rcv_nxt)

{

skb_push(skb, tcp_header_size);

skb_reset_transport_header(skb);

......

/* 构建TCP头部和校验和 */

th = (struct tcphdr *)skb->data;

th->source = inet->inet_sport;

th->dest = inet->inet_dport;

th->seq = htonl(tcb->seq);

th->ack_seq = htonl(rcv_nxt);

tcp_options_write((__be32 *)(th + 1), tp, &opts);

skb_shinfo(skb)->gso_type = sk->sk_gso_type;

if (likely(!(tcb->tcp_flags & TCPHDR_SYN))) {

th->window = htons(tcp_select_window(sk));

tcp_ecn_send(sk, skb, th, tcp_header_size);

} else {

/* RFC1323: The window in SYN & SYN/ACK segments

* is never scaled.

*/

th->window = htons(min(tp->rcv_wnd, 65535U));

}

......

icsk->icsk_af_ops->send_check(sk, skb);

if (likely(tcb->tcp_flags & TCPHDR_ACK))

tcp_event_ack_sent(sk, tcp_skb_pcount(skb), rcv_nxt);

if (skb->len != tcp_header_size) {

tcp_event_data_sent(tp, sk);

tp->data_segs_out += tcp_skb_pcount(skb);

tp->bytes_sent += skb->len - tcp_header_size;

}

if (after(tcb->end_seq, tp->snd_nxt) || tcb->seq == tcb->end_seq)

TCP_ADD_STATS(sock_net(sk), TCP_MIB_OUTSEGS,

tcp_skb_pcount(skb));

tp->segs_out += tcp_skb_pcount(skb);

/* OK, its time to fill skb_shinfo(skb)->gso_{segs|size} */

skb_shinfo(skb)->gso_segs = tcp_skb_pcount(skb);

skb_shinfo(skb)->gso_size = tcp_skb_mss(skb);

/* Leave earliest departure time in skb->tstamp (skb->skb_mstamp_ns) */

/* Cleanup our debris for IP stacks */

memset(skb->cb, 0, max(sizeof(struct inet_skb_parm),

sizeof(struct inet6_skb_parm)));

err = icsk->icsk_af_ops->queue_xmit(sk, skb, &inet->cork.fl);

......

}

tcp_transmit_skb是tcp发送数据位于传输层的最后一步,这里首先对TCP数据段的头部进行了处理,然后调用了网络层提供的发送接口icsk->icsk_af_ops->queue_xmit(sk, skb, &inet->cork.fl);实现了数据的发送,自此,数据离开了传输层,传输层的任务也就结束了。

对于recv函数,与send类似,自然也是recvfrom的特殊情况,调用的也就是__sys_recvfrom,整个函数的调用路径与send非常类似:

int __sys_recvfrom(int fd, void __user *ubuf, size_t size, unsigned int flags,

struct sockaddr __user *addr, int __user *addr_len)

{

......

err = import_single_range(READ, ubuf, size, &iov, &msg.msg_iter);

if (unlikely(err))

return err;

sock = sockfd_lookup_light(fd, &err, &fput_needed);

.....

msg.msg_control = NULL;

msg.msg_controllen = 0;

/* Save some cycles and don't copy the address if not needed */

msg.msg_name = addr ? (struct sockaddr *)&address : NULL;

/* We assume all kernel code knows the size of sockaddr_storage */

msg.msg_namelen = 0;

msg.msg_iocb = NULL;

msg.msg_flags = 0;

if (sock->file->f_flags & O_NONBLOCK)

flags |= MSG_DONTWAIT;

err = sock_recvmsg(sock, &msg, flags);

if (err >= 0 && addr != NULL) {

err2 = move_addr_to_user(&address,

msg.msg_namelen, addr, addr_len);

.....

}

__sys_recvfrom调用了sock_recvmsg来接收数据,整个函数实际调用的是sock->ops->recvmsg(sock, msg, msg_data_left(msg), flags);,同样,根据tcp_prot结构的初始化,调用的其实是tcp_rcvmsg

.接受函数比发送函数要复杂得多,因为数据接收不仅仅只是接收,tcp的三次握手也是在接收函数实现的,所以收到数据后要判断当前的状态,是否正在建立连接等,根据发来的信息考虑状态是否要改变,在这里,我们仅仅考虑在连接建立后数据的接收。

int tcp_recvmsg(struct sock *sk, struct msghdr *msg, size_t len, int nonblock,

int flags, int *addr_len)

{

......

if (sk_can_busy_loop(sk) && skb_queue_empty(&sk->sk_receive_queue) &&

(sk->sk_state == TCP_ESTABLISHED))

sk_busy_loop(sk, nonblock);

lock_sock(sk);

.....

if (unlikely(tp->repair)) {

err = -EPERM;

if (!(flags & MSG_PEEK))

goto out;

if (tp->repair_queue == TCP_SEND_QUEUE)

goto recv_sndq;

err = -EINVAL;

if (tp->repair_queue == TCP_NO_QUEUE)

goto out;

......

last = skb_peek_tail(&sk->sk_receive_queue);

skb_queue_walk(&sk->sk_receive_queue, skb) {

last = skb;

......

if (!(flags & MSG_TRUNC)) {

err = skb_copy_datagram_msg(skb, offset, msg, used);

if (err) {

/* Exception. Bailout! */

if (!copied)

copied = -EFAULT;

break;

}

}

*seq += used;

copied += used;

len -= used;

tcp_rcv_space_adjust(sk);

这里共维护了三个队列:prequeue、backlog、receive_queue,分别为预处理队列,后备队列和接收队列,在连接建立后,若没有数据到来,接收队列为空,进程会在sk_busy_loop函数内循环等待,知道接收队列不为空,并调用函数数skb_copy_datagram_msg将接收到的数据拷贝到用户态,实际调用的是__skb_datagram_iter,这里同样用了struct msghdr *msg来实现。

int __skb_datagram_iter(const struct sk_buff *skb, int offset,

struct iov_iter *to, int len, bool fault_short,

size_t (*cb)(const void *, size_t, void *, struct iov_iter *),

void *data)

{

int start = skb_headlen(skb);

int i, copy = start - offset, start_off = offset, n;

struct sk_buff *frag_iter;

/* 拷贝tcp头部 */

if (copy > 0) {

if (copy > len)

copy = len;

n = cb(skb->data + offset, copy, data, to);

offset += n;

if (n != copy)

goto short_copy;

if ((len -= copy) == 0)

return 0;

}

/* 拷贝数据部分 */

for (i = 0; i < skb_shinfo(skb)->nr_frags; i++) {

int end;

const skb_frag_t *frag = &skb_shinfo(skb)->frags[i];

WARN_ON(start > offset + len);

end = start + skb_frag_size(frag);

if ((copy = end - offset) > 0) {

struct page *page = skb_frag_page(frag);

u8 *vaddr = kmap(page);

if (copy > len)

copy = len;

n = cb(vaddr + frag->page_offset +

offset - start, copy, data, to);

kunmap(page);

offset += n;

if (n != copy)

goto short_copy;

if (!(len -= copy))

return 0;

}

start = end;

}

拷贝完成后,函数返回,整个接收的过程也就完成了。

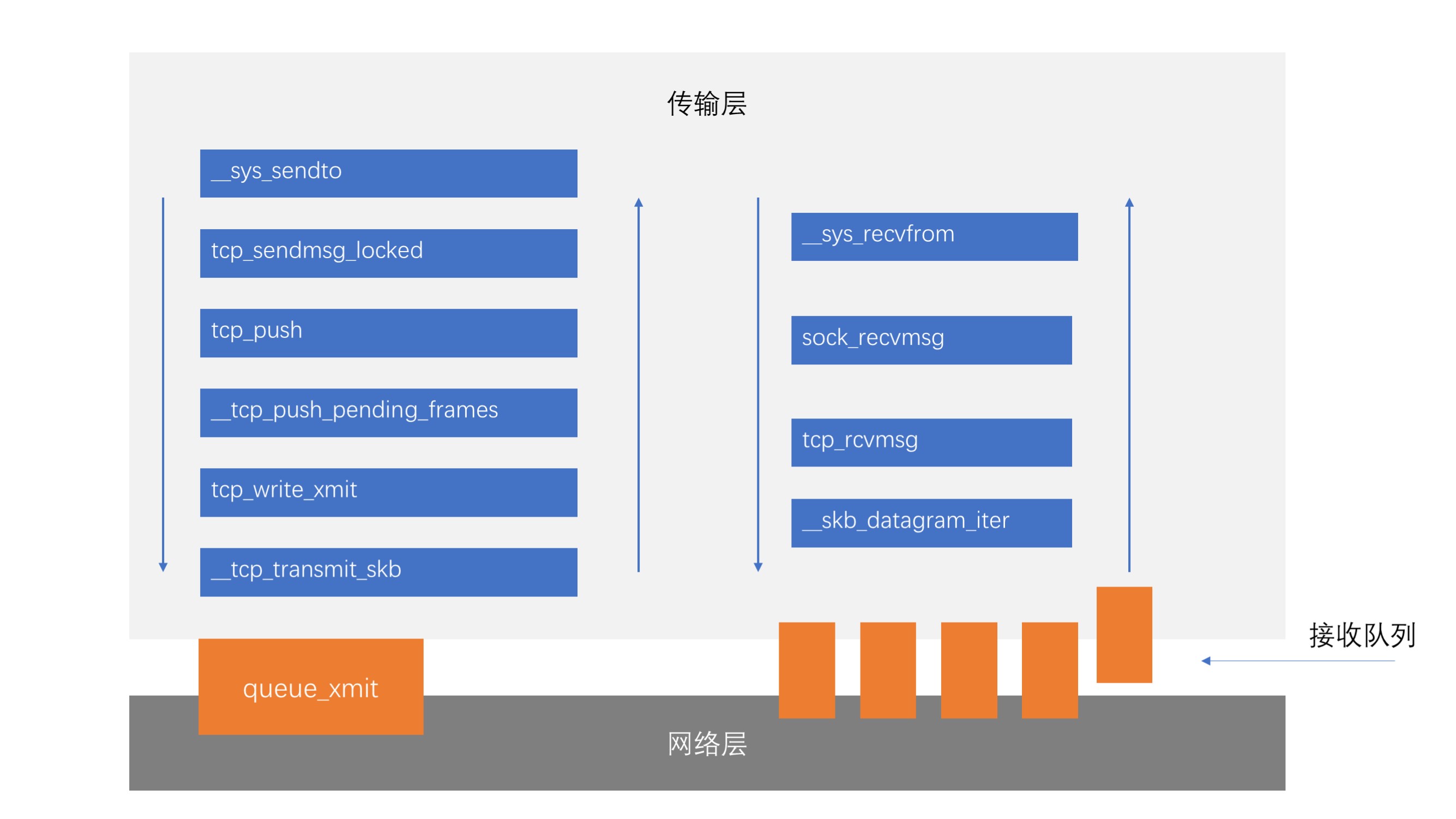

整体来讲与我们的分析并不大,用一张函数间的相互调用图可以表示

gdb调试分析

接下来用gdb调试验证上面的分析,调试环境为linux5.0.1+menuos(64位),测试程序为hello/hi网络聊天程序,将其植入到menuos,命令为client。

首先看send的调用关系,分别将断点打在__sys_sendto、tcp_sendmsg_locked、tcp_push、__tcp_push_pending_frames、tcp_write_xmit、__tcp_transmit_skb。观察函数的调用顺序,与我们的分析是否一致。

(gdb) file vmlinux

Reading symbols from vmlinux...done.

warning: File "/home/netlab/netlab/linux-5.2.7/scripts/gdb/vmlinux-gdb.py" auto-loading has been declined by your `auto-load safe-path' set to "$debugdir:$datadir/auto-load".

To enable execution of this file add

add-auto-load-safe-path /home/netlab/netlab/linux-5.2.7/scripts/gdb/vmlinux-gdb.py

line to your configuration file "/home/netlab/.gdbinit".

To completely disable this security protection add

set auto-load safe-path /

line to your configuration file "/home/netlab/.gdbinit".

For more information about this security protection see the

"Auto-loading safe path" section in the GDB manual. E.g., run from the shell:

info "(gdb)Auto-loading safe path"

(gdb) target remote: 1234

Remote debugging using : 1234

0x0000000000000000 in fixed_percpu_data ()

(gdb) c

Continuing.

^C

Program received signal SIGINT, Interrupt.

default_idle () at arch/x86/kernel/process.c:581

581 trace_cpu_idle_rcuidle(PWR_EVENT_EXIT, smp_processor_id());

(gdb) b __sys_sendto

Breakpoint 1 at 0xffffffff817ef560: file net/socket.c, line 1929.

(gdb) b tcp_sendmsg_locked

Breakpoint 2 at 0xffffffff81895000: file net/ipv4/tcp.c, line 1158.

(gdb) b tcp_push

Breakpoint 3 at 0xffffffff818907c0: file ./include/linux/skbuff.h, line 1766.

(gdb) b __tcp_push_pending_frames

Breakpoint 4 at 0xffffffff818a44a0: file net/ipv4/tcp_output.c, line 2584.

(gdb) b tcp_wrtie_xmit

Function "tcp_wrtie_xmit" not defined.

Make breakpoint pending on future shared library load? (y or [n]) n

(gdb) b tcp_write_xmit

Breakpoint 5 at 0xffffffff818a32d0: file net/ipv4/tcp_output.c, line 2330.

(gdb) b __tcp_transmit_skb

Breakpoint 6 at 0xffffffff818a2830: file net/ipv4/tcp_output.c, line 1015.

(gdb)

执行client命令,观察程序暂停的位置:

Breakpoint 6, __tcp_transmit_skb (sk=0xffff888006478880,

skb=0xffff888006871400, clone_it=1, gfp_mask=3264, rcv_nxt=0)

at net/ipv4/tcp_output.c:1015

1015 {

(gdb)

并非我们预想的那样,程序停在了__tcp_transmit_skb,但仔细分析,这应该是三次握手的过程,继续调试

Breakpoint 6, __tcp_transmit_skb (sk=0xffff888006478880,

skb=0xffff888006871400, clone_it=1, gfp_mask=3264, rcv_nxt=0)

at net/ipv4/tcp_output.c:1015

1015 {

(gdb) c

Continuing.

Breakpoint 6, __tcp_transmit_skb (sk=0xffff888006478880,

skb=0xffff88800757a100, clone_it=0, gfp_mask=0, rcv_nxt=1155786088)

at net/ipv4/tcp_output.c:1015

1015 {

(gdb) c

Continuing.

又有两次停在了这里,恰恰验证了猜想,因为这个程序的服务端和客户端都在同一台主机上,共用了同一个TCP协议栈,在TCP三次握手时,客户端发送两次,服务端发送一次,恰好三次。下面我们用客户端向服务器端发送,分析程序的调用过程:

Breakpoint 1, __sys_sendto (fd=5, buff=0x7ffc33c54bc0, len=2, flags=0,

addr=0x0 <fixed_percpu_data>, addr_len=0) at net/socket.c:1929

1929 {

(gdb) c

Continuing.

Breakpoint 2, tcp_sendmsg_locked (sk=0xffff888006479100,

msg=0xffffc900001f7e28, size=2) at net/ipv4/tcp.c:1158

1158 {

(gdb) c

Continuing.

Breakpoint 3, tcp_push (sk=0xffff888006479100, flags=0, mss_now=32752,

nonagle=0, size_goal=32752) at net/ipv4/tcp.c:699

699 skb = tcp_write_queue_tail(sk);

(gdb) c

Continuing.

Breakpoint 4, __tcp_push_pending_frames (sk=0xffff888006479100, cur_mss=32752,

nonagle=0) at net/ipv4/tcp_output.c:2584

2584 if (unlikely(sk->sk_state == TCP_CLOSE))

(gdb) c

Continuing.

Breakpoint 5, tcp_write_xmit (sk=0xffff888006479100, mss_now=32752, nonagle=0,

push_one=0, gfp=2592) at net/ipv4/tcp_output.c:2330

2330 {

(gdb) c

Continuing.

Breakpoint 6, __tcp_transmit_skb (sk=0xffff888006479100,

skb=0xffff888006871400, clone_it=1, gfp_mask=2592, rcv_nxt=405537035)

at net/ipv4/tcp_output.c:1015

1015 {

(gdb) c

Continuing.

Breakpoint 6, __tcp_transmit_skb (sk=0xffff888006478880,

skb=0xffff88800757a100, clone_it=0, gfp_mask=0, rcv_nxt=1155786090)

at net/ipv4/tcp_output.c:1015

1015 {

(gdb) c

可以看到,与我们分析的顺序是一致的,但是最后__tcp_transmit_skb调用了两次,经过仔细分析,终于找到原因——这是接收方接收到数据后发送ACK使用的。

验证完send,来验证一下recv

将断点分别设在:

__sys_recvfrom、sock_recvmsg、tcp_rcvmsg、__skb_datagram_iter处,以同样的方式观察:

Breakpoint 1, __sys_recvfrom (fd=5, ubuf=0x7ffd9428d960, size=1024, flags=0,

addr=0x0 <fixed_percpu_data>, addr_len=0x0 <fixed_percpu_data>)

at net/socket.c:1990

1990 {

(gdb) c

Continuing.

Breakpoint 2, sock_recvmsg (sock=0xffff888006df1900, msg=0xffffc900001f7e28,

flags=0) at net/socket.c:891

891 {

(gdb) c

Continuing.

Breakpoint 3, tcp_recvmsg (sk=0xffff888006479100, msg=0xffffc900001f7e28,

len=1024, nonblock=0, flags=0, addr_len=0xffffc900001f7df4)

at net/ipv4/tcp.c:1933

1933 {

(gdb) c

在未发送之前,程序也会暂停在断点处,根据之前的分析,这也是三次握手的过程,但是为什么没有__skb_datagram_iter呢?,因为三次握手时,并没有发送数据过来,所以并没有数据被拷贝到用户空间。

同样,尝试发送数据观察调用过程。

Breakpoint 1, __sys_recvfrom (fd=5, ubuf=0x7ffd9428d960, size=1024, flags=0,

addr=0x0 <fixed_percpu_data>, addr_len=0x0 <fixed_percpu_data>)

at net/socket.c:1990

1990 {

(gdb) c

Continuing.

Breakpoint 2, sock_recvmsg (sock=0xffff888006df1900, msg=0xffffc900001f7e28,

flags=0) at net/socket.c:891

891 {

(gdb) c

Continuing.

Breakpoint 3, tcp_recvmsg (sk=0xffff888006479100, msg=0xffffc900001f7e28,

len=1024, nonblock=0, flags=0, addr_len=0xffffc900001f7df4)

at net/ipv4/tcp.c:1933

1933 {

(gdb) c

Continuing.

Breakpoint 4, __skb_datagram_iter (skb=0xffff8880068714e0, offset=0,

to=0xffffc900001efe38, len=2, fault_short=false,

cb=0xffffffff817ff860 <simple_copy_to_iter>, data=0x0 <fixed_percpu_data>)

at net/core/datagram.c:414

414 {

验证完毕。