Description of the data in the Result file:

Ip: 106.39.41.166 (city)

Date: 10/Nov/2016:00:01:02 +0800 (date)

Day: 10 (day of the month)

Traffic: 54 (traffic)

Type: video (type: video or article)

Id: 8701 (id of the video or article)

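Test requirements:

1. Data cleaning: clean the data, then import the cleaned data into a Hive database.

Two-stage data cleaning:

(1) Stage one: extract the required fields from the raw log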

ip: 199.30.25.88

time: 10/Nov/2016:00:01:03 +0800

traffic: 62

article: article/11325

video: video/3235

(2) Stage two: refine the extracted fields

ip ---> city: city(IP)

date --> time: 2016-11-10 00:01:03

day: 10

traffic:62

type:article/video

id:11325
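The stage-two conversions above can be sketched in plain Java, outside of Hadoop. This is a minimal illustration, not the code used in this post: the class name `LogRefiner` and the method `refine` are hypothetical, and it assumes the timestamp always has the form `dd/MMM/yyyy:HH:mm:ss Z` with English month names. The IP-to-city step is left out because it needs an external lookup table.

```java
import java.time.OffsetDateTime;
import java.time.format.DateTimeFormatter;
import java.util.Locale;

// Hypothetical helper, not part of the original job.
class LogRefiner {
    // Raw log timestamps look like "10/Nov/2016:00:01:03 +0800".
    private static final DateTimeFormatter LOG_FORMAT =
            DateTimeFormatter.ofPattern("dd/MMM/yyyy:HH:mm:ss Z", Locale.ENGLISH);
    // Target format for the Hive "time" column.
    private static final DateTimeFormatter HIVE_FORMAT =
            DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss");

    /** Returns {time, day, type, id} for one extracted record. */
    static String[] refine(String rawTime, String resource) {
        OffsetDateTime parsed = OffsetDateTime.parse(rawTime.trim(), LOG_FORMAT);
        String time = parsed.format(HIVE_FORMAT);            // local time, offset dropped
        String day = String.valueOf(parsed.getDayOfMonth());
        String[] parts = resource.trim().split("/", 2);      // "article/11325" -> type, id
        return new String[] {time, day, parts[0], parts[1]};
    }

    public static void main(String[] args) {
        String[] r = refine("10/Nov/2016:00:01:03 +0800", "article/11325");
        System.out.println(String.join(",", r)); // 2016-11-10 00:01:03,10,article,11325
    }
}
```

Parsing the month with a formatter, rather than writing it as a fixed string, keeps the conversion correct for logs that span more than one month.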

(3) Hive table structure:

create table data( ip string, time string, day string, traffic bigint, type string, id string );

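2. Data processing:

· Top 10 most popular videos/articles by number of visits (video/article)

· Top 10 most popular courses by city (ip)

· Top 10 most popular courses by traffic (traffic)

The data-cleaning code is as follows: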
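The Top 10 statistics above all follow the same pattern: group the cleaned records by a key, aggregate, sort in descending order, and keep the first ten. The sketch below shows that pattern in plain Java on an in-memory list. It is only an illustration under assumptions: the class `TopN` and its methods are hypothetical, each cleaned line is assumed to be comma-separated in the table's column order (ip, time, day, traffic, type, id), and the third sample line is made up for the demonstration. The per-city ranking is omitted because it additionally needs an IP-to-city lookup.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

// Hypothetical in-memory illustration of the Top-N statistics.
class TopN {
    /** Sorts totals in descending order (ties broken by key) and keeps the first n. */
    static Map<String, Long> top(Map<String, Long> totals, int n) {
        return totals.entrySet().stream()
                .sorted(Map.Entry.<String, Long>comparingByValue().reversed()
                        .thenComparing(Map.Entry.<String, Long>comparingByKey()))
                .limit(n)
                .collect(Collectors.toMap(Map.Entry::getKey, Map.Entry::getValue,
                        (a, b) -> a, LinkedHashMap::new));
    }

    /** Number of visits per "type/id". */
    static Map<String, Long> topByVisits(List<String> lines, int n) {
        return top(lines.stream().map(l -> l.split(","))
                .collect(Collectors.groupingBy(f -> f[4] + "/" + f[5],
                        Collectors.counting())), n);
    }

    /** Total traffic per "type/id". */
    static Map<String, Long> topByTraffic(List<String> lines, int n) {
        return top(lines.stream().map(l -> l.split(","))
                .collect(Collectors.groupingBy(f -> f[4] + "/" + f[5],
                        Collectors.summingLong(f -> Long.parseLong(f[3].trim())))), n);
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList(
                "106.39.41.166,2016-11-10 00:01:02,10,54,video,8701",
                "199.30.25.88,2016-11-10 00:01:03,10,62,article,11325",
                "106.39.41.166,2016-11-10 00:02:10,10,40,video,8701"); // made-up record
        System.out.println(topByVisits(lines, 10));  // {video/8701=2, article/11325=1}
        System.out.println(topByTraffic(lines, 10)); // {video/8701=94, article/11325=62}
    }
}
```

On the full data set the same grouping would normally be done in Hive or in a second MapReduce job rather than in memory.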

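    import java.io.IOException;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;

    // Both classes are nested inside the job's driver class.
    public static class Map extends Mapper<Object, Text, Text, Text> {
        private final Text ip = new Text();      // output key: the client IP
        private final Text fields = new Text();  // output value: time,day,traffic,type,id

        @Override
        public void map(Object key, Text value, Context context)
                throws IOException, InterruptedException {
            // Input line: ip,date,day,traffic,type,id
            String[] arr = value.toString().split(",");
            ip.set(arr[0].trim());
            // "10/Nov/2016:00:01:02 +0800" -> [10, Nov, 2016, 00, 01, 02, 0800]
            String[] str = arr[1].trim().split("[:/+ ]+");
            // The month is hard-coded as 11, as in the original code,
            // so this only works for November data.
            String time = str[2] + "-11-" + str[0] + " "
                    + str[3] + ":" + str[4] + ":" + str[5];
            fields.set(time + "," + str[0] + "," + arr[3].trim() + ","
                    + arr[4].trim() + "," + arr[5].trim());
            context.write(ip, fields);
        }
    }

    // The input key type must be Text to match the mapper's output key.
    // With IntWritable, reduce(Text, ...) is only an overload that Hadoop never calls.
    public static class Reduce extends Reducer<Text, Text, Text, Text> {
        @Override
        public void reduce(Text key, Iterable<Text> values, Context context)
                throws IOException, InterruptedException {
            // Identity reduce: write every cleaned record through unchanged.
            for (Text val : values) {
                context.write(key, val);
            }
        }
    }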

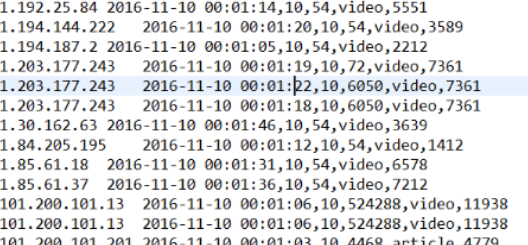

Screenshot before cleaning:

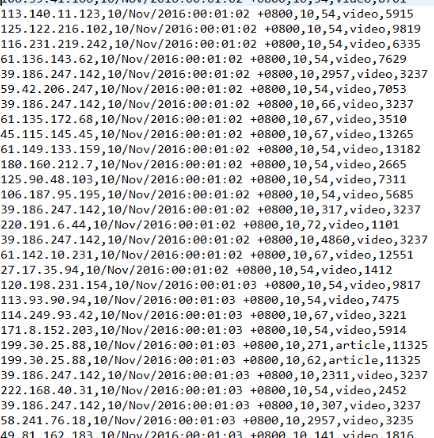

Screenshot after cleaning: