I. Deployment preparation:

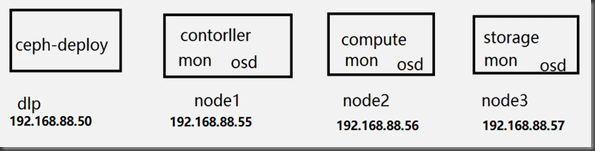

Four virtual machines (all running CentOS 7.6):

dlp: 192.168.88.50

node1: 192.168.88.55

node2: 192.168.88.56

node3: 192.168.88.57

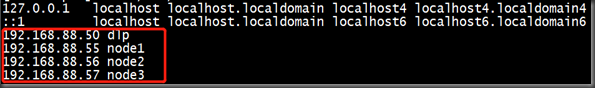

(1) Configure static hostname resolution on every Ceph cluster node (including clients):

[root@dlp ~]# vim /etc/hosts

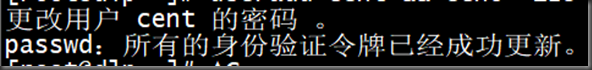
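As a rough sketch of this step, the entries can also be appended by a small script instead of being typed into vim. It assumes the file in the screenshot contains exactly the four name/address pairs listed at the top of this section; the function names `print_ceph_hosts` and `add_ceph_hosts` are made up for illustration.

```shell
# Print the /etc/hosts entries for the four machines listed above.
print_ceph_hosts() {
  cat <<'EOF'
192.168.88.50 dlp
192.168.88.55 node1
192.168.88.56 node2
192.168.88.57 node3
EOF
}

# Append only the entries that are not already present as an identical line.
# The target path is a parameter so the function can be tried on a scratch file first.
add_ceph_hosts() {
  local target="${1:-/etc/hosts}"
  print_ceph_hosts | while read -r ip name; do
    grep -qxF "$ip $name" "$target" 2>/dev/null || echo "$ip $name" >> "$target"
  done
}
```

Running `add_ceph_hosts` as root on each node would update /etc/hosts; running it twice should leave the file unchanged, because existing lines are skipped.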

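(2) On every cluster node (including clients), create the cent user, set its password, and then run the following commands:

1. Add the cent user:

useradd cent && echo "123" | passwd --stdin cent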

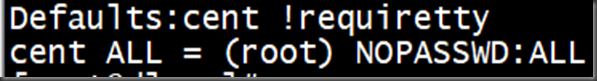

2. Grant sudo privileges:

echo -e 'Defaults:cent !requiretty\ncent ALL = (root) NOPASSWD:ALL' | tee /etc/sudoers.d/ceph

3. Restrict the file permissions:

chmod 440 /etc/sudoers.d/ceph
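The sudoers drop-in needs its two directives on separate lines, so it can be safer to write them with `printf` and then syntax-check the result. The following is a minimal sketch; `write_ceph_sudoers` and `check_ceph_sudoers` are hypothetical helper names, and the check assumes `visudo` is installed (it ships with sudo on CentOS 7).

```shell
# Write the two sudoers lines for the cent user to the given path
# (default: /etc/sudoers.d/ceph) and set mode 440.
write_ceph_sudoers() {
  local target="${1:-/etc/sudoers.d/ceph}"
  printf '%s\n' \
    'Defaults:cent !requiretty' \
    'cent ALL = (root) NOPASSWD:ALL' > "$target"
  chmod 440 "$target"
}

# Syntax-check the drop-in; visudo -c only checks, it does not edit.
check_ceph_sudoers() {
  visudo -c -f "${1:-/etc/sudoers.d/ceph}"
}
```

As root, `write_ceph_sudoers && check_ceph_sudoers` would create the file and report whether it parses.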

(3) On the deployment node, switch to the cent user and set up passwordless SSH login to every node, including client nodes:

[root@dlp ~]# su - cent

[cent@dlp ~]$ ssh-keygen

[cent@dlp ~]$ ssh-copy-id node1

[cent@dlp ~]$ ssh-copy-id node2

[cent@dlp ~]$ ssh-copy-id node3

[cent@dlp ~]$ ssh-copy-id dlp

(4) On the deployment node, as the cent user, create the file ~/.ssh/config in the cent user's home directory and define all nodes and users in it:

[cent@dlp ~]$ ls -a

[cent@dlp ~]$ cd .ssh/

[cent@dlp .ssh]$ vim config # config is a new file; its contents follow

Host dlp

Hostname dlp

User cent

Host node1

Hostname node1

User cent

Host node2

Hostname node2

User cent

Host node3

Hostname node3

User cent

[cent@dlp .ssh]$ chmod 600 ~/.ssh/config
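Because every stanza in this file has the same shape, it can also be generated rather than typed. A small sketch, where `gen_ssh_config` is a made-up helper that takes the login user followed by the node names:

```shell
# Emit one Host/Hostname/User stanza per node name given as an argument.
gen_ssh_config() {
  local user="$1"; shift
  local node
  for node in "$@"; do
    printf 'Host %s\n    Hostname %s\n    User %s\n' "$node" "$node" "$user"
  done
}

# Example: print the stanzas for the four hosts used in this walkthrough.
gen_ssh_config cent dlp node1 node2 node3
```

Redirecting the output to ~/.ssh/config and then running chmod 600 on it should give the same result as the manual edit above.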

II. Configure a domestic (China) Ceph mirror on all nodes:

(1) On all nodes (including clients), create ceph-yunwei.repo in /etc/yum.repos.d/

[cent@dlp ~]$ cat /etc/redhat-release # check the OS release

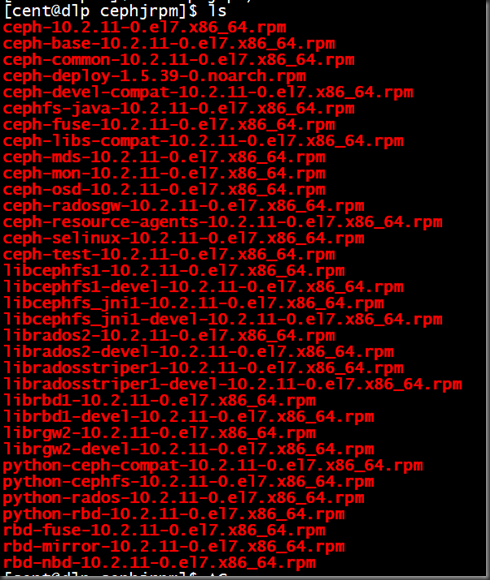

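(2) Download the required rpm packages listed below from the domestic Ceph mirror at https://mirrors.aliyun.com/centos/7.6.1810/storage/x86_64/ceph-jewel/. Note: the package in the red box is the ceph-deploy rpm, which only needs to be installed on the deployment node. To get it, go to https://mirrors.aliyun.com/ceph/rpm-jewel/el7/noarch/ and download the latest matching ceph-deploy-xxxxx.noarch.rpm.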

[cent@dlp ~]$ ls

[cent@dlp ~]$ cd cephjrpm/

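(3) Copy the downloaded rpm packages to every node and install them. Note that ceph-deploy-xxxxx.noarch.rpm is used only on the deployment node; the other nodes do not need it. The deployment node must also install the remaining rpm packages.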

[root@dlp cephjrpm]# yum localinstall ceph-deploy-1.5.39-0.noarch.rpm -y

[root@dlp cephjrpm]# ceph-deploy --version # show the ceph-deploy version

[root@dlp cephjrpm]# mv ceph-deploy-1.5.39-0.noarch.rpm /root/

[root@dlp cephjrpm]# yum localinstall -y ./*

On node1, node2, and node3, install all of the packages except ceph-deploy-1.5.39-0.noarch.rpm
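One possible way to roll the packages out to the three storage nodes is sketched below. It only prints the commands so they can be reviewed first. The assumptions are that the rpm directory is ~/cephjrpm on the deployment node, that the ceph-deploy rpm has already been moved out of it as shown above, and that the passwordless SSH and sudo setup from part I is in place; `plan_rpm_rollout` is a hypothetical helper name.

```shell
# Print (not execute) the copy and install commands for each storage node.
# $1 is the local rpm directory; the remaining arguments are node names.
plan_rpm_rollout() {
  local src_dir="$1"; shift
  local node
  for node in "$@"; do
    # scp -r places the directory in the remote user's home directory.
    echo "scp -r $src_dir $node:"
    # The glob is left unexpanded here so that the remote shell expands it.
    echo "ssh $node 'sudo yum localinstall -y ./$(basename "$src_dir")/*.rpm'"
  done
}

plan_rpm_rollout "$HOME/cephjrpm" node1 node2 node3
```

Once the printed commands look right, piping the output to `sh` would run them in order.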

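(4) On the deployment node: to install ceph-deploy, switch to root, change into the directory containing the downloaded rpm packages, and run:

yum localinstall -y ./*

Then create the Ceph working directory as the cent user: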

[root@dlp ~]# su - cent

[cent@dlp ~]$ mkdir ceph

[cent@dlp ~]$ cd ceph/

[cent@dlp ceph]$ pwd

/home/cent/ceph

(5) On the deployment node (as the cent user): configure the new cluster

[cent@dlp ceph]$ pwd

/home/cent/ceph

[cent@dlp ceph]$ ceph-deploy new node1 node2 node3 # run inside the Ceph working directory

[cent@dlp ceph]$ vim ceph.conf

[global]

fsid = 062e2b8a-2fb6-4982-aab2-3440d01d2b2c

mon_initial_members = node1, node2, node3

mon_host = 192.168.88.55,192.168.88.56,192.168.88.57

auth_cluster_required = cephx

auth_service_required = cephx

auth_client_required = cephx

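osd_pool_default_size = 1 # number of replicas kept for each object

mon_clock_drift_allowed = 2 # clock drift (in seconds) tolerated between monitors

mon_clock_drift_warn_backoff = 3 # exponential backoff factor for clock-drift warnings

Optional parameters: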

public_network = 192.168.254.0/24

cluster_network = 172.16.254.0/24

osd_pool_default_size = 3

osd_pool_default_min_size = 1

osd_pool_default_pg_num = 8

osd_pool_default_pgp_num = 8

osd_crush_chooseleaf_type = 1

[mon]

mon_clock_drift_allowed = 0.5

[osd]

osd_mkfs_type = xfs

osd_mkfs_options_xfs = -f

filestore_max_sync_interval = 5

filestore_min_sync_interval = 0.1

filestore_fd_cache_size = 655350

filestore_omap_header_cache_size = 655350

filestore_fd_cache_random = true

osd op threads = 8

osd disk threads = 4

filestore op threads = 8

max_open_files = 655350
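Before moving on, it may help to sanity-check the edited ceph.conf. The sketch below is not part of ceph-deploy; `conf_value` and `check_ceph_conf` are hypothetical helpers that only understand simple `key = value` lines written with underscores, as in the file above.

```shell
# Read the first value of a key from "key = value" lines,
# dropping any trailing "# comment".
conf_value() {
  local file="$1" key="$2"
  sed -n "s/^[[:space:]]*${key}[[:space:]]*=[[:space:]]*//p" "$file" \
    | sed 's/[[:space:]]*#.*$//' | head -n 1
}

# Succeed only if fsid is set and mon_initial_members and mon_host
# list the same (non-zero) number of entries.
check_ceph_conf() {
  local file="$1" members hosts
  [ -n "$(conf_value "$file" fsid)" ] || { echo "fsid missing" >&2; return 1; }
  members=$(conf_value "$file" mon_initial_members | tr ',' '\n' | grep -c .)
  hosts=$(conf_value "$file" mon_host | tr ',' '\n' | grep -c .)
  [ "$members" -gt 0 ] && [ "$members" -eq "$hosts" ] || {
    echo "mon_initial_members ($members) and mon_host ($hosts) differ" >&2
    return 1
  }
}
```

From the working directory, `check_ceph_conf ceph.conf && echo "ceph.conf looks consistent"` would run the check.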

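(6) From the deployment node, install the Ceph software on all nodes

[cent@dlp ceph]$ ceph-deploy install dlp node1 node2 node3 # run inside the working directory

(7) Initialize the cluster from the deployment node (as the cent user):

[cent@dlp ceph]$ ceph-deploy mon create-initial # run inside the working directory

Check that the monitor services have started: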

[root@node1 cephjrpm]# systemctl status ceph-mon@node1 ceph-mon.target

[root@node2 cephjrpm]# systemctl status ceph-mon@node2 ceph-mon.target

[root@node3 cephjrpm]# systemctl status ceph-mon@node3 ceph-mon.target

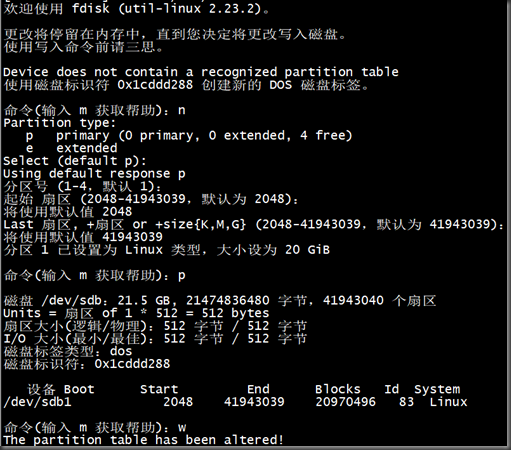

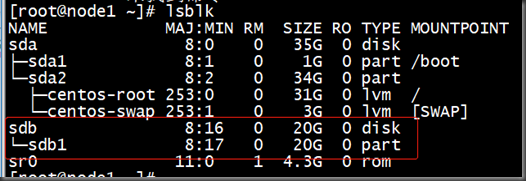

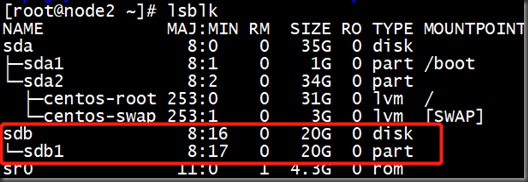

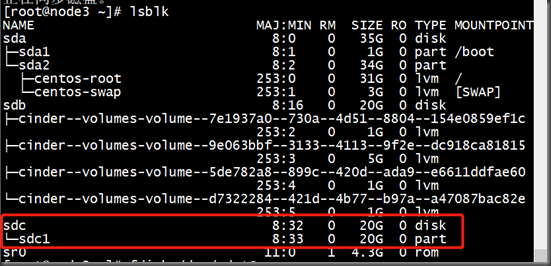

(8) On each node, partition the second disk, format it with an xfs file system, and mount it at /data:

[root@node1 ~]# fdisk /dev/sdb

[root@node2 ~]# fdisk /dev/sdb

[root@node3 ~]# fdisk /dev/sdc

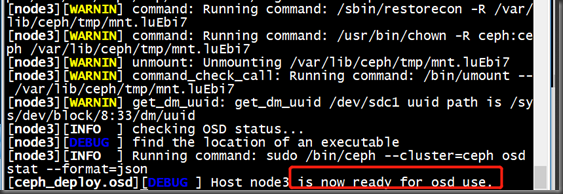

(9) Prepare the Object Storage Daemons (OSDs):

[cent@dlp ceph]$ ceph-deploy osd prepare node1:/dev/sdb1

[cent@dlp ceph]$ ceph-deploy osd prepare node2:/dev/sdb1

[cent@dlp ceph]$ ceph-deploy osd prepare node3:/dev/sdc1

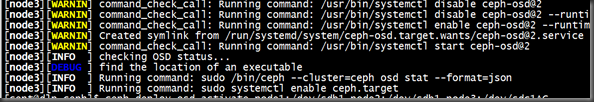

(10) Activate the Object Storage Daemons (OSDs):

[cent@dlp ceph]$ ceph-deploy osd activate node1:/dev/sdb1 node2:/dev/sdb1 node3:/dev/sdc1
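Since the prepare and activate steps take the same node:partition pairs, the pairs can be kept in one place and the commands derived from them. A minimal sketch that only prints the commands; `plan_osd_commands` is a made-up name, and the pairs are the ones used in this walkthrough.

```shell
# Print one "osd prepare" command per node:partition pair,
# followed by a single "osd activate" command covering all pairs.
plan_osd_commands() {
  local pair
  for pair in "$@"; do
    echo "ceph-deploy osd prepare $pair"
  done
  echo "ceph-deploy osd activate $*"
}

plan_osd_commands node1:/dev/sdb1 node2:/dev/sdb1 node3:/dev/sdc1
```

Keeping the list in a single call makes it harder to prepare one partition and activate another, for example on node3, where the disk is /dev/sdc rather than /dev/sdb.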

(11) From the deployment node, transfer the configuration file and admin keyring to the nodes:

[cent@dlp ceph]$ ceph-deploy admin dlp node1 node2 node3

[cent@dlp ceph]$ sudo chmod 644 /etc/ceph/ceph.client.admin.keyring

[root@node1 ~]# sudo chmod 644 /etc/ceph/ceph.client.admin.keyring

[root@node2 ~]# sudo chmod 644 /etc/ceph/ceph.client.admin.keyring

[root@node3 ~]# sudo chmod 644 /etc/ceph/ceph.client.admin.keyring

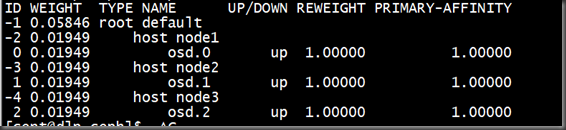

(12) Verify from any node in the Ceph cluster:

[cent@dlp ceph]$ ceph -s

[cent@dlp ceph]$ ceph osd tree
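Right after activation the cluster may need a little time to settle, so a script can poll `ceph health` instead of checking by hand. The sketch below assumes the first word of its output is HEALTH_OK, HEALTH_WARN, or HEALTH_ERR; the helper names, the retry count, and the delay are arbitrary choices for illustration.

```shell
# Map `ceph health` output to an exit code: 0 OK, 1 WARN, 2 ERR, 3 anything else.
health_code() {
  case "$1" in
    HEALTH_OK*)   return 0 ;;
    HEALTH_WARN*) return 1 ;;
    HEALTH_ERR*)  return 2 ;;
    *)            return 3 ;;
  esac
}

# Poll `ceph health` until it reports HEALTH_OK or the attempts run out.
# $1 = number of attempts (default 30), $2 = seconds between attempts (default 10).
wait_for_health_ok() {
  local attempts="${1:-30}" delay="${2:-10}" i=0
  while [ "$i" -lt "$attempts" ]; do
    if health_code "$(ceph health 2>/dev/null)"; then
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  return 1
}
```

For example, `wait_for_health_ok 12 5 && ceph osd tree` would wait up to about a minute before printing the OSD tree.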