Scraping Doutu meme images with Scrapy. Honestly, I based this on someone else's blog post and only changed a few things. Recommended link: "http://www.cnblogs.com/jiaoyu121/p/6992587.html"

First, create the project: `scrapy startproject doutu`

In Scrapy, you start by declaring what you want to scrape. This goes in items.py:

```python
import scrapy


class DoutuItem(scrapy.Item):
    # define the fields for your item here like:
    img_url = scrapy.Field()  # absolute URL of the image
    name = scrapy.Field()     # caption text, also used as the file name
```

Once the item is defined, write the main spider, doutula.py, in the spiders folder:

# -*- coding: utf-8 -*-

import scrapy

import os

import requests

from doutu.items import DoutuItem

class DoutulaSpider(scrapy.Spider):

name = "doutula"

allowed_domains = ["www.doutula.com"]

这是斗图网站

start_urls = ['https://www.doutula.com/photo/list/?page={}'.format(i) for i in range(1,847)]

斗图循环的页数

def parse(self, response):

i=0

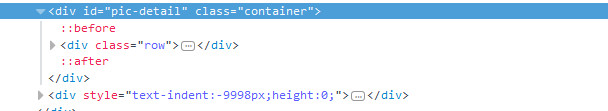

for content in response.xpath('//*[@id="pic-detail"]/div/div[1]/div[2]/a'):

这里的xpath是:

i+=1

item = DoutuItem()

item['img_url'] = 'http:'+ content.xpath('//img/@data-original').extract()[i]

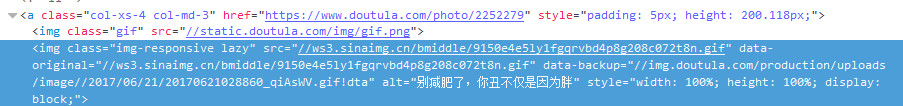

这里的xpath是图片的超链接:

item['name']=content.xpath('//p/text()').extract()[i]文中的extract()用来返回一个list(就是系统自带的那个) 里面是一些你提取的内容,[i]是结合前面的i的循环每次获取下一个标签内容,如果不这样设置,就会把全部的标签内容放入一个字典的值中。

这里是图片的名字:

try:

if not os.path.exists('doutu'):

os.makedirs('doutu')

r = requests.get(item['img_url'])

filename = 'doutu\{}'.format(item['name'])+item['img_url'][-4:]用来获取图片的名称,最后item['img_url'][-4:]是获取图片地址的最后四位这样就可以保证不同的文件格式使用各自的后缀。

with open(filename,'wb') as fo:

fo.write(r.content)

except:

print('Error')

yield item
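To see why the relative XPath matters, here is a stdlib-only sketch. It uses `xml.etree.ElementTree` in place of Scrapy's selectors and a small hypothetical page (`PAGE`, not the real doutula.com markup). `counter_indexed` mimics collecting whole-page lists and indexing them with a counter that is incremented before use, as with `extract()[i]`. `per_link` does the lookups relative to each `<a>`. `image_filename` is a hypothetical helper for the name-plus-extension step. All of these names are illustrative and are not part of Scrapy.

```python
import os
import xml.etree.ElementTree as ET
from urllib.parse import urljoin, urlparse

# Hypothetical, simplified list page (XML-valid stand-in, not the real site HTML)
PAGE = """<div>
  <a><img data-original="//img.example.com/a.jpg"/><p>first</p></a>
  <a><img data-original="//img.example.com/b.jpeg"/><p>second</p></a>
  <p>footer</p>
</div>"""
BASE = "https://www.doutula.com/photo/list/?page=1"


def counter_indexed(root):
    """Whole-page lists indexed by a counter that is incremented before use."""
    imgs = [img.get("data-original") for img in root.iter("img")]
    names = [p.text for p in root.iter("p")]
    pairs, i = [], 0
    for _ in root.iter("a"):
        i += 1
        if i >= len(imgs) or i >= len(names):
            pairs.append(None)  # extract()[i] would raise IndexError here
            continue
        pairs.append((imgs[i], names[i]))
    return pairs


def per_link(root, base_url):
    """Relative lookups: each query only sees the current <a>."""
    pairs = []
    for a in root.iter("a"):
        img = a.find(".//img")
        p = a.find(".//p")
        if img is None or not img.get("data-original"):
            continue
        name = (p.text or "").strip() if p is not None else ""
        # urljoin resolves "//host/path" against the page's https scheme
        pairs.append((urljoin(base_url, img.get("data-original")), name))
    return pairs


def image_filename(folder, name, url):
    """Name plus the full extension of the URL path (query string ignored)."""
    ext = os.path.splitext(urlparse(url).path)[1]
    return os.path.join(folder, name + ext)


if __name__ == "__main__":
    root = ET.fromstring(PAGE)
    print(counter_indexed(root))  # [('//img.example.com/b.jpeg', 'second'), None]
    print(per_link(root, BASE))
    # [('https://img.example.com/a.jpg', 'first'), ('https://img.example.com/b.jpeg', 'second')]
    print(image_filename("doutu", "second", "https://img.example.com/b.jpeg"))  # doutu/second.jpeg on POSIX
```

With the counter, the first meme is skipped and the last link runs past the end of the list. The per-link version pairs each image with its own caption.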

Next, edit the settings file, settings.py:

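```python
BOT_NAME = 'doutu'

SPIDER_MODULES = ['doutu.spiders']
NEWSPIDER_MODULE = 'doutu.spiders'

# Turn off the built-in User-Agent middleware and use the rotating one from middlewares.py
DOWNLOADER_MIDDLEWARES = {
    'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware': None,
    'doutu.middlewares.RotateUserAgentMiddleware': 400,
}

ROBOTSTXT_OBEY = False   # do not fetch or obey robots.txt
CONCURRENT_REQUESTS = 16
DOWNLOAD_DELAY = 0.2     # seconds to wait between requests to the same site
COOKIES_ENABLED = False
```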
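As I read the Scrapy docs, `DOWNLOAD_DELAY` is enforced per site (per download slot). With `RANDOMIZE_DOWNLOAD_DELAY` (on by default), each wait is drawn between 0.5× and 1.5× the configured value, so `CONCURRENT_REQUESTS = 16` does not let the list pages skip the delay. Below is a rough sketch of the waiting time alone for the 846 list pages. It assumes about one delay per request and ignores network time and the blocking image downloads. `delay_range` and `list_page_time` are illustrative helpers, not Scrapy functions.

```python
PAGES = 846           # start_urls covers range(1, 847)
DOWNLOAD_DELAY = 0.2  # seconds, from settings.py


def delay_range(delay):
    """Per-request wait range when RANDOMIZE_DOWNLOAD_DELAY is on (Scrapy's default)."""
    return 0.5 * delay, 1.5 * delay


def list_page_time(pages, delay):
    """Rough (min, expected, max) seconds spent only waiting between list-page requests."""
    low, high = delay_range(delay)
    # The random wait is uniform over [low, high], so its mean is the configured delay
    return pages * low, pages * delay, pages * high


if __name__ == "__main__":
    lo, mean, hi = list_page_time(PAGES, DOWNLOAD_DELAY)
    print(f"{lo:.1f}s / {mean:.1f}s / {hi:.1f}s")  # 84.6s / 169.2s / 253.8s
```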

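Next, configure middlewares.py. Together with the `DOWNLOADER_MIDDLEWARES` setting in settings.py, it picks a random User-Agent for each request, which helps somewhat against getting banned. Add the code below to the existing file. You can add more strings to `user_agent_list`.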

```python
import random

from scrapy.downloadermiddlewares.useragent import UserAgentMiddleware


class RotateUserAgentMiddleware(UserAgentMiddleware):
    # Candidate User-Agent strings; one is picked at random for each request
    user_agent_list = [
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/22.0.1207.1 Safari/537.1",
        "Mozilla/5.0 (X11; CrOS i686 2268.111.0) AppleWebKit/536.11 (KHTML, like Gecko) Chrome/20.0.1132.57 Safari/536.11",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.6 (KHTML, like Gecko) Chrome/20.0.1092.0 Safari/536.6",
        "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.6 (KHTML, like Gecko) Chrome/20.0.1090.0 Safari/536.6",
        "Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/19.77.34.5 Safari/537.1",
        "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/536.5 (KHTML, like Gecko) Chrome/19.0.1084.9 Safari/536.5",
        "Mozilla/5.0 (Windows NT 6.0) AppleWebKit/536.5 (KHTML, like Gecko) Chrome/19.0.1084.36 Safari/536.5",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",
        "Mozilla/5.0 (Windows NT 5.1) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",
        "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_8_0) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",
        "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1062.0 Safari/536.3",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1062.0 Safari/536.3",
        "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",
        "Mozilla/5.0 (Windows NT 6.1) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",
        "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 (KHTML, like Gecko) Chrome/19.0.1061.0 Safari/536.3",
    ]

    def __init__(self, user_agent=''):
        self.user_agent = user_agent

    def process_request(self, request, spider):
        ua = random.choice(self.user_agent_list)
        if ua:
            spider.logger.debug('Using User-Agent: %s', ua)
            # setdefault leaves a User-Agent already set on the request untouched
            request.headers.setdefault('User-Agent', ua)
```
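The rotation logic in the middleware above can be checked without running a crawl. This is a minimal stdlib sketch that assumes a hypothetical `FakeRequest` class whose `headers` attribute is a plain dict. Scrapy's real `request.headers` is a case-insensitive `Headers` object, so this is only an approximation. `USER_AGENTS` and `rotate_user_agent` are illustrative names, not part of Scrapy.

```python
import random

# Hypothetical short list; the middleware above keeps its own user_agent_list
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/22.0.1207.1 Safari/537.1",
    "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/536.5 (KHTML, like Gecko) Chrome/19.0.1084.9 Safari/536.5",
]


class FakeRequest:
    """Hypothetical stand-in for scrapy.Request: only carries a plain dict of headers."""

    def __init__(self, headers=None):
        self.headers = dict(headers or {})


def rotate_user_agent(request, rng=random):
    """Same logic as process_request: random pick, applied with setdefault."""
    ua = rng.choice(USER_AGENTS)
    # setdefault only fills the header when the request does not already have one
    request.headers.setdefault("User-Agent", ua)
    return request


if __name__ == "__main__":
    rng = random.Random(42)  # seeded so runs are repeatable
    print(rotate_user_agent(FakeRequest(), rng).headers["User-Agent"])
    print(rotate_user_agent(FakeRequest({"User-Agent": "my-ua"}), rng).headers["User-Agent"])  # my-ua
```

Because the middleware uses `setdefault`, a spider can still pin a specific User-Agent on an individual request by passing `headers={'User-Agent': ...}` to `scrapy.Request`.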

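Finally, write a script to start the crawl. Running it from the project directory is equivalent to typing `scrapy crawl doutula` in a terminal: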

```python
from scrapy.cmdline import execute

execute(['scrapy', 'crawl', 'doutula'])
```

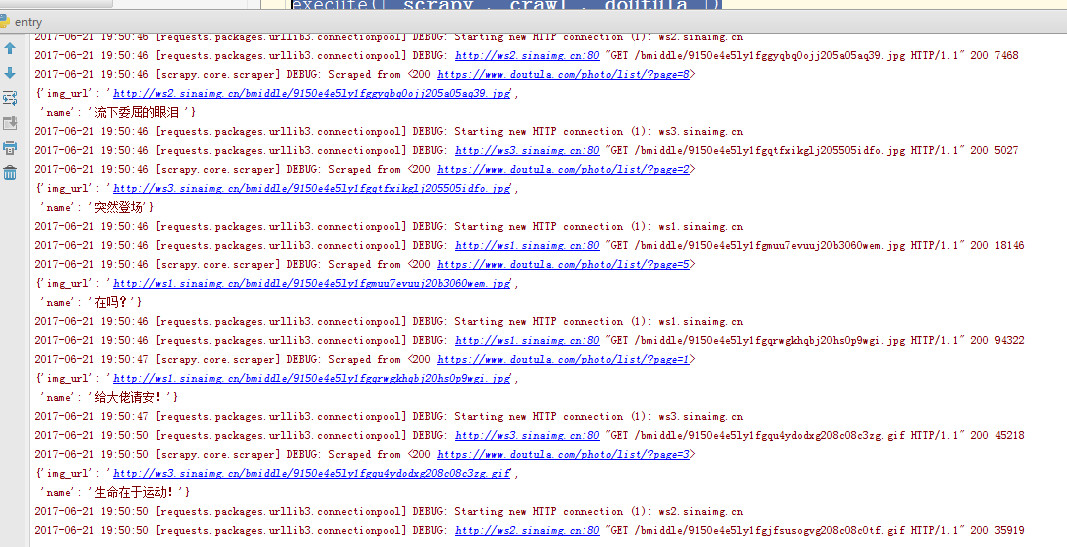

下面是见证奇迹的时刻啦:

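This post is largely an excerpt copied from the original, but I worked through it myself and rewrote most of it in a way that makes sense to me.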