1. Preface

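In a Spark Streaming + Kafka pipeline, the usual pattern is that Spark Streaming consumes data from Kafka and writes it to HDFS, and Hive tables are then mapped onto those files to build a data warehouse. Spark SQL can also write to Hive directly. When writing to HDFS, an ordinary RDD uses the saveAsTextFile() API, while a PairRDD uses saveAsHadoopFile(). Newer Spark versions may have merged the two. When either API is used in Spark Streaming without customization, newly written HDFS files overwrite the files written by earlier batches. The sections below reproduce the problem.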

2. Test code: saveAsTextFile() lets new HDFS files overwrite previously written ones

```java
package com.surfilter.dp.timer.job;

import com.surfilter.dp.timer.parse.BaseParams;
import com.surfilter.dp.timer.parse.ParameterParse;
import kafka.message.MessageAndMetadata;
import kafka.serializer.StringDecoder;
import org.apache.spark.SparkConf;
import org.apache.spark.api.java.JavaRDD;
import org.apache.spark.api.java.JavaSparkContext;
import org.apache.spark.api.java.function.FlatMapFunction;
import org.apache.spark.api.java.function.Function;
import org.apache.spark.api.java.function.VoidFunction;
import org.apache.spark.streaming.Seconds;
import org.apache.spark.streaming.api.java.JavaInputDStream;
import org.apache.spark.streaming.api.java.JavaStreamingContext;
import org.apache.spark.streaming.kafka.KafkaUtils;

import java.text.SimpleDateFormat;
import java.util.ArrayList;
import java.util.Date;
import java.util.Iterator;
import java.util.List;

public class TestStreaming extends BaseParams {

    public static void main(String[] args) {
        String totalParameterString = null;
        if (null != args && args.length > 0) {
            totalParameterString = args[0];
        }
        if (null != totalParameterString && !"".equals(totalParameterString)) {
            ParameterParse parameterParse = new ParameterParse(totalParameterString);
            SparkConf conf = new SparkConf().setAppName(parameterParse.getSpark_app_name());
            setSparkConf(parameterParse, conf);
            JavaSparkContext sparkContext = new JavaSparkContext(conf);
            JavaStreamingContext streamingContext = new JavaStreamingContext(sparkContext,
                    Seconds.apply(Long.parseLong(parameterParse.getSpark_streaming_duration())));

            // Direct Kafka stream that keeps only the message payload of each record.
            JavaInputDStream<String> dStream = KafkaUtils.createDirectStream(streamingContext, String.class, String.class,
                    StringDecoder.class, StringDecoder.class, String.class,
                    generatorKafkaParams(parameterParse), generatorTopicOffsets(parameterParse, "test_20200509"),
                    new Function<MessageAndMetadata<String, String>, String>() {
                        private static final long serialVersionUID = 1L;

                        @Override
                        public String call(MessageAndMetadata<String, String> msgAndMd) throws Exception {
                            return msgAndMd.message();
                        }
                    });

            dStream.foreachRDD(new VoidFunction<JavaRDD<String>>() {
                @Override
                public void call(JavaRDD<String> rdd) throws Exception {
                    // Pass-through step: copies every message of a partition unchanged.
                    JavaRDD<String> saveHdfsRdd = rdd.mapPartitions(new FlatMapFunction<Iterator<String>, String>() {
                        @Override
                        public Iterable<String> call(Iterator<String> iterator) throws Exception {
                            List<String> returnList = new ArrayList<>();
                            while (iterator.hasNext()) {
                                returnList.add(iterator.next());
                            }
                            return returnList;
                        }
                    });

                    // Hourly Hive partition directory; dt and hour come from the same timestamp.
                    Date now = new Date();
                    String dt = new SimpleDateFormat("yyyyMMdd").format(now);
                    String hour = new SimpleDateFormat("HH").format(now);
                    String savePath = "hdfs://gawh220:8020/user/hive/warehouse/rzx_standard.db/meijs_test/dt=" + dt + "/hour=" + hour + "/";

                    // Every batch in the same hour writes its part files into this same directory.
                    saveHdfsRdd.saveAsTextFile(savePath);
                }
            });

            streamingContext.start();
            streamingContext.awaitTermination();
            streamingContext.close();
        }
    }
}
```
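The hourly partition directory used in the listing can be factored into a small helper. The following is only a sketch in plain Java (no Spark dependency); the class name `PartitionPath` and the method `build` are hypothetical and do not appear in the original job. It derives `dt` and `hour` from one timestamp, so the two fields cannot disagree when a batch runs right at an hour boundary.

```java
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;

// Hypothetical helper, not part of the original job.
class PartitionPath {
    private static final DateTimeFormatter DAY = DateTimeFormatter.ofPattern("yyyyMMdd");
    private static final DateTimeFormatter HOUR = DateTimeFormatter.ofPattern("HH");

    // Builds "<tableDir>/dt=<yyyyMMdd>/hour=<HH>/" from a single timestamp.
    static String build(String tableDir, LocalDateTime time) {
        return tableDir + "/dt=" + time.format(DAY) + "/hour=" + time.format(HOUR) + "/";
    }

    public static void main(String[] args) {
        String tableDir = "hdfs://gawh220:8020/user/hive/warehouse/rzx_standard.db/meijs_test";
        System.out.println(build(tableDir, LocalDateTime.now()));
    }
}
```

Inside `foreachRDD`, the result of `build(...)` would take the place of the hand-assembled `savePath` string.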

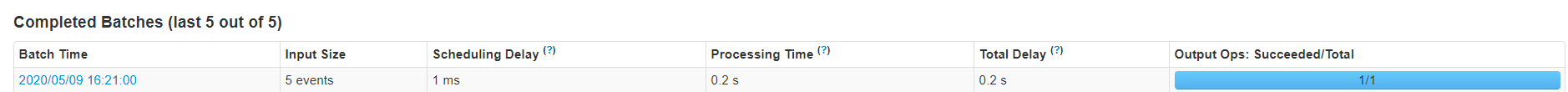

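Run the Spark Streaming job on YARN and watch it. From the command line, manually produce some test data to the test_20200509 topic: Spark Streaming detects it immediately and the batch completes.

Then check the files written to HDFS.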

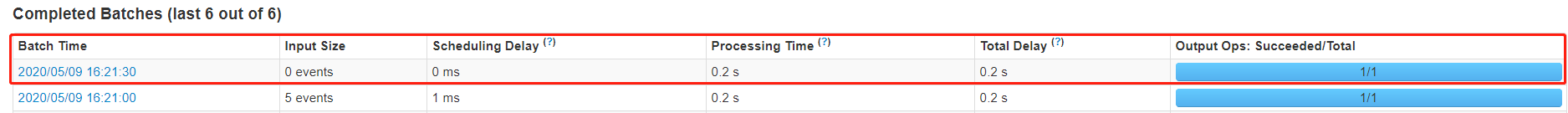

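The HDFS files were written correctly and contain data. Now stop producing data from the command line and wait until Spark Streaming starts and finishes a new batch with 0 records.

Check the HDFS files again: the files are still there, but their content is empty. This shows that the first batch, which had data, was overwritten.

Why was it overwritten?

Spark Streaming pulls data from Kafka batch by batch at the configured interval, and each batch is written to HDFS one file per partition, as in the figure below.

Three partitions are written as three files. Every batch generates its file names automatically in the same way (part-00000, part-00001, and so on) and writes to them, so the latest batch overwrites the previous one.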
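The effect can be imitated without a cluster. The following is a minimal sketch in plain Java, not Spark code: a map stands in for the HDFS output directory, and the class and method names are made up for illustration. It assumes only what is described above, namely that each partition of a batch is written to a file whose name depends on the partition index alone.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Illustration only: a Map plays the role of the output directory.
class PartFileOverwriteDemo {
    // File name derived from the partition index only, e.g. part-00000.
    static String partFileName(int partitionIndex) {
        return String.format("part-%05d", partitionIndex);
    }

    // Writes one batch: one file per partition, replacing any file with the same name.
    static void writeBatch(Map<String, List<String>> directory, List<List<String>> partitions) {
        for (int i = 0; i < partitions.size(); i++) {
            directory.put(partFileName(i), new ArrayList<>(partitions.get(i)));
        }
    }

    public static void main(String[] args) {
        Map<String, List<String>> directory = new LinkedHashMap<>();
        // Batch 1: three partitions with one record each.
        writeBatch(directory, Arrays.asList(
                Arrays.asList("a"), Arrays.asList("b"), Arrays.asList("c")));
        // Batch 2: same three partitions, but no records.
        writeBatch(directory, Arrays.asList(
                Collections.<String>emptyList(),
                Collections.<String>emptyList(),
                Collections.<String>emptyList()));
        // prints {part-00000=[], part-00001=[], part-00002=[]}
        System.out.println(directory);
    }
}
```

Because the second batch reuses the names of the first, the directory ends up with the same three files, all empty, which matches what the test above showed on HDFS.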

3. Avoiding the overwrite with saveAsHadoopFile() and a custom output file name

```java
package com.surfilter.dp.timer.job;

import com.surfilter.dp.timer.parse.BaseParams;
import com.surfilter.dp.timer.parse.ParameterParse;
import kafka.message.MessageAndMetadata;
import kafka.serializer.StringDecoder;
import org.apache.hadoop.mapred.lib.MultipleTextOutputFormat;
import org.apache.spark.SparkConf;
import org.apache.spark.api.java.JavaPairRDD;
import org.apache.spark.api.java.JavaRDD;
import org.apache.spark.api.java.JavaSparkContext;
import org.apache.spark.api.java.function.Function;
import org.apache.spark.api.java.function.PairFlatMapFunction;
import org.apache.spark.api.java.function.VoidFunction;
import org.apache.spark.streaming.Seconds;
import org.apache.spark.streaming.api.java.JavaInputDStream;
import org.apache.spark.streaming.api.java.JavaStreamingContext;
import org.apache.spark.streaming.kafka.KafkaUtils;
import scala.Tuple2;

import java.text.SimpleDateFormat;
import java.util.ArrayList;
import java.util.Date;
import java.util.Iterator;
import java.util.List;

public class TestStreaming extends BaseParams {

    public static void main(String[] args) {
        String totalParameterString = null;
        if (null != args && args.length > 0) {
            totalParameterString = args[0];
        }
        if (null != totalParameterString && !"".equals(totalParameterString)) {
            ParameterParse parameterParse = new ParameterParse(totalParameterString);
            SparkConf conf = new SparkConf().setAppName(parameterParse.getSpark_app_name());
            setSparkConf(parameterParse, conf);
            JavaSparkContext sparkContext = new JavaSparkContext(conf);
            JavaStreamingContext streamingContext = new JavaStreamingContext(sparkContext,
                    Seconds.apply(Long.parseLong(parameterParse.getSpark_streaming_duration())));

            // Direct Kafka stream that keeps only the message payload of each record.
            JavaInputDStream<String> dStream = KafkaUtils.createDirectStream(streamingContext, String.class, String.class,
                    StringDecoder.class, StringDecoder.class, String.class,
                    generatorKafkaParams(parameterParse), generatorTopicOffsets(parameterParse, "test_20200509"),
                    new Function<MessageAndMetadata<String, String>, String>() {
                        private static final long serialVersionUID = 1L;

                        @Override
                        public String call(MessageAndMetadata<String, String> msgAndMd) throws Exception {
                            return msgAndMd.message();
                        }
                    });

            dStream.foreachRDD(new VoidFunction<JavaRDD<String>>() {
                @Override
                public void call(JavaRDD<String> rdd) {
                    // saveAsHadoopFile() needs key/value pairs: the message is the key, the value is empty.
                    JavaPairRDD<String, String> pairRDD = rdd.mapPartitionsToPair(
                            new PairFlatMapFunction<Iterator<String>, String, String>() {
                                @Override
                                public Iterable<Tuple2<String, String>> call(Iterator<String> iterator) {
                                    List<Tuple2<String, String>> returnTuple = new ArrayList<>();
                                    while (iterator.hasNext()) {
                                        returnTuple.add(new Tuple2<>(iterator.next(), ""));
                                    }
                                    return returnTuple;
                                }
                            });

                    // Hourly Hive partition directory; dt and hour come from the same timestamp.
                    Date now = new Date();
                    String dt = new SimpleDateFormat("yyyyMMdd").format(now);
                    String hour = new SimpleDateFormat("HH").format(now);
                    String savePath = "hdfs://gawh220:8020/user/hive/warehouse/rzx_standard.db/meijs_test/dt=" + dt + "/hour=" + hour + "/";

                    // The custom output format decides the name of each output file.
                    pairRDD.saveAsHadoopFile(savePath, String.class, String.class, RDDMultipleTextOutputFormat.class);
                }
            });

            streamingContext.start();
            streamingContext.awaitTermination();
            streamingContext.close();
        }
    }
}

class RDDMultipleTextOutputFormat extends MultipleTextOutputFormat<Object, Object> {

    // Static field: initialized once per JVM, when this class is first loaded.
    private static String system_time = System.currentTimeMillis() + "";

    // Prefixes the default leaf name (e.g. part-00000) with the timestamp above.
    @Override
    protected String generateFileNameForKeyValue(Object key, Object value, String name) {
        name = system_time + "-" + name;
        return super.generateFileNameForKeyValue(key, value, name);
    }
}
```
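One detail of `RDDMultipleTextOutputFormat` is worth keeping in mind: `system_time` is a static field, so Java initializes it once per JVM when the class is first loaded, not once per batch. All batches written by the same executor process therefore get the same prefix. The sketch below is plain Java with hypothetical names (`FileNamePrefixes`, `withStaticPrefix`, `withBatchPrefix`) and only contrasts that behavior with a prefix passed in per batch. How a batch time would reach a real output format (for example through the job configuration) is left open here.

```java
// Illustration only: mimics the naming logic, without Hadoop or Spark.
class FileNamePrefixes {
    // Evaluated once, when this class is first loaded in the JVM,
    // in the same way as the static field in RDDMultipleTextOutputFormat.
    private static final String LOADED_AT = String.valueOf(System.currentTimeMillis());

    // Same prefix for every call made in this JVM.
    static String withStaticPrefix(String leafName) {
        return LOADED_AT + "-" + leafName;
    }

    // Hypothetical alternative: the caller supplies the batch time explicitly.
    static String withBatchPrefix(long batchTimeMs, String leafName) {
        return batchTimeMs + "-" + leafName;
    }

    public static void main(String[] args) throws InterruptedException {
        String first = withStaticPrefix("part-00000");
        Thread.sleep(5); // simulate a later batch in the same JVM
        String second = withStaticPrefix("part-00000");
        System.out.println(first.equals(second)); // prints true

        // Two different batch times give two different file names.
        System.out.println(withBatchPrefix(1589000000000L, "part-00000"));
        System.out.println(withBatchPrefix(1589000010000L, "part-00000"));
    }
}
```

If distinct file names per batch are required regardless of which executor writes them, a per-batch value is the safer source for the prefix than a static timestamp.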

From the command line, manually produce some test data to the test_20200509 topic: Spark Streaming detects it immediately and the batch completes.

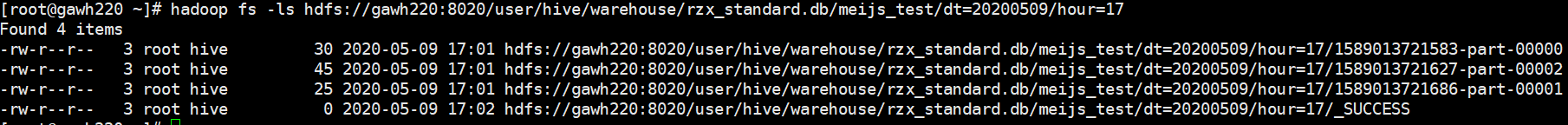

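Then check the files written to HDFS.

The HDFS files were written correctly and contain data. Now stop producing data from the command line and wait until Spark Streaming starts and finishes a new batch with 0 records.

Check the HDFS files again: no new HDFS file was created.

Produce another piece of test data to the test_20200509 topic from the command line: Spark Streaming again detects it immediately and the batch completes.

Checking HDFS afterwards, the newly written data was appended to the earlier files, and the files contain data. If an earlier file exceeds the size configured in HDFS, a new file is added instead.

Note: this approach not only avoids the overwrite problem but also avoids producing a large number of small files.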