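1 Rook Overview

1.1 Introduction to Ceph

Ceph is a highly scalable distributed storage solution that provides object, file, and block storage. Each storage node runs a Ceph OSD (Object Storage Daemon) process together with the file system in which Ceph stores its objects. A Ceph cluster also runs Ceph MON (monitor) daemons, which keep the cluster highly available.

For more on Ceph, see: https://www.cnblogs.com/itzgr/category/1382602.html

1.2 Introduction to Rook

Rook is an open-source cloud-native storage orchestrator. It provides the platform, framework, and support that let a variety of storage solutions integrate natively with cloud-native environments. It currently focuses on file, block, and object storage services for cloud-native environments, and implements a self-managing, self-scaling, self-healing distributed storage service.

Rook automates deployment, bootstrapping, configuration, provisioning, scaling up and down, upgrades, migration, disaster recovery, monitoring, and resource management. To do all of this, Rook relies on the underlying container orchestration platform, such as Kubernetes or CoreOS.

Rook currently supports deploying Ceph, NFS, Minio Object Store, EdgeFS, Cassandra, and CockroachDB storage.

How Rook works: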

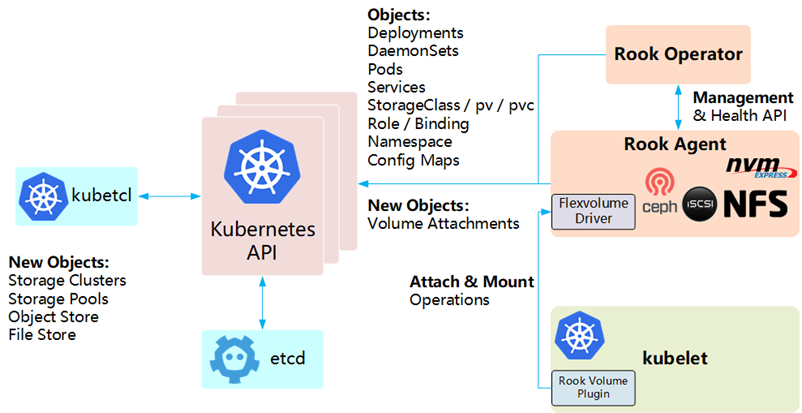

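- Rook provides a volume plugin that extends the Kubernetes storage system, so that Pods can mount block devices and file systems managed by Rook through the kubelet.

- The Rook Operator starts and monitors the entire underlying storage system, such as the Ceph Pods and Ceph OSDs, and also manages CRDs, object stores, and file systems.

- The Rook Agent runs as a Pod on every Kubernetes node. Each agent Pod configures a FlexVolume driver that integrates with the Kubernetes volume control framework. Node-level operations such as adding storage devices, mounting, formatting, and removing storage are all carried out by this agent.

For more information, see the official sites: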

https://rook.io

https://ceph.com/

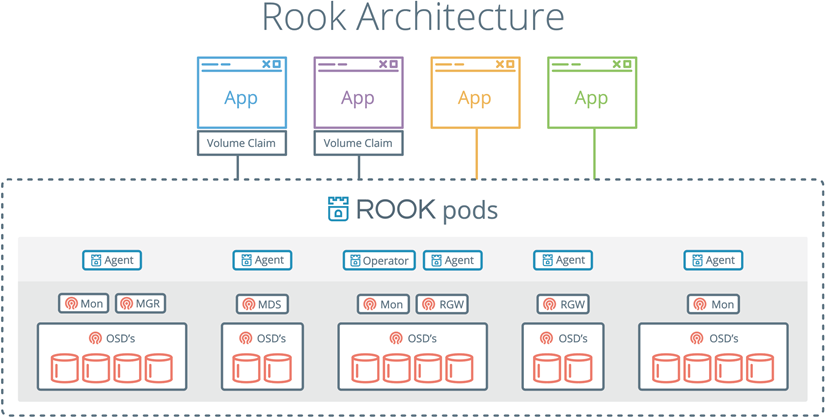

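1.3 Rook Architecture

The Rook architecture is as follows:

The architecture of Rook integrated with Kubernetes is as follows:

2 Rook Deployment

2.1 Planning

Note: this lab does not cover deploying Kubernetes itself; for that, see "Appendix 012. Deploying Highly Available Kubernetes with Kubeadm".

Raw disk planning

2.2 Getting the YAML Files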

[root@k8smaster01 ~]# git clone https://github.com/rook/rook.git

2.3 Deploying the Rook Operator

This lab uses the three nodes k8snode01 through k8snode03 as storage nodes, so the following changes are required:

[root@k8smaster01 ceph]# kubectl taint node k8smaster01 node-role.kubernetes.io/master="":NoSchedule

[root@k8smaster01 ceph]# kubectl taint node k8smaster02 node-role.kubernetes.io/master="":NoSchedule

[root@k8smaster01 ceph]# kubectl taint node k8smaster03 node-role.kubernetes.io/master="":NoSchedule

[root@k8smaster01 ceph]# kubectl label nodes {k8snode01,k8snode02,k8snode03} ceph-osd=enabled

[root@k8smaster01 ceph]# kubectl label nodes {k8snode01,k8snode02,k8snode03} ceph-mon=enabled

[root@k8smaster01 ceph]# kubectl label nodes k8snode01 ceph-mgr=enabled

Note: in the current version of Rook, the mgr can run on only one node.
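Before continuing, it can help to confirm that the labels landed on the intended nodes. The following is a minimal sketch, assuming the label keys used above; `nodes_with_label` and `expect_label_count` are hypothetical helper names, not part of Rook or kubectl.

```shell
# Hypothetical helper: print the names of all nodes carrying a given label.
# Uses only `kubectl get nodes -l <selector> -o name`, which prints node/<name>.
nodes_with_label() {
  local selector="$1"
  kubectl get nodes -l "$selector" -o name | sed 's|^node/||'
}

# Hypothetical helper: succeed only if exactly <expected> nodes carry the label.
expect_label_count() {
  local selector="$1" expected="$2" actual
  # grep -c exits non-zero when it counts 0 lines, so tolerate that case.
  actual=$(nodes_with_label "$selector" | grep -c . || true)
  [ "$actual" -eq "$expected" ]
}
```

For the layout in this lab, `expect_label_count ceph-osd=enabled 3` and `expect_label_count ceph-mgr=enabled 1` should both succeed.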

[root@k8smaster01 ~]# cd /root/rook/cluster/examples/kubernetes/ceph/

[root@k8smaster01 ceph]# kubectl create -f common.yaml

[root@k8smaster01 ceph]# kubectl create -f operator.yaml

Explanation: the commands above create the base resources (such as service accounts), and rook-ceph-operator then creates rook-ceph-agent and rook-discover on every node.
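Rather than polling by hand, the operator Pod can be waited on with `kubectl wait`. This is a sketch only: it assumes the operator Pod carries the label `app=rook-ceph-operator` in the `rook-ceph` namespace, and `wait_for_operator` is a hypothetical wrapper name.

```shell
# Hypothetical wrapper: block until the Rook operator Pod reports Ready,
# or fail once the timeout (default 300s) expires.
wait_for_operator() {
  local timeout="${1:-300s}"
  kubectl -n rook-ceph wait --for=condition=Ready pod \
    -l app=rook-ceph-operator --timeout="$timeout"
}
```

Calling `wait_for_operator 120s` before moving on to the cluster configuration avoids creating the CephCluster while the operator is still starting.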

2.4 Configuring the Cluster

[root@k8smaster01 ceph]# vi cluster.yaml

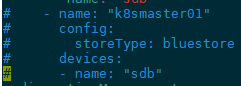

    apiVersion: ceph.rook.io/v1
    kind: CephCluster
    metadata:
      name: rook-ceph
      namespace: rook-ceph
    spec:
      cephVersion:
        image: ceph/ceph:v14.2.4-20190917
        allowUnsupported: false
      dataDirHostPath: /var/lib/rook
      skipUpgradeChecks: false
      mon:
        count: 3
        allowMultiplePerNode: false
      dashboard:
        enabled: true
        ssl: true
      monitoring:
        enabled: false
        rulesNamespace: rook-ceph
      network:
        hostNetwork: false
      rbdMirroring:
        workers: 0
      placement:                        # node affinity pins each daemon to the labeled storage nodes
    #    all:
    #      nodeAffinity:
    #        requiredDuringSchedulingIgnoredDuringExecution:
    #          nodeSelectorTerms:
    #          - matchExpressions:
    #            - key: role
    #              operator: In
    #              values:
    #              - storage-node
    #      tolerations:
    #      - key: storage-node
    #        operator: Exists
        mon:
          nodeAffinity:
            requiredDuringSchedulingIgnoredDuringExecution:
              nodeSelectorTerms:
              - matchExpressions:
                - key: ceph-mon
                  operator: In
                  values:
                  - enabled
          tolerations:
          - key: ceph-mon
            operator: Exists
        osd:
          nodeAffinity:
            requiredDuringSchedulingIgnoredDuringExecution:
              nodeSelectorTerms:
              - matchExpressions:
                - key: ceph-osd
                  operator: In
                  values:
                  - enabled
          tolerations:
          - key: ceph-osd
            operator: Exists
        mgr:
          nodeAffinity:
            requiredDuringSchedulingIgnoredDuringExecution:
              nodeSelectorTerms:
              - matchExpressions:
                - key: ceph-mgr
                  operator: In
                  values:
                  - enabled
          tolerations:
          - key: ceph-mgr
            operator: Exists
      annotations:
      resources:
      removeOSDsIfOutAndSafeToRemove: false
      storage:
        useAllNodes: false              # do not use every node
        useAllDevices: false            # do not use every device
        deviceFilter: sdb
        config:
          metadataDevice:
          databaseSizeMB: "1024"
          journalSizeMB: "1024"
        nodes:
        - name: "k8snode01"             # storage node host name
          config:
            storeType: bluestore        # bluestore, i.e. a raw disk
          devices:
          - name: "sdb"                 # use disk sdb
        - name: "k8snode02"
          config:
            storeType: bluestore
          devices:
          - name: "sdb"
        - name: "k8snode03"
          config:
            storeType: bluestore
          devices:
          - name: "sdb"
      disruptionManagement:
        managePodBudgets: false
        osdMaintenanceTimeout: 30
        manageMachineDisruptionBudgets: false
        machineDisruptionBudgetNamespace: openshift-machine-api

Note: for more CephCluster CRD configuration options, see: https://github.com/rook/rook/blob/master/Documentation/ceph-cluster-crd.md.

https://blog.gmem.cc/rook-based-k8s-storage-solution

2.5 Pulling the Images

Image pulls may fail from networks in mainland China, so it is recommended to pull the following images in advance:

docker pull rook/ceph:master

docker pull quay.azk8s.cn/cephcsi/cephcsi:v1.2.2

docker pull quay.azk8s.cn/k8scsi/csi-node-driver-registrar:v1.1.0

docker pull quay.azk8s.cn/k8scsi/csi-provisioner:v1.4.0

docker pull quay.azk8s.cn/k8scsi/csi-attacher:v1.2.0

docker pull quay.azk8s.cn/k8scsi/csi-snapshotter:v1.2.2

docker tag quay.azk8s.cn/cephcsi/cephcsi:v1.2.2 quay.io/cephcsi/cephcsi:v1.2.2

docker tag quay.azk8s.cn/k8scsi/csi-node-driver-registrar:v1.1.0 quay.io/k8scsi/csi-node-driver-registrar:v1.1.0

docker tag quay.azk8s.cn/k8scsi/csi-provisioner:v1.4.0 quay.io/k8scsi/csi-provisioner:v1.4.0

docker tag quay.azk8s.cn/k8scsi/csi-attacher:v1.2.0 quay.io/k8scsi/csi-attacher:v1.2.0

docker tag quay.azk8s.cn/k8scsi/csi-snapshotter:v1.2.2 quay.io/k8scsi/csi-snapshotter:v1.2.2

docker rmi quay.azk8s.cn/cephcsi/cephcsi:v1.2.2

docker rmi quay.azk8s.cn/k8scsi/csi-node-driver-registrar:v1.1.0

docker rmi quay.azk8s.cn/k8scsi/csi-provisioner:v1.4.0

docker rmi quay.azk8s.cn/k8scsi/csi-attacher:v1.2.0

docker rmi quay.azk8s.cn/k8scsi/csi-snapshotter:v1.2.2

2.6 Deploying the Cluster

[root@k8smaster01 ceph]# kubectl create -f cluster.yaml

[root@k8smaster01 ceph]# kubectl logs -f -n rook-ceph rook-ceph-operator-cb47c46bc-pszfh #view the deployment log

[root@k8smaster01 ceph]# kubectl get pods -n rook-ceph -o wide #allow some time; some transient Pods may come and go
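Because intermediate Pods come and go during deployment, counting the daemons that have actually reached Running is a more reliable progress check than eyeballing the list. A minimal sketch, assuming the Pod labels `app=rook-ceph-mon`, `app=rook-ceph-mgr`, and `app=rook-ceph-osd`; `count_running` is a hypothetical helper name.

```shell
# Hypothetical helper: count Pods with the given label whose STATUS column
# is "Running". With --no-headers the columns are NAME READY STATUS RESTARTS AGE.
count_running() {
  local label="$1"
  kubectl -n rook-ceph get pods -l "$label" --no-headers \
    | awk '$3 == "Running" { n++ } END { print n + 0 }'
}
```

With the cluster.yaml from 2.4, the deployment has converged once `count_running app=rook-ceph-mon` and `count_running app=rook-ceph-osd` both print 3.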

Note: if the deployment fails, run the following on the master node:

[root@k8smaster01 ceph]# kubectl delete -f ./

Then run the following cleanup on every node:

rm -rf /var/lib/rook

/dev/mapper/ceph-*

dmsetup ls

dmsetup remove_all

dd if=/dev/zero of=/dev/sdb bs=512k count=1

wipefs -af /dev/sdb

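2.7 Deploying the Toolbox

The toolbox is a container that bundles Rook's tool set. Its commands are used to debug and test Rook, and ad hoc Ceph test operations are generally run inside this container.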

[root@k8smaster01 ceph]# kubectl create -f toolbox.yaml

[root@k8smaster01 ceph]# kubectl -n rook-ceph get pod -l "app=rook-ceph-tools"

NAME READY STATUS RESTARTS AGE

rook-ceph-tools-59b8cccb95-9rl5l 1/1 Running 0 15s

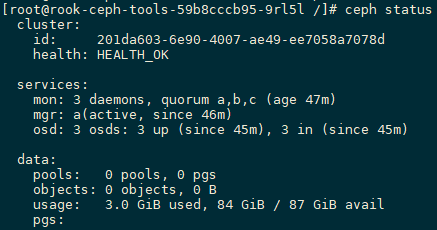

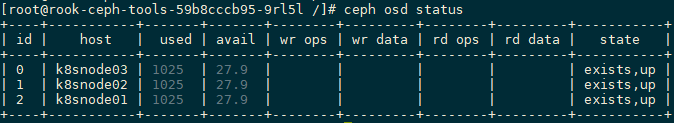

2.8 Testing Rook

[root@k8smaster01 ceph]# kubectl -n rook-ceph exec -it $(kubectl -n rook-ceph get pod -l "app=rook-ceph-tools" -o jsonpath='{.items[0].metadata.name}') bash

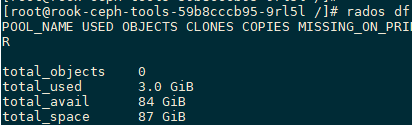

[root@rook-ceph-tools-59b8cccb95-9rl5l /]# ceph status #show the Ceph status

[root@rook-ceph-tools-59b8cccb95-9rl5l /]# ceph osd status

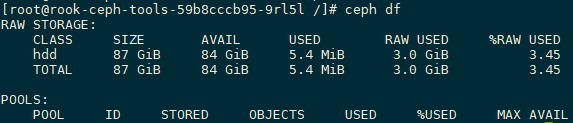

[root@rook-ceph-tools-59b8cccb95-9rl5l /]# ceph df

[root@rook-ceph-tools-59b8cccb95-9rl5l /]# rados df

[root@rook-ceph-tools-59b8cccb95-9rl5l /]# ceph auth ls #list all Ceph keyrings

[root@rook-ceph-tools-59b8cccb95-9rl5l /]# ceph version

ceph version 14.2.4 (75f4de193b3ea58512f204623e6c5a16e6c1e1ba) nautilus (stable)

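Note: for more on managing Ceph, see "008. RHCS: Managing a Ceph Storage Cluster". The toolbox also supports standalone ceph commands, for example ceph osd pool create ceph-test 512 to create a pool. In a real Kubernetes Rook deployment, however, operating on the underlying Ceph directly is not recommended, because it can leave the Kubernetes layer with an inconsistent view of the data.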
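For read-only checks there is no need to open an interactive shell in the toolbox. The sketch below runs `ceph health` through `kubectl exec` and turns the result into an exit code; the function names are hypothetical, and it assumes the toolbox Pod label `app=rook-ceph-tools` used above.

```shell
# Hypothetical helper: name of the first toolbox Pod.
toolbox_pod() {
  kubectl -n rook-ceph get pod -l app=rook-ceph-tools \
    -o jsonpath='{.items[0].metadata.name}'
}

# Hypothetical helper: run `ceph health` inside the toolbox, non-interactively.
ceph_health() {
  kubectl -n rook-ceph exec "$(toolbox_pod)" -- ceph health
}

# Succeeds only when the reported health starts with HEALTH_OK.
is_health_ok() {
  case "$(ceph_health)" in
    HEALTH_OK*) return 0 ;;
    *) return 1 ;;
  esac
}
```

A check such as `is_health_ok || echo "cluster needs attention"` only reads state, so it does not conflict with the advice to leave Ceph management to Rook.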

2.9 Copying the Key and Config

For convenience, the Ceph keyring and config can also be copied to the master node, so that the Rook Ceph cluster can be inspected from a host outside Kubernetes. The commands omit -it so that no TTY is allocated and the redirected files do not pick up carriage returns.

[root@k8smaster01 ~]# kubectl -n rook-ceph exec $(kubectl -n rook-ceph get pod -l "app=rook-ceph-tools" -o jsonpath='{.items[0].metadata.name}') -- cat /etc/ceph/ceph.conf > /etc/ceph/ceph.conf

[root@k8smaster01 ~]# kubectl -n rook-ceph exec $(kubectl -n rook-ceph get pod -l "app=rook-ceph-tools" -o jsonpath='{.items[0].metadata.name}') -- cat /etc/ceph/keyring > /etc/ceph/keyring

[root@k8smaster01 ceph]# tee /etc/yum.repos.d/ceph.repo <<-'EOF'

[Ceph]

name=Ceph packages for $basearch

baseurl=http://mirrors.aliyun.com/ceph/rpm-nautilus/el7/$basearch

enabled=1

gpgcheck=0

type=rpm-md

gpgkey=https://mirrors.aliyun.com/ceph/keys/release.asc

priority=1

[Ceph-noarch]

name=Ceph noarch packages

baseurl=http://mirrors.aliyun.com/ceph/rpm-nautilus/el7/noarch

enabled=1

gpgcheck=0

type=rpm-md

gpgkey=https://mirrors.aliyun.com/ceph/keys/release.asc

priority=1

[ceph-source]

name=Ceph source packages

baseurl=http://mirrors.aliyun.com/ceph/rpm-nautilus/el7/SRPMS

enabled=1

gpgcheck=0

type=rpm-md

gpgkey=https://mirrors.aliyun.com/ceph/keys/release.asc

priority=1

EOF

[root@k8smaster01 ceph]# yum -y install ceph-common ceph-fuse #install the client

[root@k8smaster01 ~]# ceph status

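Note: the rpm-nautilus release should match the version shown in 2.8. For a Kubernetes-based Rook Ceph cluster, managing it directly with ceph commands is strongly discouraged, because this can cause inconsistencies. To use the Rook cluster, follow section 3 onward; ceph commands should be limited to simple cluster inspection.

3 Ceph Block Storage

3.1 Creating the StorageClass

Before block storage can be provisioned, a StorageClass and a storage pool must be created. Kubernetes needs both resources in order to interact with Rook and allocate persistent volumes (PVs).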

[root@k8smaster01 ceph]# kubectl create -f csi/rbd/storageclass.yaml

Explanation: the configuration file below creates a storage pool named replicapool and a StorageClass named rook-ceph-block.

    apiVersion: ceph.rook.io/v1
    kind: CephBlockPool
    metadata:
      name: replicapool
      namespace: rook-ceph
    spec:
      failureDomain: host
      replicated:
        size: 3
    ---
    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: rook-ceph-block
    provisioner: rook-ceph.rbd.csi.ceph.com
    parameters:
      clusterID: rook-ceph
      pool: replicapool
      imageFormat: "2"
      imageFeatures: layering
      csi.storage.k8s.io/provisioner-secret-name: rook-csi-rbd-provisioner
      csi.storage.k8s.io/provisioner-secret-namespace: rook-ceph
      csi.storage.k8s.io/node-stage-secret-name: rook-csi-rbd-node
      csi.storage.k8s.io/node-stage-secret-namespace: rook-ceph
      csi.storage.k8s.io/fstype: ext4
    reclaimPolicy: Delete

[root@k8smaster01 ceph]# kubectl get storageclasses.storage.k8s.io

NAME PROVISIONER AGE

rook-ceph-block rook-ceph.rbd.csi.ceph.com 8m44s

3.2 Creating the PVC

[root@k8smaster01 ceph]# kubectl create -f csi/rbd/pvc.yaml

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: block-pvc
    spec:
      storageClassName: rook-ceph-block
      accessModes:
      - ReadWriteOnce
      resources:
        requests:
          storage: 200Mi

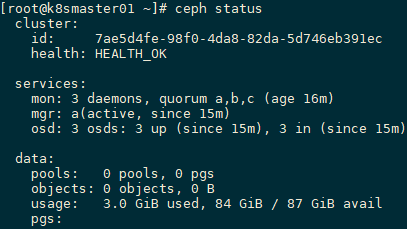

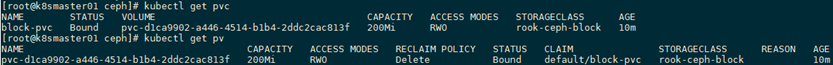

[root@k8smaster01 ceph]# kubectl get pvc

[root@k8smaster01 ceph]# kubectl get pv

Explanation: this creates the PVC, whose storageClassName is rook-ceph-block, the StorageClass backed by the Rook Ceph cluster.
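Provisioning is asynchronous, so a Pod created immediately after the PVC may wait on an unbound claim. The following is a small polling sketch, assuming the claim name `block-pvc` from above; `wait_for_pvc_bound` and the `POLL_INTERVAL` variable are hypothetical names introduced here.

```shell
# Hypothetical helper: poll a PVC until its phase is Bound.
# $1 = PVC name, $2 = maximum number of polls (default 30).
# POLL_INTERVAL (seconds between polls, default 2) is an assumed knob.
wait_for_pvc_bound() {
  local pvc="$1" tries="${2:-30}" phase="" i=0
  while [ "$i" -lt "$tries" ]; do
    phase=$(kubectl get pvc "$pvc" -o jsonpath='{.status.phase}')
    if [ "$phase" = "Bound" ]; then
      return 0
    fi
    i=$((i + 1))
    sleep "${POLL_INTERVAL:-2}"
  done
  echo "PVC $pvc is still in phase '$phase'" >&2
  return 1
}
```

Running `wait_for_pvc_bound block-pvc` before creating the consuming Pod makes a stuck provisioner show up as an explicit error instead of a Pod that stays Pending.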

3.3 Consuming the Block Device

[root@k8smaster01 ceph]# vi rookpod01.yaml

    apiVersion: v1
    kind: Pod
    metadata:
      name: rookpod01
    spec:
      restartPolicy: OnFailure
      containers:
      - name: test-container
        image: busybox
        volumeMounts:
        - name: block-pvc
          mountPath: /var/test
        command: ['sh', '-c', 'echo "Hello World" > /var/test/data; exit 0']
      volumes:
      - name: block-pvc
        persistentVolumeClaim:
          claimName: block-pvc

[root@k8smaster01 ceph]# kubectl create -f rookpod01.yaml

[root@k8smaster01 ceph]# kubectl get pod

NAME READY STATUS RESTARTS AGE

rookpod01 0/1 Completed 0 5m35s

Explanation: this creates the Pod above, which mounts the PVC created in 3.2 and runs to completion.

3.4 Testing Persistence

[root@k8smaster01 ceph]# kubectl delete pods rookpod01 #delete rookpod01

[root@k8smaster01 ceph]# vi rookpod02.yaml

    apiVersion: v1
    kind: Pod
    metadata:
      name: rookpod02
    spec:
      restartPolicy: OnFailure
      containers:
      - name: test-container
        image: busybox
        volumeMounts:
        - name: block-pvc
          mountPath: /var/test
        command: ['sh', '-c', 'cat /var/test/data; exit 0']
      volumes:
      - name: block-pvc
        persistentVolumeClaim:
          claimName: block-pvc

[root@k8smaster01 ceph]# kubectl create -f rookpod02.yaml

[root@k8smaster01 ceph]# kubectl logs rookpod02 test-container

Hello World

Explanation: rookpod02 mounts the same PVC and reads back the file written by rookpod01, which confirms that the data persisted.

Note: for more on Ceph block devices, see "003. RHCS: Using RBD Block Storage".

4 Ceph Object Storage

4.1 Creating the CephObjectStore

Before object storage can be provided, the supporting services must be created. The default YAML provided upstream deploys the CephObjectStore for object storage.

[root@k8smaster01 ceph]# kubectl create -f object.yaml

    apiVersion: ceph.rook.io/v1
    kind: CephObjectStore
    metadata:
      name: my-store
      namespace: rook-ceph
    spec:
      metadataPool:
        failureDomain: host
        replicated:
          size: 3
      dataPool:
        failureDomain: host
        replicated:
          size: 3
      preservePoolsOnDelete: false
      gateway:
        type: s3
        sslCertificateRef:
        port: 80
        securePort:
        instances: 1
        placement:
        annotations:
        resources:

[root@k8smaster01 ceph]# kubectl -n rook-ceph get pod -l app=rook-ceph-rgw #an rgw Pod is created once deployment completes

NAME READY STATUS RESTARTS AGE

rook-ceph-rgw-my-store-a-6bd6c797c4-7dzjr 1/1 Running 0 19s

4.2 Creating the StorageClass

The default YAML provided upstream deploys the StorageClass for object storage.

[root@k8smaster01 ceph]# kubectl create -f storageclass-bucket-delete.yaml

    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: rook-ceph-delete-bucket
    provisioner: ceph.rook.io/bucket
    reclaimPolicy: Delete
    parameters:
      objectStoreName: my-store
      objectStoreNamespace: rook-ceph
      region: us-east-1

[root@k8smaster01 ceph]# kubectl get sc

4.3 Creating the Bucket

The default YAML provided upstream creates a bucket in the object store.

[root@k8smaster01 ceph]# kubectl create -f object-bucket-claim-delete.yaml

    apiVersion: objectbucket.io/v1alpha1
    kind: ObjectBucketClaim
    metadata:
      name: ceph-delete-bucket
    spec:
      generateBucketName: ceph-bkt
      storageClassName: rook-ceph-delete-bucket

4.4 Setting the Object Store Access Information

[root@k8smaster01 ceph]# kubectl -n default get cm ceph-delete-bucket -o yaml | grep BUCKET_HOST | awk '{print $2}'

rook-ceph-rgw-my-store.rook-ceph

[root@k8smaster01 ceph]# kubectl -n rook-ceph get svc rook-ceph-rgw-my-store

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

rook-ceph-rgw-my-store ClusterIP 10.102.165.187 <none> 80/TCP 7m34s

[root@k8smaster01 ceph]# export AWS_HOST=$(kubectl -n default get cm ceph-delete-bucket -o yaml | grep BUCKET_HOST | awk '{print $2}')

[root@k8smaster01 ceph]# export AWS_ACCESS_KEY_ID=$(kubectl -n default get secret ceph-delete-bucket -o yaml | grep AWS_ACCESS_KEY_ID | awk '{print $2}' | base64 --decode)

[root@k8smaster01 ceph]# export AWS_SECRET_ACCESS_KEY=$(kubectl -n default get secret ceph-delete-bucket -o yaml | grep AWS_SECRET_ACCESS_KEY | awk '{print $2}' | base64 --decode)

[root@k8smaster01 ceph]# export AWS_ENDPOINT='10.102.165.187'

[root@k8smaster01 ceph]# echo '10.102.165.187 rook-ceph-rgw-my-store.rook-ceph' >> /etc/hosts
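The exports above hardcode the ClusterIP and parse YAML with grep and awk. As an alternative sketch, the same values can be read with `-o jsonpath`, which avoids depending on the YAML layout. The function names are hypothetical, and the `BUCKET_NAME` key is assumed to be present in the ConfigMap generated for the ObjectBucketClaim.

```shell
# Hypothetical helper: read one key from the ConfigMap or Secret that the
# ObjectBucketClaim created. $1 = cm or secret, $2 = key under .data
bucket_field() {
  kubectl -n default get "$1" ceph-delete-bucket -o jsonpath="{.data.$2}"
}

# Hypothetical helper: ClusterIP of the rgw Service, instead of a literal IP.
rgw_cluster_ip() {
  kubectl -n rook-ceph get svc rook-ceph-rgw-my-store \
    -o jsonpath='{.spec.clusterIP}'
}

# Export the same variables as the commands above. Secret values are
# base64-encoded, ConfigMap values are not.
load_bucket_env() {
  AWS_HOST=$(bucket_field cm BUCKET_HOST)
  BUCKET_NAME=$(bucket_field cm BUCKET_NAME)   # assumed key, see lead-in
  AWS_ACCESS_KEY_ID=$(bucket_field secret AWS_ACCESS_KEY_ID | base64 --decode)
  AWS_SECRET_ACCESS_KEY=$(bucket_field secret AWS_SECRET_ACCESS_KEY | base64 --decode)
  AWS_ENDPOINT=$(rgw_cluster_ip)
  export AWS_HOST BUCKET_NAME AWS_ACCESS_KEY_ID AWS_SECRET_ACCESS_KEY AWS_ENDPOINT
}
```

After `load_bucket_env`, the generated bucket name is available as `$BUCKET_NAME`, so it does not have to be copied by hand into later commands.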

4.5 Testing Access

[root@k8smaster01 ceph]# radosgw-admin bucket list #list buckets

[root@k8smaster01 ceph]# yum --assumeyes install s3cmd #install the S3 client

[root@k8smaster01 ceph]# echo "Hello Rook" > /tmp/rookObj #create a test file

[root@k8smaster01 ceph]# s3cmd put /tmp/rookObj --no-ssl --host=${AWS_HOST} --host-bucket= s3://ceph-bkt-377bf96f-aea8-4838-82bc-2cb2c16cccfb/test.txt #test an upload to the bucket

Note: for more on using Rook object storage, such as creating users, see: https://rook.io/docs/rook/v1.1/ceph-object.html.

5 Ceph File Storage

5.1 Creating the CephFilesystem

Ceph does not deploy CephFS support by default. The default YAML provided upstream deploys the filesystem for file storage.

[root@k8smaster01 ceph]# kubectl create -f filesystem.yaml

    apiVersion: ceph.rook.io/v1
    kind: CephFilesystem
    metadata:
      name: myfs
      namespace: rook-ceph
    spec:
      metadataPool:
        replicated:
          size: 3
      dataPools:
      - failureDomain: host
        replicated:
          size: 3
      preservePoolsOnDelete: true
      metadataServer:
        activeCount: 1
        activeStandby: true
        placement:
          podAntiAffinity:
            requiredDuringSchedulingIgnoredDuringExecution:
            - labelSelector:
                matchExpressions:
                - key: app
                  operator: In
                  values:
                  - rook-ceph-mds
              topologyKey: kubernetes.io/hostname
        annotations:
        resources:

[root@k8smaster01 ceph]# kubectl get cephfilesystems.ceph.rook.io -n rook-ceph

NAME ACTIVEMDS AGE

myfs 1 27s

5.2 Creating the StorageClass

[root@k8smaster01 ceph]# kubectl create -f csi/cephfs/storageclass.yaml

The default YAML provided upstream deploys the StorageClass for file storage.

[root@k8smaster01 ceph]# vi csi/cephfs/storageclass.yaml

    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: csi-cephfs
    provisioner: rook-ceph.cephfs.csi.ceph.com
    parameters:
      clusterID: rook-ceph
      fsName: myfs
      pool: myfs-data0
      csi.storage.k8s.io/provisioner-secret-name: rook-csi-cephfs-provisioner
      csi.storage.k8s.io/provisioner-secret-namespace: rook-ceph
      csi.storage.k8s.io/node-stage-secret-name: rook-csi-cephfs-node
      csi.storage.k8s.io/node-stage-secret-namespace: rook-ceph
    reclaimPolicy: Delete
    mountOptions:

[root@k8smaster01 ceph]# kubectl get sc

NAME PROVISIONER AGE

csi-cephfs rook-ceph.cephfs.csi.ceph.com 10m

5.3 Creating the PVC

[root@k8smaster01 ceph]# vi rookpvc03.yaml

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: cephfs-pvc
    spec:
      storageClassName: csi-cephfs
      accessModes:
      - ReadWriteOnce
      resources:
        requests:
          storage: 200Mi

[root@k8smaster01 ceph]# kubectl create -f rookpvc03.yaml

[root@k8smaster01 ceph]# kubectl get pv

[root@k8smaster01 ceph]# kubectl get pvc

5.4 Consuming the PVC

[root@k8smaster01 ceph]# vi rookpod03.yaml

    ---
    apiVersion: v1
    kind: Pod
    metadata:
      name: csicephfs-demo-pod
    spec:
      containers:
      - name: web-server
        image: nginx
        volumeMounts:
        - name: mypvc
          mountPath: /var/lib/www/html
      volumes:
      - name: mypvc
        persistentVolumeClaim:
          claimName: cephfs-pvc
          readOnly: false

[root@k8smaster01 ceph]# kubectl create -f rookpod03.yaml

[root@k8smaster01 ceph]# kubectl get pods

NAME READY STATUS RESTARTS AGE

csicephfs-demo-pod 1/1 Running 0 24s

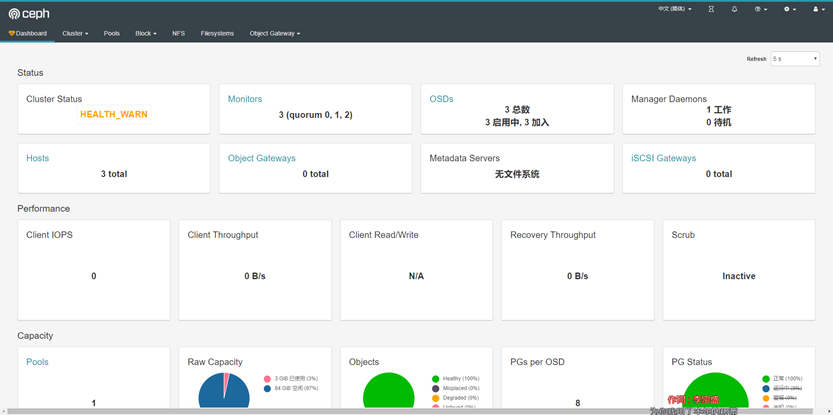

六 设置dashboard

6.1 部署Node SVC

步骤2.4已创建dashboard,但仅使用clusterIP暴露服务,使用如下官方提供的默认yaml可部署外部nodePort方式暴露服务的dashboard。

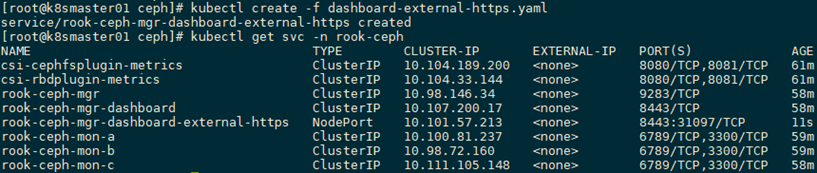

[root@k8smaster01 ceph]# kubectl create -f dashboard-external-https.yaml

[root@k8smaster01 ceph]# vi dashboard-external-https.yaml

1 apiVersion: v1 2 kind: Service 3 metadata: 4 name: rook-ceph-mgr-dashboard-external-https 5 namespace: rook-ceph 6 labels: 7 app: rook-ceph-mgr 8 rook_cluster: rook-ceph 9 spec: 10 ports: 11 - name: dashboard 12 port: 8443 13 protocol: TCP 14 targetPort: 8443 15 selector: 16 app: rook-ceph-mgr 17 rook_cluster: rook-ceph 18 sessionAffinity: None 19 type: NodePort

[root@k8smaster01 ceph]# kubectl get svc -n rook-ceph

6.2 Verification

kubectl -n rook-ceph get secret rook-ceph-dashboard-password -o jsonpath='{.data.password}' | base64 --decode #get the initial password

Open in a browser: https://172.24.8.71:31097

Username: admin. Password: the value retrieved above.
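The NodePort (31097 here) is assigned by Kubernetes and will differ between clusters. A minimal sketch for building the URL from the Service created in 6.1; `dashboard_url` is a hypothetical helper, and the node IP is passed in by the caller.

```shell
# Hypothetical helper: print the dashboard URL for a given node IP by
# reading the nodePort that Kubernetes assigned to the external Service.
dashboard_url() {
  local node_ip="$1" port
  port=$(kubectl -n rook-ceph get svc rook-ceph-mgr-dashboard-external-https \
    -o jsonpath='{.spec.ports[0].nodePort}')
  echo "https://${node_ip}:${port}"
}
```

For example, `dashboard_url 172.24.8.71` prints the address to open in the browser for this lab's node.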

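7 Cluster Management

7.1 Changing the Configuration

The Ceph cluster's configuration parameters are generated when the Cluster is created. To change parameters after deployment, proceed as follows: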

[root@k8smaster01 ceph]# kubectl -n rook-ceph get configmap rook-config-override -o yaml #view the parameters

[root@k8snode02 ~]# cat /var/lib/rook/rook-ceph/rook-ceph.config #can also be viewed on any node

[root@k8smaster01 ceph]# kubectl -n rook-ceph edit configmap rook-config-override -o yaml #edit the parameters

    ……
    apiVersion: v1
    data:
      config: |
        [global]
        osd pool default size = 2
    ……

Restart the Ceph components one at a time:

[root@k8smaster01 ceph]# kubectl -n rook-ceph delete pod rook-ceph-mgr-a-5699bb7984-kpxgp

[root@k8smaster01 ceph]# kubectl -n rook-ceph delete pod rook-ceph-mon-a-85698dfff9-w5l8c

[root@k8smaster01 ceph]# kubectl -n rook-ceph delete pod rook-ceph-mgr-a-d58847d5-dj62p

[root@k8smaster01 ceph]# kubectl -n rook-ceph delete pod rook-ceph-mon-b-76559bf966-652nl

[root@k8smaster01 ceph]# kubectl -n rook-ceph delete pod rook-ceph-mon-c-74dd86589d-s84cz

Caution: delete the ceph-mon and ceph-osd Pods one by one, waiting for the Ceph cluster to return to HEALTH_OK before deleting the next.
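The one-by-one rule can be scripted so that each delete is followed by a health wait. This is a sketch under stated assumptions, not a Rook-provided procedure: the function names and `POLL_INTERVAL` are hypothetical, health is read through the toolbox Pod, and a freshly deleted daemon may take a moment to be reflected in `ceph health`, so a generous poll interval is advisable.

```shell
# Hypothetical helper: run `ceph health` in the toolbox Pod.
ceph_health() {
  local tools
  tools=$(kubectl -n rook-ceph get pod -l app=rook-ceph-tools \
    -o jsonpath='{.items[0].metadata.name}')
  kubectl -n rook-ceph exec "$tools" -- ceph health
}

# Hypothetical helper: poll until HEALTH_OK, at most $1 times (default 60).
wait_health_ok() {
  local tries="${1:-60}" i=0
  while [ "$i" -lt "$tries" ]; do
    case "$(ceph_health)" in
      HEALTH_OK*) return 0 ;;
    esac
    i=$((i + 1))
    sleep "${POLL_INTERVAL:-10}"
  done
  return 1
}

# Delete every Pod matching a label, one at a time, and stop at the first
# Pod after which the cluster does not return to HEALTH_OK.
rolling_delete() {
  local label="$1" pod
  for pod in $(kubectl -n rook-ceph get pods -l "$label" -o name); do
    kubectl -n rook-ceph delete "$pod"
    if ! wait_health_ok; then
      echo "cluster not healthy after deleting $pod" >&2
      return 1
    fi
  done
}
```

Under these assumptions, `rolling_delete app=rook-ceph-mon` followed by `rolling_delete app=rook-ceph-osd` mirrors the manual sequence above.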

Note: for more Rook configuration parameters, see: https://rook.io/docs/rook/v1.1/.

7.2 Creating a Pool

Pools in a Rook Ceph cluster should be created the Kubernetes way, rather than with ceph commands in the toolbox.

The default YAML provided upstream deploys a pool.

[root@k8smaster01 ceph]# kubectl create -f pool.yaml

    apiVersion: ceph.rook.io/v1
    kind: CephBlockPool
    metadata:
      name: replicapool2
      namespace: rook-ceph
    spec:
      failureDomain: host
      replicated:
        size: 3
      annotations:

7.3 Deleting a Pool

[root@k8smaster01 ceph]# kubectl delete -f pool.yaml

Note: for more on pool management, such as erasure-coded pools, see: https://rook.io/docs/rook/v1.1/ceph-pool-crd.html.

7.4 Adding an OSD Node

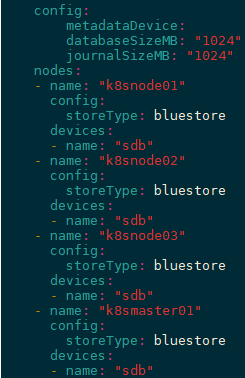

This step simulates adding sdb on k8smaster01 as an OSD.

[root@k8smaster01 ceph]# kubectl taint node k8smaster01 node-role.kubernetes.io/master- #allow Pods to be scheduled on this node

[root@k8smaster01 ceph]# kubectl label nodes k8smaster01 ceph-osd=enabled #set the label

[root@k8smaster01 ceph]# vi cluster.yaml #append the configuration for master01

    ……
        - name: "k8smaster01"
          config:
            storeType: bluestore
          devices:
          - name: "sdb"
    ……

[root@k8smaster01 ceph]# kubectl apply -f cluster.yaml

[root@k8smaster01 ceph]# kubectl -n rook-ceph get pod -o wide -w

ceph osd tree

7.5 Removing an OSD Node

[root@k8smaster01 ceph]# kubectl label nodes k8smaster01 ceph-osd- #remove the label

[root@k8smaster01 ceph]# vi cluster.yaml #remove the following master01 configuration

    ……
        - name: "k8smaster01"
          config:
            storeType: bluestore
          devices:
          - name: "sdb"
    ……

[root@k8smaster01 ceph]# kubectl apply -f cluster.yaml

[root@k8smaster01 ceph]# kubectl -n rook-ceph get pod -o wide -w

[root@k8smaster01 ceph]# rm -rf /var/lib/rook

7.6 Deleting the Cluster

For how to delete a Rook cluster completely and gracefully, see: https://github.com/rook/rook/blob/master/Documentation/ceph-teardown.md

7.7 Upgrading Rook

See: http://www.yangguanjun.com/2018/12/28/rook-ceph-practice-part2/.

More official documentation: https://rook.github.io/docs/rook/v1.1/

Recommended blog posts: http://www.yangguanjun.com/archives/

https://sealyun.com/post/rook/