The test environment is as follows:

| IP | Host | JDK | Linux | Hadoop | Role |
| --- | --- | --- | --- | --- | --- |
| 172.16.101.55 | sht-sgmhadoopnn-01 | 1.8.0_111 | CentOS release 6.5 | hadoop-2.7.3 | NameNode, SecondaryNameNode, ResourceManager |
| 172.16.101.58 | sht-sgmhadoopdn-01 | 1.8.0_111 | CentOS release 6.5 | hadoop-2.7.3 | DataNode, NodeManager |
| 172.16.101.59 | sht-sgmhadoopdn-02 | 1.8.0_111 | CentOS release 6.5 | hadoop-2.7.3 | DataNode, NodeManager |
| 172.16.101.60 | sht-sgmhadoopdn-03 | 1.8.0_111 | CentOS release 6.5 | hadoop-2.7.3 | DataNode, NodeManager |

1. Prepare the software

http://www-eu.apache.org/dist/hadoop/common/hadoop-2.7.3/hadoop-2.7.3.tar.gz

http://download.oracle.com/otn-pub/java/jdk/8u144-b01/090f390dda5b47b9b721c7dfaa008135/jdk-8u144-linux-x64.tar.gz

2. Prepare the system environment

2.1 Create the user and configure its environment variables

    # useradd -r -u 115 -g dba -G root -d /home/hduser hduser
    # echo 'admin123' | passwd --stdin hduser
    # cat /home/hduser/.bash_profile
    ..........
    export JAVA_HOME=/usr/java/jdk1.8.0_111_273
    export JRE_HOME=$JAVA_HOME/jre
    export HADOOP_HOME=/usr/local/hadoop-2.7.3
    export CLASSPATH=.:$JAVA_HOME/lib/tools.jar:$JAVA_HOME/lib/dt.jar:$JRE_HOME/lib
    export PATH=$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH:$HOME/bin

2.2 Extract Hadoop and the JDK

    # tar -zxf jdk-8u144-linux-x64.tar.gz -C /usr/java/
    # tar -zxf hadoop-2.7.3.tar.gz -C /usr/local/
    # chown -R hduser:dba /usr/local/hadoop-2.7.3/

2.3 Configure hostname-to-IP resolution on every host

    # cat /etc/hosts
    127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
    ::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
    172.16.101.55 sht-sgmhadoopnn-01
    172.16.101.58 sht-sgmhadoopdn-01
    172.16.101.59 sht-sgmhadoopdn-02
    172.16.101.60 sht-sgmhadoopdn-03
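A missing or mistyped hosts entry tends to surface later as a DataNode that cannot register. The sketch below checks a hosts file for the four mappings used in this post. `check_host_entry` and `check_cluster_hosts` are hypothetical helper names, not part of Hadoop or CentOS.

```shell
#!/bin/sh
# Sketch only: verify that a hosts file maps each cluster hostname to its IP.
# Both function names are made up for this example.

# Usage: check_host_entry <hosts-file> <ip> <hostname>
# Succeeds when a line whose first field is <ip> also lists <hostname>.
check_host_entry() {
    awk -v ip="$2" -v name="$3" '
        $1 == ip { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
        END { exit !found }
    ' "$1"
}

# Usage: check_cluster_hosts <hosts-file>
# Prints one OK/MISSING line per node and fails if any mapping is absent.
check_cluster_hosts() {
    hosts_file="$1"
    status=0
    while read -r ip name; do
        if check_host_entry "$hosts_file" "$ip" "$name"; then
            echo "OK      $ip $name"
        else
            echo "MISSING $ip $name"
            status=1
        fi
    done <<'EOF'
172.16.101.55 sht-sgmhadoopnn-01
172.16.101.58 sht-sgmhadoopdn-01
172.16.101.59 sht-sgmhadoopdn-02
172.16.101.60 sht-sgmhadoopdn-03
EOF
    return $status
}
```

Running `check_cluster_hosts /etc/hosts` on each node should report four `OK` lines if the file matches the listing above.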

2.4 Set up passwordless SSH trust between the NameNode and each DataNode

    $ ssh-keygen -t rsa
    $ ssh-copy-id hduser@sht-sgmhadoopnn-01
    $ ssh-copy-id hduser@sht-sgmhadoopdn-01
    $ ssh-copy-id hduser@sht-sgmhadoopdn-02
    $ ssh-copy-id hduser@sht-sgmhadoopdn-03
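The same key distribution can be written as a loop, followed by a check that each login really works without a password. This is a sketch that only prints the commands. The `NODES` variable and the `print_ssh_trust_cmds` function are assumptions introduced here. `ssh -o BatchMode=yes` makes ssh fail instead of prompting, which is what turns it into a test.

```shell
#!/bin/sh
# Sketch only: print the key-distribution and verification commands
# for every node. NODES and print_ssh_trust_cmds are hypothetical names.
NODES="sht-sgmhadoopnn-01 sht-sgmhadoopdn-01 sht-sgmhadoopdn-02 sht-sgmhadoopdn-03"

# Usage: print_ssh_trust_cmds <user>
print_ssh_trust_cmds() {
    trust_user="$1"
    # Step 1: copy the public key to every node (prompts for the password once per node).
    for node in $NODES; do
        echo "ssh-copy-id $trust_user@$node"
    done
    # Step 2: confirm key-based login; BatchMode=yes forbids password prompts.
    for node in $NODES; do
        echo "ssh -o BatchMode=yes $trust_user@$node hostname"
    done
}
```

To execute rather than print, pipe the output to a shell as the Hadoop user on the NameNode, for example `print_ssh_trust_cmds hduser | sh`.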

2.5 Synchronize time across the nodes

http://lythjq.blog.51cto.com/8902270/1603266

3. Hadoop configuration

3.1 Configure hadoop-env.sh

Change the JAVA_HOME line from

    export JAVA_HOME=${JAVA_HOME}

to

    export JAVA_HOME=/usr/java/jdk1.8.0_111
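If the change has to be repeated on several machines, it can be scripted. A minimal sketch follows, assuming the file contains a line starting with `export JAVA_HOME=` and that the new path contains no `|` or `&` characters. `set_java_home` is a hypothetical helper.

```shell
#!/bin/sh
# Sketch only: rewrite the JAVA_HOME line of a hadoop-env.sh style file.
# set_java_home is a made-up name; the path must not contain '|' or '&'.

# Usage: set_java_home <env-file> <java-home>
set_java_home() {
    env_file="$1"
    java_home="$2"
    tmp_file="$env_file.tmp.$$"
    # Write the edited copy first, then overwrite in place so the
    # original file keeps its owner and permissions.
    sed "s|^export JAVA_HOME=.*|export JAVA_HOME=$java_home|" "$env_file" > "$tmp_file" \
        && cat "$tmp_file" > "$env_file" \
        && rm -f "$tmp_file"
}
```

For this cluster the call would be `set_java_home /usr/local/hadoop-2.7.3/etc/hadoop/hadoop-env.sh /usr/java/jdk1.8.0_111`.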

3.2 Configure core-site.xml

    <configuration>
      <property>
        <name>hadoop.tmp.dir</name>
        <value>/usr/local/hadoop/data</value>
      </property>
      <property>
        <name>fs.defaultFS</name>
        <value>hdfs://sht-sgmhadoopnn-01:9000</value>
      </property>
      <property>
        <name>hadoop.http.staticuser.user</name>
        <value>root</value>
      </property>
      <property>
        <name>fs.trash.interval</name>
        <value>1440</value>
      </property>
      <property>
        <name>fs.trash.checkpoint.interval</name>
        <value>0</value>
      </property>
      <property>
        <name>io.file.buffer.size</name>
        <value>65536</value>
      </property>
      <property>
        <name>hadoop.proxyuser.hduser.groups</name>
        <value>*</value>
      </property>
      <property>
        <name>hadoop.proxyuser.hduser.hosts</name>
        <value>*</value>
      </property>
    </configuration>
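Before copying the files to other nodes it helps to read a value back and confirm it is what was intended. On a machine with Hadoop installed, `hdfs getconf -confKey fs.defaultFS` does this. The sketch below is a plain-shell stand-in for use without Hadoop. `get_prop` is a hypothetical helper that assumes each `<value>` directly follows its `<name>`, as in the files in this post. The dots in the property name are treated as regex wildcards, so it is only a rough matcher.

```shell
#!/bin/sh
# Sketch only: print the value of one property from a Hadoop *-site.xml file.
# get_prop is a made-up helper, not a Hadoop command.

# Usage: get_prop <xml-file> <property-name>
get_prop() {
    # Flatten the file to one line so a name/value pair split across
    # lines is still matched, then extract the text inside <value>.
    tr '\n' ' ' < "$1" \
        | grep -o "<name>$2</name>[[:space:]]*<value>[^<]*</value>" \
        | sed 's|.*<value>\(.*\)</value>|\1|'
}
```

For example, `get_prop core-site.xml fs.defaultFS` should print `hdfs://sht-sgmhadoopnn-01:9000` for the file above.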

3.2 Configure hdfs-site.xml

    <configuration>
      <property>
        <name>dfs.webhdfs.enabled</name>
        <value>true</value>
      </property>
      <property>
        <name>dfs.permissions.enabled</name>
        <value>false</value>
      </property>
      <property>
        <name>dfs.namenode.http-address</name>
        <value>172.16.101.55:50070</value>
      </property>
      <property>
        <name>dfs.namenode.https-address</name>
        <value>172.16.101.55:50470</value>
      </property>
      <property>
        <name>dfs.namenode.secondary.http-address</name>
        <value>172.16.101.55:50090</value>
      </property>
      <property>
        <name>dfs.namenode.secondary.https-address</name>
        <value>172.16.101.55:50091</value>
      </property>
      <property>
        <name>dfs.datanode.address</name>
        <value>0.0.0.0:50010</value>
      </property>
      <property>
        <name>dfs.datanode.ipc.address</name>
        <value>0.0.0.0:50020</value>
      </property>
      <property>
        <name>dfs.datanode.http.address</name>
        <value>0.0.0.0:50075</value>
      </property>
      <property>
        <name>dfs.datanode.https.address</name>
        <value>0.0.0.0:50475</value>
      </property>
      <property>
        <name>dfs.namenode.name.dir</name>
        <value>/usr/local/hadoop/data/dfs/name</value>
      </property>
      <property>
        <name>dfs.namenode.edits.dir</name>
        <value>${dfs.namenode.name.dir}</value>
      </property>
      <property>
        <name>dfs.datanode.data.dir</name>
        <value>/usr/local/hadoop/data/dfs/data</value>
      </property>
      <property>
        <name>dfs.namenode.checkpoint.dir</name>
        <value>/usr/local/hadoop/data/dfs/namesecondary</value>
      </property>
      <property>
        <name>dfs.namenode.checkpoint.period</name>
        <value>3600</value>
      </property>
      <property>
        <name>dfs.namenode.checkpoint.edits.dir</name>
        <value>/usr/local/hadoop/data/dfs/namesecondary</value>
      </property>
      <property>
        <name>dfs.replication</name>
        <value>3</value>
      </property>
      <property>
        <name>dfs.blocksize</name>
        <value>134217728</value>
      </property>
      <property>
        <name>dfs.datanode.handler.count</name>
        <value>100</value>
      </property>
    </configuration>
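Two of these values are easier to reason about with a little arithmetic: `dfs.blocksize` of 134217728 bytes is 128 MiB, and `dfs.replication` of 3 means every block is stored three times. The sketch below estimates how many blocks a file occupies and how much raw disk it consumes. `block_count` and `raw_bytes` are hypothetical helpers, and the defaults are taken from the configuration above.

```shell
#!/bin/sh
# Sketch only: back-of-the-envelope HDFS sizing from dfs.blocksize and
# dfs.replication. Both function names are made up for this example.

# Usage: block_count <file-size-bytes> [block-size-bytes]
# Number of blocks, rounding up because the last block may be partial.
block_count() {
    size="$1"
    blocksize="${2:-134217728}"
    echo $(( (size + blocksize - 1) / blocksize ))
}

# Usage: raw_bytes <file-size-bytes> [replication]
# Bytes consumed across the cluster once every replica is written.
raw_bytes() {
    size="$1"
    replication="${2:-3}"
    echo $(( size * replication ))
}
```

With these settings a 1 GiB file (1073741824 bytes) splits into 8 blocks and takes 3 GiB of raw DataNode storage.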

3.3 Configure mapred-site.xml

    <configuration>
      <property>
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
      </property>
      <property>
        <name>mapreduce.jobtracker.http.address</name>
        <value>0.0.0.0:50030</value>
      </property>
      <property>
        <name>mapreduce.jobhistory.address</name>
        <value>0.0.0.0:10020</value>
      </property>
      <property>
        <name>mapreduce.jobhistory.webapp.address</name>
        <value>0.0.0.0:19888</value>
      </property>
    </configuration>

3.4 Configure yarn-site.xml

    <configuration>
      <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
      </property>
      <property>
        <name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>
        <value>org.apache.hadoop.mapred.ShuffleHandler</value>
      </property>
      <property>
        <name>yarn.resourcemanager.hostname</name>
        <value>172.16.101.55</value>
        <description>The hostname of the RM.</description>
      </property>
      <property>
        <name>yarn.resourcemanager.address</name>
        <value>${yarn.resourcemanager.hostname}:8032</value>
      </property>
      <property>
        <name>yarn.resourcemanager.scheduler.address</name>
        <value>${yarn.resourcemanager.hostname}:8030</value>
      </property>
      <property>
        <name>yarn.resourcemanager.webapp.address</name>
        <value>${yarn.resourcemanager.hostname}:8088</value>
      </property>
      <property>
        <name>yarn.resourcemanager.webapp.https.address</name>
        <value>${yarn.resourcemanager.hostname}:8090</value>
      </property>
      <property>
        <name>yarn.resourcemanager.resource-tracker.address</name>
        <value>${yarn.resourcemanager.hostname}:8031</value>
      </property>
      <property>
        <name>yarn.resourcemanager.admin.address</name>
        <value>${yarn.resourcemanager.hostname}:8033</value>
      </property>
      <property>
        <name>yarn.nodemanager.localizer.address</name>
        <value>0.0.0.0:8040</value>
      </property>
      <property>
        <name>yarn.nodemanager.webapp.address</name>
        <value>0.0.0.0:8042</value>
      </property>
      <property>
        <name>yarn.resourcemanager.connect.retry-interval.ms</name>
        <value>2000</value>
      </property>
      <property>
        <name>yarn.nodemanager.resource.memory-mb</name>
        <value>4096</value>
      </property>
      <property>
        <name>yarn.scheduler.minimum-allocation-mb</name>
        <value>1024</value>
      </property>
      <property>
        <name>yarn.scheduler.maximum-allocation-mb</name>
        <value>4096</value>
      </property>
    </configuration>
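The three memory settings interact: each NodeManager offers 4096 MB, and container requests are sized between 1024 MB and 4096 MB. The sketch below works through that arithmetic. It assumes the scheduler rounds a request up to a multiple of `yarn.scheduler.minimum-allocation-mb` and rejects anything above the maximum, which is my understanding of the default CapacityScheduler behaviour rather than something this post states. `normalize_request` and `containers_per_node` are hypothetical helpers.

```shell
#!/bin/sh
# Sketch only: container-memory arithmetic for the yarn-site.xml values above.
# Assumes requests are rounded up to a multiple of the minimum allocation.

# Usage: normalize_request <requested-mb> [min-mb] [max-mb]
# Prints the memory the container would be granted, or fails if over the maximum.
normalize_request() {
    req="$1"
    min="${2:-1024}"
    max="${3:-4096}"
    if [ "$req" -gt "$max" ]; then
        echo "request of ${req} MB exceeds maximum of ${max} MB" >&2
        return 1
    fi
    if [ "$req" -lt "$min" ]; then
        req="$min"
    fi
    echo $(( (req + min - 1) / min * min ))
}

# Usage: containers_per_node <node-memory-mb> <container-mb>
containers_per_node() {
    echo $(( $1 / $2 ))
}
```

Under these assumptions a 1500 MB request is granted 2048 MB, and each NodeManager fits at most four 1024 MB containers, or twelve across the three DataNodes.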

3.5 Configure the slaves file

sht-sgmhadoopdn-01

sht-sgmhadoopdn-02

sht-sgmhadoopdn-03

3.6 Sync the Hadoop configuration to the other nodes

$ scp -r /usr/local/hadoop-2.7.3/* hduser@sht-sgmhadoopdn-01:/usr/local/hadoop-2.7.3/

$ scp -r /usr/local/hadoop-2.7.3/* hduser@sht-sgmhadoopdn-02:/usr/local/hadoop-2.7.3/

$ scp -r /usr/local/hadoop-2.7.3/* hduser@sht-sgmhadoopdn-03:/usr/local/hadoop-2.7.3/
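Since the target hosts are already listed in the slaves file, the three copies can be generated from it instead of typed by hand. This is a sketch that only prints the commands. `print_sync_cmds` is a hypothetical helper, and the slaves file path in the usage note assumes the default layout of the hadoop-2.7.3 tarball.

```shell
#!/bin/sh
# Sketch only: print one scp command per host listed in a slaves file.
# print_sync_cmds is a made-up name for this example.

# Usage: print_sync_cmds <slaves-file> <hadoop-dir> <user>
print_sync_cmds() {
    slaves_file="$1"
    src_dir="$2"
    sync_user="$3"
    # The "|| [ -n ... ]" keeps a final line that lacks a trailing newline.
    while read -r host || [ -n "$host" ]; do
        # Skip blank lines and comments.
        case "$host" in
            ''|'#'*) continue ;;
        esac
        echo "scp -r $src_dir/* $sync_user@$host:$src_dir/"
    done < "$slaves_file"
}
```

For example, `print_sync_cmds /usr/local/hadoop-2.7.3/etc/hadoop/slaves /usr/local/hadoop-2.7.3 hduser` prints the three commands shown above; pipe the output to `sh` to run them.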

4. Format the NameNode

    $ hdfs namenode -format
    17/08/30 23:19:55 INFO namenode.NameNode: STARTUP_MSG:
    /************************************************************
    STARTUP_MSG: Starting NameNode
    STARTUP_MSG: host = sht-sgmhadoopnn-01/172.16.101.55
    STARTUP_MSG: args = [-format]
    STARTUP_MSG: version = 2.7.3
    STARTUP_MSG: classpath = /usr/local/hadoop-2.7.3/etc/hadoop:... (remaining jar entries under /usr/local/hadoop-2.7.3/share/hadoop omitted for readability)
    STARTUP_MSG: build = https://git-wip-us.apache.org/repos/asf/hadoop.git -r baa91f7c6bc9cb92be5982de4719c1c8af91ccff; compiled by 'root' on 2016-08-18T01:41Z
    STARTUP_MSG: java = 1.8.0_111
    ************************************************************/
    17/08/30 23:19:55 INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT]
    17/08/30 23:19:55 INFO namenode.NameNode: createNameNode [-format]
    17/08/30 23:19:56 WARN common.Util: Path /usr/local/hadoop-2.7.3/data/dfs/name should be specified as a URI in configuration files. Please update hdfs configuration.
    17/08/30 23:19:56 WARN common.Util: Path /usr/local/hadoop-2.7.3/data/dfs/name should be specified as a URI in configuration files. Please update hdfs configuration.
    Formatting using clusterid: CID-80464bc9-331a-4ebd-9c45-5c952ddaa4ef
    17/08/30 23:19:56 INFO namenode.FSNamesystem: No KeyProvider found.
    17/08/30 23:19:56 INFO namenode.FSNamesystem: fsLock is fair:true
    17/08/30 23:19:57 INFO blockmanagement.DatanodeManager: dfs.block.invalidate.limit=1000
    17/08/30 23:19:57 INFO blockmanagement.DatanodeManager: dfs.namenode.datanode.registration.ip-hostname-check=true
    17/08/30 23:19:57 INFO blockmanagement.BlockManager: dfs.namenode.startup.delay.block.deletion.sec is set to 000:00:00:00.000
    17/08/30 23:19:57 INFO blockmanagement.BlockManager: The block deletion will start around 2017 Aug 30 23:19:57
    17/08/30 23:19:57 INFO util.GSet: Computing capacity for map BlocksMap
    17/08/30 23:19:57 INFO util.GSet: VM type = 64-bit
    17/08/30 23:19:57 INFO util.GSet: 2.0% max memory 889 MB = 17.8 MB
    17/08/30 23:19:57 INFO util.GSet: capacity = 2^21 = 2097152 entries
    17/08/30 23:19:57 INFO blockmanagement.BlockManager: dfs.block.access.token.enable=false
    17/08/30 23:19:57 INFO blockmanagement.BlockManager: defaultReplication = 3
    17/08/30 23:19:57 INFO blockmanagement.BlockManager: maxReplication = 512
    17/08/30 23:19:57 INFO blockmanagement.BlockManager: minReplication = 1
    17/08/30 23:19:57 INFO blockmanagement.BlockManager: maxReplicationStreams = 2
    17/08/30 23:19:57 INFO blockmanagement.BlockManager: replicationRecheckInterval = 3000
    17/08/30 23:19:57 INFO blockmanagement.BlockManager: encryptDataTransfer = false
    17/08/30 23:19:57 INFO blockmanagement.BlockManager: maxNumBlocksToLog = 1000
    17/08/30 23:19:57 INFO namenode.FSNamesystem: fsOwner = hduser (auth:SIMPLE)
    17/08/30 23:19:57 INFO namenode.FSNamesystem: supergroup = supergroup
    17/08/30 23:19:57 INFO namenode.FSNamesystem: isPermissionEnabled = false
    17/08/30 23:19:57 INFO namenode.FSNamesystem: HA Enabled: false
    17/08/30 23:19:57 INFO namenode.FSNamesystem: Append Enabled: true
    17/08/30 23:19:57 INFO util.GSet: Computing capacity for map INodeMap
    17/08/30 23:19:57 INFO util.GSet: VM type = 64-bit
    17/08/30 23:19:57 INFO util.GSet: 1.0% max memory 889 MB = 8.9 MB
    17/08/30 23:19:57 INFO util.GSet: capacity = 2^20 = 1048576 entries
    17/08/30 23:19:57 INFO namenode.FSDirectory: ACLs enabled? false
    17/08/30 23:19:57 INFO namenode.FSDirectory: XAttrs enabled? true
    17/08/30 23:19:57 INFO namenode.FSDirectory: Maximum size of an xattr: 16384
    17/08/30 23:19:57 INFO namenode.NameNode: Caching file names occuring more than 10 times
    17/08/30 23:19:57 INFO util.GSet: Computing capacity for map cachedBlocks
    17/08/30 23:19:57 INFO util.GSet: VM type = 64-bit
    17/08/30 23:19:57 INFO util.GSet: 0.25% max memory 889 MB = 2.2 MB
    17/08/30 23:19:57 INFO util.GSet: capacity = 2^18 = 262144 entries
    17/08/30 23:19:57 INFO namenode.FSNamesystem: dfs.namenode.safemode.threshold-pct = 0.9990000128746033
    17/08/30 23:19:57 INFO namenode.FSNamesystem: dfs.namenode.safemode.min.datanodes = 0
    17/08/30 23:19:57 INFO namenode.FSNamesystem: dfs.namenode.safemode.extension = 30000
    17/08/30 23:19:57 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.window.num.buckets = 10
    17/08/30 23:19:57 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.num.users = 10
    17/08/30 23:19:57 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.windows.minutes = 1,5,25
    17/08/30 23:19:57 INFO namenode.FSNamesystem: Retry cache on namenode is enabled
    17/08/30 23:19:57 INFO namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is 600000 millis
    17/08/30 23:19:57 INFO util.GSet: Computing capacity for map NameNodeRetryCache
    17/08/30 23:19:57 INFO util.GSet: VM type = 64-bit
    17/08/30 23:19:57 INFO util.GSet: 0.029999999329447746% max memory 889 MB = 273.1 KB
    17/08/30 23:19:57 INFO util.GSet: capacity = 2^15 = 32768 entries
    17/08/30 23:19:58 INFO namenode.FSImage: Allocated new BlockPoolId: BP-402567071-172.16.101.55-1504106397983
    17/08/30 23:19:58 INFO common.Storage: Storage directory /usr/local/hadoop-2.7.3/data/dfs/name has been successfully formatted.
    17/08/30 23:19:58 INFO namenode.FSImageFormatProtobuf: Saving image file /usr/local/hadoop-2.7.3/data/dfs/name/current/fsimage.ckpt_0000000000000000000 using no compression
    17/08/30 23:19:58 INFO namenode.FSImageFormatProtobuf: Image file /usr/local/hadoop-2.7.3/data/dfs/name/current/fsimage.ckpt_0000000000000000000 of size 353 bytes saved in 0 seconds.
    17/08/30 23:19:58 INFO namenode.NNStorageRetentionManager: Going to retain 1 images with txid >= 0
    17/08/30 23:19:58 INFO util.ExitUtil: Exiting with status 0
    17/08/30 23:19:58 INFO namenode.NameNode: SHUTDOWN_MSG:
    /************************************************************
    SHUTDOWN_MSG: Shutting down NameNode at sht-sgmhadoopnn-01/172.16.101.55
    ************************************************************/
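Only two lines of that output really matter: the storage directory "has been successfully formatted" and the process is "Exiting with status 0". A small sketch that checks a saved copy of the output for both follows. `format_succeeded` is a hypothetical helper and `format.log` is an assumed file name.

```shell
#!/bin/sh
# Sketch only: decide from a saved log whether "hdfs namenode -format" succeeded.
# format_succeeded is a made-up name for this example.

# Usage: format_succeeded <log-file>
format_succeeded() {
    grep -q 'has been successfully formatted' "$1" \
        && grep -q 'Exiting with status 0' "$1"
}
```

One way to use it is `hdfs namenode -format 2>&1 | tee format.log`, then `format_succeeded format.log && echo "NameNode formatted"`. Formatting wipes existing NameNode metadata, so it should only be run on a new cluster.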

5. Start Hadoop

5.1 Start HDFS

    $ /usr/local/hadoop-2.7.3/sbin/start-dfs.sh
    Starting namenodes on [sht-sgmhadoopnn-01]
    sht-sgmhadoopnn-01: starting namenode, logging to /usr/local/hadoop-2.7.3/logs/hadoop-hduser-namenode-sht-sgmhadoopnn-01.out
    sht-sgmhadoopdn-02: starting datanode, logging to /usr/local/hadoop-2.7.3/logs/hadoop-hduser-datanode-sht-sgmhadoopdn-02.out
    sht-sgmhadoopdn-01: starting datanode, logging to /usr/local/hadoop-2.7.3/logs/hadoop-hduser-datanode-sht-sgmhadoopdn-01.out
    sht-sgmhadoopdn-03: starting datanode, logging to /usr/local/hadoop-2.7.3/logs/hadoop-hduser-datanode-sht-sgmhadoopdn-03.out
    Starting secondary namenodes [sht-sgmhadoopnn-01]
    sht-sgmhadoopnn-01: starting secondarynamenode, logging to /usr/local/hadoop-2.7.3/logs/hadoop-hduser-secondarynamenode-sht-sgmhadoopnn-01.out

5.2 Start YARN

    $ /usr/local/hadoop-2.7.3/sbin/start-yarn.sh
    starting yarn daemons
    starting resourcemanager, logging to /usr/local/hadoop-2.7.3/logs/yarn-hduser-resourcemanager-sht-sgmhadoopnn-01.out
    sht-sgmhadoopdn-02: starting nodemanager, logging to /usr/local/hadoop-2.7.3/logs/yarn-hduser-nodemanager-sht-sgmhadoopdn-02.out
    sht-sgmhadoopdn-03: starting nodemanager, logging to /usr/local/hadoop-2.7.3/logs/yarn-hduser-nodemanager-sht-sgmhadoopdn-03.out
    sht-sgmhadoopdn-01: starting nodemanager, logging to /usr/local/hadoop-2.7.3/logs/yarn-hduser-nodemanager-sht-sgmhadoopdn-01.out

5.3 Start the history server

    $ /usr/local/hadoop-2.7.3/sbin/mr-jobhistory-daemon.sh start historyserver
    starting historyserver, logging to /usr/local/hadoop-2.7.3/logs/mapred-hduser-historyserver-sht-sgmhadoopnn-01.out

5.4 Verify the daemons on each node

sht-sgmhadoopnn-01

    $ jps
    3842 Jps
    3762 JobHistoryServer
    2580 NameNode
    3237 ResourceManager
    2796 SecondaryNameNode

sht-sgmhadoopdn-01

    $ jps
    7809 DataNode
    8818 Jps
    7971 NodeManager

sht-sgmhadoopdn-02

    $ jps
    30817 DataNode
    31665 Jps
    30959 NodeManager

sht-sgmhadoopdn-03

    $ jps
    20050 NodeManager
    19878 DataNode
    20362 Jps
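Reading four `jps` listings by eye gets tedious on larger clusters. The sketch below compares `jps` output against the daemons a node is expected to run, following the role table at the top of this post. `missing_daemons` is a hypothetical helper that reads `jps` output on standard input.

```shell
#!/bin/sh
# Sketch only: report which expected daemons are absent from jps output.
# missing_daemons is a made-up name for this example.

# Usage: jps | missing_daemons <daemon-name>...
# Prints the missing names (space separated) and fails if there are any.
missing_daemons() {
    jps_output=$(cat)
    missing=""
    for daemon in "$@"; do
        # Compare the second column exactly, so that NameNode does not
        # match SecondaryNameNode.
        if ! printf '%s\n' "$jps_output" \
            | awk -v d="$daemon" '$2 == d { ok = 1 } END { exit !ok }'; then
            missing="$missing $daemon"
        fi
    done
    echo "${missing# }"
    [ -z "$missing" ]
}
```

On the master the check would be `jps | missing_daemons NameNode SecondaryNameNode ResourceManager JobHistoryServer`, and on each worker `jps | missing_daemons DataNode NodeManager`.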

6. Check that Hadoop is running

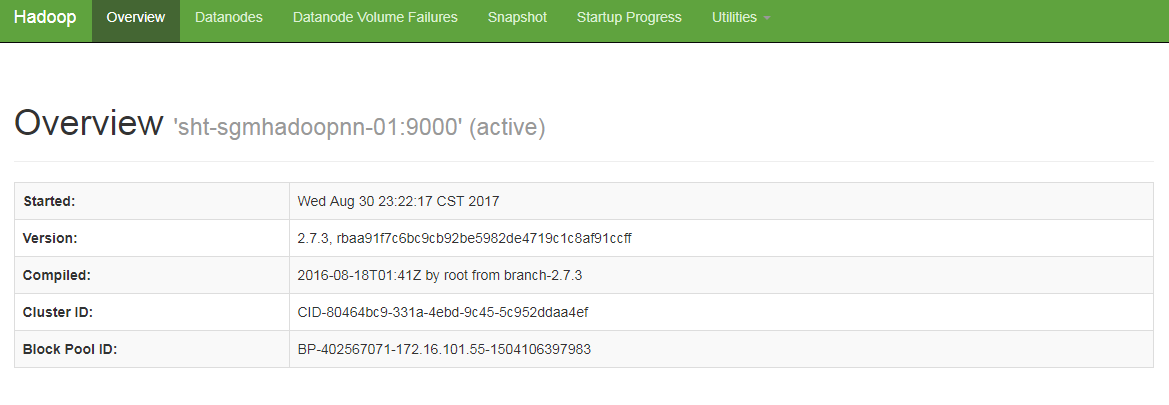

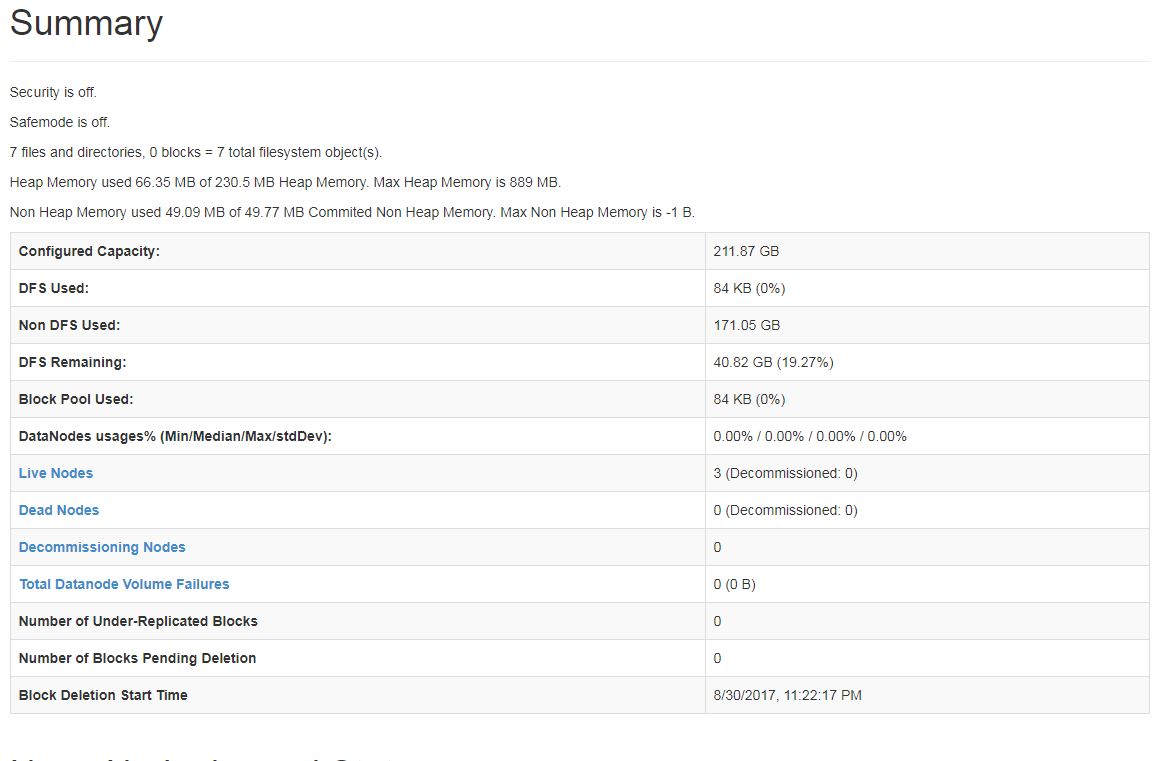

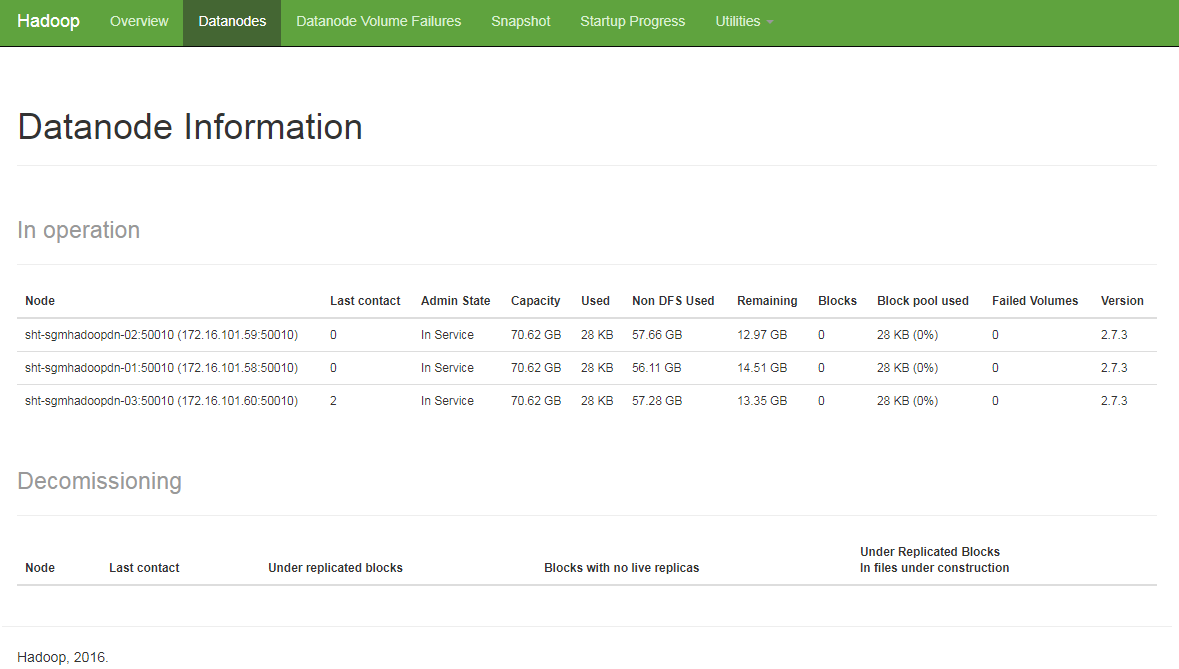

6.1 HDFS status

http://172.16.101.55:50070

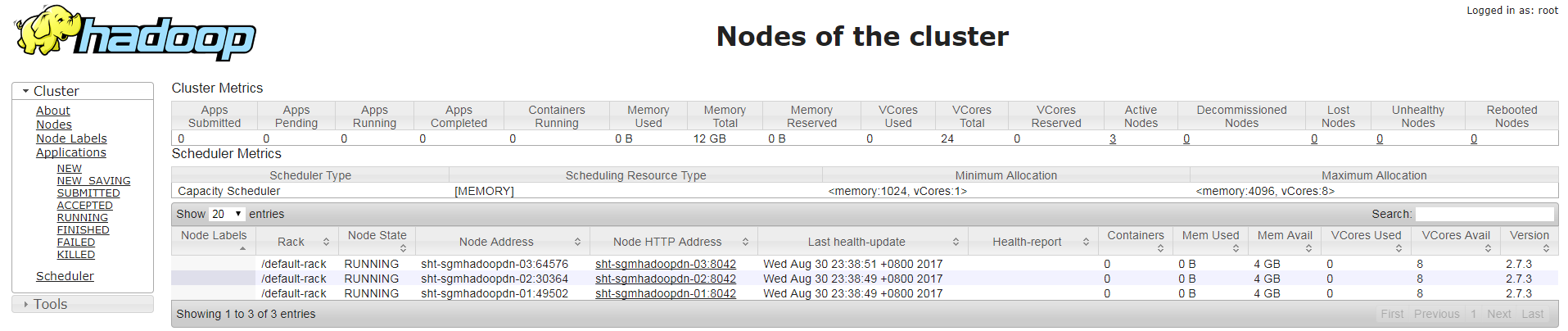

6.2 YARN status

http://172.16.101.55:8088