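Testing SSD through its Python interface is convenient enough, but deployment and integration really call for a C++ environment, so I had a go at building the C++ demo myself.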

I built it with cmake and wrote my own CMakeLists.txt. I hit a few small problems along the way, so here are brief notes on each problem and its fix.

All of this assumes that your local caffe environment already works and that all of its dependencies are installed.

1. error: ‘AnnotatedDatum’ has not been declared

    /home/jiawenhao/ssd/caffe/include/caffe/util/io.hpp:192:40: error: ‘AnnotatedDatum_AnnotationType’ does not name a type
         const std::string& encoding, const AnnotatedDatum_AnnotationType type,
    /home/jiawenhao/ssd/caffe/include/caffe/util/io.hpp:194:5: error: ‘AnnotatedDatum’ has not been declared
         AnnotatedDatum* anno_datum);
    /home/jiawenhao/ssd/caffe/include/caffe/util/io.hpp:199:11: error: ‘AnnotatedDatum_AnnotationType’ does not name a type
         const AnnotatedDatum_AnnotationType type, const string& labeltype,
    /home/jiawenhao/ssd/caffe/include/caffe/util/io.hpp:200:49: error: ‘AnnotatedDatum’ has not been declared
         const std::map<string, int>& name_to_label, AnnotatedDatum* anno_datum) {
    /home/jiawenhao/ssd/caffe/include/caffe/util/io.hpp:208:5: error: ‘AnnotatedDatum’ has not been declared
         AnnotatedDatum* anno_datum);
    /home/jiawenhao/ssd/caffe/include/caffe/util/io.hpp:212:5: error: ‘AnnotatedDatum’ has not been declared
         AnnotatedDatum* anno_datum);
    /home/jiawenhao/ssd/caffe/include/caffe/util/io.hpp:215:22: error: ‘AnnotatedDatum’ has not been declared
         const int width, AnnotatedDatum* anno_datum);
    /home/jiawenhao/ssd/caffe/include/caffe/util/io.hpp:218:30: error: ‘LabelMap’ has not been declared
         const string& delimiter, LabelMap* map);
    /home/jiawenhao/ssd/caffe/include/caffe/util/io.hpp:221:32: error: ‘LabelMap’ has not been declared
         bool include_background, LabelMap* map) {

I googled this one. https://github.com/BVLC/caffe/issues/5671 suggests that the problem lies with the caffe.pb.h file.

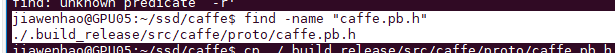

I ran find locally and the file did exist, so I created a proto directory under /ssd/caffe/include/caffe with mkdir and copied caffe.pb.h into it. That fixed the error.

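If you do not have caffe.pb.h at all, you can generate it with a command (it is produced from caffe.proto by protoc, the protobuf compiler); a quick search will turn up the exact steps.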
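Before copying or regenerating the header, it can help to confirm whether the compiler can see caffe/proto/caffe.pb.h at all. The sketch below is a small compile-time probe, not part of caffe: the macro SSD_HAVE_CAFFE_PB_H and the helper CaffeProtoHeaderStatus are made-up names for illustration. It relies on __has_include, which is standard in C++17 and, as far as I know, available as an extension in GCC 5 and later and in Clang.

```cpp
#include <string>

// Hypothetical probe macro: 1 if <caffe/proto/caffe.pb.h> is reachable
// through the current include paths, 0 otherwise (or if the compiler
// does not support __has_include).
#if defined(__has_include)
#  if __has_include(<caffe/proto/caffe.pb.h>)
#    define SSD_HAVE_CAFFE_PB_H 1
#  endif
#endif
#ifndef SSD_HAVE_CAFFE_PB_H
#  define SSD_HAVE_CAFFE_PB_H 0
#endif

// Hypothetical helper that turns the probe result into a readable message.
inline std::string CaffeProtoHeaderStatus() {
  return SSD_HAVE_CAFFE_PB_H
             ? "caffe/proto/caffe.pb.h found on the include path"
             : "caffe/proto/caffe.pb.h NOT found on the include path";
}
```

Compile it with the same include directories as ssd_detect and print CaffeProtoHeaderStatus(). Note that this only reports whether some caffe.pb.h is visible; it cannot tell whether that file was generated from the SSD fork's caffe.proto.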

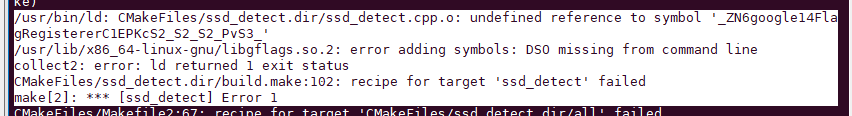

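2. A linking problem. The error message means that the program uses this library but the linker was not told about it. Adding libgflags.so to CMakeLists.txt fixes it; the same applies to any other .so library.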

    /usr/bin/ld: CMakeFiles/ssd_detect.dir/ssd_detect.cpp.o: undefined reference to symbol '_ZN6google14FlagRegistererC1EPKcS2_S2_S2_PvS3_'
    /usr/lib/x86_64-linux-gnu/libgflags.so.2: error adding symbols: DSO missing from command line
    collect2: error: ld returned 1 exit status
    CMakeFiles/ssd_detect.dir/build.make:102: recipe for target 'ssd_detect' failed
    make[2]: *** [ssd_detect] Error 1
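When the linker reports a mangled symbol like the one above, demangling it usually makes it obvious which library is missing. Here is a minimal sketch using abi::__cxa_demangle from <cxxabi.h>, which is specific to GCC and Clang; the helper name Demangle is my own. The c++filt command-line tool does the same job.

```cpp
#include <cxxabi.h>

#include <cstdlib>
#include <string>

// Hypothetical helper: returns the demangled form of an Itanium-ABI symbol,
// or the input unchanged if it cannot be demangled.
inline std::string Demangle(const char* mangled) {
  int status = 0;
  // With a null output buffer, __cxa_demangle allocates the result with
  // malloc, so the caller has to release it with free.
  char* demangled = abi::__cxa_demangle(mangled, nullptr, nullptr, &status);
  if (status != 0 || demangled == nullptr) {
    return mangled;
  }
  std::string result(demangled);
  std::free(demangled);
  return result;
}
```

Calling Demangle("_ZN6google14FlagRegistererC1EPKcS2_S2_S2_PvS3_") yields a constructor of google::FlagRegisterer, which belongs to gflags. That matches the libgflags.so.2 named on the next line of the linker output.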

Here is the content of CMakeLists.txt. It simply specifies the include paths and the paths of the libraries that are needed.

    cmake_minimum_required (VERSION 2.8)
    add_definitions(-std=c++11)
    project (ssd_detect)
    add_executable(ssd_detect ssd_detect.cpp)
    include_directories (
        /home/yourpath/ssd/caffe/include
        /usr/include
        /usr/local/include
        /usr/local/cuda/include
    )
    target_link_libraries(ssd_detect
        /home/yourpath/ssd/caffe/build/lib/libcaffe.so
        /usr/local/lib/libopencv_core.so
        /usr/local/lib/libopencv_imgproc.so
        /usr/local/lib/libopencv_imgcodecs.so
        /usr/local/lib/libopencv_highgui.so
        /usr/local/lib/libopencv_videoio.so
        /usr/lib/x86_64-linux-gnu/libgflags.so
        /usr/lib/x86_64-linux-gnu/libglog.so
        /usr/lib/x86_64-linux-gnu/libprotobuf.so
        /usr/lib/x86_64-linux-gnu/libboost_system.so
    )

3. The stock ssd_detect.cpp downloaded from GitHub does not contain using namespace std;

Once you add it, compilation fails with: error: reference to ‘shared_ptr’ is ambiguous

    ssd_detect.cpp:54:3: error: reference to ‘shared_ptr’ is ambiguous
       shared_ptr<Net<float> > net_;
    In file included from /usr/include/c++/5/bits/shared_ptr.h:52:0,
                     from /usr/include/c++/5/memory:82,
                     from /usr/include/boost/config/no_tr1/memory.hpp:21,
                     from /usr/include/boost/smart_ptr/shared_ptr.hpp:23,
                     from /usr/include/boost/shared_ptr.hpp:17,
                     from /home/jiawenhao/ssd/caffe/include/caffe/common.hpp:4,
                     from /home/jiawenhao/ssd/caffe/include/caffe/blob.hpp:8,
                     from /home/jiawenhao/ssd/caffe/include/caffe/caffe.hpp:7,
                     from /data/jiawenhao/ssdtest/ssd_detect.cpp:16:
    /usr/include/c++/5/bits/shared_ptr_base.h:345:11: note: candidates are: template<class _Tp> class std::shared_ptr
         class shared_ptr;
    In file included from /usr/include/boost/throw_exception.hpp:42:0,
                     from /usr/include/boost/smart_ptr/shared_ptr.hpp:27,
                     from /usr/include/boost/shared_ptr.hpp:17,
                     from /home/jiawenhao/ssd/caffe/include/caffe/common.hpp:4,
                     from /home/jiawenhao/ssd/caffe/include/caffe/blob.hpp:8,
                     from /home/jiawenhao/ssd/caffe/include/caffe/caffe.hpp:7,
                     from /data/jiawenhao/ssdtest/ssd_detect.cpp:16:
    /usr/include/boost/exception/exception.hpp:148:11: note: template<class T> class boost::shared_ptr
         class shared_ptr;
    /data/jiawenhao/ssdtest/ssd_detect.cpp: In constructor ‘Detector::Detector(const string&, const string&, const string&, const string&)’:
    /data/jiawenhao/ssdtest/ssd_detect.cpp:71:3: error: ‘net_’ was not declared in this scope
       net_.reset(new Net<float>(model_file, TEST));

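As the candidate list shows, the unqualified name now matches both std::shared_ptr and boost::shared_ptr (caffe uses the boost one). Qualifying the declaration of net_ with boost:: resolves it: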

boost::shared_ptr<Net<float> > net_;
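The ambiguity can be reproduced without caffe or boost. In the sketch below, the namespace otherlib is a hypothetical stand-in for boost, and Net, MakeNet and NetLayers are illustrative names only. It is a simplified model of the situation, not the actual caffe headers.

```cpp
#include <memory>

// Hypothetical stand-in for boost: a second library that also defines
// a class template called shared_ptr.
namespace otherlib {
template <class T>
class shared_ptr {
 public:
  explicit shared_ptr(T* p = nullptr) : p_(p) {}
  T* get() const { return p_.get(); }

 private:
  std::shared_ptr<T> p_;  // ownership is delegated to the standard pointer
};
}  // namespace otherlib

using namespace std;       // makes std::shared_ptr visible unqualified
using namespace otherlib;  // makes otherlib::shared_ptr visible unqualified

struct Net {
  int layers = 3;
};

// shared_ptr<Net> net_;  // would not compile: reference to 'shared_ptr' is ambiguous

// Qualifying the name tells the compiler which template is meant.
inline otherlib::shared_ptr<Net> MakeNet() {
  return otherlib::shared_ptr<Net>(new Net());
}

inline int NetLayers() { return MakeNet().get()->layers; }
```

Uncommenting the marked line should give the same kind of diagnostic as the log above. An alternative to qualifying the name is to drop using namespace std; and write std:: explicitly where needed, which is how the stock file avoids the clash.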

The modified ssd_detect.cpp source is as follows:

    // This is a demo code for using a SSD model to do detection.
    // The code is modified from examples/cpp_classification/classification.cpp.
    // Usage:
    //    ssd_detect [FLAGS] model_file weights_file list_file
    //
    // where model_file is the .prototxt file defining the network architecture, and
    // weights_file is the .caffemodel file containing the network parameters, and
    // list_file contains a list of image files with the format as follows:
    //    folder/img1.JPEG
    //    folder/img2.JPEG
    // list_file can also contain a list of video files with the format as follows:
    //    folder/video1.mp4
    //    folder/video2.mp4
    //
    #define USE_OPENCV 1
    #include <caffe/caffe.hpp>
    #ifdef USE_OPENCV
    #include <opencv2/core/core.hpp>
    #include <opencv2/highgui/highgui.hpp>
    #include <opencv2/imgproc/imgproc.hpp>
    #endif  // USE_OPENCV
    #include <algorithm>
    #include <iomanip>
    #include <iosfwd>
    #include <memory>
    #include <string>
    #include <utility>
    #include <vector>

    #ifdef USE_OPENCV
    using namespace caffe;  // NOLINT(build/namespaces)
    using namespace cv;
    using namespace std;

    class Detector {
     public:
      Detector(const string& model_file,
               const string& weights_file,
               const string& mean_file,
               const string& mean_value);

      std::vector<vector<float> > Detect(const cv::Mat& img);

     private:
      void SetMean(const string& mean_file, const string& mean_value);

      void WrapInputLayer(std::vector<cv::Mat>* input_channels);

      void Preprocess(const cv::Mat& img,
                      std::vector<cv::Mat>* input_channels);

     private:
      boost::shared_ptr<Net<float> > net_;
      cv::Size input_geometry_;
      int num_channels_;
      cv::Mat mean_;
    };

    Detector::Detector(const string& model_file,
                       const string& weights_file,
                       const string& mean_file,
                       const string& mean_value) {
    #ifdef CPU_ONLY
      Caffe::set_mode(Caffe::CPU);
    #else
      Caffe::set_mode(Caffe::GPU);
    #endif

      /* Load the network. */
      net_.reset(new Net<float>(model_file, TEST));
      net_->CopyTrainedLayersFrom(weights_file);

      CHECK_EQ(net_->num_inputs(), 1) << "Network should have exactly one input.";
      CHECK_EQ(net_->num_outputs(), 1) << "Network should have exactly one output.";

      Blob<float>* input_layer = net_->input_blobs()[0];
      num_channels_ = input_layer->channels();
      CHECK(num_channels_ == 3 || num_channels_ == 1)
        << "Input layer should have 1 or 3 channels.";
      input_geometry_ = cv::Size(input_layer->width(), input_layer->height());

      /* Load the binaryproto mean file. */
      SetMean(mean_file, mean_value);
    }

    std::vector<vector<float> > Detector::Detect(const cv::Mat& img) {
      Blob<float>* input_layer = net_->input_blobs()[0];
      input_layer->Reshape(1, num_channels_,
                           input_geometry_.height, input_geometry_.width);
      /* Forward dimension change to all layers. */
      net_->Reshape();

      std::vector<cv::Mat> input_channels;
      WrapInputLayer(&input_channels);

      Preprocess(img, &input_channels);

      net_->Forward();

      /* Copy the output layer to a std::vector */
      Blob<float>* result_blob = net_->output_blobs()[0];
      const float* result = result_blob->cpu_data();
      const int num_det = result_blob->height();
      vector<vector<float> > detections;
      for (int k = 0; k < num_det; ++k) {
        if (result[0] == -1) {
          // Skip invalid detection.
          result += 7;
          continue;
        }
        vector<float> detection(result, result + 7);
        detections.push_back(detection);
        result += 7;
      }
      return detections;
    }

    /* Load the mean file in binaryproto format. */
    void Detector::SetMean(const string& mean_file, const string& mean_value) {
      cv::Scalar channel_mean;
      if (!mean_file.empty()) {
        CHECK(mean_value.empty()) <<
          "Cannot specify mean_file and mean_value at the same time";
        BlobProto blob_proto;
        ReadProtoFromBinaryFileOrDie(mean_file.c_str(), &blob_proto);

        /* Convert from BlobProto to Blob<float> */
        Blob<float> mean_blob;
        mean_blob.FromProto(blob_proto);
        CHECK_EQ(mean_blob.channels(), num_channels_)
          << "Number of channels of mean file doesn't match input layer.";

        /* The format of the mean file is planar 32-bit float BGR or grayscale. */
        std::vector<cv::Mat> channels;
        float* data = mean_blob.mutable_cpu_data();
        for (int i = 0; i < num_channels_; ++i) {
          /* Extract an individual channel. */
          cv::Mat channel(mean_blob.height(), mean_blob.width(), CV_32FC1, data);
          channels.push_back(channel);
          data += mean_blob.height() * mean_blob.width();
        }

        /* Merge the separate channels into a single image. */
        cv::Mat mean;
        cv::merge(channels, mean);

        /* Compute the global mean pixel value and create a mean image
         * filled with this value. */
        channel_mean = cv::mean(mean);
        mean_ = cv::Mat(input_geometry_, mean.type(), channel_mean);
      }
      if (!mean_value.empty()) {
        CHECK(mean_file.empty()) <<
          "Cannot specify mean_file and mean_value at the same time";
        stringstream ss(mean_value);
        vector<float> values;
        string item;
        while (getline(ss, item, ',')) {
          float value = std::atof(item.c_str());
          values.push_back(value);
        }
        CHECK(values.size() == 1 || values.size() == num_channels_) <<
          "Specify either 1 mean_value or as many as channels: " << num_channels_;

        std::vector<cv::Mat> channels;
        for (int i = 0; i < num_channels_; ++i) {
          /* Extract an individual channel. */
          cv::Mat channel(input_geometry_.height, input_geometry_.width, CV_32FC1,
              cv::Scalar(values[i]));
          channels.push_back(channel);
        }
        cv::merge(channels, mean_);
      }
    }

    /* Wrap the input layer of the network in separate cv::Mat objects
     * (one per channel). This way we save one memcpy operation and we
     * don't need to rely on cudaMemcpy2D. The last preprocessing
     * operation will write the separate channels directly to the input
     * layer. */
    void Detector::WrapInputLayer(std::vector<cv::Mat>* input_channels) {
      Blob<float>* input_layer = net_->input_blobs()[0];

      int width = input_layer->width();
      int height = input_layer->height();
      float* input_data = input_layer->mutable_cpu_data();
      for (int i = 0; i < input_layer->channels(); ++i) {
        cv::Mat channel(height, width, CV_32FC1, input_data);
        input_channels->push_back(channel);
        input_data += width * height;
      }
    }

    void Detector::Preprocess(const cv::Mat& img,
                              std::vector<cv::Mat>* input_channels) {
      /* Convert the input image to the input image format of the network. */
      cv::Mat sample;
      if (img.channels() == 3 && num_channels_ == 1)
        cv::cvtColor(img, sample, cv::COLOR_BGR2GRAY);
      else if (img.channels() == 4 && num_channels_ == 1)
        cv::cvtColor(img, sample, cv::COLOR_BGRA2GRAY);
      else if (img.channels() == 4 && num_channels_ == 3)
        cv::cvtColor(img, sample, cv::COLOR_BGRA2BGR);
      else if (img.channels() == 1 && num_channels_ == 3)
        cv::cvtColor(img, sample, cv::COLOR_GRAY2BGR);
      else
        sample = img;

      cv::Mat sample_resized;
      if (sample.size() != input_geometry_)
        cv::resize(sample, sample_resized, input_geometry_);
      else
        sample_resized = sample;

      cv::Mat sample_float;
      if (num_channels_ == 3)
        sample_resized.convertTo(sample_float, CV_32FC3);
      else
        sample_resized.convertTo(sample_float, CV_32FC1);

      cv::Mat sample_normalized;
      cv::subtract(sample_float, mean_, sample_normalized);

      /* This operation will write the separate BGR planes directly to the
       * input layer of the network because it is wrapped by the cv::Mat
       * objects in input_channels. */
      cv::split(sample_normalized, *input_channels);

      CHECK(reinterpret_cast<float*>(input_channels->at(0).data)
            == net_->input_blobs()[0]->cpu_data())
        << "Input channels are not wrapping the input layer of the network.";
    }

    DEFINE_string(mean_file, "",
        "The mean file used to subtract from the input image.");
    DEFINE_string(mean_value, "104,117,123",
        "If specified, can be one value or can be same as image channels"
        " - would subtract from the corresponding channel). Separated by ','."
        "Either mean_file or mean_value should be provided, not both.");
    DEFINE_string(file_type, "image",
        "The file type in the list_file. Currently support image and video.");
    DEFINE_string(out_file, "",
        "If provided, store the detection results in the out_file.");
    DEFINE_double(confidence_threshold, 0.6,
        "Only store detections with score higher than the threshold.");

    vector<string> labels = {"background",
        "aeroplane", "bicycle", "bird", "boat", "bottle",
        "bus", "car", "cat", "chair", "cow",
        "diningtable", "dog", "horse", "motorbike", "person",
        "pottedplant", "sheep", "sofa", "train", "tvmonitor"};

    int main(int argc, char** argv) {
      const string& model_file = "deploy.prototxt";
      const string& weights_file = "/home/jiawenhao/ssd/caffe/models/VGGNet/VOC0712/SSD_300x300/VGG_VOC0712_SSD_300x300_iter_120000.caffemodel";
      const string& mean_file = FLAGS_mean_file;
      const string& mean_value = "104, 117, 123";
      const string& file_type = "image";
      const string& out_file = "a.outfile";
      const float confidence_threshold = 0.6;

      // Initialize the network.
      Detector detector(model_file, weights_file, mean_file, mean_value);

      // Set the output mode.
      std::streambuf* buf = std::cout.rdbuf();
      std::ofstream outfile;
      if (!out_file.empty()) {
        outfile.open(out_file.c_str());
        if (outfile.good()) {
          buf = outfile.rdbuf();
        }
      }
      std::ostream out(buf);

      // Process image one by one.
      std::ifstream infile("testimg.list");
      std::string file;
      std::string imgName;
      int cnt = 0;
      while (infile >> file) {
        if (file_type == "image") {
          std::cout << file << " " << cnt++ << std::endl;
          int pos = file.find_last_of('/');
          imgName = file.substr(pos + 1, file.size() - pos);
          cv::Mat img = cv::imread(file, -1);
          CHECK(!img.empty()) << "Unable to decode image " << file;
          std::vector<vector<float> > detections = detector.Detect(img);

          /* Print the detection results. */
          for (int i = 0; i < detections.size(); ++i) {
            const vector<float>& d = detections[i];
            // Detection format: [image_id, label, score, xmin, ymin, xmax, ymax].
            CHECK_EQ(d.size(), 7);
            const float score = d[2];
            if (score >= confidence_threshold) {
              out << file << " ";
              out << static_cast<int>(d[1]) << " ";
              out << score << " ";
              out << static_cast<int>(d[3] * img.cols) << " ";
              out << static_cast<int>(d[4] * img.rows) << " ";
              out << static_cast<int>(d[5] * img.cols) << " ";
              out << static_cast<int>(d[6] * img.rows) << std::endl;

              int x = static_cast<int>(d[3] * img.cols);
              int y = static_cast<int>(d[4] * img.rows);
              int width = static_cast<int>(d[5] * img.cols) - x;
              int height = static_cast<int>(d[6] * img.rows) - y;
              Rect rect(max(x, 0), max(y, 0), width, height);
              rectangle(img, rect, Scalar(0, 255, 0));
              string sco = to_string(score).substr(0, 5);
              putText(img, labels[static_cast<int>(d[1])] + ":" + sco,
                      Point(max(x, 0), max(y + height / 2, 0)),
                      FONT_HERSHEY_SIMPLEX, 1, Scalar(0, 255, 0));
              imwrite("result/" + imgName, img);
            }
          }
        } else if (file_type == "video") {
          cv::VideoCapture cap(file);
          if (!cap.isOpened()) {
            LOG(FATAL) << "Failed to open video: " << file;
          }
          cv::Mat img;
          int frame_count = 0;
          while (true) {
            bool success = cap.read(img);
            if (!success) {
              LOG(INFO) << "Process " << frame_count << " frames from " << file;
              break;
            }
            CHECK(!img.empty()) << "Error when read frame";
            std::vector<vector<float> > detections = detector.Detect(img);

            /* Print the detection results. */
            for (int i = 0; i < detections.size(); ++i) {
              const vector<float>& d = detections[i];
              // Detection format: [image_id, label, score, xmin, ymin, xmax, ymax].
              CHECK_EQ(d.size(), 7);
              const float score = d[2];
              if (score >= confidence_threshold) {
                out << file << "_";
                out << std::setfill('0') << std::setw(6) << frame_count << " ";
                out << static_cast<int>(d[1]) << " ";
                out << score << " ";
                out << static_cast<int>(d[3] * img.cols) << " ";
                out << static_cast<int>(d[4] * img.rows) << " ";
                out << static_cast<int>(d[5] * img.cols) << " ";
                out << static_cast<int>(d[6] * img.rows) << std::endl;
              }
            }
            ++frame_count;
          }
          if (cap.isOpened()) {
            cap.release();
          }
        } else {
          LOG(FATAL) << "Unknown file_type: " << file_type;
        }
      }
      return 0;
    }
    #else
    int main(int argc, char** argv) {
      LOG(FATAL) << "This example requires OpenCV; compile with USE_OPENCV.";
    }
    #endif  // USE_OPENCV
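The part of main that turns each 7-float detection into a pixel rectangle can be isolated and tried without caffe or OpenCV. The following is a rough sketch under that assumption: PixelBox, ToPixelBox and FilterDetections are hypothetical names of my own, and a plain struct stands in for cv::Rect. The arithmetic mirrors the listing (coordinates are normalized to [0, 1], and the top-left corner is clamped at zero).

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Hypothetical plain struct standing in for cv::Rect plus label and score.
struct PixelBox {
  int label;
  float score;
  int x, y, width, height;
};

// d is one detection: [image_id, label, score, xmin, ymin, xmax, ymax],
// with the four coordinates normalized to the image size.
inline PixelBox ToPixelBox(const std::vector<float>& d, int cols, int rows) {
  const int xmin = static_cast<int>(d[3] * cols);
  const int ymin = static_cast<int>(d[4] * rows);
  const int xmax = static_cast<int>(d[5] * cols);
  const int ymax = static_cast<int>(d[6] * rows);
  PixelBox box;
  box.label = static_cast<int>(d[1]);
  box.score = d[2];
  box.x = std::max(xmin, 0);  // clamp the corner as the listing does
  box.y = std::max(ymin, 0);
  box.width = xmax - xmin;    // width and height use the unclamped corner
  box.height = ymax - ymin;
  return box;
}

// Keeps only well-formed detections whose score reaches the threshold.
inline std::vector<PixelBox> FilterDetections(
    const std::vector<std::vector<float> >& detections,
    int cols, int rows, float threshold) {
  std::vector<PixelBox> boxes;
  for (std::size_t i = 0; i < detections.size(); ++i) {
    const std::vector<float>& d = detections[i];
    if (d.size() != 7 || d[2] < threshold) continue;
    boxes.push_back(ToPixelBox(d, cols, rows));
  }
  return boxes;
}
```

For a 400x200 image, a detection with xmin 0.25, ymin 0.5, xmax 0.75 and ymax 1.0 maps to a box at (100, 100) that is 200 wide and 100 high. Because width and height are computed from the unclamped corner, a box that starts left of or above the image can still extend past the opposite edge; clamping the far corner as well would be a natural refinement.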

Finally, here is a detection result: