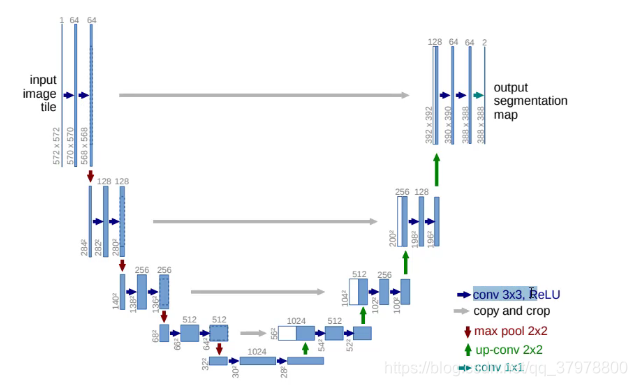

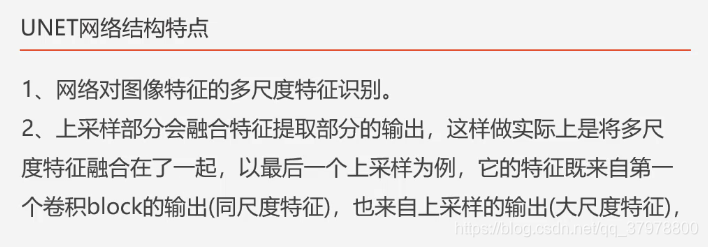

Introduction to the U-Net Image Semantic Segmentation Model

Code

import tensorflow as tf

import matplotlib.pyplot as plt

%matplotlib inline

import numpy as np

import glob

import os

# Let GPU memory grow on demand instead of reserving it all up front

gpus = tf.config.experimental.list_physical_devices(device_type='GPU')

for gpu in gpus:

    tf.config.experimental.set_memory_growth(gpu, True)

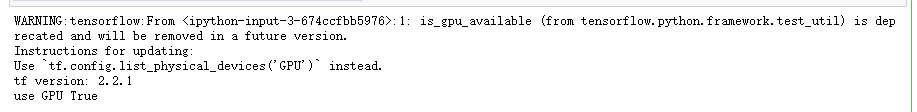

gpu_ok = bool(tf.config.list_physical_devices('GPU'))  # replaces the deprecated tf.test.is_gpu_available()

print("tf version:", tf.__version__)

print("use GPU", gpu_ok)  # whether a GPU is available for training

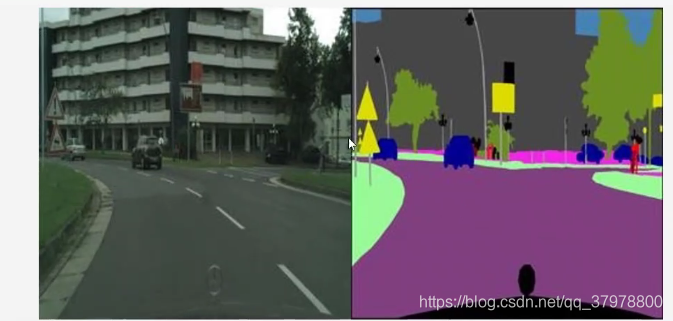

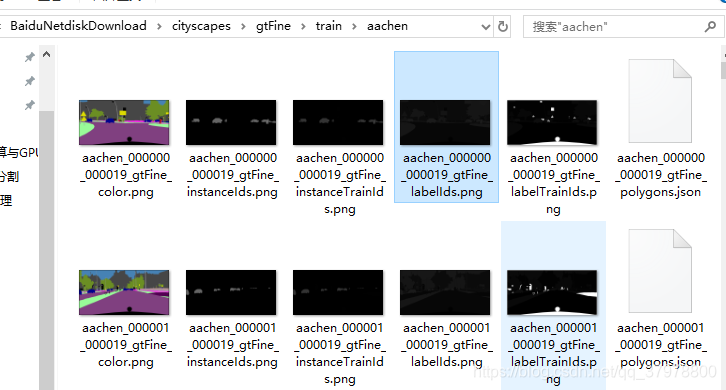

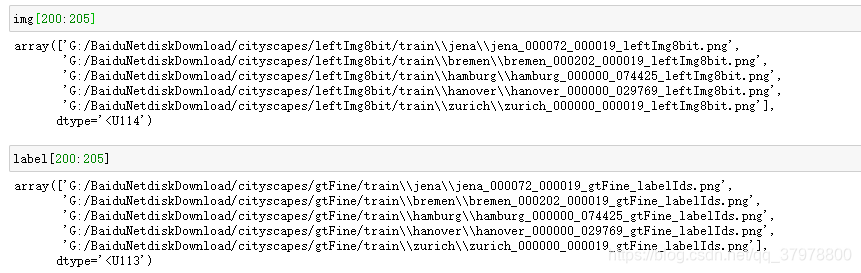

Loading the training images and labels

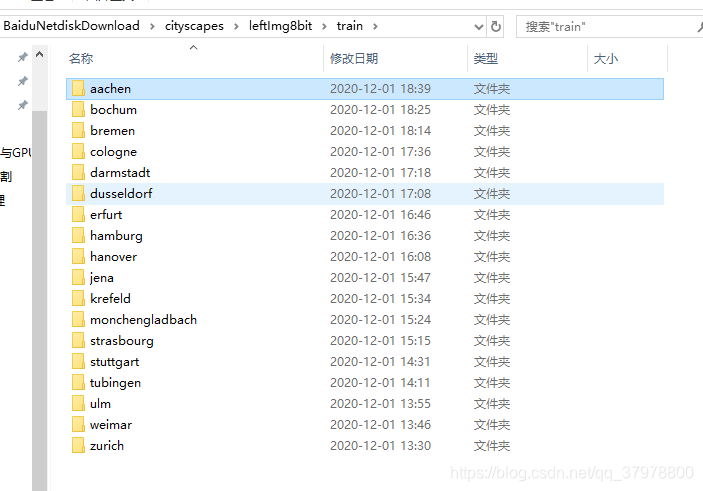

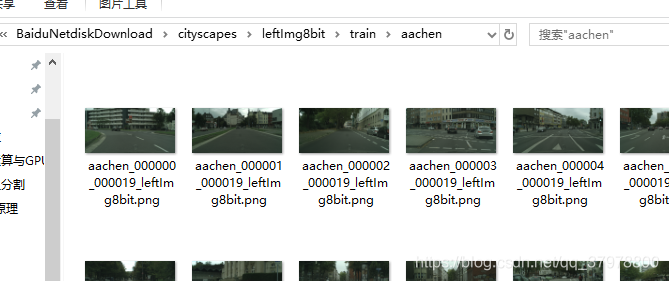

# Collect all PNG images in every subfolder of train

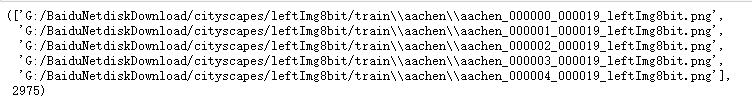

img = sorted(glob.glob("G:/BaiduNetdiskDownload/cityscapes/leftImg8bit/train/*/*.png"))  # sorted for a deterministic order

train_count = len(img)

img[:5],train_count

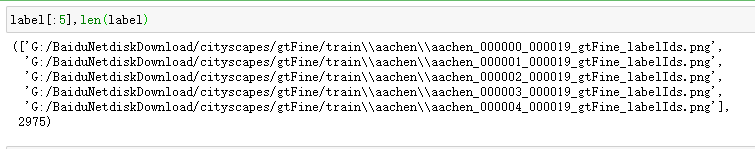

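# Collect all *_gtFine_labelIds.png label images in every subfolder of gtFine/train

label = sorted(glob.glob("G:/BaiduNetdiskDownload/cityscapes/gtFine/train/*/*_gtFine_labelIds.png"))  # sorted for a deterministic order

index = np.random.permutation(len(img))  # one random permutation shared by images and labels, so each image stays paired with its label

img = np.array(img)[index]  # shuffle the training images

label = np.array(label)[index]  # shuffle the labels with the same permutation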
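The shuffle above only works because both arrays are indexed by the same permutation. A minimal NumPy sketch of that idea follows; the file names are hypothetical stand-ins for the Cityscapes paths, and `stem` is a helper introduced here purely for the check.

```python
import numpy as np

# Hypothetical file names standing in for the real Cityscapes paths.
images = np.array(["a_leftImg8bit.png", "b_leftImg8bit.png",
                   "c_leftImg8bit.png", "d_leftImg8bit.png"])
labels = np.array(["a_gtFine_labelIds.png", "b_gtFine_labelIds.png",
                   "c_gtFine_labelIds.png", "d_gtFine_labelIds.png"])

rng = np.random.default_rng(0)
index = rng.permutation(len(images))   # one permutation for both arrays

shuffled_images = images[index]
shuffled_labels = labels[index]


def stem(name):
    """Return the part of the file name shared by an image and its label."""
    return name.split("_")[0]


# Every shuffled image still sits next to its own label.
pairs_aligned = all(stem(i) == stem(l)
                    for i, l in zip(shuffled_images, shuffled_labels))
print(pairs_aligned)
```

The pairing holds only if the two lists were aligned before shuffling, which is why it helps to sort both `glob` results first.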

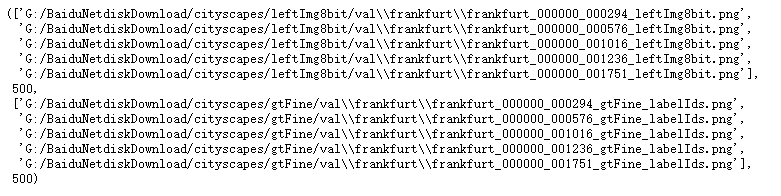

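Loading the test (validation) data

# Collect all PNG images in every subfolder of val

img_val = sorted(glob.glob("G:/BaiduNetdiskDownload/cityscapes/leftImg8bit/val/*/*.png"))  # sorted for a deterministic order

# Collect all *_gtFine_labelIds.png label images in every subfolder of gtFine/val

label_val = sorted(glob.glob("G:/BaiduNetdiskDownload/cityscapes/gtFine/val/*/*_gtFine_labelIds.png"))  # sorted for a deterministic order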

test_count = len(img_val)

img_val[:5],test_count,label_val[:5],len(label_val)

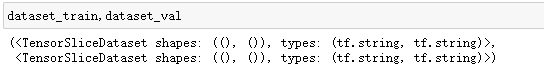

Creating the datasets

dataset_train = tf.data.Dataset.from_tensor_slices((img,label))

dataset_val = tf.data.Dataset.from_tensor_slices((img_val,label_val))

# Decode a PNG image into a 3-channel (RGB) tensor

def read_png(path):

    img = tf.io.read_file(path)

    img = tf.image.decode_png(img, channels=3)

    return img

# Decode a PNG label map into a 1-channel tensor of class IDs

def read_png_label(path):

    img = tf.io.read_file(path)

    img = tf.image.decode_png(img, channels=1)

    return img

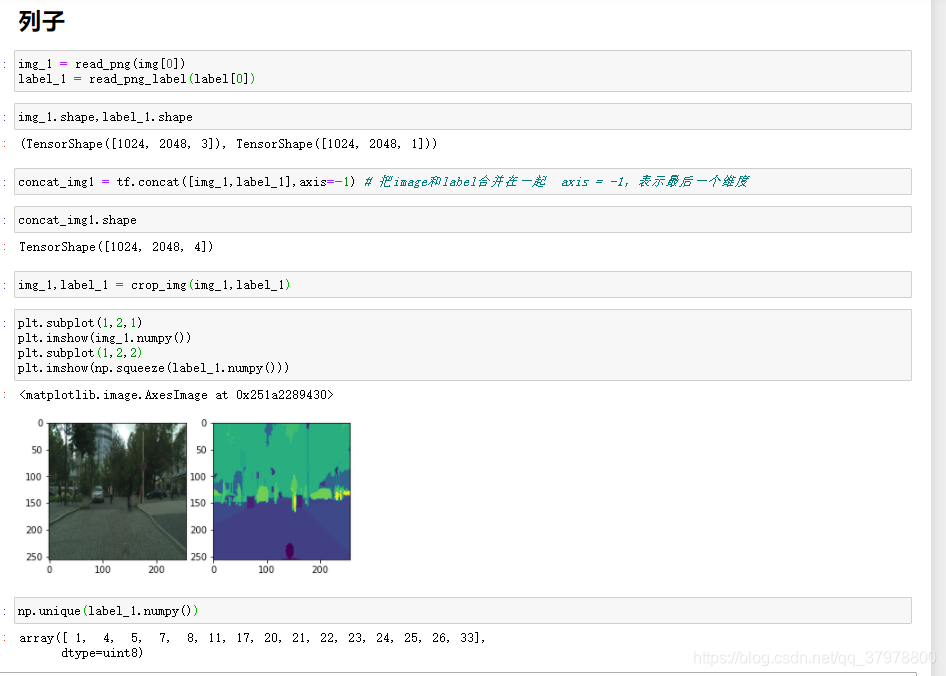

# Data augmentation

def crop_img(img, mask):

    concat_img = tf.concat([img, mask], axis=-1)  # stack image and label along the last (channel) axis

    concat_img = tf.image.resize(concat_img, (280, 280),  # resize to 280x280

                                 method=tf.image.ResizeMethod.NEAREST_NEIGHBOR)  # nearest neighbor keeps label IDs intact

    cropped = tf.image.random_crop(concat_img, [256, 256, 4])  # random 256x256 crop

    return cropped[:, :, :3], cropped[:, :, 3:]  # the first 3 channels are the image, the last channel is the label

def normal(img, mask):

    img = tf.cast(img, tf.float32) / 127.5 - 1  # scale pixels from [0, 255] to [-1, 1]

    mask = tf.cast(mask, tf.int32)

    return img, mask
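Stacking the image and label before cropping guarantees that both receive the same crop window. The NumPy sketch below illustrates that idea together with the `/127.5 - 1` scaling. It is not the TensorFlow code above: `joint_random_crop` and `normalize` are names introduced here, and the data is synthetic.

```python
import numpy as np


def joint_random_crop(img, mask, size, rng):
    """Cut the same random size x size window out of an image and its mask."""
    stacked = np.concatenate([img, mask], axis=-1)      # H x W x 4
    h, w = stacked.shape[:2]
    top = rng.integers(0, h - size + 1)
    left = rng.integers(0, w - size + 1)
    window = stacked[top:top + size, left:left + size]
    return window[:, :, :3], window[:, :, 3:]           # image, mask


def normalize(img):
    """Map uint8 pixel values from [0, 255] to [-1, 1]."""
    return img.astype(np.float32) / 127.5 - 1.0


rng = np.random.default_rng(0)
# Synthetic data: the mask copies the first image channel, so alignment
# after cropping can be verified directly.
img = rng.integers(0, 256, size=(280, 280, 3), dtype=np.uint8)
mask = img[:, :, :1].copy()

img_c, mask_c = joint_random_crop(img, mask, 256, rng)
scaled = normalize(img_c)
print(img_c.shape, mask_c.shape, scaled.dtype)
```

Cropping the two arrays separately with independent random offsets would break the pixel-to-label correspondence, which is the failure this pattern avoids.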

# Assemble the training loader

def load_image_train(img_path, mask_path):

    img = read_png(img_path)

    mask = read_png_label(mask_path)  # read the label map

    img, mask = crop_img(img, mask)  # apply the same random crop to both

    if tf.random.uniform(()) > 0.5:  # with probability 0.5, flip image and label horizontally

        img = tf.image.flip_left_right(img)

        mask = tf.image.flip_left_right(mask)

    img, mask = normal(img, mask)  # normalize

    return img, mask

# Assemble the test loader (no augmentation)

def load_image_test(img_path, mask_path):

    img = read_png(img_path)

    mask = read_png_label(mask_path)

    img = tf.image.resize(img, (256, 256))

    mask = tf.image.resize(mask, (256, 256),

                           method=tf.image.ResizeMethod.NEAREST_NEIGHBOR)  # nearest neighbor: interpolation would blend class IDs

    img, mask = normal(img, mask)

    return img, mask
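Label maps store class IDs rather than intensities, which is why `crop_img` resizes with nearest-neighbor interpolation. The small NumPy sketch below shows what goes wrong otherwise. It uses 2x2 box averaging as a simplified stand-in for bilinear interpolation, and the class IDs 7 and 26 are purely illustrative values.

```python
import numpy as np

# A tiny label map with two classes (the IDs are illustrative).
labels = np.array([[7, 26, 26, 26],
                   [7, 26, 26, 26]], dtype=np.uint8)

# Downsample by 2 with nearest neighbor: keep every second pixel.
nearest = labels[::2, ::2]

# Downsample by 2 with 2x2 averaging, then cast to int like a label map.
blocks = labels.reshape(1, 2, 2, 2).astype(np.float32)
averaged = blocks.mean(axis=(1, 3)).astype(np.int32)

print(nearest)    # only IDs that exist in the input
print(averaged)   # contains an ID that never appeared in the input
```

The averaged result contains a value halfway between the two classes, which would be read as a third, unrelated class during training or evaluation.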

BATCH_SIZE = 32

BUFFER_SIZE = 300

step_per_epoch = train_count//BATCH_SIZE

val_step = test_count//BATCH_SIZE

auto = tf.data.experimental.AUTOTUNE  # let tf.data choose the parallelism level at runtime

# Build the input pipelines

dataset_train = dataset_train.map(load_image_train, num_parallel_calls=auto)

dataset_val = dataset_val.map(load_image_test, num_parallel_calls=auto)

# Note: cache() comes after map(), so the random crops and flips are computed once and reused in later epochs

dataset_train = dataset_train.cache().repeat().shuffle(BUFFER_SIZE).batch(BATCH_SIZE).prefetch(auto)

dataset_val = dataset_val.cache().batch(BATCH_SIZE)
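The step counts come from integer division, so it is worth checking what gets left over. The sketch below assumes the standard Cityscapes fine-annotation split of 2975 training and 500 validation images; the real counts are whatever `train_count` and `test_count` report on your copy of the data.

```python
import math

# Assumed dataset sizes (standard Cityscapes fine split); replace with
# the actual train_count / test_count values.
train_count = 2975
test_count = 500
BATCH_SIZE = 32

step_per_epoch = train_count // BATCH_SIZE        # full batches per epoch
val_step = test_count // BATCH_SIZE               # validation batches used
val_batches = math.ceil(test_count / BATCH_SIZE)  # batches the dataset yields
skipped = test_count - val_step * BATCH_SIZE      # images never evaluated

print(step_per_epoch, val_step, val_batches, skipped)
```

Under these assumptions the validation dataset yields one more (partial) batch than `validation_steps` consumes, so the last few images are left out of each validation pass. The training set is repeated, so its leftover images simply roll into the next epoch.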

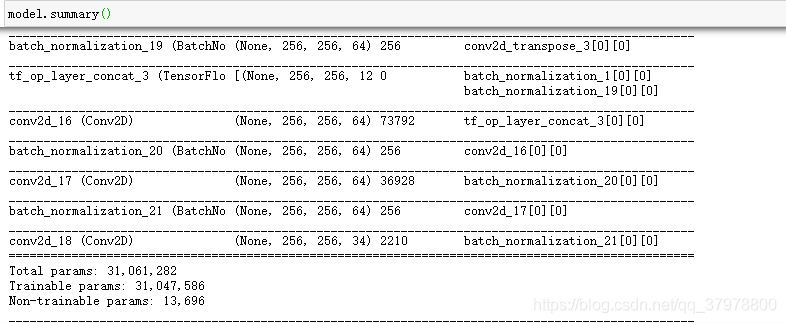

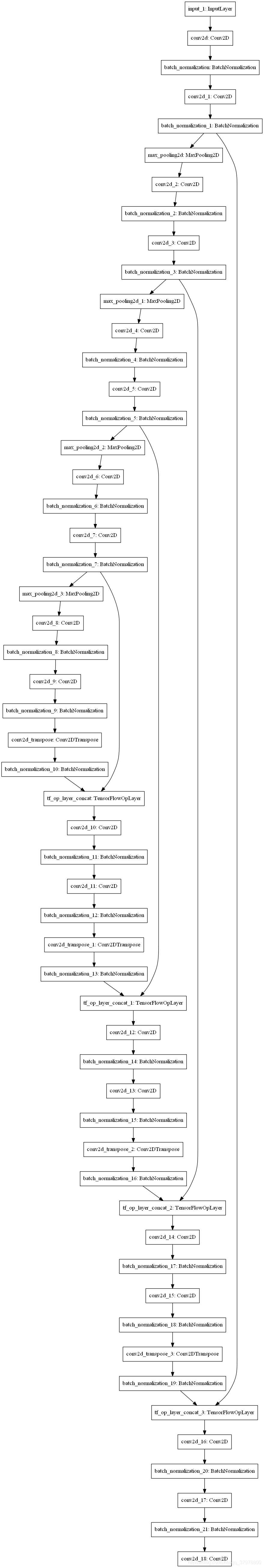

Defining the U-Net model

def create_model():

    ## U-Net encoder (downsampling path)

    # Input layer and block 1

    inputs = tf.keras.layers.Input(shape=(256, 256, 3))

    x = tf.keras.layers.Conv2D(64, 3, padding="same", activation="relu")(inputs)

    x = tf.keras.layers.BatchNormalization()(x)

    x = tf.keras.layers.Conv2D(64, 3, padding="same", activation="relu")(x)

    x = tf.keras.layers.BatchNormalization()(x)  # 256*256*64

    # Downsample

    x1 = tf.keras.layers.MaxPooling2D(padding="same")(x)  # 128*128*64

    # Convolutions, block 2

    x1 = tf.keras.layers.Conv2D(128, 3, padding="same", activation="relu")(x1)

    x1 = tf.keras.layers.BatchNormalization()(x1)

    x1 = tf.keras.layers.Conv2D(128, 3, padding="same", activation="relu")(x1)

    x1 = tf.keras.layers.BatchNormalization()(x1)  # 128*128*128

    # Downsample

    x2 = tf.keras.layers.MaxPooling2D(padding="same")(x1)  # 64*64*128

    # Convolutions, block 3

    x2 = tf.keras.layers.Conv2D(256, 3, padding="same", activation="relu")(x2)

    x2 = tf.keras.layers.BatchNormalization()(x2)

    x2 = tf.keras.layers.Conv2D(256, 3, padding="same", activation="relu")(x2)

    x2 = tf.keras.layers.BatchNormalization()(x2)  # 64*64*256

    # Downsample

    x3 = tf.keras.layers.MaxPooling2D(padding="same")(x2)  # 32*32*256

    # Convolutions, block 4

    x3 = tf.keras.layers.Conv2D(512, 3, padding="same", activation="relu")(x3)

    x3 = tf.keras.layers.BatchNormalization()(x3)

    x3 = tf.keras.layers.Conv2D(512, 3, padding="same", activation="relu")(x3)

    x3 = tf.keras.layers.BatchNormalization()(x3)  # 32*32*512

    # Downsample

    x4 = tf.keras.layers.MaxPooling2D(padding="same")(x3)  # 16*16*512

    # Convolutions, block 5 (bottleneck)

    x4 = tf.keras.layers.Conv2D(1024, 3, padding="same", activation="relu")(x4)

    x4 = tf.keras.layers.BatchNormalization()(x4)

    x4 = tf.keras.layers.Conv2D(1024, 3, padding="same", activation="relu")(x4)

    x4 = tf.keras.layers.BatchNormalization()(x4)  # 16*16*1024

    ## U-Net decoder (upsampling path)

    # Transposed convolution, block 1: 512 filters, 2x2 kernel, stride 2, same padding, ReLU

    x5 = tf.keras.layers.Conv2DTranspose(512, 2, strides=2,

                                         padding="same",

                                         activation="relu")(x4)  # 32*32*512

    x5 = tf.keras.layers.BatchNormalization()(x5)

    x6 = tf.keras.layers.concatenate([x3, x5], axis=-1)  # skip connection: 32*32*1024

    # Convolutions

    x6 = tf.keras.layers.Conv2D(512, 3, padding="same", activation="relu")(x6)

    x6 = tf.keras.layers.BatchNormalization()(x6)

    x6 = tf.keras.layers.Conv2D(512, 3, padding="same", activation="relu")(x6)

    x6 = tf.keras.layers.BatchNormalization()(x6)  # 32*32*512

    # Transposed convolution, block 2

    x7 = tf.keras.layers.Conv2DTranspose(256, 2, strides=2,

                                         padding="same",

                                         activation="relu")(x6)  # 64*64*256

    x7 = tf.keras.layers.BatchNormalization()(x7)

    x8 = tf.keras.layers.concatenate([x2, x7], axis=-1)  # skip connection: 64*64*512

    # Convolutions

    x8 = tf.keras.layers.Conv2D(256, 3, padding="same", activation="relu")(x8)

    x8 = tf.keras.layers.BatchNormalization()(x8)

    x8 = tf.keras.layers.Conv2D(256, 3, padding="same", activation="relu")(x8)

    x8 = tf.keras.layers.BatchNormalization()(x8)  # 64*64*256

    # Transposed convolution, block 3

    x9 = tf.keras.layers.Conv2DTranspose(128, 2, strides=2,

                                         padding="same",

                                         activation="relu")(x8)  # 128*128*128

    x9 = tf.keras.layers.BatchNormalization()(x9)

    x10 = tf.keras.layers.concatenate([x1, x9], axis=-1)  # skip connection: 128*128*256

    # Convolutions

    x10 = tf.keras.layers.Conv2D(128, 3, padding="same", activation="relu")(x10)

    x10 = tf.keras.layers.BatchNormalization()(x10)

    x10 = tf.keras.layers.Conv2D(128, 3, padding="same", activation="relu")(x10)

    x10 = tf.keras.layers.BatchNormalization()(x10)  # 128*128*128

    # Transposed convolution, block 4

    x11 = tf.keras.layers.Conv2DTranspose(64, 2, strides=2,

                                          padding="same",

                                          activation="relu")(x10)  # 256*256*64

    x11 = tf.keras.layers.BatchNormalization()(x11)

    x12 = tf.keras.layers.concatenate([x, x11], axis=-1)  # skip connection: 256*256*128

    # Convolutions

    x12 = tf.keras.layers.Conv2D(64, 3, padding="same", activation="relu")(x12)

    x12 = tf.keras.layers.BatchNormalization()(x12)

    x12 = tf.keras.layers.Conv2D(64, 3, padding="same", activation="relu")(x12)

    x12 = tf.keras.layers.BatchNormalization()(x12)  # 256*256*64

    # Output layer, block 5: 1x1 convolution with one softmax channel per class

    output = tf.keras.layers.Conv2D(34, 1, padding="same", activation="softmax")(x12)  # 256*256*34

    return tf.keras.Model(inputs=inputs, outputs=output)
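The shape comments in `create_model` follow a simple rule: each pooling step halves the spatial size and the next block doubles the channels, while each transposed convolution does the reverse and is concatenated with the matching encoder output. The pure-Python sketch below traces those shapes without building the network. `unet_shapes` is a helper written for this illustration, not part of Keras.

```python
def unet_shapes(size=256, base=64, depth=4, num_classes=34):
    """Trace (name, (H, W, C)) for each stage of a U-Net like the one above."""
    shapes = []
    skips = []
    s, ch = size, base

    # Encoder: record each block's output, then halve size / double channels.
    for level in range(1, depth + 1):
        shapes.append((f"enc{level}", (s, s, ch)))
        skips.append((s, ch))
        s //= 2
        ch *= 2
    shapes.append(("bottleneck", (s, s, ch)))

    # Decoder: upsample, concatenate the matching skip, then convolve.
    for level in range(1, depth + 1):
        s *= 2
        ch //= 2
        skip_s, skip_ch = skips.pop()
        assert skip_s == s, "skip connection must match spatially"
        shapes.append((f"concat{level}", (s, s, ch + skip_ch)))
        shapes.append((f"dec{level}", (s, s, ch)))

    shapes.append(("output", (s, s, num_classes)))
    return shapes


for name, shape in unet_shapes():
    print(f"{name:<11}{shape}")
```

With the defaults this reproduces the sizes annotated in the model code, from 256*256*64 down to 16*16*1024 and back up to 256*256*34.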

model = create_model()

tf.keras.utils.plot_model(model)  # draw the model graph

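# tf.keras.metrics.MeanIoU(num_classes=34) expects integer class IDs for both y_true and y_pred.

# The model outputs per-class probabilities, so subclass the metric and take the argmax of the predictions first.

class MeanIou(tf.keras.metrics.MeanIoU):  # inherit from the built-in metric

    def __call__(self, y_true, y_pred, sample_weight=None):

        y_pred = tf.argmax(y_pred, axis=-1)  # probabilities -> class IDs

        return super().__call__(y_true, y_pred, sample_weight=sample_weight)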
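To make the metric concrete, here is a minimal NumPy sketch of mean IoU computed from a confusion matrix after taking the argmax of the predictions. It is an illustration of the idea rather than the Keras implementation; `mean_iou` is a name introduced here, and classes that appear in neither the labels nor the predictions are left out of the average.

```python
import numpy as np


def mean_iou(y_true, y_prob, num_classes):
    """Mean intersection-over-union from integer labels and class probabilities."""
    y_pred = np.argmax(y_prob, axis=-1)                 # probabilities -> class IDs
    cm = np.zeros((num_classes, num_classes), dtype=np.int64)
    np.add.at(cm, (y_true.ravel(), y_pred.ravel()), 1)  # rows: truth, cols: prediction

    intersection = np.diag(cm)
    union = cm.sum(axis=0) + cm.sum(axis=1) - intersection
    present = union > 0                                 # skip classes never seen
    return (intersection[present] / union[present]).mean()


# Toy 2x2 "image" with 3 classes; predictions given as one-hot probabilities.
y_true = np.array([[0, 0],
                   [1, 2]])
pred_ids = np.array([[0, 1],
                     [1, 2]])
y_prob = np.eye(3)[pred_ids]                            # shape (2, 2, 3)

score = mean_iou(y_true, y_prob, num_classes=3)
print(round(float(score), 4))
```

In this toy case one pixel of class 0 is mistaken for class 1, so classes 0 and 1 each score an IoU of 0.5 while class 2 scores 1.0.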

# Compile the model

model.compile(optimizer="adam",

              loss="sparse_categorical_crossentropy",

              metrics=["acc", MeanIou(num_classes=34)]

              )

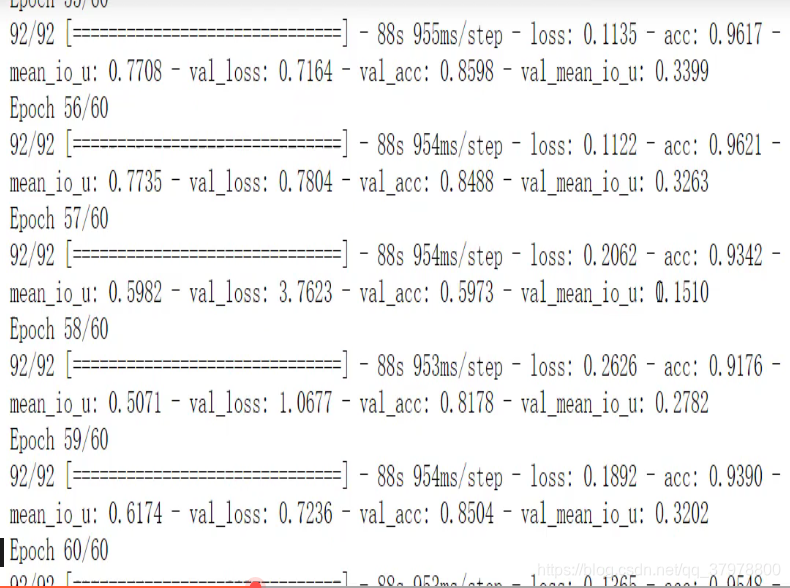

# Train

history = model.fit(dataset_train,

                    epochs=60,

                    steps_per_epoch=step_per_epoch,

                    validation_steps=val_step,

                    validation_data=dataset_val

                    )

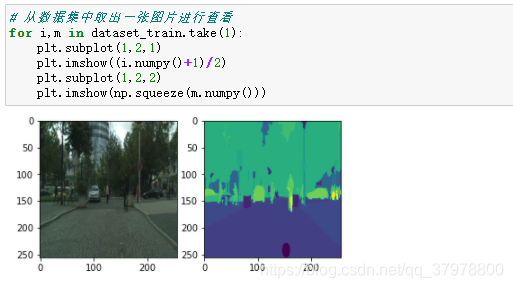

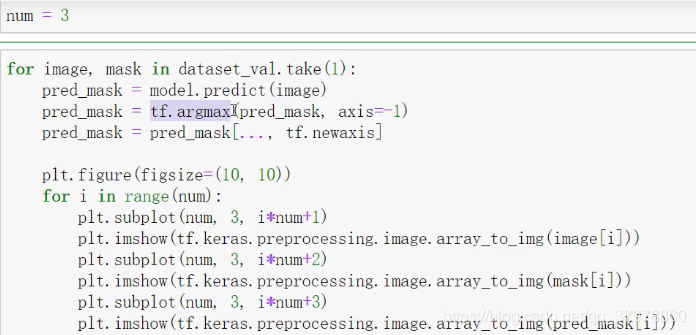

Example