Setting Up a Spark Development Environment with IntelliJ IDEA

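Setting Up a Spark Development Environment with IntelliJ IDEA: Reference Documentation

● Reference documentation: http://spark.apache.org/docs/latest/programming-guide.html

● Steps

a) Create a Maven project

b) Add the dependencies (Spark dependencies, packaging plugins, and so on)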
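Once the Spark dependency from step b) is on the classpath, a small program run from the IDE can confirm that the environment works. The following is a minimal sketch in Scala, assuming `spark-core` is available and that Spark's local mode is acceptable for a first check. The object name `WordCountSmokeTest` and the sample sentences are made up for illustration and do not come from the original material.

```scala
import org.apache.spark.{SparkConf, SparkContext}
import org.apache.spark.rdd.RDD

// Hypothetical object name, used only for this illustration.
object WordCountSmokeTest {

  // Splits each line on whitespace and counts how often each word occurs.
  def countWords(lines: RDD[String]): RDD[(String, Int)] =
    lines
      .flatMap(_.split("\\s+"))
      .filter(_.nonEmpty)
      .map(word => (word, 1))
      .reduceByKey(_ + _)

  def main(args: Array[String]): Unit = {
    // Assumption: local[*] runs Spark inside the IDE process, so no cluster is needed.
    val conf = new SparkConf().setAppName("WordCountSmokeTest").setMaster("local[*]")
    val sc = new SparkContext(conf)
    try {
      // Tiny in-memory input so the check does not depend on any file.
      val lines = sc.parallelize(Seq("hello spark", "hello idea"))
      countWords(lines).collect().foreach { case (word, n) => println(s"$word\t$n") }
    } finally {
      sc.stop()
    }
  }
}
```

If this runs and prints one line per word with its count, the dependency setup is likely correct. The order of the printed lines is not guaranteed.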

Setting Up a Spark Development Environment with IntelliJ IDEA: Maven vs. sbt

● Use whichever one you know better

● Maven can also build Scala projects
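For readers who lean toward sbt, the fragment below sketches what a roughly equivalent `build.sbt` might look like. It is written in sbt's Scala-based syntax. The coordinates and versions mirror the ones used in the pom.xml of this deck (Scala 2.10.5, Spark 1.6.1); limiting the dependencies to `spark-core` is an assumption made to keep the sketch short.

```scala
// build.sbt: a sketch only, not part of the original project.
organization := "com.dajiangtai.test"

name := "test-spark"

version := "1.0-SNAPSHOT"

// Same Scala version as the scala.version property in the pom.xml.
scalaVersion := "2.10.5"

// %% appends the Scala binary version, so this resolves spark-core_2.10.
libraryDependencies += "org.apache.spark" %% "spark-core" % "1.6.1"
```

Either build tool resolves the same Spark artifact, so the choice mostly comes down to familiarity.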

Setting Up a Spark Development Environment with IntelliJ IDEA: Building a Scala Project with Maven

● Reference documentation: http://docs.scala-lang.org/tutorials/scala-with-maven.html

● Steps

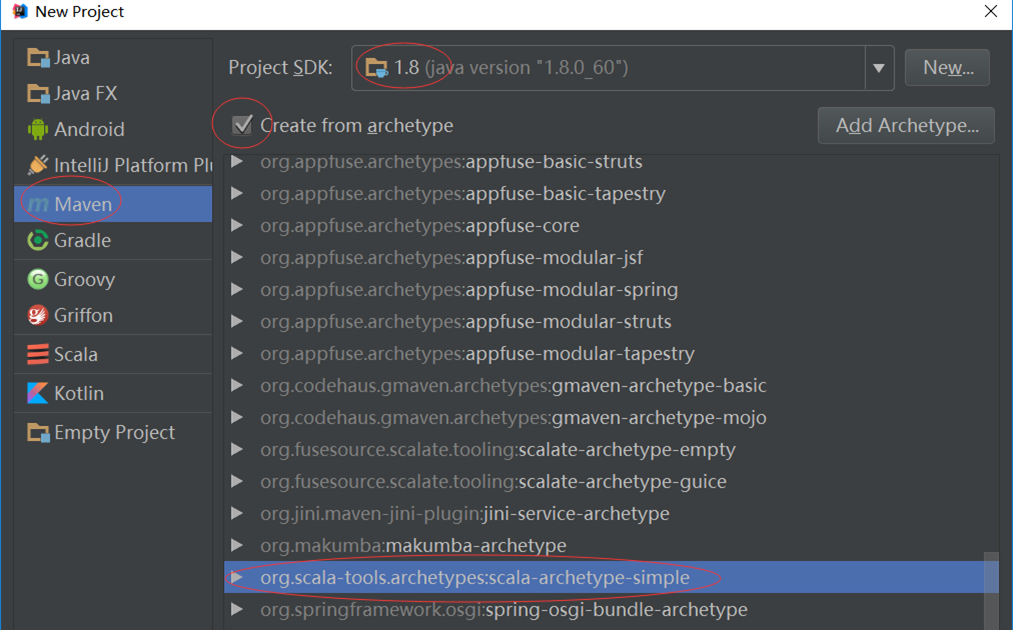

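a) Create the Scala project with Maven (based on the net.alchim31.maven:scala-archetype-simple archetype)

b) Add the dependencies to pom.xml (Spark dependencies, packaging plugins, and so on)

Note: make sure the Scala version and the Java (JDK) version are compatible.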

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/maven-v4_0_0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.dajiangtai.test</groupId>

<artifactId>test-spark</artifactId>

<version>1.0-SNAPSHOT</version>

<name>myWordCount</name>

<inceptionYear>2008</inceptionYear>

<properties>

<scala.version>2.10.5</scala.version>

<spark.version>1.6.1</spark.version>

</properties>

<repositories>

<repository>

<id>scala-tools.org</id>

<name>Scala-Tools Maven2 Repository</name>

<url>http://scala-tools.org/repo-releases</url>

</repository>

</repositories>

<pluginRepositories>

<pluginRepository>

<id>scala-tools.org</id>

<name>Scala-Tools Maven2 Repository</name>

<url>http://scala-tools.org/repo-releases</url