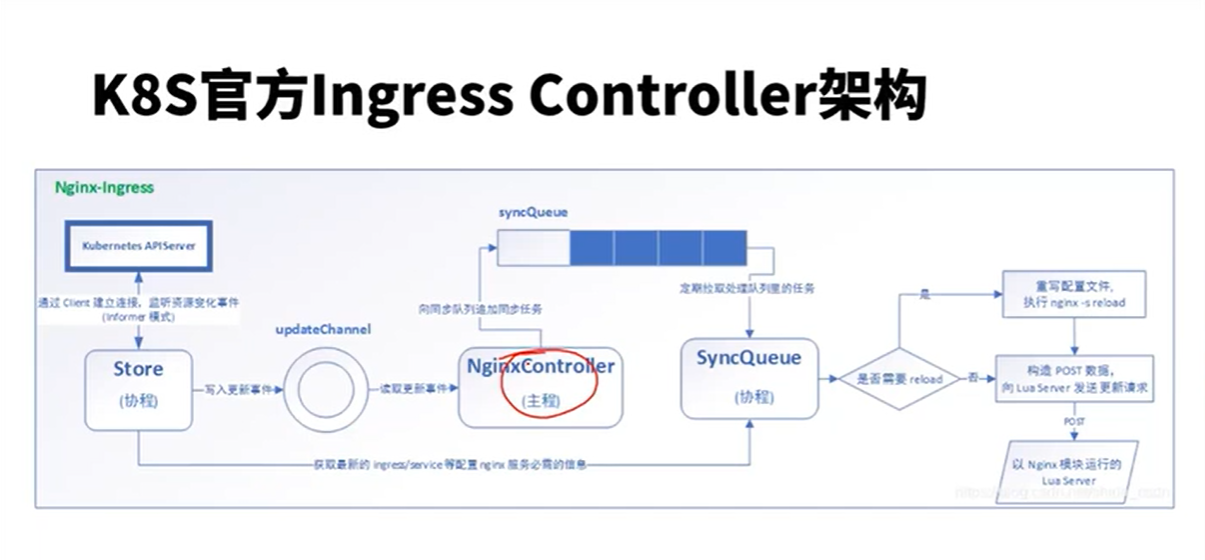

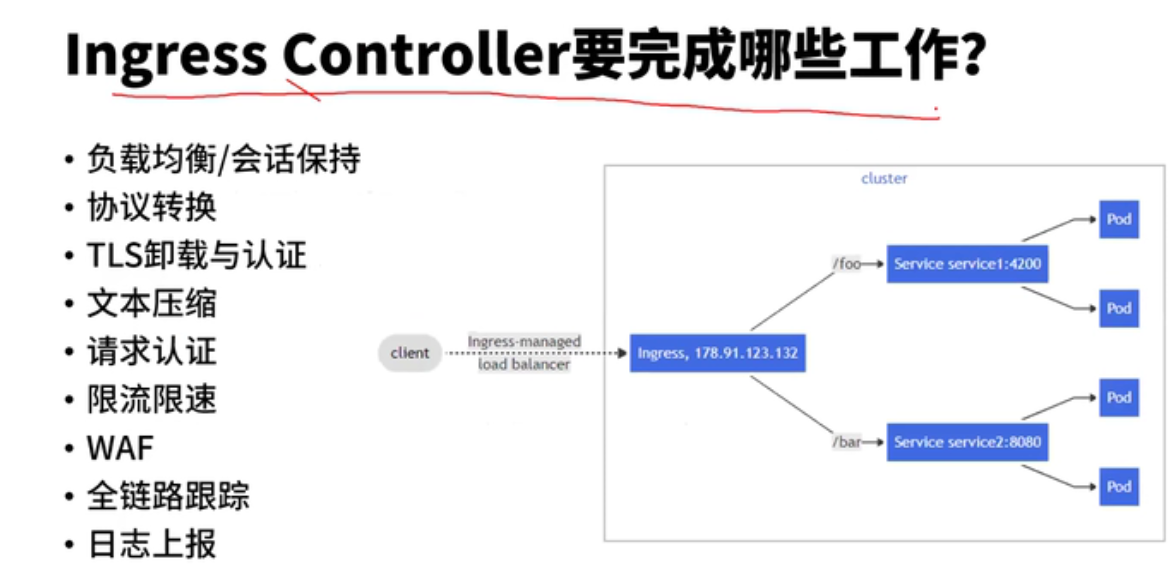

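First, there are currently two commonly used Ingress-Nginx-Controllers. One is open-sourced by the K8S project, the other is open-sourced by Nginx. We use the K8S official version.

The main differences between the two controllers are roughly as follows: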

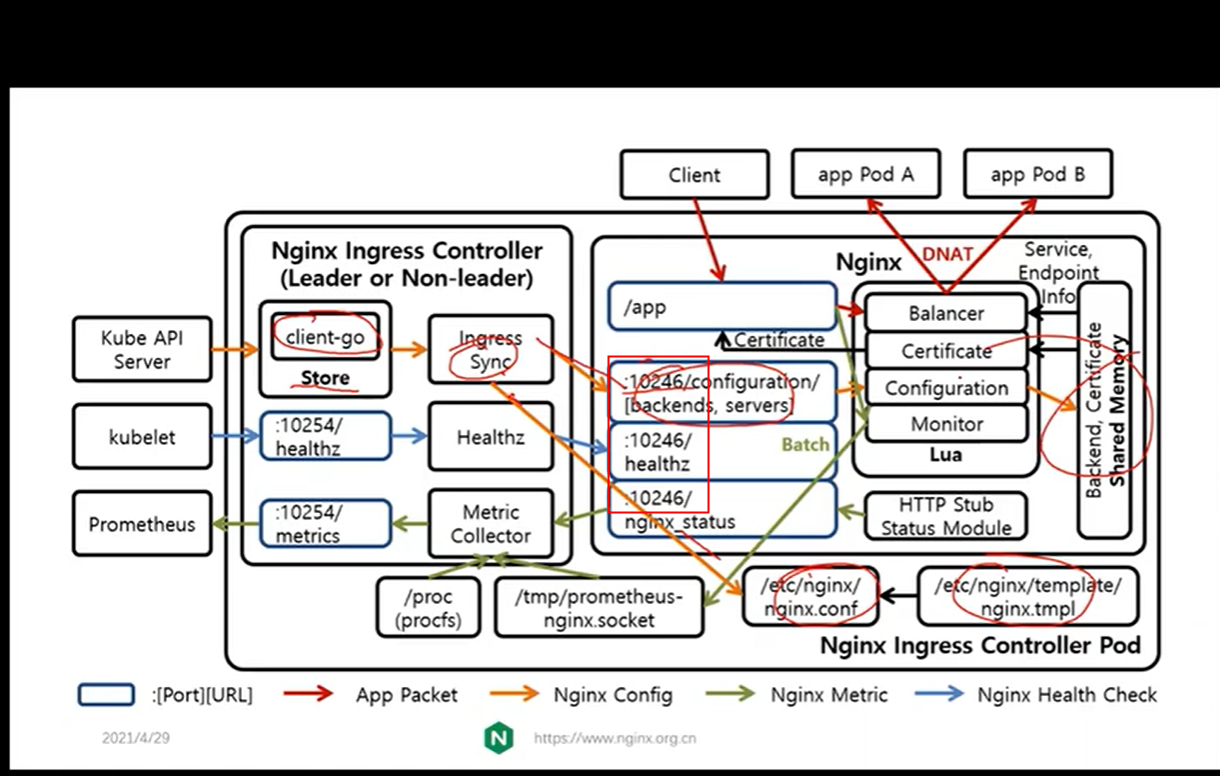

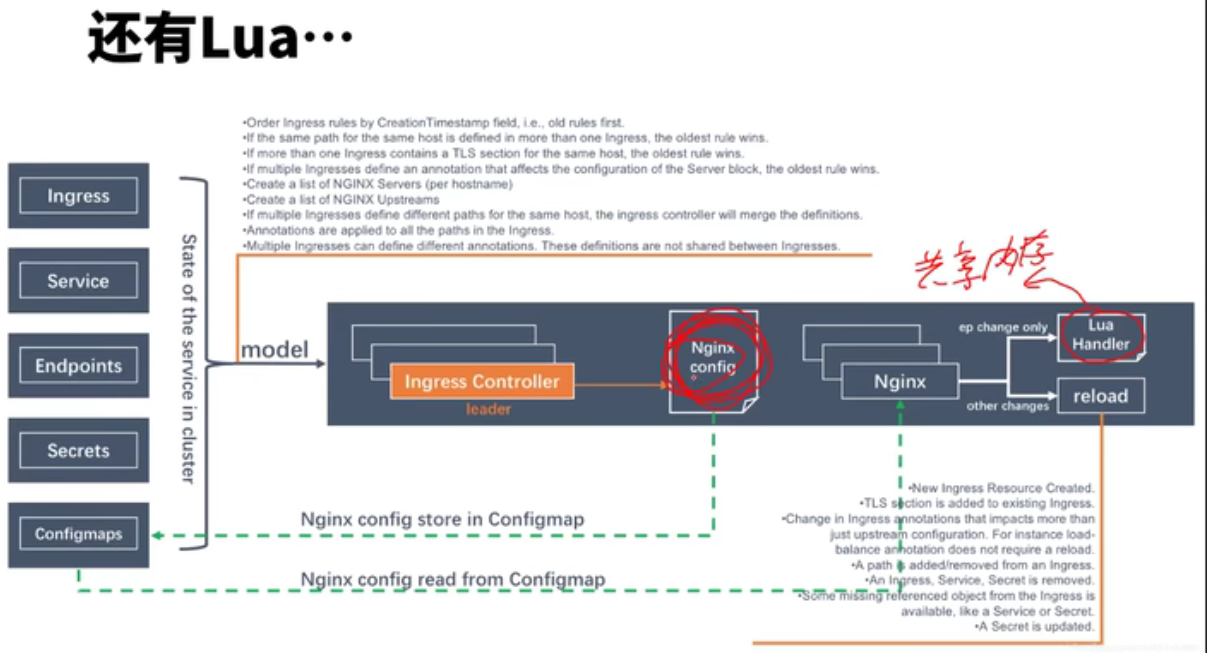

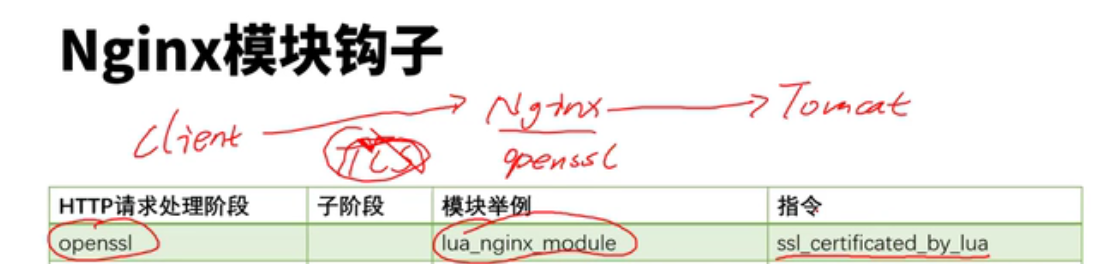

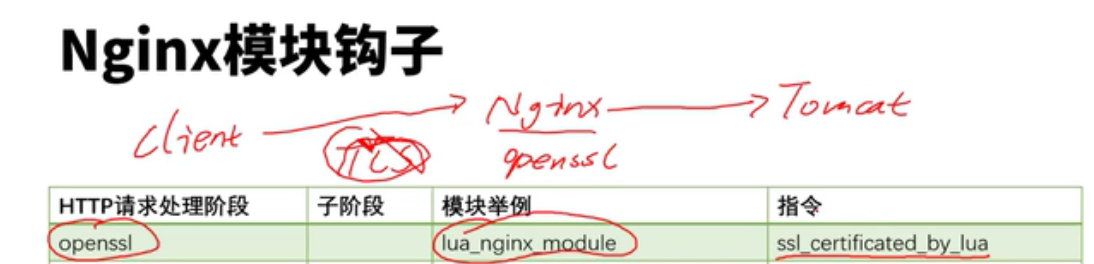

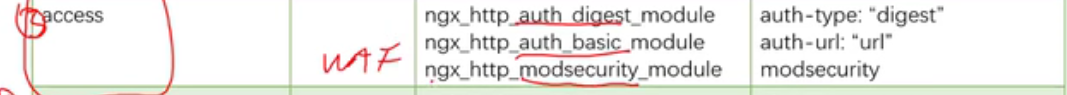

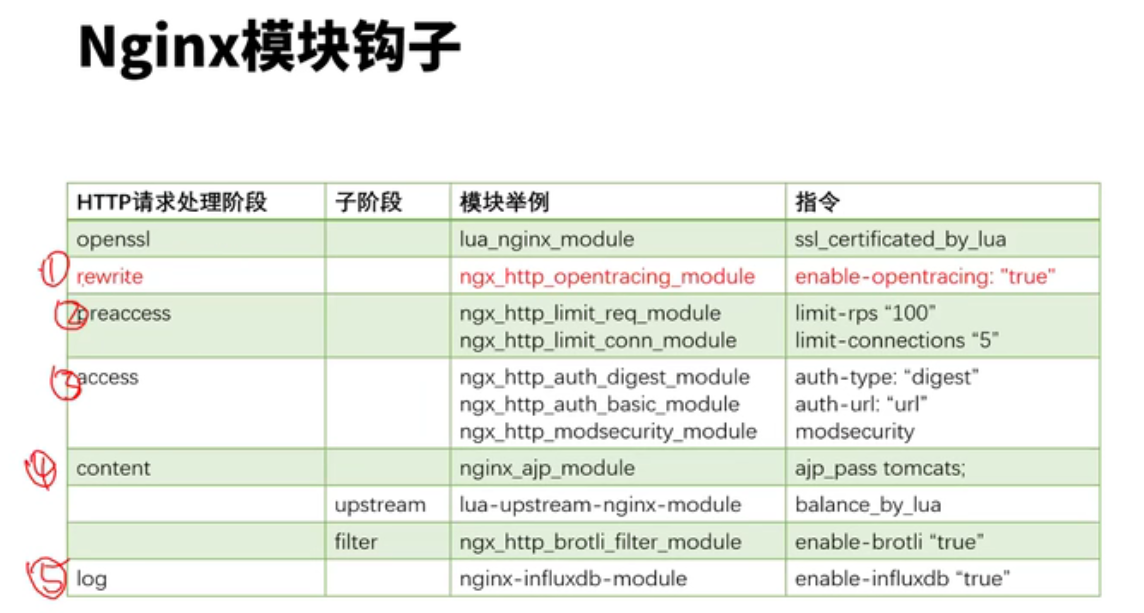

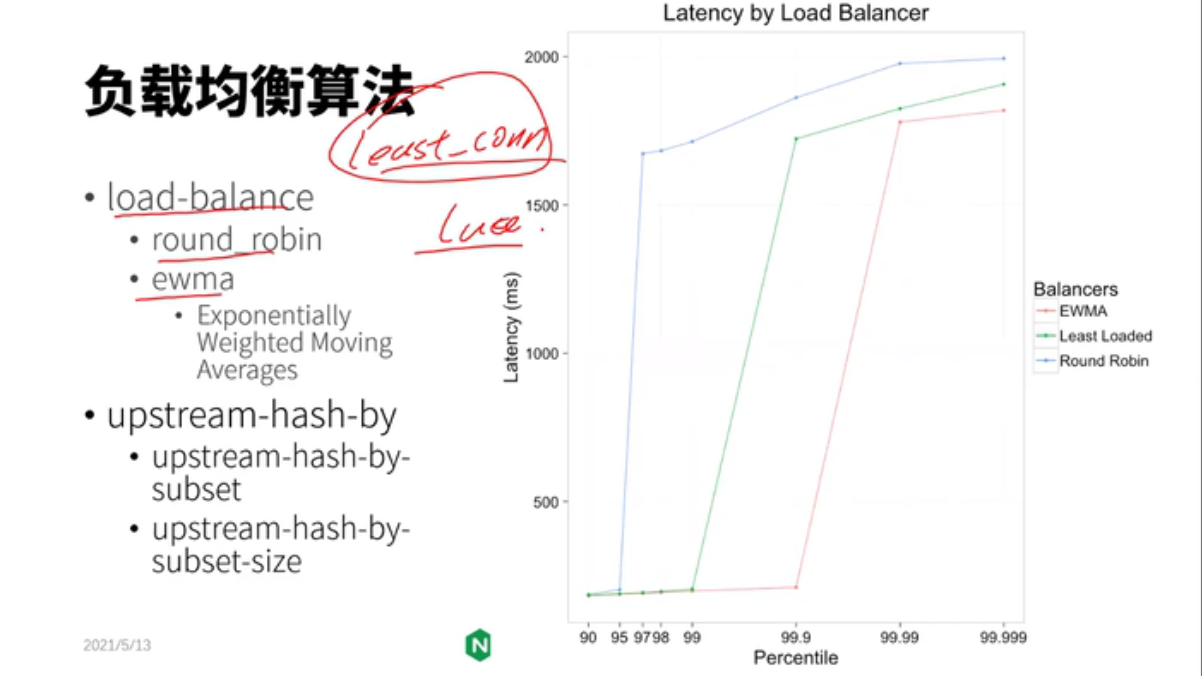

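1) The K8S official controller is also written in Go and bundles OpenResty (Nginx with Lua scripting), whereas the Nginx official controller bundles plain Nginx.

2) The two configure Nginx differently and use different nginx.conf templates. The Nginx official version uses two template files and configures upstreams through include; the K8S official version configures upstreams dynamically with Lua, so an upstream change does not require a reload.

So in scenarios where pods change frequently, the K8S official version can update upstreams without a reload, and the impact is smaller.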

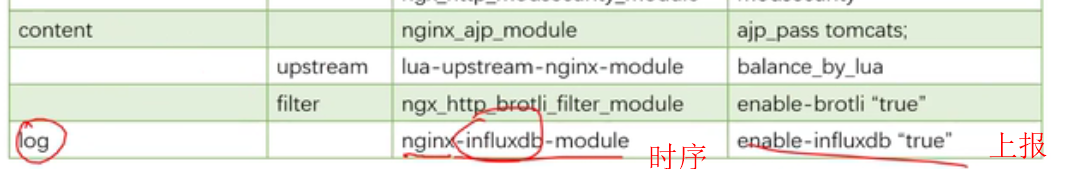

接下来,我们来看K8S官方的Ingress-Nginx-Controller是如何实现Metrics监控数据采集上报的。

ingress-Nginx-Controller的配置分为两部分nginx.conf 和内存

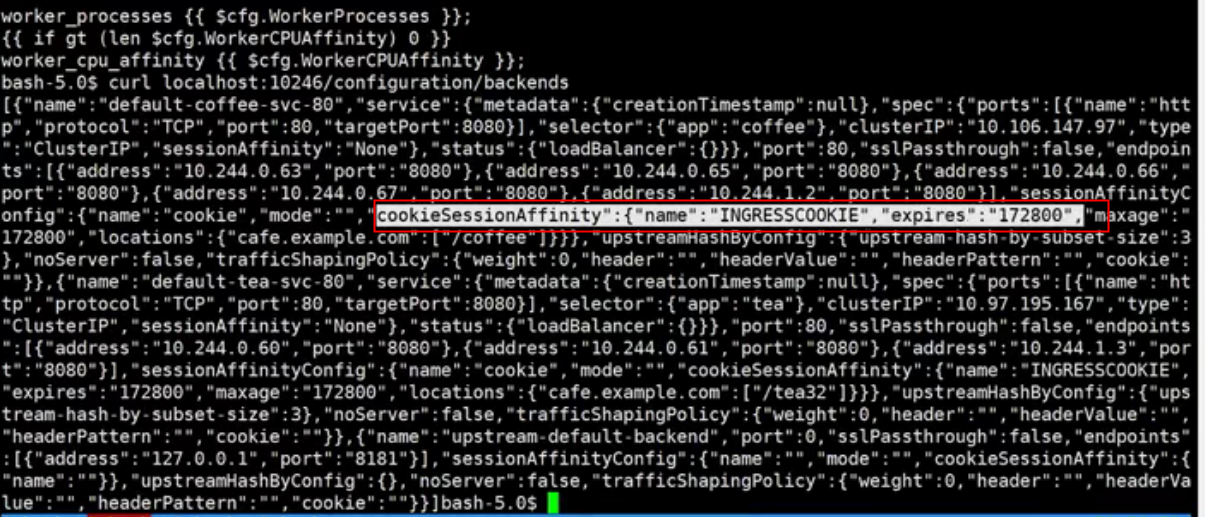

内存中的可以通过curl http://127.0.0.1:10246/configuration/backends访问

    ingress-nginx/rootfs/etc/nginx/template/nginx.tmpl:1392: opentracing off;

    root@ubuntu:~/nginx_ingress# kubectl exec -it ingress-nginx-controller-7478b9dbb5-6qk65 -n ingress-nginx -- curl http://127.0.0.1:10246/configuration/backends
    [{"name":"default-apache-svc-80","service":{"metadata":{"creationTimestamp":null},"spec":{"ports":[{"protocol":"TCP","port":80,"targetPort":80}],"selector":{"app":"apache-app"},"clusterIP":"10.111.63.105","type":"ClusterIP","sessionAffinity":"None"},"status":{"loadBalancer":{}}},"port":80,"sslPassthrough":false,"endpoints":[{"address":"10.244.243.197","port":"80"},{"address":"10.244.41.61","port":"80"}],"sessionAffinityConfig":{"name":"","mode":"","cookieSessionAffinity":{"name":""}},"upstreamHashByConfig":{"upstream-hash-by-subset-size":3},"noServer":false,"trafficShapingPolicy":{"weight":0,"header":"","headerValue":"","headerPattern":"","cookie":""}},
    {"name":"default-nginx-svc-80","service":{"metadata":{"creationTimestamp":null},"spec":{"ports":[{"protocol":"TCP","port":80,"targetPort":80}],"selector":{"app":"nginx-app"},"clusterIP":"10.103.182.145","type":"ClusterIP","sessionAffinity":"None"},"status":{"loadBalancer":{}}},"port":80,"sslPassthrough":false,"endpoints":[{"address":"10.244.243.195","port":"80"},{"address":"10.244.41.58","port":"80"}],"sessionAffinityConfig":{"name":"","mode":"","cookieSessionAffinity":{"name":""}},"upstreamHashByConfig":{"upstream-hash-by-subset-size":3},"noServer":false,"trafficShapingPolicy":{"weight":0,"header":"","headerValue":"","headerPattern":"","cookie":""}},
    {"name":"default-web2-8097","service":{"metadata":{"creationTimestamp":null},"spec":{"ports":[{"protocol":"TCP","port":8097,"targetPort":80}],"selector":{"run":"web2"},"clusterIP":"10.99.87.66","type":"ClusterIP","sessionAffinity":"None"},"status":{"loadBalancer":{}}},"port":8097,"sslPassthrough":false,"endpoints":[{"address":"10.244.41.59","port":"80"}],"sessionAffinityConfig":{"name":"","mode":"","cookieSessionAffinity":{"name":""}},"upstreamHashByConfig":{"upstream-hash-by-subset-size":3},"noServer":false,"trafficShapingPolicy":{"weight":0,"header":"","headerValue":"","headerPattern":"","cookie":""}},
    {"name":"default-web3-8097","service":{"metadata":{"creationTimestamp":null},"spec":{"ports":[{"protocol":"TCP","port":8097,"targetPort":80}],"selector":{"run":"web3"},"clusterIP":"10.107.70.171","type":"ClusterIP","sessionAffinity":"None"},"status":{"loadBalancer":{}}},"port":8097,"sslPassthrough":false,"endpoints":[{"address":"10.244.41.55","port":"80"}],"sessionAffinityConfig":{"name":"","mode":"","cookieSessionAffinity":{"name":""}},"upstreamHashByConfig":{"upstream-hash-by-subset-size":3},"noServer":false,"trafficShapingPolicy":{"weight":0,"header":"","headerValue":"","headerPattern":"","cookie":""}},
    {"name":"upstream-default-backend","port":0,"sslPassthrough":false,"endpoints":[{"address":"127.0.0.1","port":"8181"}],"sessionAffinityConfig":{"name":"","mode":"","cookieSessionAffinity":{"name":""}},"upstreamHashByConfig":{},"noServer":false,"trafficShapingPolicy":{"weight":0,"header":"","headerValue":"","headerPattern":"","cookie":""}}]

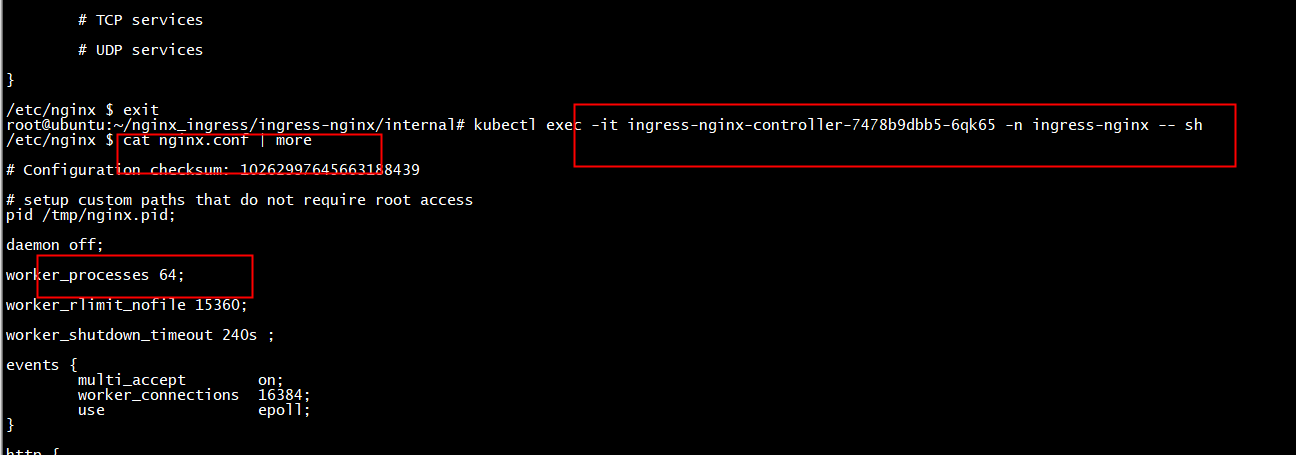

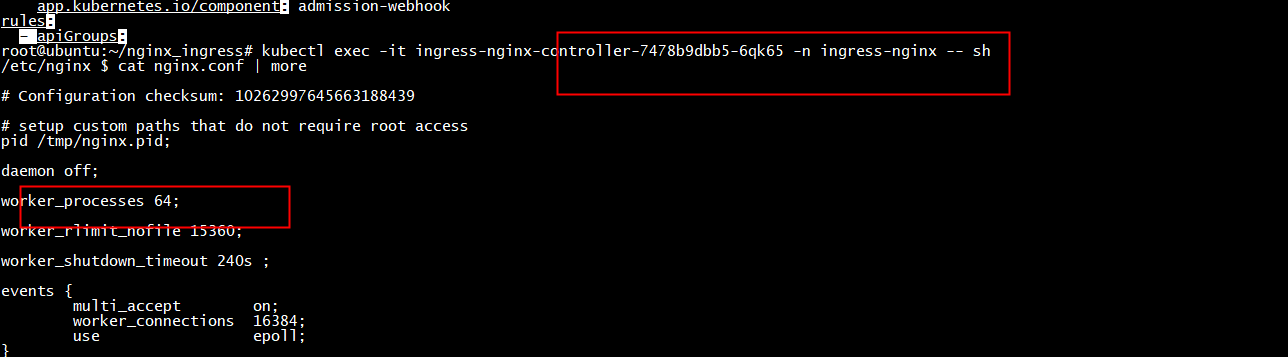

    root@ubuntu:~/nginx_ingress# kubectl exec -it ingress-nginx-controller-7478b9dbb5-6qk65 -n ingress-nginx -- sh
    /etc/nginx $ ls
    fastcgi.conf          fastcgi_params.default  koi-win     mime.types.default  nginx.conf          owasp-modsecurity-crs  template              win-utf
    fastcgi.conf.default  geoip                   lua         modsecurity         nginx.conf.default  scgi_params            uwsgi_params
    fastcgi_params        koi-utf                 mime.types  modules             opentracing.json    scgi_params.default    uwsgi_params.default
    /etc/nginx $ ps -elf
    PID   USER      TIME   COMMAND
        1 www-data   0:00  /usr/bin/dumb-init -- /nginx-ingress-controller --publish-service=ingress-nginx/ingress-nginx-controller --election-id=ingress-controller-leader --ingress-class=nginx --configmap=ingress-nginx/ingress-nginx-controller --validating-web
        6 www-data  25:35  /nginx-ingress-controller --publish-service=ingress-nginx/ingress-nginx-controller --election-id=ingress-controller-leader --ingress-class=nginx --configmap=ingress-nginx/ingress-nginx-controller --validating-webhook=:8443 --validatin
       47 www-data   0:00  nginx: master process /usr/local/nginx/sbin/nginx -c /etc/nginx/nginx.conf
       51 www-data   3:23  nginx: worker process
       52 www-data   3:12  nginx: worker process
       53 www-data   2:36  nginx: worker process
       54 www-data   2:38  nginx: worker process
       55 www-data   2:54  nginx: worker process
       56 www-data   3:15  nginx: worker process
       64 www-data   2:38  nginx: worker process
      102 www-data   2:39  nginx: worker process
      148 www-data   2:56  nginx: worker process
      192 www-data   2:38  nginx: worker process
      232 www-data   2:37  nginx: worker process
      268 www-data   2:54  nginx: worker process
      289 www-data   3:10  nginx: worker process
      324 www-data   2:55  nginx: worker process
      366 www-data   2:36  nginx: worker process
      418 www-data   2:38  nginx: worker process
      443 www-data   2:37  nginx: worker process
      479 www-data   2:38  nginx: worker process
      517 www-data   2:39  nginx: worker process
      541 www-data   2:38  nginx: worker process
      571 www-data   3:08  nginx: worker process
      596 www-data   2:41  nginx: worker process
      619 www-data   2:39  nginx: worker process
      647 www-data   2:39  nginx: worker process
      653 www-data   3:09  nginx: worker process
      684 www-data   2:41  nginx: worker process
      709 www-data   2:40  nginx: worker process
      737 www-data   2:41  nginx: worker process
      771 www-data   2:37  nginx: worker process
      808 www-data   2:38  nginx: worker process
      847 www-data   2:54  nginx: worker process
      882 www-data   3:11  nginx: worker process
      937 www-data   2:39  nginx: worker process
      996 www-data   2:38  nginx: worker process

    root@ubuntu:~/karmada/pkg# kubectl get ingress -o wide
    NAME              CLASS    HOSTS                                    ADDRESS   PORTS   AGE
    example-ingress   <none>   ubuntu.com                                         80      18d
    micro-ingress     <none>   nginx.mydomain.com,apache.mydomain.com             80      18d
    web-ingress       <none>   web.mydomain.com                                   80      18d
    web-ingress-lb    <none>   web3.mydomain.com,web2.mydomain.com                80      18d

    root@cloud:~# kubectl get svc -n ingress-nginx
    NAME                                 TYPE           CLUSTER-IP       EXTERNAL-IP   PORT(S)                      AGE
    ingress-nginx-controller             LoadBalancer   10.109.135.148   <pending>     80:31324/TCP,443:31274/TCP   18d
    ingress-nginx-controller-admission   ClusterIP      10.107.93.85     <none>        443/TCP                      18d
    root@cloud:~# curl -I -H "Host: web2.mydomain.com" http://10.109.135.148
    HTTP/1.1 200 OK
    Date: Tue, 24 Aug 2021 09:58:54 GMT
    Content-Type: text/html
    Content-Length: 612
    Connection: keep-alive
    Last-Modified: Tue, 06 Jul 2021 14:59:17 GMT
    ETag: "60e46fc5-264"
    Accept-Ranges: bytes

    root@cloud:~#

    root@cloud:~# kubectl describe svc ingress-nginx-controller -n ingress-nginx | more
    Name:                     ingress-nginx-controller
    Namespace:                ingress-nginx
    Labels:                   app.kubernetes.io/component=controller
                              app.kubernetes.io/instance=ingress-nginx
                              app.kubernetes.io/managed-by=Helm
                              app.kubernetes.io/name=ingress-nginx
                              app.kubernetes.io/version=0.44.0
                              helm.sh/chart=ingress-nginx-3.23.0
    Annotations:              <none>
    Selector:                 app.kubernetes.io/component=controller,app.kubernetes.io/instance=ingress-nginx,app.kubernetes.io/name=ingress-nginx
    Type:                     LoadBalancer
    IP:                       10.109.135.148
    Port:                     http  80/TCP
    TargetPort:               http/TCP
    NodePort:                 http  31324/TCP
    Endpoints:                10.244.41.54:80
    Port:                     https  443/TCP
    TargetPort:               https/TCP
    NodePort:                 https  31274/TCP
    Endpoints:                10.244.41.54:443
    Session Affinity:         None
    External Traffic Policy:  Local
    HealthCheck NodePort:     32469
    Events:                   <none>

    root@cloud:~# kubectl get pod -n ingress-nginx
    NAME                                        READY   STATUS    RESTARTS   AGE
    ingress-nginx-controller-7478b9dbb5-6qk65   1/1     Running   6          18d
    root@cloud:~# kubectl get pod -n ingress-nginx -o wide
    NAME                                        READY   STATUS    RESTARTS   AGE   IP             NODE    NOMINATED NODE   READINESS GATES
    ingress-nginx-controller-7478b9dbb5-6qk65   1/1     Running   6          18d   10.244.41.54   cloud   <none>           <none>
    root@cloud:~# curl -I -H "Host: web2.mydomain.com" http://10.244.41.54
    HTTP/1.1 200 OK
    Date: Tue, 24 Aug 2021 10:03:08 GMT
    Content-Type: text/html
    Content-Length: 612
    Connection: keep-alive
    Last-Modified: Tue, 06 Jul 2021 14:59:17 GMT
    ETag: "60e46fc5-264"
    Accept-Ranges: bytes

    root@cloud:~#

    root@ubuntu:~# kubectl get pods -A -o wide | grep nginx
    default         nginx-app-56b5bb67cc-mkfct                  1/1   Running   3   15d   10.244.41.58     cloud    <none>   <none>
    default         nginx-app-56b5bb67cc-s9jtk                  1/1   Running   0   19d   10.244.243.195   ubuntu   <none>   <none>
    default         nginx-karmada-f89759699-qcztn               1/1   Running   0   18h   10.244.41.51     cloud    <none>   <none>
    default         nginx-karmada-f89759699-vn47h               1/1   Running   0   18h   10.244.41.52     cloud    <none>   <none>
    ingress-nginx   ingress-nginx-controller-7478b9dbb5-6qk65   1/1   Running   6   19d   10.244.41.54     cloud    <none>   <none>
    root@ubuntu:~# netstat -pan | grep 10254
    root@ubuntu:~# kubectl exec -it ingress-nginx-controller-7478b9dbb5-6qk65 -n ingress-nginx -- sh
    /etc/nginx $ netstat -pan | grep 10254
    netstat: can't scan /proc - are you root?
    tcp   0   0 :::10254                    :::*                       LISTEN      -
    tcp   0   0 ::ffff:10.244.41.54:10254   ::ffff:10.10.16.47:51780   TIME_WAIT   -
    tcp   0   0 ::ffff:10.244.41.54:10254   ::ffff:10.10.16.47:64858   TIME_WAIT   -
    tcp   0   0 ::ffff:10.244.41.54:10254   ::ffff:10.10.16.47:60173   TIME_WAIT   -
    tcp   0   0 ::ffff:10.244.41.54:10254   ::ffff:10.10.16.47:22861   TIME_WAIT   -
    tcp   0   0 ::ffff:10.244.41.54:10254   ::ffff:10.10.16.47:7885    TIME_WAIT   -
    tcp   0   0 ::ffff:10.244.41.54:10254   ::ffff:10.10.16.47:20247   TIME_WAIT   -
    tcp   0   0 ::ffff:10.244.41.54:10254   ::ffff:10.10.16.47:8397    TIME_WAIT   -
    tcp   0   0 ::ffff:10.244.41.54:10254   ::ffff:10.10.16.47:16263   TIME_WAIT   -
    tcp   0   0 ::ffff:10.244.41.54:10254   ::ffff:10.10.16.47:49831   TIME_WAIT   -
    tcp   0   0 ::ffff:10.244.41.54:10254   ::ffff:10.10.16.47:21393   TIME_WAIT   -
    tcp   0   0 ::ffff:10.244.41.54:10254   ::ffff:10.10.16.47:46450   TIME_WAIT   -
    tcp   0   0 ::ffff:10.244.41.54:10254   ::ffff:10.10.16.47:25988   TIME_WAIT   -
    /etc/nginx $
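Port 10254 in the netstat output above is, by default, where the controller serves its health check and Prometheus metrics endpoints, which would explain the repeated short-lived connections from a single peer. As a rough sketch of what a scraper does with the response, the Go snippet below sums sample values per metric name from Prometheus text-format lines. The helper name `sumByName` and the sample values are made up for illustration, and the parser assumes lines of the form `name{labels} value` without a trailing timestamp.

```go
package main

import (
	"bufio"
	"fmt"
	"strconv"
	"strings"
)

// sumByName adds up sample values per metric name, ignoring labels.
// Assumption: each sample line is `name{labels} value` with no timestamp.
func sumByName(text string) map[string]float64 {
	totals := make(map[string]float64)
	sc := bufio.NewScanner(strings.NewReader(text))
	for sc.Scan() {
		line := strings.TrimSpace(sc.Text())
		// Skip blank lines and the # HELP / # TYPE comment lines.
		if line == "" || strings.HasPrefix(line, "#") {
			continue
		}
		// The value is the last space-separated field.
		i := strings.LastIndex(line, " ")
		if i < 0 {
			continue
		}
		v, err := strconv.ParseFloat(line[i+1:], 64)
		if err != nil {
			continue
		}
		// Drop the label set, if any, to get the bare metric name.
		name := line[:i]
		if j := strings.Index(name, "{"); j >= 0 {
			name = name[:j]
		}
		totals[name] += v
	}
	return totals
}

func main() {
	// Made-up sample; real data would come from the /metrics endpoint on port 10254.
	sample := `# TYPE nginx_ingress_controller_requests counter
nginx_ingress_controller_requests{status="200"} 12
nginx_ingress_controller_requests{status="404"} 3
`
	fmt.Println(sumByName(sample)["nginx_ingress_controller_requests"])
}
```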

curl http://127.0.0.1:10246/configuration/backends -o backend.json
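The saved backend.json is easier to read once it is reduced to a map of backend name to endpoints. A minimal sketch, assuming the JSON shape seen in the dump above (a top-level array whose items have `name` and `endpoints`, with `address` and `port` as strings); the `Backend` and `Endpoint` types and the `summarize` helper are illustrative names, not the controller's own types.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Endpoint mirrors one entry of the "endpoints" array in the dump.
// The port is serialized as a string there.
type Endpoint struct {
	Address string `json:"address"`
	Port    string `json:"port"`
}

// Backend keeps only the fields this sketch needs; all others are ignored.
type Backend struct {
	Name      string     `json:"name"`
	Endpoints []Endpoint `json:"endpoints"`
}

// summarize maps each backend name to its "address:port" endpoints.
func summarize(raw []byte) (map[string][]string, error) {
	var backends []Backend
	if err := json.Unmarshal(raw, &backends); err != nil {
		return nil, err
	}
	out := make(map[string][]string, len(backends))
	for _, b := range backends {
		for _, e := range b.Endpoints {
			out[b.Name] = append(out[b.Name], e.Address+":"+e.Port)
		}
	}
	return out, nil
}

func main() {
	// Trimmed sample from the dump; in practice, read backend.json with os.ReadFile.
	sample := []byte(`[{"name":"default-web2-8097","endpoints":[{"address":"10.244.41.59","port":"80"}]}]`)
	m, err := summarize(sample)
	if err != nil {
		fmt.Println("parse error:", err)
		return
	}
	fmt.Println(m["default-web2-8097"])
}
```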

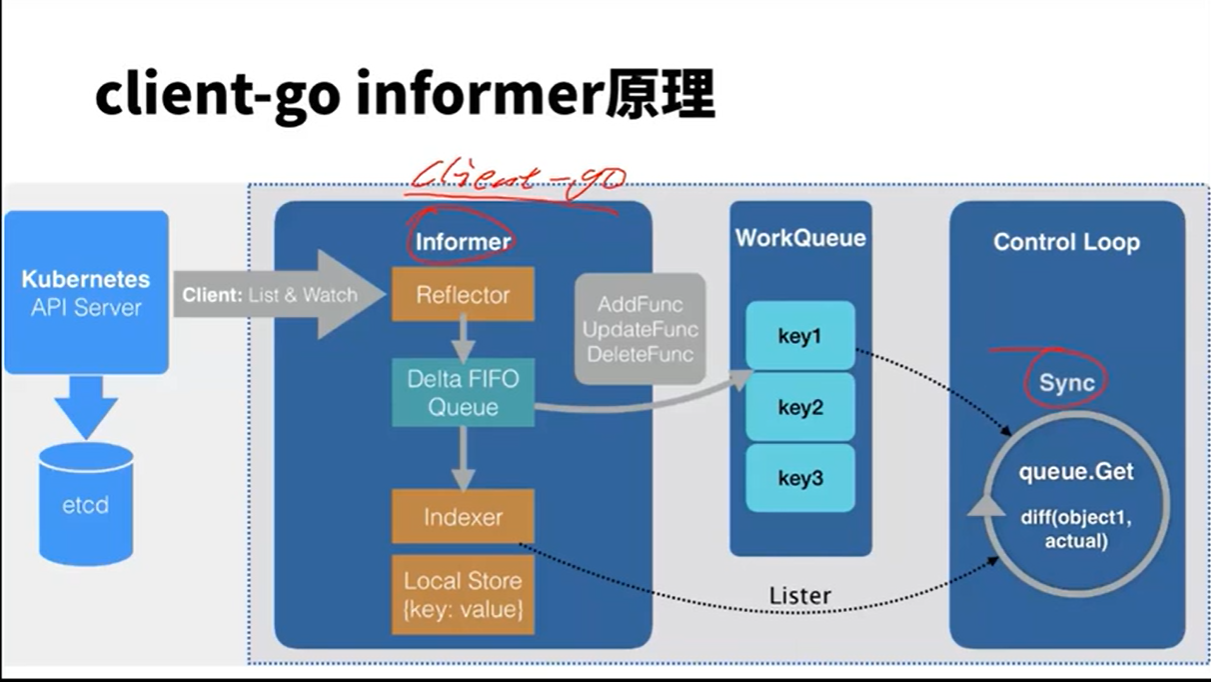

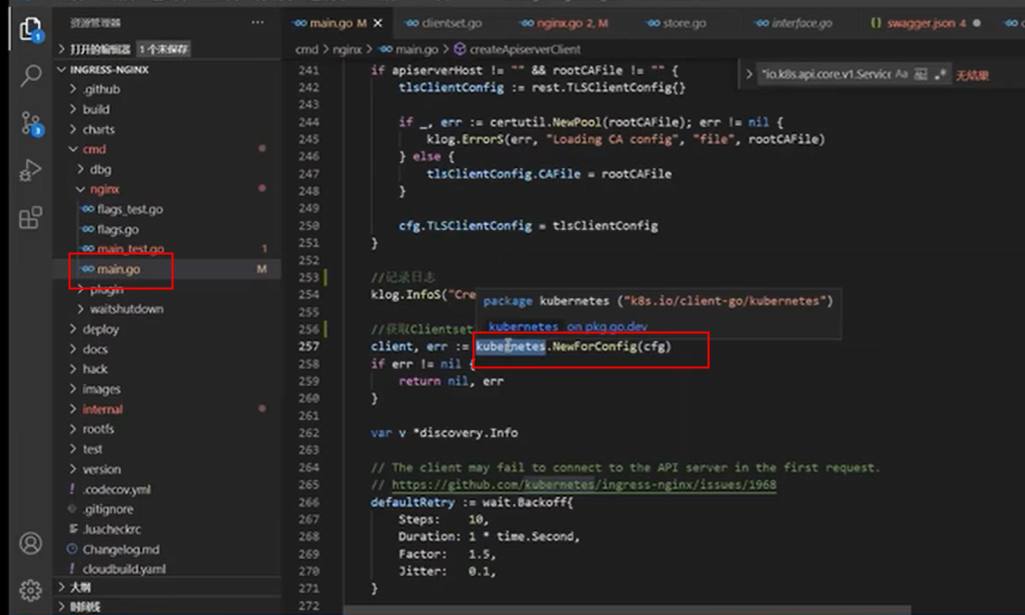

updateCh

ingress/controller/nginx.go

    for {
        select {
        case err := <-n.ngxErrCh:
            if n.isShuttingDown {
                return
            }

            // if the nginx master process dies, the workers continue to process requests
            // until the failure of the configured livenessProbe and restart of the pod.
            if process.IsRespawnIfRequired(err) {
                return
            }

        case event := <-n.updateCh.Out():
            if n.isShuttingDown {
                break
            }

            if evt, ok := event.(store.Event); ok {
                klog.V(3).InfoS("Event received", "type", evt.Type, "object", evt.Obj)
                if evt.Type == store.ConfigurationEvent {
                    // TODO: is this necessary? Consider removing this special case
                    n.syncQueue.EnqueueTask(task.GetDummyObject("configmap-change"))
                    continue
                }

                n.syncQueue.EnqueueSkippableTask(evt.Obj)
            } else {
                klog.Warningf("Unexpected event type received %T", event)
            }
        case <-n.stopCh:
            return
        }
    }
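The shape of this loop (one goroutine selecting over an event channel and a stop channel, then turning events into queue tasks) can be tried in isolation. The sketch below is a simplified stand-in, not the controller's code: it uses a plain Go channel instead of `n.updateCh.Out()`, a slice instead of the sync queue, and the `Event`, `EventType`, and `dispatch` names are hypothetical.

```go
package main

import "fmt"

// EventType and Event are simplified stand-ins for the controller's store.Event.
type EventType string

const (
	ConfigurationEvent EventType = "CONFIGURATION"
	UpdateEvent        EventType = "UPDATE"
)

type Event struct {
	Type EventType
	Obj  string
}

// dispatch drains events until the events channel or the stop channel is closed
// and returns the tasks in the order they were enqueued.
func dispatch(events <-chan Event, stop <-chan struct{}) []string {
	var queue []string
	for {
		select {
		case evt, ok := <-events:
			if !ok {
				return queue
			}
			if evt.Type == ConfigurationEvent {
				// A ConfigMap change becomes a fixed placeholder task.
				queue = append(queue, "configmap-change")
				continue
			}
			queue = append(queue, evt.Obj)
		case <-stop:
			return queue
		}
	}
}

func main() {
	events := make(chan Event, 2)
	events <- Event{Type: UpdateEvent, Obj: "default/web-ingress"}
	events <- Event{Type: ConfigurationEvent}
	close(events)
	fmt.Println(dispatch(events, make(chan struct{})))
}
```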

syncQueue

    // Start starts the loop to keep the status in sync
    func (s statusSync) Run(stopCh chan struct{}) {
        go s.syncQueue.Run(time.Second, stopCh)

        // trigger initial sync
        s.syncQueue.EnqueueTask(task.GetDummyObject("sync status"))

        // when this instance is the leader we need to enqueue
        // an item to trigger the update of the Ingress status.
        wait.PollUntil(time.Duration(UpdateInterval)*time.Second, func() (bool, error) {
            s.syncQueue.EnqueueTask(task.GetDummyObject("sync status"))
            return false, nil
        }, stopCh)
    }

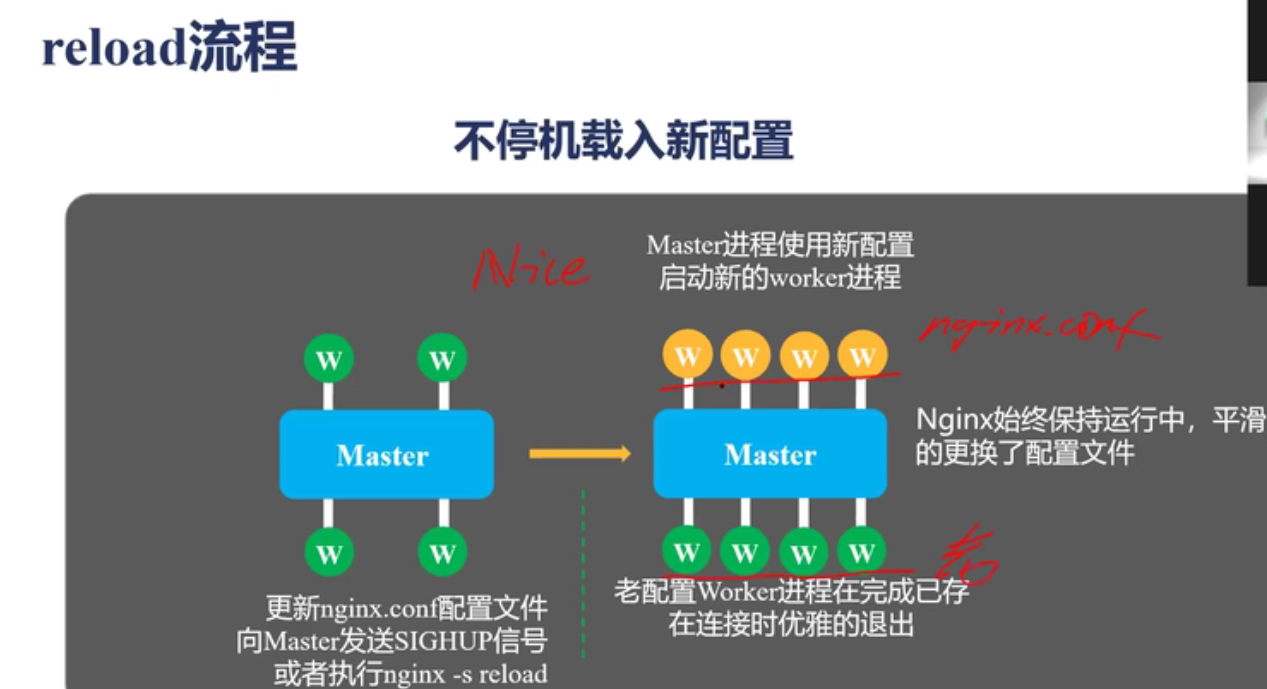

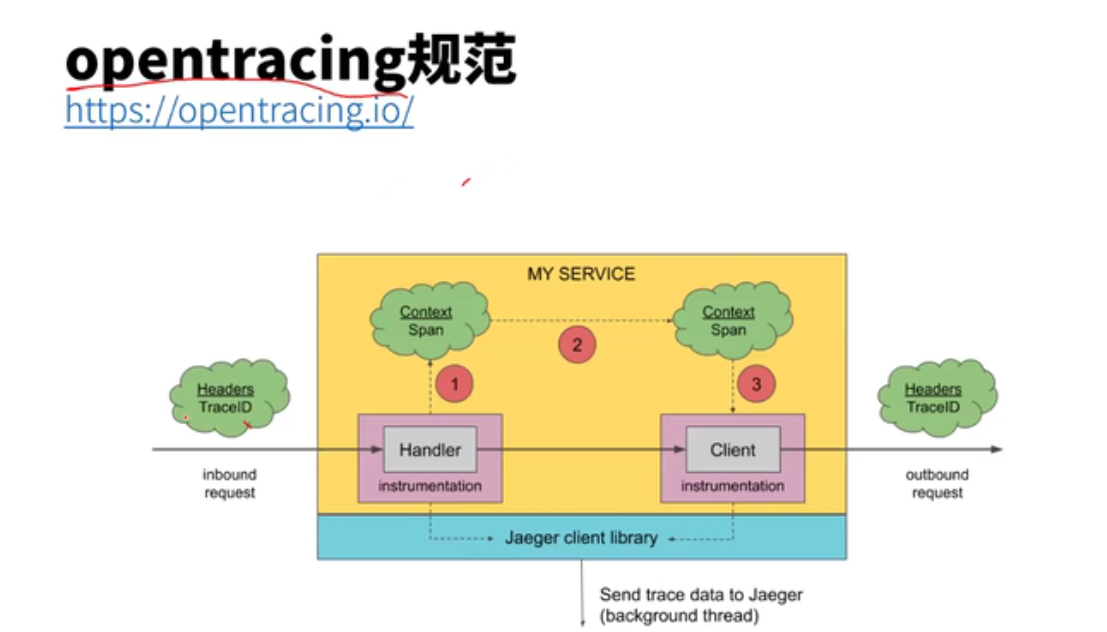

reload

    // OnUpdate is called by the synchronization loop whenever configuration
    // changes were detected. The received backend Configuration is merged with the
    // configuration ConfigMap before generating the final configuration file.
    // Returns nil in case the backend was successfully reloaded.
    func (n *NGINXController) OnUpdate(ingressCfg ingress.Configuration) error {
        cfg := n.store.GetBackendConfiguration()
        cfg.Resolver = n.resolver

        content, err := n.generateTemplate(cfg, ingressCfg)
        if err != nil {
            return err
        }

        err = createOpentracingCfg(cfg)
        if err != nil {
            return err
        }

        err = n.testTemplate(content)
        if err != nil {
            return err
        }

        if klog.V(2).Enabled() {
            src, _ := ioutil.ReadFile(cfgPath)
            if !bytes.Equal(src, content) {
                tmpfile, err := ioutil.TempFile("", "new-nginx-cfg")
                if err != nil {
                    return err
                }
                defer tmpfile.Close()
                err = ioutil.WriteFile(tmpfile.Name(), content, file.ReadWriteByUser)
                if err != nil {
                    return err
                }

                diffOutput, err := exec.Command("diff", "-I", "'# Configuration.*'", "-u", cfgPath, tmpfile.Name()).CombinedOutput()
                if err != nil {
                    if exitError, ok := err.(*exec.ExitError); ok {
                        ws := exitError.Sys().(syscall.WaitStatus)
                        if ws.ExitStatus() == 2 {
                            klog.Warningf("Failed to executing diff command: %v", err)
                        }
                    }
                }

                klog.InfoS("NGINX configuration change", "diff", string(diffOutput))

                // we do not defer the deletion of temp files in order
                // to keep them around for inspection in case of error
                os.Remove(tmpfile.Name())
            }
        }

        err = ioutil.WriteFile(cfgPath, content, file.ReadWriteByUser)
        if err != nil {
            return err
        }

        o, err := n.command.ExecCommand("-s", "reload").CombinedOutput()
        if err != nil {
            return fmt.Errorf("%v %v", err, string(o))
        }

        return nil
    }

syncIngress

    // syncIngress collects all the pieces required to assemble the NGINX
    // configuration file and passes the resulting data structures to the backend
    // (OnUpdate) when a reload is deemed necessary.
    func (n *NGINXController) syncIngress(interface{}) error {
        // fetch the latest configuration
        ....

        // build the nginx configuration
        pcfg := &ingress.Configuration{
            Backends:              upstreams,
            Servers:               servers,
            PassthroughBackends:   passUpstreams,
            BackendConfigChecksum: n.store.GetBackendConfiguration().Checksum,
        }
        ...

        // if a reload cannot be avoided, reload to apply the configuration
        if !n.IsDynamicConfigurationEnough(pcfg) {
            ...
            err := n.OnUpdate(*pcfg)
            ...
        }
        ...

        // update the configuration dynamically
        err := wait.ExponentialBackoff(retry, func() (bool, error) {
            err := configureDynamically(pcfg, n.cfg.ListenPorts.Status, n.cfg.DynamicCertificatesEnabled)
            ...
        })
        ...
    }
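The key decision in syncIngress is whether the difference between the running and the desired configuration is limited to what Lua can change at runtime. One way to express that check, in the spirit of `IsDynamicConfigurationEnough` but not copied from it, is to blank out the dynamic part on both sides and compare what is left. The `Config` type and `needsReload` function below are hypothetical simplifications.

```go
package main

import (
	"fmt"
	"reflect"
)

// Config is a toy model of the generated configuration.
type Config struct {
	Servers  []string            // rendered into nginx.conf; a change needs a reload
	Backends map[string][]string // upstream name -> endpoints; updated dynamically
}

// needsReload reports whether anything other than Backends differs.
func needsReload(running, desired Config) bool {
	// Work on copies so the callers' values are left untouched.
	a, b := running, desired
	a.Backends, b.Backends = nil, nil
	return !reflect.DeepEqual(a, b)
}

func main() {
	running := Config{
		Servers:  []string{"web2.mydomain.com"},
		Backends: map[string][]string{"default-web2-8097": {"10.244.41.59:80"}},
	}

	// Only the endpoints changed (a pod was rescheduled): no reload required.
	podMoved := Config{
		Servers:  []string{"web2.mydomain.com"},
		Backends: map[string][]string{"default-web2-8097": {"10.244.41.60:80"}},
	}
	fmt.Println(needsReload(running, podMoved)) // false

	// A new host was added: nginx.conf changes, so a reload is required.
	hostAdded := Config{
		Servers:  []string{"web2.mydomain.com", "web3.mydomain.com"},
		Backends: running.Backends,
	}
	fmt.Println(needsReload(running, hostAdded)) // true
}
```

Note that `reflect.DeepEqual` treats a nil slice and an empty slice as different, so a real comparison would need a purpose-built equality method.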

nginx_request_exporter collects and parses Nginx access logs over the syslog protocol to compute metrics about HTTP requests. nginx-prometheus-shiny-exporter is similar to nginx_request_exporter and also uses the syslog protocol to collect access logs.
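The core of such an exporter is turning each access log line into a counter increment. The sketch below shows only that step and leaves out the syslog transport. It assumes the common "combined" log format, where the status code follows the quoted request line; the exporters named above may expect a different, configurable format, and `countByStatus` is an illustrative name.

```go
package main

import (
	"fmt"
	"regexp"
)

// statusRe captures the three-digit status that follows the closing quote
// of the request line, e.g. `"GET / HTTP/1.1" 200 612`.
var statusRe = regexp.MustCompile(`" (\d{3}) `)

// countByStatus counts access log lines per HTTP status code.
// Lines without a recognizable status are skipped.
func countByStatus(lines []string) map[string]int {
	counts := make(map[string]int)
	for _, line := range lines {
		m := statusRe.FindStringSubmatch(line)
		if m == nil {
			continue
		}
		counts[m[1]]++
	}
	return counts
}

func main() {
	// Made-up log lines in combined format.
	lines := []string{
		`10.10.16.47 - - [24/Aug/2021:09:58:54 +0000] "HEAD / HTTP/1.1" 200 0 "-" "curl/7.68.0"`,
		`10.10.16.47 - - [24/Aug/2021:09:58:55 +0000] "GET /missing HTTP/1.1" 404 153 "-" "curl/7.68.0"`,
	}
	c := countByStatus(lines)
	fmt.Println(c["200"], c["404"])
}
```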

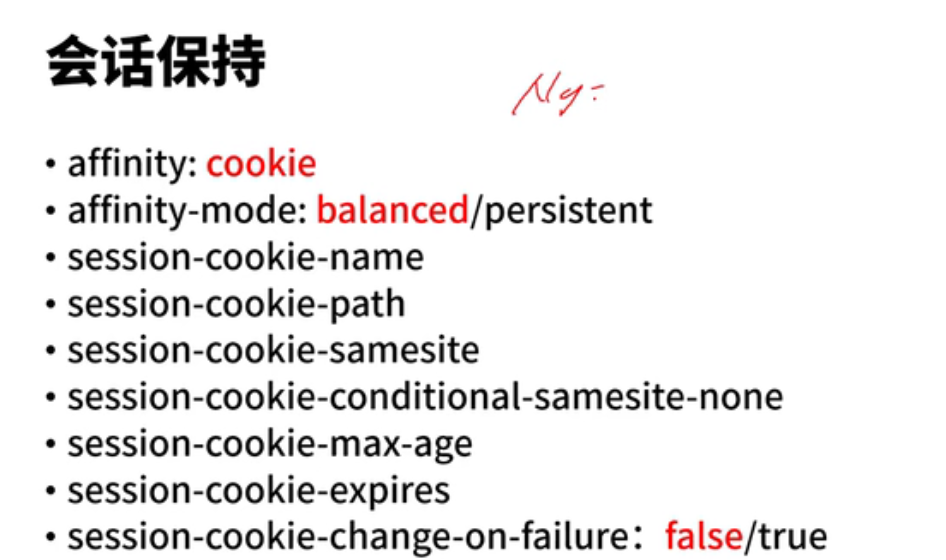

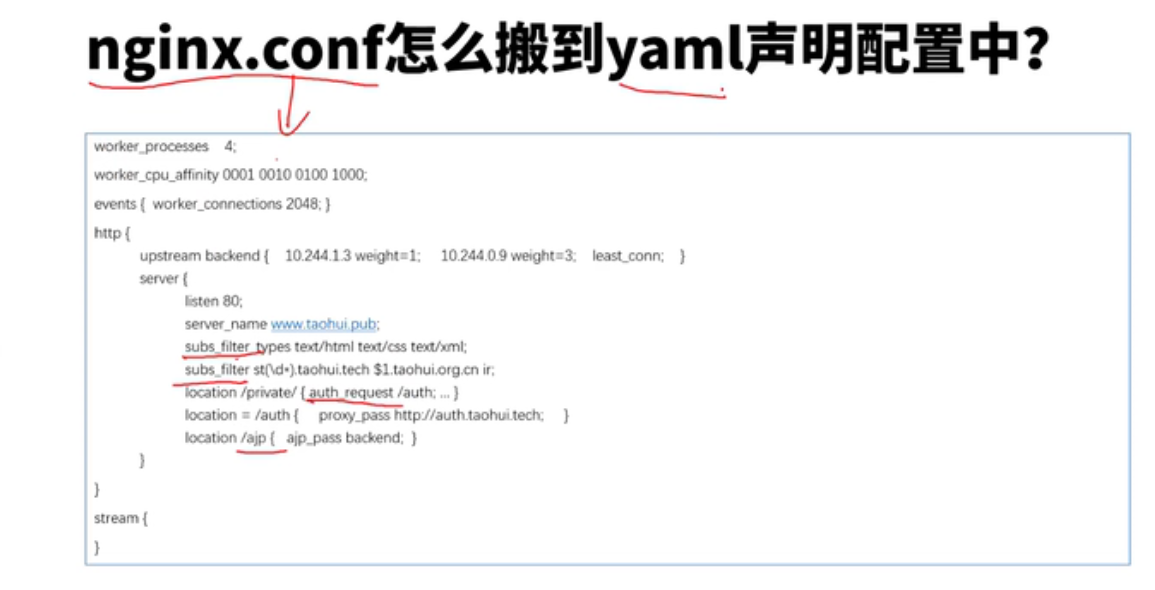

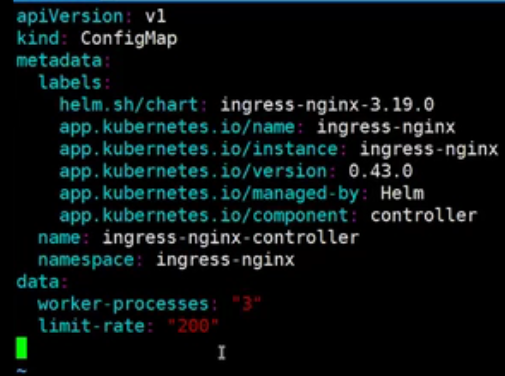

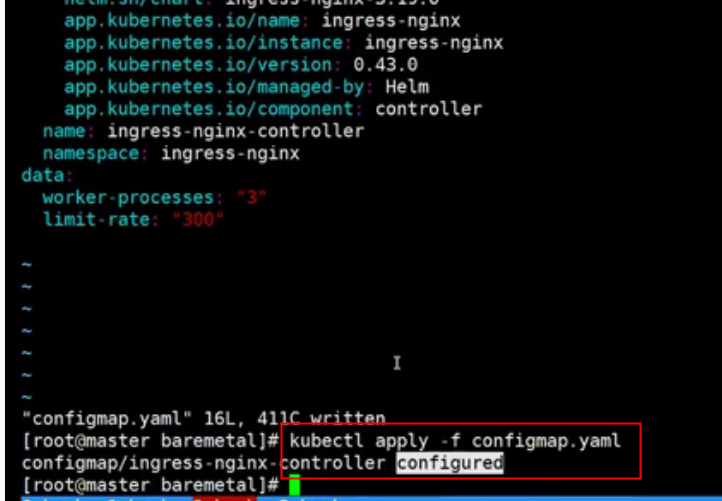

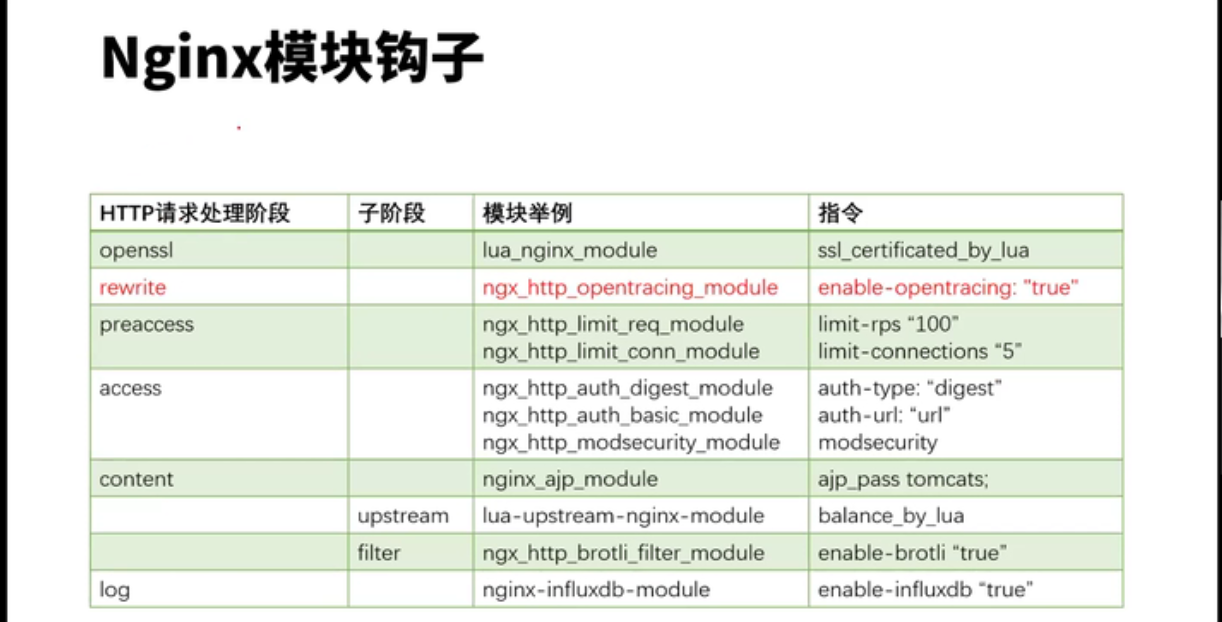

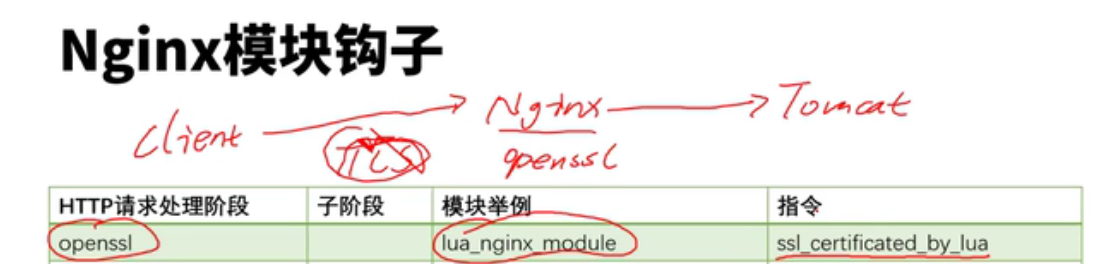

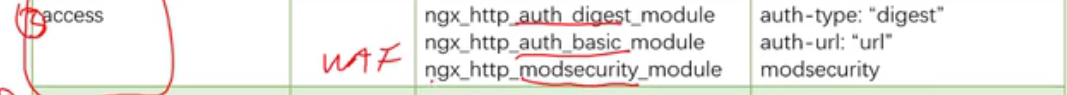

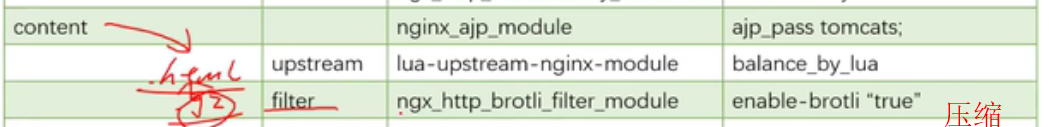

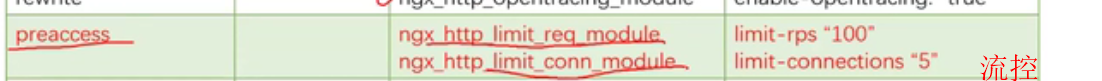

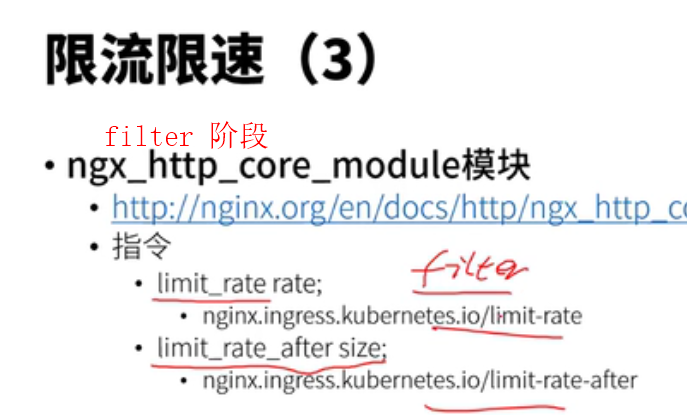

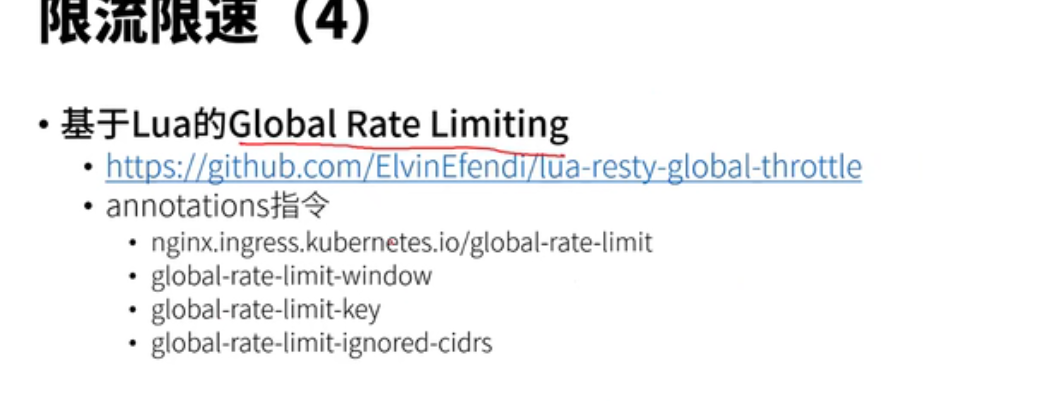

nginx ingress controller configmap annotation template

--election-id=ingress-controller-leader --ingress-class=nginx --configmap=ingress-nginx/ingress-nginx-controller --validating-webhook=:8443

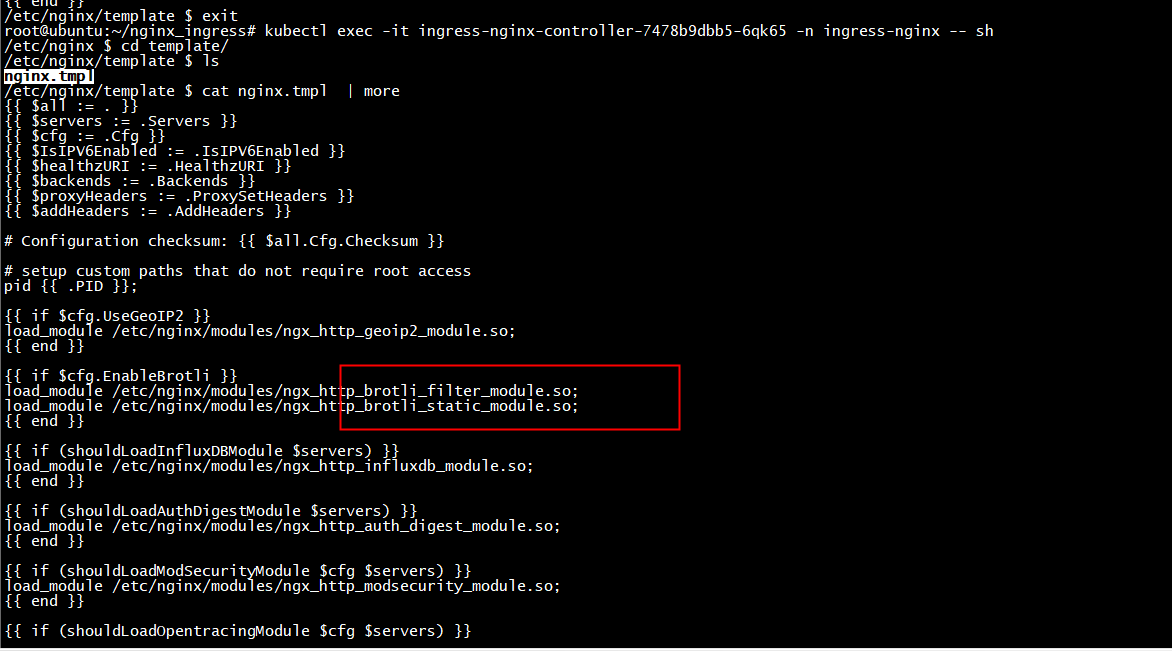

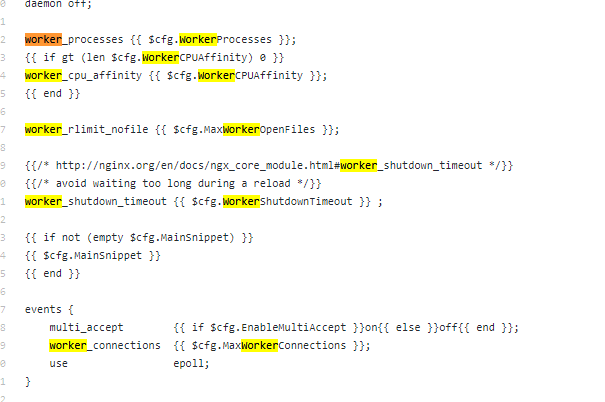

template

ingress-nginx/rootfs/etc/nginx/template/nginx.tmpl

The Lua module and the C module conflict, so these settings are written to shared memory.

They are written by Lua, so no reload is needed.