Prerequisites:

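Make sure that the hosts file on every OpenStack node contains the hostnames of all Ceph cluster nodes, and that the hosts file on every Ceph cluster node contains the hostnames of all OpenStack nodes.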
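One way to check this on each node is to confirm that every hostname resolves. The sketch below defines a hypothetical helper, `missing_hosts`, which is not part of any package. It uses `getent hosts`, which resolves names the same way the system does (through nsswitch.conf, normally /etc/hosts first), and prints each name that does not resolve.

```shell
# Hypothetical helper: print every hostname given as an argument that does not resolve.
missing_hosts() {
  local host
  for host in "$@"; do
    # getent looks the name up through nsswitch.conf (normally /etc/hosts, then DNS)
    getent hosts "$host" > /dev/null || echo "$host"
  done
}
```

For example, `missing_hosts controller compute storage ceph-node1` prints nothing when every name resolves. Here `ceph-node1` is a placeholder for your own Ceph node names.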

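I. Use RBD to provide storage for the following data: Glance images, Nova instance disks and Cinder volumes (one pool each: images, vms and volumes, created in step 5).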

II. Implementation steps:

(1) The client also needs the cent user:

```shell
useradd cent && echo "123" | passwd --stdin cent
echo -e 'Defaults:cent !requiretty\ncent ALL = (root) NOPASSWD:ALL' | tee /etc/sudoers.d/ceph
chmod 440 /etc/sudoers.d/ceph
```
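A syntax error in a file under /etc/sudoers.d can stop sudo from working at all, so it may be worth validating the file immediately. This sketch uses a hypothetical function name. It checks the 440 mode set above with GNU `stat`, then runs `visudo -c -f`, which only parses the given file and does not install anything.

```shell
# Hypothetical helper: validate the sudoers drop-in created in step (1).
check_ceph_sudoers() {
  local file="${1:-/etc/sudoers.d/ceph}"
  # GNU stat: %a prints the octal permission bits, e.g. 440
  if [ "$(stat -c %a "$file")" != "440" ]; then
    echo "unexpected mode on $file" >&2
    return 1
  fi
  # -c: check syntax only, -f: the file to check
  visudo -c -f "$file"
}
```

Run `check_ceph_sudoers` on each client after step (1). A non-zero exit status means the file needs fixing.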

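(2) On the OpenStack nodes that will use Ceph (for example compute-node and storage-node), install the downloaded packages:

```shell
yum localinstall ./* -y
```

Alternatively, install the client packages on every node that needs to access the Ceph cluster:

```shell
yum install -y python-rbd ceph-common
```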

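(3) On the deployment node, install Ceph on the OpenStack node and push the cluster configuration and admin keyring to it:

```shell
ceph-deploy install controller
ceph-deploy admin controller
```

(4) On the client, make the admin keyring readable:

```shell
sudo chmod 644 /etc/ceph/ceph.client.admin.keyring
```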

(5) Create the storage pools images, vms and volumes:

```
[root@controller ~]# ceph osd pool create images 128
pool 'images' created
[root@controller ~]# ceph osd pool create vms 128
pool 'vms' created
[root@controller ~]# ceph osd pool create volumes 128
pool 'volumes' created
```

(6) List the pools:

```
ceph osd lspools
0 rbd,1 images,2 vms,3 volumes,
```
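The three pool-creation commands from step (5) can also be wrapped in a loop. In this sketch, `create_openstack_pools` is a hypothetical helper, and pg_num defaults to the 128 used above; size it for your own cluster. The `rbd pool init` line follows the Ceph documentation for Luminous and later releases, where new pools should be initialised for RBD before use. That subcommand does not exist on older releases, so drop the line there.

```shell
# Hypothetical helper: create the images, vms and volumes pools in one go.
# $1 = pg_num (default 128, as in step 5)
create_openstack_pools() {
  local pg_num="${1:-128}" pool
  for pool in images vms volumes; do
    ceph osd pool create "$pool" "$pg_num" || return 1
    # Luminous and later: initialise the pool for RBD; drop this line on older releases
    rbd pool init "$pool" || return 1
  done
}
```

Usage: `create_openstack_pools 128`, then check the result with `ceph osd lspools` as above.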

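If cephx authentication is enabled, you need to create new users for Nova/Cinder and for Glance.

(7) Create the glance and cinder users. Because this is an all-in-one environment, it is enough to create them on the deployment node. These are local Linux accounts, which the chown in step 10 needs; the Ceph users themselves are created in step 8. If the Glance and Cinder packages are already installed, the accounts usually exist already, and useradd will report that instead of creating them.

```shell
useradd glance
useradd cinder
```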

(8) Create a Ceph user and keyring for each of them:

```
[root@controller ceph]# ceph auth get-or-create client.glance mon 'allow r' osd 'allow class-read object_prefix rbd_children, allow rwx pool=images'
[client.glance]
key = AQCZggNd3TrTDBAAFgWrEAXhXt7xv4xcnn0eWA==
[root@controller ceph]# ceph auth get-or-create client.cinder mon 'allow r' osd 'allow class-read object_prefix rbd_children, allow rwx pool=volumes, allow rwx pool=vms, allow rx pool=images'
[client.cinder]
key = AQCtggNdHrFuHhAAsI/rt4cVujt8QEYZOODRFw==
```

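Each command prints the keyring of the corresponding user (client.glance / client.cinder). The keyring is the credential that other nodes use to access the cluster.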

(9) Export the keyrings and copy them to the nodes that need them. Here they are exported on the controller node and copied to the compute and storage nodes:

```
[root@controller ceph]# ceph auth get-or-create client.glance > /etc/ceph/ceph.client.glance.keyring
[root@controller ceph]# ceph auth get-or-create client.cinder > /etc/ceph/ceph.client.cinder.keyring
[root@controller ceph]# scp ceph.client.glance.keyring ceph.client.cinder.keyring compute:/etc/ceph/
ceph.client.glance.keyring 100% 64 15.4KB/s 00:00
ceph.client.cinder.keyring 100% 64 31.4KB/s 00:00
[root@controller ceph]# scp ceph.client.glance.keyring ceph.client.cinder.keyring storage:/etc/ceph/
ceph.client.glance.keyring 100% 64 14.1KB/s 00:00
ceph.client.cinder.keyring 100% 64 28.0KB/s 00:00
```
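Step 9 can also be scripted. In this sketch, `distribute_keyrings` is a hypothetical helper. It writes both keyrings into the directory named by CEPH_DIR (default /etc/ceph, as above) and then copies them to each node given as an argument. The node names compute and storage are the ones used in this walkthrough.

```shell
# Hypothetical helper for step 9: export the glance/cinder keyrings and copy them out.
# CEPH_DIR overrides the local directory (default /etc/ceph); arguments are target nodes.
distribute_keyrings() {
  local dir="${CEPH_DIR:-/etc/ceph}" user node
  for user in glance cinder; do
    # Without caps, get-or-create returns the keyring of the user created in step 8
    ceph auth get-or-create "client.$user" > "$dir/ceph.client.$user.keyring" || return 1
  done
  for node in "$@"; do
    scp "$dir/ceph.client.glance.keyring" "$dir/ceph.client.cinder.keyring" "$node:/etc/ceph/" || return 1
  done
}
```

Usage on the controller: `distribute_keyrings compute storage`.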

(10) Change the owner and group of the keyring files; otherwise the services do not have permission to read them:

```
[root@controller ceph]# chown glance:glance /etc/ceph/ceph.client.glance.keyring
[root@controller ceph]# chown cinder:cinder /etc/ceph/ceph.client.cinder.keyring
```

Note: Ceph must already be installed on the controller node, which means the /etc/ceph directory must exist. Afterwards, the keyring ceph.client.glance.keyring can be seen in /etc/ceph on the controller.

Hosts that run glance-api, cinder-volume, nova-compute or cinder-backup act as Ceph clients.

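(11) Give libvirt access to the client.cinder key. This only needs to be done on the nova-compute node.

Generate a UUID and write it to /etc/ceph/uuid:

```
[root@compute ceph]# uuidgen
3e3314c9-bfb0-439e-8764-61896c621b7e
[root@compute ceph]# vim uuid
```

Content of /etc/ceph/uuid:

```
3e3314c9-bfb0-439e-8764-61896c621b7e
```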

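In /etc/ceph, create the secret file secret.xml with the following content. The `<uuid>` must be the UUID generated above, because the same value is passed to `virsh secret-set-value` below:

```shell
cat > secret.xml <<EOF
<secret ephemeral='no' private='no'>
  <uuid>3e3314c9-bfb0-439e-8764-61896c621b7e</uuid>
  <usage type='ceph'>
    <name>client.cinder secret</name>
  </usage>
</secret>
EOF
```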

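Copy secret.xml to the other compute nodes and run the following on each of them. The secret must first be defined in libvirt with `virsh secret-define --file secret.xml`; otherwise `virsh secret-set-value` has no secret with that UUID to update.

```
[root@compute ceph]# ceph auth get-key client.cinder > ./client.cinder.key
[root@compute ceph]# ls
ceph.client.admin.keyring   ceph.conf          secret.xml
ceph.client.cinder.keyring  client.cinder.key  tmpJKjseK
ceph.client.glance.keyring  rbdmap             uuid
[root@compute ceph]# virsh secret-set-value --secret 3e3314c9-bfb0-439e-8764-61896c621b7e --base64 $(cat ./client.cinder.key)
Secret value set
```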
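The libvirt part of step 11 can be collected into one function to run on each compute node. This is a sketch under assumptions. `define_cinder_secret` is a hypothetical name. The function expects the admin keyring on the compute node (as in the ls output above) so that `ceph auth get-key` works, and it passes the key directly to `virsh secret-set-value` instead of writing client.cinder.key.

```shell
# Hypothetical helper: register the client.cinder key with libvirt on a compute node.
# $1 = the UUID from /etc/ceph/uuid, $2 = working directory (default /etc/ceph)
define_cinder_secret() {
  local uuid="$1" dir="${2:-/etc/ceph}"
  # Write the secret definition; its <uuid> must match the one given to secret-set-value
  cat > "$dir/secret.xml" <<EOF
<secret ephemeral='no' private='no'>
  <uuid>$uuid</uuid>
  <usage type='ceph'>
    <name>client.cinder secret</name>
  </usage>
</secret>
EOF
  # Define the secret first, then store its value
  virsh secret-define --file "$dir/secret.xml" || return 1
  # The base64 key comes straight from the cluster (needs the admin keyring locally)
  virsh secret-set-value --secret "$uuid" --base64 "$(ceph auth get-key client.cinder)"
}
```

Usage: `define_cinder_secret "$(cat /etc/ceph/uuid)"`.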

(15) Delete the existing images and instances in the Horizon dashboard.

(16) On the controller node, edit glance-api.conf as follows, then restart the service:

```ini
[DEFAULT]
default_store = rbd

[glance_store]
stores = rbd
default_store = rbd
rbd_store_pool = images
rbd_store_user = glance
rbd_store_ceph_conf = /etc/ceph/ceph.conf
rbd_store_chunk_size = 8
```

```
[root@controller ceph]# systemctl restart openstack-glance-api
```

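(17) Re-create the image and check it:

```
[root@controller ceph]# openstack image create "cirros" --file cirros-0.3.3-x86_64-disk.img --disk-format qcow2 --container-format bare --public
```

After this change, images are stored in the images pool (the pool used by Glance) of the Ceph cluster.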
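The Ceph documentation points out that Ceph does not support QCOW2 for hosting a virtual machine disk. For instances that boot from Ceph, whether they use ephemeral disks in the vms pool or boot from a volume, the Glance image should therefore be RAW. The following sketch converts the cirros image with `qemu-img convert` and uploads the raw file instead; `upload_raw_image` is a hypothetical helper name.

```shell
# Hypothetical helper: convert a qcow2 image to raw and upload the raw file to Glance.
# $1 = source qcow2 file, $2 = image name in Glance
upload_raw_image() {
  local src="$1" name="$2"
  # Replace the file extension with .raw, e.g. disk.img -> disk.raw
  local raw="${src%.*}.raw"
  qemu-img convert -f qcow2 -O raw "$src" "$raw" || return 1
  openstack image create "$name" --file "$raw" \
    --disk-format raw --container-format bare --public
}
```

Usage: `upload_raw_image cirros-0.3.3-x86_64-disk.img cirros`. The raw file is larger than the qcow2 original, so make sure there is enough local disk space.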

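(18) On the storage node, edit the Cinder configuration file (cinder.conf) in two places, then restart the related services on the controller node and on the storage node. One change, in the [DEFAULT] section, enables the ceph backend:

```ini
[DEFAULT]
enabled_backends = ceph
```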
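Because enabled_backends = ceph refers to a backend section named ceph, cinder.conf also needs a [ceph] section, which is presumably the second change. The sketch below writes both changes with crudini, assuming that tool is available on the node. The option names are those used in the Ceph "Block Devices and OpenStack" guide. `configure_cinder_rbd` is a hypothetical helper, and the UUID you pass must be the libvirt secret UUID from step 11. Apply it before the restarts.

```shell
# Hypothetical helper: apply the Ceph backend settings to cinder.conf with crudini.
# $1 = libvirt secret UUID from step 11, $2 = config file (default /etc/cinder/cinder.conf)
configure_cinder_rbd() {
  local uuid="$1" conf="${2:-/etc/cinder/cinder.conf}"
  # [DEFAULT]: the backend list
  crudini --set "$conf" DEFAULT enabled_backends ceph || return 1
  # [ceph]: the backend section that enabled_backends refers to
  crudini --set "$conf" ceph volume_driver cinder.volume.drivers.rbd.RBDDriver || return 1
  crudini --set "$conf" ceph volume_backend_name ceph || return 1
  crudini --set "$conf" ceph rbd_pool volumes || return 1
  crudini --set "$conf" ceph rbd_ceph_conf /etc/ceph/ceph.conf || return 1
  crudini --set "$conf" ceph rbd_user cinder || return 1
  crudini --set "$conf" ceph rbd_secret_uuid "$uuid" || return 1
}
```

Usage on the storage node: `configure_cinder_rbd "$(cat /etc/ceph/uuid)"`. Copy /etc/ceph/uuid from the compute node first, or paste the UUID directly.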

Restart the services, the first command on the controller node and the second on the storage node:

```shell
systemctl restart openstack-cinder-api.service openstack-cinder-scheduler.service openstack-cinder-volume.service
systemctl restart openstack-cinder-volume.service
```

19)horizon界面创建卷验证

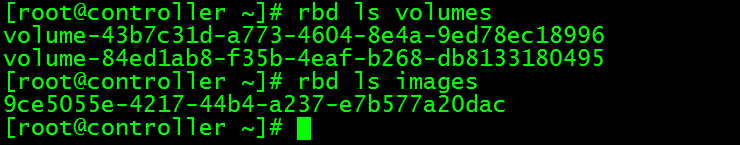

[root@controller gfs]# rbd ls volumes

volume-43b7c31d-a773-4604-8e4a-9ed78ec18996

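(20) On the nova-compute node, edit the Nova configuration file (nova.conf), then restart the Nova services on the controller and the related services on the storage node.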
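The step does not list the nova.conf changes. The Ceph "Block Devices and OpenStack" guide places instance disks on RBD through the [libvirt] section, using the same cinder user and libvirt secret as Cinder. The following sketch assumes crudini is available and that the vms pool from step 5 holds the instance disks. `configure_nova_rbd` is a hypothetical helper.

```shell
# Hypothetical helper: point Nova's libvirt driver at the vms pool via crudini.
# $1 = libvirt secret UUID from step 11, $2 = config file (default /etc/nova/nova.conf)
configure_nova_rbd() {
  local uuid="$1" conf="${2:-/etc/nova/nova.conf}"
  # Store instance disks as RBD images in the vms pool
  crudini --set "$conf" libvirt images_type rbd || return 1
  crudini --set "$conf" libvirt images_rbd_pool vms || return 1
  crudini --set "$conf" libvirt images_rbd_ceph_conf /etc/ceph/ceph.conf || return 1
  # Authenticate as client.cinder using the secret registered with libvirt
  crudini --set "$conf" libvirt rbd_user cinder || return 1
  crudini --set "$conf" libvirt rbd_secret_uuid "$uuid" || return 1
}
```

Usage on the nova-compute node: `configure_nova_rbd "$(cat /etc/ceph/uuid)"`, followed by `systemctl restart openstack-nova-compute`.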

(21) Verify by creating an instance in the Horizon dashboard.
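Besides checking Horizon, you can confirm the instance disk in the vms pool, in the same way the volume was confirmed with `rbd ls volumes` in step 19. Nova's RBD image backend names an instance's root disk `<instance-uuid>_disk`. The hypothetical helper below lists only those entries.

```shell
# Hypothetical helper: list RBD images in a pool (default vms) that look like instance root disks.
instance_disks() {
  rbd ls "${1:-vms}" | grep '_disk$'
}
```

Usage: `instance_disks` should print one `<instance-uuid>_disk` entry per instance booted with an ephemeral root disk. Compare the prefix with the ID shown by `openstack server list`.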