最近写的天气爬虫想要让它在后台每天定时执行,一开始用的celery,但不知道为什么明明设置cron在某个时间运行,但任务却不间断的运行。无奈转用apscheduler,但是不管怎么设置都不能使得当调用: python tasks.py 的时候都会阻塞在控制台。再次无奈转用supervisor。

首先是任务tasks.py:

#-*- coding: utf-8 -*- #!/usr/bin/python import datetime from apscheduler.schedulers.blocking import BlockingScheduler from scrapy.crawler import CrawlerProcess from province_spider import ProvinceSpider from billiard import Process from scrapy.utils.log import configure_logging configure_logging({'LOG_FORMAT': '%(levelname)s: %(message)s', 'LOG_FILE': 'schedule.log'}) def _crawl(path=None): crawl = CrawlerProcess({ 'USER_AGENT': 'Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1)' }) crawl.crawl(ProvinceSpider) crawl.start() crawl.stop() def run_crawl(path=None): p = Process(target=_crawl, args=['hahahahha']) p.start() #p.join() scheduler = BlockingScheduler(daemon=True) scheduler.add_job(run_crawl, "cron", hour=8, minute=30, timezone='Asia/Shanghai') scheduler.add_job(run_crawl, "cron", hour=12, minute=30, timezone='Asia/Shanghai') scheduler.add_job(run_crawl, "cron", hour=18, minute=30, timezone='Asia/Shanghai') try: scheduler.start() except (KeyboardInterrupt, SystemExit): scheduler.shutdown()

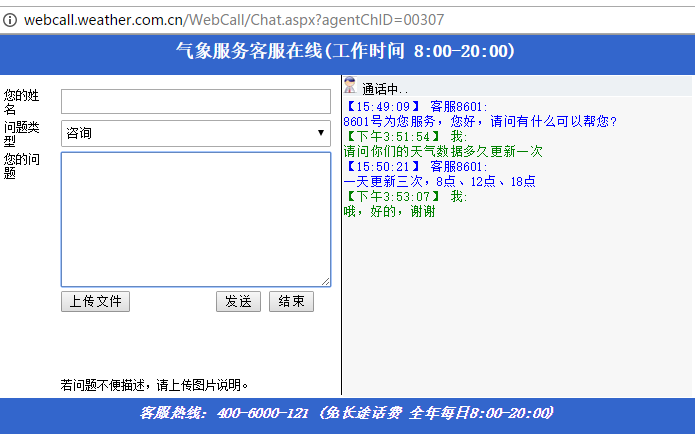

设置成8点半、12点半、18点半是因为天气数据是一天更新三次,分别在8点,12点,18点,有图为证:

直接执行:python tasks.py可以执行任务,但是会在控制台阻塞。这个时候要用supervisor。

ubuntu安装: apt-get install supervisor

开始:

1. 进行/etc/supervisor/conf.d 目录,新建weather_aps.conf文件,文件内容为:

[program:weather_aps]

command=python /var/my_git/WeatherCrawler/aps/tasks.py

autorstart=true

stdout_logfile=/var/my_git/WeatherCrawler/aps/log/weather_aps.log

2. 启动supervisor:

/etc/init.d/supervisor start

3. 启动成功后,查看weather_aps的状态:

supervisorctl status weather_aps

如果是running,则表示成功.

需要注意的是,如果在任务里面有日志输出到文件,而文件没有指定绝对路径的话,默认是在根目录生成,即在 ” / “ 目录下。