Copyright notice: this is an original work. Reproduction is not permitted, and violations will be pursued legally.

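The high-availability cluster in this post is built on top of the fully distributed deployment described at https://www.cnblogs.com/yinzhengjie/p/9065191.html. One additional Linux server is needed to act as the standby NameNode.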

I. Preparing the lab environment

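Five Linux servers are needed, ideally with identical specifications. My virtual machines come from the earlier fully distributed deployment, so their environments already match.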

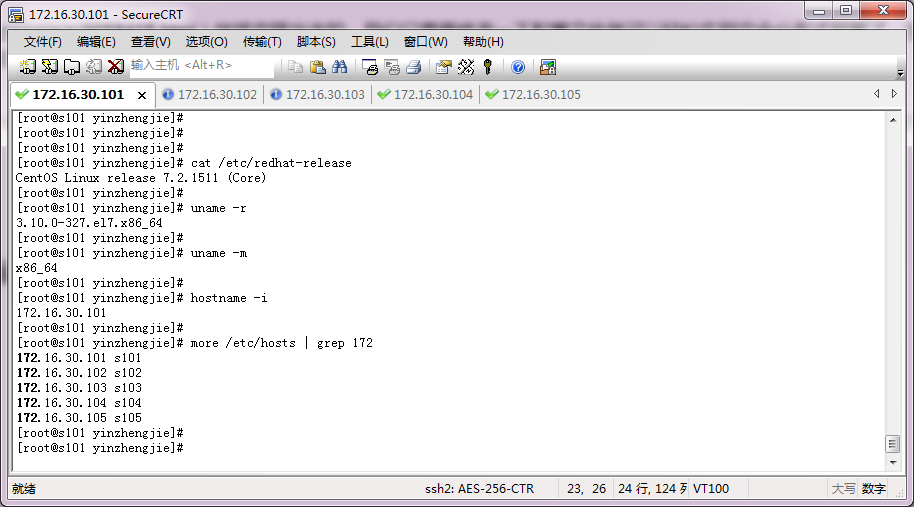

1>.NameNode server (s101)

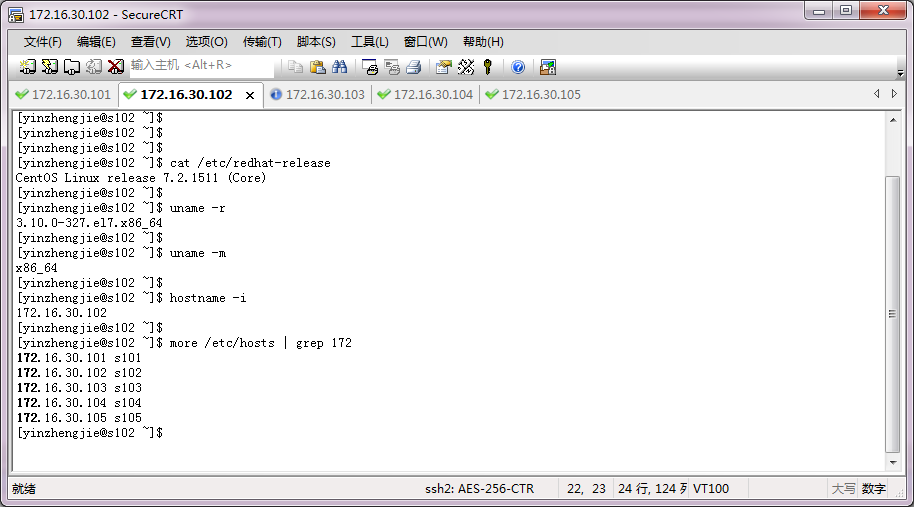

2>.DataNode server (s102)

3>.DataNode server (s103)

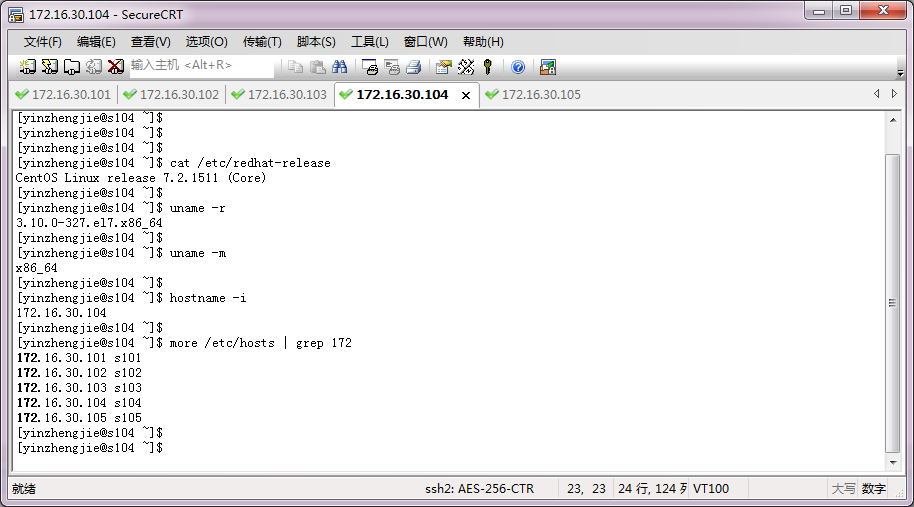

4>.DataNode server (s104)

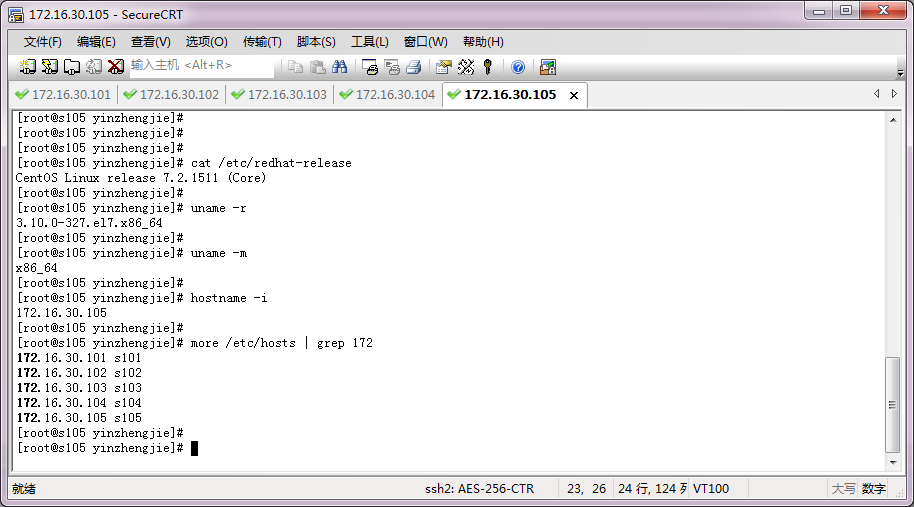

5>.DataNode server (s105), which becomes the standby NameNode (nn2) in the configuration that follows

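II. Editing the configuration files on s101 and distributing them to the other nodes

For the Hadoop HA configuration parameters, see the official documentation: http://hadoop.apache.org/docs/r2.7.3/hadoop-project-dist/hadoop-hdfs/HDFSHighAvailabilityWithQJM.html

1>.Copy the configuration directory on s101 and repoint the symbolic link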

[yinzhengjie@s101 ~]$ ll /soft/hadoop/etc/

total 12

drwxr-xr-x. 2 yinzhengjie yinzhengjie 4096 Jun 8 05:36 full

lrwxrwxrwx. 1 yinzhengjie yinzhengjie 21 Jun 8 05:54 hadoop -> /soft/hadoop/etc/full

drwxr-xr-x. 2 yinzhengjie yinzhengjie 4096 May 25 09:15 local

drwxr-xr-x. 2 yinzhengjie yinzhengjie 4096 May 25 20:51 pseudo

[yinzhengjie@s101 ~]$ cp -r /soft/hadoop/etc/full /soft/hadoop/etc/ha

[yinzhengjie@s101 ~]$ ln -sfT /soft/hadoop/etc/ha /soft/hadoop/etc/hadoop

[yinzhengjie@s101 ~]$ ll /soft/hadoop/etc/

total 16

drwxr-xr-x. 2 yinzhengjie yinzhengjie 4096 Jun 8 05:36 full

drwxr-xr-x. 2 yinzhengjie yinzhengjie 4096 Jun 8 05:54 ha

lrwxrwxrwx. 1 yinzhengjie yinzhengjie 19 Jun 8 05:54 hadoop -> /soft/hadoop/etc/ha

drwxr-xr-x. 2 yinzhengjie yinzhengjie 4096 May 25 09:15 local

drwxr-xr-x. 2 yinzhengjie yinzhengjie 4096 May 25 20:51 pseudo

[yinzhengjie@s101 ~]$
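The copy-then-relink step above can be wrapped in a small helper so that switching between the full, pseudo, local and ha profiles is one call. This is only a sketch: the function name switch_profile and its two arguments are made up for illustration, and it assumes GNU ln (for the -T option) as used in the session above.

```shell
#!/bin/bash
# Sketch: repoint the "hadoop" symlink at a named configuration profile.
# switch_profile is a hypothetical helper name, not part of Hadoop.
switch_profile() {
    local etc_dir="$1" profile="$2"
    # Refuse to switch to a profile directory that does not exist.
    if [ ! -d "$etc_dir/$profile" ]; then
        echo "no such profile: $etc_dir/$profile" >&2
        return 1
    fi
    # -s symbolic link, -f replace the existing link, -T treat the link name
    # as a plain file so the new link is not created inside the old target.
    ln -sfT "$etc_dir/$profile" "$etc_dir/hadoop"
    # Print the link target so the caller can confirm the switch.
    readlink "$etc_dir/hadoop"
}
```

With the layout shown above, `switch_profile /soft/hadoop/etc ha` would repoint the link at the ha directory, and `switch_profile /soft/hadoop/etc full` would switch back.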

2>.Set up passwordless SSH login to s105

[yinzhengjie@s101 ~]$ ssh-copy-id yinzhengjie@s105

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

yinzhengjie@s105's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'yinzhengjie@s105'"

and check to make sure that only the key(s) you wanted were added.

[yinzhengjie@s101 ~]$ who

yinzhengjie pts/0 2018-06-08 05:29 (172.16.30.1)

[yinzhengjie@s101 ~]$

[yinzhengjie@s101 ~]$ ssh s105

Last login: Fri Jun 8 05:37:20 2018 from 172.16.30.1

[yinzhengjie@s105 ~]$

[yinzhengjie@s105 ~]$ who

yinzhengjie pts/0 2018-06-08 05:37 (172.16.30.1)

yinzhengjie pts/1 2018-06-08 05:56 (s101)

[yinzhengjie@s105 ~]$ exit

logout

Connection to s105 closed.

[yinzhengjie@s101 ~]$
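Because both the distribution script and sshfence rely on key-based login, it can be worth checking every node non-interactively rather than logging in by hand. The helper below is a sketch under that assumption; check_ssh is a hypothetical name, and the host names are whatever you pass in.

```shell
#!/bin/bash
# Sketch: report which hosts accept key-based SSH login without a prompt.
# check_ssh is a hypothetical helper name.
check_ssh() {
    local host failed=0
    for host in "$@"; do
        # BatchMode=yes makes ssh fail instead of asking for a password;
        # ConnectTimeout bounds the wait for an unreachable host.
        if ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" true; then
            echo "$host: ok"
        else
            echo "$host: key login failed"
            failed=1
        fi
    done
    # Non-zero when at least one host could not be reached with a key.
    return "$failed"
}
```

For this cluster the call would be `check_ssh s102 s103 s104 s105`, run as yinzhengjie on s101.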

3>.Edit the core-site.xml configuration file

[yinzhengjie@s101 ~]$ more /soft/hadoop/etc/hadoop/core-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://mycluster</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/home/yinzhengjie/ha</value>

</property>

<property>

<name>hadoop.http.staticuser.user</name>

<value>yinzhengjie</value>

</property>

</configuration>

<!--

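Purpose of core-site.xml:

Defines system-level parameters such as the HDFS URL, Hadoop's temporary directory, and the rack-awareness settings for the cluster. Values set here override the defaults in core-default.xml.

fs.defaultFS:

#The default path prefix that clients use when connecting to HDFS. With HA enabled, the nameservice ID (mycluster here, defined by dfs.nameservices in hdfs-site.xml) is used as the authority part of the URI instead of a single NameNode host and port.

hadoop.tmp.dir:

#The base working directory for Hadoop.

hadoop.http.staticuser.user:

#The user name used when browsing data through the web UI.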

-->

[yinzhengjie@s101 ~]$

4>.Edit the hdfs-site.xml configuration file

[yinzhengjie@s101 ~]$ more /soft/hadoop/etc/hadoop/hdfs-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>/home/yinzhengjie/ha/dfs/name1,/home/yinzhengjie/ha/dfs/name2</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/home/yinzhengjie/ha/dfs/data1,/home/yinzhengjie/ha/dfs/data2</value>

</property>

<!-- High-availability settings -->

<property>

<name>dfs.nameservices</name>

<value>mycluster</value>

</property>

<property>

<name>dfs.ha.namenodes.mycluster</name>

<value>nn1,nn2</value>

</property>

<property>

<name>dfs.namenode.rpc-address.mycluster.nn1</name>

<value>s101:8020</value>

</property>

<property>

<name>dfs.namenode.rpc-address.mycluster.nn2</name>

<value>s105:8020</value>

</property>

<property>

<name>dfs.namenode.http-address.mycluster.nn1</name>

<value>s101:50070</value>

</property>

<property>

<name>dfs.namenode.http-address.mycluster.nn2</name>

<value>s105:50070</value>

</property>

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://s102:8485;s103:8485;s104:8485/mycluster</value>

</property>

<property>

<name>dfs.client.failover.proxy.provider.mycluster</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<!-- Fence the previously active NameNode when a failover occurs -->

<property>

<name>dfs.ha.fencing.methods</name>

<value>

sshfence

shell(/bin/true)

</value>

</property>

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/home/yinzhengjie/.ssh/id_rsa</value>

</property>

</configuration>

<!--

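Purpose of hdfs-site.xml:

#HDFS settings such as the number of replicas per file, the block size, and whether permission checking is enforced. Values set here override the defaults in hdfs-default.xml.

dfs.replication:

#For availability and redundancy, HDFS keeps several replicas of each block on different nodes; the default is 3. A pseudo-distributed setup with a single node only needs one replica, which is set through dfs.replication. This is software-level redundancy.

dfs.namenode.name.dir:

#Local directory where the NameNode stores the fsimage. It may be a comma-separated list, in which case the fsimage is written to every directory for redundancy. The directories are best placed on separate disks, so that a single disk failure does not bring the NameNode down; the failed disk is skipped. With HA in place one directory is usually enough; configure two if you want extra safety.

dfs.datanode.data.dir:

#Local directory where the DataNode stores blocks. It may be a comma-separated list (typically one directory per disk). The directories are used in rotation: one block goes to one directory, the next block to the next, and so on. Each block is stored only once per machine. Directories that do not exist are ignored, so create them beforehand.

dfs.nameservices:

#Comma-separated list of nameservices.

dfs.ha.namenodes.mycluster:

#dfs.ha.namenodes.[nameservice ID]: the unique identifiers of the NameNodes in the nameservice, comma-separated. DataNodes use this list to determine all the NameNodes in the cluster. In Hadoop 2.x at most two NameNodes can be configured per nameservice.

dfs.namenode.rpc-address.mycluster.nn1:

#dfs.namenode.rpc-address.[nameservice ID].[name node ID]: the RPC address each NameNode listens on.

dfs.namenode.http-address.mycluster.nn1:

#dfs.namenode.http-address.[nameservice ID].[name node ID]: the HTTP address each NameNode listens on.

dfs.namenode.shared.edits.dir:

#URI of the JournalNode (JN) group through which the NameNodes write and read the edit log. The format is "qjournal://host1:port1;host2:port2;host3:port3/journalId". host1, host2 and host3 are JournalNode addresses; there should be at least 3 and preferably an odd number, because edits must reach a majority of them. journalId uniquely identifies the nameservice, which lets one set of JournalNodes serve several federated nameservices; reusing the nameservice ID as the journalId, as done here, is a common choice.

dfs.client.failover.proxy.provider.mycluster:

#The Java class that HDFS clients use to locate the active NameNode.

dfs.ha.fencing.methods:

#How the previously active NameNode is fenced during a failover, typically by making sure its process is stopped. The available methods are sshfence and shell.

dfs.ha.fencing.ssh.private-key-files:

#The SSH private key file used by sshfence. It must be set whenever sshfence is used.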

-->

[yinzhengjie@s101 ~]$
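The notes on dfs.namenode.shared.edits.dir say the JournalNode count should be odd and at least three. The reason is that edits are only committed once a majority of JournalNodes has them, so N nodes tolerate (N - 1) / 2 failures. The sketch below makes that arithmetic concrete by parsing a qjournal URI; journal_hosts and journal_quorum are hypothetical helper names, not Hadoop commands.

```shell
#!/bin/bash
# Sketch: inspect a qjournal:// URI. Both function names are hypothetical.

# Print one JournalNode host:port per line.
journal_hosts() {
    local uri="$1"
    local rest="${uri#qjournal://}"   # drop the scheme
    local hosts="${rest%%/*}"         # drop the trailing /journalId
    printf '%s\n' "$hosts" | tr ';' '\n'
}

# Print "<nodes> <majority needed> <failures tolerated>".
journal_quorum() {
    local n
    n=$(journal_hosts "$1" | wc -l)
    n=$((n))                          # strip any padding added by wc
    echo "$n $((n / 2 + 1)) $(((n - 1) / 2))"
}
```

For the value configured above, `journal_quorum 'qjournal://s102:8485;s103:8485;s104:8485/mycluster'` reports three nodes, a majority of two, and one tolerated failure. A fourth JournalNode would raise the majority to three without tolerating any additional failure, which is why odd counts are preferred.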

5>.Distribute the configuration files

[yinzhengjie@s101 ~]$ more `which xrsync.sh`

#!/bin/bash

#@author :yinzhengjie

#blog:http://www.cnblogs.com/yinzhengjie

#EMAIL:y1053419035@qq.com

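# Check that an argument was supplied

if [ $# -lt 1 ];then

echo "请输入参数";        # prints "please supply an argument"

exit 1

fi

# File or directory to distribute

file=$@

# Base name of the path

filename=`basename $file`

# Parent directory of the path

dirpath=`dirname $file`

# Resolve the parent directory to its physical absolute path

cd $dirpath

fullpath=`pwd -P`

# Sync to the other nodes, s102 through s105

for (( i=102;i<=105;i++ ))

do

# Switch the terminal colour to green

tput setaf 2

# NOTE: %file is printed literally, as the output below shows; $file was probably intended

echo =========== s$i %file ===========

# Switch the terminal colour back to light grey

tput setaf 7

# Copy to the same absolute path on the remote host (-l keeps symlinks, -r recurses)

rsync -lr $filename `whoami`@s$i:$fullpath

# Report success when rsync exits with status 0

if [ $? == 0 ];then

echo "命令执行成功"        # prints "command succeeded"

fi

done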

[yinzhengjie@s101 ~]$ xrsync.sh /soft/hadoop/etc/

=========== s102 %file ===========

命令执行成功

=========== s103 %file ===========

命令执行成功

=========== s104 %file ===========

命令执行成功

=========== s105 %file ===========

命令执行成功

[yinzhengjie@s101 ~]$
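The script only reports rsync's exit status, so a quick way to confirm that every node really holds the same configuration is to compare a digest of the directory. The following is a sketch, assuming md5sum from GNU coreutils is installed on every node and that file names contain no whitespace; conf_digest is a hypothetical helper name.

```shell
#!/bin/bash
# Sketch: print a single digest for all regular files under a directory.
# conf_digest is a hypothetical helper name.
conf_digest() {
    # A subshell keeps the caller's working directory unchanged.
    (
        cd "$1" || exit 1
        # Hash every file in a stable order, then hash the list of hashes.
        # Relative paths are part of the input, so a renamed file changes
        # the digest as well.
        find . -type f | LC_ALL=C sort | xargs md5sum | md5sum | cut -d' ' -f1
    )
}
```

Running `conf_digest /soft/hadoop/etc/ha` on s101 and on each of s102 through s105 should print the same value if the distribution succeeded.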

III. Starting the HDFS distributed system

1>.Start the journalnode processes

[yinzhengjie@s101 ~]$ hadoop-daemons.sh start journalnode

s104: starting journalnode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-journalnode-s104.out

s103: starting journalnode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-journalnode-s103.out

s102: starting journalnode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-journalnode-s102.out

[yinzhengjie@s101 ~]$ xcall.sh jps

============= s101 jps ============

2855 Jps

命令执行成功

============= s102 jps ============

2568 Jps

2490 JournalNode

命令执行成功

============= s103 jps ============

2617 Jps

2539 JournalNode

命令执行成功

============= s104 jps ============

2611 Jps

2532 JournalNode

命令执行成功

============= s105 jps ============

2798 Jps

命令执行成功

[yinzhengjie@s101 ~]$ more `which xcall.sh`

#!/bin/bash

#@author :yinzhengjie

#blog:http://www.cnblogs.com/yinzhengjie

#EMAIL:y1053419035@qq.com

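# Check that an argument was supplied

if [ $# -lt 1 ];then

echo "请输入参数"        # prints "please supply an argument"

exit 1

fi

# Command to run on every node

cmd=$@

for (( i=101;i<=105;i++ ))

do

# Switch the terminal colour to green

tput setaf 2

echo ============= s$i $cmd ============

# Switch the terminal colour back to light grey

tput setaf 7

# Run the command on the remote host

ssh s$i $cmd

# Report success when the remote command exits with status 0

if [ $? == 0 ];then

echo "命令执行成功"        # prints "command succeeded"

fi

done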

[yinzhengjie@s101 ~]$

2>.Format the NameNode

[yinzhengjie@s101 ~]$ hdfs namenode -format

3>.Copy the working directory from s101 to s105

[yinzhengjie@s101 ~]$ scp -r /home/yinzhengjie/ha yinzhengjie@s105:~

VERSION 100% 205 0.2KB/s 00:00

seen_txid 100% 2 0.0KB/s 00:00

fsimage_0000000000000000000.md5 100% 62 0.1KB/s 00:00

fsimage_0000000000000000000 100% 358 0.4KB/s 00:00

VERSION 100% 205 0.2KB/s 00:00

seen_txid 100% 2 0.0KB/s 00:00

fsimage_0000000000000000000.md5 100% 62 0.1KB/s 00:00

fsimage_0000000000000000000 100% 358 0.4KB/s 00:00

[yinzhengjie@s101 ~]$

4>.Start the HDFS processes

[yinzhengjie@s101 ~]$ start-dfs.sh

Starting namenodes on [s101 s105]

s105: starting namenode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-namenode-s105.out

s101: starting namenode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-namenode-s101.out

s104: starting datanode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-datanode-s104.out

s103: starting datanode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-datanode-s103.out

s102: starting datanode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-datanode-s102.out

Starting journal nodes [s102 s103 s104]

s102: journalnode running as process 2490. Stop it first.

s104: journalnode running as process 2532. Stop it first.

s103: journalnode running as process 2539. Stop it first.

[yinzhengjie@s101 ~]$ xcall.sh jps

============= s101 jps ============

3377 Jps

3117 NameNode

命令执行成功

============= s102 jps ============

2649 DataNode

2490 JournalNode

2764 Jps

命令执行成功

============= s103 jps ============

2539 JournalNode

2700 DataNode

2815 Jps

命令执行成功

============= s104 jps ============

2532 JournalNode

2693 DataNode

2809 Jps

命令执行成功

============= s105 jps ============

3171 NameNode

3254 Jps

命令执行成功

[yinzhengjie@s101 ~]$
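Reading five jps listings by eye gets error-prone, so the check can be scripted. The helper below is a sketch that reads jps output on standard input and succeeds only when the named daemon is listed; has_daemon is a hypothetical name.

```shell
#!/bin/bash
# Sketch: succeed when jps output on stdin lists a JVM named exactly $1.
# has_daemon is a hypothetical helper name.
has_daemon() {
    # jps prints "<pid> <main class>"; compare the second field exactly so
    # that "Name" does not match "NameNode".
    awk -v name="$1" '$2 == name { found = 1 } END { exit !found }'
}
```

A usage example for the layout above: `ssh s105 jps | has_daemon NameNode && echo "standby NameNode is up"`, and likewise JournalNode and DataNode on s102 through s104.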

5>.Manually transition s101 to the active state

[yinzhengjie@s101 ~]$ hdfs haadmin -transitionToActive nn1        # manually transition nn1 (s101) to the active state

[yinzhengjie@s101 ~]$
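The transition command prints nothing on success, so it helps to query the state afterwards with hdfs haadmin -getServiceState. The wrapper below is a sketch around that subcommand; ha_states is a hypothetical helper name, and nn1 and nn2 are the NameNode IDs from dfs.ha.namenodes.mycluster.

```shell
#!/bin/bash
# Sketch: print the HA state of each NameNode ID and succeed only when
# exactly one of them is active. ha_states is a hypothetical helper name.
ha_states() {
    local id state active=0
    for id in "$@"; do
        # getServiceState prints "active" or "standby" for a reachable NameNode.
        state=$(hdfs haadmin -getServiceState "$id" 2>/dev/null) || state=unreachable
        echo "$id: $state"
        if [ "$state" = active ]; then
            active=$((active + 1))
        fi
    done
    # Exactly one active NameNode is the healthy condition.
    [ "$active" -eq 1 ]
}
```

After the transition above, `ha_states nn1 nn2` is expected to show nn1 as active and nn2 as standby.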

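At this point HA is essentially configured. The drawback is that the NameNode state has to be switched by hand, which is tedious. Fortunately, the Hadoop ecosystem includes ZooKeeper, a dedicated coordination tool, and using it to manage the cluster makes failover far more convenient. For details see: https://www.cnblogs.com/yinzhengjie/p/9154265.html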