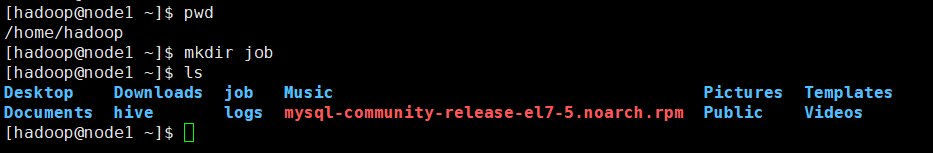

First, create a directory.

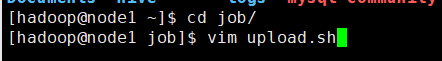

Create the file `upload.sh` in this `job` directory:

    [hadoop@node1 ~]$ pwd
    /home/hadoop
    [hadoop@node1 ~]$ mkdir job
    [hadoop@node1 ~]$ ls
    Desktop    Downloads  job   Music                                     Pictures  Templates
    Documents  hive       logs  mysql-community-release-el7-5.noarch.rpm  Public    Videos
    [hadoop@node1 ~]$ cd job/
    [hadoop@node1 job]$ vim upload.sh

Edit `upload.sh` so that it contains the following:

    #!/bin/bash

    # set java env
    export JAVA_HOME=/opt/modules/jdk1.8.0_65
    export JRE_HOME=${JAVA_HOME}/jre
    export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib
    export PATH=${JAVA_HOME}/bin:$PATH

    # set hadoop env
    export HADOOP_HOME=/opt/modules/hadoop-2.6.0
    export PATH=${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin:$PATH

    log_src_dir=/home/hadoop/logs/log/
    log_toupload_dir=/home/hadoop/logs/toupload/
    hdfs_root_dir=/data/clickLog/20190620/

    echo "log_src_dir:"$log_src_dir

    ls $log_src_dir | while read fileName
    do
        if [[ "$fileName" == access.log ]]; then
        # if [ "access.log" = "$fileName" ];then
            date=`date +%Y_%m_%d_%H_%M_%S`
            # Move the file to the to-upload directory and rename it
            # Print a progress message
            echo "moving $log_src_dir$fileName to $log_toupload_dir"xxxxx_click_log_$fileName"$date"
            mv $log_src_dir$fileName $log_toupload_dir"xxxxx_click_log_$fileName"$date
            # Append the path of the file to upload to a list file named willDoing
            echo $log_toupload_dir"xxxxx_click_log_$fileName"$date >> $log_toupload_dir"willDoing."$date
        fi
    done

    # Find the willDoing list files that are neither in progress nor finished
    ls $log_toupload_dir | grep will | grep -v "_COPY_" | grep -v "_DONE_" | while read line
    do
        # Print a progress message
        echo "toupload is in file:"$line
        # Rename the list file willDoing to willDoing_COPY_
        mv $log_toupload_dir$line $log_toupload_dir$line"_COPY_"
        # Read willDoing_COPY_ line by line; in the inner loop, line is the path of one file to upload.
        # The inner loop runs in a pipeline subshell, so the outer line (the list file name) is unchanged afterwards.
        cat $log_toupload_dir$line"_COPY_" | while read line
        do
            # Print a progress message
            echo "puting...$line to hdfs path.....$hdfs_root_dir"
            hadoop fs -put $line $hdfs_root_dir
        done
        # Mark the list file as finished
        mv $log_toupload_dir$line"_COPY_" $log_toupload_dir$line"_DONE_"
    done
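
The staging flow in `upload.sh` (move the log, record it in a `willDoing` list, rename the list to `_COPY_` while uploading and to `_DONE_` afterwards) can be tried without a cluster. The following is only a sketch under stated assumptions: it works in a temporary directory, and it replaces `hadoop fs -put` with a plain `cp` into a hypothetical `fake_hdfs` directory. The variable names `work`, `src`, `staging`, `fake_hdfs` and `stamp` are illustrative and do not appear in the original script.

```shell
#!/bin/bash
# Dry run of the staging flow from upload.sh, using throwaway directories.
# Assumption: "hadoop fs -put" is replaced by cp so that no cluster is needed.
work=$(mktemp -d)
src="$work/log/"
staging="$work/toupload/"
fake_hdfs="$work/hdfs/"
mkdir -p "$src" "$staging" "$fake_hdfs"
echo "sample line" > "${src}access.log"

stamp=$(date +%Y_%m_%d_%H_%M_%S)

# Step 1: move the log into staging and record its path in a willDoing list.
mv "${src}access.log" "${staging}xxxxx_click_log_access.log${stamp}"
echo "${staging}xxxxx_click_log_access.log${stamp}" >> "${staging}willDoing.${stamp}"

# Step 2: mark each pending list as _COPY_, "upload" its entries, then mark it _DONE_.
for list in "$staging"willDoing.*; do
    case "$list" in
        *_COPY_|*_DONE_) continue ;;   # skip lists that are in progress or finished
    esac
    mv "$list" "${list}_COPY_"
    while read -r path; do
        cp "$path" "$fake_hdfs"        # stand-in for: hadoop fs -put "$path" "$hdfs_root_dir"
    done < "${list}_COPY_"
    mv "${list}_COPY_" "${list}_DONE_"
done

ls "$staging" "$fake_hdfs"
```

After the run, the staging directory should hold the renamed log plus a `willDoing.<timestamp>_DONE_` file. A list file left with the `_COPY_` suffix would indicate a run that was interrupted during the upload step.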

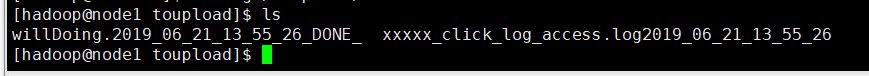

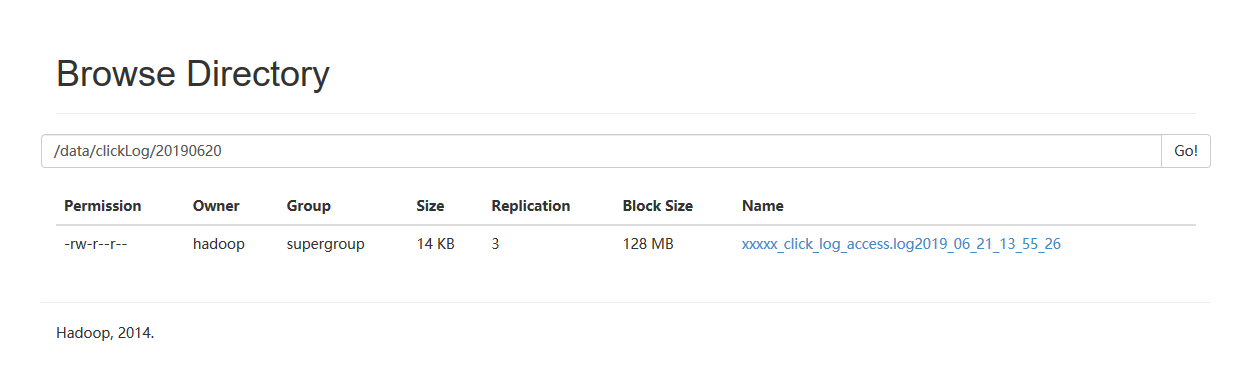

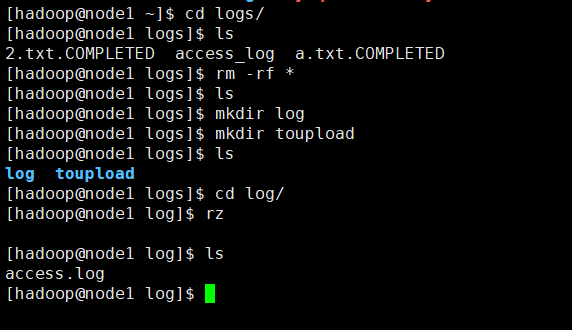

Next, create the directories and upload the log file.

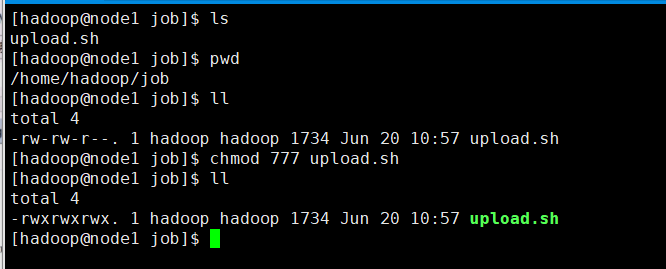
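
`upload.sh` reads from `/home/hadoop/logs/log/` and moves files into `/home/hadoop/logs/toupload/`, so both directories need to exist before it runs. A minimal sketch of that preparation, assuming the paths from the script; the helper name `prepare_log_dirs` is hypothetical, and the exact commands used in the screenshot may differ.

```shell
#!/bin/bash
# Create the source and to-upload directories under a given base directory.
# prepare_log_dirs is an illustrative helper, not part of the original walkthrough.
prepare_log_dirs() {
    local base=$1
    mkdir -p "$base/log" "$base/toupload"
}

# On the node used in this walkthrough, the call would be:
#   prepare_log_dirs /home/hadoop/logs
# followed by placing a file named access.log in /home/hadoop/logs/log/.
```

The script only picks up a file named exactly `access.log`, so a log uploaded under a different name would be ignored.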

Grant execute permission to the script:

    [hadoop@node1 job]$ ls
    upload.sh
    [hadoop@node1 job]$ pwd
    /home/hadoop/job
    [hadoop@node1 job]$ ll
    total 4
    -rw-rw-r--. 1 hadoop hadoop 1734 Jun 20 10:57 upload.sh
    [hadoop@node1 job]$ chmod 777 upload.sh
    [hadoop@node1 job]$ ll
    total 4
    -rwxrwxrwx. 1 hadoop hadoop 1734 Jun 20 10:57 upload.sh
    [hadoop@node1 job]$

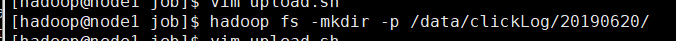
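
`chmod 777` gives every user read, write and execute access. If only the owner needs to run the script, a narrower mode is enough. The sketch below demonstrates this on a temporary file rather than on `upload.sh` itself; the variable `f` is illustrative.

```shell
#!/bin/bash
# Demonstrate that adding the owner's execute bit is sufficient to run a script.
f=$(mktemp)
printf '#!/bin/bash\necho "ran"\n' > "$f"
chmod 644 "$f"      # start from a typical non-executable mode
chmod u+x "$f"      # add execute permission for the owner only
result=$("$f")      # run the file directly
echo "$result"
```

Applied to this walkthrough, the equivalent command would be `chmod u+x upload.sh`.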

Create the target directory on HDFS.

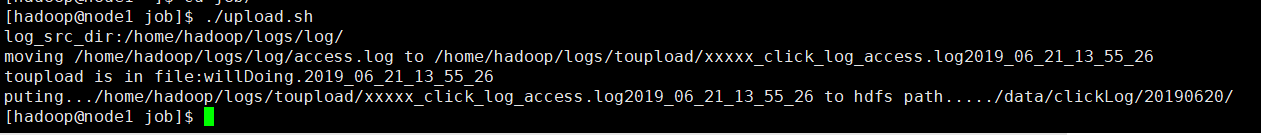
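
The target directory is the `hdfs_root_dir` value set in `upload.sh`. A minimal sketch of creating it from the command line, assuming `hadoop` is on the `PATH` and HDFS is running; the guard around the commands is an addition so the snippet does nothing on a machine without Hadoop.

```shell
#!/bin/bash
# Create the HDFS directory that upload.sh uploads into (value copied from the script).
hdfs_root_dir=/data/clickLog/20190620/

if command -v hadoop >/dev/null 2>&1; then
    hadoop fs -mkdir -p "$hdfs_root_dir"   # -p also creates missing parent directories
    hadoop fs -ls /data/clickLog/          # confirm that the directory now exists
else
    echo "hadoop not found on PATH; skipping" >&2
fi
```

Because the date `20190620` is hard-coded in the script, a run on another day still uploads into this same directory unless `hdfs_root_dir` is edited.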

Run the script.

The result is then visible: the log file has been uploaded to the HDFS directory.
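
One way to run the script and check the outcome from the command line is sketched below. It assumes the paths used earlier in this walkthrough and a running HDFS; the guard is an addition so that nothing runs where `upload.sh` or `hadoop` is missing.

```shell
#!/bin/bash
# Run upload.sh, then list the HDFS target and the local staging directory.
script=/home/hadoop/job/upload.sh
hdfs_root_dir=/data/clickLog/20190620/
staging_dir=/home/hadoop/logs/toupload/

if [ -x "$script" ] && command -v hadoop >/dev/null 2>&1; then
    "$script"
    hadoop fs -ls "$hdfs_root_dir"   # the uploaded xxxxx_click_log_access.log<timestamp> file should be listed
    ls "$staging_dir"                # the list file should now end in _DONE_
else
    echo "upload.sh or hadoop not available here; nothing was run" >&2
fi
```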