1、分析Elasticsearch查询语句的功能。

1)、首先需要收集Elasticsearch集群的查询语句。

2)、然后分析查询语句的常用语句、响应时长等等指标。

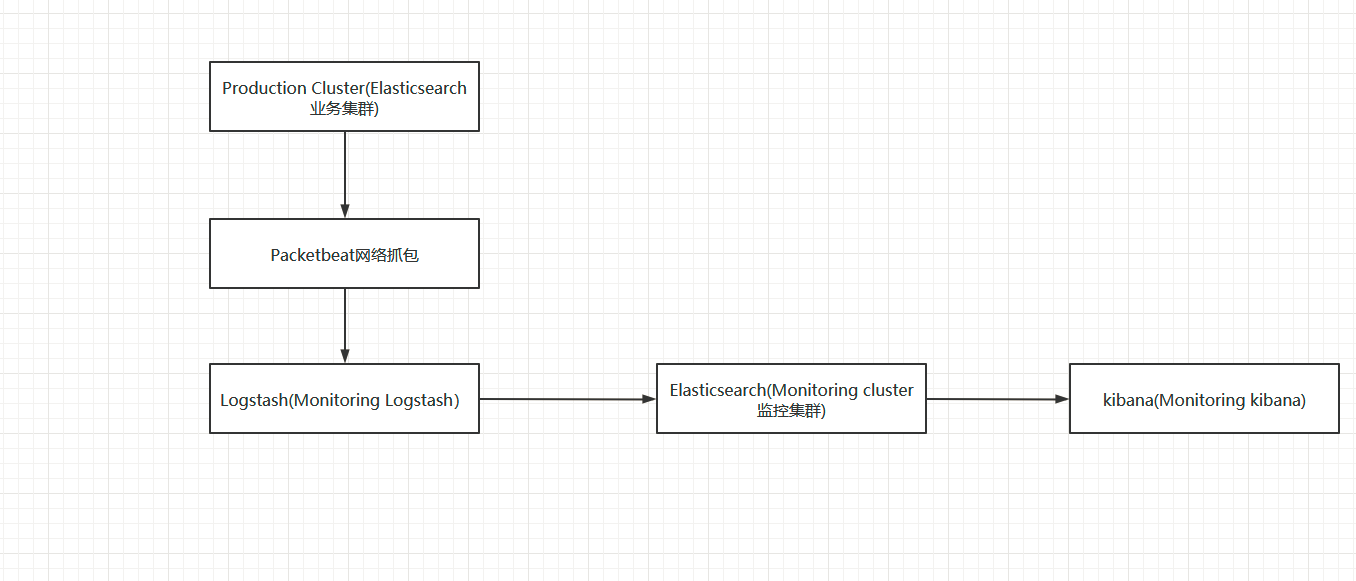

2、分析Elasticsearch查询语句的功能,使用方案。

1)、应用Packetbeat + Logstash完成数据收集工作。

2)、使用Kibana + Elasticsearch完成数据分析工作。

3、分析Elasticsearch查询语句的功能,流程分析。

1)、Production Cluster(Elasticsearch集群) -> Packetbeat -> Logstash(Monitoring Logstash) -> Elasticsearch(Monitoring cluster) -> kibana(Monitoring kibana)。

2)、Production Cluster,可以使用Elasticsearch,地址http://192.168.110.133:9200。kibana,地址http:192.168.110.133:5601。

3)、Elasticsearch(Monitoring cluster,用于存储Packetbeat抓取的查询语句。Elasticsearch地址http://192.168.110.133:8200,可以通过bin/elasticsearch -Ecluster.name=sniff_search -Ehttp.port=8200 -Epath.data=sniff快速启动一个节点。kibana,地址http:192.168.110.133:8601。快速启动方式,bin/kibana -e http://192.168.110.133:8200 -p 8601。

注意:Production与Monitoring不能是一个集群,否则会进入抓包死循环。

4、关于Logstash的配置方案,文件名称sniff_search.conf,如下所示:

1 input { 2 beats { # 在5044端口接收beats的输入 3 port => 5044 4 } 5 } 6 filter { 7 if "search" in [request]{ # 查询语句的过滤,如果请求中包含search才进行处理 8 grok { # 从request中提取query_body,即实际的查询语句。 9 match => { "request" => ".* {(?<query_body>.*)"} 10 } 11 grok { # 从path中提取index,即对某个索引的操作。 12 match => { "path" => "/(?<index>.*)/_search"} 13 } 14 if [index] { 15 } else { 16 mutate { 17 add_field => { "index" => "All" } 18 } 19 } 20 21 mutate { 22 update => { "query_body" => "{%{query_body}"}} 23 } 24 25 # mutate { 26 # remove_field => [ "[http][response][body]" ] 27 # } 28 } 29 30 output { 31 #stdout{codec=>rubydebug} 32 33 if "search" in [request]{ # 只对查询做存储,如果存在查询就保存到监控的elasticsearch中。 34 elasticsearch { 35 hosts => "192.168.110.133:8200" 36 } 37 } 38 }

关于Packetbeat的配置方案,文件名称sniff_search.yml,如下所示:

1 #################### Packetbeat Configuration Example ######################### 2 3 # This file is an example configuration file highlighting only the most common 4 # options. The packetbeat.full.yml file from the same directory contains all the 5 # supported options with more comments. You can use it as a reference. 6 # 7 # You can find the full configuration reference here: 8 # https://www.elastic.co/guide/en/beats/packetbeat/index.html 9 10 #============================== Network device ================================ 11 12 # Select the network interface to sniff the data. On Linux, you can use the 13 # "any" keyword to sniff on all connected interfaces. 14 packetbeat.interfaces.device: any 15 16 packetbeat.protocols.http: 17 # Configure the ports where to listen for HTTP traffic. You can disable 18 # the HTTP protocol by commenting out the list of ports. 19 ports: [9200] 20 send_request: true 21 include_body_for: ["application/json", "x-www-form-urlencoded"] 22 23 24 #================================ Outputs ===================================== 25 26 # Configure what outputs to use when sending the data collected by the beat. 27 # Multiple outputs may be used. 28 29 #-------------------------- Elasticsearch output ------------------------------ 30 #output.elasticsearch: 31 # Array of hosts to connect to. 32 # hosts: ["localhost:9200"] 33 34 # Optional protocol and basic auth credentials. 35 #protocol: "https" 36 #username: "elastic" 37 #password: "changeme" 38 39 #output.console: 40 # pretty: true 41 42 output.logstash: # 输出到 logstash中。 43 hosts: ["192.168.110.133:5044"] 44 45 46 #================================ Logging ===================================== 47 48 # Sets log level. The default log level is info. 49 # Available log levels are: critical, error, warning, info, debug 50 #logging.level: debug 51 52 # At debug level, you can selectively enable logging only for some components. 53 # To enable all selectors use ["*"]. Examples of other selectors are "beat", 54 # "publish", "service". 55 #logging.selectors: ["*"]

5、首先启动Production Cluster(Elasticsearch业务集群或者节点),然后启动kibana,如下所示:

1 [elsearch@slaver1 elasticsearch-6.7.0]$ ./bin/elasticsearch -d 2 [elsearch@slaver1 elasticsearch-6.7.0]$ jps 3 2645 Jps 4 2582 Elasticsearch 5 [elsearch@slaver1 elasticsearch-6.7.0]$ free -h 6 total used free shared buff/cache available 7 Mem: 5.3G 1.6G 3.2G 22M 485M 3.5G 8 Swap: 0B 0B 0B 9 [elsearch@slaver1 elasticsearch-6.7.0]$ curl http://192.168.110.133:9200/ 10 { 11 "name" : "cLqvbUZ", 12 "cluster_name" : "elasticsearch", 13 "cluster_uuid" : "FSGn9ENRTh6Ya5SBPV9bxA", 14 "version" : { 15 "number" : "6.7.0", 16 "build_flavor" : "default", 17 "build_type" : "tar", 18 "build_hash" : "8453f77", 19 "build_date" : "2019-03-21T15:32:29.844721Z", 20 "build_snapshot" : false, 21 "lucene_version" : "7.7.0", 22 "minimum_wire_compatibility_version" : "5.6.0", 23 "minimum_index_compatibility_version" : "5.0.0" 24 }, 25 "tagline" : "You Know, for Search" 26 } 27 [elsearch@slaver1 elasticsearch-6.7.0]$ cd ../kibana-6.7.0-linux-x86_64/ 28 [elsearch@slaver1 kibana-6.7.0-linux-x86_64]$ ls 29 bin built_assets config data LICENSE.txt node node_modules nohup.out NOTICE.txt optimize package.json plugins README.txt src target webpackShims 30 [elsearch@slaver1 kibana-6.7.0-linux-x86_64]$ nohup ./bin/kibana & 31 [1] 2717 32 [elsearch@slaver1 kibana-6.7.0-linux-x86_64]$ nohup: 忽略输入并把输出追加到"nohup.out" 33 34 [elsearch@slaver1 kibana-6.7.0-linux-x86_64]$ fuser -n tcp 5601

然后启动Elasticsearch监控集群或者节点,Elasticsearch(Monitoring cluster监控集群或者节点),用于存储Packetbeat抓取的查询语句。

1)、Elasticsearch地址http://192.168.110.133:8200,可以通过bin/elasticsearch -Ecluster.name=sniff_search -Ehttp.port=8200 -Epath.data=sniff_search快速启动一个节点。其中修改集群名称、端口号、数据存储位置。访问地址:http://192.168.110.133:8200/

1 [elsearch@slaver1 elasticsearch-6.7.0]$ ./bin/elasticsearch -Ecluster.name=sniff_search -Ehttp.port=8200 -Epath.data=sniff_search

2)、kibana,地址http:192.168.110.133:8601。快速启动方式,bin/kibana -e http://192.168.110.133:8200 -p 8601。如果访问kibana,出现Kibana server is not ready yet,说明还在启动,不是报错了。访问地址:http://192.168.110.133:8601/

3)、现在开始启动Logstash和Packetbeat,首先启动Logstash,然后启动Packbeat。

1 [elsearch@slaver1 logstash-6.7.0]$ ./bin/logstash -f config/sniff_search.conf 2 Sending Logstash logs to /home/hadoop/soft/logstash-6.7.0/logs which is now configured via log4j2.properties 3 [2021-01-11T17:00:28,768][INFO ][logstash.setting.writabledirectory] Creating directory {:setting=>"path.queue", :path=>"/home/hadoop/soft/logstash-6.7.0/data/queue"} 4 [2021-01-11T17:00:28,835][INFO ][logstash.setting.writabledirectory] Creating directory {:setting=>"path.dead_letter_queue", :path=>"/home/hadoop/soft/logstash-6.7.0/data/dead_letter_queue"} 5 [2021-01-11T17:00:30,167][WARN ][logstash.config.source.multilocal] Ignoring the 'pipelines.yml' file because modules or command line options are specified 6 [2021-01-11T17:00:30,218][INFO ][logstash.runner ] Starting Logstash {"logstash.version"=>"6.7.0"} 7 [2021-01-11T17:00:30,295][INFO ][logstash.agent ] No persistent UUID file found. Generating new UUID {:uuid=>"3e7c3496-04fa-4f22-a768-d5e140a69887", :path=>"/home/hadoop/soft/logstash-6.7.0/data/uuid"} 8 [2021-01-11T17:00:51,925][INFO ][logstash.pipeline ] Starting pipeline {:pipeline_id=>"main", "pipeline.workers"=>2, "pipeline.batch.size"=>125, "pipeline.batch.delay"=>50} 9 [2021-01-11T17:00:53,149][INFO ][logstash.outputs.elasticsearch] Elasticsearch pool URLs updated {:changes=>{:removed=>[], :added=>[http://192.168.110.133:8200/]}} 10 [2021-01-11T17:00:53,628][WARN ][logstash.outputs.elasticsearch] Restored connection to ES instance {:url=>"http://192.168.110.133:8200/"} 11 [2021-01-11T17:00:53,772][INFO ][logstash.outputs.elasticsearch] ES Output version determined {:es_version=>6} 12 [2021-01-11T17:00:53,778][WARN ][logstash.outputs.elasticsearch] Detected a 6.x and above cluster: the `type` event field won't be used to determine the document _type {:es_version=>6} 13 [2021-01-11T17:00:53,829][INFO ][logstash.outputs.elasticsearch] New Elasticsearch output {:class=>"LogStash::Outputs::ElasticSearch", :hosts=>["//192.168.110.133:8200"]} 14 [2021-01-11T17:00:53,890][INFO ][logstash.outputs.elasticsearch] Using default mapping template 15 [2021-01-11T17:00:54,039][INFO ][logstash.outputs.elasticsearch] Attempting to install template {:manage_template=>{"template"=>"logstash-*", "version"=>60001, "settings"=>{"index.refresh_interval"=>"5s"}, "mappings"=>{"_default_"=>{"dynamic_templates"=>[{"message_field"=>{"path_match"=>"message", "match_mapping_type"=>"string", "mapping"=>{"type"=>"text", "norms"=>false}}}, {"string_fields"=>{"match"=>"*", "match_mapping_type"=>"string", "mapping"=>{"type"=>"text", "norms"=>false, "fields"=>{"keyword"=>{"type"=>"keyword", "ignore_above"=>256}}}}}], "properties"=>{"@timestamp"=>{"type"=>"date"}, "@version"=>{"type"=>"keyword"}, "geoip"=>{"dynamic"=>true, "properties"=>{"ip"=>{"type"=>"ip"}, "location"=>{"type"=>"geo_point"}, "latitude"=>{"type"=>"half_float"}, "longitude"=>{"type"=>"half_float"}}}}}}}} 16 [2021-01-11T17:00:54,197][INFO ][logstash.outputs.elasticsearch] Installing elasticsearch template to _template/logstash 17 [2021-01-11T17:00:56,341][INFO ][logstash.inputs.beats ] Beats inputs: Starting input listener {:address=>"0.0.0.0:5044"} 18 [2021-01-11T17:00:56,437][INFO ][logstash.pipeline ] Pipeline started successfully {:pipeline_id=>"main", :thread=>"#<Thread:0x55951b0d run>"} 19 [2021-01-11T17:00:56,739][INFO ][org.logstash.beats.Server] Starting server on port: 5044 20 [2021-01-11T17:00:56,918][INFO ][logstash.agent ] Pipelines running {:count=>1, :running_pipelines=>[:main], :non_running_pipelines=>[]} 21 [2021-01-11T17:00:57,772][INFO ][logstash.agent ] Successfully started Logstash API endpoint {:port=>9600}

开始然后启动Packbeat,如果下面的报错,将输出到控制台的注释了即可,这里只向logstash输出,如下所示:

1 [elsearch@slaver1 packetbeat-6.7.0-linux-x86_64]$ sudo ./packetbeat -e -c sniff_search.yml -strict.perms=false 2 Exiting: error unpacking config data: more than one namespace configured accessing 'output' (source:'sniff_search.yml') 3 [elsearch@slaver1 packetbeat-6.7.0-linux-x86_64]$ vim sniff_search.yml 4 [elsearch@slaver1 packetbeat-6.7.0-linux-x86_64]$ sudo ./packetbeat -e -c sniff_search.yml -strict.perms=false 5 2021-01-11T17:09:59.624+0800 INFO instance/beat.go:612 Home path: [/home/hadoop/soft/packetbeat-6.7.0-linux-x86_64] Config path: [/home/hadoop/soft/packetbeat-6.7.0-linux-x86_64] Data path: [/home/hadoop/soft/packetbeat-6.7.0-linux-x86_64/data] Logs path: [/home/hadoop/soft/packetbeat-6.7.0-linux-x86_64/logs] 6 2021-01-11T17:09:59.626+0800 INFO instance/beat.go:619 Beat UUID: eac3176e-b703-4258-8b17-ece52ba6b6b2 7 2021-01-11T17:09:59.626+0800 INFO [seccomp] seccomp/seccomp.go:116 Syscall filter successfully installed 8 2021-01-11T17:09:59.626+0800 INFO [beat] instance/beat.go:932 Beat info {"system_info": {"beat": {"path": {"config": "/home/hadoop/soft/packetbeat-6.7.0-linux-x86_64", "data": "/home/hadoop/soft/packetbeat-6.7.0-linux-x86_64/data", "home": "/home/hadoop/soft/packetbeat-6.7.0-linux-x86_64", "logs": "/home/hadoop/soft/packetbeat-6.7.0-linux-x86_64/logs"}, "type": "packetbeat", "uuid": "eac3176e-b703-4258-8b17-ece52ba6b6b2"}}} 9 2021-01-11T17:09:59.626+0800 INFO [beat] instance/beat.go:941 Build info {"system_info": {"build": {"commit": "14ca49c28a6e10b84b4ea8cdebdc46bd2eab3130", "libbeat": "6.7.0", "time": "2019-03-21T14:48:48.000Z", "version": "6.7.0"}}} 10 2021-01-11T17:09:59.626+0800 INFO [beat] instance/beat.go:944 Go runtime info {"system_info": {"go": {"os":"linux","arch":"amd64","max_procs":2,"version":"go1.10.8"}}} 11 2021-01-11T17:09:59.654+0800 INFO [beat] instance/beat.go:948 Host info {"system_info": {"host": {"architecture":"x86_64","boot_time":"2021-01-11T16:37:31+08:00","containerized":true,"name":"slaver1","ip":["127.0.0.1/8","::1/128","192.168.110.133/24","fe80::b65d:d33b:d10d:8133/64","192.168.122.1/24"],"kernel_version":"3.10.0-957.el7.x86_64","mac":["00:0c:29:e3:5a:02","52:54:00:f6:a6:99","52:54:00:f6:a6:99"],"os":{"family":"redhat","platform":"centos","name":"CentOS Linux","version":"7 (Core)","major":7,"minor":7,"patch":1908,"codename":"Core"},"timezone":"CST","timezone_offset_sec":28800,"id":"6ac9593fe0bc4b3cabb828e56c00d0ae"}}} 12 2021-01-11T17:09:59.661+0800 INFO [beat] instance/beat.go:977 Process info {"system_info": {"process": {"capabilities": {"inheritable":null,"permitted":["chown","dac_override","dac_read_search","fowner","fsetid","kill","setgid","setuid","setpcap","linux_immutable","net_bind_service","net_broadcast","net_admin","net_raw","ipc_lock","ipc_owner","sys_module","sys_rawio","sys_chroot","sys_ptrace","sys_pacct","sys_admin","sys_boot","sys_nice","sys_resource","sys_time","sys_tty_config","mknod","lease","audit_write","audit_control","setfcap","mac_override","mac_admin","syslog","wake_alarm","block_suspend"],"effective":["chown","dac_override","dac_read_search","fowner","fsetid","kill","setgid","setuid","setpcap","linux_immutable","net_bind_service","net_broadcast","net_admin","net_raw","ipc_lock","ipc_owner","sys_module","sys_rawio","sys_chroot","sys_ptrace","sys_pacct","sys_admin","sys_boot","sys_nice","sys_resource","sys_time","sys_tty_config","mknod","lease","audit_write","audit_control","setfcap","mac_override","mac_admin","syslog","wake_alarm","block_suspend"],"bounding":["chown","dac_override","dac_read_search","fowner","fsetid","kill","setgid","setuid","setpcap","linux_immutable","net_bind_service","net_broadcast","net_admin","net_raw","ipc_lock","ipc_owner","sys_module","sys_rawio","sys_chroot","sys_ptrace","sys_pacct","sys_admin","sys_boot","sys_nice","sys_resource","sys_time","sys_tty_config","mknod","lease","audit_write","audit_control","setfcap","mac_override","mac_admin","syslog","wake_alarm","block_suspend"],"ambient":null}, "cwd": "/home/hadoop/soft/packetbeat-6.7.0-linux-x86_64", "exe": "/home/hadoop/soft/packetbeat-6.7.0-linux-x86_64/packetbeat", "name": "packetbeat", "pid": 4529, "ppid": 4527, "seccomp": {"mode":"filter"}, "start_time": "2021-01-11T17:09:58.920+0800"}}} 13 2021-01-11T17:09:59.661+0800 INFO instance/beat.go:280 Setup Beat: packetbeat; Version: 6.7.0 14 2021-01-11T17:09:59.670+0800 INFO [publisher] pipeline/module.go:110 Beat name: slaver1 15 2021-01-11T17:09:59.670+0800 INFO procs/procs.go:101 Process watcher disabled 16 2021-01-11T17:09:59.672+0800 WARN [cfgwarn] protos/protos.go:118 DEPRECATED: dictionary style protocols configuration has been deprecated. Please use list-style protocols configuration. Will be removed in version: 7.0.0 17 2021-01-11T17:09:59.673+0800 INFO [monitoring] log/log.go:117 Starting metrics logging every 30s 18 2021-01-11T17:09:59.673+0800 INFO instance/beat.go:402 packetbeat start running. 19 2021-01-11T17:10:02.245+0800 INFO pipeline/output.go:95 Connecting to backoff(async(tcp://192.168.110.133:5044)) 20 2021-01-11T17:10:02.246+0800 INFO pipeline/output.go:105 Connection to backoff(async(tcp://192.168.110.133:5044)) established

6、此时,整个流程就已经搞完了,现在在Elasticsearch业务集群或者节点,然后在Elasticsearch监控集群或者节点就可以查看相关的信息了。

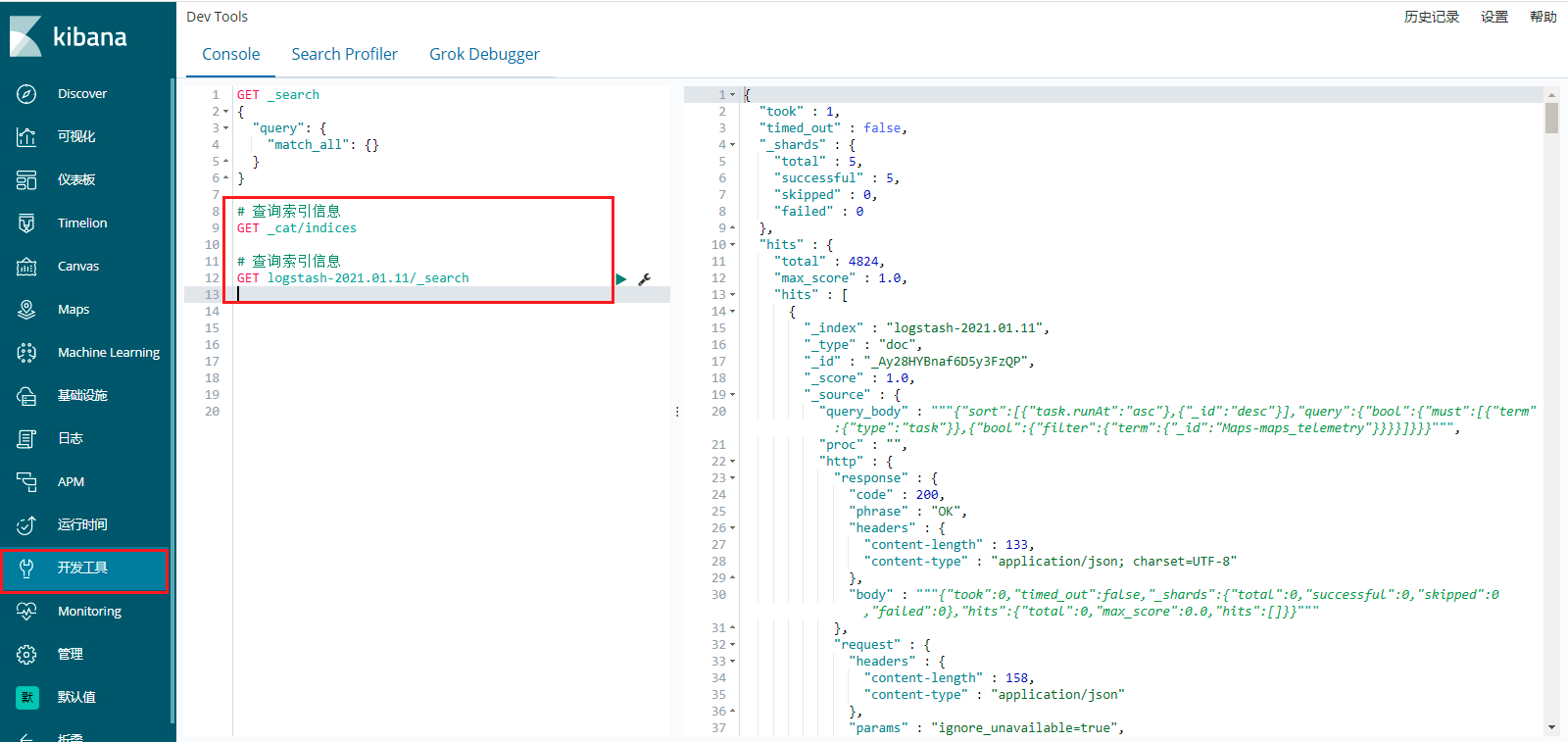

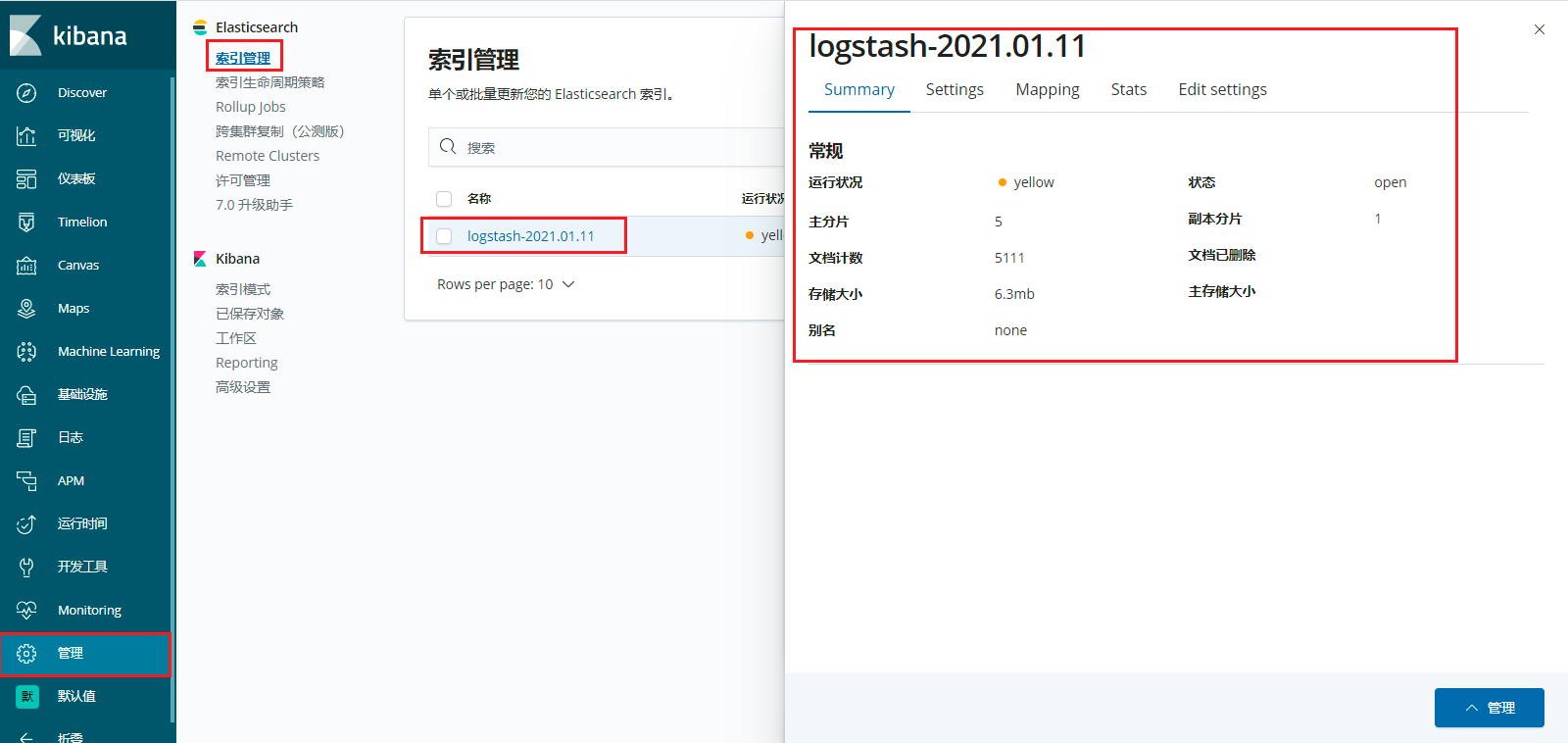

查看http://192.168.110.133:8601/ 这个Elasticsearch监控集群或者节点,发现已经有logstash-2021.01.11这个索引了,可以查看一下这个索引信息。

然后查看管理,点击索引管理,可以查看Elasticsearch创建的索引信息,查看一些具体的配置什么的。

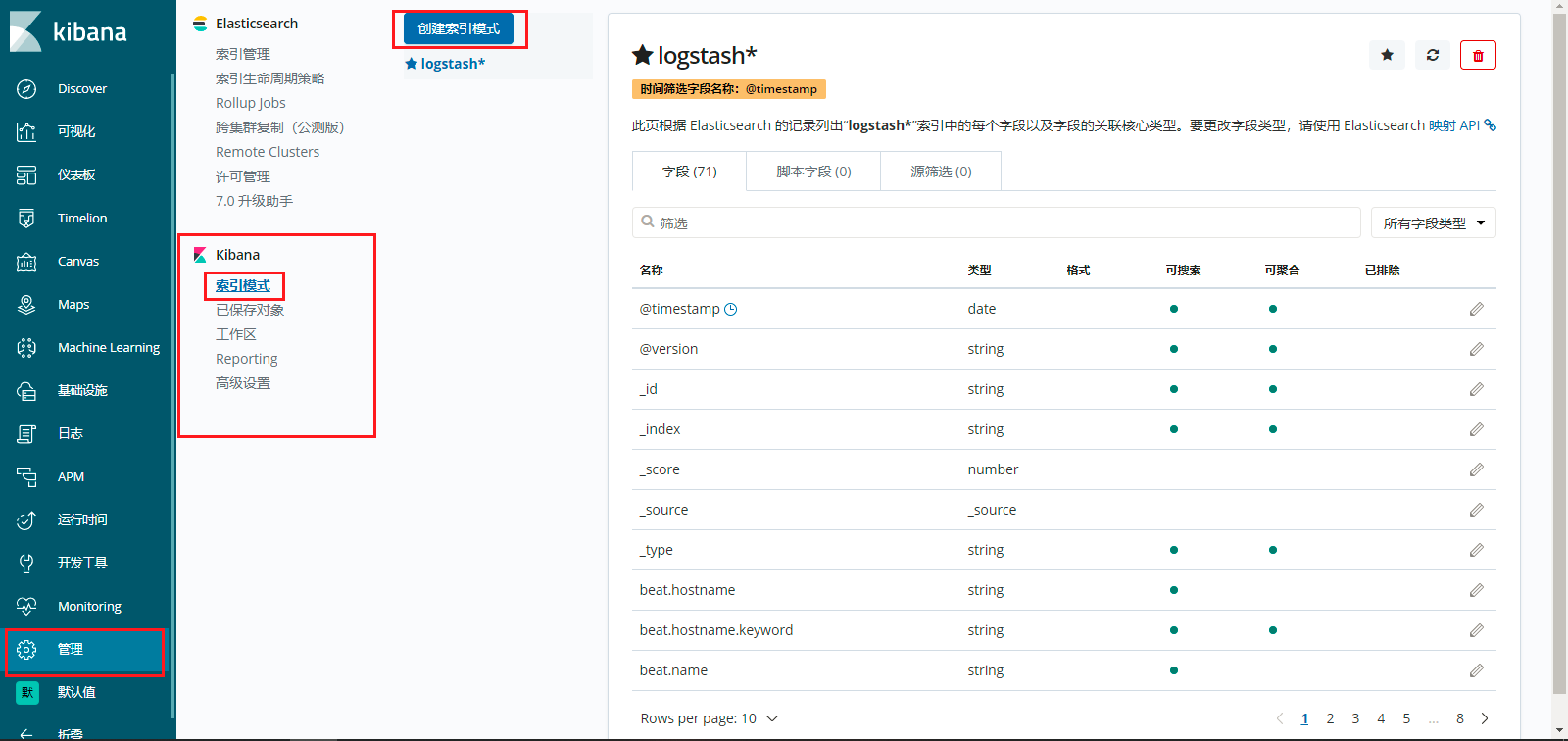

然后可以点击管理,索引模式,创建索引模式,将elasticsearch的索引和kibana进行关联,让kibana管理elasticsearch的索引。

点击创建索引模式,起一个索引模式的名称,如下所示:

然后配置设置,这里根据时间进行筛选数据。

创建完毕,是这样的,如下所示:

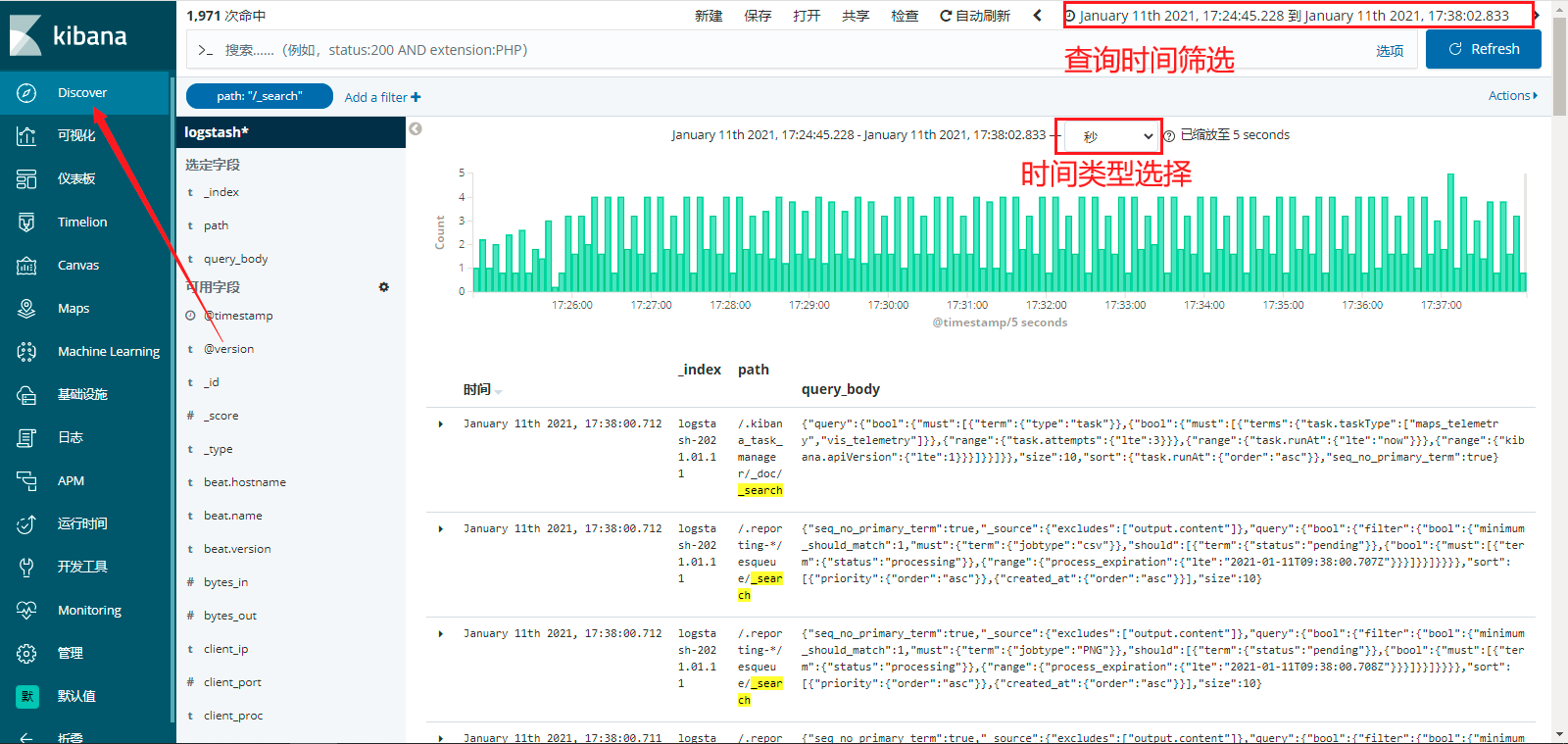

将elasticsearch的索引和kibana进行关联,让kibana管理elasticsearch的索引,然后,可以在Discover进行查看,如下所示:

那么,现在访问http://192.168.110.133:5601/ 这个Elasticsearch业务集群或者节点,创建索引,然后进行查询,就可以在这个Elasticsearch监控集群或者节点进行查看。

然后,在这个Elasticsearch监控集群或者节点进行查看,注意查询时间的选择哦。

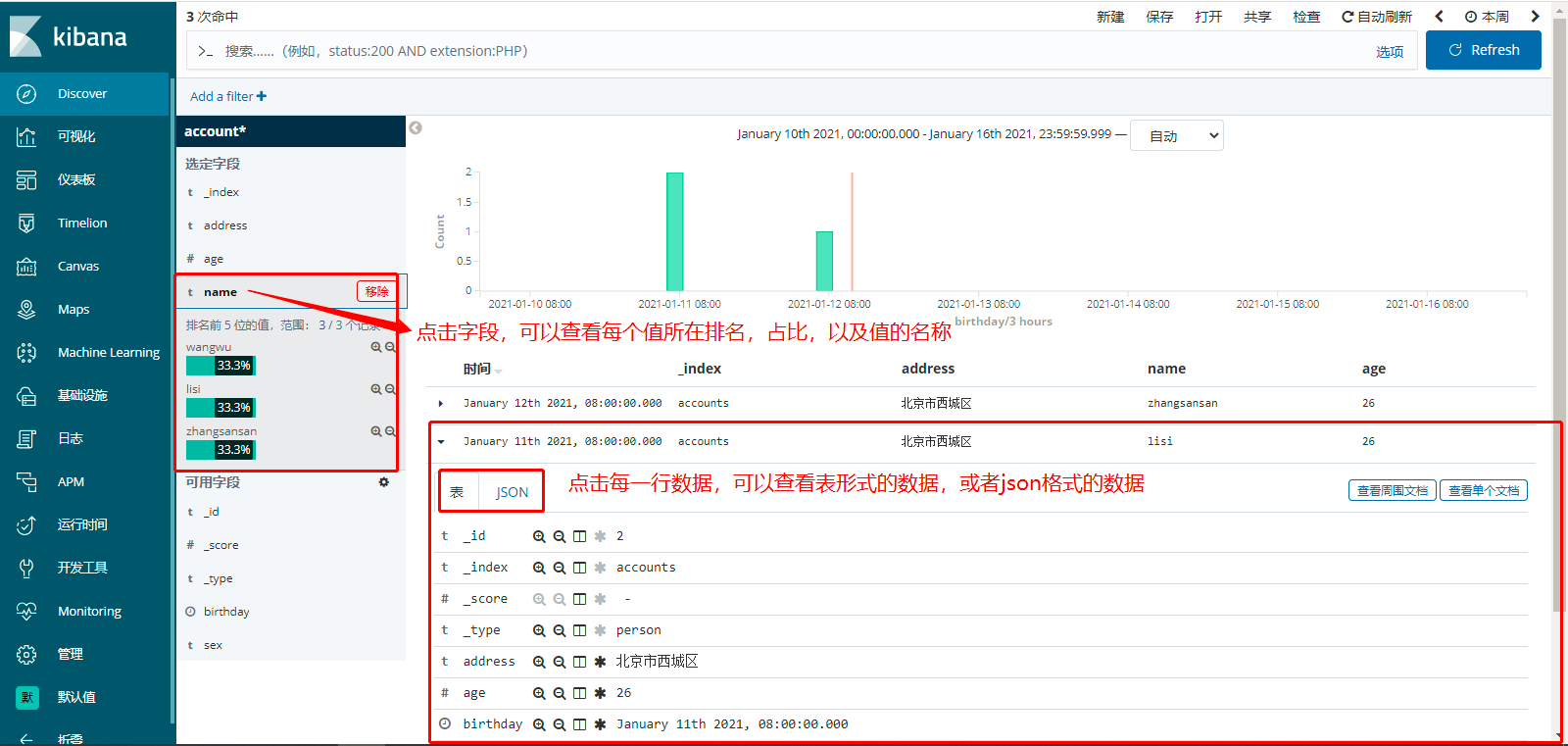

7、关于Kibana的Discover功能的使用,如下所示:

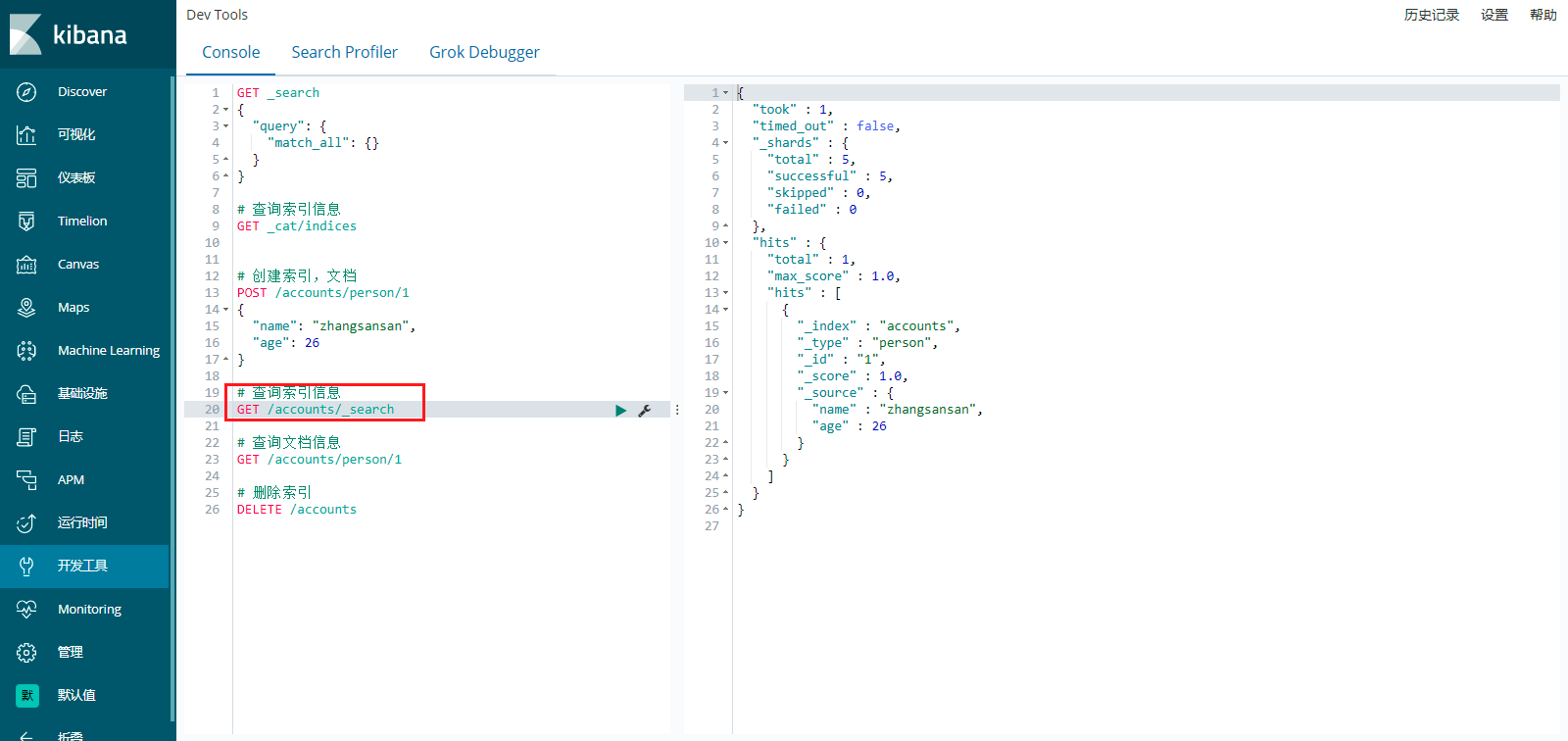

第一步:关于Kibana的使用流程,首先需要创建索引的,可以在Dev Tools(开发工具)功能菜单,创建索引。

第二步:然后在管理功能菜单,Elasticsearch,索引管理,查看创建的索引信息(包含索引配置信息等信息)。

第三步:然后在管理功能菜单,Kibana,索引模式,创建索引模式,创建索引模式成功之后,就可以进行查看了。

第四步:然后在Discover功能菜单、可视化功能菜单,进行查看相关功能。特别需要注意,创建索引模式的时候,第二步将选定时间作为筛选条件,如果Discover右上角的日期时间选择不正确,文档数据是不会正常显示的。

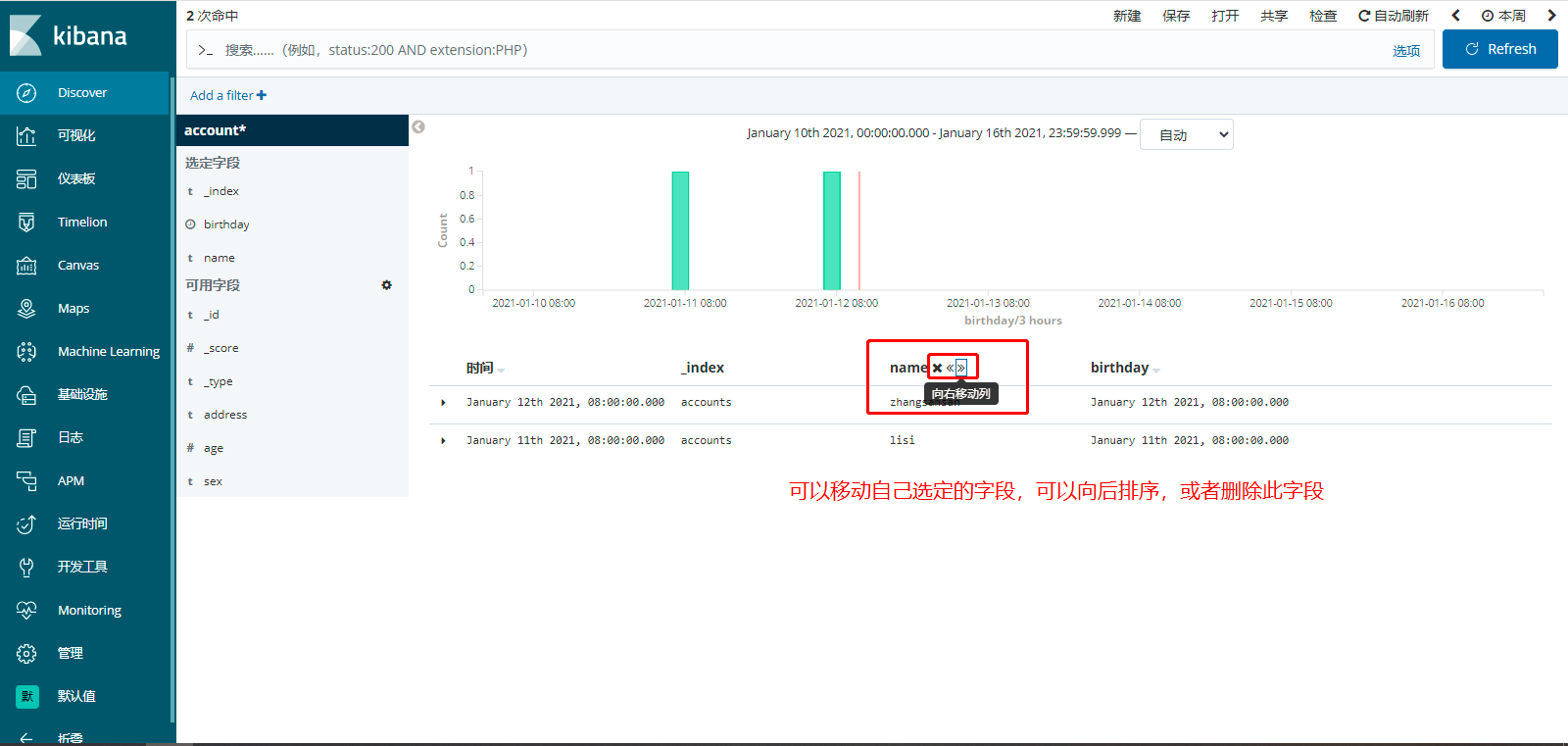

关于,展示的字段,可以排序字段的顺序和是否展示此字段,如下所示:

可以查看,每个字段的值占比,值的内容,以及表格里面每一行的表形式或者json形式展示。

如何使用新建、保存、打开功能,可以方便保存查询条件,方便下次使用,如下所示:

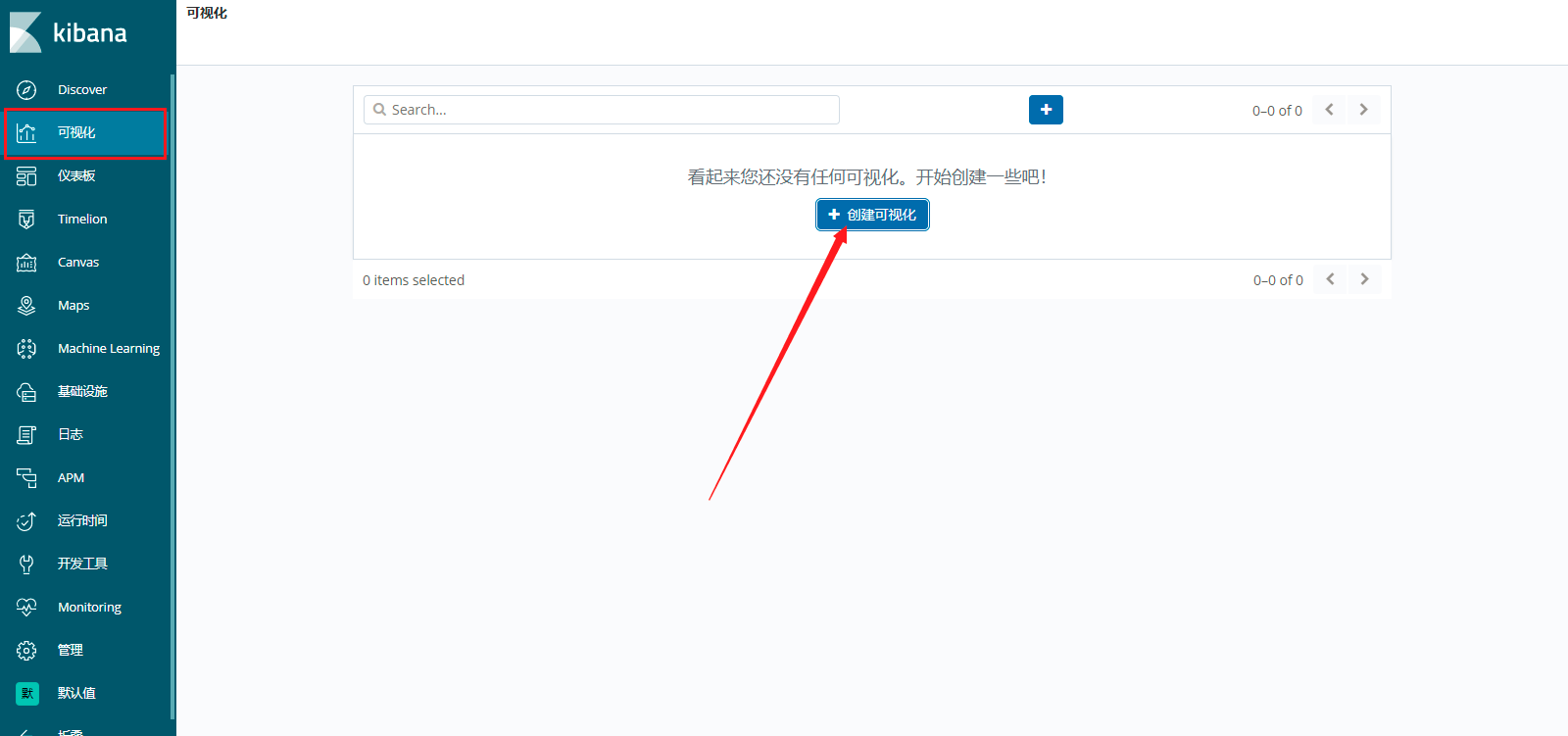

8、Kibana的Visualize可视化分析,虽是拖拉拽,但是这个会了,可以观察接口调用超时、统计指标、方便观察等等指标。

点击创建可视化,选择适合自己的图指标,这玩意没有的话,还得自己写,现在搞成了拖拉拽,方便了很多,如下所示:

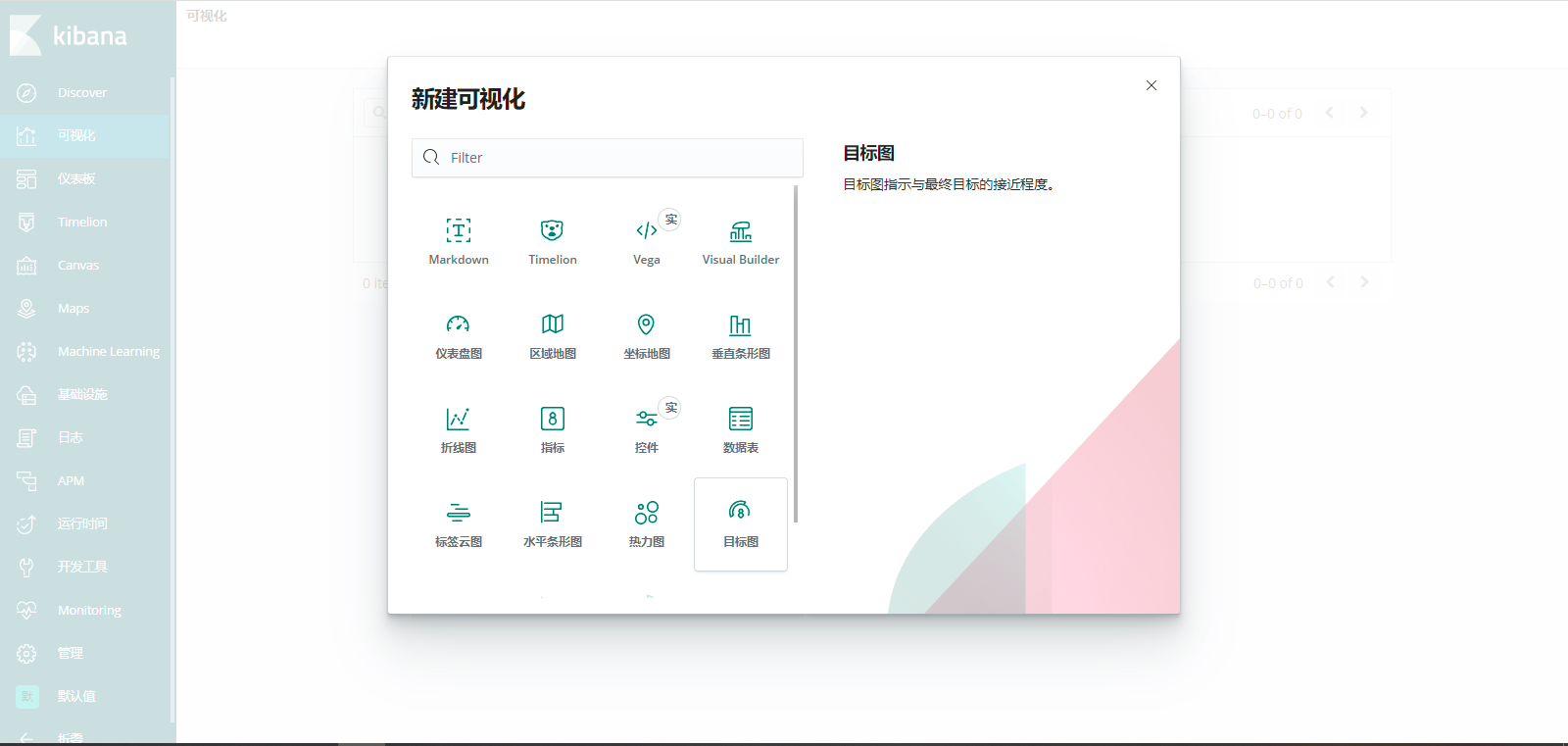

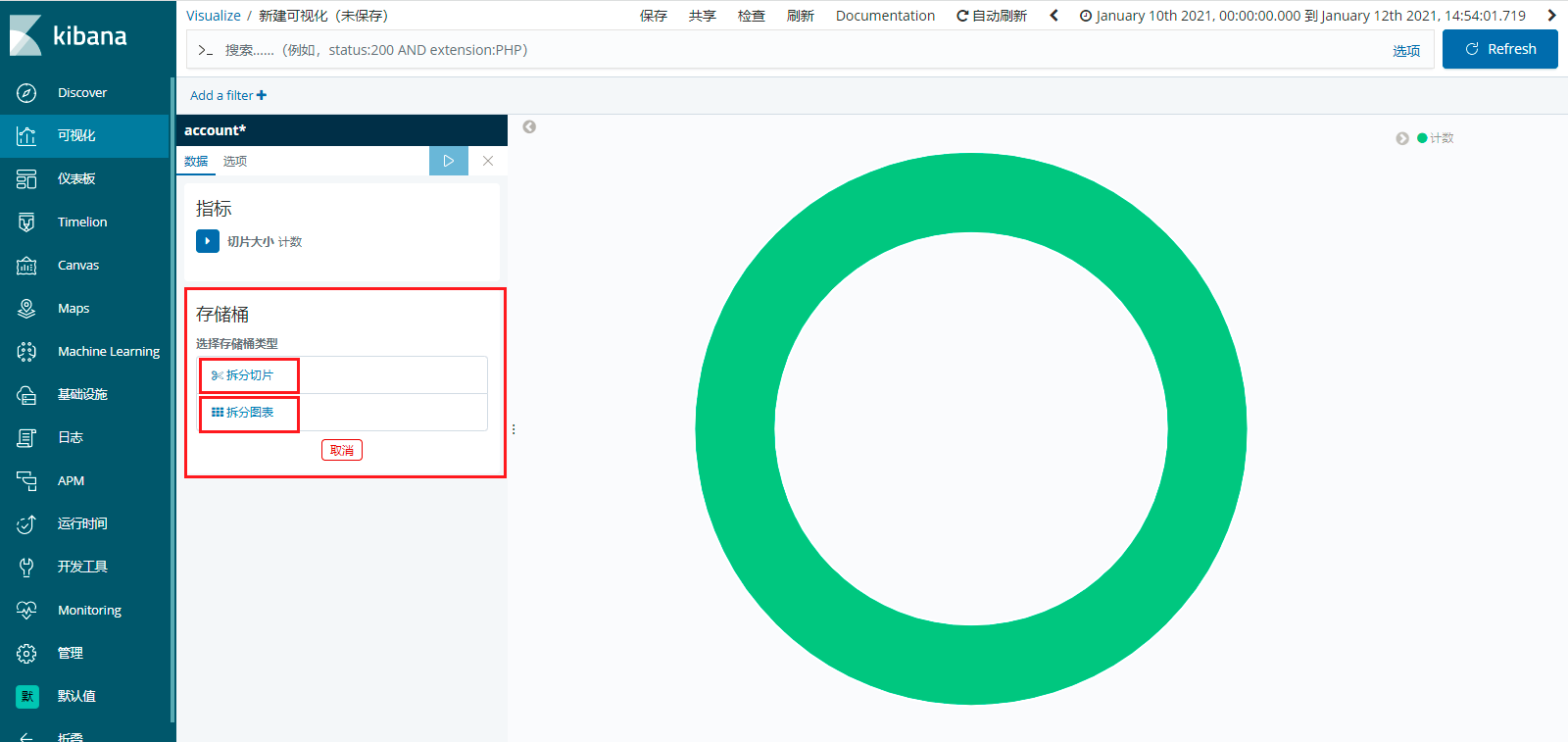

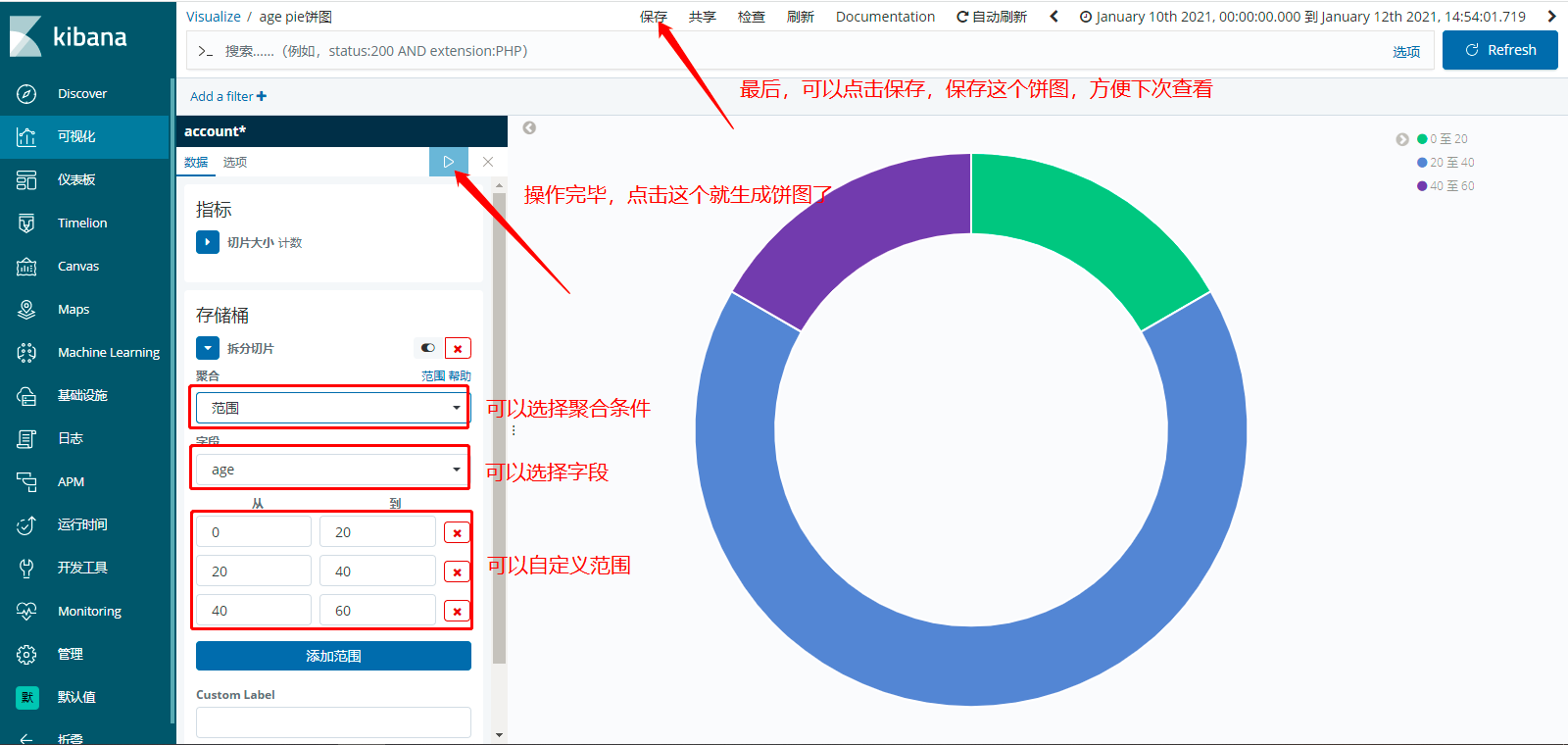

创建饼图,点击饼图,显示如下所示:

可以看到,可以选择,拆分切片、拆分图表,如下所示:

最后,如何制作一个饼图呢,如下所示:

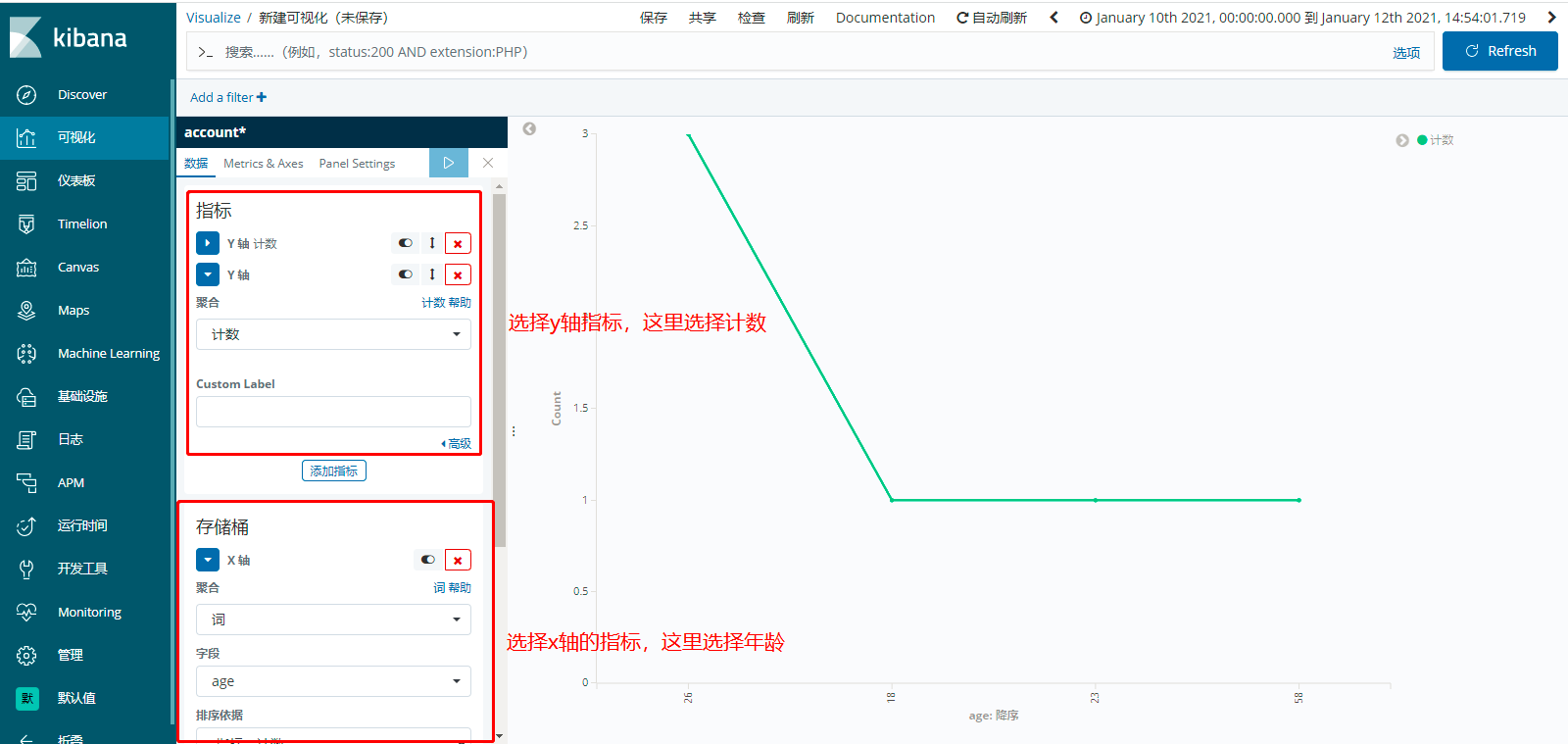

创建折线图,点击折线图。然后,点击基于“新搜索”,选择“索引”。然后添加指标,如下所示:

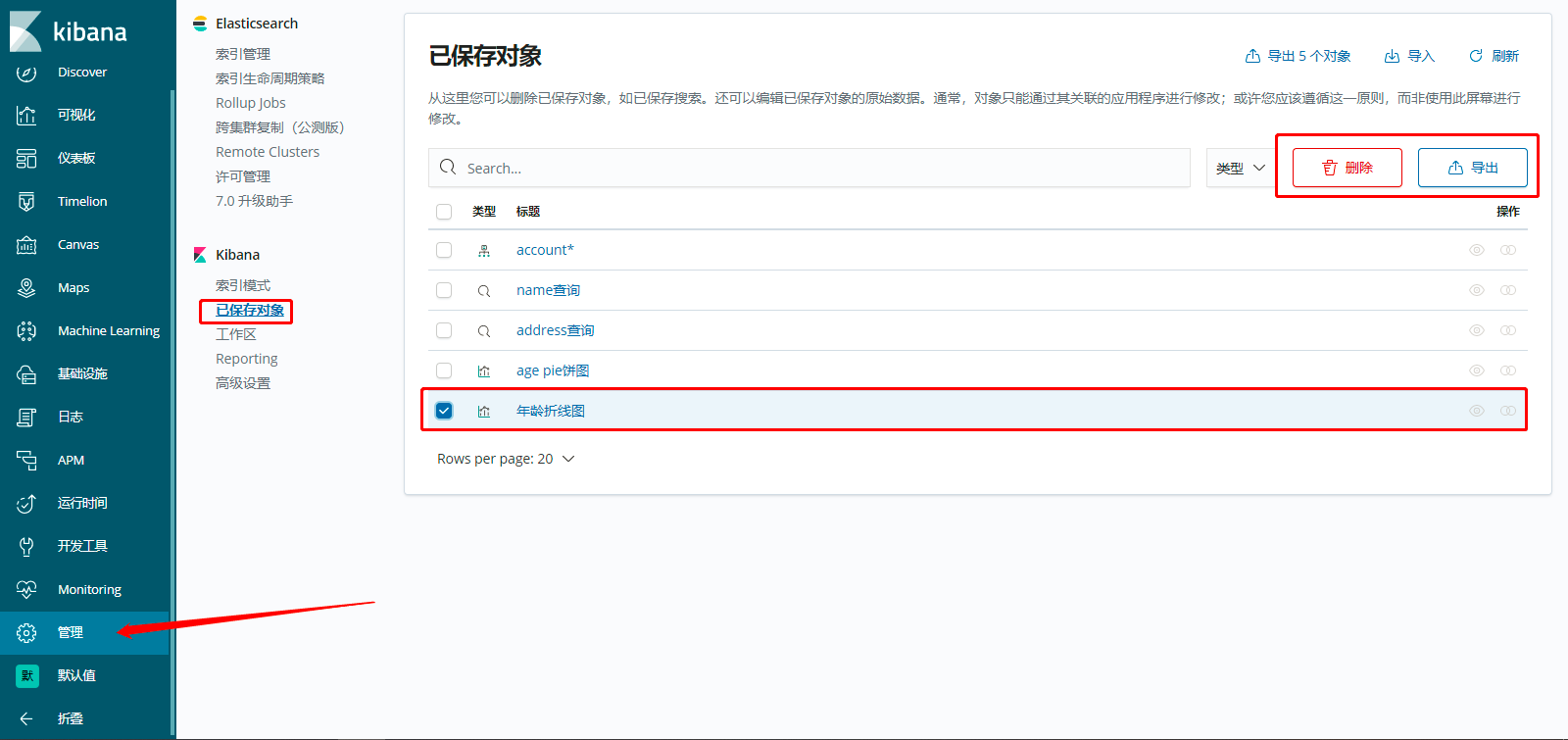

如何删除保存的可视化图,或者保存的查询条件,可以选择删除或者导出功能,如下所示:

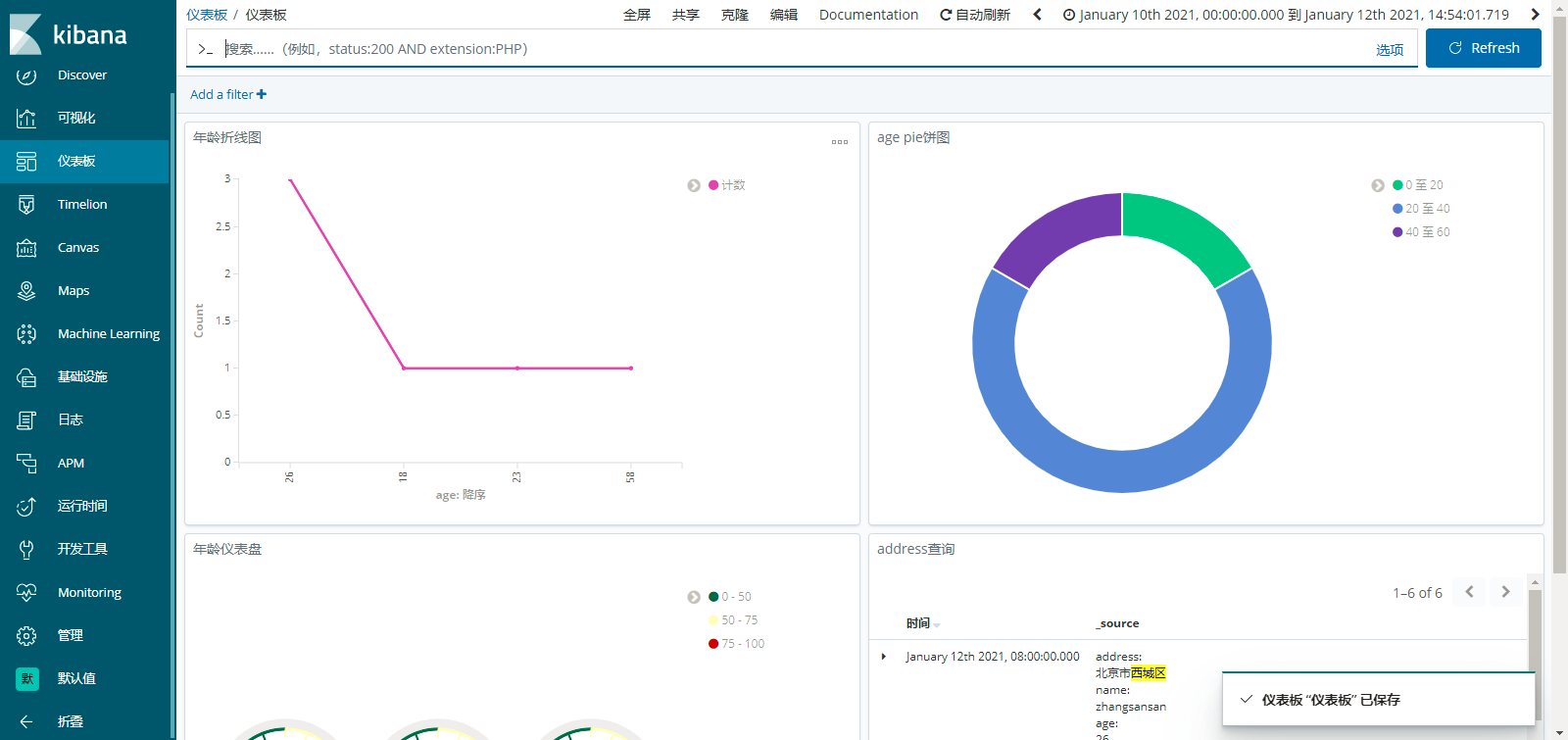

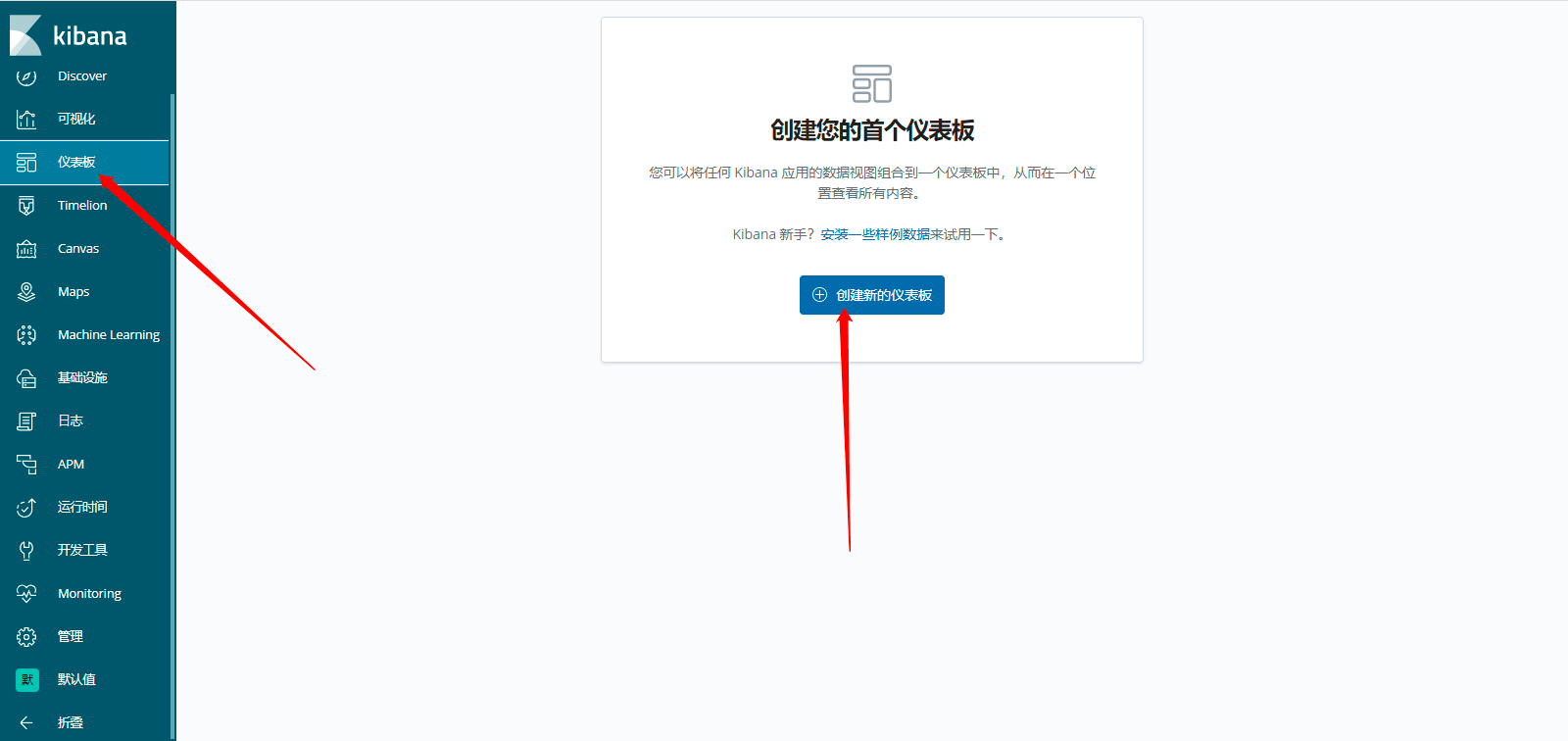

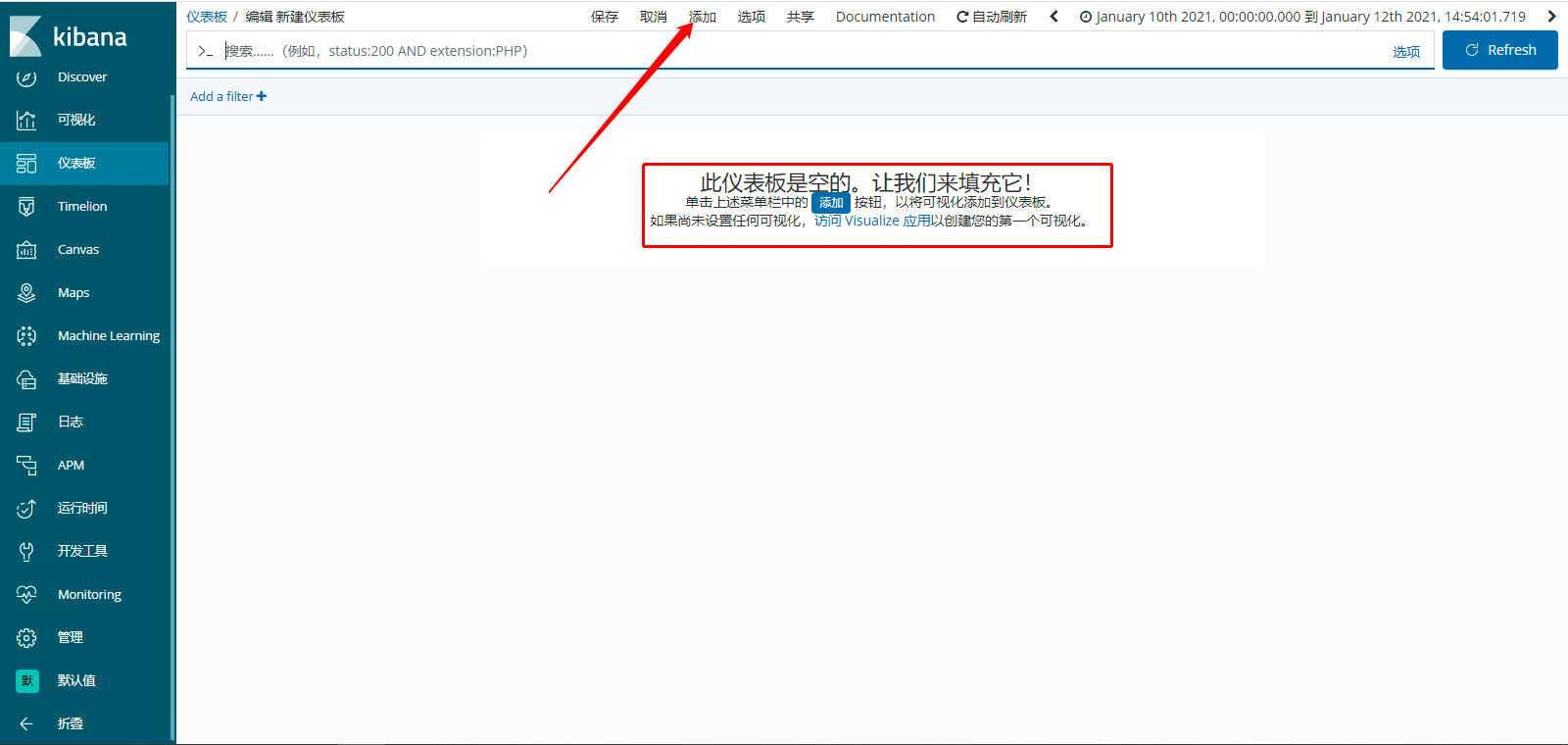

9、如何Kibana的可视化分析已经创建完毕了,可以做一个仪表盘,有时候老外的思想不得不佩服,如下所示:

然后,点击添加按钮,如下所示:

下面,将可视化或者已保存的搜索添加到仪表盘,如下所示:

最终,不过,自己记得保存一下自己添加的仪表盘,不然下次找不到的哦,展示效果,如下所示: