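1. What is SolrCloud?

SolrCloud is the distributed search solution that ships with Solr. Use it when you need large-scale, fault-tolerant, distributed indexing and search.

A system with a small index does not need SolrCloud. It becomes useful once the index is large and query concurrency is high. SolrCloud is built on Solr and ZooKeeper, and its central idea is to use ZooKeeper as the configuration center for the cluster.

Its main features are:

1) Centralized configuration.

2) Automatic failover.

3) Near-real-time search.

4) Automatic load balancing of queries.

Note: ZooKeeper is commonly used as a registry. SolrCloud uses it to manage the cluster: a request first consults ZooKeeper, which determines the Solr server that will handle the search. ZooKeeper also holds the Solr configuration files so that they are managed in one place.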

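2. What is ZooKeeper?

As the name suggests, ZooKeeper is the "zoo keeper" that looks after Hadoop (the elephant), Hive (the bee), and Pig (the pig). The distributed clusters of Apache HBase and Apache Solr rely on it as well. ZooKeeper is an open-source coordination service for distributed applications. It started as a subproject of Hadoop and is now a top-level Apache project.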

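3. SolrCloud structure

To reduce the load on any single machine, SolrCloud spreads indexing and search across several servers. The index is split into shards, each shard is served by several servers, and an indexing or search request is carried out against the servers of the relevant shards.

SolrCloud requires Solr to be deployed on top of ZooKeeper. Because a SolrCloud cluster consists of several servers, ZooKeeper is used to coordinate and manage them.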

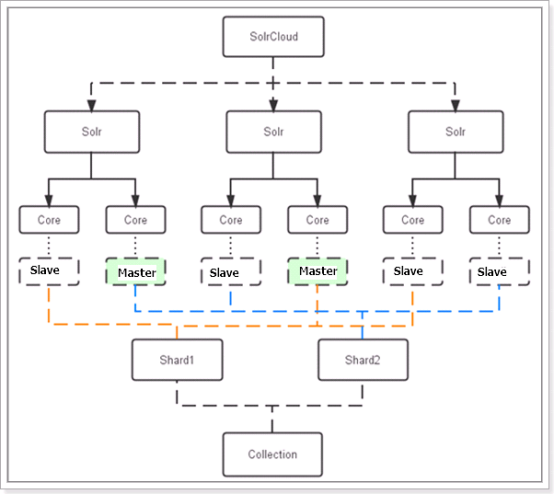

The figure below shows an example SolrCloud deployment:

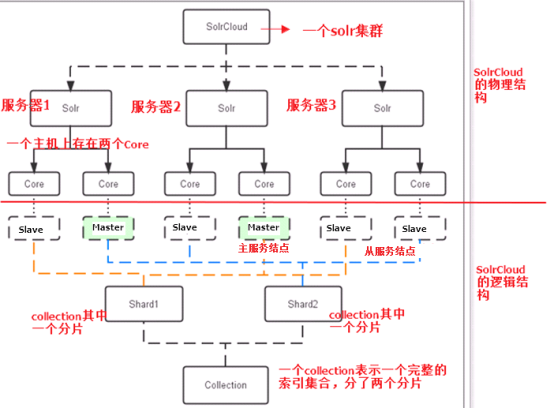

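The figure above can be read as follows:

1) Physical structure

Three Solr instances, each containing two cores, make up one SolrCloud.

2) Logical structure

The collection contains two shards (shard1 and shard2). Each shard consists of three cores: one leader and two replicas. The leader is elected through ZooKeeper, and ZooKeeper coordinates the three cores of each shard so that their index data stays consistent, which provides high availability. User requests are served from shard1 and shard2 separately, which helps with high concurrency.

a. Collection

A collection is a complete logical index in a SolrCloud cluster. It is usually divided into one or more shards, all of which use the same configuration. For example, you could create one collection for product search.

collection = shard1 + shard2 + ... + shardX

b. Core

A core is an independent unit of execution in Solr that provides indexing and search. A shard consists of one or more cores. Because a collection consists of several shards, a collection generally consists of several cores.

c. Master and slave

The master is the primary node in a master-slave structure, and a slave is a secondary (backup) node. Within one shard, the master and its slaves store the same data, which is what makes the shard highly available.

d. Shard

A shard is a logical slice of a collection. Each shard is made up of one or more replicas, and an election decides which one is the leader.

Note: a collection is one complete index stored in slices. In this example there are two shards, and their contents differ. Each shard is stored on three nodes, one master and two slaves, whose contents are identical.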
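As a rough illustration of how shards, copies, and cores relate, the sketch below computes how many cores a collection needs. The function name `total_cores` is made up for this example and is not part of Solr.

```shell
# Hypothetical helper: number of cores a collection needs, given its
# shard count and the number of copies (leader included) per shard.
total_cores() {
    shards=$1
    copies_per_shard=$2
    echo $((shards * copies_per_shard))
}

# The example in the figure: 2 shards, each with 1 leader + 2 replicas.
total_cores 2 3   # prints 6
```

Six cores spread over three Solr instances gives the two cores per instance described in the physical structure.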

As shown in the figure below:

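4. Setting up SolrCloud

This tutorial installs everything on a single machine, so it builds a pseudo-cluster. For a real production environment, replace the pseudo-cluster addresses with the real server IPs; the steps are the same.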

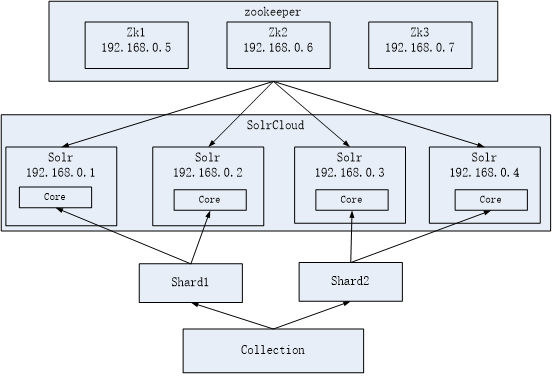

The SolrCloud architecture is as follows:

Prerequisite: install a JDK first.

Note: this pseudo-cluster was built on a single virtual machine with 1 GB of memory.

5. Installing the ZooKeeper cluster

Step 1: Extract ZooKeeper with tar -zxvf zookeeper-3.4.6.tar.gz, then copy zookeeper-3.4.6 into /usr/local/solr-cloud three times, naming the copies zookeeper1, zookeeper2, and zookeeper3.

    # First extract the ZooKeeper archive.
    [root@localhost package]# tar -zxvf zookeeper-3.4.6.tar.gz -C /home/hadoop/soft/
    # Then create a solr-cloud directory.
    [root@localhost ~]# cd /usr/local/
    [root@localhost local]# ls
    bin etc games include lib libexec sbin share solr src
    [root@localhost local]# mkdir solr-cloud
    [root@localhost local]# ls
    bin etc games include lib libexec sbin share solr solr-cloud src
    [root@localhost local]#
    # Make three copies named zookeeper1, zookeeper2, and zookeeper3.
    [root@localhost soft]# cp -r zookeeper-3.4.6/ /usr/local/solr-cloud/zookeeper1
    [root@localhost soft]# cd /usr/local/solr-cloud/
    [root@localhost solr-cloud]# ls
    zookeeper1
    [root@localhost solr-cloud]# cp -r zookeeper1/ zookeeper2
    [root@localhost solr-cloud]# cp -r zookeeper1/ zookeeper3

Step 2: Go into the zookeeper1 directory and create a data directory. Inside data, create a file named myid whose content is "1" (echo 1 >> data/myid).

    [root@localhost solr-cloud]# ls
    zookeeper1 zookeeper2 zookeeper3
    [root@localhost solr-cloud]# cd zookeeper1
    [root@localhost zookeeper1]# ls
    bin CHANGES.txt contrib docs ivy.xml LICENSE.txt README_packaging.txt recipes zookeeper-3.4.6.jar zookeeper-3.4.6.jar.md5
    build.xml conf dist-maven ivysettings.xml lib NOTICE.txt README.txt src zookeeper-3.4.6.jar.asc zookeeper-3.4.6.jar.sha1
    [root@localhost zookeeper1]# mkdir data
    [root@localhost zookeeper1]# cd data/
    [root@localhost data]# ls
    [root@localhost data]# echo 1 >> myid
    [root@localhost data]# cat myid

Step 3: Go into the conf directory and rename zoo_sample.cfg to zoo.cfg.

    [root@localhost zookeeper1]# ls
    bin CHANGES.txt contrib dist-maven ivysettings.xml lib NOTICE.txt README.txt src zookeeper-3.4.6.jar.asc zookeeper-3.4.6.jar.sha1
    build.xml conf data docs ivy.xml LICENSE.txt README_packaging.txt recipes zookeeper-3.4.6.jar zookeeper-3.4.6.jar.md5
    [root@localhost zookeeper1]# cd conf/
    [root@localhost conf]# ls
    configuration.xsl log4j.properties zoo_sample.cfg
    [root@localhost conf]# mv zoo_sample.cfg zoo.cfg
    [root@localhost conf]# ls
    configuration.xsl log4j.properties zoo.cfg
    [root@localhost conf]#

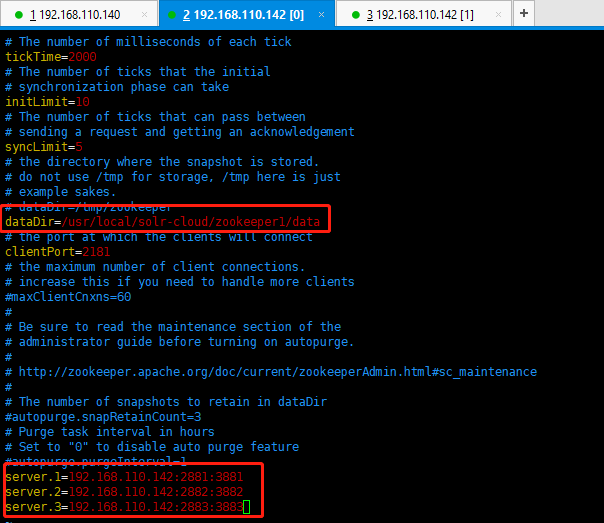

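Step 4: Edit zoo.cfg.

Change: dataDir=/usr/local/solr-cloud/zookeeper1/data

Note: clientPort=2181 (2182 for zookeeper2, 2183 for zookeeper3)

Append the following three entries at the end of the file. Note that 2881 and 3881 are, respectively, the port the ZooKeeper servers use to talk to each other and the port they use for leader election, while 2181 is the port that clients connect to. Do not mix them up.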

server.1=192.168.110.142:2881:3881

server.2=192.168.110.142:2882:3882

server.3=192.168.110.142:2883:3883
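To avoid editing three nearly identical files by hand, the settings from this step can be generated. The following is only a minimal sketch and not part of ZooKeeper: `render_zoo_cfg`, `ZK_HOST`, and `ZK_BASE` are illustrative names, and the tickTime, initLimit, and syncLimit values are assumed to be the defaults found in zoo_sample.cfg.

```shell
# Sketch: print the zoo.cfg for pseudo-cluster instance N (1, 2 or 3).
# ZK_HOST and ZK_BASE are illustrative shell variables, not ZooKeeper settings.
ZK_HOST=${ZK_HOST:-192.168.110.142}
ZK_BASE=${ZK_BASE:-/usr/local/solr-cloud}

render_zoo_cfg() {
    id=$1
    # Assumed defaults, as shipped in zoo_sample.cfg.
    echo "tickTime=2000"
    echo "initLimit=10"
    echo "syncLimit=5"
    echo "dataDir=$ZK_BASE/zookeeper$id/data"
    # Client ports 2181, 2182, 2183 for instances 1, 2, 3.
    echo "clientPort=$((2180 + id))"
    # Every instance lists all three servers:
    # 288N is the peer communication port, 388N the election port.
    for n in 1 2 3; do
        echo "server.$n=$ZK_HOST:$((2880 + n)):$((3880 + n))"
    done
}

render_zoo_cfg 2
```

Redirecting the output, for example `render_zoo_cfg 2 > /usr/local/solr-cloud/zookeeper2/conf/zoo.cfg`, would write the file for the second instance.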

Step 5: Repeat steps 2 to 4 for zookeeper2 and zookeeper3.

Changes for zookeeper2:

a) myid contains 2

b) dataDir=/usr/local/solr-cloud/zookeeper2/data

c) clientPort=2182

Changes for zookeeper3:

a) myid contains 3

b) dataDir=/usr/local/solr-cloud/zookeeper3/data

c) clientPort=2183

    [root@localhost conf]# cd ../../zookeeper2
    [root@localhost zookeeper2]# ls
    bin CHANGES.txt contrib docs ivy.xml LICENSE.txt README_packaging.txt recipes zookeeper-3.4.6.jar zookeeper-3.4.6.jar.md5
    build.xml conf dist-maven ivysettings.xml lib NOTICE.txt README.txt src zookeeper-3.4.6.jar.asc zookeeper-3.4.6.jar.sha1
    [root@localhost zookeeper2]# mkdir data
    [root@localhost zookeeper2]# cd data/
    [root@localhost data]# ls
    [root@localhost data]# echo 2 >> myid
    [root@localhost data]# ls
    myid
    [root@localhost data]# cat myid
    2
    [root@localhost data]# ls
    myid
    [root@localhost data]# cd ..
    [root@localhost zookeeper2]# ls
    bin CHANGES.txt contrib dist-maven ivysettings.xml lib NOTICE.txt README.txt src zookeeper-3.4.6.jar.asc zookeeper-3.4.6.jar.sha1
    build.xml conf data docs ivy.xml LICENSE.txt README_packaging.txt recipes zookeeper-3.4.6.jar zookeeper-3.4.6.jar.md5
    [root@localhost zookeeper2]# cd conf/
    [root@localhost conf]# ls
    configuration.xsl log4j.properties zoo_sample.cfg
    [root@localhost conf]# mv zoo_sample.cfg zoo.cfg
    [root@localhost conf]# ls
    configuration.xsl log4j.properties zoo.cfg
    [root@localhost conf]# vim zoo.cfg
    [root@localhost conf]# cd ../..
    [root@localhost solr-cloud]# ls
    zookeeper1 zookeeper2 zookeeper3
    [root@localhost solr-cloud]# cd zookeeper3
    [root@localhost zookeeper3]# ls
    bin CHANGES.txt contrib docs ivy.xml LICENSE.txt README_packaging.txt recipes zookeeper-3.4.6.jar zookeeper-3.4.6.jar.md5
    build.xml conf dist-maven ivysettings.xml lib NOTICE.txt README.txt src zookeeper-3.4.6.jar.asc zookeeper-3.4.6.jar.sha1
    [root@localhost zookeeper3]# mkdir data
    [root@localhost zookeeper3]# ls
    bin CHANGES.txt contrib dist-maven ivysettings.xml lib NOTICE.txt README.txt src zookeeper-3.4.6.jar.asc zookeeper-3.4.6.jar.sha1
    build.xml conf data docs ivy.xml LICENSE.txt README_packaging.txt recipes zookeeper-3.4.6.jar zookeeper-3.4.6.jar.md5
    [root@localhost zookeeper3]# cd data/
    [root@localhost data]# ls
    [root@localhost data]# echo 3 >> myid
    [root@localhost data]# ls
    myid
    [root@localhost data]# cd ../..
    [root@localhost solr-cloud]# ls
    zookeeper1 zookeeper2 zookeeper3
    [root@localhost solr-cloud]# cd zookeeper3
    [root@localhost zookeeper3]# ls
    bin CHANGES.txt contrib dist-maven ivysettings.xml lib NOTICE.txt README.txt src zookeeper-3.4.6.jar.asc zookeeper-3.4.6.jar.sha1
    build.xml conf data docs ivy.xml LICENSE.txt README_packaging.txt recipes zookeeper-3.4.6.jar zookeeper-3.4.6.jar.md5
    [root@localhost zookeeper3]# cd conf/
    [root@localhost conf]# ls
    configuration.xsl log4j.properties zoo_sample.cfg
    [root@localhost conf]# mv zoo_sample.cfg zoo.cfg
    [root@localhost conf]# ls
    configuration.xsl log4j.properties zoo.cfg
    [root@localhost conf]# vim zoo.cfg
    [root@localhost conf]#
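The per-instance data directory and myid file can also be created in a loop. This is a small sketch under the directory layout used in this tutorial; `init_myids` is a hypothetical helper name. It writes with `>` rather than `>>`, so running it twice does not append a second line to myid.

```shell
# Sketch: create data/myid for zookeeper1..3 under a base directory.
# init_myids is a hypothetical helper; pass /usr/local/solr-cloud for the
# layout used in this tutorial.
init_myids() {
    base=$1
    for id in 1 2 3; do
        mkdir -p "$base/zookeeper$id/data"
        # '>' overwrites, so re-running never leaves two lines in myid.
        echo "$id" > "$base/zookeeper$id/data/myid"
    done
}

# Try it in a scratch directory first.
demo=$(mktemp -d)
init_myids "$demo"
cat "$demo/zookeeper2/data/myid"   # prints 2
```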

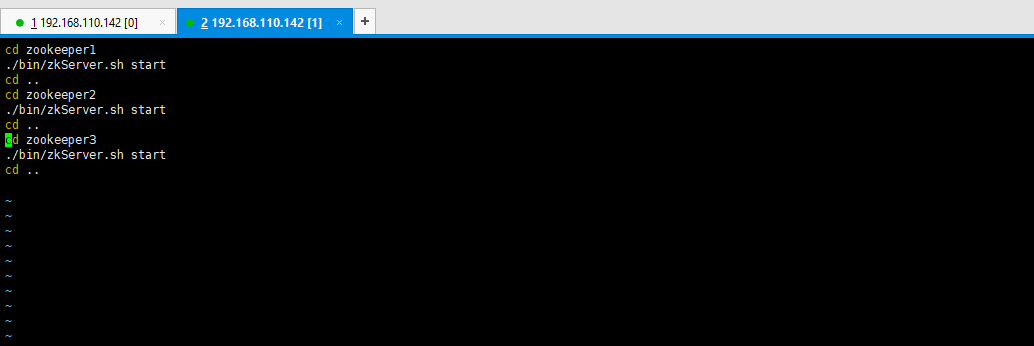

Step 6: Start the three ZooKeeper instances.

/usr/local/solr-cloud/zookeeper1/bin/zkServer.sh start

/usr/local/solr-cloud/zookeeper2/bin/zkServer.sh start

/usr/local/solr-cloud/zookeeper3/bin/zkServer.sh start

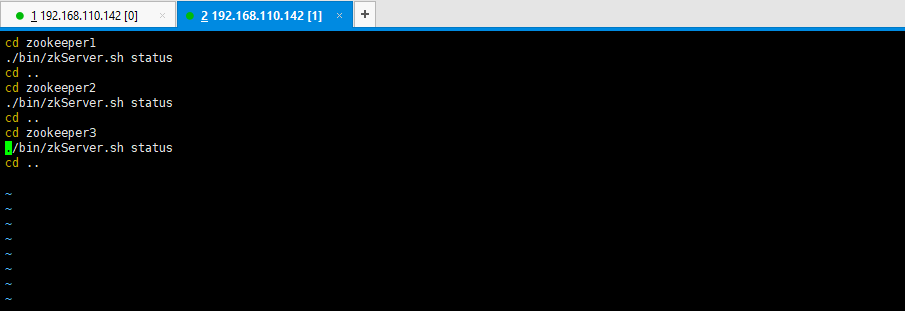

Check the cluster status:

/usr/local/solr-cloud/zookeeper1/bin/zkServer.sh status

/usr/local/solr-cloud/zookeeper2/bin/zkServer.sh status

/usr/local/solr-cloud/zookeeper3/bin/zkServer.sh status

    [root@localhost ~]# /usr/local/solr-cloud/zookeeper1/bin/zkServer.sh start
    JMX enabled by default
    Using config: /usr/local/solr-cloud/zookeeper1/bin/../conf/zoo.cfg
    Starting zookeeper ... STARTED
    [root@localhost ~]# /usr/local/solr-cloud/zookeeper2/bin/zkServer.sh start
    JMX enabled by default
    Using config: /usr/local/solr-cloud/zookeeper2/bin/../conf/zoo.cfg
    Starting zookeeper ... STARTED
    [root@localhost ~]# /usr/local/solr-cloud/zookeeper3/bin/zkServer.sh start
    JMX enabled by default
    Using config: /usr/local/solr-cloud/zookeeper3/bin/../conf/zoo.cfg
    Starting zookeeper ... STARTED
    [root@localhost ~]# /usr/local/solr-cloud/zookeeper1/bin/zkServer.sh status
    JMX enabled by default
    Using config: /usr/local/solr-cloud/zookeeper1/bin/../conf/zoo.cfg
    Mode: follower
    [root@localhost ~]# /usr/local/solr-cloud/zookeeper2/bin/zkServer.sh status
    JMX enabled by default
    Using config: /usr/local/solr-cloud/zookeeper2/bin/../conf/zoo.cfg
    Mode: leader
    [root@localhost ~]# /usr/local/solr-cloud/zookeeper3/bin/zkServer.sh status
    JMX enabled by default
    Using config: /usr/local/solr-cloud/zookeeper3/bin/../conf/zoo.cfg
    Mode: follower
    [root@localhost ~]#

Step 7: Open the ports that ZooKeeper uses, or simply stop the firewall.

service iptables stop

You can write a simple startup script, as follows:

    [root@localhost solr-cloud]# vim start-zookeeper.sh
    [root@localhost solr-cloud]# chmod u+x start-zookeeper.sh
    [root@localhost solr-cloud]# ll
    total 16
    -rwxr--r--. 1 root root 133 Sep 13 18:32 start-zookeeper.sh
    drwxr-xr-x. 11 root root 4096 Sep 13 02:13 zookeeper1
    drwxr-xr-x. 11 root root 4096 Sep 13 02:35 zookeeper2
    drwxr-xr-x. 11 root root 4096 Sep 13 02:37 zookeeper3
    [root@localhost solr-cloud]#

The script for checking the startup status is as follows:
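The script bodies themselves are not reproduced in the text, so the following is only a sketch of what start-zookeeper.sh and its status and stop counterparts could contain. `zk_cmds` and `ZK_BASE` are illustrative names. The helper only prints the commands, so it is safe to preview; piping its output to `sh` actually runs them.

```shell
# Sketch: print the zkServer.sh command for each of the three instances.
# zk_cmds and ZK_BASE are illustrative names, not part of ZooKeeper.
ZK_BASE=${ZK_BASE:-/usr/local/solr-cloud}

zk_cmds() {
    action=$1    # start | stop | status
    for id in 1 2 3; do
        echo "$ZK_BASE/zookeeper$id/bin/zkServer.sh $action"
    done
}

# Preview what a start script would run.
zk_cmds start

# To execute instead of preview:
#   zk_cmds start | sh
#   zk_cmds status | sh
```

Printing first and executing second keeps one helper usable for start, status, and stop without three nearly identical scripts.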

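6. Installing Tomcat (Note: a SolrCloud deployment depends on ZooKeeper, so every ZooKeeper server must be started first.)

Note: this section reuses the standalone Solr installation built earlier. To save time, copy the standalone Solr directly and then modify its configuration files.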

https://www.cnblogs.com/biehongli/p/11443347.html

Step 1: Extract apache-tomcat-7.0.47.tar.gz with tar -zxvf apache-tomcat-7.0.47.tar.gz.

    [root@localhost solr-cloud]# tar -zxvf apache-tomcat-7.0.47.tar.gz

Step 2: Copy the extracted Tomcat into /usr/local/solr-cloud/ four times.

/usr/local/solr-cloud/tomcat01

/usr/local/solr-cloud/tomcat02

/usr/local/solr-cloud/tomcat03

/usr/local/solr-cloud/tomcat04

    [root@localhost package]# cp -r apache-tomcat-7.0.47 /usr/local/solr-cloud/tomcat01
    [root@localhost package]# cp -r apache-tomcat-7.0.47 /usr/local/solr-cloud/tomcat02
    [root@localhost package]# cp -r apache-tomcat-7.0.47 /usr/local/solr-cloud/tomcat03
    [root@localhost package]# cp -r apache-tomcat-7.0.47 /usr/local/solr-cloud/tomcat04
    [root@localhost package]# cd /usr/local/solr-cloud/
    [root@localhost solr-cloud]# ls
    start-zookeeper.sh status-zookeeper.sh stop-zookeeper.sh tomcat01 tomcat02 tomcat03 tomcat04 zookeeper1 zookeeper2 zookeeper3
    [root@localhost solr-cloud]#

Step 3: Edit server.xml in each Tomcat and change its ports, so that the four Tomcat instances can run side by side without port conflicts.

[root@localhost solr-cloud]# vim tomcat01/conf/server.xml

[root@localhost solr-cloud]# vim tomcat02/conf/server.xml

[root@localhost solr-cloud]# vim tomcat03/conf/server.xml

[root@localhost solr-cloud]# vim tomcat04/conf/server.xml

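For tomcat01, change 8005 to 8105, 8080 to 8180, and 8009 to 8109. For each subsequent Tomcat, increase the second digit by one (tomcat02 uses 8205, 8280, and 8209, and so on).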

    <?xml version='1.0' encoding='utf-8'?>
    <!--
      Licensed to the Apache Software Foundation (ASF) under one or more
      contributor license agreements. See the NOTICE file distributed with
      this work for additional information regarding copyright ownership.
      The ASF licenses this file to You under the Apache License, Version 2.0
      (the "License"); you may not use this file except in compliance with
      the License. You may obtain a copy of the License at

          http://www.apache.org/licenses/LICENSE-2.0

      Unless required by applicable law or agreed to in writing, software
      distributed under the License is distributed on an "AS IS" BASIS,
      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
      See the License for the specific language governing permissions and
      limitations under the License.
    -->
    <!-- Note: A "Server" is not itself a "Container", so you may not
         define subcomponents such as "Valves" at this level.
         Documentation at /docs/config/server.html
     -->
    <Server port="8105" shutdown="SHUTDOWN">
      <!-- Security listener. Documentation at /docs/config/listeners.html
      <Listener className="org.apache.catalina.security.SecurityListener" />
      -->
      <!--APR library loader. Documentation at /docs/apr.html -->
      <Listener className="org.apache.catalina.core.AprLifecycleListener" SSLEngine="on" />
      <!--Initialize Jasper prior to webapps are loaded. Documentation at /docs/jasper-howto.html -->
      <Listener className="org.apache.catalina.core.JasperListener" />
      <!-- Prevent memory leaks due to use of particular java/javax APIs-->
      <Listener className="org.apache.catalina.core.JreMemoryLeakPreventionListener" />
      <Listener className="org.apache.catalina.mbeans.GlobalResourcesLifecycleListener" />
      <Listener className="org.apache.catalina.core.ThreadLocalLeakPreventionListener" />

      <!-- Global JNDI resources
           Documentation at /docs/jndi-resources-howto.html
      -->
      <GlobalNamingResources>
        <!-- Editable user database that can also be used by
             UserDatabaseRealm to authenticate users
        -->
        <Resource name="UserDatabase" auth="Container"
                  type="org.apache.catalina.UserDatabase"
                  description="User database that can be updated and saved"
                  factory="org.apache.catalina.users.MemoryUserDatabaseFactory"
                  pathname="conf/tomcat-users.xml" />
      </GlobalNamingResources>

      <!-- A "Service" is a collection of one or more "Connectors" that share
           a single "Container" Note: A "Service" is not itself a "Container",
           so you may not define subcomponents such as "Valves" at this level.
           Documentation at /docs/config/service.html
       -->
      <Service name="Catalina">

        <!--The connectors can use a shared executor, you can define one or more named thread pools-->
        <!--
        <Executor name="tomcatThreadPool" namePrefix="catalina-exec-"
            maxThreads="150" minSpareThreads="4"/>
        -->


        <!-- A "Connector" represents an endpoint by which requests are received
             and responses are returned. Documentation at :
             Java HTTP Connector: /docs/config/http.html (blocking & non-blocking)
             Java AJP Connector: /docs/config/ajp.html
             APR (HTTP/AJP) Connector: /docs/apr.html
             Define a non-SSL HTTP/1.1 Connector on port 8080
        -->
        <Connector port="8180" protocol="HTTP/1.1"
                   connectionTimeout="20000"
                   redirectPort="8443" />
        <!-- A "Connector" using the shared thread pool-->
        <!--
        <Connector executor="tomcatThreadPool"
                   port="8080" protocol="HTTP/1.1"
                   connectionTimeout="20000"
                   redirectPort="8443" />
        -->
        <!-- Define a SSL HTTP/1.1 Connector on port 8443
             This connector uses the JSSE configuration, when using APR, the
             connector should be using the OpenSSL style configuration
             described in the APR documentation -->
        <!--
        <Connector port="8443" protocol="HTTP/1.1" SSLEnabled="true"
                   maxThreads="150" scheme="https" secure="true"
                   clientAuth="false" sslProtocol="TLS" />
        -->

        <!-- Define an AJP 1.3 Connector on port 8009 -->
        <Connector port="8109" protocol="AJP/1.3" redirectPort="8443" />


        <!-- An Engine represents the entry point (within Catalina) that processes
             every request. The Engine implementation for Tomcat stand alone
             analyzes the HTTP headers included with the request, and passes them
             on to the appropriate Host (virtual host).
             Documentation at /docs/config/engine.html -->

        <!-- You should set jvmRoute to support load-balancing via AJP ie :
        <Engine name="Catalina" defaultHost="localhost" jvmRoute="jvm1">
        -->
        <Engine name="Catalina" defaultHost="localhost">

          <!--For clustering, please take a look at documentation at:
              /docs/cluster-howto.html (simple how to)
              /docs/config/cluster.html (reference documentation) -->
          <!--
          <Cluster className="org.apache.catalina.ha.tcp.SimpleTcpCluster"/>
          -->

          <!-- Use the LockOutRealm to prevent attempts to guess user passwords
               via a brute-force attack -->
          <Realm className="org.apache.catalina.realm.LockOutRealm">
            <!-- This Realm uses the UserDatabase configured in the global JNDI
                 resources under the key "UserDatabase". Any edits
                 that are performed against this UserDatabase are immediately
                 available for use by the Realm. -->
            <Realm className="org.apache.catalina.realm.UserDatabaseRealm"
                   resourceName="UserDatabase"/>
          </Realm>

          <Host name="localhost" appBase="webapps"
                unpackWARs="true" autoDeploy="true">

            <!-- SingleSignOn valve, share authentication between web applications
                 Documentation at: /docs/config/valve.html -->
            <!--
            <Valve className="org.apache.catalina.authenticator.SingleSignOn" />
            -->

            <!-- Access log processes all example.
                 Documentation at: /docs/config/valve.html
                 Note: The pattern used is equivalent to using pattern="common" -->
            <Valve className="org.apache.catalina.valves.AccessLogValve" directory="logs"
                   prefix="localhost_access_log." suffix=".txt"
                   pattern="%h %l %u %t &quot;%r&quot; %s %b" />

          </Host>
        </Engine>
      </Service>
    </Server>
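The three port changes can also be applied with sed instead of editing each file in vim. This is a sketch that assumes an unmodified Tomcat 7 server.xml using the default ports 8005, 8080, and 8009; `rewrite_ports` is a hypothetical helper name. It reads server.xml on standard input and shifts each port by 100 times the Tomcat number, which matches 8105, 8180, and 8109 for tomcat01.

```shell
# Sketch: shift the default Tomcat ports for instance N (1..4).
# rewrite_ports is a hypothetical helper; it filters stdin to stdout.
rewrite_ports() {
    n=$1
    offset=$((n * 100))
    # Note: the commented-out sample connector on port 8080 is renumbered
    # too, which is harmless because it stays inside a comment.
    sed -e "s/port=\"8005\"/port=\"$((8005 + offset))\"/" \
        -e "s/port=\"8080\"/port=\"$((8080 + offset))\"/" \
        -e "s/port=\"8009\"/port=\"$((8009 + offset))\"/"
}

# Demonstration on a single line taken from server.xml.
echo '<Connector port="8080" protocol="HTTP/1.1"' | rewrite_ports 2
```

For a whole file, something like `rewrite_ports 2 < apache-tomcat-7.0.47/conf/server.xml > tomcat02/conf/server.xml` would produce the configuration for the second instance.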


7. Deploying Solr: copy the existing Solr web application into the four Tomcat instances.

    [root@localhost solr-cloud]# cp -r ../solr/tomcat/webapps/solr-4.10.3 tomcat01/webapps/
    [root@localhost solr-cloud]# cp -r ../solr/tomcat/webapps/solr-4.10.3 tomcat02/webapps/
    [root@localhost solr-cloud]# cp -r ../solr/tomcat/webapps/solr-4.10.3 tomcat03/webapps/
    [root@localhost solr-cloud]# cp -r ../solr/tomcat/webapps/solr-4.10.3 tomcat04/webapps/

Then make four copies of the standalone solrhome: solrhome01, solrhome02, solrhome03, and solrhome04.

    [root@localhost solr-cloud]# cp -r ../solr/solrhome/ solrhome01
    [root@localhost solr-cloud]# cp -r ../solr/solrhome/ solrhome02
    [root@localhost solr-cloud]# cp -r ../solr/solrhome/ solrhome03
    [root@localhost solr-cloud]# cp -r ../solr/solrhome/ solrhome04
    [root@localhost solr-cloud]# ls
    solrhome01 solrhome02 solrhome03 solrhome04 start-zookeeper.sh status-zookeeper.sh stop-zookeeper.sh tomcat01 tomcat02 tomcat03 tomcat04 zookeeper1 zookeeper2 zookeeper3
    [root@localhost solr-cloud]#

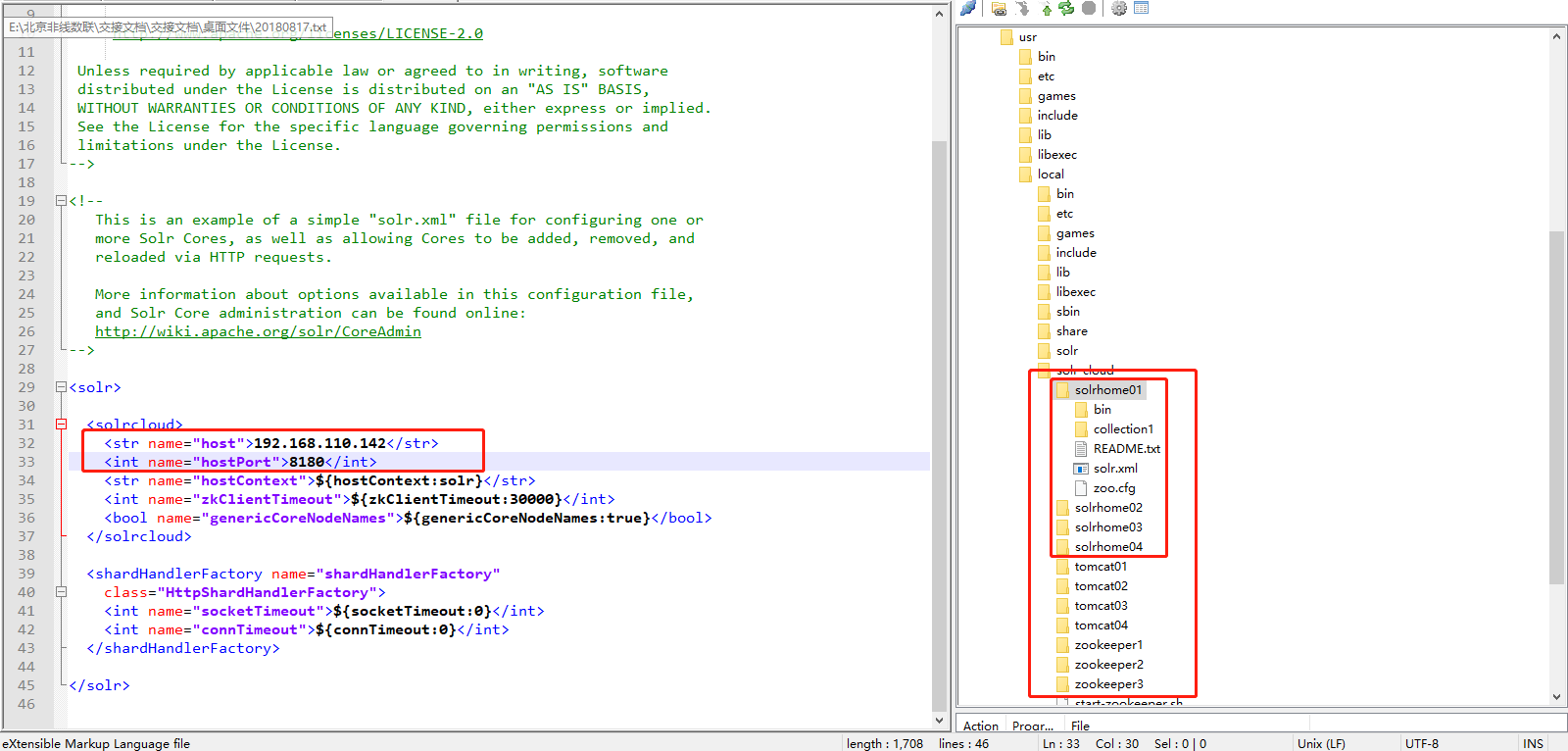

Next, edit the solr.xml file in each of solrhome01, solrhome02, solrhome03, and solrhome04 so that every solrhome matches its Tomcat: tomcat01 with solrhome01, tomcat02 with solrhome02, tomcat03 with solrhome03, and tomcat04 with solrhome04.

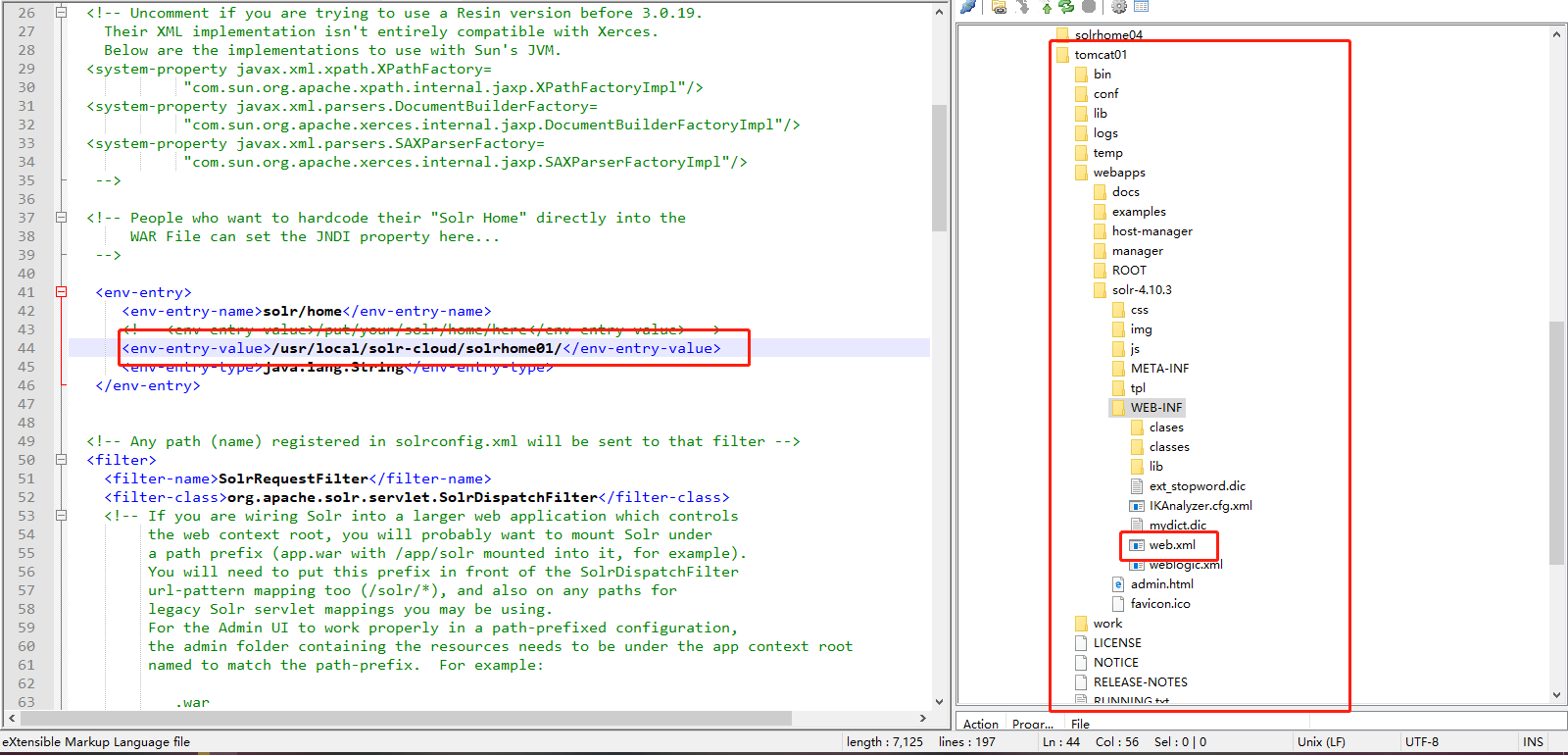
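The exact edit is only visible in the screenshot, so the following is a guess at what it does rather than a transcription. It assumes the stock Solr 4.x solr.xml, whose solrcloud section contains the placeholders `${host:}` and `${jetty.port:8983}`, and fills in the address and HTTP port of the matching Tomcat. `set_host_port` is a hypothetical helper name.

```shell
# Sketch: set the default host and port inside a stock Solr 4.x solr.xml.
# set_host_port is a hypothetical helper; it filters stdin to stdout.
# Assumption: the file still contains ${host:...} and ${jetty.port:...}.
set_host_port() {
    host=$1
    port=$2
    sed -e 's/\${host:[^}]*}/${host:'"$host"'}/' \
        -e 's/\${jetty\.port:[^}]*}/${jetty.port:'"$port"'}/'
}

# Demonstration on the two relevant lines, using tomcat01's port.
printf '%s\n' '<str name="host">${host:}</str>' \
              '<int name="hostPort">${jetty.port:8983}</int>' |
    set_host_port 192.168.110.142 8180
```

Each solrhome would get the port of its own Tomcat: 8180 for solrhome01, 8280 for solrhome02, and so on.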

Then link each Solr web application to its solrhome.

    [root@localhost solr-cloud]# vim tomcat01/webapps/solr-4.10.3/WEB-INF/web.xml
    [root@localhost solr-cloud]# vim tomcat02/webapps/solr-4.10.3/WEB-INF/web.xml
    [root@localhost solr-cloud]# vim tomcat03/webapps/solr-4.10.3/WEB-INF/web.xml
    [root@localhost solr-cloud]# vim tomcat04/webapps/solr-4.10.3/WEB-INF/web.xml

As shown in the figure below:

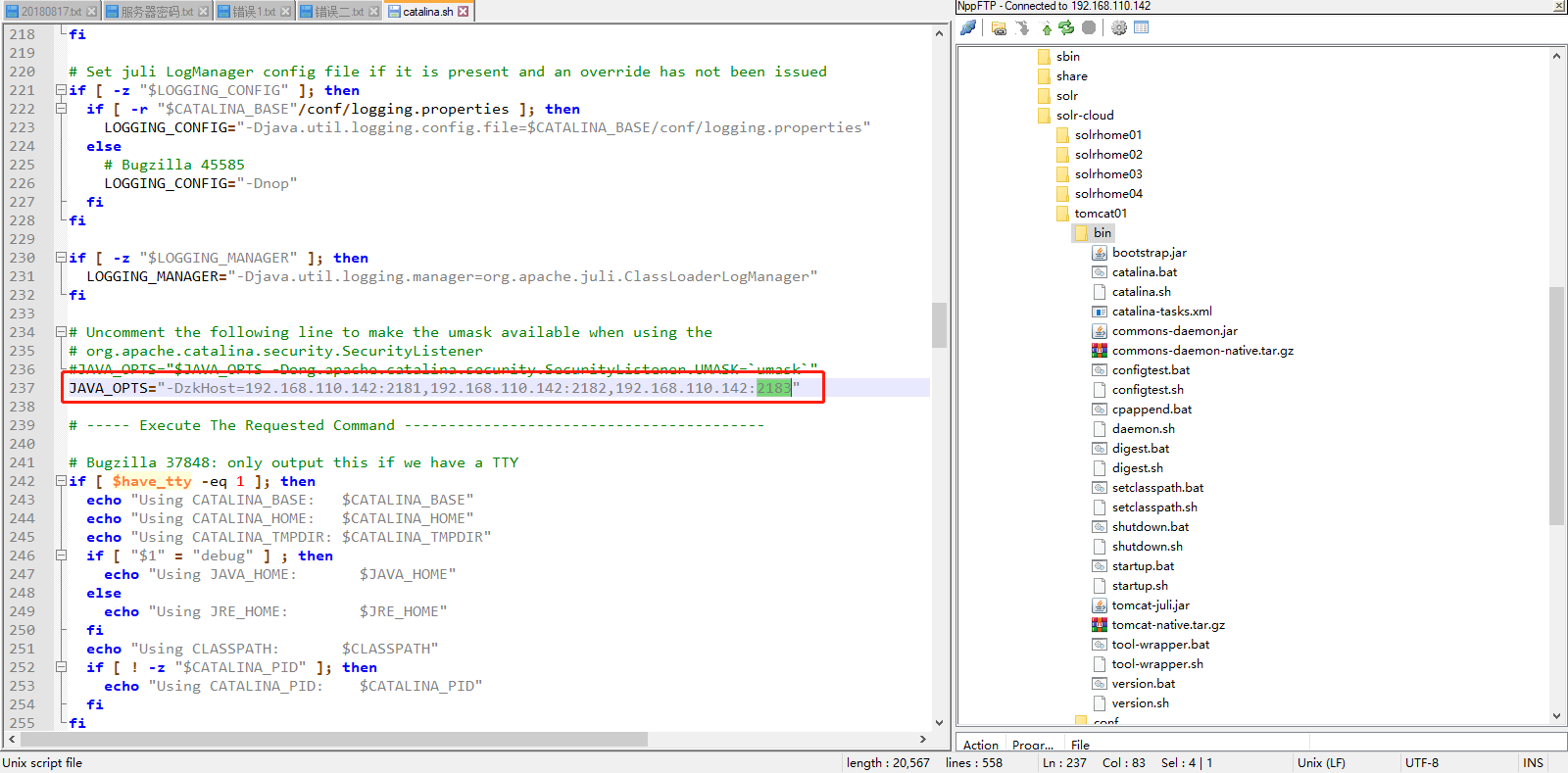
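The screenshot is not reproduced as text, so as a hedged illustration: the Solr 4.x web.xml ships with a commented-out `solr/home` env-entry, and linking a web application to its solrhome usually means uncommenting that entry and filling in the path. The sketch below prints such an entry for Tomcat number N; `solr_home_entry` and `SOLR_CLOUD_BASE` are illustrative names.

```shell
# Sketch: print the solr/home <env-entry> for Tomcat number N (1..4).
# solr_home_entry and SOLR_CLOUD_BASE are illustrative names.
SOLR_CLOUD_BASE=${SOLR_CLOUD_BASE:-/usr/local/solr-cloud}

solr_home_entry() {
    n=$1
    # Zero-pad the number so that 1 becomes solrhome01.
    home=$(printf '%s/solrhome%02d' "$SOLR_CLOUD_BASE" "$n")
    echo "<env-entry>"
    echo "   <env-entry-name>solr/home</env-entry-name>"
    echo "   <env-entry-value>$home</env-entry-value>"
    echo "   <env-entry-type>java.lang.String</env-entry-type>"
    echo "</env-entry>"
}

solr_home_entry 1
```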

Then connect each Tomcat, and therefore each Solr instance, to ZooKeeper.

In the bin/catalina.sh file of every Tomcat, add -DzkHost to specify the ZooKeeper server addresses: JAVA_OPTS="-DzkHost=192.168.110.142:2181,192.168.110.142:2182,192.168.110.142:2183"

Note: use vim's search to find where JAVA_OPTS is defined, then add the setting there.

    [root@localhost solr-cloud]# vim tomcat01/bin/catalina.sh
    [root@localhost solr-cloud]# vim tomcat02/bin/catalina.sh
    [root@localhost solr-cloud]# vim tomcat03/bin/catalina.sh
    [root@localhost solr-cloud]# vim tomcat04/bin/catalina.sh
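The zkHost value is a comma-separated list of host:port pairs, one per ZooKeeper instance. The sketch below builds that string from a host and a list of client ports, which helps avoid typos when the address changes. `zk_host_string` is a hypothetical helper name.

```shell
# Sketch: build the comma-separated ZooKeeper connection string.
# zk_host_string is a hypothetical helper: first argument is the host,
# the remaining arguments are client ports.
zk_host_string() {
    host=$1
    shift
    result=""
    for port in "$@"; do
        # Add a comma only when something is already in the list.
        result="${result:+$result,}$host:$port"
    done
    echo "$result"
}

# The line to add to catalina.sh for this pseudo-cluster.
echo "JAVA_OPTS=\"-DzkHost=$(zk_host_string 192.168.110.142 2181 2182 2183)\""
```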

At this point every Solr deployed under Tomcat has its own solrhome. In a Solr cluster there should be only one copy of the configuration files, so upload them to ZooKeeper and let ZooKeeper manage them. Use the tool that ships with Solr to upload the conf directory under solrhome to ZooKeeper.

Note: ZooKeeper manages the Solr configuration files centrally (mainly schema.xml and solrconfig.xml), and every SolrCloud node uses the copies stored in ZooKeeper. The zkcli.sh script is in the solr-4.10.3/example/scripts/cloud-scripts/ directory.

    [root@localhost solrhome01]# cd /home/hadoop/soft/
    [root@localhost soft]# ls
    apache-tomcat-7.0.47 jdk1.7.0_55 solr-4.10.3 zookeeper-3.4.6
    [root@localhost soft]# cd solr-4.10.3/
    [root@localhost solr-4.10.3]# ls
    bin CHANGES.txt contrib dist docs example licenses LICENSE.txt LUCENE_CHANGES.txt NOTICE.txt README.txt SYSTEM_REQUIREMENTS.txt
    [root@localhost solr-4.10.3]# cd example/
    [root@localhost example]# ls
    contexts etc example-DIH exampledocs example-schemaless lib logs multicore README.txt resources scripts solr solr-webapp start.jar webapps
    [root@localhost example]# cd scripts/
    [root@localhost scripts]# ls
    cloud-scripts map-reduce
    [root@localhost scripts]# cd cloud-scripts/
    [root@localhost cloud-scripts]# ls
    log4j.properties zkcli.bat zkcli.sh
    [root@localhost cloud-scripts]# ./zkcli.sh -zkhost 192.168.110.142:2181,192.168.110.142:2182,192.168.110.142:2183 -cmd upconfig -confdir /usr/local/solr-cloud/solrhome01/collection1/conf -confname myconf

Then connect to ZooKeeper and list the configuration files to check that the upload succeeded. Once uploaded, all nodes share this single copy of the configuration.

    [root@localhost cloud-scripts]# cd /usr/local/solr-cloud/
    [root@localhost solr-cloud]# ls
    solrhome01 solrhome02 solrhome03 solrhome04 start-zookeeper.sh status-zookeeper.sh stop-zookeeper.sh tomcat01 tomcat02 tomcat03 tomcat04 zookeeper1 zookeeper2 zookeeper3
    [root@localhost solr-cloud]# cd zookeeper1/
    [root@localhost zookeeper1]# ls
    bin CHANGES.txt contrib dist-maven ivysettings.xml lib NOTICE.txt README.txt src zookeeper-3.4.6.jar.asc zookeeper-3.4.6.jar.sha1
    build.xml conf data docs ivy.xml LICENSE.txt README_packaging.txt recipes zookeeper-3.4.6.jar zookeeper-3.4.6.jar.md5 zookeeper.out
    [root@localhost zookeeper1]# cd bin/
    [root@localhost bin]# ls
    README.txt zkCleanup.sh zkCli.cmd zkCli.sh zkEnv.cmd zkEnv.sh zkServer.cmd zkServer.sh
    [root@localhost bin]# ./zkCli.sh
    Connecting to localhost:2181
    2019-09-13 20:06:46,748 [myid:] - INFO [main:Environment@100] - Client environment:zookeeper.version=3.4.6-1569965, built on 02/20/2014 09:09 GMT
    2019-09-13 20:06:46,756 [myid:] - INFO [main:Environment@100] - Client environment:host.name=localhost
    2019-09-13 20:06:46,757 [myid:] - INFO [main:Environment@100] - Client environment:java.version=1.7.0_55
    2019-09-13 20:06:46,776 [myid:] - INFO [main:Environment@100] - Client environment:java.vendor=Oracle Corporation
    2019-09-13 20:06:46,776 [myid:] - INFO [main:Environment@100] - Client environment:java.home=/home/hadoop/soft/jdk1.7.0_55/jre
    2019-09-13 20:06:46,776 [myid:] - INFO [main:Environment@100] - Client environment:java.class.path=/usr/local/solr-cloud/zookeeper1/bin/../build/classes:/usr/local/solr-cloud/zookeeper1/bin/../build/lib/*.jar:/usr/local/solr-cloud/zookeeper1/bin/../lib/slf4j-log4j12-1.6.1.jar:/usr/local/solr-cloud/zookeeper1/bin/../lib/slf4j-api-1.6.1.jar:/usr/local/solr-cloud/zookeeper1/bin/../lib/netty-3.7.0.Final.jar:/usr/local/solr-cloud/zookeeper1/bin/../lib/log4j-1.2.16.jar:/usr/local/solr-cloud/zookeeper1/bin/../lib/jline-0.9.94.jar:/usr/local/solr-cloud/zookeeper1/bin/../zookeeper-3.4.6.jar:/usr/local/solr-cloud/zookeeper1/bin/../src/java/lib/*.jar:/usr/local/solr-cloud/zookeeper1/bin/../conf:
    2019-09-13 20:06:46,776 [myid:] - INFO [main:Environment@100] - Client environment:java.library.path=/usr/java/packages/lib/i386:/lib:/usr/lib
    2019-09-13 20:06:46,777 [myid:] - INFO [main:Environment@100] - Client environment:java.io.tmpdir=/tmp
    2019-09-13 20:06:46,782 [myid:] - INFO [main:Environment@100] - Client environment:java.compiler=<NA>
    2019-09-13 20:06:46,783 [myid:] - INFO [main:Environment@100] - Client environment:os.name=Linux
    2019-09-13 20:06:46,798 [myid:] - INFO [main:Environment@100] - Client environment:os.arch=i386
    2019-09-13 20:06:46,798 [myid:] - INFO [main:Environment@100] - Client environment:os.version=2.6.32-358.el6.i686
    2019-09-13 20:06:46,798 [myid:] - INFO [main:Environment@100] - Client environment:user.name=root
    2019-09-13 20:06:46,798 [myid:] - INFO [main:Environment@100] - Client environment:user.home=/root
    2019-09-13 20:06:46,798 [myid:] - INFO [main:Environment@100] - Client environment:user.dir=/usr/local/solr-cloud/zookeeper1/bin
    2019-09-13 20:06:46,803 [myid:] - INFO [main:ZooKeeper@438] - Initiating client connection, connectString=localhost:2181 sessionTimeout=30000 watcher=org.apache.zookeeper.ZooKeeperMain$MyWatcher@932892
    Welcome to ZooKeeper!
    2019-09-13 20:06:46,983 [myid:] - INFO [main-SendThread(localhost:2181):ClientCnxn$SendThread@975] - Opening socket connection to server localhost/0:0:0:0:0:0:0:1:2181. Will not attempt to authenticate using SASL (unknown error)
    JLine support is enabled
    2019-09-13 20:06:47,071 [myid:] - INFO [main-SendThread(localhost:2181):ClientCnxn$SendThread@852] - Socket connection established to localhost/0:0:0:0:0:0:0:1:2181, initiating session
    2019-09-13 20:06:47,163 [myid:] - INFO [main-SendThread(localhost:2181):ClientCnxn$SendThread@1235] - Session establishment complete on server localhost/0:0:0:0:0:0:0:1:2181, sessionid = 0x16d2d6bfd280001, negotiated timeout = 30000

    WATCHER::

    WatchedEvent state:SyncConnected type:None path:null
    [zk: localhost:2181(CONNECTED) 0] ls /
    [configs, zookeeper]
    [zk: localhost:2181(CONNECTED) 1] ls /configs
    [myconf]
    [zk: localhost:2181(CONNECTED) 2] ls /configs/myconf
    [admin-extra.menu-top.html, currency.xml, protwords.txt, mapping-FoldToASCII.txt, _schema_analysis_synonyms_english.json, _rest_managed.json, solrconfig.xml, _schema_analysis_stopwords_english.json, stopwords.txt, lang, spellings.txt, mapping-ISOLatin1Accent.txt, admin-extra.html, xslt, scripts.conf, synonyms.txt, update-script.js, velocity, elevate.xml, admin-extra.menu-bottom.html, schema.xml, clustering]

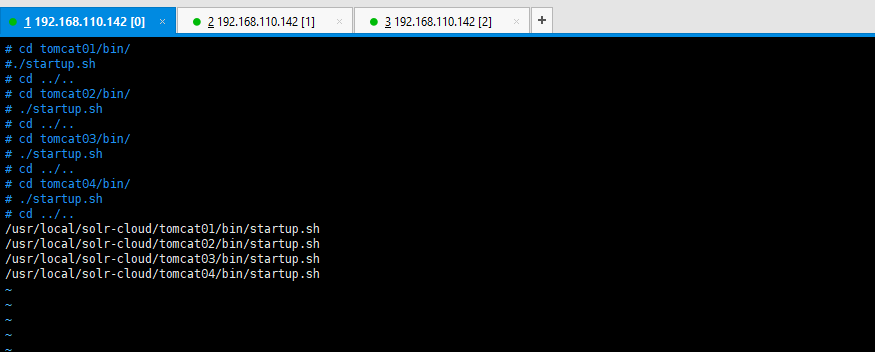

Then start all the Tomcat instances. It is convenient to write a script that starts them in one go.

    [root@localhost solr-cloud]# vim start-tomcat.sh
    [root@localhost solr-cloud]# ls
    solrhome01 solrhome02 solrhome03 solrhome04 start-tomcat.sh start-zookeeper.sh status-zookeeper.sh stop-zookeeper.sh tomcat01 tomcat02 tomcat03 tomcat04 zookeeper1 zookeeper2 zookeeper3
    [root@localhost solr-cloud]# chmod u+x start-tomcat.sh
    [root@localhost solr-cloud]#

As shown in the figure below:
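The body of start-tomcat.sh is not shown in the text. A minimal sketch of what it could contain follows, assuming the tomcat01 to tomcat04 layout from this tutorial and the standard bin/startup.sh and bin/shutdown.sh scripts that ship with Tomcat. `tomcat_cmds` and `SOLR_CLOUD_BASE` are illustrative names. As a precaution the helper only prints the commands; pipe its output to `sh` to run them.

```shell
# Sketch: print the start or stop command for each of the four Tomcats.
# tomcat_cmds and SOLR_CLOUD_BASE are illustrative names.
SOLR_CLOUD_BASE=${SOLR_CLOUD_BASE:-/usr/local/solr-cloud}

tomcat_cmds() {
    script=$1    # startup.sh | shutdown.sh
    for n in 01 02 03 04; do
        echo "$SOLR_CLOUD_BASE/tomcat$n/bin/$script"
    done
}

# Preview what a start script would run.
tomcat_cmds startup.sh

# To execute instead of preview:
#   tomcat_cmds startup.sh | sh
```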

Then run the script:

    [root@localhost solr-cloud]# ./start-tomcat.sh
    Using CATALINA_BASE: /usr/local/solr-cloud/tomcat01
    Using CATALINA_HOME: /usr/local/solr-cloud/tomcat01
    Using CATALINA_TMPDIR: /usr/local/solr-cloud/tomcat01/temp
    Using JRE_HOME: /home/hadoop/soft/jdk1.7.0_55
    Using CLASSPATH: /usr/local/solr-cloud/tomcat01/bin/bootstrap.jar:/usr/local/solr-cloud/tomcat01/bin/tomcat-juli.jar
    Using CATALINA_BASE: /usr/local/solr-cloud/tomcat02
    Using CATALINA_HOME: /usr/local/solr-cloud/tomcat02
    Using CATALINA_TMPDIR: /usr/local/solr-cloud/tomcat02/temp
    Using JRE_HOME: /home/hadoop/soft/jdk1.7.0_55
    Using CLASSPATH: /usr/local/solr-cloud/tomcat02/bin/bootstrap.jar:/usr/local/solr-cloud/tomcat02/bin/tomcat-juli.jar
    Using CATALINA_BASE: /usr/local/solr-cloud/tomcat03
    Using CATALINA_HOME: /usr/local/solr-cloud/tomcat03
    Using CATALINA_TMPDIR: /usr/local/solr-cloud/tomcat03/temp
    Using JRE_HOME: /home/hadoop/soft/jdk1.7.0_55
    Using CLASSPATH: /usr/local/solr-cloud/tomcat03/bin/bootstrap.jar:/usr/local/solr-cloud/tomcat03/bin/tomcat-juli.jar
    Using CATALINA_BASE: /usr/local/solr-cloud/tomcat04
    Using CATALINA_HOME: /usr/local/solr-cloud/tomcat04
    Using CATALINA_TMPDIR: /usr/local/solr-cloud/tomcat04/temp
    Using JRE_HOME: /home/hadoop/soft/jdk1.7.0_55
    Using CLASSPATH: /usr/local/solr-cloud/tomcat04/bin/bootstrap.jar:/usr/local/solr-cloud/tomcat04/bin/tomcat-juli.jar

Then check what is running:

    [root@localhost solr-cloud]# jps
    3628 Bootstrap
    3641 Bootstrap
    3714 Jps
    3026 QuorumPeerMain
    3058 QuorumPeerMain
    2999 QuorumPeerMain
    3618 Bootstrap
    3654 Bootstrap
    [root@localhost solr-cloud]# ps -aux | grep tomcat
    Warning: bad syntax, perhaps a bogus '-'? See /usr/share/doc/procps-3.2.8/FAQ
    root 3618 14.3 10.0 403096 104072 pts/0 Sl 20:18 0:14 /home/hadoop/soft/jdk1.7.0_55/bin/java -Djava.util.logging.config.file=/usr/local/solr-cloud/tomcat01/conf/logging.properties -Djava.util.logging.manager=org.apache.juli.ClassLoaderLogManager -DzkHost=192.168.110.142:2181,192.168.110.142:2182,192.168.110.142:2183 -Djava.endorsed.dirs=/usr/local/solr-cloud/tomcat01/endorsed -classpath /usr/local/solr-cloud/tomcat01/bin/bootstrap.jar:/usr/local/solr-cloud/tomcat01/bin/tomcat-juli.jar -Dcatalina.base=/usr/local/solr-cloud/tomcat01 -Dcatalina.home=/usr/local/solr-cloud/tomcat01 -Djava.io.tmpdir=/usr/local/solr-cloud/tomcat01/temp org.apache.catalina.startup.Bootstrap start
    root 3628 14.3 10.2 403652 105664 pts/0 Sl 20:18 0:14 /home/hadoop/soft/jdk1.7.0_55/bin/java -Djava.util.logging.config.file=/usr/local/solr-cloud/tomcat02/conf/logging.properties -Djava.util.logging.manager=org.apache.juli.ClassLoaderLogManager -DzkHost=192.168.110.142:2181,192.168.110.142:2182,192.168.110.142:2183 -Djava.endorsed.dirs=/usr/local/solr-cloud/tomcat02/endorsed -classpath /usr/local/solr-cloud/tomcat02/bin/bootstrap.jar:/usr/local/solr-cloud/tomcat02/bin/tomcat-juli.jar -Dcatalina.base=/usr/local/solr-cloud/tomcat02 -Dcatalina.home=/usr/local/solr-cloud/tomcat02 -Djava.io.tmpdir=/usr/local/solr-cloud/tomcat02/temp org.apache.catalina.startup.Bootstrap start
    root 3641 14.5 10.4 406084 107252 pts/0 Sl 20:18 0:14 /home/hadoop/soft/jdk1.7.0_55/bin/java -Djava.util.logging.config.file=/usr/local/solr-cloud/tomcat03/conf/logging.properties -Djava.util.logging.manager=org.apache.juli.ClassLoaderLogManager -DzkHost=192.168.110.142:2181,192.168.110.142:2182,192.168.110.142:2183 -Djava.endorsed.dirs=/usr/local/solr-cloud/tomcat03/endorsed -classpath /usr/local/solr-cloud/tomcat03/bin/bootstrap.jar:/usr/local/solr-cloud/tomcat03/bin/tomcat-juli.jar -Dcatalina.base=/usr/local/solr-cloud/tomcat03 -Dcatalina.home=/usr/local/solr-cloud/tomcat03 -Djava.io.tmpdir=/usr/local/solr-cloud/tomcat03/temp org.apache.catalina.startup.Bootstrap start
    root 3654 14.8 10.6 405740 109760 pts/0 Sl 20:18 0:14 /home/hadoop/soft/jdk1.7.0_55/bin/java -Djava.util.logging.config.file=/usr/local/solr-cloud/tomcat04/conf/logging.properties -Djava.util.logging.manager=org.apache.juli.ClassLoaderLogManager -DzkHost=192.168.110.142:2181,192.168.110.142:2182,192.168.110.142:2183 -Djava.endorsed.dirs=/usr/local/solr-cloud/tomcat04/endorsed -classpath /usr/local/solr-cloud/tomcat04/bin/bootstrap.jar:/usr/local/solr-cloud/tomcat04/bin/tomcat-juli.jar -Dcatalina.base=/usr/local/solr-cloud/tomcat04 -Dcatalina.home=/usr/local/solr-cloud/tomcat04 -Djava.io.tmpdir=/usr/local/solr-cloud/tomcat04/temp org.apache.catalina.startup.Bootstrap start
    root 3808 0.0 0.0 4356 732 pts/0 S+ 20:20 0:00 grep tomcat
    [root@localhost solr-cloud]#

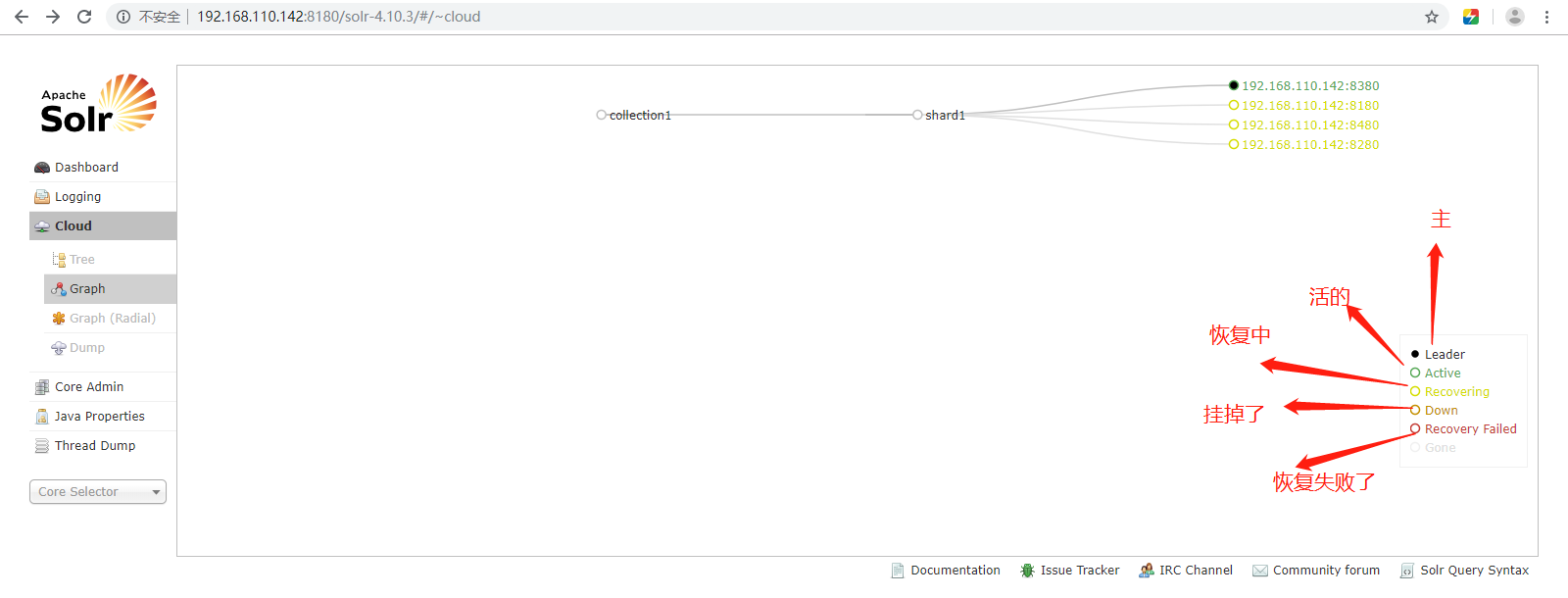

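Once everything has started, open the admin page to check the result. You can also look at the startup logs. In my case the startup apparently failed, so the next step was to find out why.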


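At this point I got a pile of baffling errors. After a lot of searching I still could not find the exact cause; the cluster simply would not come up. The 1 GB of memory was probably too little. I raised it to 1.5 GB, deleted both the standalone and the cluster Solr installations, rebuilt everything, and then it worked, as shown in the figure below. Take your time with this setup and work carefully, because it is easy to get a step wrong.

With 1.5 GB allocated, the memory display shows it almost fully used, so the earlier 1 GB was certainly not enough.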

The network diagram is shown below:

Note: when you open any of the Solr nodes, a Cloud entry appears in the left-hand menu.

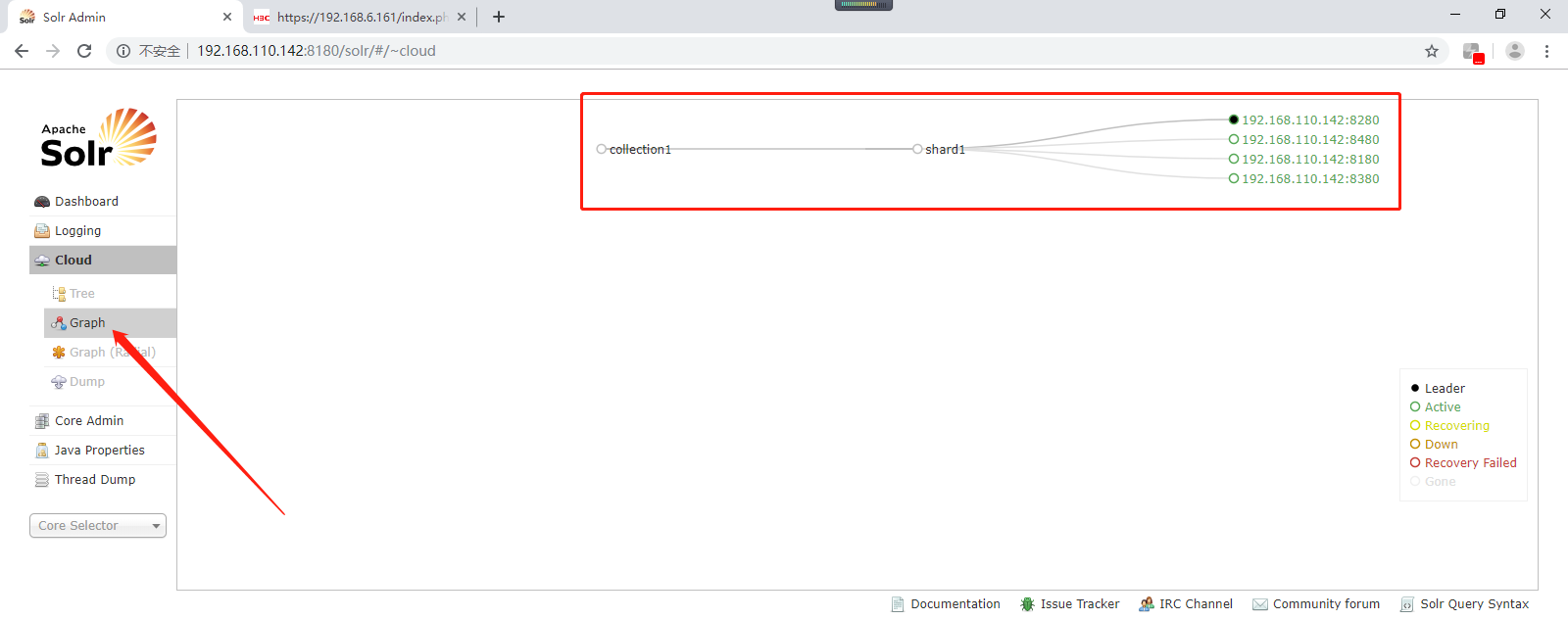

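The collection1 cluster in the figure above has only one shard. A new collection can be configured as follows.

The command to create a collection is shown below. (Note: with four Solr nodes in the cluster, this creates a new collection named collection2, split into two shards with two replicas each.)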

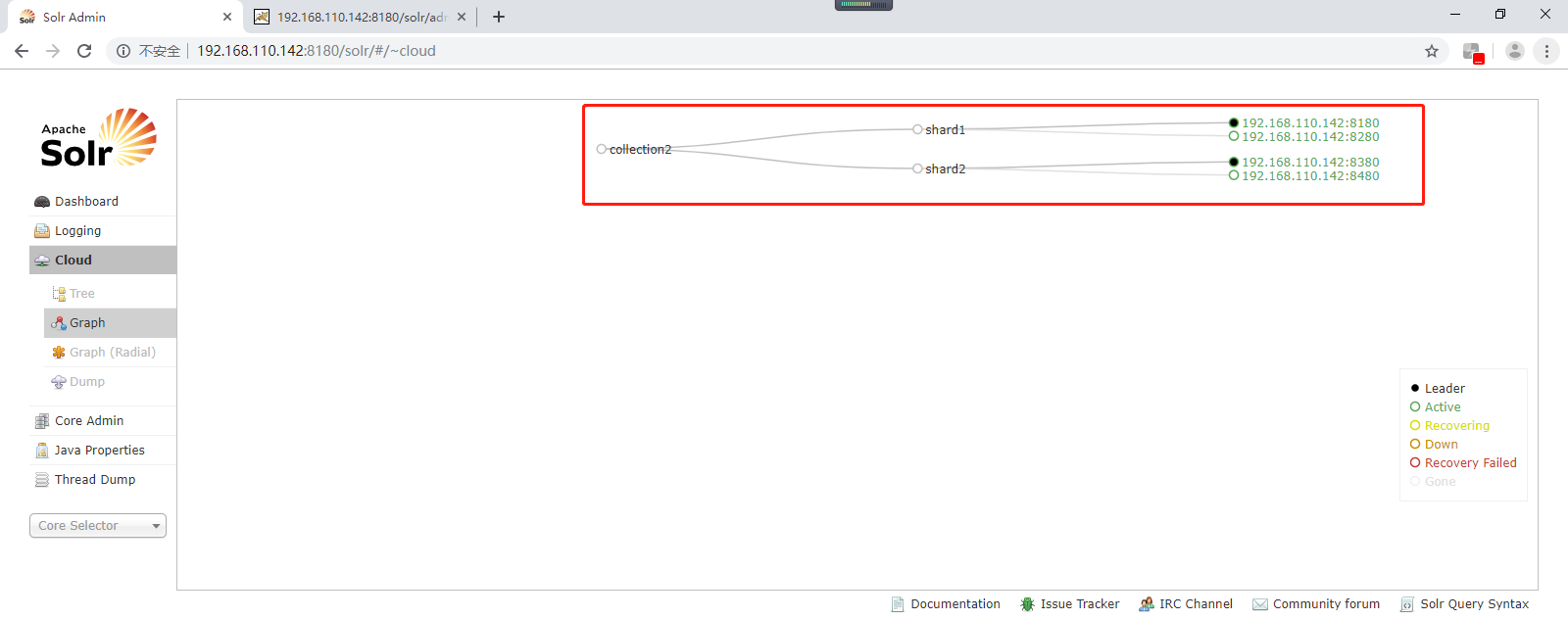

http://192.168.110.142:8180/solr/admin/collections?action=CREATE&name=collection2&numShards=2&replicationFactor=2
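The same request can be sent from the command line. The sketch below only assembles the URL from its parts so that the parameters are easy to change; `create_collection_url` is a hypothetical helper name, and the curl call is left commented out. When calling curl, keep the URL in quotes, otherwise the shell treats each `&` as a background operator.

```shell
# Sketch: assemble the Collections API CREATE URL shown above.
# create_collection_url is a hypothetical helper name.
create_collection_url() {
    node=$1        # host:port of any Solr node
    name=$2        # collection name
    shards=$3      # numShards
    replicas=$4    # replicationFactor
    echo "http://$node/solr/admin/collections?action=CREATE&name=$name&numShards=$shards&replicationFactor=$replicas"
}

url=$(create_collection_url 192.168.110.142:8180 collection2 2 2)
echo "$url"

# To send the request:
#   curl "$url"
```

Two shards with two copies each need four cores, which fits the four Solr nodes of this pseudo-cluster with one core per node.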

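After refreshing the page you should see the following:

Once created, the collection is split into two shards, each with one leader and one replica (for example 192.168.110.142:8180 and 192.168.110.142:8280, and likewise for the other shard).

The command to delete a collection is: http://192.168.110.142:8180/solr/admin/collections?action=DELETE&name=collection1

You can delete the old collection1 or keep it, as you prefer.

Tip: when starting SolrCloud, first start all the ZooKeeper servers it depends on, then start each Solr server.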