一、请知晓

本文是基于:

Event Recommendation Engine Challenge分步解析第一步

Event Recommendation Engine Challenge分步解析第二步

Event Recommendation Engine Challenge分步解析第三步

需要读者先阅读前三篇文章解析

二、构建event和event相似度数据

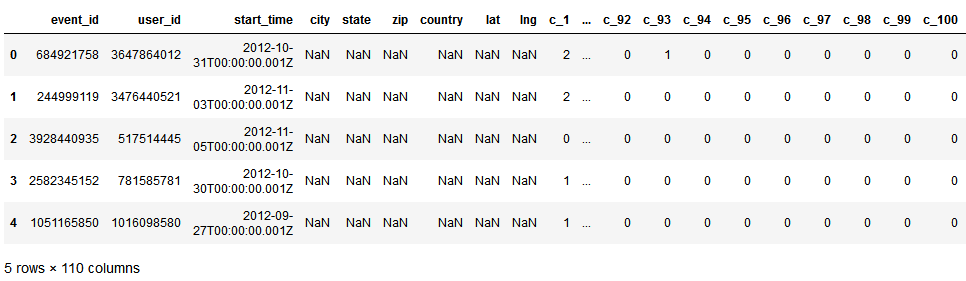

我们先看看events.csv.gz:

import pandas as pd

df_events_csv = pd.read_csv('events.csv.gz', compression='gzip')

df_events_csv.head()

代码实例结果:

文件记录了用户对某event的信息(c_100后面还有一列:c_101):

我们来看看如何对上面表中的列信息进行数值转换

1)start_time:参考Event Recommendation Engine Challenge分步解析第二步4)中的joinedAt列处理

2)city,3)state,4)zip,5)country列处理都利用了hashlib包:注意这里处理event信息的时候,只有那些出现在train.csv和test.csv中的event才会进入数值转换程序

import hashlib

def FeatureHash(value):

if len(value.strip()) == 0:

return -1

else:

return int( hashlib.sha224(value.encode('utf-8')).hexdigest()[0:4] ,16)

print(FeatureHash('Muaraenim'))#47294

print(FeatureHash('a test demo'))#4030

所以,我们在对NA值进行填充或者多种字符串进行数值转换的时候,使用hashlib也是一个不错的选择

6),lat和7)lon列处理:

def getFloatValue(self, value):

if len(value.strip()) == 0:

return 0.0

else:

return float(value)

空值用0.0填充,其他转换为自身的float型

8)c_1之后列(也就是第10列之后)处理:

这里用了一个矩阵eventContMatrix来保存c_1到c_100列信息,但是没有用的c_other列,why?

9)将eventPropMatrix和eventContMatrix矩阵归一化后进行文件保存

10)根据第一步中的uniqueEventPairs来计算event pairs相似度

利用了scipy.spatial.distance的correlation和cosine方法

11)变量介绍

nevents:events数目,即train.csv和test.csv总共多少个events,13418个

self.eventPropMatrix:稀疏矩阵,shape为(13418,7),7代表events.csv.gz中的7列特征,最后进行了归一化

self.eventContMatrix:稀疏矩阵,shape为(13418,100),100代表events.csv.gz文件中的c_1到c_100特征,最后进行了归一化

self.eventPropSim:由user-event行为计算出来的event pair相似度,需要用到第一步中的uniqueEventPairs

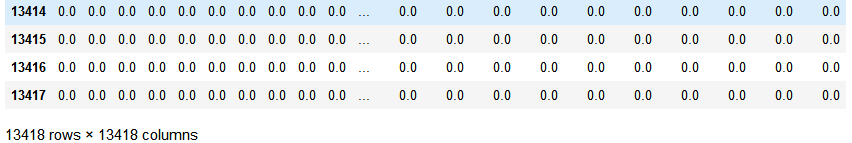

import pandas as pd pd.DataFrame(eventPropSim)

代码示例结果:

self.eventContSim:由event本身的信息计算出的event pair相似度,需要用到第一步中的uniqueEventPairs

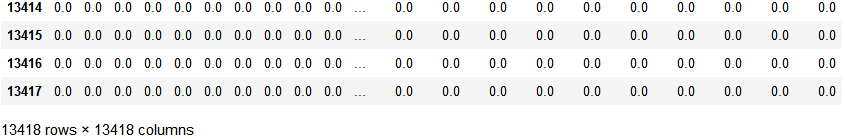

import pandas as pd pd.DataFrame(eventContSim)

代码示例结果:

12)我们来看看第四步代码

from collections import defaultdict

import locale, pycountry

import scipy.sparse as ss

import scipy.io as sio

import itertools

#import cPickle

#From python3, cPickle has beed replaced by _pickle

import _pickle as cPickle

import scipy.spatial.distance as ssd

import datetime

from sklearn.preprocessing import normalize

import gzip

import numpy as np

import hashlib

#处理user和event关联数据

class ProgramEntities:

"""

我们只关心train和test中出现的user和event,因此重点处理这部分关联数据,

经过统计:train和test中总共3391个users和13418个events

"""

def __init__(self):

#统计训练集中有多少独立的用户的events

uniqueUsers = set()#uniqueUsers保存总共多少个用户:3391个

uniqueEvents = set()#uniqueEvents保存总共多少个events:13418个

eventsForUser = defaultdict(set)#字典eventsForUser保存了每个user:所对应的event

usersForEvent = defaultdict(set)#字典usersForEvent保存了每个event:哪些user点击

for filename in ['train.csv', 'test.csv']:

f = open(filename)

f.readline()#跳过第一行

for line in f:

cols = line.strip().split(',')

uniqueUsers.add( cols[0] )

uniqueEvents.add( cols[1] )

eventsForUser[cols[0]].add( cols[1] )

usersForEvent[cols[1]].add( cols[0] )

f.close()

self.userEventScores = ss.dok_matrix( ( len(uniqueUsers), len(uniqueEvents) ) )

self.userIndex = dict()

self.eventIndex = dict()

for i, u in enumerate(uniqueUsers):

self.userIndex[u] = i

for i, e in enumerate(uniqueEvents):

self.eventIndex[e] = i

ftrain = open('train.csv')

ftrain.readline()

for line in ftrain:

cols = line.strip().split(',')

i = self.userIndex[ cols[0] ]

j = self.eventIndex[ cols[1] ]

self.userEventScores[i, j] = int( cols[4] ) - int( cols[5] )

ftrain.close()

sio.mmwrite('PE_userEventScores', self.userEventScores)

#为了防止不必要的计算,我们找出来所有关联的用户或者关联的event

#所谓关联用户指的是至少在同一个event上有行为的用户user pair

#关联的event指的是至少同一个user有行为的event pair

self.uniqueUserPairs = set()

self.uniqueEventPairs = set()

for event in uniqueEvents:

users = usersForEvent[event]

if len(users) > 2:

self.uniqueUserPairs.update( itertools.combinations(users, 2) )

for user in uniqueUsers:

events = eventsForUser[user]

if len(events) > 2:

self.uniqueEventPairs.update( itertools.combinations(events, 2) )

#rint(self.userIndex)

cPickle.dump( self.userIndex, open('PE_userIndex.pkl', 'wb'))

cPickle.dump( self.eventIndex, open('PE_eventIndex.pkl', 'wb') )

#数据清洗类

class DataCleaner:

def __init__(self):

#一些字符串转数值的方法

#载入locale

self.localeIdMap = defaultdict(int)

for i, l in enumerate(locale.locale_alias.keys()):

self.localeIdMap[l] = i + 1

#载入country

self.countryIdMap = defaultdict(int)

ctryIdx = defaultdict(int)

for i, c in enumerate(pycountry.countries):

self.countryIdMap[c.name.lower()] = i + 1

if c.name.lower() == 'usa':

ctryIdx['US'] = i

if c.name.lower() == 'canada':

ctryIdx['CA'] = i

for cc in ctryIdx.keys():

for s in pycountry.subdivisions.get(country_code=cc):

self.countryIdMap[s.name.lower()] = ctryIdx[cc] + 1

self.genderIdMap = defaultdict(int, {'male':1, 'female':2})

#处理LocaleId

def getLocaleId(self, locstr):

#这样因为localeIdMap是defaultdict(int),如果key中没有locstr.lower(),就会返回默认int 0

return self.localeIdMap[ locstr.lower() ]

#处理birthyear

def getBirthYearInt(self, birthYear):

try:

return 0 if birthYear == 'None' else int(birthYear)

except:

return 0

#性别处理

def getGenderId(self, genderStr):

return self.genderIdMap[genderStr]

#joinedAt

def getJoinedYearMonth(self, dateString):

dttm = datetime.datetime.strptime(dateString, "%Y-%m-%dT%H:%M:%S.%fZ")

return "".join( [str(dttm.year), str(dttm.month) ] )

#处理location

def getCountryId(self, location):

if (isinstance( location, str)) and len(location.strip()) > 0 and location.rfind(' ') > -1:

return self.countryIdMap[ location[location.rindex(' ') + 2: ].lower() ]

else:

return 0

#处理timezone

def getTimezoneInt(self, timezone):

try:

return int(timezone)

except:

return 0

def getFeatureHash(self, value):

if len(value.strip()) == 0:

return -1

else:

#return int( hashlib.sha224(value).hexdigest()[0:4], 16) python3会报如下错误

#TypeError: Unicode-objects must be encoded before hashing

return int( hashlib.sha224(value.encode('utf-8')).hexdigest()[0:4], 16)#python必须先进行encode

def getFloatValue(self, value):

if len(value.strip()) == 0:

return 0.0

else:

return float(value)

#用户与用户相似度矩阵

class Users:

"""

构建user/user相似度矩阵

"""

def __init__(self, programEntities, sim=ssd.correlation):#spatial.distance.correlation(u, v) #计算向量u和v之间的相关系数

cleaner = DataCleaner()

nusers = len(programEntities.userIndex.keys())#3391

#print(nusers)

fin = open('users.csv')

colnames = fin.readline().strip().split(',') #7列特征

self.userMatrix = ss.dok_matrix( (nusers, len(colnames)-1 ) )#构建稀疏矩阵

for line in fin:

cols = line.strip().split(',')

#只考虑train.csv中出现的用户,这一行是作者注释上的,但是我不是很理解

#userIndex包含了train和test的所有用户,为何说只考虑train.csv中出现的用户

if cols[0] in programEntities.userIndex:

i = programEntities.userIndex[ cols[0] ]#获取user:对应的index

self.userMatrix[i, 0] = cleaner.getLocaleId( cols[1] )#locale

self.userMatrix[i, 1] = cleaner.getBirthYearInt( cols[2] )#birthyear,空值0填充

self.userMatrix[i, 2] = cleaner.getGenderId( cols[3] )#处理性别

self.userMatrix[i, 3] = cleaner.getJoinedYearMonth( cols[4] )#处理joinedAt列

self.userMatrix[i, 4] = cleaner.getCountryId( cols[5] )#处理location

self.userMatrix[i, 5] = cleaner.getTimezoneInt( cols[6] )#处理timezone

fin.close()

#归一化矩阵

self.userMatrix = normalize(self.userMatrix, norm='l1', axis=0, copy=False)

sio.mmwrite('US_userMatrix', self.userMatrix)

#计算用户相似度矩阵,之后会用到

self.userSimMatrix = ss.dok_matrix( (nusers, nusers) )#(3391,3391)

for i in range(0, nusers):

self.userSimMatrix[i, i] = 1.0

for u1, u2 in programEntities.uniqueUserPairs:

i = programEntities.userIndex[u1]

j = programEntities.userIndex[u2]

if (i, j) not in self.userSimMatrix:

#print(self.userMatrix.getrow(i).todense()) 如[[0.00028123,0.00029847,0.00043592,0.00035208,0,0.00032346]]

#print(self.userMatrix.getrow(j).todense()) 如[[0.00028123,0.00029742,0.00043592,0.00035208,0,-0.00032346]]

usim = sim(self.userMatrix.getrow(i).todense(),self.userMatrix.getrow(j).todense())

self.userSimMatrix[i, j] = usim

self.userSimMatrix[j, i] = usim

sio.mmwrite('US_userSimMatrix', self.userSimMatrix)

#用户社交关系挖掘

class UserFriends:

"""

找出某用户的那些朋友,想法非常简单

1)如果你有更多的朋友,可能你性格外向,更容易参加各种活动

2)如果你朋友会参加某个活动,可能你也会跟随去参加一下

"""

def __init__(self, programEntities):

nusers = len(programEntities.userIndex.keys())#3391

self.numFriends = np.zeros( (nusers) )#array([0., 0., 0., ..., 0., 0., 0.]),保存每一个用户的朋友数

self.userFriends = ss.dok_matrix( (nusers, nusers) )

fin = gzip.open('user_friends.csv.gz')

print( 'Header In User_friends.csv.gz:',fin.readline() )

ln = 0

#逐行打开user_friends.csv.gz文件

#判断第一列的user是否在userIndex中,只有user在userIndex中才是我们关心的user

#获取该用户的Index,和朋友数目

#对于该用户的每一个朋友,如果朋友也在userIndex中,获取其朋友的userIndex,然后去userEventScores中获取该朋友对每个events的反应

#score即为该朋友对所有events的平均分

#userFriends矩阵记录了用户和朋友之间的score

#如851286067:1750用户出现在test.csv中,该用户在User_friends.csv.gz中一共2151个朋友

#那么其朋友占比应该是2151 / 总的朋友数sumNumFriends=3731377.0 = 2151 / 3731377 = 0.0005764627910822198

for line in fin:

if ln % 200 == 0:

print( 'Loading line:', ln )

cols = line.decode().strip().split(',')

user = cols[0]

if user in programEntities.userIndex:

friends = cols[1].split(' ')#获得该用户的朋友列表

i = programEntities.userIndex[user]

self.numFriends[i] = len(friends)

for friend in friends:

if friend in programEntities.userIndex:

j = programEntities.userIndex[friend]

#the objective of this score is to infer the degree to

#and direction in which this friend will influence the

#user's decision, so we sum the user/event score for

#this user across all training events

eventsForUser = programEntities.userEventScores.getrow(j).todense()#获取朋友对每个events的反应:0, 1, or -1

#print(eventsForUser.sum(), np.shape(eventsForUser)[1] )

#socre即是用户朋友在13418个events上的平均分

score = eventsForUser.sum() / np.shape(eventsForUser)[1]#eventsForUser = 13418,

#print(score)

self.userFriends[i, j] += score

self.userFriends[j, i] += score

ln += 1

fin.close()

#归一化数组

sumNumFriends = self.numFriends.sum(axis=0)#每个用户的朋友数相加

#print(sumNumFriends)

self.numFriends = self.numFriends / sumNumFriends#每个user的朋友数目比例

sio.mmwrite('UF_numFriends', np.matrix(self.numFriends) )

self.userFriends = normalize(self.userFriends, norm='l1', axis=0, copy=False)

sio.mmwrite('UF_userFriends', self.userFriends)

#构造event和event相似度数据

class Events:

"""

构建event-event相似度,注意这里有2种相似度

1)由用户-event行为,类似协同过滤算出的相似度

2)由event本身的内容(event信息)计算出的event-event相似度

"""

def __init__(self, programEntities, psim=ssd.correlation, csim=ssd.cosine):

cleaner = DataCleaner()

fin = gzip.open('events.csv.gz')

fin.readline()#skip header

nevents = len(programEntities.eventIndex)

print(nevents)#13418

self.eventPropMatrix = ss.dok_matrix( (nevents, 7) )

self.eventContMatrix = ss.dok_matrix( (nevents, 100) )

ln = 0

for line in fin:

#if ln > 10:

#break

cols = line.decode().strip().split(',')

eventId = cols[0]

if eventId in programEntities.eventIndex:

i = programEntities.eventIndex[eventId]

self.eventPropMatrix[i, 0] = cleaner.getJoinedYearMonth( cols[2] )#start_time

self.eventPropMatrix[i, 1] = cleaner.getFeatureHash( cols[3] )#city

self.eventPropMatrix[i, 2] = cleaner.getFeatureHash( cols[4] )#state

self.eventPropMatrix[i, 3] = cleaner.getFeatureHash( cols[5] )#zip

self.eventPropMatrix[i, 4] = cleaner.getFeatureHash( cols[6] )#country

self.eventPropMatrix[i, 5] = cleaner.getFloatValue( cols[7] )#lat

self.eventPropMatrix[i, 6] = cleaner.getFloatValue( cols[8] )#lon

for j in range(9, 109):

self.eventContMatrix[i, j-9] = cols[j]

ln += 1

fin.close()

self.eventPropMatrix = normalize(self.eventPropMatrix, norm='l1', axis=0, copy=False)

sio.mmwrite('EV_eventPropMatrix', self.eventPropMatrix)

self.eventContMatrix = normalize(self.eventContMatrix, norm='l1', axis=0, copy=False)

sio.mmwrite('EV_eventContMatrix', self.eventContMatrix)

#calculate similarity between event pairs based on the two matrices

self.eventPropSim = ss.dok_matrix( (nevents, nevents) )

self.eventContSim = ss.dok_matrix( (nevents, nevents) )

for e1, e2 in programEntities.uniqueEventPairs:

i = programEntities.eventIndex[e1]

j = programEntities.eventIndex[e2]

if not ((i, j) in self.eventPropSim):

epsim = psim( self.eventPropMatrix.getrow(i).todense(), self.eventPropMatrix.getrow(j).todense())

self.eventPropSim[i, j] = epsim

self.eventPropSim[j, i] = epsim

if not ((i, j) in self.eventContSim):

ecsim = csim( self.eventContMatrix.getrow(i).todense(), self.eventContMatrix.getrow(j).todense())

self.eventContSim[i, j] = ecsim

self.eventContSim[j, i] = ecsim

sio.mmwrite('EV_eventPropSim', self.eventPropSim)

sio.mmwrite('EV_eventContSim', self.eventContSim)

print('第1步:统计user和event相关信息...')

pe = ProgramEntities()

print('第1步完成...

')

print('第2步:计算用户相似度信息,并用矩阵形式存储...')

#Users(pe)

print('第2步完成...

')

print('第3步:计算用户社交关系信息,并存储...')

UserFriends(pe)

print('第3步完成...

')

print('第4步:计算event相似度信息,并用矩阵形式存储...')

Events(pe)

print('第4步完成...

')

至此,第四步完成,哪里有不明白的请留言