莫凡大佬的原文章https://mofanpy.com/tutorials/machine-learning/tensorflow/intro-RNN/

RNN 的用途

可以读取数据中的顺序,获取顺序的特点, 对于预测, 顺序排列是多么重要。 我们可以预测下一个按照一定顺序排列的字, 但是打乱顺序, 我们就没办法分析自己到底在说什么了。

序列数据

我们想象现在有一组序列数据 data 0,1,2,3. 在当预测 result0 的时候,我们基于的是 data0, 同样在预测其他数据的时候, 我们也都只单单基于单个的数据. 每次使用的神经网络都是同一个 NN. 不过这些数据是有关联 顺序的 , 就像在厨房做菜, 酱料 A要比酱料 B 早放, 不然就串味了. 所以普通的神经网络结构并不能让 NN 了解这些数据之间的关联.

处理序列数据的神经网络

如何让序列数据之间的关联也被分析呢?只能像人一样具有记忆能力,让神经网络也具备这种记住之前发生的事的能力。再分析 Data0 的时候, 我们把分析结果存入记忆start0. 然后当分析 data1的时候, NN会产生新的记忆start2, 但是新记忆和老记忆是没有联系的. 我们就简单的把老记忆调用过来, 一起分析. 如果继续分析更多的有序数据 , RNN就会把之前的记忆都累积起来, 一起分析。

RNN 的弊端

之前我们说RNN具有记忆能力但一般的RNN比较健忘,根据之前的说法RNN会记住 Data0; data1等等之后所有的start,太多了就记不清了。

为什么会这样呢?

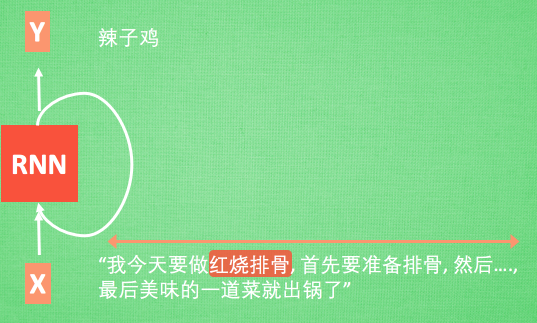

想像现在有这样一个 RNN, 他的输入值是一句话: ‘我今天要做红烧排骨, 首先要准备排骨, 然后…., 最后美味的一道菜就出锅了’, shua ~ 说着说着就流口水了. 现在请 RNN 来分析, 我今天做的到底是什么菜呢. RNN可能会给出“辣子鸡”这个答案. 由于判断失误, RNN就要开始学习 这个长序列 X 和 ‘红烧排骨’ 的关系 , 而RNN需要的关键信息 ”红烧排骨”却出现在句子开头,

再来看看 RNN是怎样学习的吧. 红烧排骨这个信息原的记忆要进过长途跋涉才能抵达最后一个时间点. 然后我们得到误差, 而且在 反向传递 得到的误差的时候, 他在每一步都会 乘以一个自己的参数 W. 如果这个 W 是一个小于1 的数, 比如0.9. 这个0.9 不断乘以误差, 误差传到初始时间点也会是一个接近于零的数, 所以对于初始时刻, 误差相当于就消失了. 我们把这个问题叫做梯度消失或者梯度弥散 Gradient vanishing. 反之如果 W 是一个大于1 的数, 比如1.1 不断累乘, 则到最后变成了无穷大的数, RNN被这无穷大的数撑死了, 这种情况我们叫做剃度爆炸, Gradient exploding. 这就是普通 RNN 没有办法回忆起久远记忆的原因.

分类例子

import tensorflow as tf from tensorflow.examples.tutorials.mnist import input_data # 数据 mnist = input_data.read_data_sets('MNIST_data', one_hot=True) # 超参 hyperparameters lr = 0.001 # 学习率 training_iters = 100000 # train step 上限 batch_size = 128 n_inputs = 28 # MNIST data input (img shape: 28*28) n_steps = 28 # time steps 一次输入28个像素(一整行),共28次(共28行) n_hidden_units = 128 # 隐藏层中的神经元 neurons in hidden layer n_classes = 10 # MNIST classes (0-9 digits) # 为网络输入定义占位符 x = tf.placeholder(tf.float32, [None, n_steps, n_inputs]) y = tf.placeholder(tf.float32, [None, n_classes]) # 定义权重 weights 和 biases weights = { #(28,128) "in": tf.Variable(tf.random_normal([n_inputs, n_hidden_units])), #(128,10) "out": tf.Variable(tf.random_normal([n_hidden_units, n_classes])) } biases = { # (128, ) 'in': tf.Variable(tf.constant(0.1, shape=[n_hidden_units, ])), # (10, ) 'out': tf.Variable(tf.constant(0.1, shape=[n_classes, ])) } # 接着开始定义 RNN 主体结构, 这个 RNN 总共有 3 个组成部分 # ( input_layer, cell, output_layer). def RNN(X, weights, biases): # 首先我们先定义 input_layer: # 原始的 X 是 3 维数据, 我们需要把它变成 2 维数据才能使用 weights 的矩阵乘法 # X ==> (128 batches * 28 steps, 28 inputs) X = tf.reshape(X,[-1, n_inputs]) # X_in = W*X + b # ==>(128 natch* 28 steps, 128 hidden) X_in = tf.matmul(X, weights['in']) + biases['in'] # X_in ==> (128 batch, 28 steps, 128 hidden) 换回三维 X_in = tf.reshape(X_in,[-1, n_steps, n_hidden_units]) # cell ########################################## # 因Tensorflow版本升级原因, state_is_tuple = True将在之后的版本中变为默认. # 对于lstm来说, state可被分为(c_state, h_state). LSTM_cell = tf.contrib.rnn.BasicLSTMCell(n_hidden_units, forget_bias=1.0, state_is_tuple=True) init_state = LSTM_cell.zero_state(batch_size, dtype=tf.float32) # 初始化全零 state # 使用tf.nn.dynamic_rnn(cell, inputs), 我们要确定inputs的格式. # tf.nn.dynamic_rnn中的time_major参数会针对不同inputs格式有不同的值. # 如果inputs为(batches, steps, inputs) == > time_major = False; # 如果inputs为(steps, batches, inputs) == > time_major = True; outputs, final_state = tf.nn.dynamic_rnn(LSTM_cell, X_in, initial_state=init_state, time_major=False) # hidden layer for output as the final results ############################################# 有两个方法 #法一: results = tf.matmul(final_state[1], weights['out']) + biases['out'] # or 法二 outputs = tf.unstack(tf.transpose(outputs, [1,0,2])) # transpose是进行三维张量转置,102代表第一第二维度交换,利用transpose将outputs的三个维度整理变成(n_steps,batch_size,output) results = tf.matmul(outputs[-1], weights['out']) + biases['out'] # shape = (128, 10) return results pred = RNN(x, weights, biases) cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=pred, labels=y)) train_op = tf.train.AdamOptimizer(lr).minimize(cost) # 利用tf.argmax()按行求出真实值y、预测值pred最大值的下标, # 用tf.equal()求出真实值和预测值相等的数量,也就是预测结果正确的数量, # tf.argmax()和tf.equal()一般是结合着用。 correct_pred = tf.equal(tf.argmax(pred, 1), tf.argmax(y, 1)) accuracy = tf.reduce_mean(tf.cast(correct_pred, tf.float32)) init = tf.global_variables_initializer() saver = tf.train.Saver(max_to_keep=1) # 创建saver对象来保存训练的模型 with tf.Session() as sess: sess.run(init) step = 0 max_acc = 0 is_train = True if is_train: while step * batch_size < training_iters: batch_xs, batch_ys = mnist.train.next_batch(batch_size) batch_xs = batch_xs.reshape([batch_size, n_steps, n_inputs]) sess.run([train_op], feed_dict={ x: batch_xs, y: batch_ys, }) if step % 20 == 0: test_acc = sess.run(accuracy, feed_dict={ x: batch_xs, y: batch_ys, }) print(test_acc) # 计算当前模型在测试集上准确率,最终保存准确率最高的一次模型 if test_acc > max_acc: max_acc = test_acc saver.save(sess, './ckptRNN/mnistRNNLSTM.ckpt', global_step=step) step += 1

回归例子

import tensorflow as tf import numpy as np import matplotlib.pyplot as plt import pandas as pd BATCH_START = 0 # 建立 batch data 时候的 index TIME_STEPS = 20 # 通过时间的反向传播time_steps backpropagation through time 的 time_steps BATCH_SIZE = 50 INPUT_SIZE = 1 # sin 数据输入 size OUTPUT_SIZE = 1 # cos 数据输出 size CELL_SIZE = 10 # RNN 的 hidden unit size LR = 0.006 # learning rate # 捏一个数据 def get_batch(): global BATCH_START, TIME_STEPS # xs shape (50batch, 20steps) xs = np.arange(BATCH_START, BATCH_START+TIME_STEPS*BATCH_SIZE).reshape((BATCH_SIZE, TIME_STEPS)) / (10*np.pi) seq = np.sin(xs) res = np.cos(xs) BATCH_START += TIME_STEPS # plt.plot(xs[0, :], res[0, :], 'r', xs[0, :], seq[0, :], 'b--') # plt.show() # returned seq, res and xs: shape (batch, step, input) return [seq[:, :, np.newaxis], res[:, :, np.newaxis], xs] class LSTMRNN(object): def __init__(self, n_steps, input_size, output_size, cell_size, batch_size): self.n_steps = n_steps # cell的个数 self.input_size = input_size #每一个cell输入的长度 self.output_size = output_size self.cell_size = cell_size # 每一个RNNcell隐藏神经元数量 self.batch_size = batch_size with tf.name_scope('inputs'): self.xs = tf.placeholder(tf.float32, [None, n_steps, input_size], name='xs') self.ys = tf.placeholder(tf.float32, [None, n_steps, output_size], name='ys') with tf.variable_scope('in_hidden'): self.add_input_layer() with tf.variable_scope('LSTM_cell'): self.add_cell() with tf.variable_scope('out_hidden'): self.add_output_layer() with tf.name_scope('cost'): self.compute_cost() with tf.name_scope('train'): self.train_op = tf.train.AdamOptimizer(LR).minimize(self.cost) def add_input_layer(self): l_in_x = tf.reshape(self.xs, [-1, self.input_size], name='2_2D') # (batch*n_step, in_size) (1000,1) # Ws (in_size, cell_size) (10,1) Ws_in = self._weight_variable([self.input_size, self.cell_size]) # bs (cell_size, ) (10,) bs_in = self._bias_variable([self.cell_size, ]) # l_in_y = (batch * n_steps, cell_size) (1000,10) with tf.name_scope('Wx_plus_b'): l_in_y = tf.matmul(l_in_x, Ws_in) + bs_in # reshape l_in_y ==> (batch, n_steps, cell_size) (50,20,10) self.l_in_y = tf.reshape(l_in_y, [-1, self.n_steps, self.cell_size], name='2_3D') def add_cell(self): lstm_cell = tf.contrib.rnn.BasicLSTMCell(self.cell_size, forget_bias=1.0, state_is_tuple=True) with tf.name_scope('initial_state'): self.cell_init_state = lstm_cell.zero_state(self.batch_size, dtype=tf.float32) self.cell_outputs, self.cell_final_state = tf.nn.dynamic_rnn( lstm_cell, self.l_in_y, initial_state=self.cell_init_state, time_major=False ) def add_output_layer(self): # shape = (batch * steps, cell_size) (50*20,10) l_out_x = tf.reshape(self.cell_outputs, [-1, self.cell_size], name='2_2D') Ws_out = self._weight_variable([self.cell_size, self.output_size]) bs_out = self._bias_variable([self.output_size, ]) # shape = (batch * steps, output_size) with tf.name_scope('Wx_plus_b'): self.pred = tf.matmul(l_out_x, Ws_out) + bs_out def compute_cost(self): losses = tf.contrib.legacy_seq2seq.sequence_loss_by_example( [tf.reshape(self.pred, [-1], name='reshape_pred')], [tf.reshape(self.ys, [-1], name='reshape_target')], [tf.ones([self.batch_size * self.n_steps], dtype=tf.float32)], average_across_timesteps=True, softmax_loss_function=self.ms_error, name='losses' ) with tf.name_scope('average_cost'): self.cost = tf.div( tf.reduce_sum(losses, name='losses_sum'), self.batch_size, name='average_cost') correct_pred = tf.equal(tf.argmax([tf.reshape(self.pred, [-1])], 1), tf.argmax([tf.reshape(self.ys, [-1])], 1)) tf.summary.scalar('cost', self.cost) @staticmethod def ms_error(labels, logits): return tf.square(tf.subtract(labels, logits)) def _weight_variable(self, shape, name='weights'): initializer = tf.random_normal_initializer(mean=0., stddev=1.,) return tf.get_variable(shape=shape, initializer=initializer, name=name) def _bias_variable(self, shape, name='biases'): initializer = tf.constant_initializer(0.1) return tf.get_variable(name=name, shape=shape, initializer=initializer) if __name__ == '__main__': # 搭建 LSTMRNN 模型 model = LSTMRNN(TIME_STEPS, INPUT_SIZE, OUTPUT_SIZE, CELL_SIZE, BATCH_SIZE) sess = tf.Session() merged = tf.summary.merge_all() writer = tf.summary.FileWriter("logs", sess.graph) init = tf.global_variables_initializer() sess.run(init) # relocate to the local dir and run this line to view it on Chrome (http://0.0.0.0:6006/): # $ tensorboard --logdir='logs' plt.ion() plt.show() # 训练 200 次 for i in range(200): seq, res, xs = get_batch()# 提取 batch data if i == 0: # 初始化 data feed_dict = { model.xs: seq, model.ys: res, # create initial state } else: feed_dict = { model.xs: seq, model.ys: res, model.cell_init_state: state # 保持 state 的连续性# use last state as the initial state for this run } # 训练 _, cost, state, pred = sess.run( [model.train_op, model.cost, model.cell_final_state, model.pred], feed_dict=feed_dict) # plotting plt.plot(xs[0, :], res[0].flatten(), 'r', xs[0, :], pred.flatten()[:TIME_STEPS], 'b--') plt.ylim((-1.2, 1.2)) plt.draw() plt.pause(0.3) if i % 20 == 0: print('cost: ', round(cost, 4)) result = sess.run(merged, feed_dict) writer.add_summary(result, i)