
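- Sending requests manually
  - yield scrapy.Request(url, callback) / FormRequest(url, callback, formdata)
- Deep crawling / whole-site crawling
- Passing parameters between requests:
  - Use case: the data to scrape is not all on the same page (deep crawling)
  - yield scrapy.Request(url, callback, meta={}) / FormRequest(url, callback, formdata, meta={})
  - In the callback: item = response.meta['item']
- start_requests(self):
  - Purpose: sends a GET request for each element of the start_urls list
- Core components
- PyExecJS: runs JavaScript code from Python
  - Requires a JavaScript runtime to be installed, e.g. Node.js
- JS obfuscation
- JS encryption
- robots.txt
- UA spoofing
- Proxies
- Cookies
- Dynamically changing request parameters
- CAPTCHAs
- Lazy-loaded images
- Dynamically loaded page data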

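Downloader middleware

- Purpose: intercepts, in one place, every request and response issued anywhere in the project
- Why intercept requests?
  - UA spoofing:
    - process_request: request.headers['User-Agent'] = xxx
  - Setting a proxy IP:
    - process_exception: request.meta['proxy'] = 'http://ip:port'
- Why intercept responses?
  - To tamper with the response data or replace the response object
- Note: middleware must be enabled manually in the settings file

NetEase News crawl

- Requirement: scrape the news titles and content under five NetEase News sections: domestic, international, military, aviation, and drones. Create a table with four fields (title, content, keys, type).
- Analysis:
  1. The news title data under each section is loaded dynamically.
  2. The data on the news detail pages is not loaded dynamically.
- Using selenium in scrapy
  - Instantiate a browser object in the spider's constructor
  - Override the closed(self, reason) method in the spider to close the browser object
  - In the downloader middleware's process_response, get the browser object and perform the browser automation

Whole-site crawling with CrawlSpider

- CrawlSpider is another kind of spider class; it is a subclass of Spider
- Create a CrawlSpider-based spider file:
  - scrapy genspider -t crawl spiderName www.xxx.com

Distributed crawling

- Concept: build a cluster of machines and have them crawl the same set of data jointly.
- Purpose: improves crawling efficiency
- How is it implemented?
  - scrapy + redis
  - scrapy combined with the scrapy-redis component
- Plain scrapy cannot crawl in a distributed way:
  - The scheduler cannot be shared across the cluster
  - The pipeline cannot be shared across the cluster
- What does scrapy-redis do?
  - It gives scrapy a scheduler and a pipeline that can be shared
- Why is the component called scrapy-redis?
  - The data crawled by the cluster must be stored in Redis
- Coding workflow:
  - pip install scrapy-redis
  - Create the spider file (CrawlSpider/Spider)
  - Modify the spider file:
    - Import the class provided by the scrapy-redis module
      - from scrapy_redis.spiders import RedisCrawlSpider
    - Set the spider class's parent class to RedisCrawlSpider
    - Delete allowed_domains and start_urls
    - Add a new attribute: redis_key = 'xxx'  # name of the shared scheduler queue
  - Configure the settings file:
    - Specify the pipeline: ITEM_PIPELINES = { 'scrapy_redis.pipelines.RedisPipeline': 400 }
    - Specify the scheduler: DUPEFILTER_CLASS = "scrapy_redis.dupefilter.RFPDupeFilter", SCHEDULER = "scrapy_redis.scheduler.Scheduler", SCHEDULER_PERSIST = True
    - Specify the database: REDIS_HOST = 'IP address of the Redis service', REDIS_PORT = 6379
  - Edit the Redis configuration file redis.windows.conf:
    - Line 56: comment out the bind directive (#bind 127.0.0.1)
    - Turn off protected mode: protected-mode no
  - Start the Redis service:
    - redis-server ./redis.windows.conf
    - redis-cli
  - Run the program: change into the directory that contains the spider file, then scrapy runspider xxx.py
  - Push a start URL into the scheduler queue:
    - The scheduler queue name is the value of redis_key
    - In redis-cli: lpush <queue name> http://www.xxx.com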
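The two downloader-middleware hooks from the notes (process_request for UA spoofing, process_exception for switching to a proxy) might look roughly like this. It is a sketch under assumptions: the class name, the User-Agent pool, and the proxy address are placeholders, and the request is retried by returning it from process_exception with dont_filter set so the duplicate filter does not drop it.

```python
import random
from types import SimpleNamespace

# Placeholder pools; neither list comes from the notes.
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/76.0.3809.87 Safari/537.36",
    "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/76.0.3809.87 Safari/537.36",
]
PROXIES = ["http://127.0.0.1:8888"]


class UAProxyDownloaderMiddleware:
    """Hypothetical middleware; a plain class with the hook methods is enough."""

    def process_request(self, request, spider):
        # Runs for every outgoing request: disguise the User-Agent.
        request.headers["User-Agent"] = random.choice(USER_AGENTS)
        return None  # let the request continue through the chain

    def process_exception(self, request, exception, spider):
        # Runs when the download failed: route the request through a proxy.
        request.meta["proxy"] = random.choice(PROXIES)
        # The request was already seen once, so bypass the duplicate filter.
        request.dont_filter = True
        return request  # returning a request asks Scrapy to schedule it again


# Offline check with a stand-in object instead of a real scrapy.Request.
fake_request = SimpleNamespace(headers={}, meta={}, dont_filter=False)
middleware = UAProxyDownloaderMiddleware()
middleware.process_request(fake_request, spider=None)
print(fake_request.headers["User-Agent"])
```

To take effect in a project, the class still has to be listed under DOWNLOADER_MIDDLEWARES in the settings file, as the note about enabling middleware manually says. A production version would also cap the number of retries to avoid looping on a dead proxy.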

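Distributed crawling

1. pip install scrapy-redis

2. Create the spider file

3. Modify the spider file

- Import the class provided by the scrapy-redis module

- from scrapy_redis.spiders import RedisCrawlSpider (only as an example)

- Set the spider class's parent class to RedisCrawlSpider

- Delete allowed_domains and start_urls

- Add a new attribute: redis_key = 'xxx'  # name of the scheduler queue that can be shared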

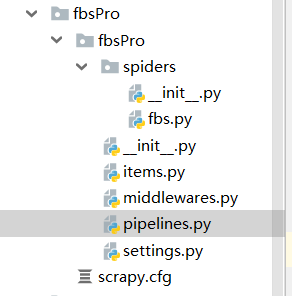

fbs.py

    # -*- coding: utf-8 -*-
    import scrapy
    from scrapy.linkextractors import LinkExtractor
    from scrapy.spiders import CrawlSpider, Rule
    from scrapy_redis.spiders import RedisCrawlSpider
    from fbsPro.items import FbsproItem


    class FbsSpider(RedisCrawlSpider):
        name = 'fbs'
        # allowed_domains = ['www.xxx.com']
        # start_urls = ['http://www.xxx.com/']
        redis_key = 'sunQueue'  # name of the scheduler queue that can be shared

        rules = (
            # Follow every pagination link and parse each page with parse_item
            Rule(LinkExtractor(allow=r'type=4&page=\d+'), callback='parse_item', follow=True),
        )

        def parse_item(self, response):
            tr_list = response.xpath('//*[@id="morelist"]/div/table[2]//tr/td/table//tr')
            for tr in tr_list:
                title = tr.xpath('./td[2]/a[2]/text()').extract_first()
                item = FbsproItem()
                item['title'] = title
                yield item
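The allow argument of the Rule is a regular expression that is matched against each extracted link, so it can be checked offline before running the spider. The sketch below uses made-up URLs and assumes the pattern is applied as a regex search; it also shows why the backslash in `\d+` matters.

```python
import re

ALLOW = r"type=4&page=\d+"   # "page=" followed by one or more digits
BROKEN = r"type=4&page=d+"   # without the backslash: "page=" followed by the letter d

# Made-up pagination URLs for illustration only.
urls = [
    "http://www.xxx.com/index.php?type=4&page=2",
    "http://www.xxx.com/index.php?type=4&page=30",
    "http://www.xxx.com/index.php?type=5&page=2",
]


def followed(pattern, candidates):
    """Return the URLs that the pattern would let the crawler follow."""
    return [u for u in candidates if re.search(pattern, u)]


print(followed(ALLOW, urls))
print(followed(BROKEN, urls))
```

With the escaped pattern only the two `type=4` pages are kept; the unescaped variant keeps none of them, which would leave the crawl stuck on the first page.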

settings configuration

- Specify the pipeline:

      ITEM_PIPELINES = {
          'scrapy_redis.pipelines.RedisPipeline': 400
      }

- Specify the scheduler:

      DUPEFILTER_CLASS = "scrapy_redis.dupefilter.RFPDupeFilter"
      SCHEDULER = "scrapy_redis.scheduler.Scheduler"
      SCHEDULER_PERSIST = True

- Specify the database:

      REDIS_HOST = 'IP address of the Redis service'
      REDIS_PORT = 6379

The complete settings file of the fbsPro project:

    BOT_NAME = 'fbsPro'

    SPIDER_MODULES = ['fbsPro.spiders']
    NEWSPIDER_MODULE = 'fbsPro.spiders'

    USER_AGENT = 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/76.0.3809.87 Safari/537.36'

    # Crawl responsibly by identifying yourself (and your website) on the user-agent
    #USER_AGENT = 'fbsPro (+http://www.yourdomain.com)'

    # Obey robots.txt rules
    ROBOTSTXT_OBEY = False

    # Configure maximum concurrent requests performed by Scrapy (default: 16)
    CONCURRENT_REQUESTS = 2

    # Configure a delay for requests for the same website (default: 0)
    # See https://docs.scrapy.org/en/latest/topics/settings.html#download-delay
    # See also autothrottle settings and docs
    #DOWNLOAD_DELAY = 3
    # The download delay setting will honor only one of:
    #CONCURRENT_REQUESTS_PER_DOMAIN = 16
    #CONCURRENT_REQUESTS_PER_IP = 16

    # Disable cookies (enabled by default)
    #COOKIES_ENABLED = False

    # Disable Telnet Console (enabled by default)
    #TELNETCONSOLE_ENABLED = False

    # Override the default request headers:
    #DEFAULT_REQUEST_HEADERS = {
    #   'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
    #   'Accept-Language': 'en',
    #}

    # Enable or disable spider middlewares
    # See https://docs.scrapy.org/en/latest/topics/spider-middleware.html
    #SPIDER_MIDDLEWARES = {
    #    'fbsPro.middlewares.FbsproSpiderMiddleware': 543,
    #}

    # Enable or disable downloader middlewares
    # See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html
    #DOWNLOADER_MIDDLEWARES = {
    #    'fbsPro.middlewares.FbsproDownloaderMiddleware': 543,
    #}

    # Enable or disable extensions
    # See https://docs.scrapy.org/en/latest/topics/extensions.html
    #EXTENSIONS = {
    #    'scrapy.extensions.telnet.TelnetConsole': None,
    #}

    # Configure item pipelines
    # See https://docs.scrapy.org/en/latest/topics/item-pipeline.html
    #ITEM_PIPELINES = {
    #    'fbsPro.pipelines.FbsproPipeline': 300,
    #}

    # Enable and configure the AutoThrottle extension (disabled by default)
    # See https://docs.scrapy.org/en/latest/topics/autothrottle.html
    #AUTOTHROTTLE_ENABLED = True
    # The initial download delay
    #AUTOTHROTTLE_START_DELAY = 5
    # The maximum download delay to be set in case of high latencies
    #AUTOTHROTTLE_MAX_DELAY = 60
    # The average number of requests Scrapy should be sending in parallel to
    # each remote server
    #AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0
    # Enable showing throttling stats for every response received:
    #AUTOTHROTTLE_DEBUG = False

    # Enable and configure HTTP caching (disabled by default)
    # See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings
    #HTTPCACHE_ENABLED = True
    #HTTPCACHE_EXPIRATION_SECS = 0
    #HTTPCACHE_DIR = 'httpcache'
    #HTTPCACHE_IGNORE_HTTP_CODES = []
    #HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'

    ITEM_PIPELINES = {
        'scrapy_redis.pipelines.RedisPipeline': 400
    }

    # Deduplication filter class: stores request fingerprints in a Redis set,
    # which makes request deduplication persistent
    DUPEFILTER_CLASS = "scrapy_redis.dupefilter.RFPDupeFilter"
    # Use the scheduler that ships with the scrapy-redis component
    SCHEDULER = "scrapy_redis.scheduler.Scheduler"
    # Whether the scheduler state is persisted, i.e. whether the request queue and
    # the fingerprint set in Redis are kept when the spider finishes.
    # True keeps the data; False clears it.
    SCHEDULER_PERSIST = True

    REDIS_HOST = '192.168.16.53'
    REDIS_PORT = 6379
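The idea behind the deduplication setting, storing one fingerprint per request in a set and skipping requests whose fingerprint is already present, can be illustrated without Redis. The sketch below is a simplification and not the scrapy-redis implementation: the fingerprint function is a hypothetical one built on SHA-1, and a Python set stands in for the Redis set that the cluster would share.

```python
import hashlib


def request_fingerprint(method, url, body=b""):
    """Hypothetical fingerprint: a SHA-1 digest over method, URL and body."""
    digest = hashlib.sha1()
    digest.update(method.upper().encode("utf-8"))
    digest.update(url.encode("utf-8"))
    digest.update(body)
    return digest.hexdigest()


class SetDupeFilter:
    """In-memory stand-in for a set-based duplicate filter."""

    def __init__(self):
        # In a distributed setup this would be a Redis set visible to every worker.
        self.seen = set()

    def request_seen(self, method, url, body=b""):
        fingerprint = request_fingerprint(method, url, body)
        if fingerprint in self.seen:
            return True  # already scheduled by this or another worker
        self.seen.add(fingerprint)
        return False


dupefilter = SetDupeFilter()
print(dupefilter.request_seen("GET", "http://www.xxx.com/?type=4&page=1"))
print(dupefilter.request_seen("GET", "http://www.xxx.com/?type=4&page=1"))
```

Because the set lives outside any single process in the real setup, a URL queued by one machine is recognised as seen by all the others, and with SCHEDULER_PERSIST = True the set also survives a restart.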

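- Edit the Redis configuration file redis.windows.conf:
  - Line 56: comment out the bind directive (#bind 127.0.0.1)
  - Turn off protected mode: protected-mode no

- Start the Redis service:
  - redis-server ./redis.windows.conf
  - redis-cli

- Run the program:
  - Change into the directory that contains the spider file, then run: scrapy runspider xxx.py

- Push a start URL into the scheduler queue:
  - The scheduler queue name is the value of redis_key
  - In redis-cli: lpush <queue name> http://www.xxx.com (the URL needs its scheme, otherwise the request cannot be built)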
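Seeding the queue can also be done from Python instead of redis-cli. The helper below is a sketch, not part of the notes: push_start_url and InMemoryClient are hypothetical names, and the helper only assumes a client object with an lpush(name, *values) method, which is the shape redis-py's Redis client offers. It rejects URLs without a scheme, since a bare www.xxx.com cannot be turned into a request.

```python
from urllib.parse import urlparse


class InMemoryClient:
    """Stand-in for a Redis client so the sketch runs without a server."""

    def __init__(self):
        self.lists = {}

    def lpush(self, name, *values):
        # Like LPUSH: insert each value at the head and return the new length.
        items = self.lists.setdefault(name, [])
        for value in values:
            items.insert(0, value)
        return len(items)


def push_start_url(client, redis_key, url):
    """Push one start URL onto the shared scheduler queue named by redis_key."""
    if urlparse(url).scheme not in ("http", "https"):
        raise ValueError(f"start URL needs an http(s) scheme: {url!r}")
    return client.lpush(redis_key, url)


client = InMemoryClient()
push_start_url(client, "sunQueue", "http://www.xxx.com")
print(client.lists)
```

Against a real server the stand-in would be replaced by a client connected to the REDIS_HOST and REDIS_PORT from the settings file, and the queue name must match the spider's redis_key.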

items.py

    import scrapy


    class FbsproItem(scrapy.Item):
        # define the fields for your item here like:
        title = scrapy.Field()
        # pass