Given the link newsUrl of a news article, retrieve all of the article's information:

Title, author, publishing unit, reviewer, source

Publication time: convert it to a datetime object
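The publication time and the labelled fields all sit in the page's `.show-info` text. Below is a minimal sketch, not the original author's method. The hypothetical helper `parse_show_info` pulls the fields out by label instead of by position and converts the time with `datetime.strptime`. It assumes the time uses the `YYYY-MM-DD HH:MM:SS` layout and that each field appears as `label:value`. Both the ASCII `:` and the full-width `：` colon are accepted, because the exact character on the live page is not confirmed here. The sample string and the names in it are made up.

```python
import re
from datetime import datetime

# Hypothetical mapping from the page's Chinese labels to English keys (an assumption).
LABELS = {'作者': 'author', '发布单位': 'publisher', '审核': 'reviewer', '来源': 'source'}

def parse_show_info(info):
    """Hypothetical helper: split the .show-info text into a dict of fields."""
    # Treat non-breaking spaces (\xa0) as ordinary separators.
    info = info.replace('\xa0', ' ')
    result = {}
    # Publication time, assumed to be in '%Y-%m-%d %H:%M:%S' format.
    m = re.search(r'发布时间[:：]\s*(\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2})', info)
    if m:
        result['time'] = datetime.strptime(m.group(1), '%Y-%m-%d %H:%M:%S')
    # Every other field is "label:value"; a missing label is simply skipped.
    for label, key in LABELS.items():
        m = re.search(label + r'[:：]\s*(\S+)', info)
        if m:
            result[key] = m.group(1)
    return result

# Made-up sample in the assumed layout.
sample = '发布时间:2019-03-21 17:39:33\xa0\xa0作者:张三\xa0\xa0审核:李四\xa0\xa0来源:王五'
info = parse_show_info(sample)
print(info['time'], info['author'])  # 2019-03-21 17:39:33 张三
```

Looking fields up by label keeps working when a field is absent. Indexing `split()[2]` would silently shift onto the wrong field in that case.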

Click count:

- newsUrl

- newsId (extracted with a regular expression, using re)

- clickUrl(str.format(newsId))

- requests.get(clickUrl)

- newClick (parsed with string methods or a regular expression)

- int()

Wrap the whole process in a simple, clear function.
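The click-count steps listed above map onto a few small functions. This sketch assumes, as the original `click()` does, that the last integer in the count API's reply is the click total. The helper names `news_id`, `parse_click` and `get_click` and the sample reply are hypothetical. The HTTP call is passed in as `fetch` so the parsing can be tried offline. Against the live site you would pass `lambda u: requests.get(u).text`.

```python
import re

# Count API URL taken from the original code; {} receives the news ID.
CLICK_URL = 'http://oa.gzcc.cn/api.php?op=count&id={}&modelid=80'

def news_id(news_url):
    """newsUrl -> newsId: the digits right before '.html'."""
    m = re.search(r'/(\d+)\.html$', news_url)
    if m is None:
        raise ValueError('no news ID in ' + news_url)
    return m.group(1)

def parse_click(text):
    """Response text -> int, assuming the last integer is the click count."""
    numbers = re.findall(r'\d+', text)
    if not numbers:
        raise ValueError('no number in response')
    return int(numbers[-1])

def get_click(news_url, fetch):
    """newsUrl -> newsId -> clickUrl -> fetch(clickUrl) -> newClick -> int."""
    click_url = CLICK_URL.format(news_id(news_url))
    return parse_click(fetch(click_url))
```

Injecting `fetch` keeps the network call out of the parsing logic. It also lets you swap in a session or add a timeout without touching the parsing.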

Try scraping a web page that interests you.

import re

import requests

from bs4 import BeautifulSoup

def newsnum(url):

newsid = re.match('http://news.gzcc.cn/html/2019/meitishijie_0321/(d+).html', url).group(1)

return newsid

def newstime(soup):

pattern1 = re.compile(r'发布时间:(.*?)xa0', re.S)

time = re.findall(pattern1, soup.select('.show-info')[0].text)[0]

return time

def click(url):

id = re.findall('(d{5})', url)[0]

clickUrl = 'http://oa.gzcc.cn/api.php?op=count&id={}&modelid=80'.format(id)

res = requests.get(clickUrl)

click = re.findall('(d+)', res.text)[-1]

return click

def main(url):

res = requests.get(url)

res.encoding = 'utf-8'

soup = BeautifulSoup(res.text, 'html.parser')

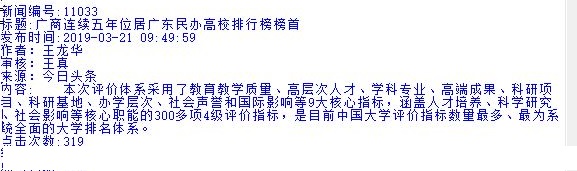

print("新闻编号:" + newsnum(url)) # 新闻编号id

print("标题:" + soup.select('.show-title')[0].text) # 标题

print("发布时间:" + newstime(soup)) # 发布时间

print(soup.select('.show-info')[0].text.split()[2]) # 作者

print(soup.select('.show-info')[0].text.split()[3]) # 审核

print(soup.select('.show-info')[0].text.split()[4]) # 来源

print("内容:" + soup.select('.show-content p')[0].text) # 内容

print("点击次数:" + click(url))

if __name__ == "__main__":

url = "http://news.gzcc.cn/html/2019/meitishijie_0321/11033.html"

main(url)