1. Check the PyTorch version

import torch

print(torch.__version__)

2. Count the model's parameters

model = FPN()

num_params = sum(p.numel() for p in model.parameters())

print("num of params: {:.2f}k".format(num_params/1000.0))

# numel() returns the total number of elements in a tensor

3. Print the model

model = FPN()

num_params = sum(p.numel() for p in model.parameters())

print("num of params: {:.2f}k".format(num_params/1000.0))

print("===========================")

# for name, p in model.named_parameters():

#     print(name)

print(model)

4. with torch.no_grad()

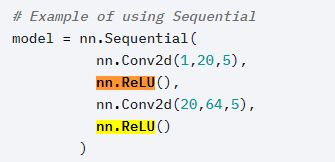
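A minimal sketch of what the context manager does, using a toy tensor rather than a real model: operations run inside `torch.no_grad()` are not recorded by autograd, which is why it is commonly used for inference and evaluation.

```python
import torch

x = torch.ones(3, requires_grad=True)

# Normal mode: operations on x are recorded for autograd.
y = x * 2
print(y.requires_grad)  # True

# Inside no_grad, results are not tracked, which saves memory during inference.
with torch.no_grad():
    z = x * 2
print(z.requires_grad)  # False
```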

5. Activation function torch.nn.ReLU(inplace=False)

- Official documentation: https://pytorch.org/docs/stable/nn.html#relu

- What inplace does: https://www.cnblogs.com/wanghui-garcia/p/10642665.html
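The difference between the two settings can be sketched on a small tensor (the values are chosen only for illustration): with `inplace=False` the input is left as it was, while with `inplace=True` the input tensor itself is overwritten.

```python
import torch
import torch.nn as nn

x = torch.tensor([-1.0, 0.0, 2.0])

# inplace=False (the default): the input is untouched and a new tensor is returned.
out = nn.ReLU(inplace=False)(x)
print(x.tolist())    # [-1.0, 0.0, 2.0]
print(out.tolist())  # [0.0, 0.0, 2.0]

# inplace=True: the input tensor is overwritten, saving one allocation.
x2 = torch.tensor([-1.0, 0.0, 2.0])
out2 = nn.ReLU(inplace=True)(x2)
print(x2.tolist())   # [0.0, 0.0, 2.0]
```

In-place mode saves memory, but it should only be used when the original input values are no longer needed elsewhere in the graph.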

6. torch.nn.Sequential
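A minimal sketch of `nn.Sequential`, assuming a small fully connected network (the layer sizes are arbitrary): the container calls its submodules in the order they were listed.

```python
import torch
import torch.nn as nn

# Layers run in the order they are listed; the output of one feeds the next.
net = nn.Sequential(
    nn.Linear(4, 8),
    nn.ReLU(),
    nn.Linear(8, 2),
)

x = torch.randn(5, 4)   # batch of 5 samples, 4 features each
y = net(x)
print(y.shape)          # torch.Size([5, 2])
print(net[0])           # submodules can be indexed like a list
```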

7. Saving and loading models

https://zhuanlan.zhihu.com/p/76604532

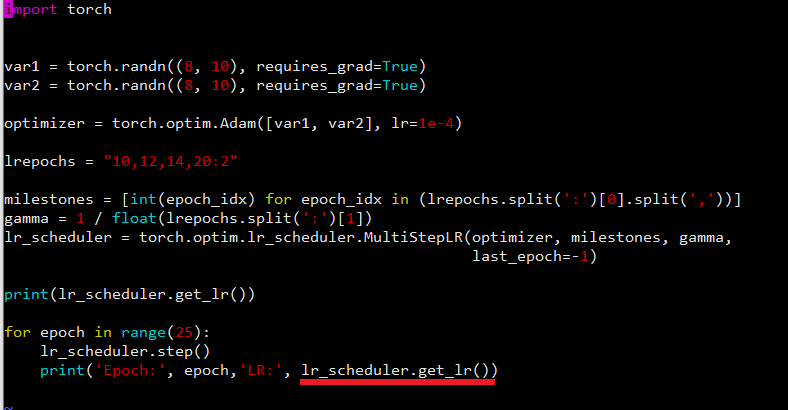
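One common pattern is to save only the `state_dict` and restore it into a freshly built model. The sketch below assumes a stand-in `nn.Linear` model and a hypothetical file name `model.pth`; it is not necessarily the approach shown in the linked article.

```python
import os
import tempfile

import torch
import torch.nn as nn

model = nn.Linear(4, 2)  # stand-in model for illustration

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "model.pth")  # hypothetical file name

    # Save only the parameters (state_dict), not the whole pickled module.
    torch.save(model.state_dict(), path)

    # To load: rebuild the same architecture, then restore the parameters.
    restored = nn.Linear(4, 2)
    restored.load_state_dict(torch.load(path))

restored.eval()  # switch to inference mode before evaluating
```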

8. How to adjust the learning rate
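Two usual ways to do this are sketched below, assuming an SGD optimizer on a toy model: a scheduler from `torch.optim.lr_scheduler` (here `StepLR`), or setting `lr` by hand on each parameter group. The step size, decay factor, and learning rates are illustrative values only.

```python
import torch
import torch.nn as nn

model = nn.Linear(4, 2)  # stand-in model
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

# Option 1: a scheduler; the learning rate is multiplied by 0.1 every 2 epochs.
scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=2, gamma=0.1)
lrs = []
for epoch in range(4):
    loss = model(torch.randn(8, 4)).sum()
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()     # update the parameters first
    scheduler.step()     # then advance the schedule
    lrs.append(optimizer.param_groups[0]["lr"])
print(lrs)               # roughly 0.1, 0.01, 0.01, 0.001

# Option 2: set the learning rate by hand on every parameter group.
for group in optimizer.param_groups:
    group["lr"] = 0.001
```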

9. nn.ModuleList
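A minimal sketch of `nn.ModuleList`, using a hypothetical `MLP` class: the list registers every layer it holds, so their parameters are visible to `model.parameters()`, but unlike `nn.Sequential` it has no `forward()` of its own.

```python
import torch
import torch.nn as nn

class MLP(nn.Module):  # hypothetical example module
    def __init__(self, dim=4, depth=3):
        super().__init__()
        # ModuleList registers each layer, so their parameters show up in
        # model.parameters(); a plain Python list would hide them.
        self.layers = nn.ModuleList([nn.Linear(dim, dim) for _ in range(depth)])

    def forward(self, x):
        # ModuleList has no forward(); iterate over the layers explicitly.
        for layer in self.layers:
            x = torch.relu(layer(x))
        return x

model = MLP()
out = model(torch.randn(2, 4))
print(len(list(model.parameters())))  # 6: a weight and a bias per layer
```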

10. detach(): detaches a tensor from the computation graph, cutting off backpropagation through it

https://www.cnblogs.com/wanghui-garcia/p/10677071.html
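The effect on gradients can be sketched with a two-branch toy computation (values chosen for illustration): the detached branch contributes to the loss value but not to the gradient.

```python
import torch

x = torch.tensor([1.0, 2.0], requires_grad=True)
y = x * 2
z = y.detach()           # same values as y, but cut off from the graph

print(y.requires_grad)   # True
print(z.requires_grad)   # False

# Gradients flow back through y but not through z.
loss = (y + z).sum()
loss.backward()
print(x.grad.tolist())   # [2.0, 2.0]: only the y branch contributes
```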

11. 2D convolution torch.nn.Conv2d

torch.nn.Conv2d(in_channels, out_channels, kernel_size, stride=1, padding=0, dilation=1, groups=1, bias=True, padding_mode='zeros')
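A short sketch of how the main arguments affect the output shape, assuming a 32x32 RGB-like input (the channel counts are arbitrary):

```python
import torch
import torch.nn as nn

conv = nn.Conv2d(in_channels=3, out_channels=16, kernel_size=3, stride=1, padding=1)

x = torch.randn(1, 3, 32, 32)   # (batch, channels, height, width)
y = conv(x)
print(y.shape)                  # torch.Size([1, 16, 32, 32])

# Output size per spatial dimension:
# floor((H + 2*padding - dilation*(kernel_size - 1) - 1) / stride) + 1
strided = nn.Conv2d(3, 16, kernel_size=3, stride=2, padding=1)
y2 = strided(x)
print(y2.shape)                 # torch.Size([1, 16, 16, 16])
```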

12. torch.nn.Unfold()
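`nn.Unfold` extracts sliding local blocks from a batched input and flattens each block into a column. A minimal sketch on a 4x4 single-channel input, with non-overlapping 2x2 patches chosen for readability:

```python
import torch
import torch.nn as nn

unfold = nn.Unfold(kernel_size=2, stride=2)

x = torch.arange(16.0).reshape(1, 1, 4, 4)  # (N, C, H, W)
patches = unfold(x)

# Shape is (N, C * kernel_h * kernel_w, L), where L is the number of patches.
print(patches.shape)                # torch.Size([1, 4, 4])
print(patches[0, :, 0].tolist())    # [0.0, 1.0, 4.0, 5.0]: the top-left 2x2 patch
```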

13. Print the network architecture
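Besides `print(model)`, the architecture can be inspected layer by layer. The sketch below assumes a small stand-in `nn.Sequential` network and uses `named_children()` and `named_parameters()`:

```python
import torch.nn as nn

# Stand-in model for illustration.
net = nn.Sequential(nn.Conv2d(3, 8, 3), nn.ReLU(), nn.Conv2d(8, 16, 3))

print(net)  # nested summary of all submodules

# Per-layer view: name and module type.
for name, module in net.named_children():
    print(name, type(module).__name__)

# Per-parameter view: name and shape.
for name, p in net.named_parameters():
    print(name, tuple(p.shape))
```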

14. Freeze part of the model's parameters
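One common way to do this, sketched on a stand-in model, is to set `requires_grad = False` on the parameters to freeze and pass only the remaining ones to the optimizer. Which layers to freeze is an assumption made for illustration.

```python
import torch
import torch.nn as nn

# Stand-in model for illustration.
model = nn.Sequential(nn.Linear(4, 8), nn.ReLU(), nn.Linear(8, 2))

# Freeze the first layer: its parameters receive no gradients.
for p in model[0].parameters():
    p.requires_grad = False

# Hand only the trainable parameters to the optimizer.
trainable = [p for p in model.parameters() if p.requires_grad]
optimizer = torch.optim.SGD(trainable, lr=0.01)

model(torch.randn(3, 4)).sum().backward()
print(model[0].weight.grad)              # None: frozen
print(model[2].weight.grad is not None)  # True: still trained
```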

15. torch.unbind

Removes a tensor dimension and returns a tuple of all slices along that dimension.
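A small example on a 2x3 tensor, showing the effect of the `dim` argument:

```python
import torch

x = torch.tensor([[1, 2, 3],
                  [4, 5, 6]])

rows = torch.unbind(x, dim=0)   # tuple of 2 tensors, each of shape (3,)
cols = torch.unbind(x, dim=1)   # tuple of 3 tensors, each of shape (2,)

print(type(rows), len(rows))    # <class 'tuple'> 2
print(rows[0].tolist())         # [1, 2, 3]
print(cols[0].tolist())         # [1, 4]
```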

16. torch.clamp

- torch.clamp(input, min, max)

- torch.clamp(input, min=MIN)

- torch.clamp(input, max=MAX)
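The three call forms above can be compared on one small tensor (values chosen for illustration): elements below `min` are raised to `min`, and elements above `max` are lowered to `max`.

```python
import torch

x = torch.tensor([-2.0, -0.5, 0.5, 2.0])

both = torch.clamp(x, min=-1.0, max=1.0)
lower_only = torch.clamp(x, min=0.0)
upper_only = torch.clamp(x, max=0.0)

print(both.tolist())        # [-1.0, -0.5, 0.5, 1.0]
print(lower_only.tolist())  # [0.0, 0.0, 0.5, 2.0]
print(upper_only.tolist())  # [-2.0, -0.5, 0.0, 0.0]
```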