Source of this assignment: https://edu.cnblogs.com/campus/gzcc/GZCC-16SE1/homework/2822

Chinese Word Frequency Statistics

1. Download a full-length Chinese novel.

《追风筝的人》.txt (The Kite Runner)

2. Read the text to be analyzed from the file.
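A minimal sketch of this step: the helper name `read_text` and the UTF-8 default are assumptions, not part of the assignment. A text file saved on Windows may be GBK-encoded instead, in which case a different `encoding` value should be passed.

```python
from pathlib import Path


def read_text(path, encoding="utf-8"):
    """Return the whole file as a single string (hypothetical helper)."""
    # With the default strict error handling, a wrong encoding guess raises
    # UnicodeDecodeError instead of silently producing garbled text.
    return Path(path).read_text(encoding=encoding)
```

For example, `text = read_text('《追风筝的人》.txt')` would load the novel into `text` for the segmentation step.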

3. Install jieba and use it for Chinese word segmentation.

pip install jieba

import jieba

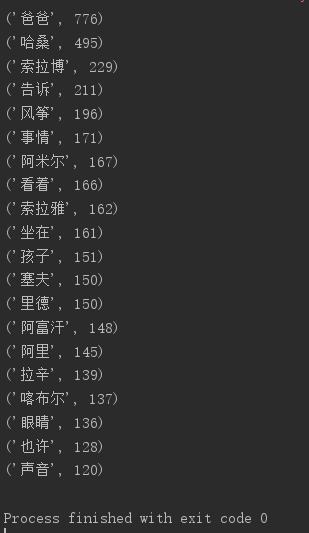

jieba.lcut(text)

4. Update the dictionary by adding vocabulary specific to the text being analyzed.

jieba.add_word('天罡北斗阵')  # add words one at a time

jieba.load_userdict(word_dict)  # word_dict: a dictionary text file

Reference dictionaries can be downloaded from: https://pinyin.sogou.com/dict/

Conversion code: scel_to_text
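Once the extra vocabulary is available as a plain list of words, it can be written in the format that `jieba.load_userdict` reads: a UTF-8 text file with one entry per line, where each entry is a word optionally followed by a frequency and a part-of-speech tag. The sketch below writes only the word; the helper name `write_userdict` and the file name in the usage note are assumptions.

```python
def write_userdict(words, path):
    """Write one word per line to a UTF-8 user dictionary file (hypothetical helper)."""
    with open(path, "w", encoding="utf-8") as f:
        for word in words:
            word = word.strip()
            if word:  # skip blank entries
                f.write(word + "\n")
```

After `write_userdict(['天罡北斗阵'], 'userdict.txt')`, the file can be loaded with `jieba.load_userdict('userdict.txt')`.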

5. Generate the word frequency counts.

6. Sort by frequency.
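Counting and sorting can be sketched with the standard library's `collections.Counter`. The token list here is toy data standing in for the output of `jieba.lcut(text)`, and `count_words` is a hypothetical helper name.

```python
from collections import Counter


def count_words(tokens):
    """Count how often each token occurs."""
    return Counter(tokens)


# Toy token list standing in for the output of jieba.lcut(text).
tokens = ["风筝", "阿米尔", "风筝", "哈桑", "风筝", "哈桑"]
counts = count_words(tokens)

# most_common() returns (word, count) pairs sorted by count, highest first.
ranked = counts.most_common()
print(ranked)  # [('风筝', 3), ('哈桑', 2), ('阿米尔', 1)]
```

This is equivalent to building a dict with `get(word, 0) + 1` and sorting its items with `key=lambda x: x[1], reverse=True`.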

7. Exclude grammatical words, that is, stop words such as pronouns, articles, and conjunctions.

stops

tokens=[token for token in wordsls if token not in stops]
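One way to build `stops` is to read a stop word file with one word per line into a set, so that each membership test is fast. This is a sketch under that assumption about the file layout; `load_stopwords` and `remove_stopwords` are hypothetical helper names.

```python
def load_stopwords(path, encoding="utf-8"):
    """Read one stop word per line into a set, ignoring blank lines."""
    with open(path, encoding=encoding) as f:
        return {line.strip() for line in f if line.strip()}


def remove_stopwords(tokens, stops):
    """Keep tokens that are neither stop words nor pure whitespace."""
    return [t for t in tokens if t.strip() and t not in stops]
```

Dropping whitespace-only tokens also removes the newlines and spaces that segmentation returns as separate tokens.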

8. Output the top 20 most frequent words and save the results to a file.

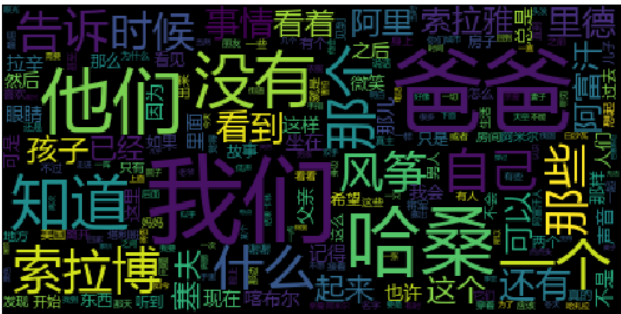
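Saving the top 20 can also be done with the standard `csv` module rather than pandas. In this sketch the helper name `save_top_words`, the column headers, and the `utf-8-sig` encoding are assumptions; the byte order mark helps spreadsheet programs detect the encoding of Chinese text.

```python
import csv
from collections import Counter


def save_top_words(counts, path, n=20):
    """Write the n most frequent (word, count) pairs to a CSV file."""
    top = Counter(counts).most_common(n)
    with open(path, "w", newline="", encoding="utf-8-sig") as f:
        writer = csv.writer(f)
        writer.writerow(["word", "count"])  # header row
        writer.writerows(top)
    return top
```

`counts` may be a plain dict of word frequencies or a `Counter`; the function returns the rows it wrote so they can also be printed.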

9. Generate a word cloud.

10. Complete code:

    # -*- coding: utf-8 -*-
    from wordcloud import WordCloud
    import matplotlib.pyplot as plt
    import pandas as pd
    import jieba


    def stopwordslist():
        """Load the stop words, one per line."""
        with open('F:stops_chinese.txt', encoding='UTF-8') as f:
            return {line.strip() for line in f}


    with open('cipher.txt', 'r', encoding='utf-8') as f:
        txt = f.read()

    stopwords = stopwordslist()
    wordsls = jieba.lcut(txt)

    # Count every token that is not a stop word and is not a single character.
    wcdict = {}
    for word in wordsls:
        if word in stopwords or len(word) == 1:
            continue
        wcdict[word] = wcdict.get(word, 0) + 1

    # Sort by frequency, highest first, and print the top 20.
    wcls = list(wcdict.items())
    wcls.sort(key=lambda x: x[1], reverse=True)
    for item in wcls[:20]:
        print(item)

    # Build the word cloud from the space-joined tokens.
    # Note: WordCloud needs font_path set to a font with Chinese glyphs
    # in order to render Chinese text.
    cut_text = " ".join(wordsls)
    # print(cut_text)
    mywc = WordCloud().generate(cut_text)
    plt.imshow(mywc)
    plt.axis("off")
    plt.show()

    # Save the full frequency table to a CSV file.
    pd.DataFrame(data=wcls).to_csv('dldl.csv', encoding='utf-8')