在模型训练部分,为了保证模型的真实效果,我们需要对模型进行一些调试和优化,主要分为以下五个环节:

-

计算分类准确率,观测模型训练效果。

交叉熵损失函数只能作为优化目标,无法直接准确衡量模型的训练效果。准确率可以直接衡量训练效果,但由于其离散性质,不适合做为损失函数优化神经网络。

-

检查模型训练过程,识别潜在问题。

如果模型的损失或者评估指标表现异常,我们通常需要打印模型每一层的输入和输出来定位问题,分析每一层的内容来获取错误的原因。

-

加入校验或测试,更好评价模型效果。

理想的模型训练结果是在训练集和验证集上均有较高的准确率,如果训练集上的准确率高于验证集,说明网络训练程度不够;如果验证集的准确率高于训练集,可能是发生了 过拟合现象。通过在优化目标中加入正则化项的办法,可以解决过拟合的问题。

-

加入正则化项,避免模型过拟合。

-

可视化分析。

用户不仅可以通过打印或使用matplotlib库作图,飞桨还集成了更专业的第三方绘图库tb-paddle,提供便捷的可视化分析。

1. 计算模型的分类准确率

飞桨提供了便利的计算分类准确率的API,使用fluid.layers.accuracy(input, label)可以直接计算准确率,该API的输入为预测的分类结果input和对应的标签label。

在下述代码中,我们在模型前向计算过程forward函数中计算分类准确率(132/140行)。

1 # 加载相关库 2 import os 3 import random 4 import paddle 5 import paddle.fluid as fluid 6 from paddle.fluid.dygraph.nn import Conv2D, Pool2D, FC 7 import numpy as np 8 from PIL import Image 9 10 import gzip 11 import json 12 13 # 定义数据集读取器 14 def load_data(mode='train'): 15 16 # 读取数据文件 17 datafile = './work/mnist.json.gz' 18 print('loading mnist dataset from {} ......'.format(datafile)) 19 data = json.load(gzip.open(datafile)) 20 # 读取数据集中的训练集,验证集和测试集 21 train_set, val_set, eval_set = data 22 23 # 数据集相关参数,图片高度IMG_ROWS, 图片宽度IMG_COLS 24 IMG_ROWS = 28 25 IMG_COLS = 28 26 # 根据输入mode参数决定使用训练集,验证集还是测试 27 if mode == 'train': 28 imgs = train_set[0] 29 labels = train_set[1] 30 elif mode == 'valid': 31 imgs = val_set[0] 32 labels = val_set[1] 33 elif mode == 'eval': 34 imgs = eval_set[0] 35 labels = eval_set[1] 36 # 获得所有图像的数量 37 imgs_length = len(imgs) 38 # 验证图像数量和标签数量是否一致 39 assert len(imgs) == len(labels), 40 "length of train_imgs({}) should be the same as train_labels({})".format( 41 len(imgs), len(labels)) 42 43 index_list = list(range(imgs_length)) 44 45 # 读入数据时用到的batchsize 46 BATCHSIZE = 100 47 48 # 定义数据生成器 49 def data_generator(): 50 # 训练模式下,打乱训练数据 51 if mode == 'train': 52 random.shuffle(index_list) 53 imgs_list = [] 54 labels_list = [] 55 # 按照索引读取数据 56 for i in index_list: 57 # 读取图像和标签,转换其尺寸和类型 58 img = np.reshape(imgs[i], [1, IMG_ROWS, IMG_COLS]).astype('float32') 59 label = np.reshape(labels[i], [1]).astype('int64') 60 imgs_list.append(img) 61 labels_list.append(label) 62 # 如果当前数据缓存达到了batch size,就返回一个批次数据 63 if len(imgs_list) == BATCHSIZE: 64 yield np.array(imgs_list), np.array(labels_list) 65 # 清空数据缓存列表 66 imgs_list = [] 67 labels_list = [] 68 69 # 如果剩余数据的数目小于BATCHSIZE, 70 # 则剩余数据一起构成一个大小为len(imgs_list)的mini-batch 71 if len(imgs_list) > 0: 72 yield np.array(imgs_list), np.array(labels_list) 73 74 return data_generator 75 76 77 # 定义模型结构 78 class MNIST(fluid.dygraph.Layer): 79 def __init__(self, name_scope): 80 super(MNIST, self).__init__(name_scope) 81 name_scope = self.full_name() 82 # 定义卷积层,输出通道20,卷积核大小为5,步长为1,padding为2,使用relu激活函数 83 self.conv1 = Conv2D(name_scope, num_filters=20, filter_size=5, stride=1, padding=2, act='relu') 84 # 定义池化层,池化核为2,采用最大池化方式 85 self.pool1 = Pool2D(name_scope, pool_size=2, pool_stride=2, pool_type='max') 86 # 定义卷积层,输出通道20,卷积核大小为5,步长为1,padding为2,使用relu激活函数 87 self.conv2 = Conv2D(name_scope, num_filters=20, filter_size=5, stride=1, padding=2, act='relu') 88 # 定义池化层,池化核为2,采用最大池化方式 89 self.pool2 = Pool2D(name_scope, pool_size=2, pool_stride=2, pool_type='max') 90 # 定义全连接层,输出节点数为10,激活函数使用softmax 91 self.fc = FC(name_scope, size=10, act='softmax') 92 93 # 定义网络的前向计算过程 94 def forward(self, inputs, label=None): 95 x = self.conv1(inputs) 96 x = self.pool1(x) 97 x = self.conv2(x) 98 x = self.pool2(x) 99 x = self.fc(x) 100 if label is not None: 101 acc = fluid.layers.accuracy(input=x, label=label) 102 return x, acc 103 else: 104 return x 105 106 #调用加载数据的函数 107 train_loader = load_data('train') 108 109 #在使用GPU机器时,可以将use_gpu变量设置成True 110 use_gpu = True 111 place = fluid.CUDAPlace(0) if use_gpu else fluid.CPUPlace() 112 113 with fluid.dygraph.guard(place): 114 model = MNIST("mnist") 115 model.train() 116 117 #四种优化算法的设置方案,可以逐一尝试效果 118 #optimizer = fluid.optimizer.SGDOptimizer(learning_rate=0.01) 119 optimizer=fluid.optimizer.MomentumOptimizer(learning_rate=0.01, momentum=0.9) 120 #optimizer = fluid.optimizer.AdagradOptimizer(learning_rate=0.01) 121 #optimizer = fluid.optimizer.AdamOptimizer(learning_rate=0.01) 122 123 EPOCH_NUM = 5 124 for epoch_id in range(EPOCH_NUM): 125 for batch_id, data in enumerate(train_loader()): 126 #准备数据 127 image_data, label_data = data 128 image = fluid.dygraph.to_variable(image_data) 129 label = fluid.dygraph.to_variable(label_data) 130 131 #前向计算的过程,同时拿到模型输出值和分类准确率 132 predict, avg_acc = model(image, label) 133 134 #计算损失,取一个批次样本损失的平均值 135 loss = fluid.layers.cross_entropy(predict, label) 136 avg_loss = fluid.layers.mean(loss) 137 138 #每训练了200批次的数据,打印下当前Loss的情况 139 if batch_id % 200 == 0: 140 print("epoch: {}, batch: {}, loss is: {}, acc is {}".format(epoch_id, batch_id, avg_loss.numpy(),avg_acc.numpy())) 141 142 #后向传播,更新参数的过程 143 avg_loss.backward() 144 optimizer.minimize(avg_loss) 145 model.clear_gradients() 146 147 #保存模型参数 148 fluid.save_dygraph(model.state_dict(), 'mnist')

loading mnist dataset from ./work/mnist.json.gz ...... epoch: 0, batch: 0, loss is: [2.453652], acc is [0.14] epoch: 0, batch: 200, loss is: [0.13674787], acc is [0.97] epoch: 0, batch: 400, loss is: [0.06896302], acc is [0.99] epoch: 1, batch: 0, loss is: [0.04395049], acc is [0.99] epoch: 1, batch: 200, loss is: [0.16760933], acc is [0.97] epoch: 1, batch: 400, loss is: [0.07087836], acc is [0.99] epoch: 2, batch: 0, loss is: [0.04871644], acc is [0.99] epoch: 2, batch: 200, loss is: [0.04871706], acc is [0.98] epoch: 2, batch: 400, loss is: [0.16377419], acc is [0.95] epoch: 3, batch: 0, loss is: [0.03665862], acc is [0.99] epoch: 3, batch: 200, loss is: [0.05040814], acc is [0.97] epoch: 3, batch: 400, loss is: [0.04509254], acc is [0.99] epoch: 4, batch: 0, loss is: [0.01414255], acc is [1.] epoch: 4, batch: 200, loss is: [0.05578731], acc is [0.96] epoch: 4, batch: 400, loss is: [0.03861135], acc is [0.99]

上面即为训练结果。

2. 检查模型训练过程,识别潜在训练问题

不同于某些深度学习框架的高层API,使用飞桨动态图编程可以方便的查看和调试训练的执行过程。在网络定义的Forward函数中,可以打印每一层输入输出的尺寸,以及每层网络的参数。通过查看这些信息,不仅可以让我们可以更好的理解训练的执行过程,还可以发现潜在问题,或者启发继续优化的思路。

1 # 定义模型结构 2 class MNIST(fluid.dygraph.Layer): 3 def __init__(self, name_scope): 4 super(MNIST, self).__init__(name_scope) 5 name_scope = self.full_name() 6 self.conv1 = Conv2D(name_scope, num_filters=20, filter_size=5, stride=1, padding=2) 7 self.pool1 = Pool2D(name_scope, pool_size=2, pool_stride=2, pool_type='max') 8 self.conv2 = Conv2D(name_scope, num_filters=20, filter_size=5, stride=1, padding=2) 9 self.pool2 = Pool2D(name_scope, pool_size=2, pool_stride=2, pool_type='max') 10 self.fc = FC(name_scope, size=10, act='softmax') 11 12 #加入对每一层输入和输出的尺寸和数据内容的打印,根据check参数决策是否打印每层的参数和输出尺寸 13 def forward(self, inputs, label=None, check_shape=False, check_content=False): 14 # 给不同层的输出不同命名,方便调试 15 outputs1 = self.conv1(inputs) 16 outputs2 = self.pool1(outputs1) 17 outputs3 = self.conv2(outputs2) 18 outputs4 = self.pool2(outputs3) 19 outputs5 = self.fc(outputs4) 20 21 # 选择是否打印神经网络每层的参数尺寸和输出尺寸,验证网络结构是否设置正确 22 if check_shape: 23 # 打印每层网络设置的超参数-卷积核尺寸,卷积步长,卷积padding,池化核尺寸 24 print(" ########## print network layer's superparams ##############") 25 print("conv1-- kernel_size:{}, padding:{}, stride:{}".format(self.conv1.weight.shape, self.conv1._padding, self.conv1._stride)) 26 print("conv2-- kernel_size:{}, padding:{}, stride:{}".format(self.conv2.weight.shape, self.conv2._padding, self.conv2._stride)) 27 print("pool1-- pool_type:{}, pool_size:{}, pool_stride:{}".format(self.pool1._pool_type, self.pool1._pool_size, self.pool1._pool_stride)) 28 print("pool2-- pool_type:{}, poo2_size:{}, pool_stride:{}".format(self.pool2._pool_type, self.pool2._pool_size, self.pool2._pool_stride)) 29 print("fc-- weight_size:{}, bias_size_{}, activation:{}".format(self.fc.weight.shape, self.fc.bias.shape, self.fc._act)) 30 31 # 打印每层的输出尺寸 32 print(" ########## print shape of features of every layer ###############") 33 print("inputs_shape: {}".format(inputs.shape)) 34 print("outputs1_shape: {}".format(outputs1.shape)) 35 print("outputs2_shape: {}".format(outputs2.shape)) 36 print("outputs3_shape: {}".format(outputs3.shape)) 37 print("outputs4_shape: {}".format(outputs4.shape)) 38 print("outputs5_shape: {}".format(outputs5.shape)) 39 40 # 选择是否打印训练过程中的参数和输出内容,可用于训练过程中的调试 41 if check_content: 42 # 打印卷积层的参数-卷积核权重,权重参数较多,此处只打印部分参数 43 print(" ########## print convolution layer's kernel ###############") 44 print("conv1 params -- kernel weights:", self.conv1.weight[0][0]) 45 print("conv2 params -- kernel weights:", self.conv2.weight[0][0]) 46 47 # 创建随机数,随机打印某一个通道的输出值 48 idx1 = np.random.randint(0, outputs1.shape[1]) 49 idx2 = np.random.randint(0, outputs3.shape[1]) 50 # 打印卷积-池化后的结果,仅打印batch中第一个图像对应的特征 51 print(" The {}th channel of conv1 layer: ".format(idx1), outputs1[0][idx1]) 52 print("The {}th channel of conv2 layer: ".format(idx2), outputs3[0][idx2]) 53 print("The output of last layer:", outputs5[0], ' ') 54 55 # 如果label不是None,则计算分类精度并返回 56 if label is not None: 57 acc = fluid.layers.accuracy(input=outputs5, label=label) 58 return outputs5, acc 59 else: 60 return outputs5 61 62 63 #在使用GPU机器时,可以将use_gpu变量设置成True 64 use_gpu = True 65 place = fluid.CUDAPlace(0) if use_gpu else fluid.CPUPlace() 66 67 with fluid.dygraph.guard(place): 68 model = MNIST("mnist") 69 model.train() 70 71 #四种优化算法的设置方案,可以逐一尝试效果 72 optimizer = fluid.optimizer.SGDOptimizer(learning_rate=0.01) 73 #optimizer = fluid.optimizer.MomentumOptimizer(learning_rate=0.01) 74 #optimizer = fluid.optimizer.AdagradOptimizer(learning_rate=0.01) 75 #optimizer = fluid.optimizer.AdamOptimizer(learning_rate=0.01) 76 77 EPOCH_NUM = 1 78 for epoch_id in range(EPOCH_NUM): 79 for batch_id, data in enumerate(train_loader()): 80 #准备数据,变得更加简洁 81 image_data, label_data = data 82 image = fluid.dygraph.to_variable(image_data) 83 label = fluid.dygraph.to_variable(label_data) 84 85 #前向计算的过程,同时拿到模型输出值和分类准确率 86 if batch_id == 0 and epoch_id==0: 87 # 打印模型参数和每层输出的尺寸 88 predict, acc = model(image, label, check_shape=True, check_content=False) 89 elif batch_id==401: 90 # 打印模型参数和每层输出的值 91 predict, avg_acc = model(image, label, check_shape=False, check_content=True) 92 else: 93 predict, avg_acc = model(image, label) 94 95 #计算损失,取一个批次样本损失的平均值 96 loss = fluid.layers.cross_entropy(predict, label) 97 avg_loss = fluid.layers.mean(loss) 98 99 #每训练了100批次的数据,打印下当前Loss的情况 100 if batch_id % 200 == 0: 101 print("epoch: {}, batch: {}, loss is: {}, acc is {}".format(epoch_id, batch_id, avg_loss.numpy(),avg_acc.numpy())) 102 103 #后向传播,更新参数的过程 104 avg_loss.backward() 105 optimizer.minimize(avg_loss) 106 model.clear_gradients() 107 108 #保存模型参数 109 fluid.save_dygraph(model.state_dict(), 'mnist')

在88/91/93行中调用model()函数时,实际调用13行的forward(),根据参数check_shape,check_content来判断是否打印调试结果。

下图为打印的调试输出(只运行一个批次):

Debug Output

Debug Output

1 ########## print network layer's superparams ############## 2 conv1-- kernel_size:[20, 1, 5, 5], padding:[2, 2], stride:[1, 1] 3 conv2-- kernel_size:[20, 20, 5, 5], padding:[2, 2], stride:[1, 1] 4 pool1-- pool_type:max, pool_size:[2, 2], pool_stride:[2, 2] 5 pool2-- pool_type:max, poo2_size:[2, 2], pool_stride:[2, 2] 6 fc-- weight_size:[980, 10], bias_size_[10], activation:softmax 7 8 ########## print shape of features of every layer ############### 9 inputs_shape: [100, 1, 28, 28] 10 outputs1_shape: [100, 20, 28, 28] 11 outputs2_shape: [100, 20, 14, 14] 12 outputs3_shape: [100, 20, 14, 14] 13 outputs4_shape: [100, 20, 7, 7] 14 outputs5_shape: [100, 10] 15 epoch: 0, batch: 0, loss is: [2.833605], acc is [0.97] 16 epoch: 0, batch: 200, loss is: [0.3450297], acc is [0.91] 17 epoch: 0, batch: 400, loss is: [0.284598], acc is [0.92] 18 19 ########## print convolution layer's kernel ############### 20 conv1 params -- kernel weights: name tmp_7228, dtype: VarType.FP32 shape: [5, 5] lod: {} 21 dim: 5, 5 22 layout: NCHW 23 dtype: float 24 data: [0.0417931 0.148238 0.234299 -0.170058 0.022536 0.388339 0.35604 0.26834 -0.0265003 0.306087 -0.413583 -0.109627 0.496471 0.652652 -0.164484 0.016321 -0.014093 -0.0783259 -0.048394 -0.0432972 -0.23372 0.235798 0.0142133 0.245447 0.283901] 25 26 conv2 params -- kernel weights: name tmp_7230, dtype: VarType.FP32 shape: [5, 5] lod: {} 27 dim: 5, 5 28 layout: NCHW 29 dtype: float 30 data: [0.0301458 -0.0083977 0.122417 0.07717 0.123363 0.0390582 0.0386526 0.0208216 0.0494713 0.0714368 -0.00223818 -0.0330977 -0.114399 -0.0830213 -0.00123063 0.123892 -0.0415339 0.0259391 -0.051009 -0.071314 0.103089 0.0380028 0.152786 -0.0623465 -0.137493] 31 32 33 The 10th channel of conv1 layer: name tmp_7232, dtype: VarType.FP32 shape: [28, 28] lod: {} 34 dim: 28, 28 35 layout: NCHW 36 dtype: float 37 data: [-0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.227416 -0.341168 -0.00898913 0.245888 0.203894 0.0158452 0.00830419 0.0561035 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.0271134 -0.310818 -0.344543 -0.201866 -0.41639 -0.0805225 0.616205 0.447202 0.122654 0.115708 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.094523 -0.353209 -0.276969 -0.188898 -0.370524 -0.0135588 0.226655 0.000799649 0.0601707 0.225055 0.0223338 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.0271134 -0.31421 -0.426021 -0.244359 -0.508873 -0.33502 0.0642141 0.585103 0.70857 0.425303 0.0200628 0.0281492 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.175312 -0.444617 -0.286869 -0.535482 -0.542795 -0.197564 0.136141 0.923833 1.7959 1.40511 0.632616 0.145223 0.0320405 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.469567 -0.417733 -0.474001 -0.233533 0.090552 -0.238024 0.672436 1.27637 1.30291 1.52437 0.95958 0.172325 0.109885 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.494165 -0.715875 -0.505472 0.35959 0.652144 0.77834 0.670472 0.762846 0.929859 0.719077 0.668767 0.246665 0.0155299 0.0719344 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.525673 -0.904378 -0.265834 0.343386 1.16095 1.23854 1.00445 0.647635 0.685046 0.941925 0.641869 0.295427 0.225691 0.0959096 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.733092 -0.834154 -0.0549029 0.774414 1.71971 0.998786 0.640441 0.625185 0.933412 1.20161 1.29194 0.599725 0.14729 0.085043 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.586884 -0.892087 -0.085339 1.06036 1.73759 0.747157 -0.135803 0.131083 0.589838 1.50793 1.48482 1.05853 0.431647 0.0437523 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.276015 -0.743183 -0.400213 0.792531 1.24229 0.88946 0.0784385 -0.596246 0.350287 1.36152 1.57825 1.27972 0.52862 0.109994 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.264183 -0.311866 -0.359332 0.44633 0.988894 0.811296 -0.114045 -0.102798 0.377372 0.864523 1.44074 1.32035 0.664752 0.117657 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.229782 -0.241305 -0.329568 0.423641 1.1405 0.698666 0.0643131 0.136629 0.622223 1.27759 1.41772 0.931909 0.462135 0.0685794 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.0347595 -0.460302 -0.492766 0.123706 0.626997 0.754893 0.743404 0.767749 1.30776 1.61465 1.17583 0.74578 0.132815 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.325539 -0.43819 -0.441965 -0.524221 0.511401 0.850486 0.895314 1.46339 1.8233 1.5277 1.09828 0.524725 0.0639521 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.106079 -0.465974 -0.369364 -0.538873 -0.087559 0.179577 0.35095 1.02317 1.43502 1.75764 1.31522 0.908732 0.251842 -0.000574195 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.485362 -0.301338 -0.37676 -0.369458 -0.070034 0.0149574 0.943519 0.722266 1.4009 1.73945 1.205 0.660178 0.131438 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.221638 -0.434888 -0.537166 -0.65911 0.067112 0.279011 0.844792 0.692166 1.06196 1.7318 1.60627 1.17025 0.575702 0.0744205 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.292149 -0.482934 -0.777972 -0.337539 -0.102133 0.947546 1.06326 0.926256 1.11653 1.13417 1.50645 1.22528 0.429329 0.0685228 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 0.00519593 -0.598682 -0.999808 -0.270425 0.596526 0.989157 0.83917 0.786463 0.636592 1.09794 1.48123 0.916883 0.451473 0.0611396 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.0991777 -0.298492 -0.140628 -0.0516299 0.535211 0.933824 0.89026 0.681906 0.893806 1.38018 1.11581 0.875768 0.302748 0.0261167 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.195025 -0.257898 0.0945173 0.611554 1.17706 0.979482 0.924687 0.888834 1.27574 1.23745 1.01893 0.564557 0.0977506 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.0144523 -0.0673982 -0.235353 0.211315 0.841964 1.28418 1.42209 1.43951 1.24168 0.94783 0.576813 0.144289 0.00367208 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.0104721 -0.0104104 0.048745 0.242489 0.67455 0.806947 0.772788 0.560504 0.336613 0.0851565 -0.000574195 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352 -0.00785352] 38 39 The 7th channel of conv2 layer: name tmp_7234, dtype: VarType.FP32 shape: [14, 14] lod: {} 40 dim: 14, 14 41 layout: NCHW 42 dtype: float 43 data: [0.028245 0.020599 0.0210198 0.0874535 0.494471 0.630649 0.149109 -0.0728132 0.230379 1.58221 1.28339 0.248955 0.00272413 0.00537403 0.0322912 0.020086 -0.0301809 0.496768 0.653191 0.169372 0.144909 -0.231259 0.389402 2.76422 2.63198 0.607453 0.102271 -0.0036014 0.0465655 0.0364478 0.300417 0.840682 0.240587 -1.01291 -1.99307 -0.833542 1.05192 3.61047 3.42107 1.30671 0.244999 -0.00237758 0.0465655 0.0364477 0.382605 0.286884 -0.402162 -2.5869 -3.01405 -0.427758 1.46716 2.88402 4.11867 2.11142 0.490123 -0.00237744 0.0465654 0.0364476 0.371547 -0.829134 -1.39772 -3.34309 -4.2324 1.17491 1.95059 2.51751 3.82009 3.1198 0.598566 -0.00237748 0.0465654 0.0364476 0.200699 -0.994076 -1.31838 -3.55505 -3.5458 0.684372 1.0964 0.849535 4.03469 3.21928 0.576193 -0.00237729 0.0465654 0.0408133 -0.194576 -0.142703 -1.24451 -3.09615 -3.25116 -0.0809503 0.134056 1.42078 4.7647 2.91172 0.379939 -0.00237742 0.0465654 0.0447424 0.212362 0.171268 -1.81562 -2.98974 -3.83104 -1.09958 0.149632 2.92131 4.80793 1.88384 0.108982 -0.00237737 0.0465654 0.181403 0.271504 -0.0868469 -1.61158 -3.34 -3.13256 -0.844484 0.928895 4.5633 4.70677 1.05049 -0.082796 -0.00237751 0.0465654 0.445047 0.268099 -0.61444 -1.94418 -4.81323 -2.56377 0.0803734 1.60131 4.7976 3.51906 0.307156 0.00976629 -0.00237739 0.0465654 0.351043 -0.285457 -1.04642 -2.18551 -4.23739 -1.07251 1.03124 1.68661 3.72981 2.1613 0.212911 0.00733458 -0.00237745 0.0465654 -0.153882 -0.728159 -1.98605 -3.36631 -3.30576 -0.994109 0.368019 1.57114 2.32655 0.936484 -0.0348572 0.00366797 -0.00237727 0.0460116 -0.196705 -0.422216 -0.903646 -2.28753 -1.8149 -0.497868 0.377633 0.95845 1.15005 0.0417288 -0.0580622 0.00685634 -0.00675346 0.0376359 -0.0106585 -0.0300542 -0.420301 -0.51015 -0.341925 -0.179612 0.433956 0.431884 0.0852679 -0.209331 0.034039 0.0114855 -0.00292668] 44 45 The output of last layer: name tmp_7235, dtype: VarType.FP32 shape: [10] lod: {} 46 dim: 10 47 layout: NCHW 48 dtype: float 49 data: [0.000341895 0.0105792 0.0836315 0.00980286 0.00223727 0.00221005 0.0176872 0.00238195 0.806833 0.06429463. 加入校验或测试,更好评价模型效果

在训练过程中,我们会发现模型在训练样本集上的损失在不断减小。但这是否代表模型在未来的应用场景上依然有效?为了验证模型的有效性,通常将样本集合分成三份,训练集、校验集和测试集。

- 训练集。用于训练模型的参数,即训练过程中主要完成的工作。

- 校验集。用于对模型超参数的选择,比如网络结构的调整、正则化项权重的选择等。

- 测试集。用于模拟模型在应用后的真实效果。因为测试集没有参与任何模型优化或参数训练的工作,所以它对模型来说是完全未知的样本。在不以校验数据优化网络结构或模型超参数时,校验数据和测试数据的效果是类似的,均更真实的反映模型效果。

如下程序读取上一步训练保存的模型参数,读取校验数据集,测试模型在校验数据集上的效果。从测试的效果来看,模型在从来没有见过的数据集上依然有?%的准确率,证明它是有预测效果的。

1 with fluid.dygraph.guard(): 2 print('start evaluation .......') 3 #加载模型参数 4 model = MNIST("mnist") 5 model_state_dict, _ = fluid.load_dygraph('mnist') 6 model.load_dict(model_state_dict) 7 8 model.eval() 9 eval_loader = load_data('eval') 10 11 acc_set = [] 12 avg_loss_set = [] 13 for batch_id, data in enumerate(eval_loader()): 14 x_data, y_data = data 15 img = fluid.dygraph.to_variable(x_data) 16 label = fluid.dygraph.to_variable(y_data) 17 prediction, acc = model(img, label) 18 loss = fluid.layers.cross_entropy(input=prediction, label=label) 19 avg_loss = fluid.layers.mean(loss) 20 acc_set.append(float(acc.numpy())) 21 avg_loss_set.append(float(avg_loss.numpy())) 22 23 #计算多个batch的平均损失和准确率 24 acc_val_mean = np.array(acc_set).mean() 25 avg_loss_val_mean = np.array(avg_loss_set).mean() 26 27 print('loss={}, acc={}'.format(avg_loss_val_mean, acc_val_mean))

start evaluation ....... loading mnist dataset from ./work/mnist.json.gz ...... loss=0.24452914014458657, acc=0.9324000030755997

4. 加入正则化项,避免模型过拟合

对于样本量有限、但需要使用强大模型的复杂任务,模型很容易出现过拟合的表现,即在训练集上的损失小,在校验集或测试集上的损失较大。关于过拟合的详细理论,参考《机器学习的思考故事》课程。

为了避免模型过拟合,在没有扩充样本量的可能下,只能降低模型的复杂度。降低模型的复杂度,可以通过限制参数的数量或可能取值(参数值尽量小)实现。具体来说,在模型的优化目标(损失)中人为加入对参数规模的惩罚项。当参数越多或取值越大时,该惩罚项就越大。通过调整惩罚项的权重系数,可以使模型在“尽量减少训练损失”和“保持模型的泛化能力”之间取得平衡。泛化能力表示模型在没有见过的样本上依然有效。正则化项的存在,增加了模型在训练集上的损失。

飞桨框架支持为所有参数加上统一的正则化项,也支持为特定的参数添加正则化项。前者的实现如下代码所示,仅在优化器中设置regularization参数即可实现。使用参数regularization_coeff调节正则化项的权重,权重越大时,对模型复杂度的惩罚越高。

1 with fluid.dygraph.guard(): 2 model = MNIST("mnist") 3 model.train() 4 5 #四种优化算法的设置方案,可以逐一尝试效果 6 #optimizer = fluid.optimizer.SGDOptimizer(learning_rate=0.01) 7 #optimizer = fluid.optimizer.MomentumOptimizer(learning_rate=0.01) 8 #optimizer = fluid.optimizer.AdagradOptimizer(learning_rate=0.01) 9 #optimizer = fluid.optimizer.AdamOptimizer(learning_rate=0.01) 10 11 #各种优化算法均可以加入正则化项,避免过拟合,参数regularization_coeff调节正则化项的权重 12 #optimizer = fluid.optimizer.SGDOptimizer(learning_rate=0.01, regularization=fluid.regularizer.L2Decay(regularization_coeff=0.1)) 13 optimizer = fluid.optimizer.AdamOptimizer(learning_rate=0.01, regularization=fluid.regularizer.L2Decay(regularization_coeff=0.1)) 14 15 EPOCH_NUM = 10 16 for epoch_id in range(EPOCH_NUM): 17 for batch_id, data in enumerate(train_loader()): 18 #准备数据,变得更加简洁 19 image_data, label_data = data 20 image = fluid.dygraph.to_variable(image_data) 21 label = fluid.dygraph.to_variable(label_data) 22 23 #前向计算的过程,同时拿到模型输出值和分类准确率 24 predict, avg_acc = model(image, label) 25 26 #计算损失,取一个批次样本损失的平均值 27 loss = fluid.layers.cross_entropy(predict, label) 28 avg_loss = fluid.layers.mean(loss) 29 30 #每训练了100批次的数据,打印下当前Loss的情况 31 if batch_id % 100 == 0: 32 print("epoch: {}, batch: {}, loss is: {}, acc is {}".format(epoch_id, batch_id, avg_loss.numpy(),avg_acc.numpy())) 33 34 #后向传播,更新参数的过程 35 avg_loss.backward() 36 optimizer.minimize(avg_loss) 37 model.clear_gradients() 38 39 #保存模型参数 40 fluid.save_dygraph(model.state_dict(), 'mnist')

正则化项参见13行。下面为打印输出结果:

1 epoch: 0, batch: 0, loss is: [2.9618127], acc is [0.09] 2 epoch: 0, batch: 100, loss is: [0.4821284], acc is [0.82] 3 epoch: 0, batch: 200, loss is: [0.4689779], acc is [0.86] 4 epoch: 0, batch: 300, loss is: [0.3175511], acc is [0.91] 5 epoch: 0, batch: 400, loss is: [0.3862023], acc is [0.87] 6 epoch: 1, batch: 0, loss is: [0.34007072], acc is [0.92] 7 epoch: 1, batch: 100, loss is: [0.4564435], acc is [0.86] 8 epoch: 1, batch: 200, loss is: [0.22314773], acc is [0.95] 9 epoch: 1, batch: 300, loss is: [0.5216203], acc is [0.85] 10 epoch: 1, batch: 400, loss is: [0.1880288], acc is [0.97] 11 epoch: 2, batch: 0, loss is: [0.31458455], acc is [0.9] 12 epoch: 2, batch: 100, loss is: [0.29387832], acc is [0.91] 13 epoch: 2, batch: 200, loss is: [0.19327974], acc is [0.94] 14 epoch: 2, batch: 300, loss is: [0.34688073], acc is [0.92] 15 epoch: 2, batch: 400, loss is: [0.33402398], acc is [0.88] 16 epoch: 3, batch: 0, loss is: [0.37079352], acc is [0.92] 17 epoch: 3, batch: 100, loss is: [0.27622813], acc is [0.95] 18 epoch: 3, batch: 200, loss is: [0.32804275], acc is [0.91] 19 epoch: 3, batch: 300, loss is: [0.30482328], acc is [0.92] 20 epoch: 3, batch: 400, loss is: [0.35331258], acc is [0.88] 21 epoch: 4, batch: 0, loss is: [0.37278587], acc is [0.91] 22 epoch: 4, batch: 100, loss is: [0.33204514], acc is [0.91] 23 epoch: 4, batch: 200, loss is: [0.33681116], acc is [0.9] 24 epoch: 4, batch: 300, loss is: [0.21702813], acc is [0.94] 25 epoch: 4, batch: 400, loss is: [0.27484477], acc is [0.93] 26 epoch: 5, batch: 0, loss is: [0.27992722], acc is [0.93] 27 epoch: 5, batch: 100, loss is: [0.2436056], acc is [0.94] 28 epoch: 5, batch: 200, loss is: [0.43120566], acc is [0.85] 29 epoch: 5, batch: 300, loss is: [0.26344958], acc is [0.93] 30 epoch: 5, batch: 400, loss is: [0.26599526], acc is [0.93] 31 epoch: 6, batch: 0, loss is: [0.44372514], acc is [0.89] 32 epoch: 6, batch: 100, loss is: [0.3124095], acc is [0.92] 33 epoch: 6, batch: 200, loss is: [0.31063277], acc is [0.95] 34 epoch: 6, batch: 300, loss is: [0.36090645], acc is [0.9] 35 epoch: 6, batch: 400, loss is: [0.32959267], acc is [0.91] 36 epoch: 7, batch: 0, loss is: [0.38626334], acc is [0.9] 37 epoch: 7, batch: 100, loss is: [0.30303052], acc is [0.89] 38 epoch: 7, batch: 200, loss is: [0.3010301], acc is [0.94] 39 epoch: 7, batch: 300, loss is: [0.4417858], acc is [0.87] 40 epoch: 7, batch: 400, loss is: [0.33106077], acc is [0.91] 41 epoch: 8, batch: 0, loss is: [0.2563219], acc is [0.92] 42 epoch: 8, batch: 100, loss is: [0.37621248], acc is [0.92] 43 epoch: 8, batch: 200, loss is: [0.28183213], acc is [0.94] 44 epoch: 8, batch: 300, loss is: [0.399815], acc is [0.89] 45 epoch: 8, batch: 400, loss is: [0.446779], acc is [0.84] 46 epoch: 9, batch: 0, loss is: [0.45045567], acc is [0.87] 47 epoch: 9, batch: 100, loss is: [0.359276], acc is [0.94] 48 epoch: 9, batch: 200, loss is: [0.2855474], acc is [0.9] 49 epoch: 9, batch: 300, loss is: [0.54529166], acc is [0.89] 50 epoch: 9, batch: 400, loss is: [0.4740173], acc is [0.87]

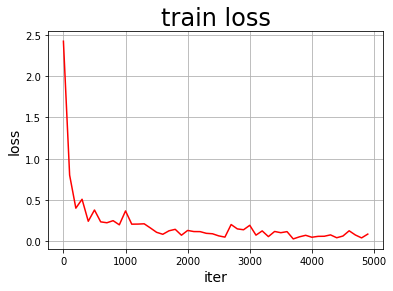

5. 可视化分析

训练模型时,我们经常需要观察模型的评价指标,分析模型的优化过程,以确保训练是有效的。如之前的案例所示,使用轻量级的PLT库作图各种指标是非常简单的。

使用Matplotlib库画出损失随训练下降的曲线图

首先将训练的批次编号作为X轴坐标,该批次的训练损失作为Y轴坐标。使用两个列表变量存储对应的批次编号(iters=[])和训练损失(losses=[]),并将两份数据以参数形式导入PLT的横纵坐标( plt.xlabel("iter", fontsize=14),plt.ylabel("loss", fontsize=14))。最后,调用plt.plot()函数即可完成作图。

1 #引入matplotlib库 2 import matplotlib.pyplot as plt 3 4 with fluid.dygraph.guard(place): 5 model = MNIST("mnist") 6 model.train() 7 8 #四种优化算法的设置方案,可以逐一尝试效果 9 optimizer = fluid.optimizer.SGDOptimizer(learning_rate=0.01) 10 11 EPOCH_NUM = 10 12 iter=0 13 iters=[] 14 losses=[] 15 for epoch_id in range(EPOCH_NUM): 16 for batch_id, data in enumerate(train_loader()): 17 #准备数据,变得更加简洁 18 image_data, label_data = data 19 image = fluid.dygraph.to_variable(image_data) 20 label = fluid.dygraph.to_variable(label_data) 21 22 #前向计算的过程,同时拿到模型输出值和分类准确率 23 predict, avg_acc = model(image, label) 24 25 #计算损失,取一个批次样本损失的平均值 26 loss = fluid.layers.cross_entropy(predict, label) 27 avg_loss = fluid.layers.mean(loss) 28 29 #每训练了100批次的数据,打印下当前Loss的情况 30 if batch_id % 100 == 0: 31 #print("epoch: {}, batch: {}, loss is: {}, acc is {}".format(epoch_id, batch_id, avg_loss.numpy(),avg_acc.numpy())) 32 iters.append(iter) 33 losses.append(avg_loss.numpy()) 34 iter = iter + 100 35 36 #后向传播,更新参数的过程 37 avg_loss.backward() 38 optimizer.minimize(avg_loss) 39 model.clear_gradients() 40 41 #保存模型参数 42 fluid.save_dygraph(model.state_dict(), 'mnist') 43 44 #画出训练过程中Loss的变化曲线 45 plt.figure() 46 plt.title("train loss", fontsize=24) 47 plt.xlabel("iter", fontsize=14) 48 plt.ylabel("loss", fontsize=14) 49 plt.plot(iters, losses,color='red',label='train loss') 50 plt.grid() 51 plt.show()

画图中所需的参数(迭代次数及损失函数值)见代码32-34行。