CoroSync+Pacemaker实现web高可用 2015-04-12 23:38:19

一、简介

CoroSync最初只是用来演示OpenAIS集群框架接口规范的一个应用,可以说CoroSync是OpenAIS的一部分,但后面的发展明显超越了官方最初的设想,越来越多的厂商尝试使用CoroSync作为集群解决方案。如Redhat的RHCS集群套件就是基于CoroSync实现。

CoroSync只提供了message layer,而没有直接提供CRM,一般使用Pacemaker进行资源管理。

CoroSync和Pacemaker的配合使用有2种方式:①Pacemaker以插件形式使用 ②Pacemaker独立的守护进程

本文Pacemaker以插件的形式运行。

二、配置web高可用

0、前提

①时间同步、ssh互信、hosts域名通信 、uname -n的节点名称(略)

②安装httpd服务,开机不启动

③关闭NetworkManager,开启不启动,开启network服务

1、安装CoroSync、Pacemaker、crmsh

|

1

|

# yum install corosync pacemaker |

CoroSync的软件包组成:

|

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

|

[root@node1 ~]# rpm -ql corosync/etc/corosync #CoroSync的配置文件目录/etc/corosync/corosync.conf.example #CoroSync的配置样例/etc/corosync/corosync.conf.example.udpu/etc/corosync/service.d/etc/corosync/uidgid.d/etc/dbus-1/system.d/corosync-signals.conf/etc/rc.d/init.d/corosync #CoroSync的服务脚本/etc/rc.d/init.d/corosync-notifyd/usr/bin/corosync-blackbox/usr/libexec/lcrso/usr/libexec/lcrso/coroparse.lcrso/usr/libexec/lcrso/objdb.lcrso/usr/libexec/lcrso/quorum_testquorum.lcrso/usr/libexec/lcrso/quorum_votequorum.lcrso/usr/libexec/lcrso/service_cfg.lcrso/usr/libexec/lcrso/service_confdb.lcrso/usr/libexec/lcrso/service_cpg.lcrso/usr/libexec/lcrso/service_evs.lcrso/usr/libexec/lcrso/service_pload.lcrso/usr/libexec/lcrso/vsf_quorum.lcrso/usr/libexec/lcrso/vsf_ykd.lcrso/usr/sbin/corosync/usr/sbin/corosync-cfgtool/usr/sbin/corosync-cpgtool/usr/sbin/corosync-fplay/usr/sbin/corosync-keygen #生成节点message layer通信秘钥/usr/sbin/corosync-notifyd/usr/sbin/corosync-objctl/usr/sbin/corosync-pload/usr/sbin/corosync-quorumtool/usr/share/doc/corosync-1.4.1/var/lib/corosync/var/log/cluster |

|

1

|

# yum install -y crmsh-1.2.6-4.el6.x86_64.rpm pssh-2.3.1-2.el6.x86_64.rpm ###crmsh依赖于pssh |

从pacemaker 1.1.8开始,crm发展成了一个独立项目,叫crmsh。也就是说,我们安装了pacemaker后,并没有crm这个命令,我们要实现对集群资源管理,还需要独立安装crmsh。

2、配置CoroSync

|

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

|

[root@node1 corosync]# cat /etc/corosync/corosync.confcompatibility: whitetank ##是否兼容旧版本totem { version: 2 ##版本号,无法修改 secauth: off ##安全认证,当使用aisexec时,会非常消耗CPU threads: 0 ##线程数,根据CPU个数和核心数确定 interface { ringnumber: 0 bindnetaddr:192.168.192.0 ##绑定心跳网络IP地址 mcastaddr: 226.94.1.1 ##组播地址 mcastport: 5405 ##组播端口 ttl: 1 ##组播的ttl值 }}logging { fileline: off to_stderr: no ##是否发送到标准错误输出 to_logfile: yes ##是否记录到文件 to_syslog: yes ##是否记录到syslog logfile: /var/log/cluster/corosync.log ##日志文件位置 debug: off timestamp: on ##是否打印时间戳,利于定位错误,但会消耗CPU logger_subsys { subsys: AMF debug: off }}service { ##定义pacemaker以插件的形式启动 ver: 0 name: pacemaker }aisexec { ##corosync启动的身份,由于corosync需要管理服务,需要root身份 user: root group: root }amf { mode: disabled} |

3、配置CoroSync的认证(authkey)

使用corosync-keygen生成key时,由于要使用/dev/random生成随机数,因此如果新装的系统操作不多,如果没有足够的熵,可能会出现如下提示:

|

1

2

3

4

5

|

[root@node1 corosync]# corosync-keygen Corosync Cluster Engine Authentication key generator. Gathering 1024 bits for key from /dev/random. Press keys on your keyboard to generate entropy. Press keys on your keyboard to generate entropy (bits = 240). |

解决办法:在本地随意输入即可,可以通过安装,卸载软件方式解决。

authkey的权限为默认400

4、将配置文件复制到集群节点

5、启动CoroSync

|

1

2

3

4

|

[root@node1 corosync]# service corosync start Starting Corosync Cluster Engine (corosync): [确定] [root@node1 corosync]# ssh node2 'service corosync start' Starting Corosync Cluster Engine (corosync): [确定] |

检查CoroSync的引擎启动是否成功:

|

1

2

3

|

[root@node1 corosync]# grep -e "Corosync Cluster Engine" -e "configuration file" /var/log/messages Oct 19 19:21:21 node1 corosync[2360]: [MAIN ] Corosync Cluster Engine ('1.4.1'): started and ready to provide service. Oct 19 19:21:21 node1 corosync[2360]: [MAIN ] Successfully read main configuration file '/etc/corosync/corosync.conf'. |

查看初始化成员节点通知是否正常发出:

|

1

2

3

4

5

6

|

[root@node1 corosync]# grep TOTEM /var/log/messages Oct 19 19:21:21 node1 corosync[2360]: [TOTEM ] Initializing transport (UDP/IP Multicast). Oct 19 19:21:21 node1 corosync[2360]: [TOTEM ] Initializing transmit/receive security: libtomcrypt SOBER128/SHA1HMAC (mode 0). Oct 19 19:21:22 node1 corosync[2360]: [TOTEM ] The network interface [192.168.192.208] is now up. Oct 19 19:21:23 node1 corosync[2360]: [TOTEM ] Process pause detected for 1264 ms, flushing membership messages. Oct 19 19:21:23 node1 corosync[2360]: [TOTEM ] A processor joined or left the membership and a new membership was formed. |

检查启动过程中是否有错误产生:

|

1

2

3

|

[root@node1 corosync]# grep ERROR: /var/log/messages | grep -v unpack_resources Oct 19 19:21:22 node1 corosync[2360]: [pcmk ] ERROR: process_ais_conf: You have configured a cluster using the Pacemaker plugin for Corosync. The plugin is not supported in this environment and will be removed very soon. Oct 19 19:21:22 node1 corosync[2360]: [pcmk ] ERROR: process_ais_conf: Please see Chapter 8 of 'Clusters from Scratch' (http://www.clusterlabs.org/doc) for details on using Pacemaker with CMAN |

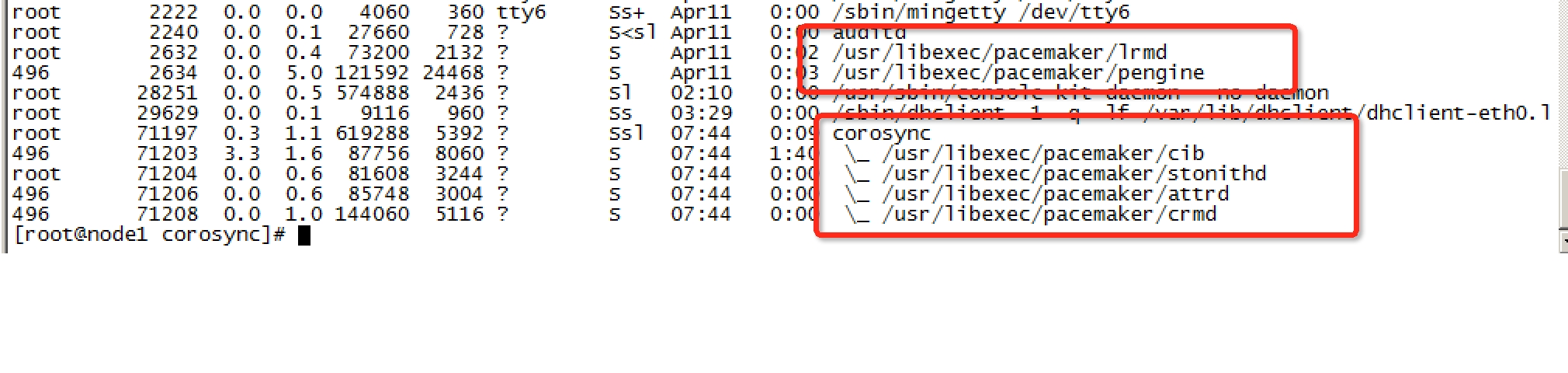

查看pacemaker是否正常启动:

|

1

2

3

4

5

6

|

[root@node1 corosync]# grep pcmk_startup /var/log/messages Oct 19 19:21:22 node1 corosync[2360]: [pcmk ] info: pcmk_startup: CRM: Initialized Oct 19 19:21:22 node1 corosync[2360]: [pcmk ] Logging: Initialized pcmk_startup Oct 19 19:21:22 node1 corosync[2360]: [pcmk ] info: pcmk_startup: Maximum core file size is: 18446744073709551615 Oct 19 19:21:23 node1 corosync[2360]: [pcmk ] info: pcmk_startup: Service: 9 Oct 19 19:21:23 node1 corosync[2360]: [pcmk ] info: pcmk_startup: Local hostname: node1.yu.com |

这里可能存在的问题:iptables,selinux

6、配置crmsh实现资源管理

crmsh具有补全命令功能,并且交互式,可随时help查看帮助说明

crmsh简介:

|

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

54

55

56

57

58

59

60

61

62

63

64

65

66

67

68

69

70

71

72

73

74

75

76

77

|

[root@node1 ~]# crm <--进入crmsh crm(live)# help ##查看帮助This is crm shell, a Pacemaker command line interface.Available commands: cib manage shadow CIBs ##CIB管理模块 resource resources management ##资源管理模块 configure CRM cluster configuration ##CRM配置,包含资源粘性、资源类型、资源约束等 node nodes management ##节点管理 options user preferences ##用户偏好 history CRM cluster history ##CRM 历史 site Geo-cluster support ##地理集群支持 ra resource agents information center ##资源代理配置 status show cluster status ##查看集群状态 help,? show help (help topics for list of topics) ##查看帮助 end,cd,up go back one level ##返回上一级 quit,bye,exit exit the program ##退出 crm(live)# configure <--进入配置模式crm(live)configure# show ##查看当前配置 node node1.yu.com node node2.yu.com property $id="cib-bootstrap-options" dc-version="1.1.8-7.el6-394e906" cluster-infrastructure="classic openais (with plugin)" expected-quorum-votes="2"crm(live)configure# verify ##检查当前配置语法,由于没有STONITH,所以报错,可关闭 error: unpack_resources: Resource start-up disabled since no STONITH resources have been defined error: unpack_resources: Either configure some or disable STONITH with the stonith-enabled option error: unpack_resources: NOTE: Clusters with shared data need STONITH to ensure data integrity Errors found during check: config not valid -V may provide more details crm(live)configure# property stonith-enabled=false ##禁用stonith后再次检查配置,无报错 crm(live)configure# verifycrm(live)configure# commit ##提交配置crm(live)configure# cdcrm(live)# ra <--进入RA(资源代理配置)模式 crm(live)ra# helpThis level contains commands which show various information about the installed resource agents. It is available both at the top level and at the `configure` level.Available commands: classes list classes and providers ##查看RA类型 list list RA for a class (and provider) ##查看指定类型(或提供商)的RA meta,info show meta data for a RA ##查看RA详细信息 providers show providers for a RA and a class ##查看指定资源的提供商和类型 help,? show help (help topics for list of topics) end,cd,up go back one level quit,bye,exit exit the program crm(live)ra# classes lsb ocf / heartbeat pacemaker redhat service stonithcrm(live)ra# list ocf pacemaker ClusterMon Dummy HealthCPU HealthSMART Stateful SysInfo SystemHealth controld o2cb ping pingdcrm(live)ra# info ocf:heartbeat:IPaddrManages virtual IPv4 addresses (portable version) (ocf:heartbeat:IPaddr)This script manages IP alias IP addresses It can add an IP alias, or remove one.Parameters (* denotes required, [] the default):ip* (string): IPv4 address The IPv4 address to be configured in dotted quad notation, for example "192.168.192.208".nic (string, [eth0]): Network interface The base network interface on which the IP address will be brought online.……下略……crm(live)ra# cd crm(live)# status <--查看集群状态 Last updated: Sun Oct 20 22:06:16 2013 Last change: Sun Oct 20 21:58:46 2013 via cibadmin on node1.yu.com Stack: classic openais (with plugin) Current DC: node2.yu.com - partition with quorum Version: 1.1.8-7.el6-394e906 2 Nodes configured, 2 expected votes 0 Resources configured.Online: [ node1.yu.com node2.yu.com ] |

法定票数问题:

在双节点集群中,由于票数是偶数,当心跳出现问题(脑裂)时,两个节点都将达不到法定票数,默认quorum策略会关闭集群服务,为了避免这种情况,可以增加票数为奇数(如前文的增加ping节点,qdisk),或者调整默认quorum策略为【ignore】。

|

1

2

3

4

5

6

7

8

9

10

11

|

crm(live)configure# property no-quorum-policy=ignore crm(live)configure# show node node1.yu.com node node2.yu.com property $id="cib-bootstrap-options" dc-version="1.1.8-7.el6-394e906" cluster-infrastructure="classic openais (with plugin)" expected-quorum-votes="2" stonith-enabled="false" no-quorum-policy="ignore" crm(live)configure# commit |

资源来回转移问题:(防止资源转移后,故障点恢复又转移)

故障发生时,资源会迁移到正常节点上,但当故障节点恢复后,资源可能再次回到原来节点,这样有时候不一定是好事,例如某些繁忙的场景,来回飘逸就会出现问题,这里通过资源粘性来避免

|

1

|

crm(live)configure# property default-resource-stickiness=INFINITY |

7.配置httpd的高可用样例

|

1

2

3

|

## 配置浮动IPcrm(live)configure# primitive webip ocf:heartbeat:IPaddr params ip=192.168.192.222crm(live)configure# commit |

|

1

2

3

4

5

6

|

##配置httpdcrm(live)configure# primitive web lsb:httpd op monitor interval="30s" timeout="20s" on-fail="restart" meta target-role="Started" ##监控,超时为20s,间隔30s,失败后资源重启,失败则有可能在其他节点启动crm(live)configure# commit |

|

1

2

3

4

5

6

|

##配置nfs实现web的页面crm(live)configure# primitive webstore ocf:heartbeat:Filesystem params device="192.168.192.196:/webdocs" fstype="nfs" directory="/var/www/html" op start timeout="60" interval="0" op stop timeout="60" interval="0" op monitor interval="60" timeout="60" on-fail="standby" |

|

1

2

3

|

##资源,默认会平均分配在各个不同的几点,将webip、webstore、httpd定义为一个组资源,可以将资源运行于同一个节点crm(live)configure#group web_srv webip webstore webcrm(live)configure# commit |

|

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

|

[root@node1 corosync]# crmcrm(live)# statusLast updated: Sun Apr 12 08:31:35 2015Last change: Sun Apr 12 08:17:47 2015 via cibadmin on node1.yu.comStack: classic openais (with plugin)Current DC: node1.yu.com - partition with quorumVersion: 1.1.8-7.el6-394e9062 Nodes configured, 2 expected votes5 Resources configured.Online: [ node1.yu.com node2.yu.com ] Resource Group: web_srv webip (ocf::heartbeat:IPaddr): Started node2.yu.com web (lsb:httpd): Started node2.yu.com webstore (ocf::heartbeat:Filesystem): Started node2.yu.com |

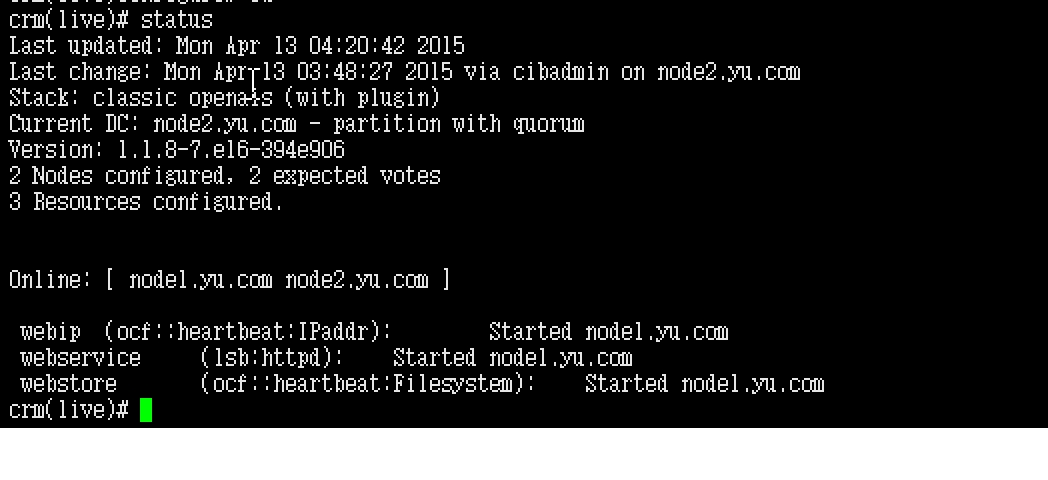

至此httpd的HA完成。

8、去除group属性,通过约束来完成资源的融合

前提: 已经配置了 webip、httpd、webstore主资源

colocation: 约束资源是否运行在同一个节点

|

1

|

crm(live)configure# colocation webservice_with_webstore_with_webip inf: web webstore webip |

order :约束资源的启动关闭顺序

|

1

|

crm(live)configure# order webip_before_webstore_before_httpd webip webstore web |

效果:

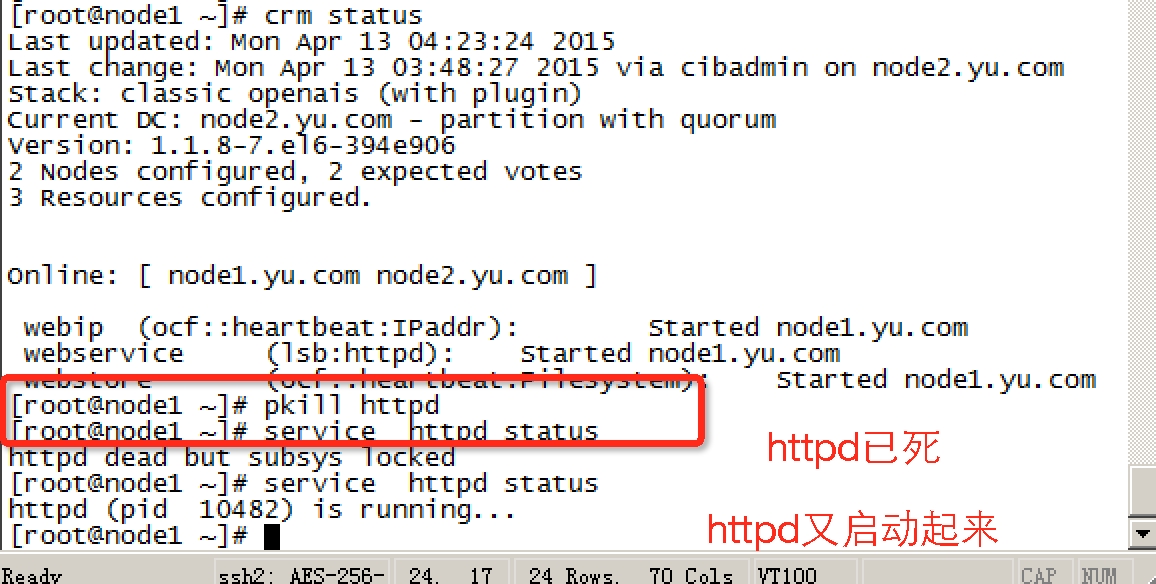

切换效果,pkill httpd,看httpd是否会自动起来:

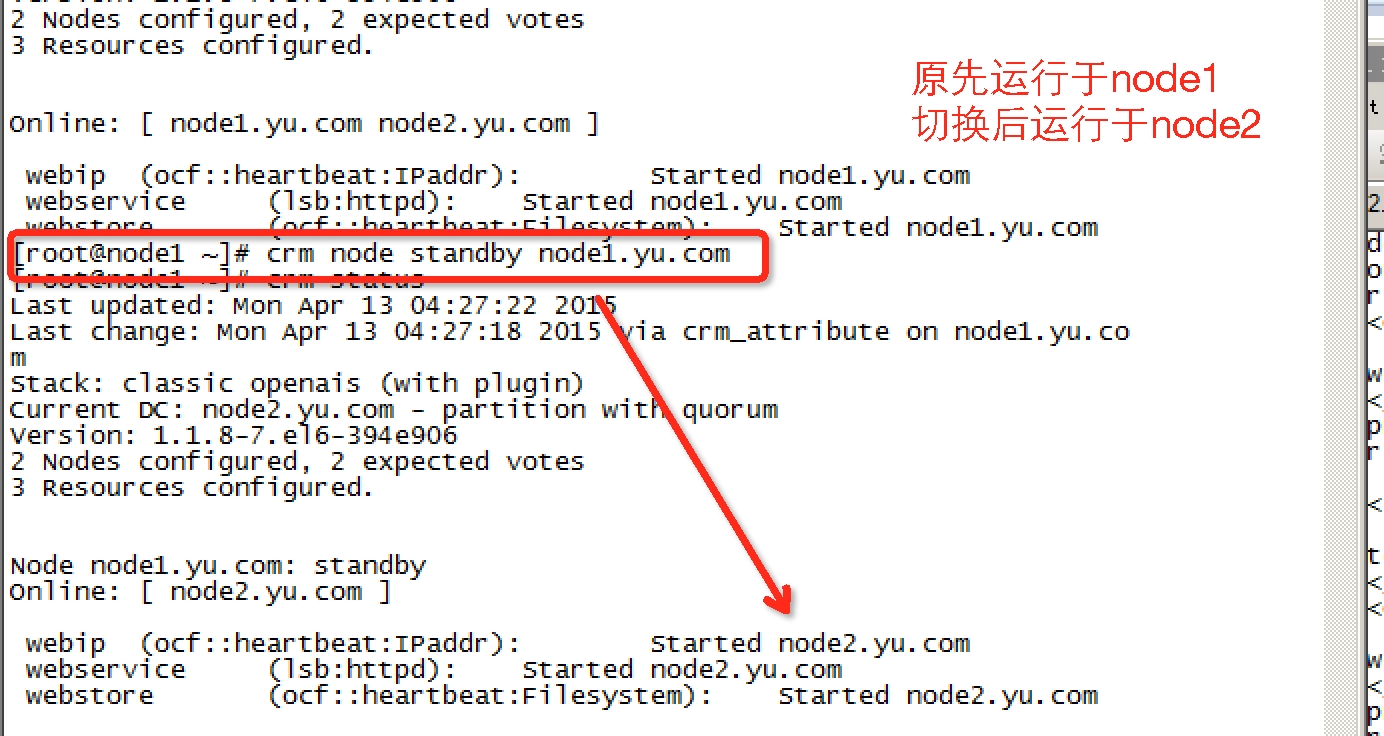

模拟节点故障,切换是否成功: