1. Installation plan:

Pod IP range: 10.244.0.0/16

Service (cluster IP) range: 10.99.0.0/16

CoreDNS: 10.99.110.110

Common installation path: /data/apps

Software version: Kubernetes 1.13.0

[root@master1 software]# ll

total 417216

-rw-r--r-- 1 root root 9706487 Oct 18 21:46 flannel-v0.10.0-linux-amd64.tar.gz

-rw-r--r-- 1 root root 417509744 Oct 18 12:51 kubernetes-server-linux-amd64.tar.gz ## Kubernetes 1.13.0

| Hostname | IP address | Components | Cluster |
| --- | --- | --- | --- |
| master1 | 192.168.100.63 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd, kube-proxy, kubelet, flanneld, docker, keepalived, haproxy | VIP: 192.168.100.100 |
| master2 | 192.168.100.65 | same as master1 | VIP: 192.168.100.100 |
| master3 | 192.168.100.66 | same as master1 | VIP: 192.168.100.100 |
| node1 | 192.168.100.61 | kubelet, kube-proxy, flanneld, docker | |
| node2 | 192.168.100.62 | same as node1 | |

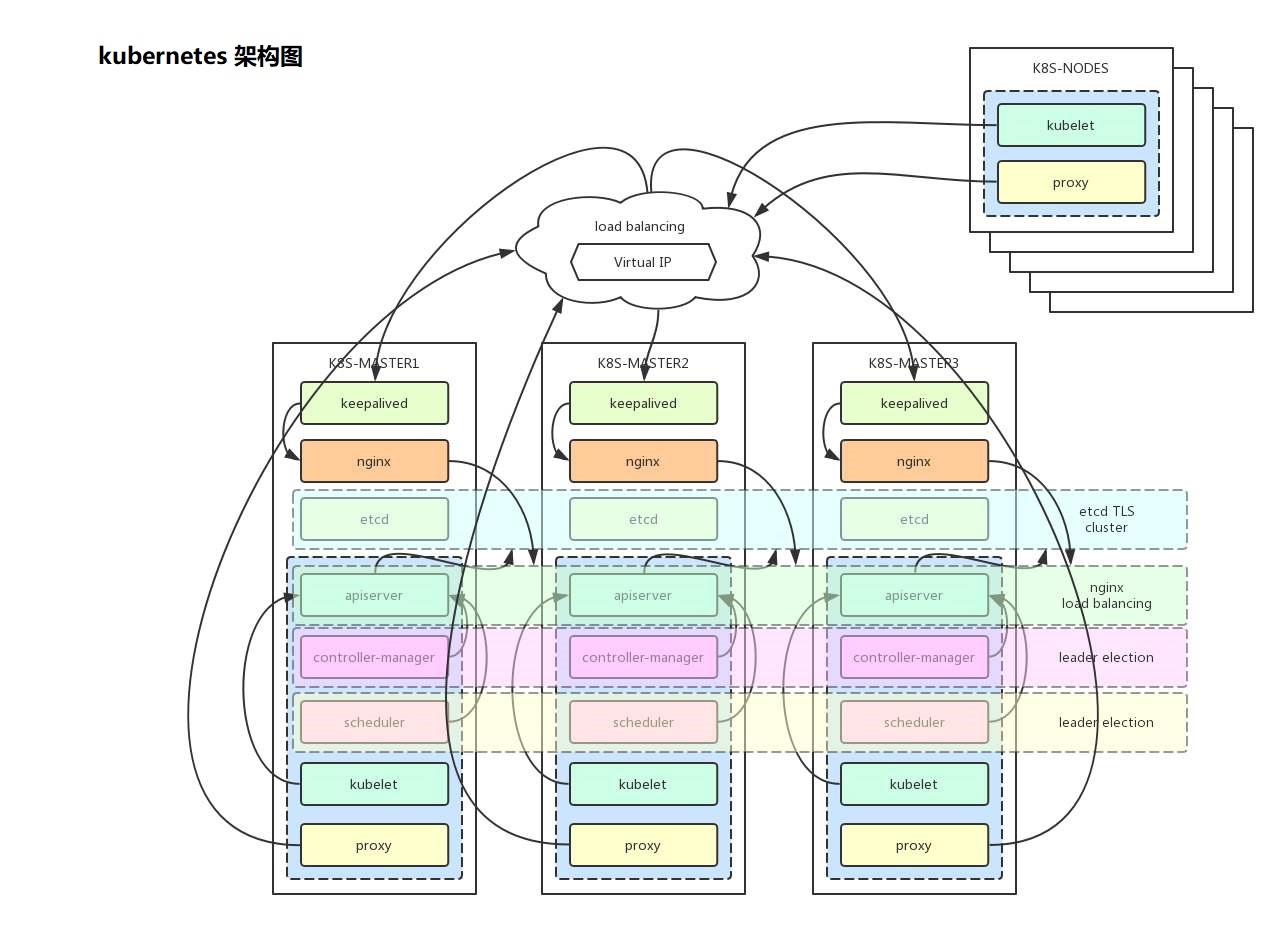

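Kubernetes consists mainly of the following core components:

1. etcd stores the state of the entire cluster;

2. kube-apiserver is the single entry point for resource operations and provides authentication, authorization, access control, and API registration and discovery;

3. kube-controller-manager maintains cluster state, for example fault detection, autoscaling, and rolling updates;

4. kube-scheduler handles resource scheduling, placing Pods on suitable machines according to the configured scheduling policy;

5. kubelet maintains the container lifecycle and also manages volumes (CSI) and networking (CNI);

6. The container runtime handles image management and actually runs Pods and containers (CRI); the default container runtime is Docker;

7. kube-proxy provides in-cluster service discovery and load balancing for Services;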

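2. System initialization:

1. Set the hostname on every node (omitted here).

2. Disable firewalld and SELinux on every node (omitted here).

3. Prepare the yum repository configuration (omitted here).

4. Set up passwordless SSH trust between the nodes (omitted here).

5. Disable the swap partition on every node and update /etc/fstab (omitted here).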

6. Load the IPVS modules:

1) Write the script that loads the IPVS modules:

[root@master ~]# mkdir ipvs

[root@master ~]# cd ipvs

[root@master ipvs]# cat ipvs.sh

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

[root@master ipvs]# chmod +x ipvs.sh

[root@master ipvs]# echo "bash /root/ipvs/ipvs.sh" >> /etc/rc.local ## run the script at boot

[root@master ipvs]# chmod +x /etc/rc.d/rc.local ## rc.local must be executable, otherwise the script does not run at boot
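On systemd-based systems such as CentOS 7, an alternative to rc.local is to list the modules in a file under /etc/modules-load.d/, which systemd-modules-load reads at boot. The sketch below writes that list; the CONF_DIR variable and the file name ipvs.conf are assumptions made for illustration, and CONF_DIR defaults to a temporary directory so the snippet can be tried without touching the system.

```shell
#!/bin/bash
# Sketch: declare the IPVS modules for systemd-modules-load instead of rc.local.
# CONF_DIR is an assumption for a safe dry run; the real location is /etc/modules-load.d.
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"
IPVS_CONF="$CONF_DIR/ipvs.conf"

# One module name per line, the same modules that ipvs.sh loads.
cat > "$IPVS_CONF" <<'EOF'
ip_vs
ip_vs_rr
ip_vs_wrr
ip_vs_sh
nf_conntrack_ipv4
EOF

echo "module list written to $IPVS_CONF"
```

Running it with CONF_DIR=/etc/modules-load.d would place the file where systemd looks for it.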

2) Distribute the IPVS script to every node and enable it at boot:

[root@master ipvs]# ansible nodes -m yum -a 'name=rsync state=present' ## install rsync

[root@master ipvs]# for i in master2 master3 node1 node2 ; do rsync -avzP /root/ipvs $i:/root/ ; done ## sync the ipvs directory to every node

[root@master ipvs]# for i in master2 master3 node1 node2 ; do ssh -X $i 'echo "bash /root/ipvs/ipvs.sh" >> /etc/rc.local' ; done

[root@master ipvs]# ansible nodes -m shell -a 'chmod +x /etc/rc.d/rc.local'

7. Disable swap on every node:

[root@master ipvs]# swapoff -a

[root@master ipvs]# ansible nodes -m shell -a 'swapoff -a'

[root@master ipvs]# sed -i '/swap/s@\(.*\)@#\1@' /etc/fstab ## must be run on every node

##ansible was configured in advance for this setup.
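The fstab edit can be tried on a copy before touching the real file. The sketch below is a variant of the sed command that skips lines that are already commented, so running it twice is harmless; comment_swap is a hypothetical helper name and the sample device names are illustrative only.

```shell
#!/bin/bash
# Sketch: comment out active swap entries in an fstab-style file.
# comment_swap is a hypothetical helper; pass /etc/fstab to apply it for real.
comment_swap() {
    # Only touch lines that mention swap and are not already commented.
    sed -i '/^[^#].*swap/s@^@#@' "$1"
}

# Dry run against a sample file (illustrative device names).
sample="$(mktemp)"
printf '%s\n' \
    '/dev/mapper/centos-root /     xfs  defaults 0 0' \
    '/dev/mapper/centos-swap swap  swap defaults 0 0' > "$sample"
comment_swap "$sample"
cat "$sample"
```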

8. Install Docker on every node:

1) Install Docker:

[root@master1 ~]# yum install docker-ce-18.06.2.ce-3.el7 -y

[root@master1 ~]# systemctl enable docker && systemctl restart docker

[root@master1 ~]# ansible nodes -m shell -a 'systemctl enable docker'

[root@master1 ~]# ansible nodes -m shell -a 'systemctl restart docker'

2) Configure the kernel parameters for Docker:

[root@master1 ~]# vim /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

net.ipv4.ip_forward=1

net.ipv4.tcp_tw_recycle=0

vm.swappiness=0

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.ipv6.conf.all.disable_ipv6=1

net.netfilter.nf_conntrack_max=2310720

Notes:

1. tcp_tw_recycle conflicts with the NAT used by Kubernetes and must be disabled, otherwise services become unreachable;

2. The unused IPv6 stack is disabled to avoid triggering a Docker bug;

[root@master1 ~]# sysctl -p /etc/sysctl.d/k8s.conf

3) Distribute the file to the other nodes:

[root@master1 ~]# for i in master2 master3 node1 node2 ; do scp /etc/sysctl.d/k8s.conf $i:/etc/sysctl.d/ ; done

[root@master1 ~]# for i in master2 master3 node1 node2 ; do ssh -X $i sysctl -p /etc/sysctl.d/k8s.conf ; done

4) Docker configuration file:

[root@master2 ~]# vim /etc/docker/daemon.json

{ "registry-mirrors": ["https://l6ydvf0r.mirror.aliyuncs.com"], "log-level": "warn", "data-root": "/data/docker", "exec-opts": [ "native.cgroupdriver=cgroupfs" ], "live-restore": true }

[root@master2 ~]# systemctl daemon-reload

[root@master2 ~]# systemctl restart docker

[root@master2 ~]# mkdir /data/docker

[root@master1 ~]# for i in master2 master3 node1 node2 ; do scp /etc/docker/daemon.json $i:/etc/docker/ ; done ## distribute the configuration

[root@master1 ~]# ansible nodes -m file -a 'path=/data/docker state=directory'

[root@master1 ~]# ansible nodes -m shell -a 'systemctl daemon-reload && systemctl restart docker'

9. Install cfssl:

[root@master1 ~]# mkdir cfssl

[root@master1 ~]# cd cfssl

[root@master1 cfssl]# yum install wget -y

[root@master1 cfssl]# wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

[root@master1 cfssl]# wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

[root@master1 cfssl]# wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

[root@master1 cfssl]# ll

total 18808

-rw-r--r-- 1 root root 6595195 Mar 30 2016 cfssl-certinfo_linux-amd64

-rw-r--r-- 1 root root 2277873 Mar 30 2016 cfssljson_linux-amd64

-rw-r--r-- 1 root root 10376657 Mar 30 2016 cfssl_linux-amd64

[root@master1 cfssl]# mv cfssl-certinfo_linux-amd64 cfssl-certinfo

[root@master1 cfssl]# mv cfssljson_linux-amd64 cfssljson

[root@master1 cfssl]# mv cfssl_linux-amd64 cfssl

[root@master1 cfssl]# ll

total 18808

-rw-r--r-- 1 root root 10376657 Mar 30 2016 cfssl

-rw-r--r-- 1 root root 6595195 Mar 30 2016 cfssl-certinfo

-rw-r--r-- 1 root root 2277873 Mar 30 2016 cfssljson

[root@master1 cfssl]# chmod +x *

[root@master1 cfssl]# cp * /usr/bin/

Note: system initialization is now complete.

3. Install the etcd cluster:

1) Install etcd:

[root@master1 ~]# yum list etcd --showduplicates

Loaded plugins: fastestmirror

Loading mirror speeds from cached hostfile * base: centos.ustc.edu.cn * epel: mirrors.aliyun.com * extras: ftp.sjtu.edu.cn * updates: ftp.sjtu.edu.cn

Available Packages

etcd.x86_64 3.3.11-2.el7.centos extras

[root@master1 ~]# yum install etcd -y

[root@master1 ~]# for i in master2 master3 ; do ssh -X $i yum install etcd -y ; done

2. Create the CA signing configuration:

[root@master1 ~]# mkdir etcd-ssl

[root@master1 ~]# cd etcd-ssl/

[root@master1 etcd-ssl]# cfssl print-defaults config > ca-config.json

[root@master1 etcd-ssl]# cat ca-config.json ## after editing the generated defaults

{ "signing": { "default": { "expiry": "87600h" }, "profiles": { "kubernetes": { "usages": [ "signing", "key encipherment", "server auth", "client auth" ], "expiry": "87600h" } } } }

3. Create the CA certificate signing request file:

[root@master1 etcd-ssl]# cat etcd-ca-csr.json

{ "CN": "etcd", "key": { "algo": "rsa", "size": 2048 }, "names": [ { "C": "CN", "L": "HangZhou", "ST": "ZheJiang", "O": "etcd", "OU": "etcd security" } ] }

4. Create the etcd certificate signing request file:

[root@master1 etcd-ssl]# cat etcd-csr.json

{ "CN": "etcd", "hosts": [ "127.0.0.1", "192.168.100.63", "192.168.100.65", "192.168.100.66", "192.168.100.100" ], "key": { "algo": "rsa", "size": 2048 }, "names": [ { "C": "CN", "L": "HangZhou", "ST": "ZheJiang", "O": "etcd", "OU": "etcd security" } ] }

5. Generate the CA certificate:

[root@master1 etcd-ssl]# cfssl gencert -initca etcd-ca-csr.json | cfssljson -bare etcd-ca

[root@master1 etcd-ssl]# ll

total 28

-rw-r--r-- 1 root root 405 Oct 18 16:25 ca-config.json

-rw-r--r-- 1 root root 1005 Oct 18 16:35 ca.csr

-rw-r--r-- 1 root root 1005 Oct 18 16:37 etcd-ca.csr

-rw-r--r-- 1 root root 269 Oct 18 16:30 etcd-ca-csr.json

-rw------- 1 root root 1675 Oct 18 16:37 etcd-ca-key.pem

-rw-r--r-- 1 root root 1371 Oct 18 16:37 etcd-ca.pem

-rw-r--r-- 1 root root 403 Oct 18 16:34 etcd-csr.json

6. Sign the etcd certificate:

[root@master1 etcd-ssl]# cfssl gencert -ca=etcd-ca.pem -ca-key=etcd-ca-key.pem -config=ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

[root@master1 etcd-ssl]# ll

total 40

-rw-r--r-- 1 root root 405 Oct 18 16:25 ca-config.json

-rw-r--r-- 1 root root 1005 Oct 18 16:35 ca.csr

-rw-r--r-- 1 root root 1005 Oct 18 16:37 etcd-ca.csr

-rw-r--r-- 1 root root 269 Oct 18 16:30 etcd-ca-csr.json

-rw------- 1 root root 1675 Oct 18 16:37 etcd-ca-key.pem

-rw-r--r-- 1 root root 1371 Oct 18 16:37 etcd-ca.pem

-rw-r--r-- 1 root root 1086 Oct 18 16:39 etcd.csr

-rw-r--r-- 1 root root 403 Oct 18 16:34 etcd-csr.json

-rw------- 1 root root 1679 Oct 18 16:39 etcd-key.pem

-rw-r--r-- 1 root root 1460 Oct 18 16:39 etcd.pem

7. Create the directory that holds the certificates:

[root@master1 etcd-ssl]# mkdir /etc/etcd/ssl

[root@master1 etcd-ssl]# for i in master2 master3 ; do ssh -X $i mkdir /etc/etcd/ssl ; done

[root@master1 etcd-ssl]# cp *.pem /etc/etcd/ssl

[root@master1 etcd-ssl]# chmod 644 /etc/etcd/ssl/etcd-key.pem

8. Distribute the certificates to the other etcd nodes:

[root@master1 etcd-ssl]# for i in master2 master3 ; do rsync -avzP /etc/etcd/ssl $i:/etc/etcd/ ; done

[root@master1 etcd-ssl]# cd /etc/etcd/

[root@master1 etcd]# ll

total 4

-rw-r--r-- 1 root root 1686 Feb 14 2019 etcd.conf

drwxr-xr-x 2 root root 84 Oct 18 16:44 ssl

[root@master1 etcd]# cp etcd.conf{,.bak}

9. Edit the etcd configuration file:

[root@master1 etcd]# for i in master2 master3 ; do scp /etc/etcd/etcd.conf $i:/etc/etcd/etcd.conf ; done

[root@master2 etcd]# sed -i '/ETCD_NAME/s/etcd1/etcd2/' etcd.conf

[root@master3 ssl]# sed -i '/ETCD_NAME/s/etcd1/etcd3/' /etc/etcd/etcd.conf

[root@master2 etcd]# sed -i '/63/s/63/65/' etcd.conf

[root@master3 ssl]# sed -i '/63/s/63/66/' /etc/etcd/etcd.conf

[root@master2 etcd]# sed -i '/etcd1=/s@etcd1=https://192.168.100.65@etcd1=https://192.168.100.63@' etcd.conf

[root@master3 ssl]# sed -i '/etcd1=/s@etcd1=https://192.168.100.66@etcd1=https://192.168.100.63@' /etc/etcd/etcd.conf

[root@master1 /]# mkdir /data/etcd -pv

mkdir: created directory "/data"

mkdir: created directory "/data/etcd"

[root@master1 ~]# chown etcd:etcd /data/etcd -R

[root@master1 ~]# for i in master2 master3 ; do ssh -X $i 'mkdir -p /data/etcd && chown etcd:etcd -R /data/etcd' ; done

Note: the final etcd configuration is as follows:

[root@master1 ~]# grep -E -v "^#|^$" /etc/etcd/etcd.conf

ETCD_DATA_DIR="/data/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.100.63:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.100.63:2379"

ETCD_NAME="etcd1"

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.100.63:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.100.63:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://192.168.100.63:2380,etcd2=https://192.168.100.65:2380,etcd3=https://192.168.100.66:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_CERT_FILE="/etc/etcd/ssl/etcd.pem"

ETCD_KEY_FILE="/etc/etcd/ssl/etcd-key.pem"

ETCD_TRUSTED_CA_FILE="/etc/etcd/ssl/etcd-ca.pem"

ETCD_PEER_CERT_FILE="/etc/etcd/ssl/etcd.pem"

ETCD_PEER_KEY_FILE="/etc/etcd/ssl/etcd-key.pem"

ETCD_PEER_TRUSTED_CA_FILE="/etc/etcd/ssl/etcd-ca.pem"

##The other two master nodes use the same configuration; only the IP addresses and ETCD_NAME need to change.
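Since only the node name and the node's own IP differ between the three masters, the per-node lines can also be rendered from those two values instead of being patched with sed. The following is a minimal sketch under that assumption; render_etcd_node is a hypothetical helper, not part of etcd.

```shell
#!/bin/bash
# Sketch: render the per-node lines of etcd.conf from a node name and IP.
# render_etcd_node is a hypothetical helper, not part of etcd.
render_etcd_node() {
    local name="$1" ip="$2"
    cat <<EOF
ETCD_NAME="${name}"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
EOF
}

# Print the lines that differ on master2.
render_etcd_node etcd2 192.168.100.65
```

The shared settings (data directory, ETCD_INITIAL_CLUSTER, certificate paths) stay identical on every node and are not generated here.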

10. Check that the etcd cluster is healthy (etcd must already be running on all three masters, for example via systemctl start etcd):

[root@master1 etcd]# cat etcd.sh

#!/bin/bash

etcdctl --endpoints "https://192.168.100.63:2379,https://192.168.100.65:2379,https://192.168.100.66:2379" --ca-file=/etc/etcd/ssl/etcd-ca.pem --cert-file=/etc/etcd/ssl/etcd.pem --key-file=/etc/etcd/ssl/etcd-key.pem "$@"

[root@master1 etcd]# chmod +x etcd.sh

[root@master1 etcd]# ./etcd.sh member list

26d26991e2461035: name=etcd2 peerURLs=https://192.168.100.65:2380 clientURLs=https://192.168.100.65:2379 isLeader=false

271303f214771755: name=etcd1 peerURLs=https://192.168.100.63:2380 clientURLs=https://192.168.100.63:2379 isLeader=false

ab0d29b48ecb1666: name=etcd3 peerURLs=https://192.168.100.66:2380 clientURLs=https://192.168.100.66:2379 isLeader=true

[root@master1 etcd]# ./etcd.sh cluster-health

member 26d26991e2461035 is healthy: got healthy result from https://192.168.100.65:2379

member 271303f214771755 is healthy: got healthy result from https://192.168.100.63:2379

member ab0d29b48ecb1666 is healthy: got healthy result from https://192.168.100.66:2379

cluster is healthy

Note: the etcd cluster installation is complete.

4. Install the Kubernetes cluster components:

1. Prepare the cluster CA certificate:

[root@master1 ~]# mkdir k8s-ssl

[root@master1 ~]# cd k8s-ssl

[root@master1 k8s-ssl]# cp ../etcd-ssl/ca-config.json .

Configure the CA certificate signing request:

[root@master1 k8s-ssl]# cat ca-csr.json

{ "CN": "kubernetes", "key": { "algo": "rsa", "size": 2048 }, "names": [ { "C": "CN", "L": "ZheJiang", "ST": "HangZhou", "O": "k8s", "OU": "System" } ], "ca": { "expiry": "87600h" } }

2. Generate the CA certificate:

[root@master1 k8s-ssl]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca

[root@master1 k8s-ssl]# ll

total 20

-rw-r--r-- 1 root root 405 Oct 18 20:45 ca-config.json

-rw-r--r-- 1 root root 1005 Oct 18 20:55 ca.csr

-rw-r--r-- 1 root root 319 Oct 18 20:51 ca-csr.json

-rw------- 1 root root 1675 Oct 18 20:55 ca-key.pem

-rw-r--r-- 1 root root 1363 Oct 18 20:55 ca.pem

3. Configure the kube-apiserver certificate request:

[root@master1 k8s-ssl]# cat kube-apiserver-csr.json

{ "CN": "kube-apiserver", "hosts": [ "127.0.0.1", "192.168.100.63", "192.168.100.65", "192.168.100.66", "192.168.100.100", "10.99.0.1", "kubernetes", "kubernetes.default", "kubernetes.default.svc", "kubernetes.default.svc.ziji", "kubernetes.default.svc.ziji.work" ], "key": { "algo": "rsa", "size": 2048 }, "names": [ { "C": "CN", "L": "ZheJiang", "ST": "HangZhou", "O": "k8s", "OU": "System" } ] }

5. Generate the kube-apiserver certificate:

[root@master1 k8s-ssl]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-apiserver-csr.json | cfssljson -bare kube-apiserver

[root@master1 k8s-ssl]# ll

total 36

-rw-r--r-- 1 root root 405 Oct 18 20:45 ca-config.json

-rw-r--r-- 1 root root 1005 Oct 18 20:55 ca.csr

-rw-r--r-- 1 root root 319 Oct 18 20:51 ca-csr.json

-rw------- 1 root root 1675 Oct 18 20:55 ca-key.pem

-rw-r--r-- 1 root root 1363 Oct 18 20:55 ca.pem

-rw-r--r-- 1 root root 1265 Oct 18 21:04 kube-apiserver.csr

-rw-r--r-- 1 root root 608 Oct 18 21:04 kube-apiserver-csr.json

-rw------- 1 root root 1679 Oct 18 21:04 kube-apiserver-key.pem

-rw-r--r-- 1 root root 1639 Oct 18 21:04 kube-apiserver.pem

6. Configure the kube-controller-manager certificate request (the CN must be system:kube-controller-manager, the user name that the built-in RBAC binding refers to):

[root@master1 k8s-ssl]# vim kube-controller-manager-csr.json

{ "CN": "system:kube-controller-manager", "hosts": [ "127.0.0.1", "192.168.100.63", "192.168.100.65", "192.168.100.66", "192.168.100.100" ], "key": { "algo": "rsa", "size": 2048 }, "names": [ { "C": "CN", "L": "ZheJiang", "ST": "HangZhou", "O": "system:kube-controller-manager", "OU": "System" } ] }

7. Generate the kube-controller-manager certificate:

[root@master1 k8s-ssl]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

[root@master1 k8s-ssl]# ll

total 52

-rw-r--r-- 1 root root 405 Oct 18 20:45 ca-config.json

-rw-r--r-- 1 root root 1005 Oct 18 20:55 ca.csr

-rw-r--r-- 1 root root 319 Oct 18 20:51 ca-csr.json

-rw------- 1 root root 1675 Oct 18 20:55 ca-key.pem

-rw-r--r-- 1 root root 1363 Oct 18 20:55 ca.pem

-rw-r--r-- 1 root root 1265 Oct 18 21:04 kube-apiserver.csr

-rw-r--r-- 1 root root 608 Oct 18 21:04 kube-apiserver-csr.json

-rw------- 1 root root 1679 Oct 18 21:04 kube-apiserver-key.pem

-rw-r--r-- 1 root root 1639 Oct 18 21:04 kube-apiserver.pem

-rw-r--r-- 1 root root 1139 Oct 18 21:09 kube-controller-manager.csr

-rw-r--r-- 1 root root 454 Oct 18 21:08 kube-controller-manager-csr.json

-rw------- 1 root root 1679 Oct 18 21:09 kube-controller-manager-key.pem

-rw-r--r-- 1 root root 1513 Oct 18 21:09 kube-controller-manager.pem

8. Configure the kube-scheduler certificate request:

[root@master1 k8s-ssl]# cat kube-scheduler-csr.json

{

"CN": "system:kube-scheduler",

"hosts": [

"127.0.0.1",

"192.168.100.63",

"192.168.100.65",

"192.168.100.66",

"192.168.100.100"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "ZheJiang",

"ST": "HangZhou",

"O": "system:kube-scheduler",

"OU": "System"

}

]

}

9. Generate the kube-scheduler certificate:

[root@master1 k8s-ssl]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

[root@master1 k8s-ssl]# ll

total 68

-rw-r--r-- 1 root root 405 Oct 18 20:45 ca-config.json

-rw-r--r-- 1 root root 1005 Oct 18 20:55 ca.csr

-rw-r--r-- 1 root root 319 Oct 18 20:51 ca-csr.json

-rw------- 1 root root 1675 Oct 18 20:55 ca-key.pem

-rw-r--r-- 1 root root 1363 Oct 18 20:55 ca.pem

-rw-r--r-- 1 root root 1265 Oct 18 21:04 kube-apiserver.csr

-rw-r--r-- 1 root root 608 Oct 18 21:04 kube-apiserver-csr.json

-rw------- 1 root root 1679 Oct 18 21:04 kube-apiserver-key.pem

-rw-r--r-- 1 root root 1639 Oct 18 21:04 kube-apiserver.pem

-rw-r--r-- 1 root root 1147 Oct 18 21:13 kube-controller-manager.csr

-rw-r--r-- 1 root root 461 Oct 18 21:12 kube-controller-manager-csr.json

-rw------- 1 root root 1675 Oct 18 21:13 kube-controller-manager-key.pem

-rw-r--r-- 1 root root 1521 Oct 18 21:13 kube-controller-manager.pem

-rw-r--r-- 1 root root 1123 Oct 18 21:15 kube-scheduler.csr

-rw-r--r-- 1 root root 443 Oct 18 21:14 kube-scheduler-csr.json

-rw------- 1 root root 1679 Oct 18 21:15 kube-scheduler-key.pem

-rw-r--r-- 1 root root 1497 Oct 18 21:15 kube-scheduler.pem

10. Configure the kube-proxy certificate request:

[root@master1 k8s-ssl]# vim kube-proxy-csr.json

{ "CN": "system:kube-proxy", "key": { "algo": "rsa", "size": 2048 }, "names": [ { "C": "CN", "L": "ZheJiang", "ST": "HangZhou", "O": "system:kube-proxy", "OU": "System" } ] }

11. Generate the kube-proxy certificate:

[root@master1 k8s-ssl]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

[root@master1 k8s-ssl]# ll

total 84

-rw-r--r-- 1 root root 405 Oct 18 20:45 ca-config.json

-rw-r--r-- 1 root root 1005 Oct 18 20:55 ca.csr

-rw-r--r-- 1 root root 319 Oct 18 20:51 ca-csr.json

-rw------- 1 root root 1675 Oct 18 20:55 ca-key.pem

-rw-r--r-- 1 root root 1363 Oct 18 20:55 ca.pem

-rw-r--r-- 1 root root 1265 Oct 18 21:04 kube-apiserver.csr

-rw-r--r-- 1 root root 608 Oct 18 21:04 kube-apiserver-csr.json

-rw------- 1 root root 1679 Oct 18 21:04 kube-apiserver-key.pem

-rw-r--r-- 1 root root 1639 Oct 18 21:04 kube-apiserver.pem

-rw-r--r-- 1 root root 1147 Oct 18 21:13 kube-controller-manager.csr

-rw-r--r-- 1 root root 461 Oct 18 21:12 kube-controller-manager-csr.json

-rw------- 1 root root 1675 Oct 18 21:13 kube-controller-manager-key.pem

-rw-r--r-- 1 root root 1521 Oct 18 21:13 kube-controller-manager.pem

-rw-r--r-- 1 root root 1033 Oct 18 21:20 kube-proxy.csr

-rw-r--r-- 1 root root 288 Oct 18 21:19 kube-proxy-csr.json

-rw------- 1 root root 1675 Oct 18 21:20 kube-proxy-key.pem

-rw-r--r-- 1 root root 1428 Oct 18 21:20 kube-proxy.pem

-rw-r--r-- 1 root root 1123 Oct 18 21:15 kube-scheduler.csr

-rw-r--r-- 1 root root 443 Oct 18 21:14 kube-scheduler-csr.json

-rw------- 1 root root 1679 Oct 18 21:15 kube-scheduler-key.pem

-rw-r--r-- 1 root root 1497 Oct 18 21:15 kube-scheduler.pem

12. Configure the admin certificate request:

[root@master1 k8s-ssl]# vim admin-csr.json

{ "CN": "admin", "key": { "algo": "rsa", "size": 2048 }, "names": [ { "C": "CN", "L": "ZheJiang", "ST": "HangZhou", "O": "system:masters", "OU": "System" } ] }

13. Generate the admin certificate:

[root@master1 k8s-ssl]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

[root@master1 k8s-ssl]# ll

total 100

-rw-r--r-- 1 root root 1013 Oct 18 21:23 admin.csr

-rw-r--r-- 1 root root 273 Oct 18 21:23 admin-csr.json

-rw------- 1 root root 1675 Oct 18 21:23 admin-key.pem

-rw-r--r-- 1 root root 1407 Oct 18 21:23 admin.pem

-rw-r--r-- 1 root root 405 Oct 18 20:45 ca-config.json

-rw-r--r-- 1 root root 1005 Oct 18 20:55 ca.csr

-rw-r--r-- 1 root root 319 Oct 18 20:51 ca-csr.json

-rw------- 1 root root 1675 Oct 18 20:55 ca-key.pem

-rw-r--r-- 1 root root 1363 Oct 18 20:55 ca.pem

-rw-r--r-- 1 root root 1265 Oct 18 21:04 kube-apiserver.csr

-rw-r--r-- 1 root root 608 Oct 18 21:04 kube-apiserver-csr.json

-rw------- 1 root root 1679 Oct 18 21:04 kube-apiserver-key.pem

-rw-r--r-- 1 root root 1639 Oct 18 21:04 kube-apiserver.pem

-rw-r--r-- 1 root root 1147 Oct 18 21:13 kube-controller-manager.csr

-rw-r--r-- 1 root root 461 Oct 18 21:12 kube-controller-manager-csr.json

-rw------- 1 root root 1675 Oct 18 21:13 kube-controller-manager-key.pem

-rw-r--r-- 1 root root 1521 Oct 18 21:13 kube-controller-manager.pem

-rw-r--r-- 1 root root 1033 Oct 18 21:20 kube-proxy.csr

-rw-r--r-- 1 root root 288 Oct 18 21:19 kube-proxy-csr.json

-rw------- 1 root root 1675 Oct 18 21:20 kube-proxy-key.pem

-rw-r--r-- 1 root root 1428 Oct 18 21:20 kube-proxy.pem

-rw-r--r-- 1 root root 1123 Oct 18 21:15 kube-scheduler.csr

-rw-r--r-- 1 root root 443 Oct 18 21:14 kube-scheduler-csr.json

-rw------- 1 root root 1679 Oct 18 21:15 kube-scheduler-key.pem

-rw-r--r-- 1 root root 1497 Oct 18 21:15 kube-scheduler.pem

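5. Distribute the certificates:

Note: worker nodes only need the ca, kube-proxy, and kubelet certificates; the kube-controller-manager, kube-scheduler, and kube-apiserver certificates do not need to be copied to them.

1. Create the certificate directories:

[root@master1 k8s-ssl]# mkdir /data/apps/kubernetes/{log,certs,pki,etc} -pv

mkdir: created directory "/data/apps/kubernetes"

mkdir: created directory "/data/apps/kubernetes/log"

mkdir: created directory "/data/apps/kubernetes/certs"

mkdir: created directory "/data/apps/kubernetes/pki"

mkdir: created directory "/data/apps/kubernetes/etc"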

[root@master1 k8s-ssl]# for i in master2 master3 ; do ssh -X $i mkdir /data/apps/kubernetes/{log,certs,pki,etc} -pv ; done

2. Copy the certificate files to the master nodes:

[root@master1 k8s-ssl]# cp ca*.pem admin*.pem kube-proxy*.pem kube-scheduler*.pem kube-controller-manager*.pem kube-apiserver*.pem /data/apps/kubernetes/pki/

[root@master1 k8s-ssl]# cd /data/apps/kubernetes/pki/

[root@master1 pki]# ll

total 48

-rw------- 1 root root 1675 Oct 18 21:32 admin-key.pem

-rw-r--r-- 1 root root 1407 Oct 18 21:32 admin.pem

-rw------- 1 root root 1675 Oct 18 21:32 ca-key.pem

-rw-r--r-- 1 root root 1363 Oct 18 21:32 ca.pem

-rw------- 1 root root 1679 Oct 18 21:32 kube-apiserver-key.pem

-rw-r--r-- 1 root root 1639 Oct 18 21:32 kube-apiserver.pem

-rw------- 1 root root 1675 Oct 18 21:32 kube-controller-manager-key.pem

-rw-r--r-- 1 root root 1521 Oct 18 21:32 kube-controller-manager.pem

-rw------- 1 root root 1675 Oct 18 21:32 kube-proxy-key.pem

-rw-r--r-- 1 root root 1428 Oct 18 21:32 kube-proxy.pem

-rw------- 1 root root 1679 Oct 18 21:32 kube-scheduler-key.pem

-rw-r--r-- 1 root root 1497 Oct 18 21:32 kube-scheduler.pem

[root@master1 pki]# for i in master2 master3 ; do rsync -avzP /data/apps/kubernetes/pki $i:/data/apps/kubernetes/ ; done

3. Copy the certificates to the worker nodes:

[root@master1 pki]# for i in node1 node2 ; do ssh -X $i mkdir /data/apps/kubernetes/{log,certs,pki,etc} -pv ; done

[root@master1 pki]# for i in node1 node2 ; do scp ca*.pem kube-proxy*.pem admin*pem $i:/data/apps/kubernetes/pki/ ; done

[root@node1 ~]# cd /data/apps/kubernetes/pki/

[root@node1 pki]# ll ## check on the worker node

total 24

-rw------- 1 root root 1675 Oct 18 21:37 admin-key.pem

-rw-r--r-- 1 root root 1407 Oct 18 21:37 admin.pem

-rw------- 1 root root 1675 Oct 18 21:37 ca-key.pem

-rw-r--r-- 1 root root 1363 Oct 18 21:37 ca.pem

-rw------- 1 root root 1675 Oct 18 21:37 kube-proxy-key.pem

-rw-r--r-- 1 root root 1428 Oct 18 21:37 kube-proxy.pem

6. Deploy the master nodes:

1. Extract the archives:

[root@master1 software]# tar -xvzf kubernetes-server-linux-amd64.tar.gz ## extract on master1

[root@node1 software]# tar -xvzf kubernetes-node-linux-amd64.tar.gz ## extract on node1

2. Copy the kubernetes directories extracted on master1 and node1 to the other master and worker nodes:

[root@master1 software]# for i in master2 master3 ; do rsync -avzP kubernetes $i:/opt/software/ ; done

[root@node1 software]# scp -r kubernetes node2:/opt/software/

3. On every node, copy the server directory (masters) or the node directory (workers) to /data/apps/kubernetes:

[root@master1 software]# cp kubernetes/server/ /data/apps/kubernetes/ -r ## on master1

[root@master2 software]# cp -r kubernetes/server/ /data/apps/kubernetes/ ## on master2

[root@master3 software]# cp kubernetes/server/ /data/apps/kubernetes/ -r ## on master3

[root@node1 kubernetes]# cp -r node /data/apps/kubernetes/ ## on node1

[root@node2 kubernetes]# cp node/ /data/apps/kubernetes/ -r ## on node2

4. Set the PATH variable:

[root@master1 software]# vim /etc/profile.d/k8s.sh

#!/bin/bash

export PATH=/data/apps/kubernetes/server/bin:$PATH

[root@master1 software]# ansible nodes -m copy -a 'src=/etc/profile.d/k8s.sh dest=/etc/profile.d/'

[root@master1 software]# source /etc/profile

##On the master nodes, run: source /etc/profile

##On the worker nodes, edit /etc/profile.d/k8s.sh so that it reads: export PATH=/data/apps/kubernetes/node/bin:$PATH

[root@node2 kubernetes]# source /etc/profile

[root@master1 software]# kubectl version

Client Version: version.Info{Major:"1", Minor:"13", GitVersion:"v1.13.0", GitCommit:"ddf47ac13c1a9483ea035a79cd7c10005ff21a6d", GitTreeState:"clean", BuildDate:"2018-12-03T21:04:45Z", GoVersion:"go1.11.2", Compiler:"gc", Platform:"linux/amd64"}

The connection to the server localhost:8080 was refused - did you specify the right host or port?

Note: the connection error is expected at this stage, because kube-apiserver is not running yet.

7. Configure TLS bootstrapping:

1. Generate the token:

[root@master1 kubernetes]# vim /etc/profile.d/k8s.sh

#!/bin/bash

export BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x |tr -d ' ')

export PATH=/data/apps/kubernetes/server/bin:$PATH

[root@master1 kubernetes]# source /etc/profile

[root@master1 kubernetes]# for i in master2 master3 ; do scp /etc/profile.d/k8s.sh $i:/etc/profile.d/ ; done

##On the other nodes, run: source /etc/profile

[root@master1 kubernetes]# ll

total 4

drwxr-xr-x 2 root root 6 Oct 18 21:28 certs

drwxr-xr-x 2 root root 6 Oct 18 21:28 etc

drwxr-xr-x 2 root root 6 Oct 18 21:28 log

drwxr-xr-x 2 root root 310 Oct 18 21:32 pki

drwxr-xr-x 3 root root 17 Oct 19 09:24 server

-rw-r--r-- 1 root root 70 Oct 19 09:49 token.csv

[root@master1 kubernetes]# cat token.csv

${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap"

Note: kube-apiserver reads token.csv literally, so the file must contain the generated token value in place of ${BOOTSTRAP_TOKEN}. Because the export above produces a new random value every time the profile is sourced, token.csv and kubelet-bootstrap.kubeconfig should be created in the same shell session.
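The step that writes token.csv is not shown in this guide. One way to do it, sketched here as an assumption rather than the author's exact method, is to generate the token once and let the shell expand it while writing the file. OUT_DIR is a hypothetical variable that defaults to a temporary directory for a safe dry run; the guide keeps the real file in /data/apps/kubernetes.

```shell
#!/bin/bash
# Sketch: generate the bootstrap token once and write it to token.csv.
# OUT_DIR is an assumption for a safe dry run; the guide uses /data/apps/kubernetes.
OUT_DIR="${OUT_DIR:-$(mktemp -d)}"

# 16 random bytes rendered as 32 hexadecimal characters.
BOOTSTRAP_TOKEN="$(head -c 16 /dev/urandom | od -An -t x | tr -d ' \n')"

# Unquoted heredoc delimiter, so the variable is expanded before the line is written.
cat > "$OUT_DIR/token.csv" <<EOF
${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap"
EOF

cat "$OUT_DIR/token.csv"
```

The same BOOTSTRAP_TOKEN value then has to be passed to kubectl config set-credentials when the bootstrap kubeconfig is built.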

2. Create the kubelet bootstrapping kubeconfig:

Note: set the kube-apiserver access address. kube-apiserver will be made highly available later, so the floating IP (VIP) is used here: KUBE_APISERVER=floating IP.

[root@master1 kubernetes]# vim /etc/profile.d/k8s.sh

#!/bin/bash

export BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x |tr -d ' ')

export KUBE_APISERVER="https://192.168.100.100:8443"

export PATH=/data/apps/kubernetes/server/bin:$PATH

[root@master1 kubernetes]# source /etc/profile

[root@master1 kubernetes]# echo $KUBE_APISERVER

https://192.168.100.100:8443

[root@master1 kubernetes]# for i in master2 master3 ; do scp /etc/profile.d/k8s.sh $i:/etc/profile.d/ ; done

##On the other nodes, run: source /etc/profile

3. Create the kubeconfig files for kubelet bootstrap, kube-controller-manager, kube-scheduler, kube-proxy, and admin (admin.conf):

kubelet-bootstrap.kubeconfig:

[root@master1 kubernetes]# cat bootstrap.sh

#!/bin/bash

##Set the cluster parameters##

kubectl config set-cluster kubernetes --certificate-authority=/data/apps/kubernetes/pki/ca.pem --embed-certs=true --server=${KUBE_APISERVER} --kubeconfig=kubelet-bootstrap.kubeconfig

##Set the client credentials##

kubectl config set-credentials kubelet-bootstrap --token=${BOOTSTRAP_TOKEN} --kubeconfig=kubelet-bootstrap.kubeconfig

##Set the context parameters##

kubectl config set-context default --cluster=kubernetes --user=kubelet-bootstrap --kubeconfig=kubelet-bootstrap.kubeconfig

##Select the default context##

kubectl config use-context default --kubeconfig=kubelet-bootstrap.kubeconfig

kube-controller-manager.kubeconfig:

[root@master1 kubernetes]# cat kube-controller-manager.sh

#!/bin/bash

##Set the cluster parameters##

kubectl config set-cluster kubernetes --certificate-authority=/data/apps/kubernetes/pki/ca.pem --embed-certs=true --server=${KUBE_APISERVER} --kubeconfig=kube-controller-manager.kubeconfig

##Set the client credentials##

kubectl config set-credentials kube-controller-manager --client-certificate=/data/apps/kubernetes/pki/kube-controller-manager.pem --client-key=/data/apps/kubernetes/pki/kube-controller-manager-key.pem --embed-certs=true --kubeconfig=kube-controller-manager.kubeconfig

##Set the context parameters##

kubectl config set-context default --cluster=kubernetes --user=kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

##Select the default context##

kubectl config use-context default --kubeconfig=kube-controller-manager.kubeconfig

kube-scheduler.kubeconfig:

[root@master1 kubernetes]# cat kube-scheduler.sh

#!/bin/bash

##Set the cluster parameters##

kubectl config set-cluster kubernetes --certificate-authority=/data/apps/kubernetes/pki/ca.pem --embed-certs=true --server=${KUBE_APISERVER} --kubeconfig=kube-scheduler.kubeconfig

##Set the client credentials##

kubectl config set-credentials kube-scheduler --client-certificate=/data/apps/kubernetes/pki/kube-scheduler.pem --client-key=/data/apps/kubernetes/pki/kube-scheduler-key.pem --embed-certs=true --kubeconfig=kube-scheduler.kubeconfig

##Set the context parameters##

kubectl config set-context default --cluster=kubernetes --user=kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

##Select the default context##

kubectl config use-context default --kubeconfig=kube-scheduler.kubeconfig

kube-proxy.kubeconfig:

[root@master1 kubernetes]# cat kube-proxy.sh

#!/bin/bash

##Set the cluster parameters##

kubectl config set-cluster kubernetes --certificate-authority=/data/apps/kubernetes/pki/ca.pem --embed-certs=true --server=${KUBE_APISERVER} --kubeconfig=kube-proxy.kubeconfig

##Set the client credentials##

kubectl config set-credentials kube-proxy --client-certificate=/data/apps/kubernetes/pki/kube-proxy.pem --client-key=/data/apps/kubernetes/pki/kube-proxy-key.pem --embed-certs=true --kubeconfig=kube-proxy.kubeconfig

##Set the context parameters##

kubectl config set-context default --cluster=kubernetes --user=kube-proxy --kubeconfig=kube-proxy.kubeconfig

##Select the default context##

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

admin.conf:

[root@master1 kubernetes]# cat admin.sh

#!/bin/bash

##Set the cluster parameters##

kubectl config set-cluster kubernetes --certificate-authority=/data/apps/kubernetes/pki/ca.pem --embed-certs=true --server=${KUBE_APISERVER} --kubeconfig=admin.conf

##Set the client credentials##

kubectl config set-credentials admin --client-certificate=/data/apps/kubernetes/pki/admin.pem --client-key=/data/apps/kubernetes/pki/admin-key.pem --embed-certs=true --kubeconfig=admin.conf

##Set the context parameters##

kubectl config set-context default --cluster=kubernetes --user=admin --kubeconfig=admin.conf

##Select the default context##

kubectl config use-context default --kubeconfig=admin.conf
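The four certificate-based scripts repeat the same four kubectl config calls with different names. As a sketch of how they could be folded into one function (make_cert_kubeconfig and PKI_DIR are hypothetical names introduced here, not part of kubectl), assuming KUBE_APISERVER is exported as in the profile script:

```shell
#!/bin/bash
# Sketch: one function for the four kubectl config steps repeated in each script.
# make_cert_kubeconfig and PKI_DIR are hypothetical; PKI_DIR mirrors the guide's layout.
PKI_DIR="${PKI_DIR:-/data/apps/kubernetes/pki}"

make_cert_kubeconfig() {
    local user="$1" cert="$2" out="$3"
    # Cluster parameters, then client credentials, then context, then default context.
    kubectl config set-cluster kubernetes \
        --certificate-authority="$PKI_DIR/ca.pem" --embed-certs=true \
        --server="$KUBE_APISERVER" --kubeconfig="$out" &&
    kubectl config set-credentials "$user" \
        --client-certificate="$PKI_DIR/${cert}.pem" \
        --client-key="$PKI_DIR/${cert}-key.pem" \
        --embed-certs=true --kubeconfig="$out" &&
    kubectl config set-context default --cluster=kubernetes \
        --user="$user" --kubeconfig="$out" &&
    kubectl config use-context default --kubeconfig="$out"
}

# Example calls (they need kubectl and the certificates, so they are left commented):
# make_cert_kubeconfig kube-scheduler kube-scheduler kube-scheduler.kubeconfig
# make_cert_kubeconfig admin admin admin.conf
```

The bootstrap kubeconfig is left out on purpose, because it authenticates with a token instead of a client certificate.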

4. Distribute the kubelet and kube-proxy kubeconfig files to the worker nodes (this assumes the generated kubeconfig files have been moved into /data/apps/kubernetes/etc/):

[root@master1 kubernetes]# for i in node1 node2 ; do scp /data/apps/kubernetes/etc/kubelet-bootstrap.kubeconfig $i:/data/apps/kubernetes/etc/ ; done

[root@master1 kubernetes]# for i in node1 node2 ; do scp /data/apps/kubernetes/etc/kube-proxy.kubeconfig $i:/data/apps/kubernetes/etc/ ; done

5. Distribute the configuration files to the other master nodes:

[root@master1 etc]# for i in master2 master3 ; do rsync -avzP /data/apps/kubernetes/etc $i:/data/apps/kubernetes/ ; done

[root@master1 kubernetes]# for i in master2 master3 ; do scp token.csv $i:/data/apps/kubernetes/ ; done

8. Configure kube-apiserver:

1. Create the service account (sa) key pair:

[root@master1 kubernetes]# cd /data/apps/kubernetes/pki/

[root@master1 pki]# openssl genrsa -out /data/apps/kubernetes/pki/sa.key 2048

[root@master1 pki]# openssl rsa -in /data/apps/kubernetes/pki/sa.key -pubout -out /data/apps/kubernetes/pki/sa.pub

[root@master1 pki]# ll

total 56

-rw------- 1 root root 1675 Oct 18 21:32 admin-key.pem

-rw-r--r-- 1 root root 1407 Oct 18 21:32 admin.pem

-rw------- 1 root root 1675 Oct 18 21:32 ca-key.pem

-rw-r--r-- 1 root root 1363 Oct 18 21:32 ca.pem

-rw------- 1 root root 1679 Oct 18 21:32 kube-apiserver-key.pem

-rw-r--r-- 1 root root 1639 Oct 18 21:32 kube-apiserver.pem

-rw------- 1 root root 1675 Oct 18 21:32 kube-controller-manager-key.pem

-rw-r--r-- 1 root root 1521 Oct 18 21:32 kube-controller-manager.pem

-rw------- 1 root root 1675 Oct 18 21:32 kube-proxy-key.pem

-rw-r--r-- 1 root root 1428 Oct 18 21:32 kube-proxy.pem

-rw------- 1 root root 1679 Oct 18 21:32 kube-scheduler-key.pem

-rw-r--r-- 1 root root 1497 Oct 18 21:32 kube-scheduler.pem

-rw-r--r-- 1 root root 1679 Oct 19 10:32 sa.key

-rw-r--r-- 1 root root 451 Oct 19 10:33 sa.pub

2. Distribute the files to the other apiserver nodes:

[root@master1 pki]# for i in master2 master3 ; do scp sa.* $i:/data/apps/kubernetes/pki/ ; done

3.创建apiserver配置文件:

[root@master1 etc]# cat kube-apiserver.conf KUBE_API_ADDRESS="--advertise-address=192.168.100.63" ##其他两台master修改自己的ip KUBE_ETCD_ARGS="--etcd-servers=https://192.168.100.63:2379,https://192.168.100.65:2379,https://192.168.100.66:2379 --etcd-cafile=/etc/etcd/ssl/etcd-ca.pem --etcd-certfile=/etc/etcd/ssl/etcd.pem --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem" KUBE_LOGTOSTDERR="--logtostderr=false" KUBE_LOG_LEVEL="--log-dir=/data/apps/kubernetes/log/ --v=2 --audit-log-maxage=7 --audit-log-maxbackup=10 --audit-log-maxsize=100 --audit-log-path=/data/apps/kubernetes/log/kubernetes.audit --event-ttl=12h" KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=10.99.0.0/16" KUBE_ADMISSION_CONTROL="--enable-admission-plugins=Initializers,NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota" KUBE_APISERVER_ARGS="--storage-backend=etcd3 --apiserver-count=3 --endpoint-reconciler-type=lease --runtime-config=api/all,settings.k8s.io/v1alpha1=true,admissionregistration.k8s.io/v1beta1 --allow-privileged=true --authorization-mode=Node,RBAC --enable-bootstrap-token-auth=true --token-auth-file=/data/apps/kubernetes/token.csv --service-node-port-range=30000-40000 --tls-cert-file=/data/apps/kubernetes/pki/kube-apiserver.pem --tls-private-key-file=/data/apps/kubernetes/pki/kube-apiserver-key.pem --client-ca-file=/data/apps/kubernetes/pki/ca.pem --service-account-key-file=/data/apps/kubernetes/pki/sa.pub --enable-swagger-ui=false --secure-port=6443 --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname --anonymous-auth=false --kubelet-client-certificate=/data/apps/kubernetes/pki/admin.pem --kubelet-client-key=/data/apps/kubernetes/pki/admin-key.pem"

4. Create the systemd unit file:

[root@master1 etc]# cat /usr/lib/systemd/system/kube-apiserver.service
[Unit]
Description=Kubernetes API Service
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
EnvironmentFile=-/data/apps/kubernetes/etc/kube-apiserver.conf
ExecStart=/data/apps/kubernetes/server/bin/kube-apiserver $KUBE_LOGTOSTDERR $KUBE_LOG_LEVEL $KUBE_ETCD_ARGS $KUBE_API_ADDRESS $KUBE_SERVICE_ADDRESSES $KUBE_ADMISSION_CONTROL $KUBE_APISERVER_ARGS
Restart=on-failure
Type=notify
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target

5. Distribute the configuration files:

[root@master1 etc]# for i in master2 master3 ; do scp kube-apiserver.conf $i:/data/apps/kubernetes/etc/;done
[root@master1 etc]# for i in master2 master3 ; do scp /usr/lib/systemd/system/kube-apiserver.service $i:/usr/lib/systemd/system/;done

Note: on the other master nodes, change "--advertise-address=192.168.100.63" to that node's own IP.
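The per-node edit can be scripted instead of done by hand. The following is a minimal sketch, assuming GNU or BSD sed with -E; the helper name set_advertise_address is hypothetical and not part of the original steps.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads kube-apiserver.conf text on stdin and
# replaces the IP that follows --advertise-address= with the given one.
set_advertise_address() {
  local ip="$1"
  sed -E "s/(--advertise-address=)[0-9.]+/\1${ip}/"
}

# Example (run on master2, inside /data/apps/kubernetes/etc):
#   set_advertise_address 192.168.100.65 < kube-apiserver.conf > kube-apiserver.conf.new
#   mv kube-apiserver.conf.new kube-apiserver.conf
```

Only the advertise address is rewritten; the etcd endpoints and other flags stay identical on all three masters.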

6. Start the service:

[root@master1 etc]# systemctl daemon-reload
[root@master1 etc]# systemctl enable kube-apiserver
[root@master1 etc]# systemctl start kube-apiserver

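7. Access test:

Note: output like the following means the apiserver is running. The 401 is expected because anonymous access is disabled (--anonymous-auth=false).

[root@master1 etc]# curl -k https://192.168.100.63:6443
{
  "kind": "Status",
  "apiVersion": "v1",
  "metadata": {

  },
  "status": "Failure",
  "message": "Unauthorized",
  "reason": "Unauthorized",
  "code": 401
}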
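To run the same check on all three masters without reading JSON by eye, the HTTP status code alone is enough. This is a rough sketch; the function name apiserver_status and its wording are made up for illustration, and it relies on curl printing 000 for -w '%{http_code}' when no connection could be made.

```shell
#!/usr/bin/env bash
# Hypothetical helper: interpret the HTTP status code returned by the
# apiserver secure port when queried without credentials.
apiserver_status() {
  case "$1" in
    401|403) echo "up: authentication required" ;;
    200)     echo "up" ;;
    000)     echo "unreachable" ;;
    *)       echo "unexpected HTTP status $1" ;;
  esac
}

# Example:
#   for ip in 192.168.100.63 192.168.100.65 192.168.100.66; do
#     code="$(curl -k -s -o /dev/null -w '%{http_code}' "https://${ip}:6443")"
#     echo "${ip}: $(apiserver_status "$code")"
#   done
```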

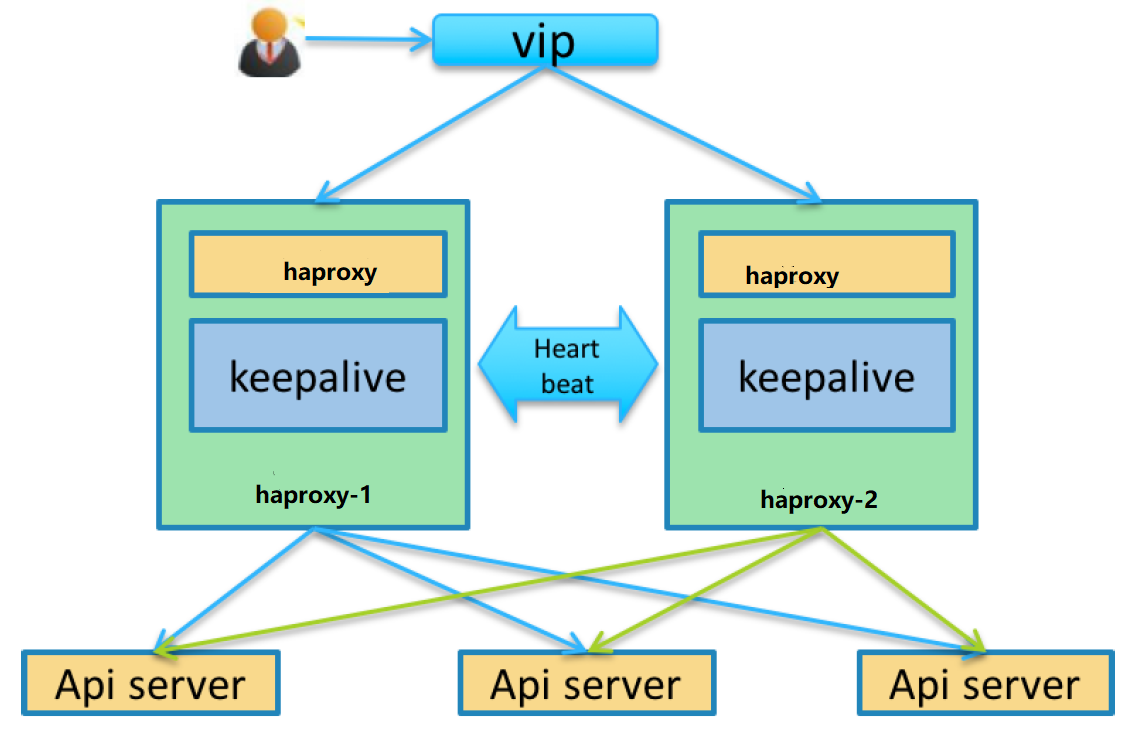

9. Configure apiserver high availability

1. Install keepalived and haproxy on all three master nodes

[root@master1 etc]# yum install keepalived haproxy -y

2. Configure haproxy:

[root@master1 etc]# cd /etc/haproxy/
[root@master1 haproxy]# cp haproxy.cfg{,.bak}

[root@master1 haproxy]# ll
总用量 8
-rw-r--r-- 1 root root 3142 8月 6 21:44 haproxy.cfg
-rw-r--r-- 1 root root 3142 10月 19 11:13 haproxy.cfg.bak

[root@master1 haproxy]# cat haproxy.cfg
# .............................. default configuration (unchanged)
defaults
    mode tcp
    log global
    option tcplog
    option dontlognull
    option httpclose
    option abortonclose
    option redispatch
    retries 3
    timeout connect 5000s
    timeout client 1h
    timeout server 1h
    maxconn 32000
#---------------------------------------------------------------------
# main frontend which proxys to the backends
#---------------------------------------------------------------------
frontend k8s_apiserver
    bind *:8443
    mode tcp
    default_backend kube-apiserver
listen stats
    mode http
    bind :10086
    stats enable
    stats uri /admin?stats
    stats auth admin:admin
    stats admin if TRUE
#---------------------------------------------------------------------
# static backend for serving up images, stylesheets and such
#---------------------------------------------------------------------
#---------------------------------------------------------------------
# round robin balancing between the various backends
#---------------------------------------------------------------------
backend kube-apiserver
    balance roundrobin
    server master1 192.168.100.63:6443 check inter 2000 fall 2 rise 2 weight 100
    server master2 192.168.100.65:6443 check inter 2000 fall 2 rise 2 weight 100
    server master3 192.168.100.66:6443 check inter 2000 fall 2 rise 2 weight 100

Distribute the haproxy configuration file:
[root@master1 haproxy]# for i in master2 master3 ; do scp haproxy.cfg $i:/etc/haproxy/;done

Start the service and enable it at boot:
[root@master1 haproxy]# systemctl enable haproxy && systemctl restart haproxy

[root@master1 haproxy]# systemctl status haproxy
● haproxy.service - HAProxy Load Balancer
   Loaded: loaded (/usr/lib/systemd/system/haproxy.service; enabled; vendor preset: disabled)
   Active: active (running) since 六 2019-10-19 11:35:44 CST; 3min 35s ago
 Main PID: 13844 (haproxy-systemd)
   CGroup: /system.slice/haproxy.service
           ├─13844 /usr/sbin/haproxy-systemd-wrapper -f /etc/haproxy/haproxy.cfg -p /run/haproxy.pid
           ├─13845 /usr/sbin/haproxy -f /etc/haproxy/haproxy.cfg -p /run/haproxy.pid -Ds
           └─13846 /usr/sbin/haproxy -f /etc/haproxy/haproxy.cfg -p /run/haproxy.pid -Ds
10月 19 11:35:44 master1 systemd[1]: Started HAProxy Load Balancer.
10月 19 11:35:44 master1 haproxy-systemd-wrapper[13844]: haproxy-systemd-wrapper: executing /usr/sbin/haproxy -f /etc/haproxy/haproxy.cfg -p /run/...pid -Ds
Hint: Some lines were ellipsized, use -l to show in full.

3. Configure keepalived:

[root@master1 haproxy]# cd /etc/keepalived/

[root@master1 keepalived]# cp keepalived.conf{,.bak}

[root@master1 keepalived]# cat keepalived.conf
! Configuration File for keepalived
global_defs {
    notification_email {
        acassen@firewall.loc
        failover@firewall.loc
        sysadmin@firewall.loc
    }
    notification_email_from Alexandre.Cassen@firewall.loc
    smtp_server 127.0.0.1
    smtp_connect_timeout 30
    router_id LVS_DEVEL
}
vrrp_script check_haproxy {
    script "/root/haproxy/check_haproxy.sh"
    interval 3
}
vrrp_instance VI_1 {
    state MASTER
    interface ens33
    virtual_router_id 51
    priority 100
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    unicast_peer {
        192.168.100.63
        192.168.100.65
        192.168.100.66
    }
    virtual_ipaddress {
        192.168.100.100/24 label ens33:0
    }
    track_script {
        check_haproxy
    }
}

[root@master1 keepalived]# mkdir /root/haproxy
[root@master1 keepalived]# cd /root/haproxy

haproxy health-check script:
[root@master1 haproxy]# cat check_haproxy.sh
#!/bin/bash
## Check the health of haproxy; stop keepalived if haproxy is down
systemctl status haproxy &>/dev/null
if [ $? -ne 0 ]; then
    echo -e "\033[31m haproxy is down; stopping keepalived \033[0m"
    systemctl stop keepalived
else
    echo -e "\033[32m haproxy is running \033[0m"
fi

Enable keepalived at boot and distribute its configuration and the check script:
[root@master1 haproxy]# systemctl enable keepalived
[root@master1 haproxy]# for i in master2 master3 ; do scp /etc/keepalived/keepalived.conf $i:/etc/keepalived/;done
[root@master1 haproxy]# for i in master2 master3 ; do ssh -X $i mkdir /root/haproxy;done
[root@master1 haproxy]# for i in master2 master3 ; do scp /root/haproxy/check_haproxy.sh $i:/root/haproxy/;done
[root@master1 haproxy]# for i in master2 master3 ; do ssh -X $i "systemctl enable keepalived && systemctl restart keepalived"; done

The remote command is quoted so that both systemctl calls run on the remote host; without the quotes, the part after && would run locally on master1.

Note: on the other master nodes, change:
state MASTER --------> BACKUP
interface ens33
virtual_router_id 51
priority 100 --------> 90 on one node, 80 on the other

Test: check whether the VIP fails over. If haproxy is not running, keepalived will not stay up, because the check script stops it.
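The two per-node changes (state and priority) can be applied with sed rather than edited by hand. A small sketch, assuming the keepalived.conf layout shown above; the helper name make_backup_conf is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads the master1 keepalived.conf on stdin and
# emits a BACKUP variant with the given priority.
make_backup_conf() {
  local priority="$1"
  sed -E \
    -e 's/^([[:space:]]*state[[:space:]]+)MASTER/\1BACKUP/' \
    -e "s/^([[:space:]]*priority[[:space:]]+)[0-9]+/\1${priority}/"
}

# Example (run on master2 after the file has been copied over):
#   make_backup_conf 90 < /etc/keepalived/keepalived.conf > /tmp/keepalived.conf
#   mv /tmp/keepalived.conf /etc/keepalived/keepalived.conf
#   systemctl restart keepalived
```

The interface and virtual_router_id lines are left untouched, since they must be the same on every node.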

10. Configure and start kube-controller-manager:

1. Create the kube-controller-manager configuration file:

[root@master1 etc]# cat kube-controller-manager.conf
KUBE_LOGTOSTDERR="--logtostderr=false"
KUBE_LOG_LEVEL="--v=2 --log-dir=/data/apps/kubernetes/log/"
KUBECONFIG="--kubeconfig=/data/apps/kubernetes/etc/kube-controller-manager.kubeconfig"
KUBE_CONTROLLER_MANAGER_ARGS="--bind-address=127.0.0.1 --cluster-cidr=10.99.0.0/16 --cluster-name=kubernetes --cluster-signing-cert-file=/data/apps/kubernetes/pki/ca.pem --cluster-signing-key-file=/data/apps/kubernetes/pki/ca-key.pem --service-account-private-key-file=/data/apps/kubernetes/pki/sa.key --root-ca-file=/data/apps/kubernetes/pki/ca.pem --leader-elect=true --use-service-account-credentials=true --node-monitor-grace-period=10s --pod-eviction-timeout=10s --allocate-node-cidrs=true --controllers=*,bootstrapsigner,tokencleaner --horizontal-pod-autoscaler-use-rest-clients=true --experimental-cluster-signing-duration=87600h0m0s --tls-cert-file=/data/apps/kubernetes/pki/kube-controller-manager.pem --tls-private-key-file=/data/apps/kubernetes/pki/kube-controller-manager-key.pem --feature-gates=RotateKubeletServerCertificate=true"

2. Create the systemd unit file:

[root@master1 etc]# cat /usr/lib/systemd/system/kube-controller-manager.service
[Unit]
Description=Kubernetes Controller Manager
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/data/apps/kubernetes/etc/kube-controller-manager.conf
ExecStart=/data/apps/kubernetes/server/bin/kube-controller-manager $KUBE_LOGTOSTDERR $KUBE_LOG_LEVEL $KUBECONFIG $KUBE_CONTROLLER_MANAGER_ARGS
Restart=always
RestartSec=10s
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target

3. Distribute the unit file and configuration file, then start the service:

[root@master1 etc]# systemctl daemon-reload && systemctl enable kube-controller-manager && systemctl restart kube-controller-manager

11. Configure kubectl:

[root@master1 etc]# cp ~/.kube/ ~/.kube.bak -a
[root@master1 etc]# cp /data/apps/kubernetes/etc/admin.conf $HOME/.kube/config
[root@master1 etc]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@master1 etc]# kubectl get cs
NAME                 STATUS      MESSAGE                                                                                       ERROR
scheduler            Unhealthy   Get http://127.0.0.1:10251/healthz: dial tcp 127.0.0.1:10251: connect: connection refused
controller-manager   Healthy     ok
etcd-1               Healthy     {"health":"true"}
etcd-0               Healthy     {"health":"true"}
etcd-2               Healthy     {"health":"true"}

[root@master1 etc]# kubectl cluster-info

Kubernetes master is running at https://192.168.100.100:8443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

[root@master1 etc]# netstat -tanp |grep 8443

tcp 0 0 0.0.0.0:8443 0.0.0.0:* LISTEN 14271/haproxy

tcp 0 0 192.168.100.100:8443 192.168.100.63:37034 ESTABLISHED 14271/haproxy

tcp 0 0 192.168.100.63:36976 192.168.100.100:8443 ESTABLISHED 42206/kube-controll

tcp 0 0 192.168.100.100:8443 192.168.100.66:54528 ESTABLISHED 14271/haproxy

tcp 0 0 192.168.100.100:8443 192.168.100.65:53980 ESTABLISHED 14271/haproxy

tcp 0 0 192.168.100.100:8443 192.168.100.63:36976 ESTABLISHED 14271/haproxy

tcp 0 0 192.168.100.63:37034 192.168.100.100:8443 ESTABLISHED 42206/kube-controll

Note:

1. If a kubectl command prints the following error, the ~/.kube/config file in use is wrong; switch to the correct account and run the command again:

The connection to the server localhost:8080 was refused - did you specify the right host or port?

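2. When kubectl get componentstatuses is run, apiserver sends its health requests to 127.0.0.1 by default. When controller-manager and scheduler run in cluster mode, they may not be on the same machine as kube-apiserver; in that case their status is reported as Unhealthy even though they are working normally.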
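When checking several masters it helps to list only the components that are not Healthy. The following is a sketch that filters the plain-text output of kubectl get cs; the function name unhealthy_components is hypothetical, and it assumes the column layout shown above (NAME first, STATUS second).

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `kubectl get cs` output on stdin and prints
# the name of every component whose STATUS column is not "Healthy".
unhealthy_components() {
  awk 'NR > 1 && $2 != "Healthy" { print $1 }'
}

# Example:
#   kubectl get cs | unhealthy_components
```

An empty result means every component reported Healthy; at this point in the guide it should print scheduler, which has not been started yet.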

2. Configure kubelet to use bootstrap:

[root@master1 etc]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

12. Configure and start kube-scheduler:

kube-scheduler assigns Pods to nodes in the cluster. It watches kube-apiserver for Pods that have not yet been assigned a Node and then picks a node for each of them according to the scheduling policy.

Pods are placed on machines according to the configured scheduling policy (by updating the Pod's NodeName field).

1. Create the kube-scheduler.conf configuration file:

[root@master1 etc]# cat kube-scheduler.conf
KUBE_LOGTOSTDERR="--logtostderr=false"
KUBE_LOG_LEVEL="--v=2 --log-dir=/data/apps/kubernetes/log/"
KUBECONFIG="--kubeconfig=/data/apps/kubernetes/etc/kube-scheduler.kubeconfig"
KUBE_SCHEDULER_ARGS="--leader-elect=true --address=127.0.0.1"

2. Create the systemd unit file:

[root@master1 etc]# cat /usr/lib/systemd/system/kube-scheduler.service
[Unit]
Description=Kubernetes Scheduler Plugin
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/data/apps/kubernetes/etc/kube-scheduler.conf
ExecStart=/data/apps/kubernetes/server/bin/kube-scheduler $KUBE_LOGTOSTDERR $KUBE_LOG_LEVEL $KUBECONFIG $KUBE_SCHEDULER_ARGS
Restart=on-failure
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target

3. Start the service:

[root@master1 etc]# systemctl daemon-reload && systemctl enable kube-scheduler && systemctl start kube-scheduler
[root@master1 etc]# systemctl status kube-scheduler
● kube-scheduler.service - Kubernetes Scheduler Plugin
   Loaded: loaded (/usr/lib/systemd/system/kube-scheduler.service; enabled; vendor preset: disabled)
   Active: active (running) since 日 2019-10-20 13:50:46 CST; 2min 16s ago
     Docs: https://github.com/kubernetes/kubernetes
 Main PID: 47877 (kube-scheduler)
    Tasks: 10
   Memory: 50.0M
   CGroup: /system.slice/kube-scheduler.service
           └─47877 /data/apps/kubernetes/server/bin/kube-scheduler --logtostderr=false --v=2 --log-dir=/data/apps/kubernetes/log/ --kubeconfig=/data/apps/...
10月 20 13:50:46 master1 systemd[1]: Started Kubernetes Scheduler Plugin.

4. Distribute the configuration files:

[root@master1 etc]# for i in master2 master3 ; do scp kube-scheduler.conf $i:/data/apps/kubernetes/etc/;done
[root@master1 etc]# for i in master2 master3 ; do scp /usr/lib/systemd/system/kube-scheduler.service $i:/usr/lib/systemd/system/;done

Run on the other master nodes:
[root@master2 etc]# systemctl daemon-reload && systemctl enable kube-scheduler && systemctl restart kube-scheduler

[root@master1 etc]# kubectl get cs
NAME                 STATUS    MESSAGE              ERROR
controller-manager   Healthy   ok
scheduler            Healthy   ok
etcd-1               Healthy   {"health":"true"}
etcd-0               Healthy   {"health":"true"}
etcd-2               Healthy   {"health":"true"}

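13. Deploy the Node components:

Overview:

kubelet maintains the container lifecycle and also manages volumes (CSI) and networking (CNI). Every node runs one kubelet process, which listens on port 10250 by default;

it receives and executes instructions from the master and manages Pods and the containers inside them. Each kubelet process registers its own node with the API Server,

periodically reports the node's resource usage to the master, and monitors node and container resources through cAdvisor/metrics-server.

kubelet runs on every worker node, receives requests from kube-apiserver, manages Pod containers, and executes interactive commands such as exec, run, and logs.

On startup kubelet automatically registers the node with kube-apiserver; the built-in cAdvisor collects and monitors the node's resource usage.

For security, this document only enables the secure HTTPS port, which authenticates and authorizes requests and rejects unauthorized access (for example from apiserver or heapster).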

1. Create the kubelet configuration files (required on both master and node hosts):

[root@master1 etc]# ll
总用量 60
-rw-rw-r-- 1 root root 6273 10月 19 10:14 admin.conf
-rw-rw-r-- 1 root root 1688 10月 20 12:27 kube-apiserver.conf
-rw-rw-r-- 1 root root 1772 10月 20 10:17 kube-apiserver.conf.bak
-rw-rw-r-- 1 root root 1068 10月 19 20:04 kube-controller-manager.conf
-rw-rw-r-- 1 root root 6461 10月 19 10:06 kube-controller-manager.kubeconfig
-rw-rw-r-- 1 root root 2177 10月 19 09:59 kubelet-bootstrap.kubeconfig
-rw-r--r-- 1 root root 602 10月 20 14:46 kubelet.conf
-rw-r--r-- 1 root root 492 10月 20 14:42 kubelet-config.yml
-rw-rw-r-- 1 root root 6311 10月 19 10:12 kube-proxy.kubeconfig
-rw-r--r-- 1 root root 242 10月 20 13:48 kube-scheduler.conf
-rw-rw-r-- 1 root root 6415 10月 19 10:09 kube-scheduler.kubeconfig

[root@master1 etc]# cat kubelet.conf
KUBE_LOGTOSTDERR="--logtostderr=false"
KUBE_LOG_LEVEL="--log-dir=/data/apps/kubernetes/log/ --v=2"
KUBELET_HOSTNAME="--hostname-override=192.168.100.63"
KUBELET_POD_INFRA_CONTAINER="--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.1"
KUBELET_CONFIG="--config=/data/apps/kubernetes/etc/kubelet-config.yml"
KUBELET_ARGS="--bootstrap-kubeconfig=/data/apps/kubernetes/etc/kubelet-bootstrap.kubeconfig --kubeconfig=/data/apps/kubernetes/etc/kubelet.kubeconfig --cert-dir=/data/apps/kubernetes/pki --feature-gates=RotateKubeletClientCertificate=true"

[root@master1 etc]# cat kubelet-config.yml
kind: KubeletConfiguration
apiVersion: kubelet.config.k8s.io/v1beta1
address: 192.168.100.63
port: 10250
cgroupDriver: cgroupfs
clusterDNS:
- 10.99.110.110
clusterDomain: ziji.work.
hairpinMode: promiscuous-bridge
maxPods: 200
failSwapOn: false
imageGCHighThresholdPercent: 90
imageGCLowThresholdPercent: 80
imageMinimumGCAge: 5m0s
serializeImagePulls: false
authentication:
  x509:
    clientCAFile: /data/apps/kubernetes/pki/ca.pem
  anonymous:
    enabled: false
  webhook:
    enabled: false

2. Create the systemd unit file:

[root@master1 etc]# cat /usr/lib/systemd/system/kubelet.service
[Unit]
Description=Kubernetes Kubelet Server
Documentation=https://github.com/kubernetes/kubernetes
After=docker.service
Requires=docker.service

[Service]
EnvironmentFile=-/data/apps/kubernetes/etc/kubelet.conf
ExecStart=/data/apps/kubernetes/server/bin/kubelet $KUBE_LOGTOSTDERR $KUBE_LOG_LEVEL $KUBELET_CONFIG $KUBELET_HOSTNAME $KUBELET_POD_INFRA_CONTAINER $KUBELET_ARGS
Restart=on-failure

[Install]
WantedBy=multi-user.target

3. Start the service:

[root@master1 etc]# systemctl daemon-reload && systemctl enable kubelet && systemctl start kubelet
[root@master1 etc]# systemctl status kubelet
● kubelet.service - Kubernetes Kubelet Server
   Loaded: loaded (/usr/lib/systemd/system/kubelet.service; enabled; vendor preset: disabled)
   Active: active (running) since 日 2019-10-20 14:54:28 CST; 24s ago
     Docs: https://github.com/kubernetes/kubernetes
 Main PID: 52253 (kubelet)
    Tasks: 9
   Memory: 13.6M
   CGroup: /system.slice/kubelet.service
           └─52253 /data/apps/kubernetes/server/bin/kubelet --logtostderr=false --log-dir=/data/apps/kubernetes/log/ --v=2 --config=/data/apps/kubernetes/...
10月 20 14:54:28 master1 systemd[1]: Started Kubernetes Kubelet Server.
10月 20 14:54:28 master1 kubelet[52253]: Flag --feature-gates has been deprecated, This parameter should be set via the config file specified by...ormation.
10月 20 14:54:28 master1 kubelet[52253]: Flag --feature-gates has been deprecated, This parameter should be set via the config file specified by...ormation.
Hint: Some lines were ellipsized, use -l to show in full.

4. Distribute the configuration files to the other master nodes:

[root@master1 etc]# for i in master2 master3 ; do scp kubelet.conf kubelet-config.yml $i:/data/apps/kubernetes/etc/;done
[root@master1 etc]# for i in master2 master3 ; do scp /usr/lib/systemd/system/kubelet.service $i:/usr/lib/systemd/system/;done

Note: on the other master nodes, edit kubelet.conf and kubelet-config.yml:
[root@master2 etc]# vim kubelet.conf
KUBELET_HOSTNAME="--hostname-override=192.168.100.65"

[root@master2 etc]# vim kubelet-config.yml
address: 192.168.100.65
[root@master2 etc]# systemctl daemon-reload && systemctl enable kubelet && systemctl restart kubelet

[root@master2 etc]# systemctl status kubelet
● kubelet.service - Kubernetes Kubelet Server
   Loaded: loaded (/usr/lib/systemd/system/kubelet.service; enabled; vendor preset: disabled)
   Active: active (running) since 日 2019-10-20 15:00:38 CST; 5s ago
     Docs: https://github.com/kubernetes/kubernetes
 Main PID: 52614 (kubelet)
    Tasks: 8
   Memory: 8.6M
   CGroup: /system.slice/kubelet.service
           └─52614 /data/apps/kubernetes/server/bin/kubelet --logtostderr=false --log-dir=/data/apps/kubernetes/log/ --v=2 --config=/data/apps/kubernetes/...
10月 20 15:00:38 master2 systemd[1]: Started Kubernetes Kubelet Server.
10月 20 15:00:43 master2 kubelet[52614]: Flag --feature-gates has been deprecated, This parameter should be set via the config file specified by...ormation.
10月 20 15:00:43 master2 kubelet[52614]: Flag --feature-gates has been deprecated, This parameter should be set via the config file specified by...ormation.
Hint: Some lines were ellipsized, use -l to show in full.

master3 needs the same changes, with its own IP in both files.

5. On the node hosts:

## Repeat the steps above: in kubelet-config.yml set address: to the node's IP, and set --hostname-override= in kubelet.conf to the node's IP
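Since the same two values change on every host, the edit can be wrapped in one function. A minimal sketch, assuming GNU sed (for in-place -i without a suffix) and the file names used in this guide; the function name set_kubelet_node_ip is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set the node IP in kubelet.conf (--hostname-override)
# and kubelet-config.yml (address:) inside the given directory.
set_kubelet_node_ip() {
  local ip="$1" dir="$2"
  sed -i -E "s/(--hostname-override=)[0-9.]+/\1${ip}/" "${dir}/kubelet.conf"
  sed -i -E "s/^(address:[[:space:]]*)[0-9.]+/\1${ip}/" "${dir}/kubelet-config.yml"
}

# Example (run on node1):
#   set_kubelet_node_ip 192.168.100.61 /data/apps/kubernetes/etc
#   systemctl daemon-reload && systemctl restart kubelet
```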

6. Distribute the configuration files:

[root@master1 etc]# for i in node1 node2 ; do scp kubelet.conf kubelet-config.yml $i:/data/apps/kubernetes/etc/;done
[root@master1 etc]# for i in node1 node2 ; do scp /usr/lib/systemd/system/kubelet.service $i:/usr/lib/systemd/system/;done
[root@node1 ~]# cd /data/apps/kubernetes/etc/

[root@node1 etc]# ll
总用量 20
-rw------- 1 root root 2177 10月 19 09:59 kubelet-bootstrap.kubeconfig
-rw-r--r-- 1 root root 602 10月 20 15:04 kubelet.conf
-rw-r--r-- 1 root root 492 10月 20 15:04 kubelet-config.yml
-rw------- 1 root root 6311 10月 19 10:12 kube-proxy.kubeconfig

Note: in kubelet-config.yml set address: to the node's IP, and set --hostname-override= to the node's IP. In /usr/lib/systemd/system/kubelet.service, change the binary path in the start command to:
ExecStart=/data/apps/kubernetes/node/bin/kubelet

7. Restart the service on the node and enable it at boot:

[root@node1 etc]# systemctl daemon-reload && systemctl enable kubelet && systemctl restart kubelet

[root@node1 etc]# systemctl status kubelet
● kubelet.service - Kubernetes Kubelet Server
   Loaded: loaded (/usr/lib/systemd/system/kubelet.service; enabled; vendor preset: disabled)
   Active: active (running) since 日 2019-10-20 15:08:22 CST; 25s ago
     Docs: https://github.com/kubernetes/kubernetes
 Main PID: 11470 (kubelet)
    Tasks: 7
   Memory: 112.5M
   CGroup: /system.slice/kubelet.service
           └─11470 /data/apps/kubernetes/node/bin/kubelet --logtostderr=false --log-dir=/data/apps/kubernetes/log/ --v=2 --config=/data/apps/kubernetes/et...
10月 20 15:08:22 node1 systemd[1]: Started Kubernetes Kubelet Server.
10月 20 15:08:45 node1 kubelet[11470]: Flag --feature-gates has been deprecated, This parameter should be set via the config file specified by t...ormation.
10月 20 15:08:46 node1 kubelet[11470]: Flag --feature-gates has been deprecated, This parameter should be set via the config file specified by t...ormation.
Hint: Some lines were ellipsized, use -l to show in full

Note: repeat the same steps on the other node.

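14. Configure kube-proxy:

kube-proxy must be configured on every node.

Overview:

kube-proxy provides in-cluster service discovery and load balancing for Services;

every machine runs one kube-proxy process, which watches the API server for changes to services and endpoints

and configures load balancing for them through ipvs/iptables (TCP and UDP only).

Note: when ipvs mode is used, the kernel modules must be loaded on every Node beforehand;

enabling ipvs mainly means setting kube-proxy's --proxy-mode option to ipvs and also enabling --masquerade-all, so that iptables assists ipvs.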
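Before switching kube-proxy to ipvs it is worth confirming that the modules from the ipvs.sh script in section 2 are actually loaded. A rough sketch that reads lsmod output; the function name missing_ipvs_modules is hypothetical, and the module list is simply the one from that script.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `lsmod` output on stdin and prints every
# module from the ipvs.sh list that is not loaded.
missing_ipvs_modules() {
  local loaded mod
  # Keep only the first column (module names).
  loaded="$(awk '{ print $1 }')"
  for mod in ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4; do
    printf '%s\n' "$loaded" | grep -qx "$mod" || echo "$mod"
  done
}

# Example:
#   lsmod | missing_ipvs_modules
```

No output means all five modules are present; any name printed can be loaded with modprobe before starting kube-proxy.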

[root@node1 etc]# lsmod |grep ip_vs
ip_vs_sh 12688 0
ip_vs_wrr 12697 0
ip_vs_rr 12600 0
ip_vs 145497 6 ip_vs_rr,ip_vs_sh,ip_vs_wrr
nf_conntrack 133095 7 ip_vs,nf_nat,nf_nat_ipv4,xt_conntrack,nf_nat_masquerade_ipv4,nf_conntrack_netlink,nf_conntrack_ipv4
libcrc32c 12644 4 xfs,ip_vs,nf_nat,nf_conntrack

On the master node:

1. Install conntrack-tools:

[root@master1 etc]# yum install -y conntrack-tools ipvsadm ipset conntrack libseccomp

[root@master1 etc]# ansible nodes -m shell -a 'yum install -y conntrack-tools ipvsadm ipset conntrack libseccomp'

2. Create the kube-proxy configuration file:

[root@master1 etc]# cat kube-proxy.conf
KUBE_LOGTOSTDERR="--logtostderr=false"
KUBE_LOG_LEVEL="--v=2 --log-dir=/data/apps/kubernetes/log/"
KUBECONFIG="--kubeconfig=/data/apps/kubernetes/etc/kube-proxy.kubeconfig"
KUBE_PROXY_ARGS="--proxy-mode=ipvs --masquerade-all=true --cluster-cidr=10.99.0.0/16"

3. Create the systemd unit file:

[root@master1 etc]# cat /usr/lib/systemd/system/kube-proxy.service
[Unit]
Description=Kubernetes Kube-Proxy Server
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
EnvironmentFile=-/data/apps/kubernetes/etc/kube-proxy.conf
ExecStart=/data/apps/kubernetes/server/bin/kube-proxy $KUBE_LOGTOSTDERR $KUBE_LOG_LEVEL $KUBECONFIG $KUBE_PROXY_ARGS
Restart=on-failure
LimitNOFILE=65536
KillMode=process

[Install]
WantedBy=multi-user.target

4. Start the service:

[root@master1 etc]# systemctl daemon-reload && systemctl enable kube-proxy && systemctl restart kube-proxy
[root@master1 etc]# systemctl status kube-proxy
● kube-proxy.service - Kubernetes Kube-Proxy Server
   Loaded: loaded (/usr/lib/systemd/system/kube-proxy.service; enabled; vendor preset: disabled)
   Active: active (running) since 日 2019-10-20 15:25:06 CST; 1min 56s ago
     Docs: https://github.com/kubernetes/kubernetes
 Main PID: 54748 (kube-proxy)
    Tasks: 0
   Memory: 10.4M
   CGroup: /system.slice/kube-proxy.service
           ‣ 54748 /data/apps/kubernetes/server/bin/kube-proxy --logtostderr=false --v=2 --log-dir=/data/apps/kubernetes/log/ --kubeconfig=/data/apps/kube...
10月 20 15:26:42 master1 kube-proxy[54748]: -A KUBE-LOAD-BALANCER -j KUBE-MARK-MASQ
10月 20 15:26:42 master1 kube-proxy[54748]: -A KUBE-FIREWALL -j KUBE-MARK-DROP
10月 20 15:26:42 master1 kube-proxy[54748]: -A KUBE-SERVICES -m set --match-set KUBE-CLUSTER-IP dst,dst -j ACCEPT
10月 20 15:26:42 master1 kube-proxy[54748]: COMMIT
10月 20 15:26:42 master1 kube-proxy[54748]: *filter
10月 20 15:26:42 master1 kube-proxy[54748]: :KUBE-FORWARD - [0:0]
10月 20 15:26:42 master1 kube-proxy[54748]: -A KUBE-FORWARD -m comment --comment "kubernetes forwarding rules" -m mark --mark 0x00004000/0x00004...-j ACCEPT
10月 20 15:26:42 master1 kube-proxy[54748]: -A KUBE-FORWARD -s 10.99.0.0/16 -m comment --comment "kubernetes forwarding conntrack pod source rul...-j ACCEPT
10月 20 15:26:42 master1 kube-proxy[54748]: -A KUBE-FORWARD -m comment --comment "kubernetes forwarding conntrack pod destination rule" -d 10.99...-j ACCEPT
10月 20 15:26:42 master1 kube-proxy[54748]: COMMIT
Hint: Some lines were ellipsized, use -l to show in full.

5. Distribute the configuration files to the other nodes:

[root@master1 etc]# for i in master2 master3 node1 node2; do scp kube-proxy.conf $i:/data/apps/kubernetes/etc/;done

[root@master1 etc]# for i in master2 master3 node1 node2; do scp /usr/lib/systemd/system/kube-proxy.service $i:/usr/lib/systemd/system/;done

6. Start the service on each of the other nodes:

[root@master2 etc]# systemctl daemon-reload && systemctl enable kube-proxy && systemctl restart kube-proxy

Note: on the node hosts, change the binary path in the unit file to:

ExecStart=/data/apps/kubernetes/node/bin/kube-proxy

7. Check the listening ports:

· 10249: HTTP Prometheus metrics port;

· 10256: HTTP healthz port;
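The original step lists the two ports without a command, so the following is only a sketch of one way to check them. It reads the output of ss on stdin and reports which of the expected ports are absent; the function name missing_listen_ports is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `ss -ntl` style output on stdin and prints
# each port given as an argument that does not appear as a listening port.
missing_listen_ports() {
  local listing port
  listing="$(cat)"
  for port in "$@"; do
    # Match ":<port>" (or ".<port>") followed by whitespace, i.e. the
    # local address column of ss.
    printf '%s\n' "$listing" | grep -Eq "[:.]${port}[[:space:]]" || echo "$port"
  done
}

# Example:
#   ss -ntlp | grep kube-proxy | missing_listen_ports 10249 10256
```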

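15. Add the nodes through certificate approval:

Overview:

1. On startup, kubelet checks whether the file configured with --kubeconfig exists; if it does not, kubelet uses --bootstrap-kubeconfig to send a certificate signing request (CSR) to kube-apiserver.

2. When kube-apiserver receives the CSR, it authenticates the token in the request (a token created beforehand with kubeadm). On success it sets the request's user to system:bootstrap:<token-id> and its group to system:bootstrappers. This process is called Bootstrap Token Auth.

3. By default this user and group have no permission to create CSRs, so kubelet fails to start.

4. The fix is to create a clusterrolebinding that binds the group system:bootstrappers to the clusterrole system:node-bootstrapper: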

[root@kube-master ~]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --group=system:bootstrappers

Note:

1. After kubelet starts, it uses --bootstrap-kubeconfig to send a CSR to kube-apiserver. Once the CSR is approved, kube-controller-manager creates the TLS client certificate, the private key, and the --kubeconfig file for kubelet.

2. kube-controller-manager only creates certificates and private keys for TLS Bootstrap if --cluster-signing-cert-file and --cluster-signing-key-file are configured.

1. Check that the token in token.csv is identical to the token in kubelet-bootstrap.kubeconfig.

[root@master1 kubernetes]# cat etc/kubelet-bootstrap.kubeconfig apiVersion: v1 clusters: - cluster: certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUR3akNDQXFxZ0F3SUJBZ0lVUmdsUCtlVFg3RndsQXY0WURZYllRY1Vjc05Rd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1p6RUxNQWtHQTFVRUJoTUNRMDR4RVRBUEJnTlZCQWdUQ0VoaGJtZGFhRzkxTVJFd0R3WURWUVFIRXdoYQphR1ZLYVdGdVp6RU1NQW9HQTFVRUNoTURhemh6TVE4d0RRWURWUVFMRXdaVGVYTjBaVzB4RXpBUkJnTlZCQU1UCkNtdDFZbVZ5Ym1WMFpYTXdIaGNOTVRreE1ERTRNVEkxTURBd1doY05Namt4TURFMU1USTFNREF3V2pCbk1Rc3cKQ1FZRFZRUUdFd0pEVGpFUk1BOEdBMVVFQ0JNSVNHRnVaMXBvYjNVeEVUQVBCZ05WQkFjVENGcG9aVXBwWVc1bgpNUXd3Q2dZRFZRUUtFd05yT0hNeER6QU5CZ05WQkFzVEJsTjVjM1JsYlRFVE1CRUdBMVVFQXhNS2EzVmlaWEp1ClpYUmxjekNDQVNJd0RRWUpLb1pJaHZjTkFRRUJCUUFEZ2dFUEFEQ0NBUW9DZ2dFQkFNTUw0cloxYzdBN3FuUHgKMU1FRW5VN0liL3JJVmpwR003UXFPVnpIZW1EK2VObjVGbmRWZ3BtL0FWWlAwcVFzeFk5U3I1a1dqcHp6RmRRZgpjbEdVQzlsVmx1L2Q4SVdDdDRMSzdWa2RYK2J0K3hWb055RCs1Qndjb01WWDQ5TWt4dENqVGFhWkhWZXBEa2hyCjczYkpSMFRTL29FODA5ZjZLUFo1cmFtMHNOM1Z5eFlYbEVuaGh0SDFScHUwSlhKcko2L1BBNEN0aEcxcHErT2sKQmQ0WVVqa2o3N0V0ZENwclVTRDBCK1ZYUEFWRGhwbk9XcVBvckEwcnZva0tsZmZqRXNaL0V3SDZCZ0xIaENkZQpCNUpjTjN6eVQ0ckpPZUtOdzY2Zk4zeDU2Z0cxSVdBUDNFM05BaFdFRzdrbWV5OHBXM3JnQkZLQ3JMYXQyTU91CnF5YWRFVzhDQXdFQUFhTm1NR1F3RGdZRFZSMFBBUUgvQkFRREFnRUdNQklHQTFVZEV3RUIvd1FJTUFZQkFmOEMKQVFJd0hRWURWUjBPQkJZRUZEQ0d0TU5mSnhCTTVqQ0dPNXBIL1hBTWdvTWRNQjhHQTFVZEl3UVlNQmFBRkRDRwp0TU5mSnhCTTVqQ0dPNXBIL1hBTWdvTWRNQTBHQ1NxR1NJYjNEUUVCQ3dVQUE0SUJBUUE5VXFUMi9VcUVZYm5OCndzcnRoNGE4ckRmUURVT3FsOTFTYXQyUFN6VFY0UEFZUzFvZHdEMWxPZnMrMTdCYTdPalQ5Tkg0UFNqNGpxaGMKUWUvcUNJaW9MTXduZ3BFYzI3elR5a2pJQkpjSDF2U2JTaDhMT2JkaXplZElPWHlleHAySVJwZE92UWxScWl2NwpGQS9vbGJqdEFhZ0phNThqVjNZa0xSUVEvbHhwY09sOXQrSGNFNFBLWUNwckNTMzJpTUc1WTc1bk9jQk1xTFhFCjJ2VlN0TTlFd3JadkNHR1B6anh1N3pOMGtYTHJ2VWV4YmYreUhaY2toOE1hZGhvOE5KVDIrVlBIcmJFQTUzMGgKNWJ5RHhGYmxLMGZXVU1MNjRta2dNek9uYkZGbUNIbWlRY0Zxa0M1K1FJUXJQb0ZYdzRxMjF2eXF3WlJLQS9OZQpzdVdvZ1AzdAotLS0tLUVORCBDRVJUSUZJQ0FURS0tLS0tCg== server: https://192.168.100.100:8443 name: 
kubernetes contexts: - context: cluster: kubernetes user: kubelet-bootstrap name: default current-context: default kind: Config preferences: {} users: - name: kubelet-bootstrap user: token: 4ed0d4c41d65817ee0ec8a24e0812d3d

[root@master1 kubernetes]# cat token.csv 4ed0d4c41d65817ee0ec8a24e0812d3d,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

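Note: the token in token.csv must be identical to the token in kubelet-bootstrap.kubeconfig. All nodes (masters and workers) can share the token from master1. If the token in token.csv or kubelet-bootstrap.kubeconfig on another node differs, change it to match master1 and restart kubelet.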
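The comparison can be automated so it is easy to repeat on every host. A small sketch, assuming the token is the first comma-separated field of token.csv and that the kubeconfig has a single "token:" line, as in the files shown above; the function name bootstrap_token_matches is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeeds (exit 0) only if the first token in
# token.csv equals the token stored in the bootstrap kubeconfig.
bootstrap_token_matches() {
  local csv="$1" kubeconfig="$2" csv_token cfg_token
  csv_token="$(cut -d, -f1 "$csv" | head -n 1)"
  cfg_token="$(awk '$1 == "token:" { print $2; exit }' "$kubeconfig")"
  [ -n "$csv_token" ] && [ "$csv_token" = "$cfg_token" ]
}

# Example:
#   cd /data/apps/kubernetes
#   bootstrap_token_matches token.csv etc/kubelet-bootstrap.kubeconfig \
#     && echo "token OK" || echo "token MISMATCH"
```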

2. List the CSRs manually. Note: this can take 1 to 10 minutes.

[root@master1 etc]# kubectl get csr -w
NAME AGE REQUESTOR CONDITION
node-csr-605xCG9-hO1Mxw2BXZE3Hk7iSMIzx7bYT3-_aEuFpXk 67m kubelet-bootstrap Approved,Issued ## already approved
node-csr-X3zX4yz7csu80cOTQssiq7X2sVbBBM9uwWNY32W6Rkw 63m kubelet-bootstrap Approved,Issued
node-csr-z8okrqWz9ZDF7iywp1913EpJ3n2hfQ2RKCiGGs0N0A8 3h55m kubelet-bootstrap Approved,Issued
node-csr-iiJvkmnvDA6BTBD9NYXZtR_eQx2HHuUICwCgL12BVhI 0s kubelet-bootstrap Pending ## waiting for approval
node-csr-kFEcbDIFC94KGa9zaLHr_SduXrctH_Jop2iSIttNshY 0s kubelet-bootstrap Pending

### New requests normally show up as Pending; Approved means the request has already been approved. In this listing the first three were approved earlier, and the two Pending requests come from the node hosts.

3. Approve the Pending requests:

[root@master1 etc]# kubectl certificate approve node-csr-iiJvkmnvDA6BTBD9NYXZtR_eQx2HHuUICwCgL12BVhI
certificatesigningrequest.certificates.k8s.io/node-csr-iiJvkmnvDA6BTBD9NYXZtR_eQx2HHuUICwCgL12BVhI approved

[root@master1 etc]# kubectl certificate approve node-csr-kFEcbDIFC94KGa9zaLHr_SduXrctH_Jop2iSIttNshY
certificatesigningrequest.certificates.k8s.io/node-csr-kFEcbDIFC94KGa9zaLHr_SduXrctH_Jop2iSIttNshY approved
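With more than a couple of nodes, copying CSR names by hand gets tedious. The sketch below extracts the Pending names from the plain-text output of kubectl get csr so they can be passed to the approve command; the function name pending_csrs is hypothetical, and it assumes CONDITION is the last column as in the listing above. Review the list before approving, since this approves whatever is pending.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `kubectl get csr` output on stdin and prints
# the names of requests whose last column (CONDITION) is "Pending".
pending_csrs() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Example (GNU xargs; -r skips the command when nothing is pending):
#   kubectl get csr | pending_csrs | xargs -r -n 1 kubectl certificate approve
```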

4. List the nodes:

[root@master1 ~]# kubectl get nodes
NAME             STATUS     ROLES    AGE   VERSION
192.168.100.61   NotReady   <none>   23h   v1.13.0   ## NotReady because the network and kubelet are not configured yet
192.168.100.62   NotReady   <none>   23h   v1.13.0
192.168.100.63   Ready      <none>   25h   v1.13.0
192.168.100.65   Ready      <none>   24h   v1.13.0
192.168.100.66   Ready      <none>   24h   v1.13.0

5. Check the ports:

[root@master1 etc]# ss -nutlp |grep kubelet
tcp LISTEN 0 128 127.0.0.1:10248 *:* users:(("kubelet",pid=35551,fd=24))
tcp LISTEN 0 128 192.168.100.63:10250 *:* users:(("kubelet",pid=35551,fd=25))
tcp LISTEN 0 128 127.0.0.1:32814 *:* users:(("kubelet",pid=35551,fd=12))

Notes: 32814: cadvisor HTTP service; 10248: healthz HTTP service; 10250: HTTPS API service. The read-only port 10255 is not enabled.

6. Check the generated files on a node host:

[root@node1 pki]# ll kubelet*
-rw------- 1 root root 1277 10月 21 13:59 kubelet-client-2019-10-21-13-59-17.pem
lrwxrwxrwx 1 root root 64 10月 21 13:59 kubelet-client-current.pem -> /data/apps/kubernetes/pki/kubelet-client-2019-10-21-13-59-17.pem
-rw-r--r-- 1 root root 2193 10月 20 15:08 kubelet.crt
-rw------- 1 root root 1679 10月 20 15:08 kubelet.key

16. Set cluster roles:

1. On any master node, label the cluster nodes:

[root@master1 pki]# kubectl label nodes 192.168.100.63 node-role.kubernetes.io/master=master1
node/192.168.100.63 labeled

[root@master1 pki]# kubectl label nodes 192.168.100.65 node-role.kubernetes.io/master=master2
node/192.168.100.65 labeled

[root@master1 pki]# kubectl label nodes 192.168.100.66 node-role.kubernetes.io/master=master3
node/192.168.100.66 labeled

2. Label 192.168.100.61 and 192.168.100.62 as node:

[root@master1 pki]# kubectl label nodes 192.168.100.61 node-role.kubernetes.io/node=node1
node/192.168.100.61 labeled

[root@master1 pki]# kubectl label nodes 192.168.100.62 node-role.kubernetes.io/node=node2
node/192.168.100.62 labeled

3. Taint the masters so that they do not accept regular workloads:

[root@master1 pki]# kubectl taint nodes 192.168.100.63 node-role.kubernetes.io/master=master1:NoSchedule --overwrite
node/192.168.100.63 modified

[root@master1 pki]# kubectl taint nodes 192.168.100.65 node-role.kubernetes.io/master=master2:NoSchedule --overwrite
node/192.168.100.65 modified

[root@master1 pki]# kubectl taint nodes 192.168.100.66 node-role.kubernetes.io/master=master3:NoSchedule --overwrite
node/192.168.100.66 modified
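The label and taint commands follow one pattern, so they can be generated from a list of IPs. This sketch only prints the commands (a dry run) so they can be reviewed before being piped to a shell; the function name role_commands is hypothetical, and the numbering of the label values simply follows the order of the arguments.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the kubectl label (and, for masters, taint)
# commands for a role and a list of node IPs, numbering them in order.
role_commands() {
  local role="$1"
  shift
  local n=1 ip
  for ip in "$@"; do
    echo "kubectl label nodes ${ip} node-role.kubernetes.io/${role}=${role}${n}"
    if [ "$role" = "master" ]; then
      echo "kubectl taint nodes ${ip} node-role.kubernetes.io/master=master${n}:NoSchedule --overwrite"
    fi
    n=$((n + 1))
  done
}

# Example:
#   role_commands master 192.168.100.63 192.168.100.65 192.168.100.66
#   role_commands node 192.168.100.61 192.168.100.62
#   role_commands node 192.168.100.61 192.168.100.62 | sh   # actually run them
```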

17. Configure the network plugin:

1. On master and node hosts:

[root@master1 software]# tar -xvzf flannel-v0.10.0-linux-amd64.tar.gz
flanneld
mk-docker-opts.sh
README.md

[root@master1 software]# ll
总用量 452700
-rwxr-xr-x 1 1001 1001 36327752 1月 24 2018 flanneld
-rw-r--r-- 1 root root 9706487 10月 18 21:46 flannel-v0.10.0-linux-amd64.tar.gz
drwxr-xr-x 4 root root 79 12月 4 2018 kubernetes
-rw-r--r-- 1 root root 417509744 10月 18 12:51 kubernetes-server-linux-amd64.tar.gz
-rwxr-xr-x 1 1001 1001 2139 3月 18 2017 mk-docker-opts.sh
-rw-rw-r-- 1 1001 1001 4298 12月 24 2017 README.md

[root@master1 software]# mv flanneld mk-docker-opts.sh /data/apps/kubernetes/server/bin/
[root@master1 software]# for i in master2 master3 ; do scp /data/apps/kubernetes/server/bin/flanneld $i:/data/apps/kubernetes/server/bin/;done
flanneld 100% 35MB 34.6MB/s 00:01
flanneld 100% 35MB 38.0MB/s 00:00
[root@master1 software]# for i in master2 master3 ; do scp /data/apps/kubernetes/server/bin/mk-docker-opts.sh $i:/data/apps/kubernetes/server/bin/;done
mk-docker-opts.sh 100% 2139 1.1MB/s 00:00
mk-docker-opts.sh

On the node hosts:
[root@node1 software]# mv flanneld mk-docker-opts.sh /data/apps/kubernetes/node/bin/
[root@node1 software]# scp /data/apps/kubernetes/node/bin/flanneld node2:/data/apps/kubernetes/node/bin/
flanneld 100% 35MB 37.4MB/s 00:00

[root@node1 software]# scp /data/apps/kubernetes/node/bin/mk-docker-opts.sh node2:/data/apps/kubernetes/node/bin/
mk-docker-opts.sh

2.创建网络段:

#在etcd 集群执行如下命令, 为docker 创建互联网段: [root@master1 software]# etcdctl --ca-file=/etc/etcd/ssl/etcd-ca.pem --cert-file=/etc/etcd/ssl/etcd.pem --key-file=/etc/etcd/ssl/etcd-key.pem --endpoints="https://192.168.100.63:2379,https://192.168.100.65:2379,https://192.168.100.66:2379" set /coreos.com/network/config '{ "Network": "10.99.0.0/16", "Backend": {"Type": "vxlan"}}'

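## The etcd cluster here runs on master1, master2, and master3. The key is written to the replicated etcd store, so running the command once against the cluster is enough; repeating it on the other masters is harmless.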

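Note:
1. The current flanneld version (v0.10.0) does not support etcd v3, so the config key and subnet data are written through the etcd v2 API.
2. The pod range written to "Network" should be a /16 (flanneld hands each node a /24 out of it by default) and must match the --cluster-cidr value of kube-controller-manager. The installation plan at the top lists 10.244.0.0/16 for pods and 10.99.0.0/16 for the cluster range, while the command above writes 10.99.0.0/16; confirm which value --cluster-cidr actually uses before writing the key.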
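The write can be checked by reading the key back. Below is a minimal sketch, assuming the certificate paths and endpoints shown in step 2. The helper names `flannel_config_json` and `etcdctl_v2` are hypothetical and only wrap the commands already used in this guide; `get` and `ls` are the etcd v2 read commands.

```shell
#!/bin/sh
# Build the JSON document that flanneld reads from /coreos.com/network/config.
# $1 = pod network CIDR, $2 = backend type (both chosen by the caller).
flannel_config_json() {
    printf '{"Network": "%s", "Backend": {"Type": "%s"}}\n' "$1" "$2"
}

# Hypothetical wrapper: etcdctl (v2 API) with the TLS flags used in this guide.
etcdctl_v2() {
    etcdctl --ca-file=/etc/etcd/ssl/etcd-ca.pem \
            --cert-file=/etc/etcd/ssl/etcd.pem \
            --key-file=/etc/etcd/ssl/etcd-key.pem \
            --endpoints="https://192.168.100.63:2379,https://192.168.100.65:2379,https://192.168.100.66:2379" \
            "$@"
}

# Usage on a master that holds the etcd client certificates:
#   etcdctl_v2 set /coreos.com/network/config "$(flannel_config_json 10.99.0.0/16 vxlan)"
#   etcdctl_v2 get /coreos.com/network/config     # read the config back
#   etcdctl_v2 ls  /coreos.com/network/subnets    # per-node leases, once flanneld runs
```

Generating the JSON from arguments keeps the CIDR in one place, which makes it easier to keep it identical to the --cluster-cidr value.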

3. Create the flannel configuration file:

[root@master1 etc]# cat flanneld.conf
FLANNEL_OPTIONS="--etcd-endpoints=https://192.168.100.63:2379,https://192.168.100.65:2379,https://192.168.100.66:2379 -etcd-cafile=/etc/etcd/ssl/etcd-ca.pem -etcd-certfile=/etc/etcd/ssl/etcd.pem -etcd-keyfile=/etc/etcd/ssl/etcd-key.pem"

4. Create the systemd unit file:

[root@master1 etc]# cat /usr/lib/systemd/system/flanneld.service
[Unit]
Description=Flanneld overlay address etcd agent
After=network-online.target network.target
Before=docker.service

[Service]
Type=notify
EnvironmentFile=/data/apps/kubernetes/etc/flanneld.conf
ExecStart=/data/apps/kubernetes/server/bin/flanneld --ip-masq $FLANNEL_OPTIONS
ExecStartPost=/data/apps/kubernetes/server/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env
Restart=on-failure

[Install]
WantedBy=multi-user.target

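Note:
1. The mk-docker-opts.sh script writes the pod subnet assigned to flanneld into /run/flannel/subnet.env; docker later reads the environment variables in this file at startup to configure the docker0 bridge.
2. flanneld talks to the other nodes over the interface that carries the system default route. On nodes with several network interfaces (for example an internal and a public network), the -iface parameter can be used to specify the interface.
3. flanneld needs root privileges at runtime.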
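To see which interface flanneld would pick by default, the default route can be inspected. This is a small sketch; the helper name `default_iface` and the sample interface name `ens33` are illustrative, not taken from this cluster.

```shell
#!/bin/sh
# Print the interface that carries the default route.
# Reads `ip route show default` output on stdin, so it can also be tried
# against saved output from another host.
default_iface() {
    awk '$1 == "default" {
             for (i = 1; i < NF; i++)
                 if ($i == "dev") { print $(i + 1); exit }
         }'
}

# Usage on a live node:
#   ip route show default | default_iface
# If this is not the interface that should carry pod traffic, the wanted one
# can be added to FLANNEL_OPTIONS with -iface=<name>.
```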

5. Start the service:

[root@master1 etc]# systemctl daemon-reload && systemctl enable flanneld && systemctl restart flanneld
[root@master1 etc]# systemctl status flanneld
● flanneld.service - Flanneld overlay address etcd agent
   Loaded: loaded (/usr/lib/systemd/system/flanneld.service; enabled; vendor preset: disabled)
   Active: active (running) since Wed 2019-10-23 13:21:44 CST; 8min ago
 Main PID: 17144 (flanneld)
   CGroup: /system.slice/flanneld.service
           └─17144 /data/apps/kubernetes/server/bin/flanneld --ip-masq --etcd-endpoints=https://192.168.100.63:2379,https://192.168.100.65:2379,https://19...
Oct 23 13:21:44 master1 flanneld[17144]: I1023 13:21:44.600755 17144 iptables.go:137] Deleting iptables rule: -s 10.99.0.0/16 ! -d 224.0.0.0/...ASQUERADE
Oct 23 13:21:44 master1 flanneld[17144]: I1023 13:21:44.602183 17144 main.go:396] Waiting for 22h59m59.987696325s to renew lease
Oct 23 13:21:44 master1 flanneld[17144]: I1023 13:21:44.606866 17144 iptables.go:137] Deleting iptables rule: ! -s 10.99.0.0/16 -d 10.99.83.0...-j RETURN
Oct 23 13:21:44 master1 flanneld[17144]: I1023 13:21:44.610901 17144 iptables.go:125] Adding iptables rule: -d 10.99.0.0/16 -j ACCEPT
Oct 23 13:21:44 master1 flanneld[17144]: I1023 13:21:44.613617 17144 iptables.go:137] Deleting iptables rule: ! -s 10.99.0.0/16 -d 10.99.0.0/...ASQUERADE
Oct 23 13:21:44 master1 systemd[1]: Started Flanneld overlay address etcd agent.
Oct 23 13:21:44 master1 flanneld[17144]: I1023 13:21:44.617079 17144 iptables.go:125] Adding iptables rule: -s 10.99.0.0/16 -d 10.99.0.0/16 -j RETURN
Oct 23 13:21:44 master1 flanneld[17144]: I1023 13:21:44.620096 17144 iptables.go:125] Adding iptables rule: -s 10.99.0.0/16 ! -d 224.0.0.0/4 ...ASQUERADE
Oct 23 13:21:44 master1 flanneld[17144]: I1023 13:21:44.622983 17144 iptables.go:125] Adding iptables rule: ! -s 10.99.0.0/16 -d 10.99.83.0/24 -j RETURN
Oct 23 13:21:44 master1 flanneld[17144]: I1023 13:21:44.625527 17144 iptables.go:125] Adding iptables rule: ! -s 10.99.0.0/16 -d 10.99.0.0/16...ASQUERADE
Hint: Some lines were ellipsized, use -l to show in full.

6. Distribute the configuration files:

[root@master1 etc]# for i in master2 master3; do scp flanneld.conf $i:/data/apps/kubernetes/etc/;done
flanneld.conf 100% 244 205.7KB/s 00:00
flanneld.conf 100% 244 4.0KB/s 00:00

[root@master1 etc]# for i in master2 master3; do scp /usr/lib/systemd/system/flanneld.service $i:/usr/lib/systemd/system/;done
flanneld.service 100% 457 323.5KB/s 00:00
flanneld.service

7. Start the flanneld service on master2 and master3:

[root@master3 ~]# systemctl daemon-reload && systemctl enable flanneld && systemctl restart flanneld

8. Deploy flanneld on the node hosts:

[root@master1 etc]# cat flanneld.conf
FLANNEL_OPTIONS="--etcd-endpoints=https://192.168.100.63:2379,https://192.168.100.65:2379,https://192.168.100.66:2379 -etcd-cafile=/etc/etcd/ssl/etcd-ca.pem -etcd-certfile=/etc/etcd/ssl/etcd.pem -etcd-keyfile=/etc/etcd/ssl/etcd-key.pem"
## flanneld.conf references the etcd certificates, so the directory that holds the etcd certificates has to exist on the node hosts first.

[root@master1 etc]# for i in node1 node2 ; do rsync -avzP /etc/etcd/ssl $i:/etc/etcd/ ; done
[root@master1 etc]# for i in node1 node2; do scp flanneld.conf $i:/data/apps/kubernetes/etc/;done
flanneld.conf 100% 244 269.5KB/s 00:00
flanneld.conf

[root@master1 etc]# for i in node1 node2 ; do scp /usr/lib/systemd/system/flanneld.service $i:/usr/lib/systemd/system/;done
flanneld.service 100% 457 9.1KB/s 00:00
flanneld.service

Note: on the node hosts the binary paths in the unit file must be changed from server/bin to node/bin:
[root@node1 etc]# vim /usr/lib/systemd/system/flanneld.service
ExecStart=/data/apps/kubernetes/node/bin/flanneld --ip-masq $FLANNEL_OPTIONS
ExecStartPost=/data/apps/kubernetes/node
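Instead of editing the unit by hand on every node, the path change can be scripted. This is a sketch, assuming the only difference between the master and node units is the `server/bin` versus `node/bin` directory; the helper name `to_node_paths` is hypothetical.

```shell
#!/bin/sh
# Rewrite the master binary directory to the node binary directory.
# Reads a unit file on stdin and writes the adjusted unit to stdout.
to_node_paths() {
    sed 's#/data/apps/kubernetes/server/bin/#/data/apps/kubernetes/node/bin/#g'
}

# Usage on a node (write to a temporary file first, then replace the unit):
#   unit=/usr/lib/systemd/system/flanneld.service
#   to_node_paths < "$unit" > "$unit.new" && mv "$unit.new" "$unit"
#   systemctl daemon-reload
```

Writing to a temporary file and renaming it avoids truncating the unit if `sed` fails partway through.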

9. Start the service on the node hosts:

[root@node2 ~]# systemctl daemon-reload && systemctl enable flanneld && systemctl restart flanneld
[root@node2 ~]# systemctl status flanneld
● flanneld.service - Flanneld overlay address etcd agent
   Loaded: loaded (/usr/lib/systemd/system/flanneld.service; enabled; vendor preset: disabled)
   Active: active (running) since Wed 2019-10-23 14:10:33 CST; 4s ago
  Process: 15862 ExecStartPost=/data/apps/kubernetes/node/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env (code=exited, status=0/SUCCESS)
 Main PID: 15840 (flanneld)
    Tasks: 7
   Memory: 8.6M
   CGroup: /system.slice/flanneld.service
           └─15840 /data/apps/kubernetes/node/bin/flanneld --ip-masq --etcd-endpoints=https://192.168.100.63:2379,https://192.168.100.65:2379,https://192....

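10. Check that the pod networks on all hosts can reach one another:

Write the following probe script:

[root@master1 flanneld]# cat flanneld.sh
#!/bin/bash
# Collect the flannel.1 address of every host in the ansible "nodes" group.
IPADDR=$(ansible nodes -m shell -a 'ip addr show flannel.1 |grep -w inet' | awk '/inet/{print $2}'|cut -d '/' -f1)
for i in ${IPADDR[@]} ; do
    ping -c 2 $i &>/dev/null
    if [ $? -eq 0 ]; then
        # green for reachable, red for unreachable
        echo -e "\033[32m $i is online \033[0m"
    else
        echo -e "\033[31m $i is offline \033[0m"
    fi
done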

[root@master1 flanneld]# bash flanneld.sh
10.99.62.0 is online
10.99.74.0 is online
10.99.3.0 is online
10.99.20.0 is online
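The address-extraction step of the probe can also be run on a single host without ansible. A sketch under that assumption; the helper names `inet_addrs` and `ping_all` are hypothetical.

```shell
#!/bin/sh
# Extract IPv4 addresses (without the prefix length) from `ip addr show` output.
# Only lines whose first field is exactly "inet" match, so inet6 lines are skipped.
inet_addrs() {
    awk '$1 == "inet" { split($2, a, "/"); print a[1] }'
}

# Read one address per line on stdin and report whether it answers ping.
ping_all() {
    while read -r addr; do
        if ping -c 2 "$addr" >/dev/null 2>&1; then
            echo "$addr is online"
        else
            echo "$addr is offline"
        fi
    done
}

# Usage on one host:
#   ip addr show flannel.1 | inet_addrs | ping_all
```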

18. Modify the docker.service unit file:

1. Modify the docker service unit file to inject the DNS parameters:

[Service]
Environment="DOCKER_DNS_OPTIONS= --dns 10.99.110.110 --dns 114.114.114.114 --dns-search default.svc.ziji.work --dns-search svc.ziji.work --dns-opt ndots:2 --dns-opt timeout:2 --dns-opt attempts:2"

## Fill in the DNS servers according to the DNS service you actually deployed.

2. Add the subnet configuration file:

[root@master1 flanneld]# vim /usr/lib/systemd/system/docker.service
EnvironmentFile=/run/flannel/subnet.env
ExecStart=/usr/bin/dockerd -H unix:// $DOCKER_NETWORK_OPTIONS $DOCKER_DNS_OPTIONS
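To see what docker will receive from this file, the bridge address can be pulled out of it. This is a sketch, assuming mk-docker-opts.sh has written a combined `DOCKER_NETWORK_OPTIONS` line that contains a `--bip=...` option; the helper name `bip_from_env` and the sample address in the comment are illustrative.

```shell
#!/bin/sh
# Print the value of the --bip option found in an environment file.
# Reads the file on stdin, e.g. a line such as:
#   DOCKER_NETWORK_OPTIONS=" --bip=10.99.83.1/24 --ip-masq=false --mtu=1450"
bip_from_env() {
    grep -o -- '--bip=[^ "]*' | cut -d= -f2
}

# Usage after flanneld has started:
#   bip_from_env < /run/flannel/subnet.env
#   ip -4 addr show docker0     # after restarting docker, should show the same address
```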

3. Start the services:

[root@master1 flanneld]# systemctl daemon-reload && systemctl enable docker flanneld && systemctl restart docker flanneld

4. Distribute the configuration files:

[root@master1 flanneld]# for i in master2 master3 node1 node2 ; do rsync -avzP /usr/lib/systemd/system/docker.service.d $i:/usr/lib/systemd/system/;done

[root@master1 flanneld]# for i in master2 master3 node1 node2;do scp /usr/lib/systemd/system/docker.service $i:/usr/lib/systemd/system/;done

[root@master1 flanneld]# ansible nodes -m shell -a 'systemctl daemon-reload && systemctl enable docker flanneld && systemctl restart docker flanneld'

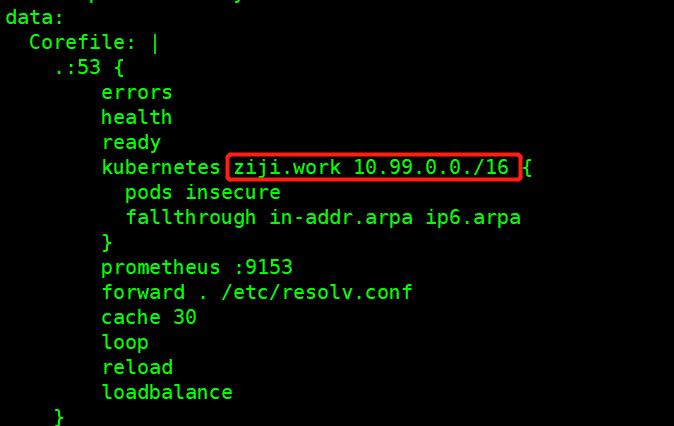

19. Configure CoreDNS:

# 10.99.110.110 is the DNS address configured in kubelet.

1. Install CoreDNS:

[root@master1 flanneld]# wget https://raw.githubusercontent.com/coredns/deployment/master/kubernetes/coredns.yaml.sed
[root@master1 flanneld]# wget https://raw.githubusercontent.com/coredns/deployment/master/kubernetes/deploy.sh

[root@master1 flanneld]# chmod +x deploy.sh
[root@master1 flanneld]# ./deploy.sh -i 10.99.110.110 > coredns.yaml

[root@master1 flanneld]# vim coredns.yaml

[root@master1 flanneld]# kubectl apply -f coredns.yaml
[root@master1 flanneld]# kubectl get pod -n kube-system
NAME                       READY   STATUS    RESTARTS   AGE
coredns-6694fb884c-6qbvc   1/1     Running   0          5m11s
coredns-6694fb884c-rs6dm   1/1     Running   0          5m11s

## The coredns image had already been pulled in advance here.
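Because kubelet points pods at 10.99.110.110, it is worth confirming that the generated manifest and the running Service use the same address. A sketch; the helper name `cluster_ip_from_manifest` is hypothetical.

```shell
#!/bin/sh
# Print the first clusterIP value found in a manifest read from stdin.
cluster_ip_from_manifest() {
    awk '$1 == "clusterIP:" { print $2; exit }'
}

# Usage:
#   cluster_ip_from_manifest < coredns.yaml
#   kubectl get svc kube-dns -n kube-system -o jsonpath='{.spec.clusterIP}'
# Both should print the DNS address configured in kubelet (10.99.110.110 here).
```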

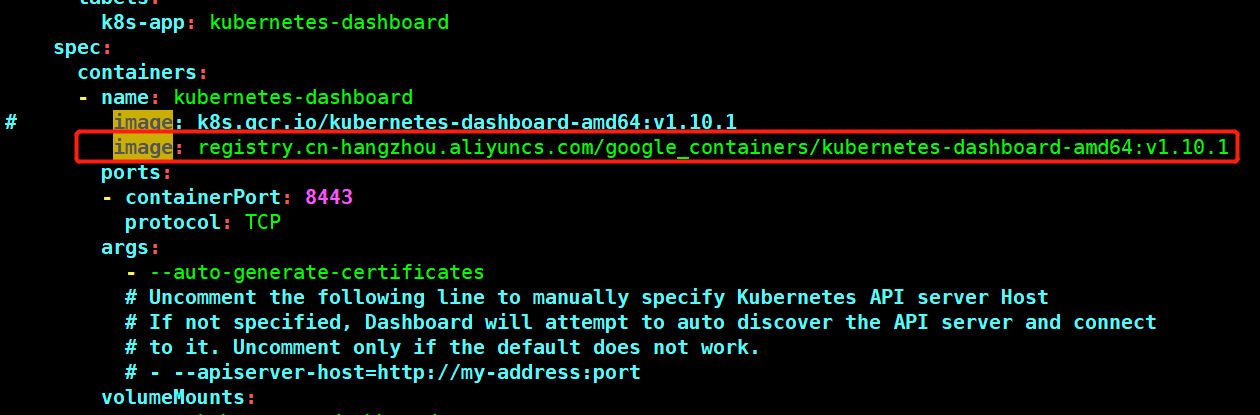

20. Deploy the dashboard:

1. Download the dashboard manifest:

[root@master1 dashboard]# wget https://raw.githubusercontent.com/kubernetes/dashboard/v1.10.1/src/deploy/recommended/kubernetes-dashboard.yaml

[root@master1 dashboard]# ll
total 8
-rw-r--r-- 1 root root 4577 Oct 26 16:25 kubernetes-dashboard.yaml

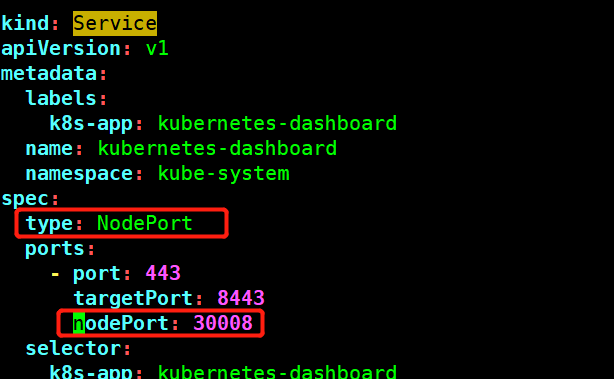

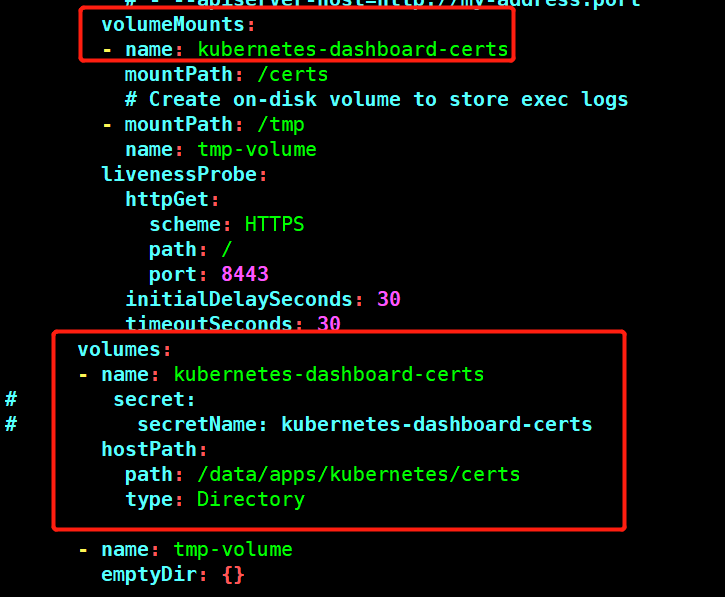

2. Edit the downloaded YAML file:

Note: change the following settings:

image: registry.cn-hangzhou.aliyuncs.com/google_containers/kubernetes-dashboard-amd64:v1.10.1

type: NodePort

nodePort: 30008

3. The certificate can be generated as follows:

[root@master1 dashboard]# openssl genrsa -des3 -passout pass:x -out dashboard.pass.key 2048
[root@master1 dashboard]# openssl rsa -passin pass:x -in dashboard.pass.key -out dashboard.key
[root@master1 dashboard]# rm dashboard.pass.key
[root@master1 dashboard]# openssl req -new -key dashboard.key -out dashboard.csr
........ US
........ press Enter
[root@master1 dashboard]# openssl x509 -req -sha256 -days 365 -in dashboard.csr -signkey dashboard.key -out dashboard.crt
Note: the command above issues a certificate that expires after one year (-days 365); to make it valid for ten years, use -days 3650.
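The same certificate can be produced without the interactive prompts by passing the subject on the command line. This is a sketch, not the procedure used above: it generates an unencrypted key directly instead of stripping a passphrase, and the subject string and the helper names `cert_days` and `make_dashboard_cert` are assumptions.

```shell
#!/bin/sh
# Convert a validity period in years to the value passed to -days.
cert_days() {
    echo $(( $1 * 365 ))
}

# Create dashboard.key, dashboard.csr and a self-signed dashboard.crt
# in the current directory.
# $1 = validity in years, $2 = certificate subject.
make_dashboard_cert() {
    openssl genrsa -out dashboard.key 2048 &&
    openssl req -new -key dashboard.key -out dashboard.csr -subj "$2" &&
    openssl x509 -req -sha256 -days "$(cert_days "$1")" \
        -in dashboard.csr -signkey dashboard.key -out dashboard.crt
}

# Usage (the subject below is only an example):
#   make_dashboard_cert 10 "/C=US/CN=kubernetes-dashboard"
#   openssl x509 -in dashboard.crt -noout -dates    # check the expiry date
```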

[root@master1 dashboard]# ll
total 20
-rw-r--r-- 1 root root 1103 Oct 26 17:49 dashboard.crt
-rw-r--r-- 1 root root  952 Oct 26 17:23 dashboard.csr
-rw-r--r-- 1 root root 1679 Oct 26 17:22 dashboard.key
-rw-r--r-- 1 root root 4776 Oct 26 18:53 kubernetes-dashboard.yaml

4. Copy the generated certificates to the other hosts:

[root@master1 dashboard]# for i in master2 master3 node1 node2; do rsync -avzP dashboard $i:/data/apps/kubernetes/certs;done

## This is the directory from which the certificates are mounted.

5. On a master host, run the following command to deploy the UI:

[root@master1 dashboard]# kubectl apply -f kubernetes-dashboard.yaml

6. Check:

[root@master1 dashboard]# kubectl get pod -n kube-system
NAME                                    READY   STATUS    RESTARTS   AGE
coredns-6694fb884c-6qbvc                1/1     Running   0          5h49m
coredns-6694fb884c-rs6dm                1/1     Running   0          5h49m
kubernetes-dashboard-5974995975-558vc   1/1     Running   0          14m

[root@master1 dashboard]# kubectl get svc -n kube-system
NAME                   TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)                  AGE
kube-dns               ClusterIP   10.99.110.110   <none>        53/UDP,53/TCP,9153/TCP   5h50m
kubernetes-dashboard   NodePort    10.99.149.55    <none>        443:30008/TCP            108m

7. Open port 30008 on any host in a browser: https://node_ip:port
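Reachability can be checked from the command line before opening a browser. A sketch, assuming the Service exposes a single `port:nodePort/protocol` entry as in the listing above; the helper name `node_port_of` is hypothetical, and 192.168.100.61 is node1 from the host table.

```shell
#!/bin/sh
# Print the NodePort of a service from `kubectl get svc` table output.
# $1 = service name; the table is read on stdin.
node_port_of() {
    awk -v svc="$1" '$1 == svc { split($5, p, "[:/]"); print p[2]; exit }'
}

# Usage:
#   port=$(kubectl get svc -n kube-system | node_port_of kubernetes-dashboard)
#   curl -k -s -o /dev/null -w '%{http_code}\n' "https://192.168.100.61:$port/"
# -k skips certificate verification, which is needed here because the
# dashboard certificate is self-signed.
```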

21. Configure the token:

1. Create the serviceaccount:

[root@master1 dashboard]# kubectl create serviceaccount dashboard-admin -n kube-system

serviceaccount/dashboard-admin created

2. Bind the serviceaccount to the cluster-admin clusterrole:

[root@master1 dashboard]# kubectl create clusterrolebinding dashboard-cluster-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

clusterrolebinding.rbac.authorization.k8s.io/dashboard-cluster-admin created

3. Get the token:

[root@master1 dashboard]# kubectl describe secret -n kube-system dashboard-admin
Name:         dashboard-admin-token-4cgjk
Namespace:    kube-system
Labels:       <none>
Annotations:  kubernetes.io/service-account.name: dashboard-admin
              kubernetes.io/service-account.uid: 707e7067-f7e1-11e9-9fbc-000c29f91854

Type:  kubernetes.io/service-account-token

Data
====
namespace:  11 bytes
token:      eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJkYXNoYm9hcmQtYWRtaW4tdG9rZW4tNGNnamsiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiZGFzaGJvYXJkLWFkbWluIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiNzA3ZTcwNjctZjdlMS0xMWU5LTlmYmMtMDAwYzI5ZjkxODU0Iiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmUtc3lzdGVtOmRhc2hib2FyZC1hZG1pbiJ9.IM7mu1Rin--kOFTiA4zQC7VVUg1q32gXtU457kmgyzl4rVii5pIo1ExhpFpw3BtwKyuzyGVZ1ThLBST5aBYlnz6rIBilsEalmBukFj39hEeH5pUBdeWSxw91ZdyiyqjnRMLou2GdlmPRyZp4-GJNQn4Fyr5J1OUWqQI6TGaRyHKMEBIEdl7TFTqn_mt61JqZ2oBBz_Dfd6iNviiTXl2enmjBGeBZ43rsqayPAbusUKBDtQdsK9LbAskjtOa67_EHESJPnIeqcFD8fu-fnSizTdsdhN1seL3WhKRUNDpil9BScw09O_uHrF_J9AAmWk02sAKQoVxk9Uwl9zPhHH51NQ
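Only the token value is needed for the login form, so it can be filtered out of the describe output. A sketch; the helper name `token_from_describe` is hypothetical.

```shell
#!/bin/sh
# Print the token value from `kubectl describe secret` output read on stdin.
# Only the line whose first field is exactly "token:" matches, so the
# "Type:" line is not picked up.
token_from_describe() {
    awk '$1 == "token:" { print $2; exit }'
}

# Usage:
#   kubectl describe secret -n kube-system dashboard-admin | token_from_describe
```

Since this serviceaccount is bound to cluster-admin, the token grants full access to the cluster and should be handled like a password.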

4. Log in: